19.1 Traditional Approach

The classical mediation analysis follows the approach introduced by Baron and Kenny (1986), though it has limitations, particularly in requiring the first step (\(X \to Y\)) to be significant. Despite its shortcomings, this framework provides a useful foundation.

19.1.1 Steps in the Traditional Mediation Model

Mediation is typically assessed through three regression models:

- Total Effect: \(X \to Y\)

- Path \(a\): \(X \to M\)

- Path \(b\) and Direct Effect (\(c'\)): \(X + M \to Y\)

where:

- \(X\) = independent (causal) variable

- \(Y\) = dependent (outcome) variable

- \(M\) = mediating variable

Originally, Baron and Kenny (1986) required the direct path \(X \to Y\) to be significant. However, mediation can still occur even if this direct effect is not significant. For example:

The effect of \(X\) on \(Y\) might be fully absorbed by \(M\).

Multiple mediators (\(M_1, M_2\)) with opposing effects could cancel each other out, leading to a non-significant direct effect.

19.1.2 Graphical Representation of Mediation

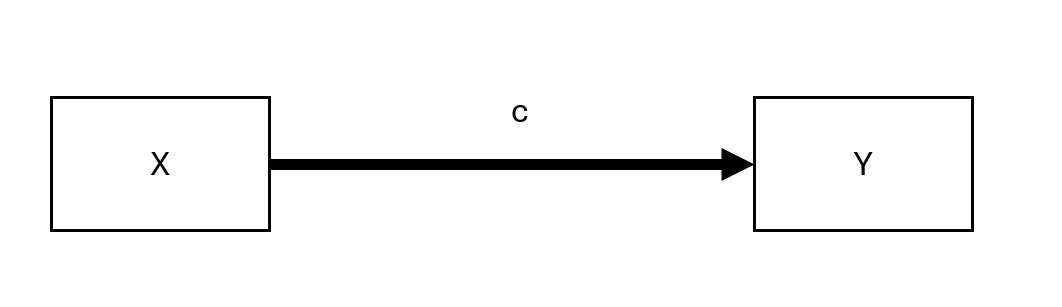

19.1.2.1 Unmediated Model

Figure 19.1: Unmediated Model

Here, \(c\) represents the total effect of \(X\) on \(Y\).

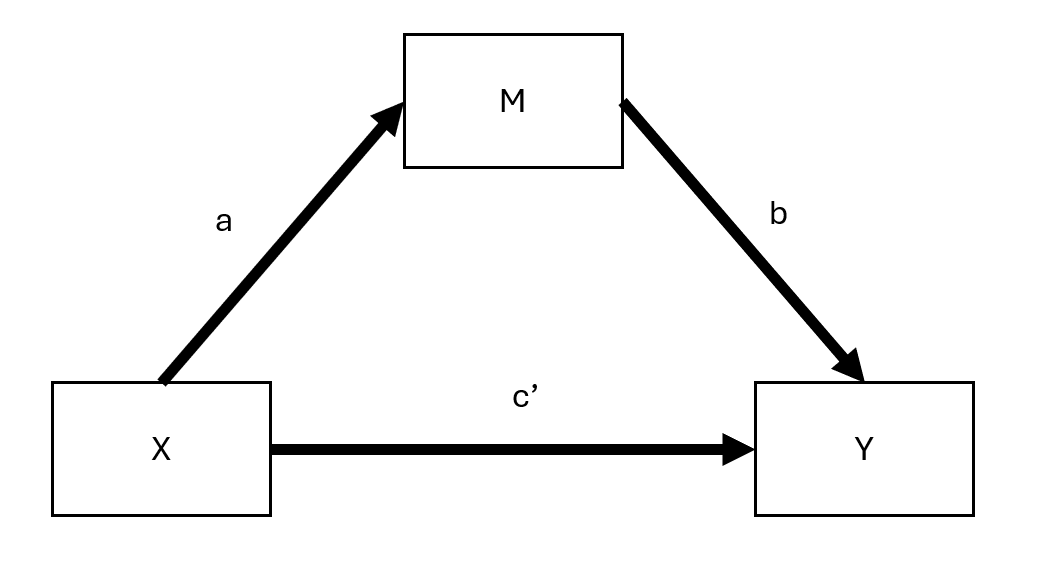

19.1.2.2 Mediated Model

Figure 19.2: Mediated Model

Here:

- \(c'\) = direct effect (effect of \(X\) on \(Y\) after accounting for mediation)

- \(ab\) = indirect effect (mediation pathway)

Thus, we can express:

\[ \text{total effect} = \text{direct effect} + \text{indirect effect} \]

or,

\[ c = c' + ab \]

This equation holds under standard linear models but not necessarily in cases such as:

- Latent variable models

- Logistic regression (only an approximation)

- Multilevel models (Bauer, Preacher, and Gil 2006)

19.1.3 Measuring Mediation

Several approaches exist for quantifying the indirect effect (\(ab\)):

Proportional Reduction Approach:

\[1 - \frac{c'}{c}\]

Not recommended due to high instability, especially when \(c\) is small (D. P. MacKinnon, Warsi, and Dwyer 1995).Product Method:

\[a \times b\]

The most common approach.Difference Method:

\[c - c'\]

Conceptually similar to the product method but less precise in small samples.

19.1.4 Assumptions in Linear Mediation Models

For valid mediation analysis, the following assumptions should hold:

- No unmeasured confounders between \(X-Y\), \(X-M\), and \(M-Y\).

- No reverse causality: \(X\) should not be influenced by a confounder (\(C\)) that also affects \(M-Y\).

- Measurement reliability: \(M\) should be measured without error (if not, consider errors-in-variables models).

Regression Equations for Mediation Steps

Step 1: Total Effect of \(X\) on \(Y\)

\[ Y = \beta_0 + cX + \epsilon \]

- The significance of \(c\) is not required for mediation to occur.

Step 2: Effect of \(X\) on \(M\)

\[ M = \alpha_0 + aX + \epsilon \]

- The coefficient \(a\) must be significant for mediation analysis to proceed.

Step 3: Effect of \(M\) on \(Y\) (Including \(X\))

\[ Y = \gamma_0 + c'X + bM + \epsilon \]

- If \(c'\) becomes non-significant after including \(M\), full mediation occurs.

- If \(c'\) is reduced but remains significant, partial mediation is present.

| Effect of \(X\) on \(Y\) | Mediation Type |

|---|---|

| \(b_4\) (from Step 3) is insignificant | Full mediation |

| \(b_4 < b_1\) (from Step 1) but still significant | Partial mediation |

19.1.5 Testing for Mediation

Several statistical tests exist to assess whether the indirect effect (\(ab\)) is significant:

- Sobel Test (Sobel 1982)

- Based on the standard error of \(ab\).

- Limitation: Assumes normality of \(ab\), which may not hold in small samples.

- Joint Significance Test

- If both \(a\) and \(b\) are significant, mediation is likely.

- Bootstrapping (Preferred) Shrout and Bolger (2002)

- Estimates the confidence interval for \(ab\).

- Does not assume normality.

- Recommended for small-to-moderate sample sizes.

19.1.6 Additional Considerations

- Proximal mediation (where path \(a\) exceeds path \(b\)) can lead to multicollinearity and reduced statistical power. In contrast, distal mediation (where path \(b\) exceeds path \(a\)) tends to maximize power. In fact, slightly distal mediators—where \(b\) is somewhat larger than \(a\)—often strike an ideal balance for power in mediation analyses (Hoyle 1999).

- Tests of direct effects (\(c\) and \(c'\)) generally have lower power than tests of the indirect effect (\(ab\)). As a result, it is possible for the indirect effect (\(ab\)) to be statistically significant even when the direct effect (\(c\)) is not. This situation can appear to indicate “complete mediation,” yet the lack of a statistically significant direct effect between \(X\) and \(Y\) (i.e., \(c'\)) does not definitively rule out other possibilities (Kenny and Judd 2014).

- Because testing \(ab\) essentially combines two tests, it often provides a power advantage over testing \(c'\) alone. However, using a non-significant \(c'\) as the sole criterion for claiming complete mediation should be done cautiously—if at all—given the importance of adequate sample size and power. Indeed, Hayes and Scharkow (2013) recommend avoiding claims of complete mediation based solely on a non-significant \(c'\), particularly when partial mediation may still be present.

19.1.7 Assumptions in Mediation Analysis

Valid mediation analysis requires several key assumptions, which can be categorized into causal direction, interaction effects, measurement reliability, and confounding control.

19.1.7.1 Direction

Causal Order of Variables

A simple but weak solution is to measure \(X\) before \(M\) and \(Y\) to prevent reverse causality (i.e., \(M\) or \(Y\) causing \(X\)). Similarly, measuring \(M\) before \(Y\) avoids feedback effects of \(Y\) on \(M\).

However, causal feedback loops between \(M\) and \(Y\) may still exist.

If we assume full mediation (\(c' = 0\)), models with reciprocal causal effects between \(M\) and \(Y\) can be estimated using instrumental variables (IV).

E. R. Smith (1982) suggests treating both \(M\) and \(Y\) as potential mediators of each other, requiring distinct instrumental variables for each to avoid cross-contamination of causal effects.

19.1.7.2 Interaction Effects in Mediation

If \(M\) interacts with \(X\) in predicting \(Y\), then \(M\) is both a mediator and a moderator (Baron and Kenny 1986).

The interaction term \(X \times M\) should always be included in the model to account for possible moderation effects.

For interpreting such interactions in mediation models, see (T. VanderWeele 2015), who provides a framework for moderated mediation analysis.

19.1.7.3 Reliability

Measurement error in any of the three key variables (\(X, M, Y\)) can bias estimates of mediation effects.

- Measurement Error in the Mediator (\(M\)):

- Biases both \(b\) and \(c'\).

- Potential solution: Model \(M\) as a latent variable (reduces bias but may decrease statistical power) (Ledgerwood and Shrout 2011).

- Specific effects:

- \(b\) is attenuated (biased toward 0).

- \(c'\) is:

- Overestimated if \(ab > 0\).

- Underestimated if \(ab < 0\).

- Measurement Error in the Treatment (\(X\)):

- Biases both \(a\) and \(b\).

- Specific effects:

- \(a\) is attenuated.

- \(b\) is:

- Overestimated if \(ac' > 0\).

- Underestimated if \(ac' < 0\).

- Measurement Error in the Outcome (\(Y\)):

- If unstandardized, there is no bias.

- If standardized, there is attenuation bias (reduced effect sizes due to error variance).

19.1.7.4 Confounding in Mediation Analysis

Omitted variable bias can distort any of the three core relationships (\(X \to Y\), \(X \to M\), \(M \to Y\)). Addressing confounding requires either design-based or statistical solutions.

Design-Based Strategies (Preferred if Feasible)

- Randomization of the independent variable (\(X\)) reduces confounding bias.

- Randomization of the mediator (\(M\)), if possible, further strengthens causal claims.

- Controlling for measured confounders, though this only addresses observable confounding.

Statistical Strategies (When Randomization is Not Possible)

- Instrumental Variables Approach:

- Used when a confounder affects both \(M\) and \(Y\).

- Front-door adjustment can be applied if there exists a third variable that fully mediates the effect of \(M\) on \(Y\) while being independent of the confounder.

- Weighting Methods (e.g., Inverse Probability Weighting - IPW):

- Corrects for confounding by reweighting observations to balance confounders across treatment groups.

- Requires the strong ignorability assumption: All confounders must be measured and correctly specified (Westfall and Yarkoni 2016).

- While this assumption cannot be formally tested, sensitivity analyses can help assess robustness.

- See Heiss for R code on implementing IPW in mediation models.

19.1.8 Indirect Effect Tests

Testing the indirect effect (\(ab\)) is crucial in mediation analysis. Several methods exist, each with its advantages and limitations.

19.1.8.1 Sobel Test (Delta Method)

- Developed by Sobel (1982).

- Also known as the delta method.

- Not recommended because it assumes the sampling distribution of \(ab\) is normal, which often does not hold (D. P. MacKinnon, Warsi, and Dwyer 1995).

The standard error (SE) of the indirect effect is:

\[ SE_{ab} = \sqrt{\hat{b}^2 s_{\hat{a}}^2 + \hat{a}^2 s_{\hat{b}}^2} \]

The Z-statistic for testing whether \(ab\) is significantly different from 0 is:

\[ z = \frac{\hat{ab}}{\sqrt{\hat{b}^2 s_{\hat{a}}^2 + \hat{a}^2 s_{\hat{b}}^2}} \]

Disadvantages

- Assumes \(a\) and \(b\) are independent.

- Assumes \(ab\) follows a normal distribution.

- Poor performance in small samples.

- Lower power and more conservative than bootstrapping.

Special Case: Inconsistent Mediation

- Mediation can occur even when direct and indirect effects have opposite signs, known as inconsistent mediation (D. P. MacKinnon, Fairchild, and Fritz 2007).

- This happens when the mediator acts as a suppressor variable, leading to counteracting paths.

library(bda)

library(mediation)

data("boundsdata")

# Sobel Test for Mediation

bda::mediation.test(boundsdata$med, boundsdata$ttt, boundsdata$out) |>

tibble::rownames_to_column() |>

causalverse::nice_tab(2)

#> rowname Sobel Aroian Goodman

#> 1 z.value 4.05 4.03 4.07

#> 2 p.value 0.00 0.00 0.0019.1.8.2 Joint Significance Test

- Tests if the indirect effect is nonzero by checking whether both \(a\) and \(b\) are statistically significant.

- Assumes \(a \perp b\) (independence of paths).

- Performs similarly to bootstrapping (Hayes and Scharkow 2013).

- More robust to non-normality but can be sensitive to heteroscedasticity (Fossum and Montoya 2023).

- Does not provide confidence intervals, making effect size interpretation harder.

19.1.8.3 Bootstrapping (Preferred Method)

- First applied to mediation by Bollen and Stine (1990).

- Uses resampling to empirically estimate the sampling distribution of the indirect effect.

- Does not require normality assumptions or \(a \perp b\) independence.

- Works well with small samples.

- Can handle complex models.

Which Bootstrapping Method?

- Percentile bootstrap is preferred due to better Type I error rates (Tibbe and Montoya 2022).

- Bias-corrected bootstrapping can be too liberal (inflates Type I errors) (Fritz, Taylor, and MacKinnon 2012).

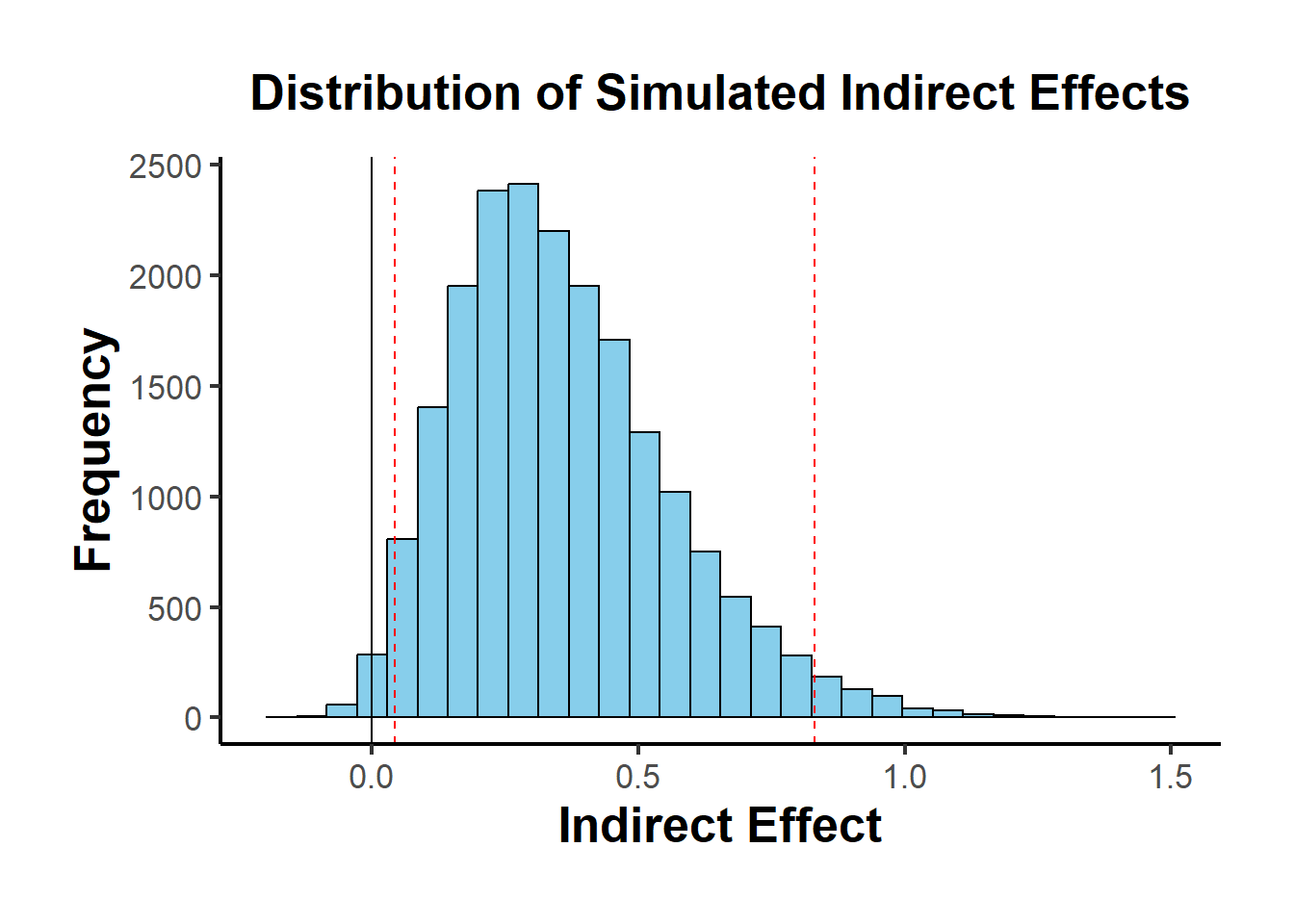

Special Case: Meta-Analytic Bootstrapping

- Bootstrapping can be applied without raw data, using only \(a, b, var(a), var(b), cov(a,b)\) from multiple studies.

# Meta-Analytic Bootstrapping for Mediation

library(causalverse)

result <- causalverse::med_ind(

a = 0.5,

b = 0.7,

var_a = 0.04,

var_b = 0.05,

cov_ab = 0.01

)

Figure 19.3: Distribution of Simulated Indirect Effects

When an instrumental variable (IV) is available, the causal effect can be estimated more reliably. Below are visual representations.

library(DiagrammeR)

# Simple Treatment-Outcome Model

grViz("

digraph {

graph []

node [shape = plaintext]

X [label = 'Treatment']

Y [label = 'Outcome']

edge [minlen = 2]

X->Y

{ rank = same; X; Y }

}")

# Mediation Model with an Instrument

grViz("

digraph {

graph []

node [shape = plaintext]

X [label ='Treatment', shape = box]

Y [label ='Outcome', shape = box]

M [label ='Mediator', shape = box]

IV [label ='Instrument', shape = box]

edge [minlen = 2]

IV->X

X->M

M->Y

X->Y

{ rank = same; X; Y; M }

}")Mediation Analysis with Fixed Effects Models

library(mediation)

library(fixest)

data("boundsdata")

# Step 1: Total Effect (c)

out1 <- feols(out ~ ttt, data = boundsdata)

# Step 2: Indirect Effect (a)

out2 <- feols(med ~ ttt, data = boundsdata)

# Step 3: Direct & Indirect Effect (c' & b)

out3 <- feols(out ~ med + ttt, data = boundsdata)

# Proportion of Mediation

coef(out2)['ttt'] * coef(out3)['med'] / coef(out1)['ttt'] * 100

#> ttt

#> 68.63609Bootstrapped Mediation Analysis

library(boot)

set.seed(1)

# Define the bootstrapping function

mediation_fn <- function(data, i) {

df <- data[i,]

a_path <- feols(med ~ ttt, data = df)

a <- coef(a_path)['ttt']

b_path <- feols(out ~ med + ttt, data = df)

b <- coef(b_path)['med']

cp <- coef(b_path)['ttt']

# Indirect Effect (a * b)

ind_ef <- a * b

total_ef <- a * b + cp

return(c(ind_ef, total_ef))

}

# Perform Bootstrapping

boot_med <- boot(boundsdata, mediation_fn, R = 100, parallel = "multicore", ncpus = 2)

boot_med

#>

#> ORDINARY NONPARAMETRIC BOOTSTRAP

#>

#>

#> Call:

#> boot(data = boundsdata, statistic = mediation_fn, R = 100, parallel = "multicore",

#> ncpus = 2)

#>

#>

#> Bootstrap Statistics :

#> original bias std. error

#> t1* 0.04112035 0.0006346725 0.009539903

#> t2* 0.05991068 -0.0004462572 0.029556611

# Summary and Confidence Intervals

summary(boot_med) |> causalverse::nice_tab()

#> R original bootBias bootSE bootMed

#> 1 100 0.04 0 0.01 0.04

#> 2 100 0.06 0 0.03 0.06

# Confidence Intervals (percentile bootstrap preferred)

boot.ci(boot_med, type = c("norm", "perc"))

#> BOOTSTRAP CONFIDENCE INTERVAL CALCULATIONS

#> Based on 100 bootstrap replicates

#>

#> CALL :

#> boot.ci(boot.out = boot_med, type = c("norm", "perc"))

#>

#> Intervals :

#> Level Normal Percentile

#> 95% ( 0.0218, 0.0592 ) ( 0.0249, 0.0623 )

#> Calculations and Intervals on Original Scale

#> Some percentile intervals may be unstable

# Point Estimates (Indirect and Total Effects)

colMeans(boot_med$t)

#> [1] 0.04175502 0.05946442Alternatively, use the robmed package for robust mediation analysis:

19.1.9 Power Analysis for Mediation

To assess whether the study has sufficient power to detect mediation effects, use:

library(pwr2ppl)

# Power analysis for the indirect effect (ab path)

medjs(

rx1m1 = .3, # Correlation: X → M (path a)

rx1y = .1, # Correlation: X → Y (path c')

rym1 = .3, # Correlation: M → Y (path b)

n = 100, # Sample size

alpha = 0.05,

mvars = 1, # Number of mediators

rep = 1000 # Replications (use 10,000 for accuracy)

)For interactive power analysis, see Kenny’s Mediation Power App.

Summary of Indirect Effect Tests

| Test | Pros | Cons |

|---|---|---|

| Sobel Test | Simple, fast | Assumes normality, low power |

| Joint Significance Test | Robust to non-normality | No confidence interval |

| Bootstrapping (Recommended) | No normality assumption, handles small samples | May be liberal if bias-corrected |

19.1.10 Multiple Mediation Analysis

In some cases, a single mediator (\(M\)) does not fully capture the indirect effect of \(X\) on \(Y\). Multiple mediation models extend traditional mediation by including two or more mediators, allowing us to examine how multiple pathways contribute to an outcome.

Several R packages handle multiple mediation models:

- manymome: A flexible package for multiple mediation modeling.

mma: Used for multiple mediator models.

19.1.10.1 Multiple Mediators: Structural Equation Modeling Approach

A popular method for estimating multiple mediation models is Structural Equation Modeling using lavaan.

To test multiple mediation, we first simulate data where two mediators (\(M_1\) and \(M_2\)) contribute to the outcome (\(Y\)).

# Load required packages

library(MASS) # For mvrnorm (generating correlated errors)

library(lavaan)

# Function to generate synthetic data

generate_data <- function(n = 10000, a1 = 0.5, a2 = -0.35,

b1 = 0.7, b2 = 0.48,

corr = TRUE, correlation_value = 0.7) {

set.seed(12345)

X <- rnorm(n) # Independent variable

# Generate correlated errors for mediators

if (corr) {

Sigma <- matrix(c(1, correlation_value, correlation_value, 1), nrow = 2)

errors <- mvrnorm(n, mu = c(0, 0), Sigma = Sigma)

} else {

errors <- mvrnorm(n, mu = c(0, 0), Sigma = diag(2))

}

M1 <- a1 * X + errors[, 1]

M2 <- a2 * X + errors[, 2]

Y <- b1 * M1 + b2 * M2 + rnorm(n) # Outcome variable

return(data.frame(X = X, M1 = M1, M2 = M2, Y = Y))

}We analyze the indirect effects through both mediators (\(M_1\) and \(M_2\)).

- Correctly Modeling Correlated Mediators

# Generate data with correlated mediators

Data_corr <- generate_data(n = 10000, corr = TRUE, correlation_value = 0.7)

# Define SEM model for multiple mediation

model_corr <- '

Y ~ b1 * M1 + b2 * M2 + c * X

M1 ~ a1 * X

M2 ~ a2 * X

M1 ~~ M2 # Correlated mediators (modeling correlation correctly)

'

# Fit SEM model

fit_corr <- sem(model_corr, data = Data_corr)

# Extract parameter estimates

parameterEstimates(fit_corr)[, c("lhs", "rhs", "est", "se", "pvalue")]

#> lhs rhs est se pvalue

#> 1 Y M1 0.700 0.014 0.000

#> 2 Y M2 0.487 0.014 0.000

#> 3 Y X -0.009 0.015 0.545

#> 4 M1 X 0.519 0.010 0.000

#> 5 M2 X -0.340 0.010 0.000

#> 6 M1 M2 0.677 0.012 0.000

#> 7 Y Y 0.975 0.014 0.000

#> 8 M1 M1 0.973 0.014 0.000

#> 9 M2 M2 0.982 0.014 0.000

#> 10 X X 1.000 0.000 NA2. Incorrectly Ignoring Correlation Between Mediators

# Define SEM model without modeling mediator correlation

model_uncorr <- '

Y ~ b1 * M1 + b2 * M2 + c * X

M1 ~ a1 * X

M2 ~ a2 * X

'

# Fit incorrect model

fit_uncorr <- sem(model_uncorr, data = Data_corr)

# Compare parameter estimates

parameterEstimates(fit_uncorr)[, c("lhs", "rhs", "est", "se", "pvalue")]

#> lhs rhs est se pvalue

#> 1 Y M1 0.700 0.010 0.000

#> 2 Y M2 0.487 0.010 0.000

#> 3 Y X -0.009 0.012 0.443

#> 4 M1 X 0.519 0.010 0.000

#> 5 M2 X -0.340 0.010 0.000

#> 6 Y Y 0.975 0.014 0.000

#> 7 M1 M1 0.973 0.014 0.000

#> 8 M2 M2 0.982 0.014 0.000

#> 9 X X 1.000 0.000 NAComparison of Model Fits

To check whether modeling correlation matters, we compare AIC and RMSEA.

# Extract model fit statistics

fit_measures <- function(fit) {

fitMeasures(fit, c("aic", "bic", "rmsea", "chisq"))

}

# Compare model fits

fit_measures(fit_corr) # Correct model (correlated mediators)

#> aic bic rmsea chisq

#> 77932.45 77997.34 0.00 0.00

fit_measures(fit_uncorr) # Incorrect model (ignores correlation)

#> aic bic rmsea chisq

#> 84453.208 84510.891 0.808 6522.762- If AIC and RMSEA are lower in the correlated model, it suggests that accounting for correlated errors improves fit.

After fitting the model, we assess:

Direct Effect: The effect of \(X\) on \(Y\) after accounting for both mediators (\(c'\)).

Indirect Effects:

\(a_1 \times b_1\): Effect of \(X \to M_1 \to Y\).

\(a_2 \times b_2\): Effect of \(X \to M_2 \to Y\).

Total Effect: Sum of direct and indirect effects.

# Extract indirect and direct effects

parameterEstimates(fit_corr, standardized = TRUE)

#> lhs op rhs label est se z pvalue ci.lower ci.upper std.lv

#> 1 Y ~ M1 b1 0.700 0.014 50.489 0.000 0.673 0.727 0.700

#> 2 Y ~ M2 b2 0.487 0.014 35.284 0.000 0.460 0.514 0.487

#> 3 Y ~ X c -0.009 0.015 -0.606 0.545 -0.038 0.020 -0.009

#> 4 M1 ~ X a1 0.519 0.010 52.563 0.000 0.499 0.538 0.519

#> 5 M2 ~ X a2 -0.340 0.010 -34.314 0.000 -0.360 -0.321 -0.340

#> 6 M1 ~~ M2 0.677 0.012 56.915 0.000 0.654 0.700 0.677

#> 7 Y ~~ Y 0.975 0.014 70.711 0.000 0.948 1.002 0.975

#> 8 M1 ~~ M1 0.973 0.014 70.711 0.000 0.946 1.000 0.973

#> 9 M2 ~~ M2 0.982 0.014 70.711 0.000 0.955 1.010 0.982

#> 10 X ~~ X 1.000 0.000 NA NA 1.000 1.000 1.000

#> std.all std.nox

#> 1 0.528 0.528

#> 2 0.345 0.345

#> 3 -0.006 -0.006

#> 4 0.465 0.465

#> 5 -0.325 -0.325

#> 6 0.692 0.692

#> 7 0.447 0.447

#> 8 0.784 0.784

#> 9 0.895 0.895

#> 10 1.000 1.000If \(c'\) is reduced (but still significant), we have partial mediation. If \(c' \approx 0\), it suggests full mediation.

# Load required packages

library(MASS) # for mvrnorm

library(lavaan)

# Function to generate synthetic data with correctly correlated errors for mediators

generate_data <-

function(n = 10000,

a1 = 0.5,

a2 = -0.35,

b1 = 0.7,

b2 = 0.48,

corr = TRUE,

correlation_value = 0.7) {

set.seed(12345)

X <- rnorm(n)

# Generate correlated errors using a multivariate normal distribution

if (corr) {

Sigma <- matrix(c(1, correlation_value, correlation_value, 1), nrow = 2) # Higher covariance matrix for errors

errors <- mvrnorm(n, mu = c(0, 0), Sigma = Sigma) # Generate correlated errors

} else {

errors <- mvrnorm(n, mu = c(0, 0), Sigma = diag(2)) # Independent errors

}

M1 <- a1 * X + errors[, 1]

M2 <- a2 * X + errors[, 2]

Y <- b1 * M1 + b2 * M2 + rnorm(n) # Y depends on M1 and M2

data.frame(X = X, M1 = M1, M2 = M2, Y = Y)

}

# Ground truth for comparison

ground_truth <- data.frame(Parameter = c("b1", "b2"), GroundTruth = c(0.7, 0.48))

# Function to extract relevant estimates, standard errors, and model fit

extract_estimates_b1_b2 <- function(fit) {

estimates <- parameterEstimates(fit)

estimates <- estimates[estimates$lhs == "Y" & estimates$rhs %in% c("M1", "M2"), c("rhs", "est", "se")]

estimates$Parameter <- ifelse(estimates$rhs == "M1", "b1", "b2")

estimates <- estimates[, c("Parameter", "est", "se")]

fit_stats <- fitMeasures(fit, c("aic", "bic", "rmsea", "chisq"))

return(list(estimates = estimates, fit_stats = fit_stats))

}

# Case 1: Correlated errors for mediators (modeled correctly)

Data_corr <- generate_data(n = 10000, corr = TRUE, correlation_value = 0.7)

model_corr <- '

Y ~ b1 * M1 + b2 * M2 + c * X

M1 ~ a1 * X

M2 ~ a2 * X

M1 ~~ M2 # Correlated mediators (errors)

'

fit_corr <- sem(model = model_corr, data = Data_corr)

results_corr <- extract_estimates_b1_b2(fit_corr)

# Case 2: Uncorrelated errors for mediators (modeled correctly)

Data_uncorr <- generate_data(n = 10000, corr = FALSE)

model_uncorr <- '

Y ~ b1 * M1 + b2 * M2 + c * X

M1 ~ a1 * X

M2 ~ a2 * X

'

fit_uncorr <- sem(model = model_uncorr, data = Data_uncorr)

results_uncorr <- extract_estimates_b1_b2(fit_uncorr)

# Case 3: Correlated errors, but not modeled as correlated

fit_corr_incorrect <- sem(model = model_uncorr, data = Data_corr)

results_corr_incorrect <- extract_estimates_b1_b2(fit_corr_incorrect)

# Case 4: Uncorrelated errors, but modeled as correlated

fit_uncorr_incorrect <- sem(model = model_corr, data = Data_uncorr)

results_uncorr_incorrect <- extract_estimates_b1_b2(fit_uncorr_incorrect)

# Combine all estimates for comparison

estimates_combined <- list(

"Correlated (Correct)" = results_corr$estimates,

"Uncorrelated (Correct)" = results_uncorr$estimates,

"Correlated (Incorrect)" = results_corr_incorrect$estimates,

"Uncorrelated (Incorrect)" = results_uncorr_incorrect$estimates

)

# Combine all into a single table

comparison_table <- do.call(rbind, lapply(names(estimates_combined), function(case) {

df <- estimates_combined[[case]]

df$Case <- case

df

}))

# Merge with ground truth for final comparison

comparison_table <- merge(comparison_table, ground_truth, by = "Parameter")

# Display the comparison table

comparison_table

#> Parameter est se Case GroundTruth

#> 1 b1 0.7002984 0.013870433 Correlated (Correct) 0.70

#> 2 b1 0.6973612 0.009859426 Uncorrelated (Correct) 0.70

#> 3 b1 0.7002984 0.010010367 Correlated (Incorrect) 0.70

#> 4 b1 0.6973612 0.009859634 Uncorrelated (Incorrect) 0.70

#> 5 b2 0.4871118 0.013805615 Correlated (Correct) 0.48

#> 6 b2 0.4868318 0.010009908 Uncorrelated (Correct) 0.48

#> 7 b2 0.4871118 0.009963588 Correlated (Incorrect) 0.48

#> 8 b2 0.4868318 0.010010119 Uncorrelated (Incorrect) 0.48

# Display model fit statistics for each case

fit_stats_combined <- list(

"Correlated (Correct)" = results_corr$fit_stats,

"Uncorrelated (Correct)" = results_uncorr$fit_stats,

"Correlated (Incorrect)" = results_corr_incorrect$fit_stats,

"Uncorrelated (Incorrect)" = results_uncorr_incorrect$fit_stats

)

fit_stats_combined

#> $`Correlated (Correct)`

#> aic bic rmsea chisq

#> 77932.45 77997.34 0.00 0.00

#>

#> $`Uncorrelated (Correct)`

#> aic bic rmsea chisq

#> 84664.312 84721.995 0.000 0.421

#>

#> $`Correlated (Incorrect)`

#> aic bic rmsea chisq

#> 84453.208 84510.891 0.808 6522.762

#>

#> $`Uncorrelated (Incorrect)`

#> aic bic rmsea chisq

#> 84665.89 84730.78 0.00 0.0019.1.11 Multiple Treatments in Mediation

In some cases, multiple independent variables (\(X_1\), \(X_2\)) influence the same mediators. This is called multiple treatments mediation (Hayes and Preacher 2014).

For an example in PROCESS (SPSS/R), see:

Process Mediation with Multiple Treatments.