30 Difference-in-Differences

Difference-in-Differences (DID) is a widely used causal inference method for estimating the effect of policy interventions or exogenous shocks when randomized experiments are not feasible. The key idea behind DID is to compare changes in outcomes over time between treated and control groups, under the assumption that, absent treatment, both groups would have followed parallel trends.

The method thrives in business analytics because transaction logs, panel dashboards, and quarterly filings already furnish the required data. Marketers appreciate its story-telling clarity, and finance analysts value its ability to control for economy-wide shocks without heavy modeling.

Why Analysts Love DID?

- Intuitive visuals – A two-line plot can immediately signal whether pre-treatment trends look parallel.

- Light data demands – Only two time points and a clear treatment onset are needed.

- Extendable – Regression frameworks let you handle staggered treatments, multiple periods, or continuous exposures.

- Transparent – Assumptions and identifying variation are easy to communicate to executives, regulators, or reviewers.

DID analysis can go beyond simple treatment effects by exploring causal mechanisms using mediation and moderation analyses:

- Mediation Under DiD: Examines how intermediate variables (e.g., consumer sentiment, brand perception) mediate the treatment effect (Habel, Alavi, and Linsenmayer 2021).

- Moderation Analysis: Studies how treatment effects vary across different groups (e.g., high vs. low brand loyalty) (Goldfarb and Tucker 2011).

Throughout this chapter you’ll learn to implement DID, diagnose its assumptions, and defend your findings in a boardroom or peer-review letter. Real-world cases will keep the discussion grounded.

And don’t worry: the equations stay in the wings until the intuition takes center stage.

30.1 Empirical Studies

30.1.1 Applications of DID in Marketing

DID has been extensively applied in marketing and business research to measure the impact of policy changes, advertising campaigns, and competitive actions. Below are several notable examples:

- TV Advertising & Online Shopping (Liaukonyte, Teixeira, and Wilbur 2015): Examines how TV ads influence consumer behavior in online shopping.

- Political Advertising & Voting Behavior (Wang, Lewis, and Schweidel 2018): Uses geographic discontinuities at state borders to analyze how ad sources and tone affect voter turnout.

- Music Streaming & Consumption (Datta, Knox, and Bronnenberg 2018): Investigates how adopting a music streaming service affects total music consumption.

- Data Breaches & Customer Spending (Janakiraman, Lim, and Rishika 2018): Analyzes how customer spending changes after a firm announces a data breach.

- Price Monitoring & Policy Enforcement (Israeli 2018): Studies the effect of digital monitoring on minimum advertised price policy enforcement.

- Foreign Direct Investment & Firm Responses (Ramani and Srinivasan 2019): Examines how firms in India responded to FDI liberalization reforms in 1991.

- Paywalls & Readership (Pattabhiramaiah, Sriram, and Manchanda 2019): Investigates how implementing paywalls affects online news consumption.

- Aggregators & Airline Business (Akca and Rao 2020): Evaluates how online aggregators impact airline ticket sales.

- Nutritional Labels & Competitive Response (Lim et al. 2020): Analyzes whether nutrition labels affect the nutritional quality of competing brands.

- Payment Disclosure & Physician Behavior (Guo, Sriram, and Manchanda 2020): Studies how payment disclosure laws impact prescription behavior.

- Fake Reviews & Sales (S. He, Hollenbeck, and Proserpio 2022): Uses an Amazon policy change to measure the effect of fake reviews on sales and ratings.

- Data Protection Regulations & Website Usage (Peukert et al. 2022): Assesses the impact of GDPR regulations on website usage and online business models.

30.1.2 Applications of DID in Economics

DID has also been extensively applied in economics, particularly in policy evaluation, labor economics, and macroeconomics:

- Natural Experiments in Development Economics (Rosenzweig and Wolpin 2000)

- Instrumental Variables & Natural Experiments (J. D. Angrist and Krueger 2001)

- DID in Macroeconomic Policy Analysis (Fuchs-Schündeln and Hassan 2016)

30.2 Visualization

Before diving into estimation, it is always wise to (i) confirm the treatment pattern and (ii) eyeball the outcomes.

The panelView package offers quick heatmaps and outcome traces that make these checks painless.

30.2.1 Data check

library(panelView)

library(fixest)

library(tidyverse)

base_stagg <- fixest::base_stagg |>

# treatment status

dplyr::mutate(treat_stat = dplyr::if_else(time_to_treatment < 0, 0, 1)) |>

select(id, year, treat_stat, y)

head(base_stagg)

#> id year treat_stat y

#> 2 90 1 0 0.01722971

#> 3 89 1 0 -4.58084528

#> 4 88 1 0 2.73817174

#> 5 87 1 0 -0.65103066

#> 6 86 1 0 -5.33381664

#> 7 85 1 0 0.4956263130.2.2 Treatment Assignment Heatmap

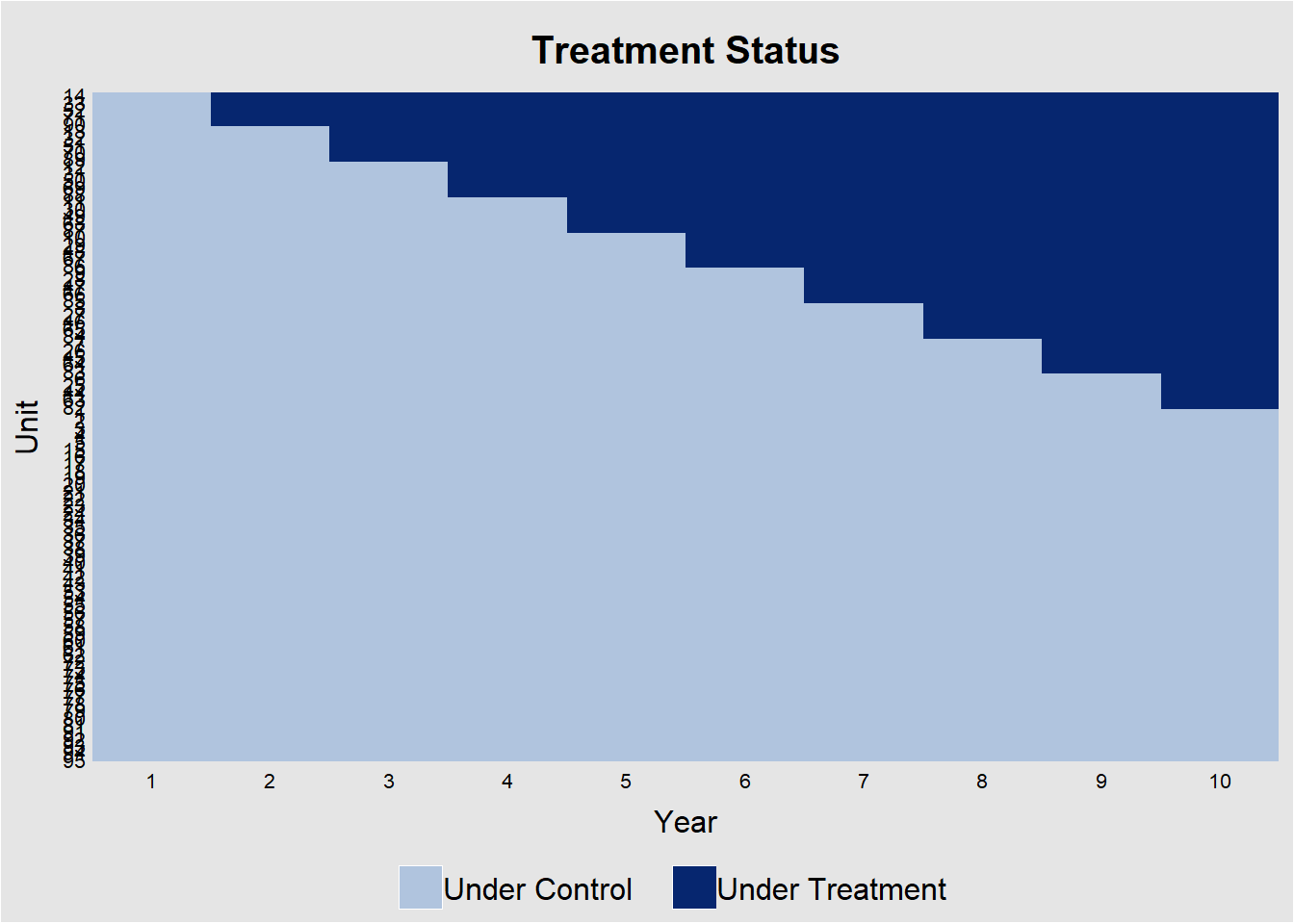

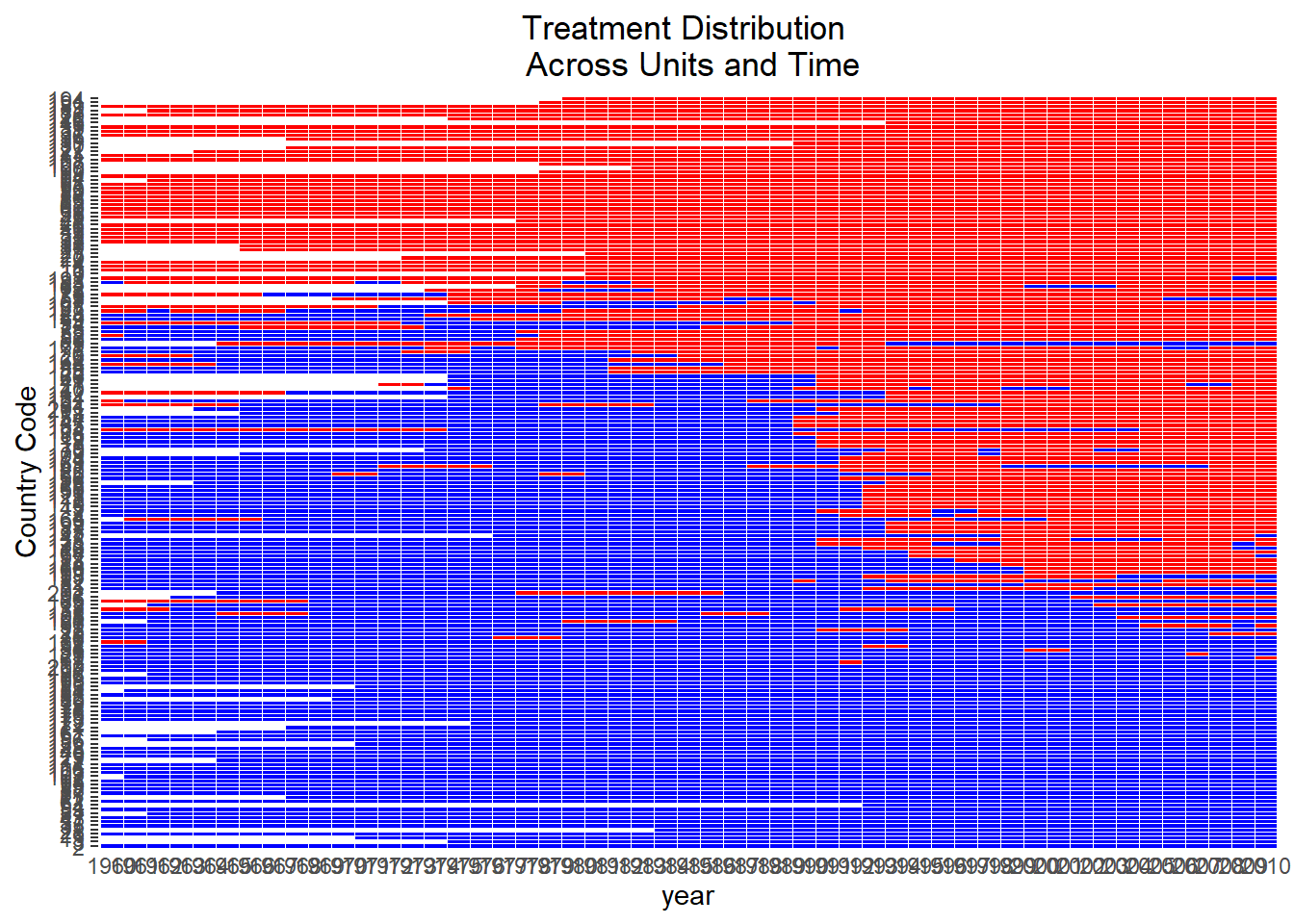

Figure 30.1 shows the heatmap of treatment status for each unit over 10 years.

panelView::panelview(

y ~ treat_stat,

data = base_stagg,

index = c("id", "year"),

xlab = "Year",

ylab = "Unit",

display.all = F,

gridOff = T,

by.timing = T

)

Figure 30.1: Treatment Assignment Over Time by Unit

The diagonal “step” confirms that not all units adopt at once. This would be a perfect for a staggered-DiD design. Horizontal segments without a color change indicate units that never adopt.

Alternatively, specifying the outcome and treatment status will also return the exact figure (Figure 30.2)

# alternatively specification

panelView::panelview(

Y = "y",

D = "treat_stat",

data = base_stagg,

index = c("id", "year"),

xlab = "Year",

ylab = "Unit",

display.all = F,

gridOff = T,

by.timing = T

)

Figure 30.2: Staggered Treatment Timing Across Units

30.2.3 Raw Outcome Trajectories

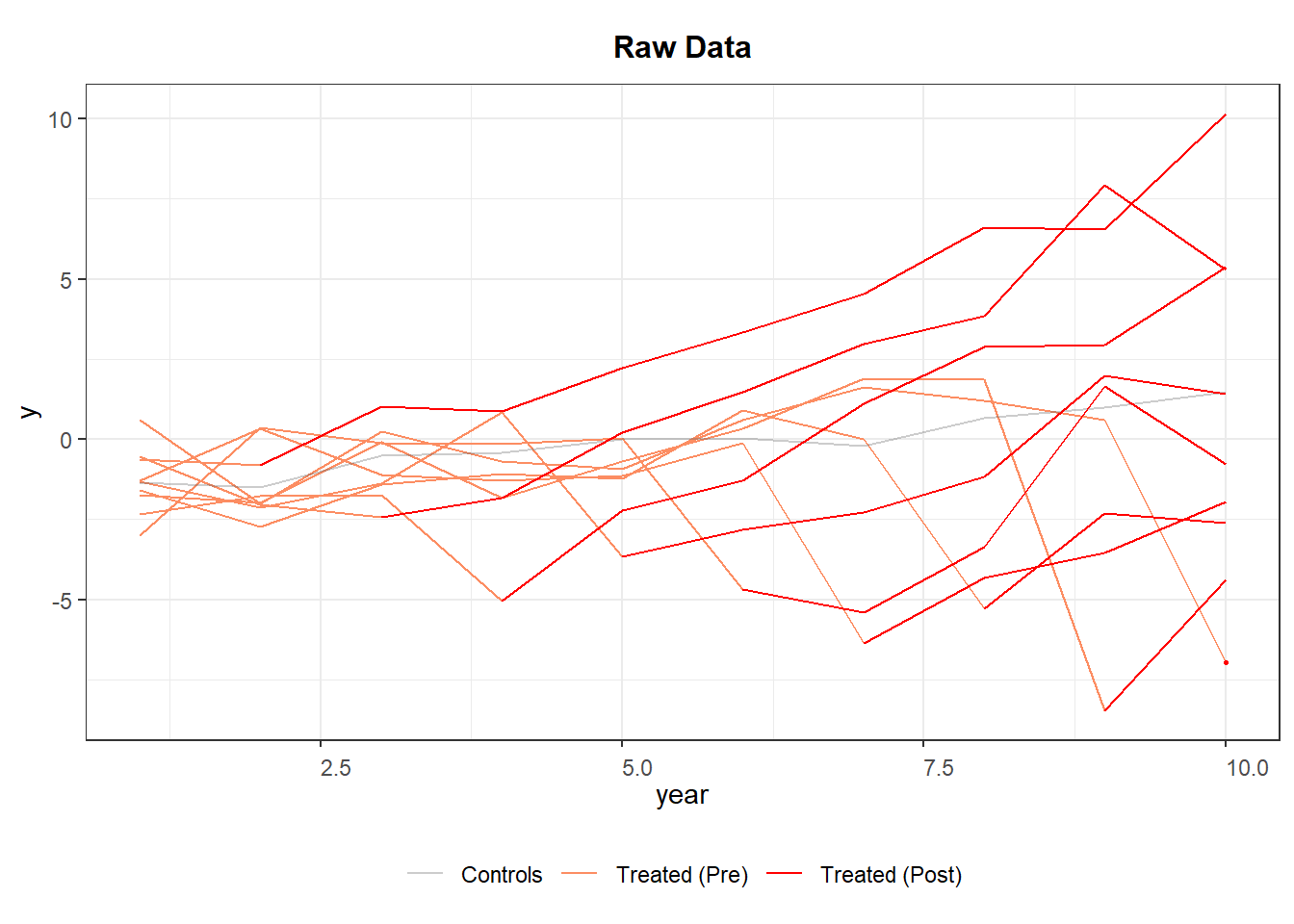

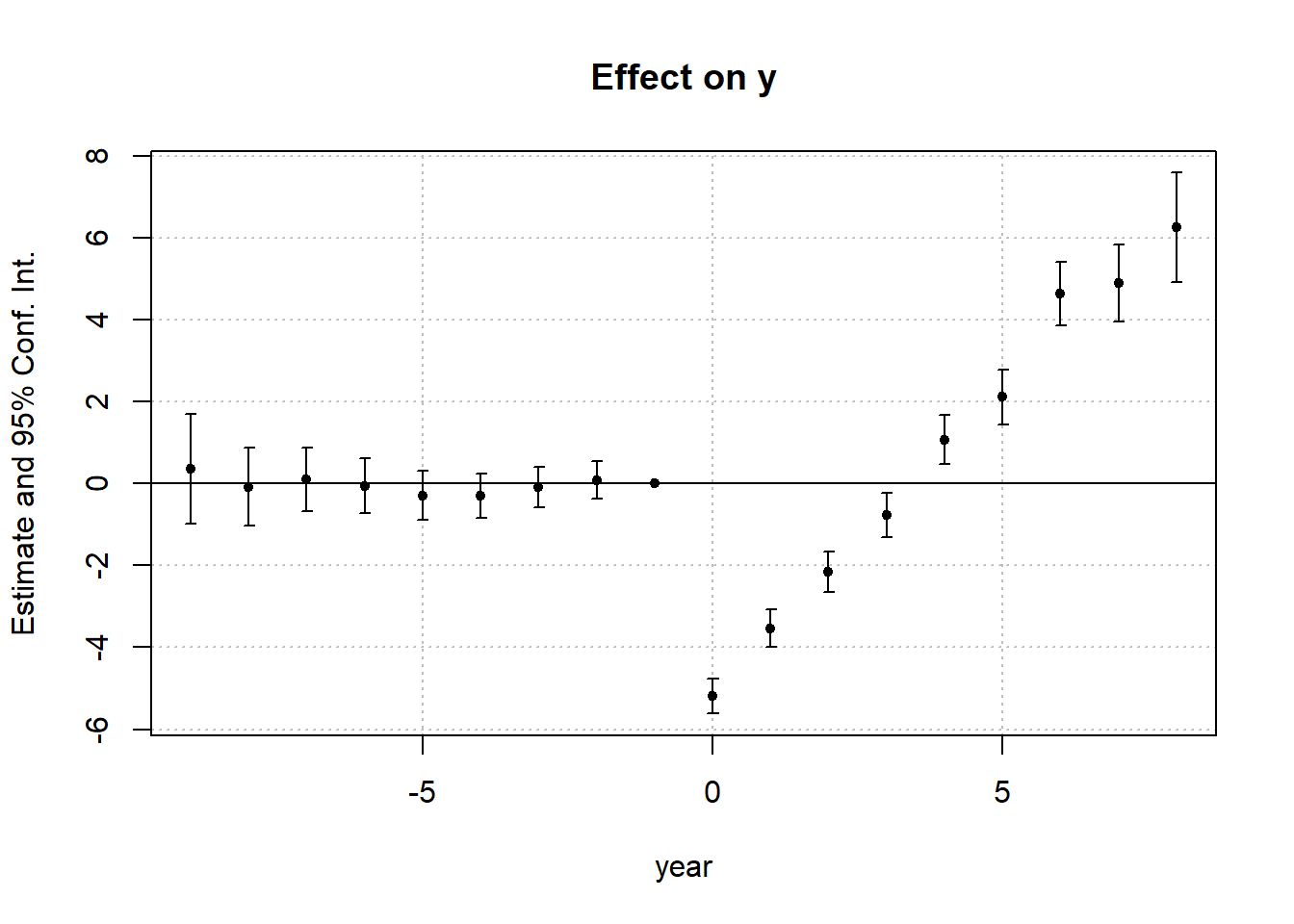

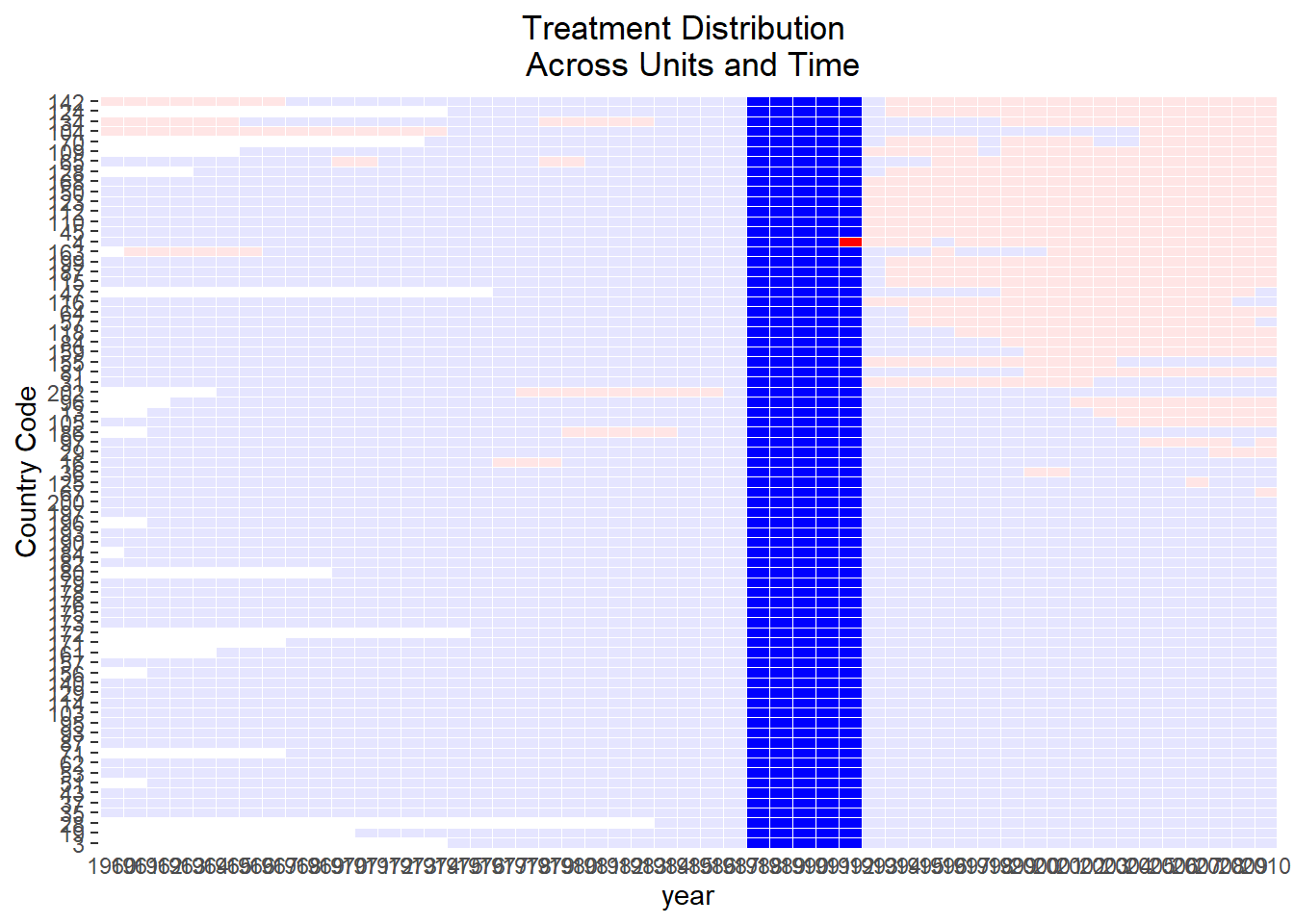

Figure 30.3 shows the trajectories of different cohorts overtime.

# Average outcomes for each cohort

panelView::panelview(

data = base_stagg,

Y = "y",

D = "treat_stat",

index = c("id", "year"),

by.timing = T,

display.all = F,

type = "outcome",

by.cohort = T

)

#> Number of unique treatment histories: 10

Figure 30.3: Raw Panel Data by Treatment Status Over Time

If the red segments diverge immediately after treatment while the orange segments blend with gray beforehand, the visual evidence is supportive of a treatment effect and parallel pre-trends.

30.2.4 Event-time Averages

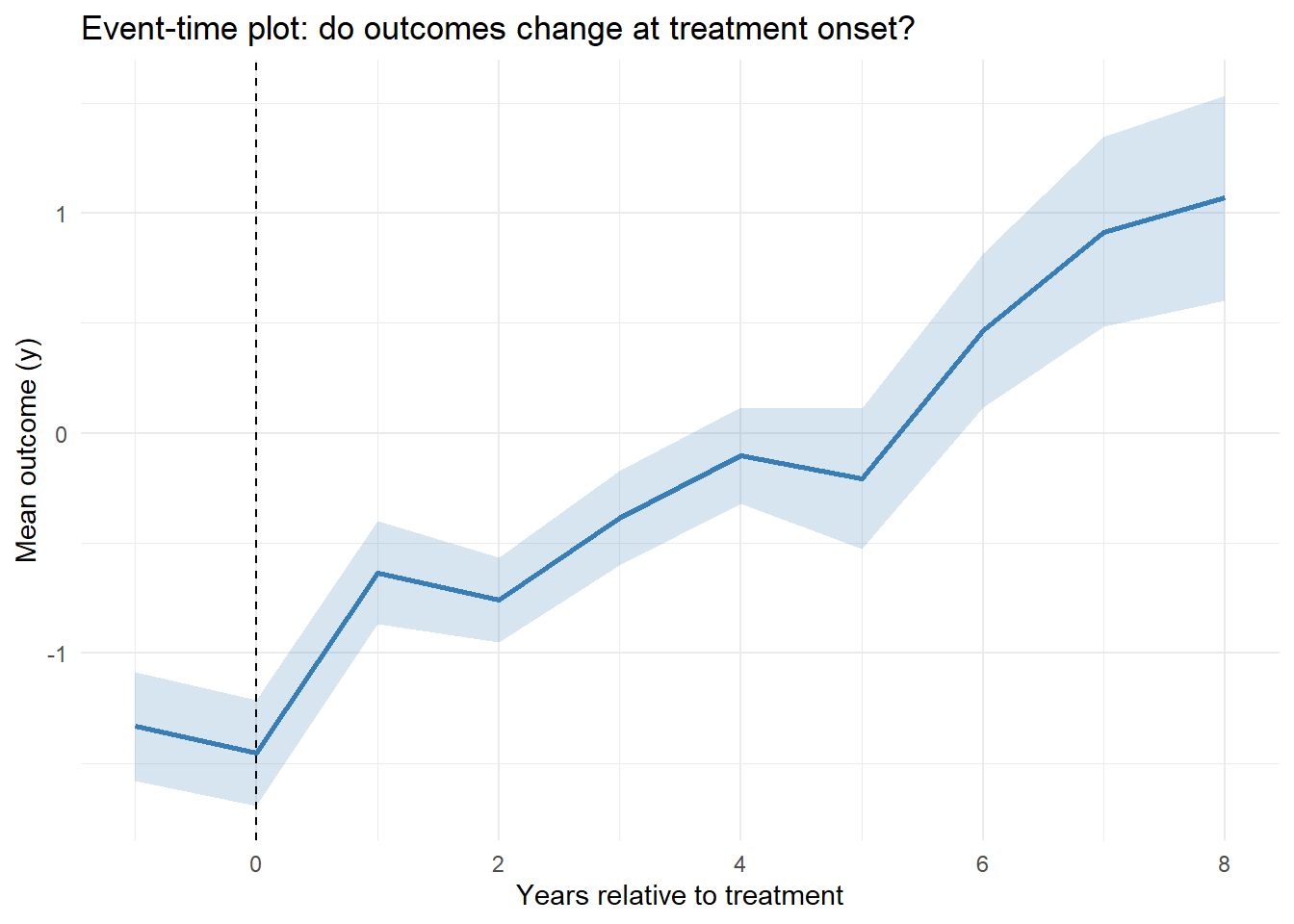

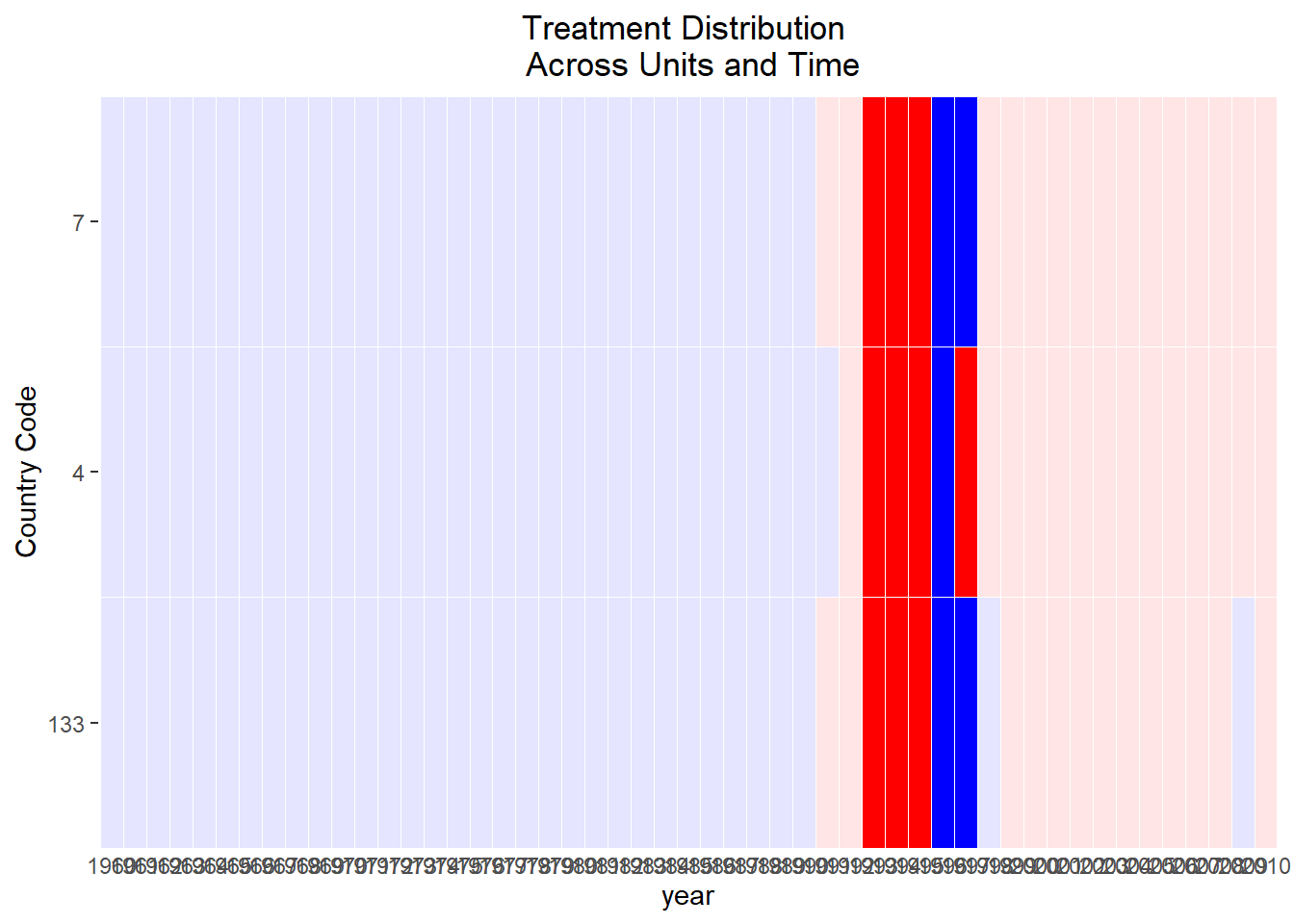

A more focused diagnostic is to plot the average outcome in event time (years relative to first treatment) (Figure 30.4).

base_stagg |>

group_by(event_time = year - min(year[treat_stat == 1])) |>

summarise(y_mean = mean(y),

se = sd(y) / sqrt(n())) |>

ggplot(aes(event_time, y_mean)) +

geom_line(color = "#377eb8", linewidth = 1) +

geom_ribbon(aes(ymin = y_mean - se, ymax = y_mean + se),

fill = "#377eb8",

alpha = .2) +

geom_vline(xintercept = 0, linetype = "dashed") +

labs(x = "Years relative to treatment",

y = "Mean outcome (y)",

title = "Event-time plot: do outcomes change at treatment onset?") +

theme_minimal()

Figure 30.4: Event Time Averages

A flat pre-trend (negative event times) and a noticeable jump at event-time 0 support the identifying assumptions for staggered DiD.

30.3 Simple Difference-in-Differences

DID first emerged as an econometric workhorse for natural experiments, settings where policy shocks or geographic quirks mimic random assignment. Since then, its reach has grown dramatically: marketing A/B roll-outs, corporate ESG mandates, even the staggered release of a mobile-app feature now routinely call on DID for credible impact estimates.

At its computational heart lies the Fixed Effects Estimator, which sweeps out any time-invariant heterogeneity across units and any shocks common to all periods—making DID a cornerstone technique for causal inference in observational data.

DID cleverly harnesses inter-temporal variation between groups in two complementary ways to fight omitted variable bias:

- Cross-sectional comparison: Compares treated and control units at the same point in time, canceling bias from shocks that hit both groups equally (e.g., nationwide inflation). This helps avoid omitted variable bias due to common trends.

- Time-series comparison: Tracks the same unit over time, purging bias from any fixed, unit-specific traits (e.g., a chain’s brand equity, a region’s climate). This helps mitigate omitted variable bias due to cross-sectional heterogeneity.

By taking the difference of differences, we simultaneously:

- Remove common trends that could confound a simple cross-sectional comparison.

- Eliminate unit-specific constants that would spoil a pure time-series analysis.

30.3.1 Basic Setup of DID

Consider a simple setting in Table 30.1 with:

- Treatment Group (\(D_i = 1\))

- Control Group (\(D_i = 0\))

- Pre-Treatment Period (\(T = 0\))

- Post-Treatment Period (\(T = 1\))

| After Treatment (\(T = 1\)) | Before Treatment (\(T = 0\)) | |

|---|---|---|

| Treated (\(D_i = 1\)) | \(E[Y_{1i}(1)|D_i = 1]\) | \(E[Y_{0i}(0)|D_i = 1]\) |

| Control (\(D_i = 0\)) | \(E[Y_{0i}(1)|D_i = 0]\) | \(E[Y_{0i}(0)|D_i = 0]\) |

The fundamental challenge: We cannot observe \(E[Y_{0i}(1)|D_i = 1]\) (i.e., the counterfactual outcome for the treated group had they not received treatment).

DID estimates the Average Treatment Effect on the Treated using the following formula:

\[ \begin{aligned} E[Y_1(1) - Y_0(1) | D = 1] &= \{E[Y(1)|D = 1] - E[Y(1)|D = 0] \} \\ &- \{E[Y(0)|D = 1] - E[Y(0)|D = 0] \} \end{aligned} \]

This formulation differences out time-invariant unobserved factors, assuming the parallel trends assumption holds.

- For the treated group, we isolate the difference between being treated and not being treated.

- If the control group would have experienced a different trajectory, the DID estimate may be biased.

- Since we cannot observe treatment variation in the control group, we cannot infer the treatment effect for this group.

# Load required libraries

library(dplyr)

library(ggplot2)

set.seed(1)

# Simulated dataset for illustration

data <- data.frame(

time = rep(c(0, 1), each = 50), # Pre (0) and Post (1)

treated = rep(c(0, 1), times = 50), # Control (0) and Treated (1)

error = rnorm(100)

)

# Generate outcome variable

data$outcome <-

5 + 3 * data$treated + 2 * data$time +

4 * data$treated * data$time + data$error

# Compute averages for 2x2 table

table_means <- data %>%

group_by(treated, time) %>%

summarize(mean_outcome = mean(outcome), .groups = "drop") %>%

mutate(

group = paste0(ifelse(treated == 1, "Treated", "Control"), ", ",

ifelse(time == 1, "Post", "Pre"))

)

# Display the 2x2 table

table_2x2 <- table_means %>%

select(group, mean_outcome) %>%

tidyr::spread(key = group, value = mean_outcome)

print("2x2 Table of Mean Outcomes:")

#> [1] "2x2 Table of Mean Outcomes:"

print(table_2x2)

#> # A tibble: 1 × 4

#> `Control, Post` `Control, Pre` `Treated, Post` `Treated, Pre`

#> <dbl> <dbl> <dbl> <dbl>

#> 1 7.19 5.20 14.0 8.00

# Calculate Diff-in-Diff manually

# Treated, Post

Y11 <- table_means$mean_outcome[table_means$group == "Treated, Post"]

# Treated, Pre

Y10 <- table_means$mean_outcome[table_means$group == "Treated, Pre"]

# Control, Post

Y01 <- table_means$mean_outcome[table_means$group == "Control, Post"]

# Control, Pre

Y00 <- table_means$mean_outcome[table_means$group == "Control, Pre"]

diff_in_diff_formula <- (Y11 - Y10) - (Y01 - Y00)

# Estimate DID using OLS

model <- lm(outcome ~ treated * time, data = data)

ols_estimate <- coef(model)["treated:time"]

# Print results

results <- data.frame(

Method = c("Diff-in-Diff Formula", "OLS Estimate"),

Estimate = c(diff_in_diff_formula, ols_estimate)

)

print("Comparison of DID Estimates:")

#> [1] "Comparison of DID Estimates:"

print(results)

#> Method Estimate

#> Diff-in-Diff Formula 4.035895

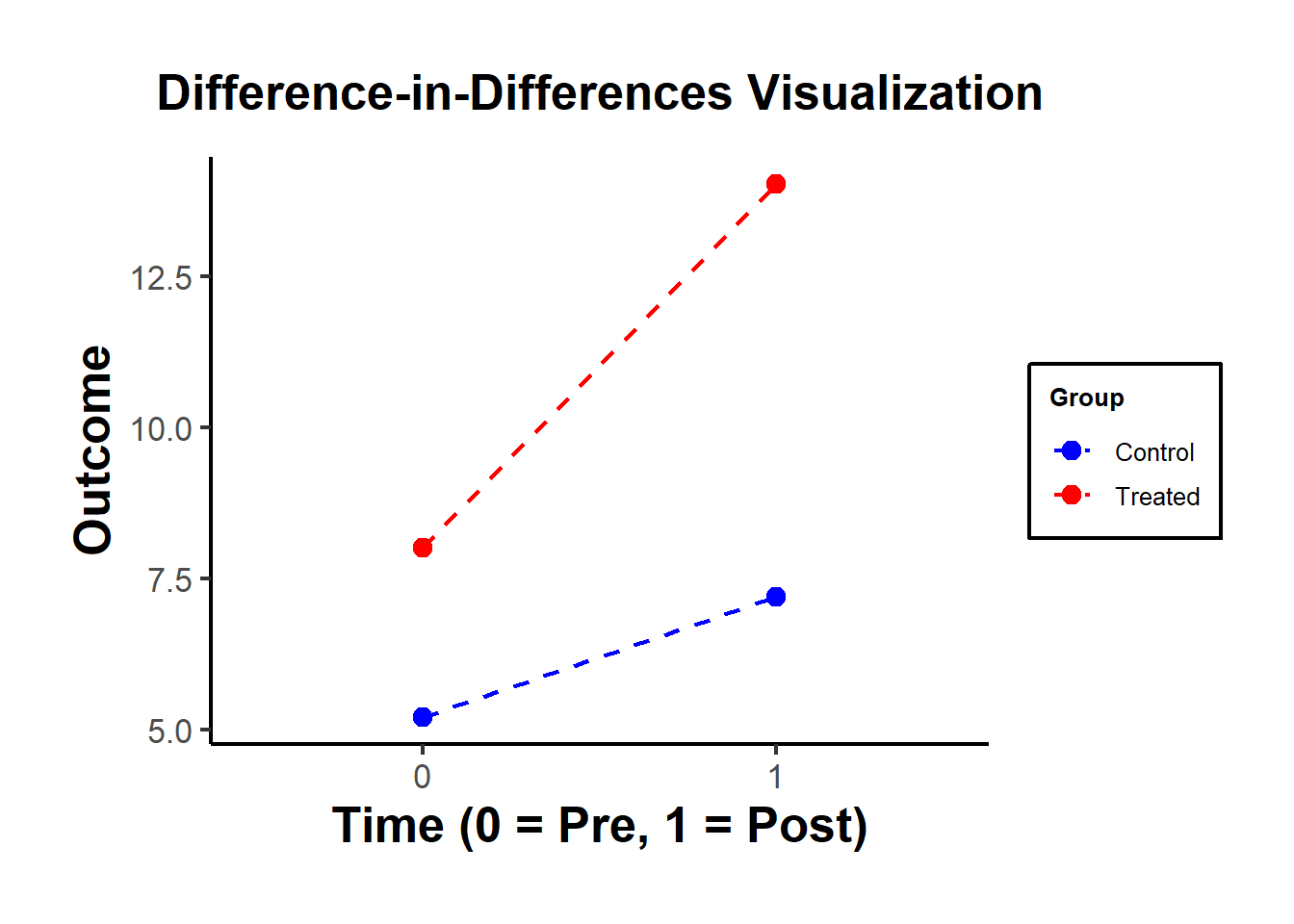

#> treated:time OLS Estimate 4.035895Figure 30.5 shows a simple visualization of the DID in practice.

# Visualization

ggplot(data,

aes(

x = as.factor(time),

y = outcome,

color = as.factor(treated),

group = treated

)) +

stat_summary(fun = mean, geom = "point", size = 3) +

stat_summary(fun = mean,

geom = "line",

linetype = "dashed") +

labs(

title = "Difference-in-Differences Visualization",

x = "Time (0 = Pre, 1 = Post)",

y = "Outcome",

color = "Group"

) +

scale_color_manual(labels = c("Control", "Treated"),

values = c("blue", "red")) +

causalverse::ama_theme()

Figure 30.5: DiD Visualization

| Control (0) | Treated (1) | |

|---|---|---|

| Pre (0) | \(\bar{Y}_{00} = 5\) | \(\bar{Y}_{10} = 8\) |

| Post (1) | \(\bar{Y}_{01} = 7\) | \(\bar{Y}_{11} = 14\) |

Table 30.2 organizes the mean outcomes into four cells:

Control Group, Pre-period (\(\bar{Y}_{00}\)): Mean outcome for the control group before the intervention.

Control Group, Post-period (\(\bar{Y}_{01}\)): Mean outcome for the control group after the intervention.

Treated Group, Pre-period (\(\bar{Y}_{10}\)): Mean outcome for the treated group before the intervention.

Treated Group, Post-period (\(\bar{Y}_{11}\)): Mean outcome for the treated group after the intervention.

The DID treatment effect calculated from the simple formula of averages is identical to the estimate from an OLS regression with an interaction term.

The treatment effect is calculated as:

\(\text{DID} = (\bar{Y}_{11} - \bar{Y}_{10}) - (\bar{Y}_{01} - \bar{Y}_{00})\)

Compute manually:

\((\bar{Y}_{11} - \bar{Y}_{10}) - (\bar{Y}_{01} - \bar{Y}_{00})\)

Use OLS regression:

\(Y_{it} = \beta_0 + \beta_1 \text{treated}_i + \beta_2 \text{time}_t + \beta_3 (\text{treated}_i \cdot \text{time}_t) + \epsilon_{it}\)

Using the simulated table:

\(\text{DID} = (14 - 8) - (7 - 5) = 6 - 2 = 4\)

This matches the interaction term coefficient (\(\beta_3 = 4\)) from the OLS regression.

Both methods give the same result!

30.3.2 Extensions of DID

30.3.2.1 DID with More Than Two Groups or Time Periods

DID can be extended to multiple treatments, multiple controls, and more than two periods:

\[ Y_{igt} = \alpha_g + \gamma_t + \beta I_{gt} + \delta X_{igt} + \epsilon_{igt} \]

where:

\(\alpha_g\) = Group-Specific Fixed Effects (e.g., firm, region).

\(\gamma_t\) = Time-Specific Fixed Effects (e.g., year, quarter).

\(\beta\) = DID Effect.

\(I_{gt}\) = Interaction Terms (Treatment × Post-Treatment).

\(\delta X_{igt}\) = Additional Covariates.

This is known as the Two-Way Fixed Effects DID model. However, TWFE performs poorly under staggered treatment adoption, where different groups receive treatment at different times.

30.3.2.2 Examining Long-Term Effects (Dynamic DID)

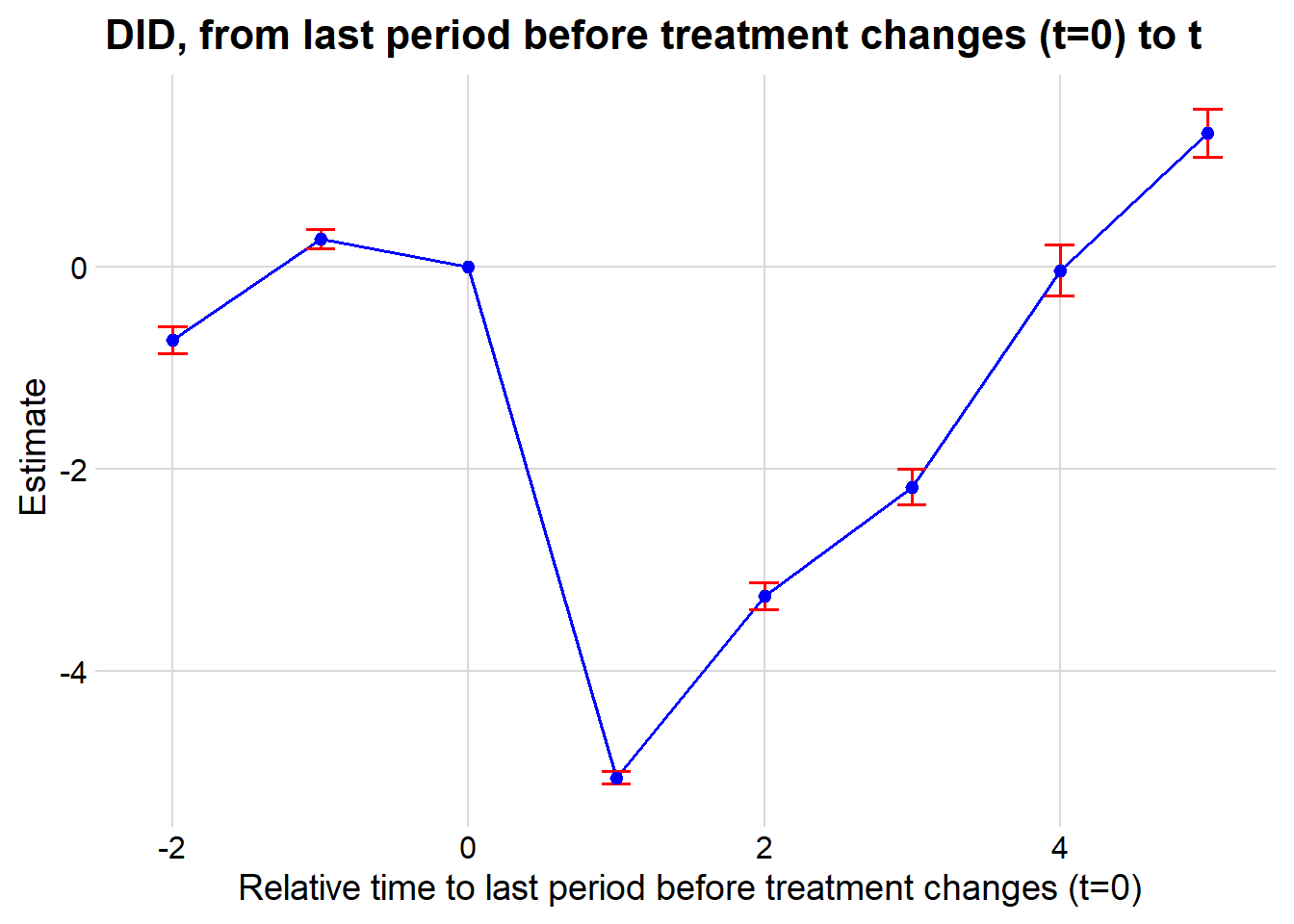

To examine the dynamic treatment effects (that are not under rollout/staggered design), we can create a centered time variable (Table 30.3).

| Centered Time Variable | Interpretation |

|---|---|

| \(t = -2\) | Two periods before treatment |

| \(t = -1\) | One period before treatment |

| \(t = 0\) |

Last pre-treatment period right before treatment period (Baseline/Reference Group) |

| \(t = 1\) | Treatment period |

| \(t = 2\) | One period after treatment |

Dynamic Treatment Model Specification

By interacting this factor variable, we can examine the dynamic effect of treatment (i.e., whether it’s fading or intensifying):

\[ \begin{aligned} Y &= \alpha_0 + \alpha_1 Group + \alpha_2 Time \\ &+ \beta_{-T_1} Treatment + \beta_{-(T_1 -1)} Treatment + \dots + \beta_{-1} Treatment \\ &+ \beta_1 + \dots + \beta_{T_2} Treatment \end{aligned} \]

where:

\(\beta_0\) (Baseline Period) is the reference group (i.e., drop from the model).

\(T_1\) = Pre-Treatment Period.

\(T_2\) = Post-Treatment Period.

Treatment coefficients (\(\beta_t\)) measure the effect over time.

Key Observations:

Pre-treatment coefficients should be close to zero (\(\beta_{-T_1}, \dots, \beta_{-1} \approx 0\)), ensuring no pre-trend bias.

Post-treatment coefficients should be significantly different from zero (\(\beta_1, \dots, \beta_{T_2} \neq 0\)), measuring the treatment effect over time.

Higher standard errors with more interactions: Including too many lags can reduce precision.

30.3.2.3 DID on Relationships, Not Just Levels

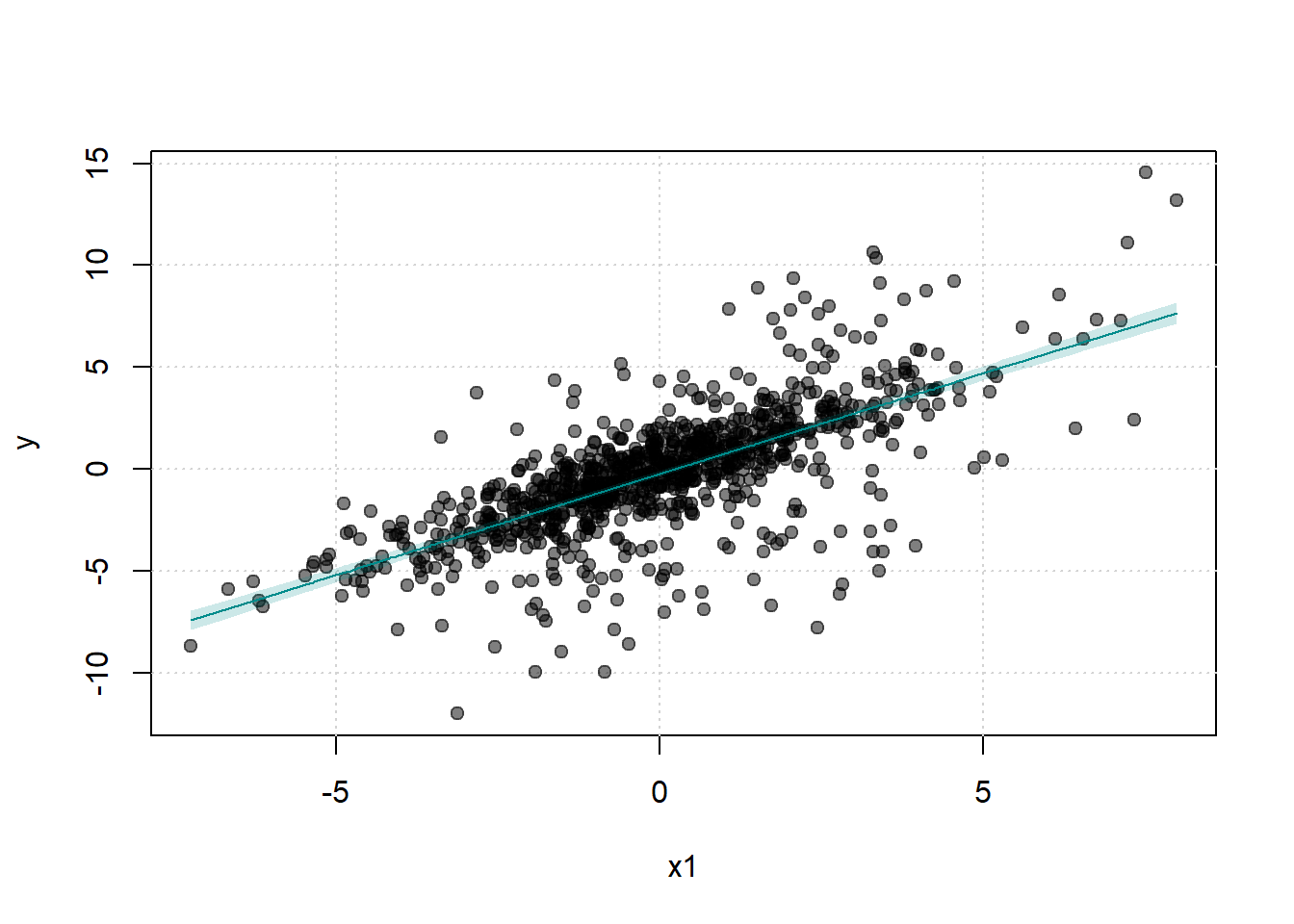

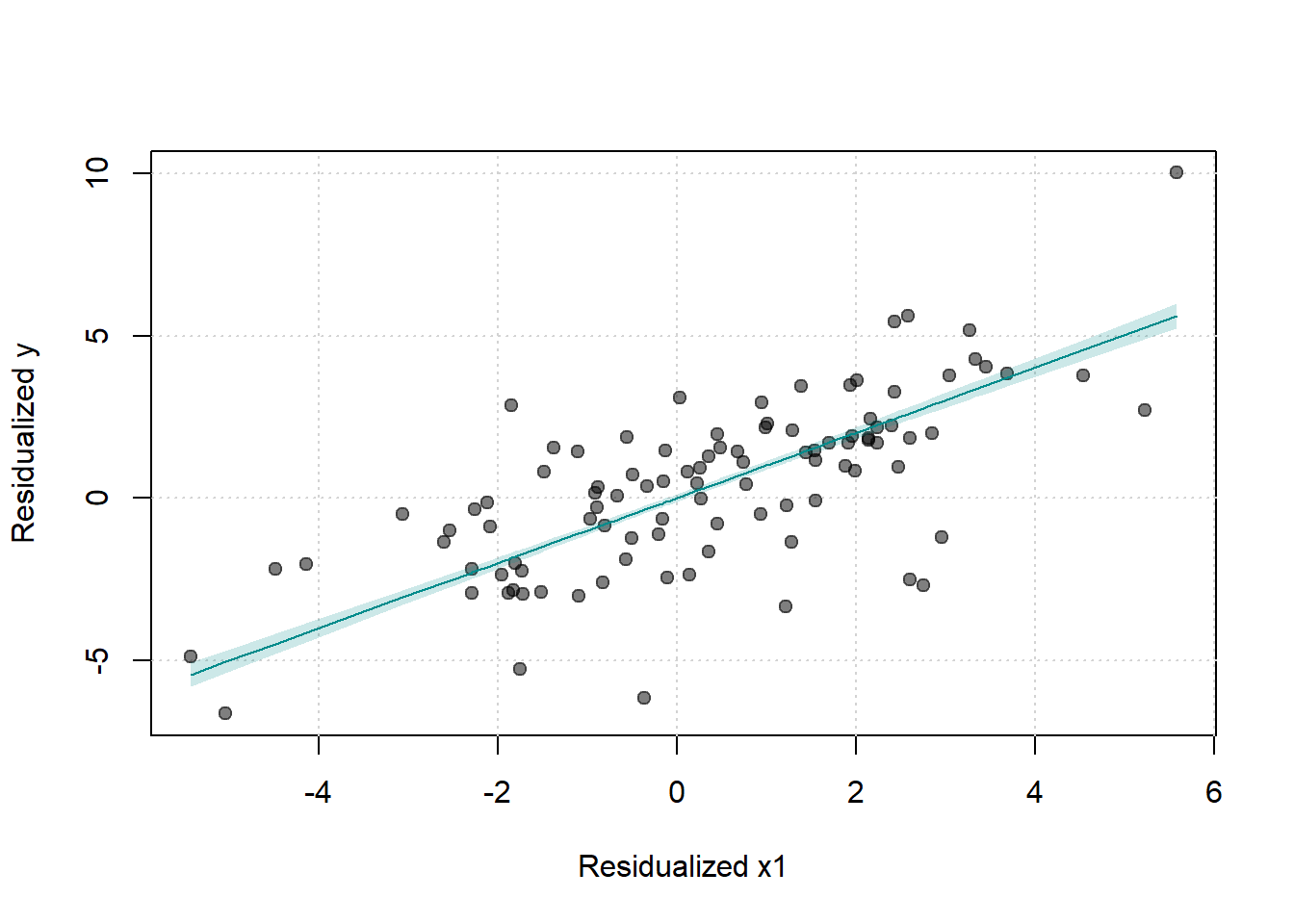

While DID is most commonly applied to examine treatment effects on outcome levels, it can also be used to estimate how treatment affects the relationship between variables. This approach treats estimated coefficients from first-stage regressions as outcomes in a second-stage DID analysis.

Standard DID examines whether treatment changes the level of an outcome \(Y_{it}\). However, researchers may be interested in whether treatment changes how \(Y\) responds to some predictor \(X\), that is, whether treatment affects the coefficient \(\beta\) in the relationship:

\[ Y_{it} = \alpha + \beta X_{it} + \epsilon_{it} \]

This requires a two-stage approach where regression coefficients themselves become the unit of analysis.

Two-Stage Estimation Procedure

Stage 1: Estimate Group-Period-Specific Relationships

For each combination of group \(g\) and time period \(t\), estimate:

\[ Y_{igt} = \alpha_{gt} + \beta_{gt} X_{igt} + \epsilon_{igt} \]

This yields a set of estimated coefficients \(\{\hat{\beta}_{gt}\}\), where each \(\hat{\beta}_{gt}\) captures the relationship between \(X\) and \(Y\) for group \(g\) in period \(t\).

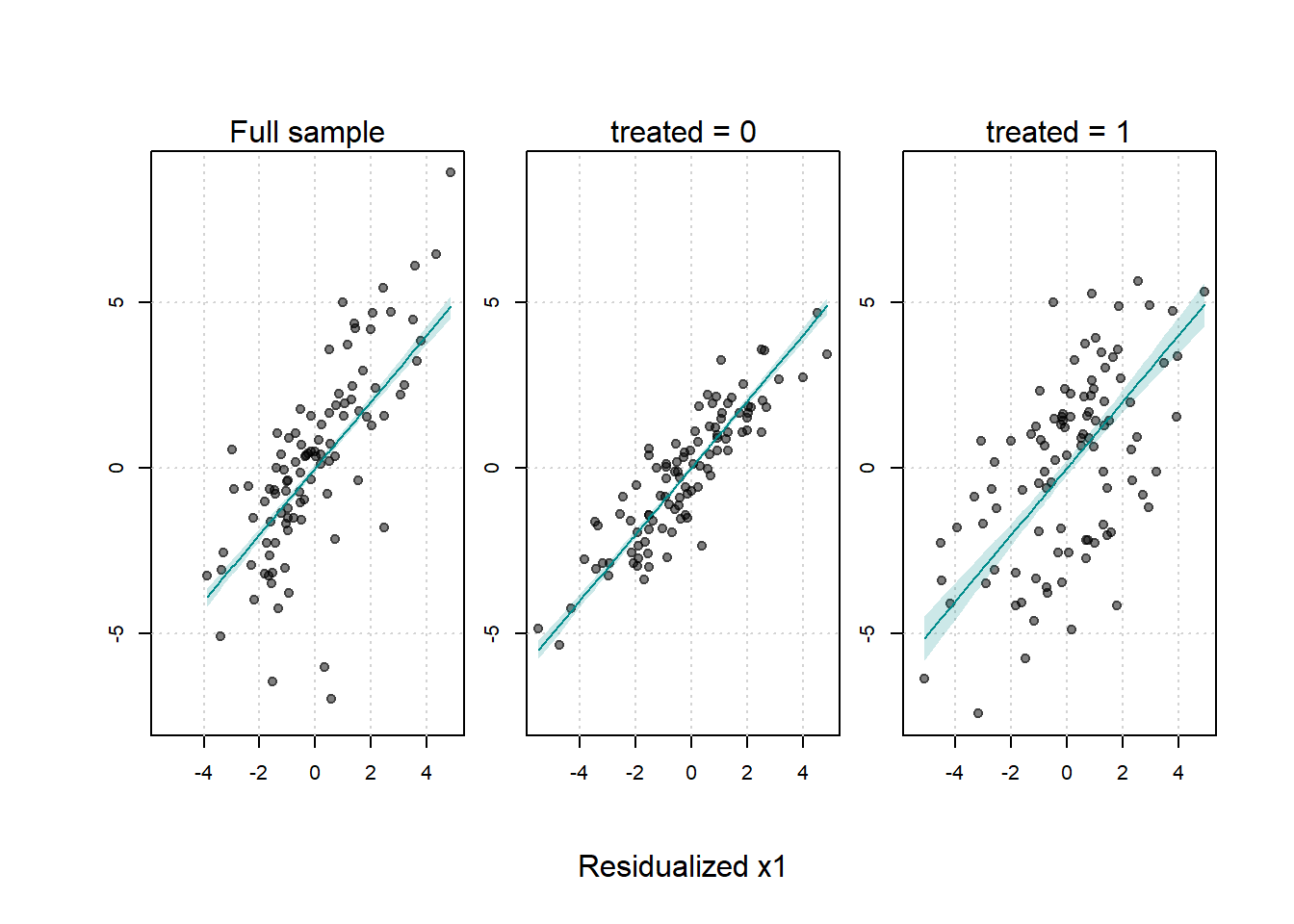

Stage 2: Apply DID to the Estimated Coefficients

Treat the estimated coefficients \(\hat{\beta}_{gt}\) as the outcome variable in a standard DID framework:

\[ \hat{\beta}_{gt} = \alpha_0 + \alpha_1 Treated_g + \alpha_2 Post_t + \delta (Treated_g \times Post_t) + u_{gt} \]

where:

- \(\hat{\beta}_{gt}\) = Estimated coefficient from Stage 1 (the “outcome”).

- \(Treated_g\) = Indicator for treatment group.

- \(Post_t\) = Indicator for post-treatment period.

- \(\delta\) = DID estimate of treatment effect on the relationship.

The coefficient \(\delta\) measures whether the relationship between \(X\) and \(Y\) changed differentially for the treated group after treatment.

The DID estimator can be expressed as:

\[ \begin{aligned} \hat{\delta} &= (\hat{\beta}_{Treated}^{Post} - \hat{\beta}_{Treated}^{Pre}) - (\hat{\beta}_{Control}^{Post} - \hat{\beta}_{Control}^{Pre}) \\ &= \text{Change in relationship for treated} - \text{Change in relationship for control} \end{aligned} \]

This captures the causal effect of treatment on the structural parameter \(\beta\), controlling for secular trends that affect both groups.

30.3.2.3.1 Example: Price Sensitivity Before and After a Policy Change

Suppose we want to test whether a consumer protection law changes how price affects demand.

Stage 1: For each state \(s\) and year \(t\), estimate:

\[ \log(Quantity_{ist}) = \alpha_{st} + \beta_{st} \log(Price_{ist}) + \epsilon_{ist} \]

where \(i\) indexes individual transactions. This gives us state-year specific price elasticities \(\{\hat{\beta}_{st}\}\).

Stage 2: Use these elasticities as outcomes in a DID model:

\[ \hat{\beta}_{st} = \alpha_0 + \alpha_1 Treated_s + \alpha_2 Post_t + \delta (Treated_s \times Post_t) + u_{st} \]

If \(\delta < 0\), the policy made consumers more price-sensitive (more elastic demand) in treated states. If \(\delta > 0\), consumers became less price-sensitive.

30.3.2.3.2 Standard Error Correction

A critical issue is that Stage 2 uses estimated coefficients \(\hat{\beta}_{gt}\) as the dependent variable, which introduces generated regressor problems. The standard errors from Stage 2 are incorrect because they don’t account for estimation uncertainty in \(\hat{\beta}_{gt}\).

Solutions:

Bootstrapping: Resample at the individual level, re-estimate both stages, and calculate standard errors from the bootstrap distribution.

Weighted Least Squares: Weight Stage 2 observations by the inverse of the variance of \(\hat{\beta}_{gt}\):

\[ w_{gt} = \frac{1}{\text{Var}(\hat{\beta}_{gt})} = \frac{1}{SE(\hat{\beta}_{gt})^2} \]

This gives more weight to precisely estimated relationships.

- Stacked Regression: Pool all individual observations and include group-period fixed effects interacted with \(X\):

\[ Y_{igt} = \sum_{g,t} \beta_{gt} (I_{gt} \times X_{igt}) + \text{controls} + \epsilon_{igt} \]

Then test whether \(\{\beta_{gt}\}\) follow a DID pattern using an F-test or linear combinations.

30.3.2.3.3 Dynamic Effects on Relationships

The two-stage approach can be extended to examine how relationships evolve over time:

Stage 1: Estimate period-specific coefficients:

\[ Y_{igt} = \alpha_{gt} + \beta_{gt} X_{igt} + \epsilon_{igt} \]

Stage 2: Event study specification:

\[ \hat{\beta}_{gt} = \alpha_g + \gamma_t + \sum_{k \neq -1} \delta_k (Treated_g \times Period_{t=k}) + u_{gt} \]

where \(Period_{t=k}\) are indicators for time relative to treatment (with \(k=-1\) as the reference period).

Key Observations:

- Pre-treatment coefficients (\(\delta_k\) for \(k < -1\)) should be near zero, indicating parallel trends in the relationship.

- Post-treatment coefficients (\(\delta_k\) for \(k \geq 0\)) show how the relationship evolves after treatment.

- This reveals whether effects on relationships are immediate, delayed, or fade over time.

30.3.2.3.4 Applications

1. Advertising Effectiveness:

Does a competitor’s market entry change advertising elasticity?

- Stage 1: Estimate \(\frac{\partial \log(Sales)}{\partial \log(Advertising)}\) for each market-period.

- Stage 2: DID on these elasticities comparing markets with/without competitor entry.

2. Labor Supply Elasticity:

Does a tax reform change labor supply responsiveness to wages?

- Stage 1: Estimate \(\frac{\partial Hours}{\partial Wage}\) for each region-year.

- Stage 2: DID comparing regions with different tax policy changes.

3. Educational Returns:

Does a curriculum reform change the returns to study time?

- Stage 1: Estimate \(\frac{\partial GPA}{\partial StudyHours}\) for each school-year.

- Stage 2: DID comparing schools that adopted vs. didn’t adopt the reform.

4. Platform Network Effects:

Does a platform algorithm change affect network externalities?

- Stage 1: Estimate \(\frac{\partial UserValue}{\partial NetworkSize}\) for each market-quarter.

- Stage 2: DID around the algorithm change.

30.3.2.3.5 Advantages and Limitations

Advantages:

- Causal inference on mechanisms: Identifies how treatment changes behavioral responses, not just outcomes.

- Flexibility: Can be applied to any estimable relationship (elasticities, marginal effects, coefficients).

- Policy-relevant: Directly tests whether policies alter structural parameters of interest.

Limitations:

- Two-stage uncertainty: Standard errors require careful correction for generated regressors.

- Data requirements: Needs sufficient observations within each group-period to estimate first-stage relationships precisely.

- Parallel trends in relationships: Requires that relationships (not just levels) would have trended similarly absent treatment—a stronger assumption.

- Aggregation: Loses individual-level variation when collapsing to group-period coefficients.

30.3.2.3.6 Relationship to Heterogeneous Treatment Effects

DID on relationships is conceptually related to, but distinct from, heterogeneous treatment effects. A three-way interaction \((X \times Treated \times Post)\) estimates whether treatment effects vary across levels of \(X\). In contrast, DID on relationships estimates whether the effect of \(X\) on \(Y\) changes due to treatment, a fundamentally different question about structural parameters rather than treatment heterogeneity.

This two-stage approach transforms DID into a tool for testing structural change hypotheses, enabling researchers to make causal claims about how treatments alter the fundamental relationships governing economic, social, or behavioral systems.

30.3.3 Goals of DID

-

Pre-Treatment Coefficients Should Be Insignificant

- Ensure that \(\beta_{-T_1}, \dots, \beta_{-1} = 0\) (similar to a Placebo Test).

-

Post-Treatment Coefficients Should Be Significant

- Verify that \(\beta_1, \dots, \beta_{T_2} \neq 0\).

- Examine whether the trend in post-treatment coefficients is increasing or decreasing over time.

library(tidyverse)

library(fixest)

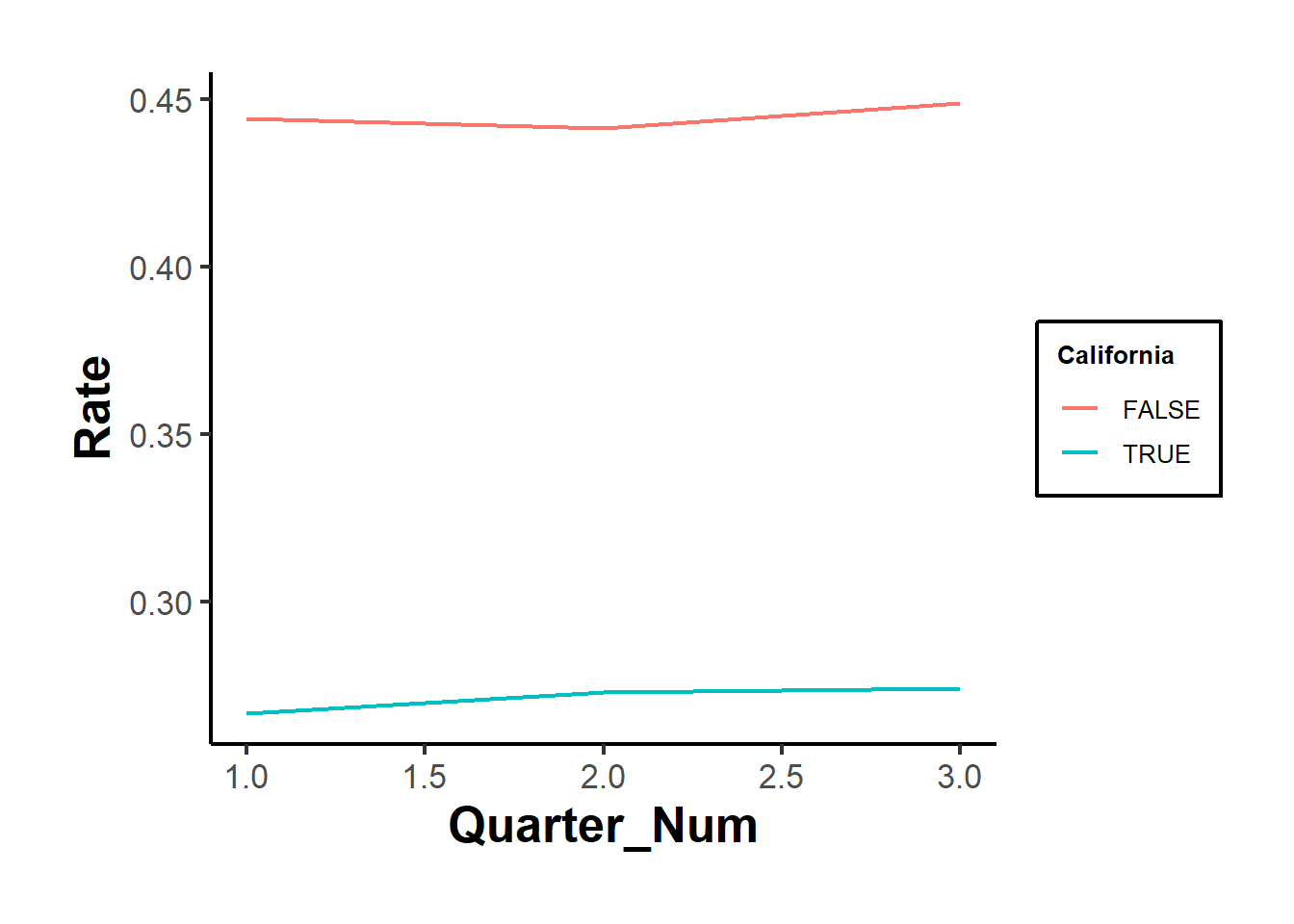

od <- causaldata::organ_donations %>%

# Treatment variable

dplyr::mutate(California = State == 'California') %>%

# centered time variable

dplyr::mutate(center_time = as.factor(Quarter_Num - 3))

# where 3 is the reference period precedes the treatment period

class(od$California)

#> [1] "logical"

class(od$State)

#> [1] "character"

cali <- feols(Rate ~ i(center_time, California, ref = 0) |

State + center_time,

data = od)

etable(cali)

#> cali

#> Dependent Var.: Rate

#>

#> California x center_time = -2 -0.0029 (0.0360)

#> California x center_time = -1 0.0063 (0.0360)

#> California x center_time = 1 -0.0216 (0.0360)

#> California x center_time = 2 -0.0203 (0.0360)

#> California x center_time = 3 -0.0222 (0.0360)

#> Fixed-Effects: ----------------

#> State Yes

#> center_time Yes

#> _____________________________ ________________

#> S.E. type IID

#> Observations 162

#> R2 0.97934

#> Within R2 0.00979

#> ---

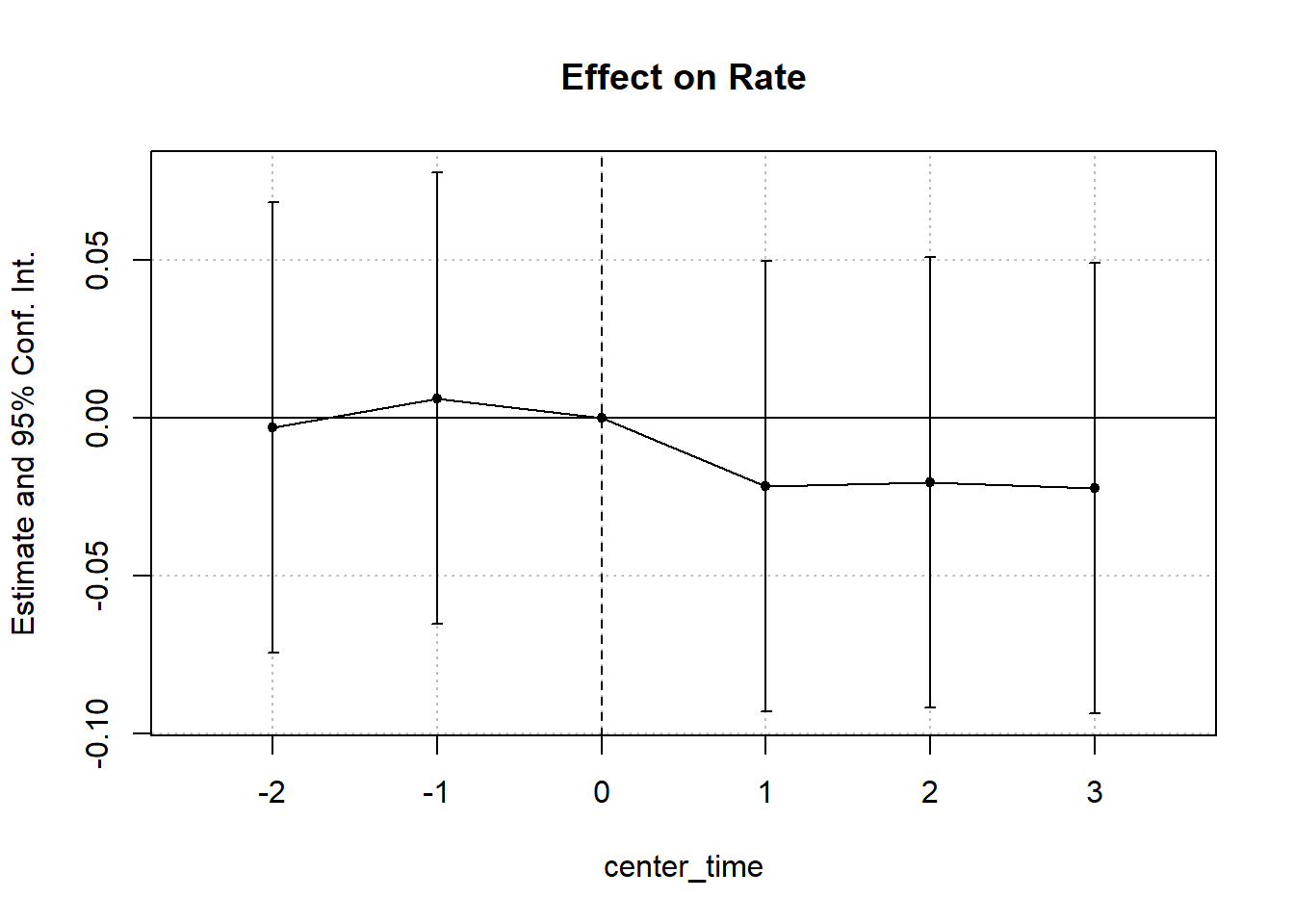

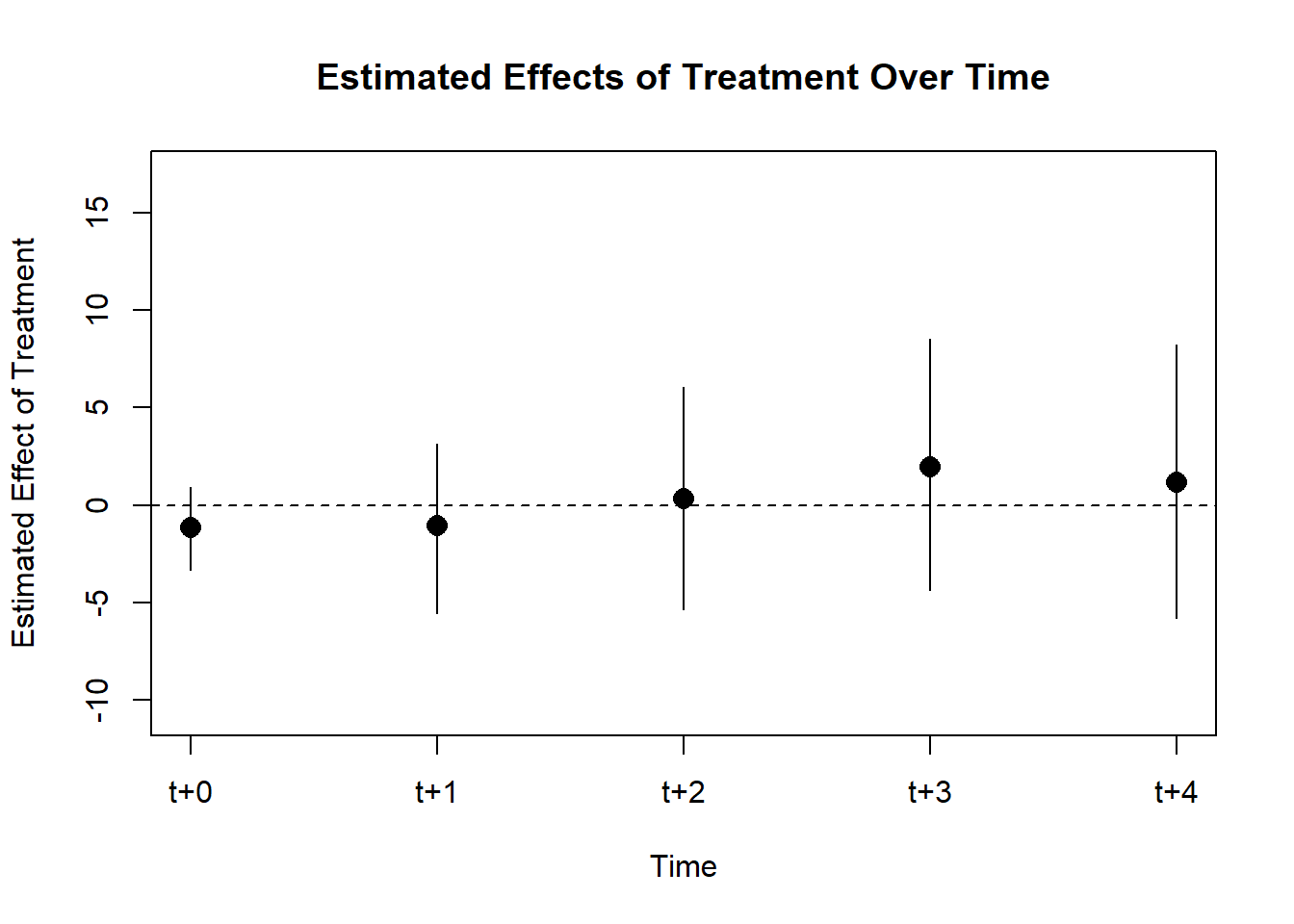

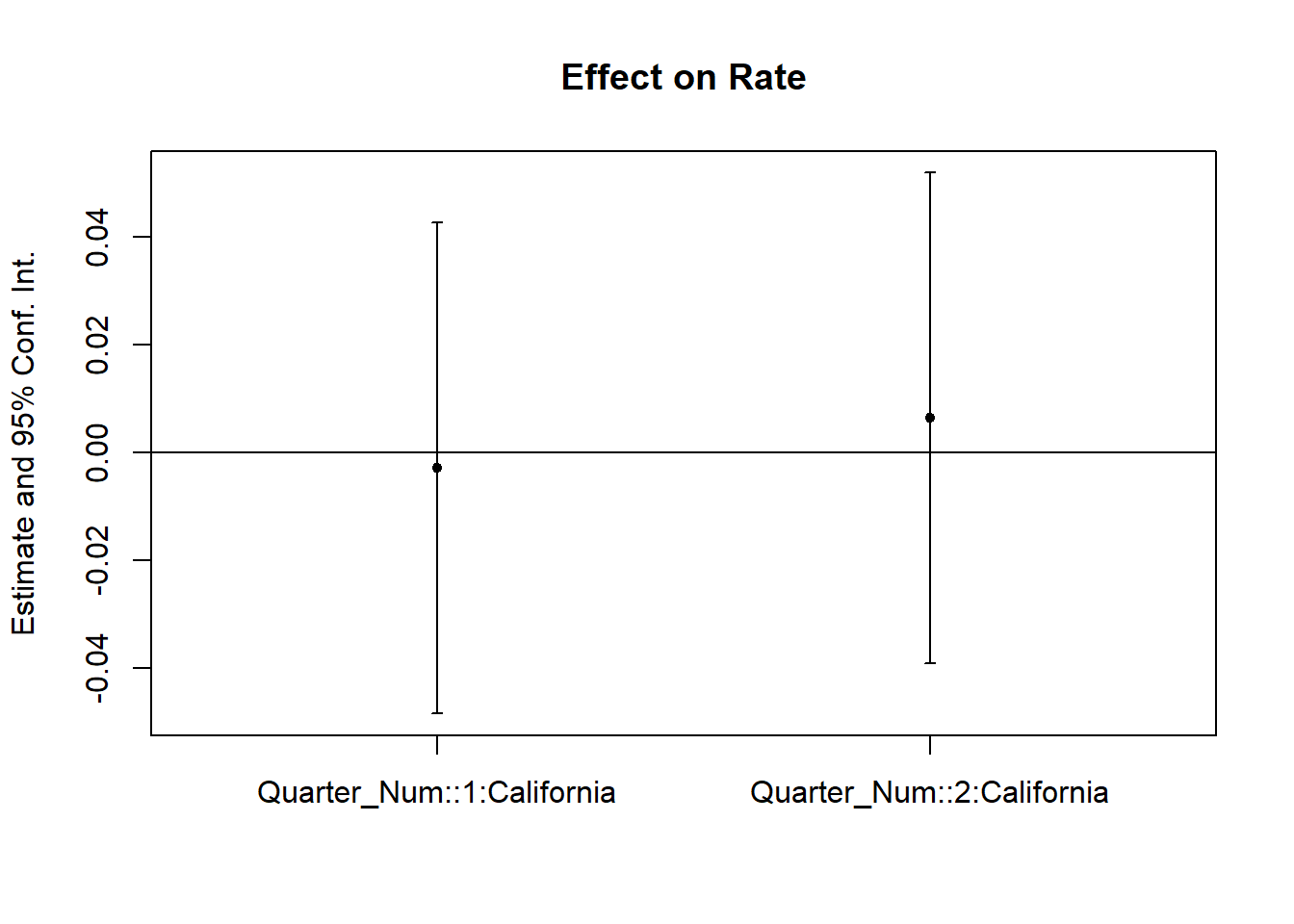

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Figure 30.6 shows the fixed effects estimates over time.

iplot(cali, pt.join = T)

Figure 30.6: Estimated Effect on Rate Over Time

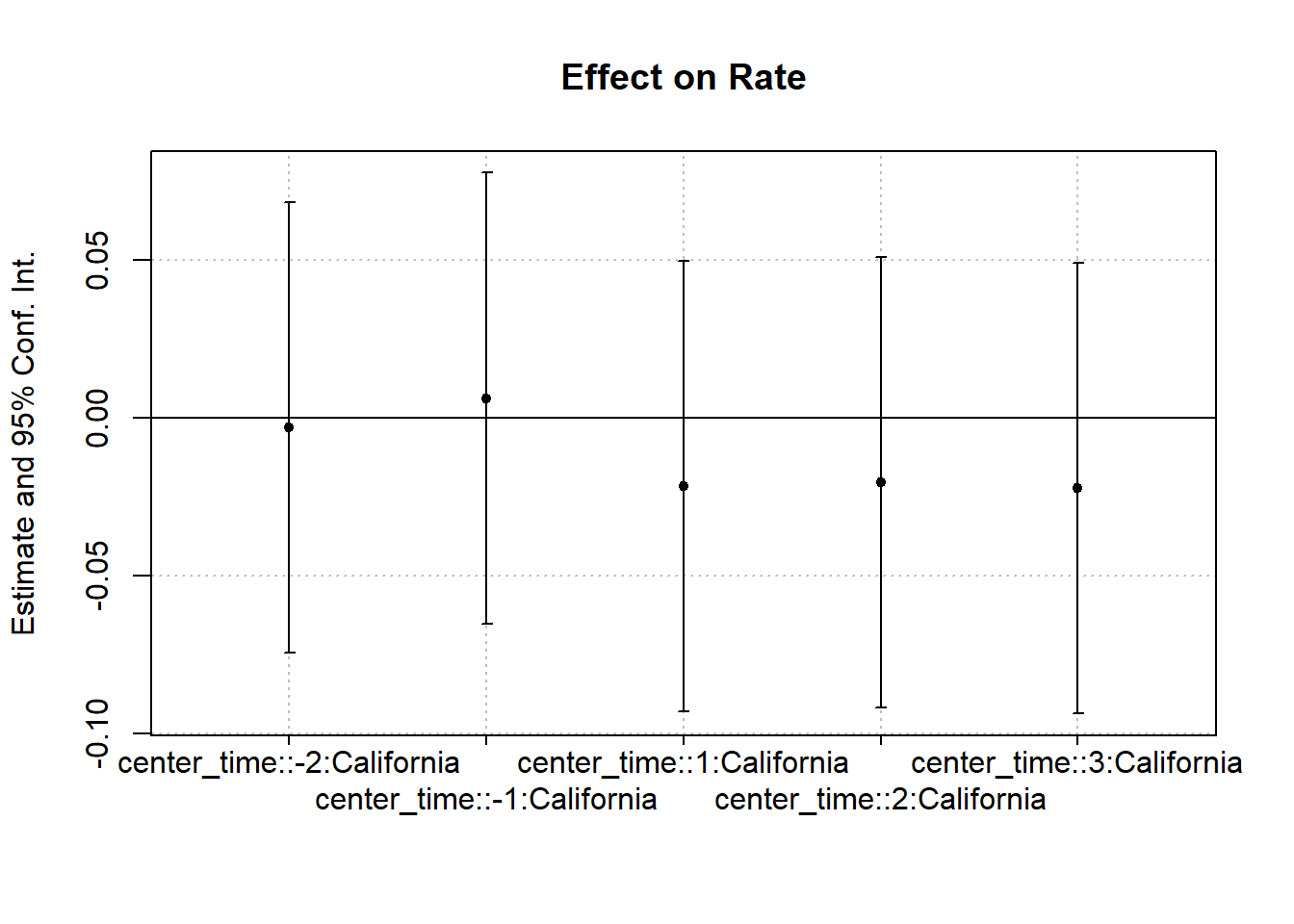

Figure 30.7 shows the same plot with a different plotting function.

coefplot(cali)

Figure 30.7: Interaction Effect on Rate

30.4 Empirical Research Walkthrough

30.4.1 Example: The Unintended Consequences of “Ban the Box” Policies

Doleac and Hansen (2020) examine the unintended effects of “Ban the Box” (BTB) policies, which prevent employers from asking about criminal records during the hiring process. The intended goal of BTB was to increase job access for individuals with criminal records. However, the study found that employers, unable to observe criminal history, resorted to statistical discrimination based on race, leading to unintended negative consequences.

Three Types of “Ban the Box” Policies:

- Public employers only

- Private employers with government contracts

- All employers

Identification Strategy

- If any county within a Metropolitan Statistical Area (MSA) adopts BTB, the entire MSA is considered treated.

- If a state passes a law banning BTB, then all counties in that state are treated.

The basic DiD model is:

\[ Y_{it} = \beta_0 + \beta_1 \text{Post}_t + \beta_2 \text{Treat}_i + \beta_3 (\text{Post}_t \times \text{Treat}_i) + \epsilon_{it} \]

where:

- \(Y_{it}\) = employment outcome for individual \(i\) at time \(t\)

- \(\text{Post}_t\) = indicator for post-treatment period

- \(\text{Treat}_i\) = indicator for treated MSAs

- \(\beta_3\) = the DiD coefficient, capturing the effect of BTB on employment

- \(\epsilon_{it}\) = error term

Limitations: If different locations adopt BTB at different times, this model is not valid due to staggered treatment timing.

For settings where different MSAs adopt BTB at different times, we use a staggered DiD approach:

\[ \begin{aligned} E_{imrt} &= \alpha + \beta_1 BTB_{imt} W_{imt} + \beta_2 BTB_{mt} + \beta_3 BTB_{mt} H_{imt} \\ &+ \delta_m + D_{imt} \beta_5 + \lambda_{rt} + \delta_m \times f(t) \beta_7 + e_{imrt} \end{aligned} \]

where:

- \(i\) = individual, \(m\) = MSA, \(r\) = region (e.g., Midwest, South), \(t\) = year

- \(W\) = White; \(B\) = Black; \(H\) = Hispanic

- \(BTB_{imt}\) = Ban the Box policy indicator

- \(\delta_m\) = MSA fixed effect

- \(D_{imt}\) = individual-level controls

- \(\lambda_{rt}\) = region-by-time fixed effect

- \(\delta_m \times f(t)\) = linear time trend within MSA

Fixed Effects Considerations:

Including \(\lambda_r\) and \(\lambda_t\) separately gives broader fixed effects.

Using \(\lambda_{rt}\) provides more granular controls for regional time trends.

To estimate the effects for Black men specifically, the model simplifies to:

\[ E_{imrt} = \alpha + BTB_{mt} \beta_1 + \delta_m + D_{imt} \beta_5 + \lambda_{rt} + (\delta_m \times f(t)) \beta_7 + e_{imrt} \]

To check for pre-trends and dynamic effects, we estimate:

\[ \begin{aligned} E_{imrt} &= \alpha + BTB_{m (t - 3)} \theta_1 + BTB_{m (t - 2)} \theta_2 + BTB_{m (t - 1)} \theta_3 \\ &+ BTB_{mt} \theta_4 + BTB_{m (t + 1)} \theta_5 + BTB_{m (t + 2)} \theta_6 + BTB_{m (t + 3)} \theta_7 \\ &+ \delta_m + D_{imt} \beta_5 + \lambda_{r} + (\delta_m \times f(t)) \beta_7 + e_{imrt} \end{aligned} \]

Key points:

- Leave out \(BTB_{m (t - 1)} \theta_3\) as the reference category (to avoid perfect collinearity).

- If \(\theta_2\) is significantly different from \(\theta_3\), it suggests pre-trend issues, which could indicate anticipatory effects before BTB implementation.

Substantively, Shoag and Veuger (2021) show that Ban-the-box policies increased employment in high-crime neighborhoods by up to 4%, especially in the public sector and low-wage jobs. This is the first nationwide evidence that such laws improve job access for areas with many ex-offenders.

30.4.2 Example: Minimum Wage and Employment

Card and Krueger (1993) famously studied the effect of an increase in the minimum wage on employment, challenging the traditional economic view that higher wages reduce employment.

- Philipp Leppert provides an R-based replication.

- Original datasets are available at David Card’s website.

Setting

- Treatment group: New Jersey (NJ), which increased its minimum wage.

- Control group: Pennsylvania (PA), which did not change its minimum wage.

- Outcome variable: Employment levels in fast-food restaurants.

The study used a Difference-in-Differences approach to estimate the impact (Table 30.4).

| State | After (Post) | Before (Pre) | Difference | |

|---|---|---|---|---|

| Treatment | NJ | A | B | A - B |

| Control | PA | C | D | C - D |

| A - C | B - D | (A - B) - (C - D) |

where:

- \(A - B\) captures the treatment effect plus general time trends.

- \(C - D\) captures only the general time trends.

- \((A - B) - (C - D)\) isolates the causal effect of the minimum wage increase.

For the DiD estimator to be valid, the following conditions must hold:

-

Parallel Trends Assumption

- The employment trends in NJ and PA would have been the same in the absence of the policy change.

- Pre-treatment employment trends should be similar between the two states.

-

No “Switchers”

- The policy must not induce restaurants to switch locations between NJ and PA (e.g., a restaurant relocating across the border).

-

PA as a Valid Counterfactual

- PA represents what NJ would have looked like had it not changed the minimum wage.

- The study focuses on bordering counties to increase comparability.

The main regression specification is:

\[ Y_{jt} = \beta_0 + NJ_j \beta_1 + POST_t \beta_2 + (NJ_j \times POST_t)\beta_3+ X_{jt}\beta_4 + \epsilon_{jt} \]

where:

- \(Y_{jt}\) = Employment in restaurant \(j\) at time \(t\)

- \(NJ_j\) = 1 if restaurant is in NJ, 0 if in PA

- \(POST_t\) = 1 if post-policy period, 0 if pre-policy

- \((NJ_j \times POST_t)\) = DiD interaction term, capturing the causal effect of NJ’s minimum wage increase

- \(X_{jt}\) = Additional controls (optional)

- \(\epsilon_{jt}\) = Error term

Notes on Model Specification

\(\beta_3\) (DiD coefficient) is the key parameter of interest, representing the causal impact of the policy.

\(\beta_4\) (controls \(X_{jt}\)) is not necessary for unbiasedness but improves efficiency.

-

If we difference out the pre-period (\(\Delta Y_{jt} = Y_{j,Post} - Y_{j,Pre}\)), we can simplify the model:

\[ \Delta Y_{jt} = \alpha + NJ_j \beta_1 + \epsilon_{jt} \]

Here, we no longer need \(\beta_2\) for the post-treatment period.

An alternative specification uses high-wage NJ restaurants as a control group, arguing that they were not affected by the minimum wage increase. However:

- This approach eliminates cross-state differences, but

- It may be harder to interpret causality, as the control group is not entirely untreated.

A common misconception in DiD is that treatment and control groups must have the same baseline levels of the dependent variable (e.g., employment levels). However:

- DiD only requires parallel trends, meaning the slopes of employment changes should be the same pre-treatment.

- If pre-treatment trends diverge, this threatens validity.

- If post-treatment trends converge, it may suggest policy effects rather than pre-trend violations.

Is Parallel Trends a Necessary or Sufficient Condition?

- Not sufficient: Even if pre-trends are parallel, other confounders could affect results.

- Not necessary: Parallel trends may emerge only after treatment, depending on behavioral responses.

Thus, we cannot prove DiD is valid. We can only present evidence that supports the assumptions.

30.4.3 Example: The Effects of Grade Policies on Major Choice

Butcher, McEwan, and Weerapana (2014) investigate how grading policies influence students’ major choices. The central theory is that grading standards vary by discipline, which affects students’ decisions.

Why do the highest-achieving students often major in hard sciences?

-

Grading Practices Differ Across Majors

- In STEM fields, grading is often stricter, meaning professors are less likely to give students the benefit of the doubt.

- In contrast, softer disciplines (e.g., humanities) may have more lenient grading, making students’ experiences more pleasant.

-

Labor Market Incentives

- Degrees with lower market value (e.g., humanities) might compensate by offering a more pleasant academic experience.

- STEM degrees tend to be more rigorous but provide higher job market returns.

To examine how grades influence major selection, the study first estimates an OLS model:

\[ E_{ij} = \beta_0 + X_i \beta_1 + G_j \beta_2 + \epsilon_{ij} \]

where:

- \(E_{ij}\) = Indicator for whether student \(i\) chooses major \(j\).

- \(X_i\) = Student-level attributes (e.g., SAT scores, demographics).

- \(G_j\) = Average grade in major \(j\).

- \(\beta_2\) = Key coefficient, capturing how grading standards influence major choice.

Potential Biases in \(\hat{\beta}_2\):

-

Negative Bias:

- Departments with lower enrollment rates may offer higher grades to attract students.

- This endogenous response leads to a downward bias in the OLS estimate.

-

Positive Bias:

- STEM majors attract the best students, so their grades would naturally be higher if ability were controlled.

- If ability is not fully accounted for, \(\hat{\beta}_2\) may be upward biased.

To address potential endogeneity in OLS, the study uses a difference-in-differences approach:

\[ Y_{idt} = \beta_0 + POST_t \beta_1 + Treat_d \beta_2 + (POST_t \times Treat_d)\beta_3 + X_{idt} + \epsilon_{idt} \]

where:

- \(Y_{idt}\) = Average grade in department \(d\) at time \(t\) for student \(i\).

- \(POST_t\) = 1 if post-policy period, 0 otherwise.

- \(Treat_d\) = 1 if department is treated (i.e., grade policy change), 0 otherwise.

- \((POST_t \times Treat_d)\) = DiD interaction term, capturing the causal effect of grade policy changes on major choice.

- \(X_{idt}\) = Additional student controls.

| Group | Intercept (\(\beta_0\)) | Treatment (\(\beta_2\)) | Post (\(\beta_1\)) | Interaction (\(\beta_3\)) |

|---|---|---|---|---|

| Treated, Pre | 1 | 1 | 0 | 0 |

| Treated, Post | 1 | 1 | 1 | 1 |

| Control, Pre | 1 | 0 | 0 | 0 |

| Control, Post | 1 | 0 | 1 | 0 |

Table 30.5 shows how we can think about the design matrix for DID.

- The average pre-period outcome for the control group is given by \(\beta_0\).

- The key coefficient of interest is \(\beta_3\), which captures the difference in the post-treatment effect between treated and control groups.

A more flexible specification includes fixed effects:

\[ Y_{idt} = \alpha_0 + (POST_t \times Treat_d) \alpha_1 + \theta_d + \delta_t + X_{idt} + u_{idt} \]

where:

- \(\theta_d\) = Department fixed effects (absorbing \(Treat_d\)).

- \(\delta_t\) = Time fixed effects (absorbing \(POST_t\)).

- \(\alpha_1\) = Effect of policy change (equivalent to \(\beta_3\) in the simpler model).

Why Use Fixed Effects?

-

More flexible specification:

- Instead of assuming a uniform treatment effect across groups, this model allows for department-specific differences (\(\theta_d\)) and time-specific shocks (\(\delta_t\)).

-

Higher degrees of freedom:

- Fixed effects absorb variation that would otherwise be attributed to \(POST_t\) and \(Treat_d\), making the estimation more efficient.

Interpretation of Results

- If \(\alpha_1 > 0\), then the policy increased grades in treated departments.

- If \(\alpha_1 < 0\), then the policy decreased grades in treated departments.

30.5 One Difference

The regression formula is as follows Liaukonytė, Tuchman, and Zhu (2023):

\[ y_{ut} = \beta \text{Post}_t + \gamma_u + \gamma_w(t) + \gamma_l + \gamma_g(u)p(t) + \epsilon_{ut} \]

where

- \(y_{ut}\): Outcome of interest for unit u in time t.

- \(\text{Post}_t\): Dummy variable representing a specific post-event period.

- \(\beta\): Coefficient measuring the average change in the outcome after the event relative to the pre-period.

- \(\gamma_u\): Fixed effects for each unit.

- \(\gamma_w(t)\): Time-specific fixed effects to account for periodic variations.

- \(\gamma_l\): Dummy variable for a specific significant period (e.g., a major event change).

- \(\gamma_g(u)p(t)\): Group x period fixed effects for flexible trends that may vary across different categories (e.g., geographical regions) and periods.

- \(\epsilon_{ut}\): Error term.

This model can be used to analyze the impact of an event on the outcome of interest while controlling for various fixed effects and time-specific variations, but using units themselves pre-treatment as controls.

30.6 Two-Way Fixed Effects

A generalization of the Difference-in-Differences model is the two-way fixed effects (TWFE) model, which accounts for multiple groups and multiple time periods by including both unit and time fixed effects. In practice, TWFE is frequently used to estimate causal effects in panel data settings. However, it is not a design-based, non-parametric causal estimator (Imai and Kim 2021), and it can suffer from severe biases if the treatment effect is heterogeneous across units or time.

When applying TWFE to datasets with multiple treatment groups and staggered treatment timing, the estimated causal coefficient is a weighted average of all possible two-group, two-period DiD comparisons. Crucially, some of these weights can be negative (Goodman-Bacon 2021), which leads to potential biases. The weighting scheme depends on:

- Group sizes

- Variation in treatment timing

- Placement in the middle of the panel (units in the middle tend to get the highest weight)

30.6.1 Canonical TWFE Model

The canonical TWFE model is typically written as:

\[ Y_{it} = \alpha_i + \lambda_t + \tau W_{it} + \beta X_{it} + \epsilon_{it}, \]

where:

\(Y_{it}\) = Outcome for unit \(i\) at time \(t\)

\(\alpha_i\) = Unit fixed effect

\(\lambda_t\) = Time fixed effect

\(\tau\) = Causal effect of treatment

\(W_{it}\) = Treatment indicator (\(1\) if treated, \(0\) otherwise)

\(X_{it}\) = Covariates

\(\epsilon_{it}\) = Error term

An illustrative TWFE event-study model (Stevenson and Wolfers 2006):

\[ \begin{aligned} Y_{it} &= \sum_{k} \beta_{k} \cdot Treatment_{it}^{k} + \eta_{i} + \lambda_{t} + Controls_{it} + \epsilon_{it}, \end{aligned} \]

where:

\(Treatment_{it}^k\): Indicator for whether unit \(i\) is in its \(k\)-th year relative to treatment at time \(t\).

\(\eta_i\): Unit fixed effects, controlling for time-invariant unobserved heterogeneity.

\(\lambda_t\): Time fixed effects, capturing overall macro shocks.

Standard Errors: Typically clustered at the group or cohort level.

Usually, researchers drop the period immediately before treatment (\(k=-1\)) to avoid collinearity. However, dropping this or another period inappropriately can shift or bias the estimates.

When there are only two time periods \((T=2)\), TWFE simplifies to the traditional DiD model. Under homogeneous treatment effects and if the parallel trends assumption holds, \(\hat{\tau}_{OLS}\) is unbiased. Specifically, the model assumes (Imai and Kim 2021):

-

Homogeneous treatment effects across units and time periods, meaning:

- No dynamic treatment effects (i.e., treatment effects do not evolve over time).

- The treatment effect is constant across units (Goodman-Bacon 2021; Clément De Chaisemartin and d’Haultfoeuille 2020; L. Sun and Abraham 2021; Borusyak, Jaravel, and Spiess 2021).

- Parallel trends assumption

- Linear additive effects are valid (Imai and Kim 2021).

However, in practice, treatment effects are often heterogeneous. If effects vary by cohort or over time, then standard TWFE estimates can be biased, particularly when there is staggered adoption or dynamic treatment effects (Goodman-Bacon 2021; Clément De Chaisemartin and d’Haultfoeuille 2020; L. Sun and Abraham 2021; Borusyak, Jaravel, and Spiess 2021). Hence, to use the TWFE, we actually have to argue why the effects are homogeneous to justify TWFE use:

-

Assess treatment heterogeneity: If heterogeneity exists, TWFE may produce biased estimates. Researchers should:

- Plot treatment timing across units.

- Decompose the treatment effect using the Goodman-Bacon decomposition to identify negative weights.

- Check the proportion of never-treated observations: When 80% or more of the sample is never treated, TWFE bias is negligible.

- Beware of bias worsening with long-run effects.

-

Dropping relative time periods:

- If all units eventually receive treatment, two relative time periods must be dropped to avoid multicollinearity.

- Some software packages drop periods randomly; if a post-treatment period is dropped, bias may result.

- The standard approach is to drop periods -1 and -2.

-

Sources of treatment heterogeneity:

- Delayed treatment effects: The impact of treatment may take time to manifest.

- Evolving effects: Treatment effects can increase or change over time (e.g., phase-in effects).

TWFE compares different types of treatment/control groups:

-

Valid comparisons:

- Newly treated units vs. control units

- Newly treated units vs. not-yet treated units

-

Problematic comparisons:

- Newly treated units vs. already treated units (since already treated units do not represent the correct counterfactual).

-

Strict exogeneity violations:

- Presence of time-varying confounders

- Feedback from past outcomes to treatment (Imai and Kim 2019)

-

Functional form restrictions:

- Assumes treatment effect homogeneity.

- No carryover effects or anticipation effects (Imai and Kim 2019).

30.6.2 Limitations of TWFE

TWFE DiD is valid only under strong assumptions that the treatment effect does not vary across units or over time. In reality, we almost always see some form of treatment heterogeneity:

- No dynamic treatment effects: The model requires that the treatment effect not evolve over time.

- No unit-level differences: The treatment effect must be constant across all units.

- Linear additive effects: TWFE assumes that the underlying data-generating process is captured by additive fixed effects plus a constant treatment effect (Imai and Kim 2021).

If any of these assumptions are violated, TWFE can produce biased estimates. Specifically:

- Negative Weights & Biased Estimates: With multiple groups and staggered timing, the TWFE estimate becomes a complicated average of “two-group, two-period” DiD comparisons, some of which can receive negative weights (Goodman-Bacon 2021).

- Potential Bias from Dropping Relative Time Periods: If all units eventually get treated, software often drops a reference period (or periods) to avoid multicollinearity. If the dropped period is post-treatment, the bias can worsen. Researchers often drop relative time \(-1\) or \(-2\).

- Delayed or Evolving Treatment Effects: If the effect of treatment takes time to manifest or changes over time, TWFE’s single coefficient \(\tau\) can be misleading.

When two time periods only exist, TWFE collapses back to the traditional DiD model, making these problems far less severe. But as soon as one moves beyond a single treatment period or has variation in treatment timing, these issues become critical.

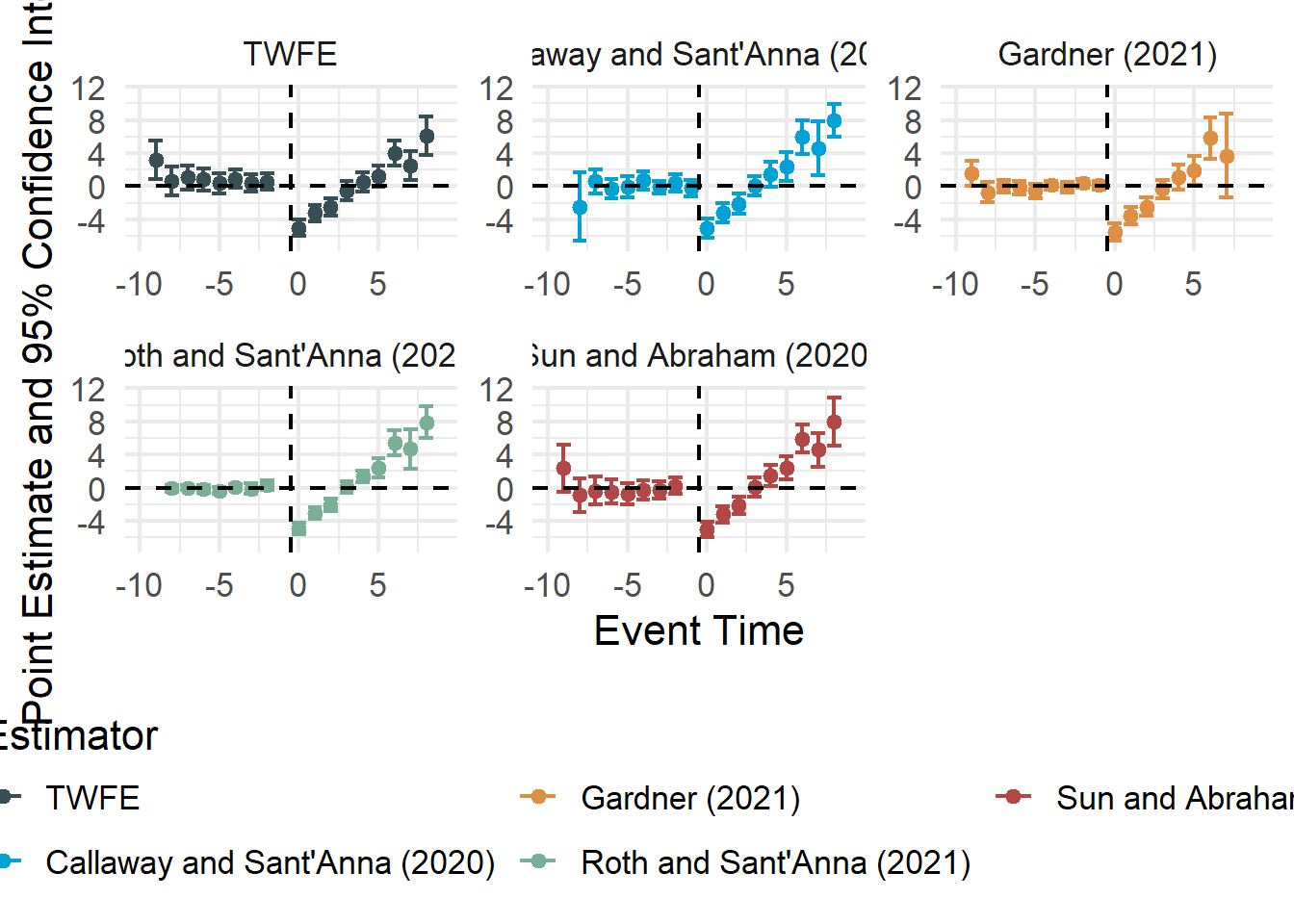

Several authors (L. Sun and Abraham 2021; Callaway and Sant’Anna 2021; Goodman-Bacon 2021) have raised concerns that TWFE DiD regressions under staggered adoption:

- Mixes Cohorts: May unintentionally compare newly treated units to already treated units, conflating post-treatment behavior of early adopters with the pre-treatment trends of later adopters.

- Negative Weights: Some group comparisons receive negative weights, which can reverse the sign of the overall estimate.

- Pre-Treatment Leads: Leads may appear non-zero if earlier-treated groups remain in the sample while later adopters are still untreated.

- Long-Run Effects: Heterogeneity in lagged (long-run) effects can exacerbate bias.

In fields like finance and accounting, newer estimators often reveal null or much smaller effects than standard TWFE once bias is properly accounted for (Baker, Larcker, and Wang 2022).

30.6.3 Diagnosing and Addressing Bias in TWFE

Researchers can identify and mitigate the biases arising from heterogeneous treatment effects through diagnostic checks and alternative estimators:

- Purpose: Decomposes the TWFE DiD estimate into the sum of all two-group, two-period comparisons.

- Insight: Reveals which comparisons have negative weights and how much each comparison contributes to the overall estimate (Goodman-Bacon 2021).

- Implementation: Identify subgroups by treatment timing, then examine each group–time pair to see how it contributes to the aggregate TWFE coefficient.

- Visual Inspection: Always plot the distribution of treatment timing across units.

- High Risk of Bias: If treatment is staggered and many units differ in their adoption times, standard TWFE will often be biased.

- Assessing Treatment Heterogeneity Directly

- Check for Variation in Effects: If there is a theoretical or empirical reason to believe that treatment effects differ by subgroup or over time, TWFE might not be appropriate.

- Size of Never-Treated Sample: When 80% or more of the sample is never treated, the potential for bias in TWFE is smaller. However, large shares of treated units with varied adoption times raise red flags.

- Long-Run Effects: Bias can worsen if the treatment effect accumulates or changes over time.

30.6.3.1 Goodman-Bacon Decomposition

The Goodman-Bacon decomposition (Goodman-Bacon 2021) is a powerful diagnostic tool for understanding the TWFE estimator in settings with staggered treatment adoption. This approach clarifies how the TWFE DiD estimate is a weighted average of many 2×2 difference-in-differences comparisons between groups treated at different times (or never treated).

Key Takeaways

- A pairwise DiD estimate (\(\tau\)) receives more weight when:

- The treatment happens closer to the midpoint of the observation window.

- The comparison involves more observations (e.g., more units or more years).

- Comparisons between early-treated and later-treated groups can produce negative weights, potentially biasing the aggregate TWFE estimate.

We illustrate the decomposition using the castle dataset from the bacondecomp package:

library(bacondecomp)

library(tidyverse)

# Load and inspect the castle dataset

castle <- bacondecomp::castle %>%

dplyr::select(l_homicide, post, state, year)

head(castle)

#> l_homicide post state year

#> 1 2.027356 0 Alabama 2000

#> 2 2.164867 0 Alabama 2001

#> 3 1.936334 0 Alabama 2002

#> 4 1.919567 0 Alabama 2003

#> 5 1.749841 0 Alabama 2004

#> 6 2.130440 0 Alabama 2005Running the Goodman-Bacon Decomposition

# Apply Goodman-Bacon decomposition

df_bacon <- bacon(

formula = l_homicide ~ post,

data = castle,

id_var = "state",

time_var = "year"

)

#> type weight avg_est

#> 1 Earlier vs Later Treated 0.05976 -0.00554

#> 2 Later vs Earlier Treated 0.03190 0.07032

#> 3 Treated vs Untreated 0.90834 0.08796

# Display weighted average of the decomposition

weighted_avg <- sum(df_bacon$estimate * df_bacon$weight)

weighted_avg

#> [1] 0.08181162Comparing with the TWFE Estimate

library(broom)

# Fit a TWFE model

fit_tw <- lm(l_homicide ~ post + factor(state) + factor(year), data = castle)

tidy(fit_tw)

#> # A tibble: 61 × 5

#> term estimate std.error statistic p.value

#> <chr> <dbl> <dbl> <dbl> <dbl>

#> 1 (Intercept) 1.95 0.0624 31.2 2.84e-118

#> 2 post 0.0818 0.0317 2.58 1.02e- 2

#> 3 factor(state)Alaska -0.373 0.0797 -4.68 3.77e- 6

#> 4 factor(state)Arizona 0.0158 0.0797 0.198 8.43e- 1

#> 5 factor(state)Arkansas -0.118 0.0810 -1.46 1.44e- 1

#> 6 factor(state)California -0.108 0.0810 -1.34 1.82e- 1

#> 7 factor(state)Colorado -0.696 0.0810 -8.59 1.14e- 16

#> 8 factor(state)Connecticut -0.785 0.0810 -9.68 2.08e- 20

#> 9 factor(state)Delaware -0.547 0.0810 -6.75 4.18e- 11

#> 10 factor(state)Florida -0.251 0.0798 -3.14 1.76e- 3

#> # ℹ 51 more rowsInterpretation: The TWFE estimate (approx. 0.08) equals the weighted average of the Bacon decomposition estimates, confirming the decomposition’s validity.

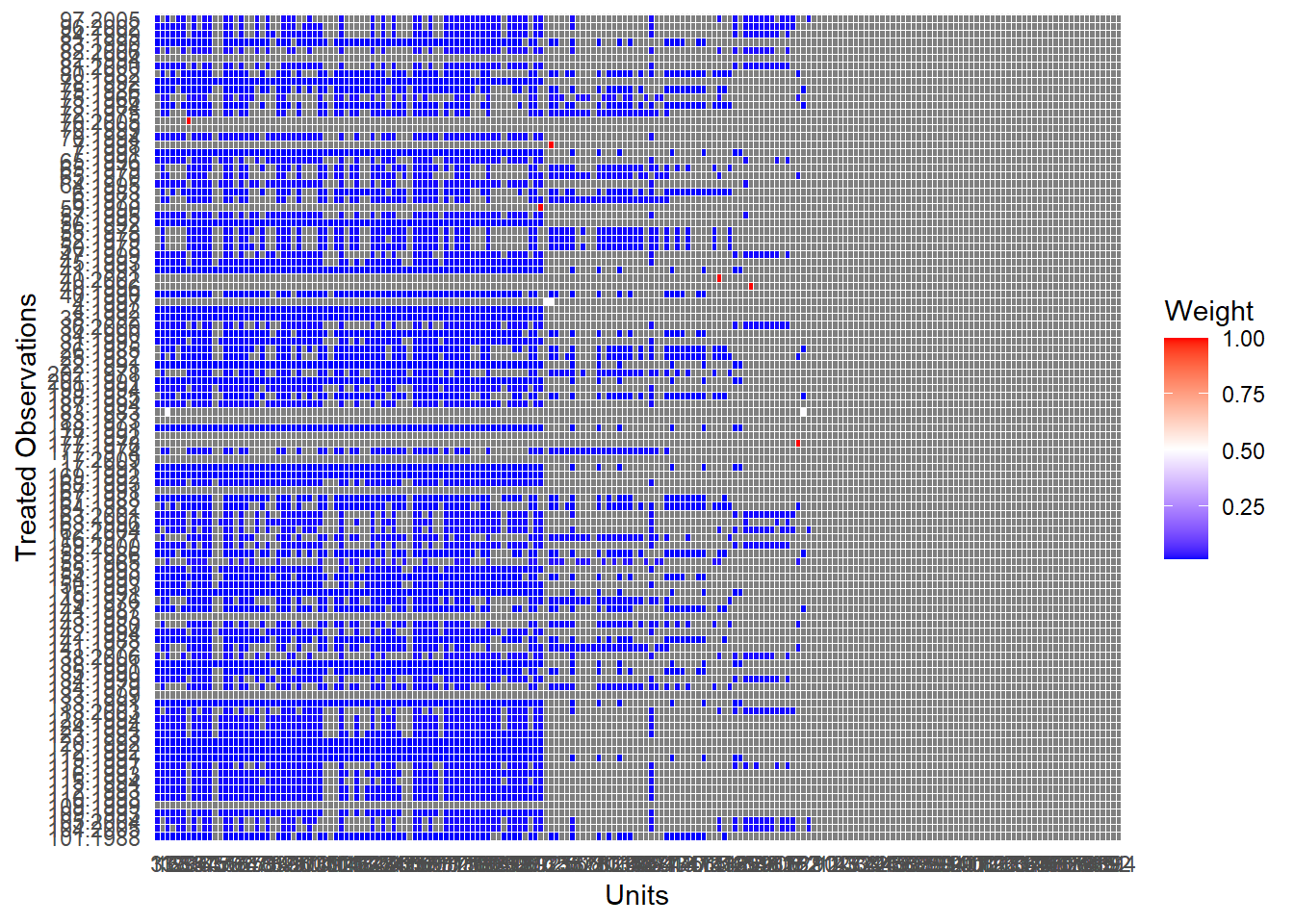

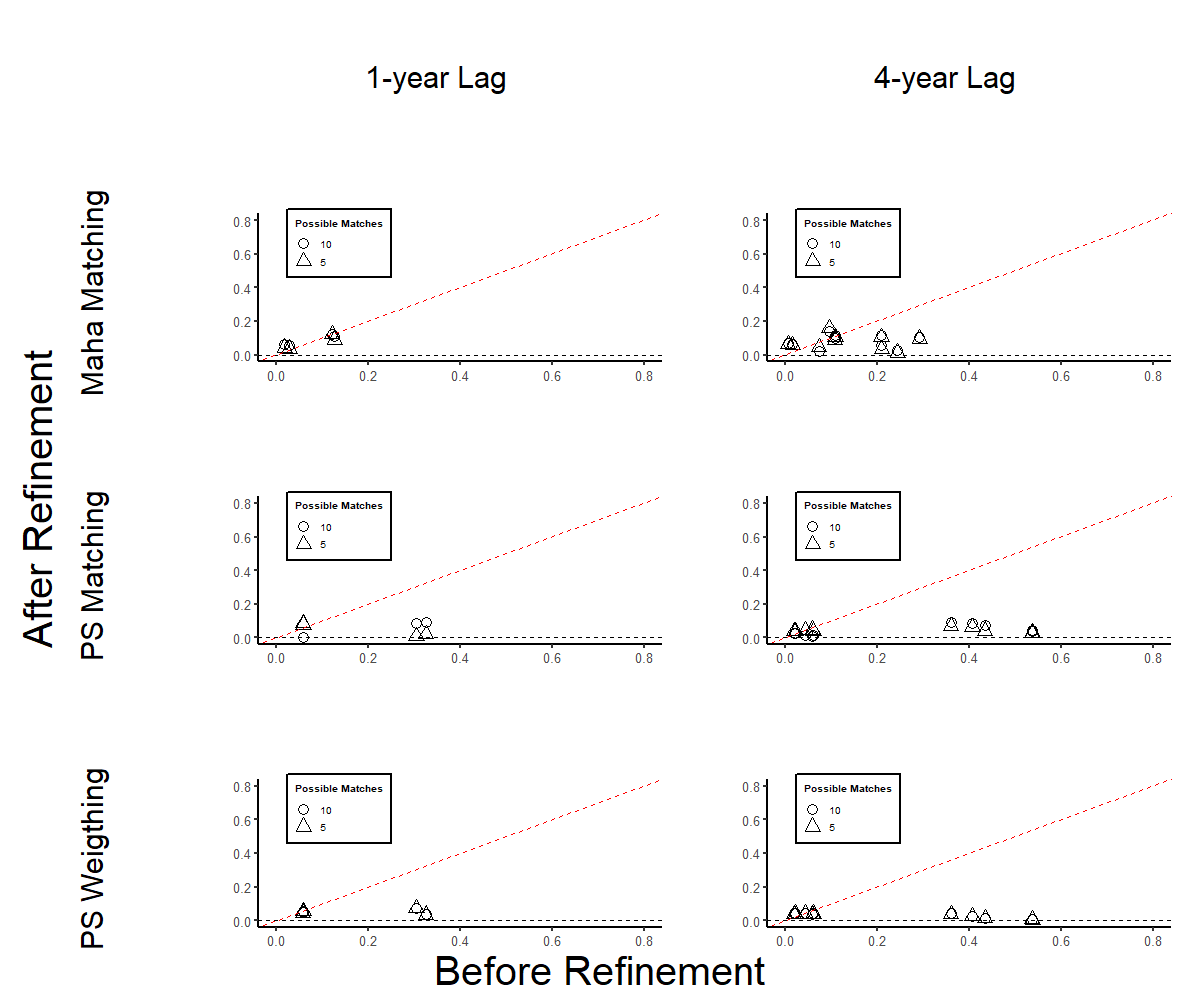

Visualizing the Decomposition (Figure 30.8)

library(ggplot2)

ggplot(df_bacon) +

aes(

x = weight,

y = estimate,

color = type

) +

geom_point() +

labs(

x = "Weight",

y = "Estimate",

color = "Comparison Type"

) +

causalverse::ama_theme()

Figure 30.8: Decomposition of Treatment Effects by Comparison Type and Weight

Insight: This plot shows the contribution of each 2×2 DiD comparison, highlighting how estimates with large weights dominate the overall TWFE coefficient.

Interpretation and Practical Implications

- Purpose: Decomposes the TWFE DiD estimate into the sum of all two-group, two-period comparisons.

- Insight: Reveals how much each comparison contributes to the overall estimate and whether any have negative or misleading effects.

-

Implementation:

- Identify subgroups by treatment timing.

- Compute DiD for each 2×2 comparison (early vs. late, late vs. never, etc.).

- Evaluate how these contribute to the final TWFE estimate.

When time-varying covariates are included that allow for identification within treatment timing groups, certain problematic comparisons (like “early vs. late”) may no longer influence the TWFE estimator directly. These scenarios may collapse into simpler within-group estimates, improving identification (Table 30.6).

| Comparison Type | Description | Common Issue |

|---|---|---|

| Treated vs. Never | Clean comparisons if never-treated units exist | Often reliable |

| Early vs. Late | Later group is control in earlier period | May introduce bias |

| Late vs. Early | Early group is control in later period | May reverse causality |

| Treated vs. Treated | Within-treatment variation by timing | Sensitive to dynamics |

30.6.4 Remedies for TWFE’s Shortcomings

This section outlines alternative estimators and design-based approaches that explicitly handle heterogeneous treatment effects, staggered adoption (Baker, Larcker, and Wang 2022), and dynamic treatment effects better than standard TWFE (e.g., Modern Estimators for Staggered Adoption).

Callaway and Sant’Anna (2021) propose a two-step approach:

- Group-time treatment effects: In each time period, estimate the effect for the cohort that first received treatment in that period (compared to a never-treated group).

- Aggregate: Use a bootstrap procedure to account for autocorrelation and clustering, then aggregate across groups.

- Advantages: Allows for heterogeneous treatment effects across groups and over time; compares treated groups only with never-treated units (or well-chosen controls).

-

Implementation:

didpackage in R.

L. Sun and Abraham (2021) build on Callaway and Sant’Anna (2021) to handle event-study settings:

- Lags and Leads: Capture dynamic treatment effects by including time lags and leads relative to the event (treatment).

- Cohort-Specific Estimates: Estimate separate paths of outcomes for each cohort, controlling for other cohorts carefully.

- Interaction-Weighted Estimator: Adjusts for differences in when treatment began.

-

Implementation:

fixestpackage in R.

Imai and Kim (2021) develop methods allowing units to switch in and out of treatment:

- Matching to create a weighted version of TWFE, addressing some of the bias from heterogeneous effects.

-

Implementation:

wfeandPanelMatchR packages.

- Two-Stage Difference-in-Differences (DiD2S)

Gardner (2022) propose two-stage DiD:

- Idea: Partial out fixed effects first, then perform a second-stage regression that focuses on within-group/time variation.

- Strength: Handles heterogeneous treatment effects well, especially when never-treated units are present.

-

Implementation:

did2sR package.

- If a study has never-treated units, Clément De Chaisemartin and d’Haultfoeuille (2020) suggest an switching DiD estimator to recover the average treatment effect.

- Caveat: This approach still fails to detect heterogeneity if treatment effects vary with exposure length (L. Sun and Shapiro 2022).

- Design-Based Approaches: Arkhangelsky et al. (2024) offer further refinements that incorporate inverse probability weighting.

- Goal: Improve balance and reduce bias from non-random treatment timing.

-

Stacked DID (Simpler but Biased)

- Build stacked datasets for each treatment cohort, running separate regressions for each “event window.”

- This approach is simpler but can still carry biases if the underlying assumptions are violated (Gormley and Matsa 2011; Cengiz et al. 2019; Deshpande and Li 2019).

-

Doubly Robust Difference-in-Differences Estimators (DR-DID) (Sant’Anna and Zhao 2020)

- DR-DID estimators combine outcome regression and propensity score weighting to identify treatment effects, remaining consistent if either model is correctly specified.

- They achieve local efficiency under joint correctness and can be applied to both panel and repeated cross-section data.

- Nonlinear Difference-in-Differences

30.6.5 Best Practices and Recommendations

Below are practical guidelines for deciding when to use TWFE and how to diagnose or address potential bias.

-

When is TWFE Appropriate?

- Single Treatment Period: TWFE DiD works well if there is only one treatment period for all treated units (no variation in timing).

- Homogeneous Effects: If strong theoretical or empirical reasons suggest constant treatment effects across cohorts and over time, TWFE remains a reasonable choice.

-

Diagnosing and Addressing Bias with Staggered Adoption

- Plot Treatment Timing: Examine the distribution of treatment timing across units. If treatment adoption is highly staggered, TWFE is likely to produce biased estimates.

-

Decomposition Methods: Use the Goodman-Bacon Decomposition (Goodman-Bacon 2021) to see how TWFE pools comparisons (and whether negative weights emerge). If decomposition is infeasible (e.g., unbalanced panels), the share of never-treated units can indicate potential bias severity.

- Decomposes the TWFE DiD estimate into two-group, two-period comparisons.

- Identifies which comparisons receive negative weights, which can lead to biased estimates.

- Helps determine the influence of specific groups on the overall estimate.

- Discuss Heterogeneity: Explicitly state the likelihood of treatment effect heterogeneity; incorporate it into the research design.

-

Event-Study Specifications within TWFE

- Avoid Arbitrary Binning: Do not collapse multiple time periods into a single bin unless you can justify homogeneous effects within that bin.

- Full Relative-Time Indicators: Include flexible event-time indicators, carefully choosing a reference period (commonly \(-1\), the year before treatment). Specifically, Include fully flexible relative time indicators, and justify the reference period (usually \(l = -1\) or the period just prior to treatment).

- Beware of Multicollinearity: Including leads and lags can cause multicollinearity and artificially produce significant “pre-trends.”

- Drop the Right Periods: If all units eventually get treated, dropping post-treatment periods accidentally can bias results.

- Consider Alternative Estimators

30.7 Multiple Periods and Variation in Treatment Timing

TWFE has been extended beyond the simple DiD setup to multiple periods and staggered adoption (where treatment occurs at different times for different units). Such designs are common in applied economics, public policy, and longitudinal research. However, standard TWFE regressions can be biased in these contexts when treatment effects are heterogeneous across groups or over time.

30.7.1 Staggered Difference-in-Differences

In staggered treatment adoption (also called event-study DiD or dynamic DiD):

- Different units adopt the treatment at different time periods.

- Standard TWFE often produces biased estimates because it “pools” all treated units (regardless of when they started treatment) together, implicitly comparing newly treated units to already treated ones.

- Treatments that occurred earlier may contaminate the counterfactual for later adopters if the model does not properly handle dynamic or heterogeneous effects (Wing et al. 2024; Baker, Larcker, and Wang 2022).

- For applied guidance, see (Wing et al. 2024) and recommendations in (Baker, Larcker, and Wang 2022).

Researchers should be aware that standard TWFE can mix treatment effects of early adopters (long-exposed) with later adopters (newly exposed), potentially assigning negative weights to particular group comparisons (Goodman-Bacon 2021).

When using staggered adoption, the following assumptions are critical:

-

Rollout Exogeneity

Treatment assignment and timing should be uncorrelated with potential outcomes.- Evidence: Regress adoption on pre-treatment variables. And if you find evidence of correlation, include linear trends interacted with pre-treatment variables (Hoynes and Schanzenbach 2009)

-

Evidence (Deshpande and Li 2019, 223):

- Treatment is random: Regress treatment status at the unit level to all pre-treatment observables. If you have some that are predictive of treatment status, you might have to argue why it’s not a worry. At best, you want this.

- Treatment timing is random: Conditional on treatment, regress timing of the treatment on pre-treatment observables. At least, you want this.

No Confounding Events

Ensure no other policies or shocks coincide with the staggered treatment rollout.-

Exclusion Restrictions

- No Anticipation: Treatment timing should not affect outcomes prior to treatment.

- Invariance to History: Treatment duration shouldn’t matter; only the treated status matters (often violated).

-

Standard DID Assumptions

- Parallel Trends (Conditional or Unconditional)

- Random Sampling

- Overlap (Common Support)

- Effect Additivity

30.8 Modern Estimators for Staggered Adoption

30.8.1 Group-Time Average Treatment Effects (Callaway and Sant’Anna 2021)

Notation Recap

\(Y_{it}(0)\): Potential outcome for unit \(i\) at time \(t\) in the absence of treatment.

\(Y_{it}(g)\): Potential outcome for unit \(i\) at time \(t\) if first treated in period \(g\).

-

\(Y_{it}\): Observed outcome for unit \(i\) at time \(t\).

\[ Y_{it} = \begin{cases} Y_{it}(0), & \text{if unit } i \text{ never treated ( } C_i = 1 \text{)} \\ 1\{G_i > t\} Y_{it}(0) + 1\{G_i \le t\} Y_{it}(G_i), & \text{otherwise} \end{cases} \]

\(G_i\): Group assignment, i.e., the time period when unit \(i\) first receives treatment.

\(C_i = 1\): Indicator that unit \(i\) never receives treatment (the never-treated group).

\(D_{it} = 1\{G_i \le t\}\): Indicator that unit \(i\) has been treated by time \(t\).

Assumptions

The following assumptions are typically imposed to identify treatment effects in staggered adoption settings.

Staggered Treatment Adoption

Once treated, a unit remains treated in all subsequent periods.

Formally, \(D_{it}\) is non-decreasing in \(t\).-

Parallel Trends Assumptions (Conditional or Unconditional on Covariates)

Two common variants:

-

Parallel trends based on never-treated units: \[

\mathbb{E}[Y_t(0) - Y_{t-1}(0) | G_i = g] = \mathbb{E}[Y_t(0) - Y_{t-1}(0) | C_i = 1]

\] Interpretation:

- The average potential outcome trends of the treated group (\(G_i = g\)) are the same as the never-treated group, absent treatment.

-

Parallel trends based on not-yet-treated units: \[

\mathbb{E}[Y_t(0) - Y_{t-1}(0) | G_i = g] = \mathbb{E}[Y_t(0) - Y_{t-1}(0) | D_{is} = 0, G_i \ne g]

\] Interpretation:

- Units not yet treated by time \(s\) (\(D_{is} = 0\)) can serve as controls for units first treated at \(g\).

These assumptions can also be conditional on covariates \(X\), as:

\[ \mathbb{E}[Y_t(0) - Y_{t-1}(0) | X_i, G_i = g] = \mathbb{E}[Y_t(0) - Y_{t-1}(0) | X_i, C_i = 1] \]

-

Parallel trends based on never-treated units: \[

\mathbb{E}[Y_t(0) - Y_{t-1}(0) | G_i = g] = \mathbb{E}[Y_t(0) - Y_{t-1}(0) | C_i = 1]

\] Interpretation:

Random Sampling

Units are sampled independently and identically from the population.Irreversibility of Treatment

Once treated, units do not revert to untreated status.Overlap (Positivity)

For each group \(g\), the propensity of receiving treatment at \(g\) lies strictly within \((0, 1)\): \[ 0 < \mathbb{P}(G_i = g | X_i) < 1 \]

The Group-Time ATT, \(ATT(g, t)\), measures the average treatment effect for units first treated in period \(g\), evaluated at time \(t\).

\[ ATT(g, t) = \mathbb{E}[Y_t(g) - Y_t(0) | G_i = g] \]

Interpretation:

\(g\) indexes when the group first receives treatment.

\(t\) is the time period when the effect is evaluated.

\(ATT(g, t)\) captures how treatment effects evolve over time, following adoption at time \(g\).

Identification of \(ATT(g, t)\)

Using Never-Treated Units as Controls: \[ ATT(g, t) = \mathbb{E}[Y_t - Y_{g-1} | G_i = g] - \mathbb{E}[Y_t - Y_{g-1} | C_i = 1], \quad \forall t \ge g \]

Using Not-Yet-Treated Units as Controls: \[ ATT(g, t) = \mathbb{E}[Y_t - Y_{g-1} | G_i = g] - \mathbb{E}[Y_t - Y_{g-1} | D_{it} = 0, G_i \ne g], \quad \forall t \ge g \]

-

Conditional Parallel Trends (with Covariates):

If treatment assignment depends on covariates \(X_i\), adjust the parallel trends assumption:- Never-treated controls: \[ ATT(g, t) = \mathbb{E}[Y_t - Y_{g-1} | X_i, G_i = g] - \mathbb{E}[Y_t - Y_{g-1} | X_i, C_i = 1], \quad \forall t \ge g \]

- Not-yet-treated controls: \[ ATT(g, t) = \mathbb{E}[Y_t - Y_{g-1} | X_i, G_i = g] - \mathbb{E}[Y_t - Y_{g-1} | X_i, D_{it} = 0, G_i \ne g], \quad \forall t \ge g \]

Aggregating \(ATT(g, t)\): Common Parameters of Interest

-

Average Treatment Effect per Group (\(\theta_S(g)\)):

Average effect over all periods after treatment for group \(g\): \[ \theta_S(g) = \frac{1}{\tau - g + 1} \sum_{t = g}^{\tau} ATT(g, t) \]- \(\tau\): Last time period in the panel.

Overall Average Treatment Effect on the Treated (ATT) (\(\theta_S^O\)):

Weighted average of \(\theta_S(g)\) across groups \(g\), weighted by their group size: \[ \theta_S^O = \sum_{g=2}^{\tau} \theta_S(g) \cdot \mathbb{P}(G_i = g) \]Dynamic Treatment Effects (\(\theta_D(e)\)):

Average effect after \(e\) periods of treatment exposure: \[ \theta_D(e) = \sum_{g=2}^{\tau} \mathbb{1}\{g + e \le \tau\} \cdot ATT(g, g + e) \cdot \mathbb{P}(G_i = g | g + e \le \tau) \]Calendar Time Treatment Effects (\(\theta_C(t)\)):

Average treatment effect at time \(t\) across all groups treated by \(t\): \[ \theta_C(t) = \sum_{g=2}^{\tau} \mathbb{1}\{g \le t\} \cdot ATT(g, t) \cdot \mathbb{P}(G_i = g | g \le t) \]Average Calendar Time Treatment Effect (\(\theta_C\)):

Average of \(\theta_C(t)\) across all post-treatment periods: \[ \theta_C = \frac{1}{\tau - 1} \sum_{t=2}^{\tau} \theta_C(t) \]

The staggered() function offers several estimands, each defining a different way of aggregating group-time average treatment effects into a single overall treatment effect:

Simple: Equally weighted across all groups.

Cohort: Weighted by group sizes (i.e., treated cohorts).

Calendar: Weighted by the number of observations in each calendar time.

library(staggered)

library(fixest)

data("base_stagg")

# Simple weighted average ATT

staggered(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "simple"

)

#> estimate se se_neyman

#> 1 -0.7110941 0.2211943 0.2214245

# Cohort weighted ATT (i.e., by treatment cohort size)

staggered(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "cohort"

)

#> estimate se se_neyman

#> 1 -2.724242 0.2701093 0.2701745

# Calendar weighted ATT (i.e., by year)

staggered(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "calendar"

)

#> estimate se se_neyman

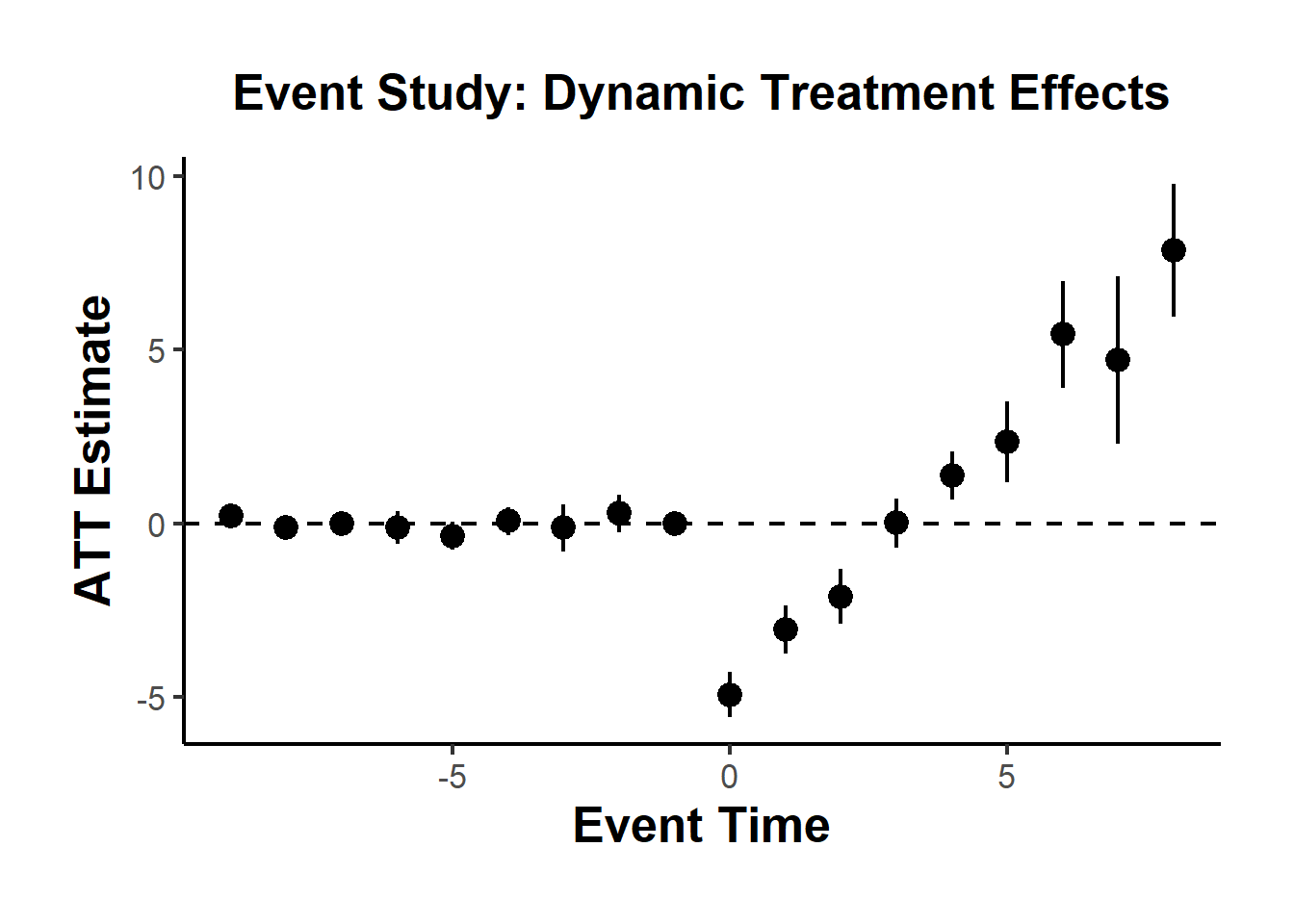

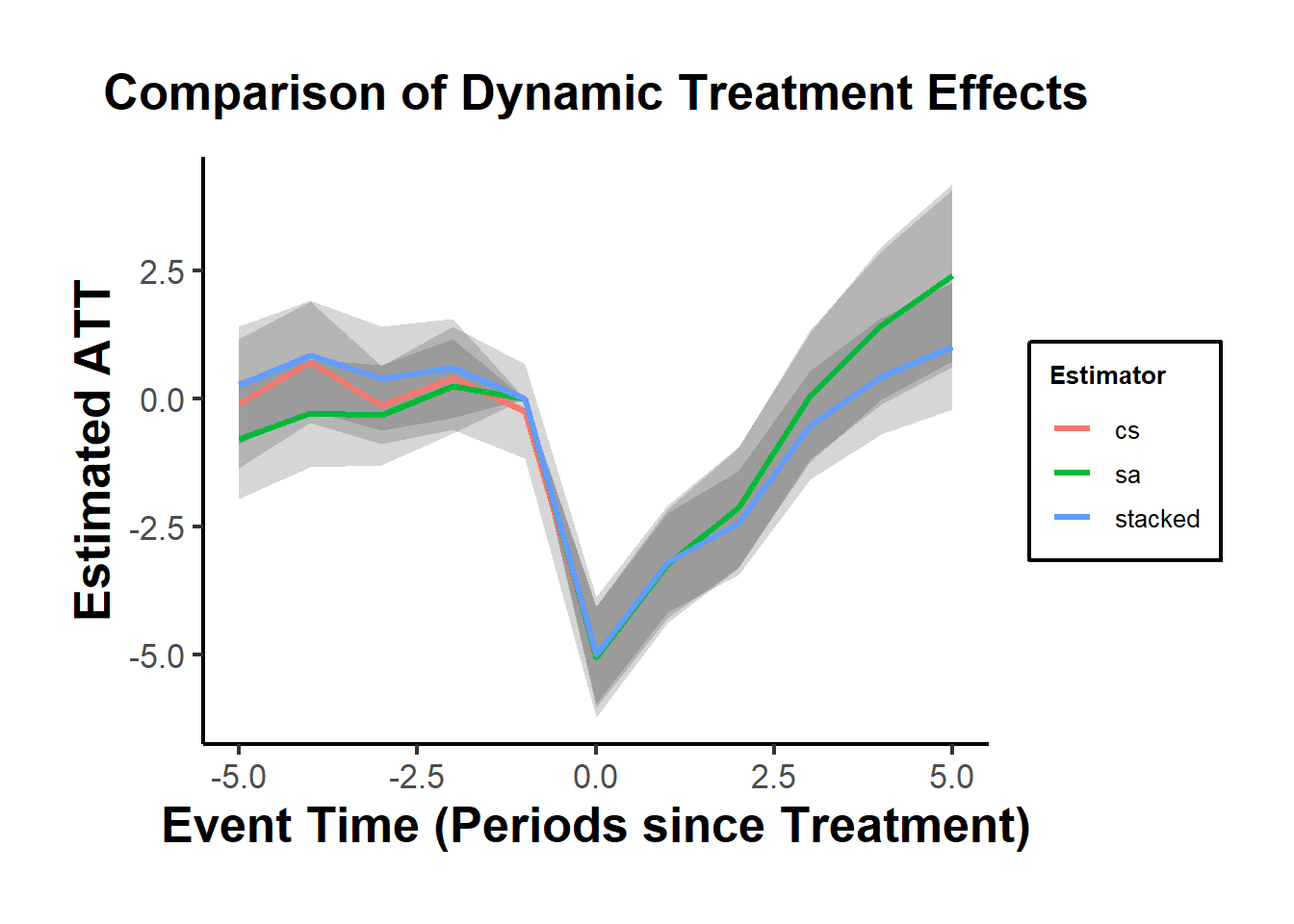

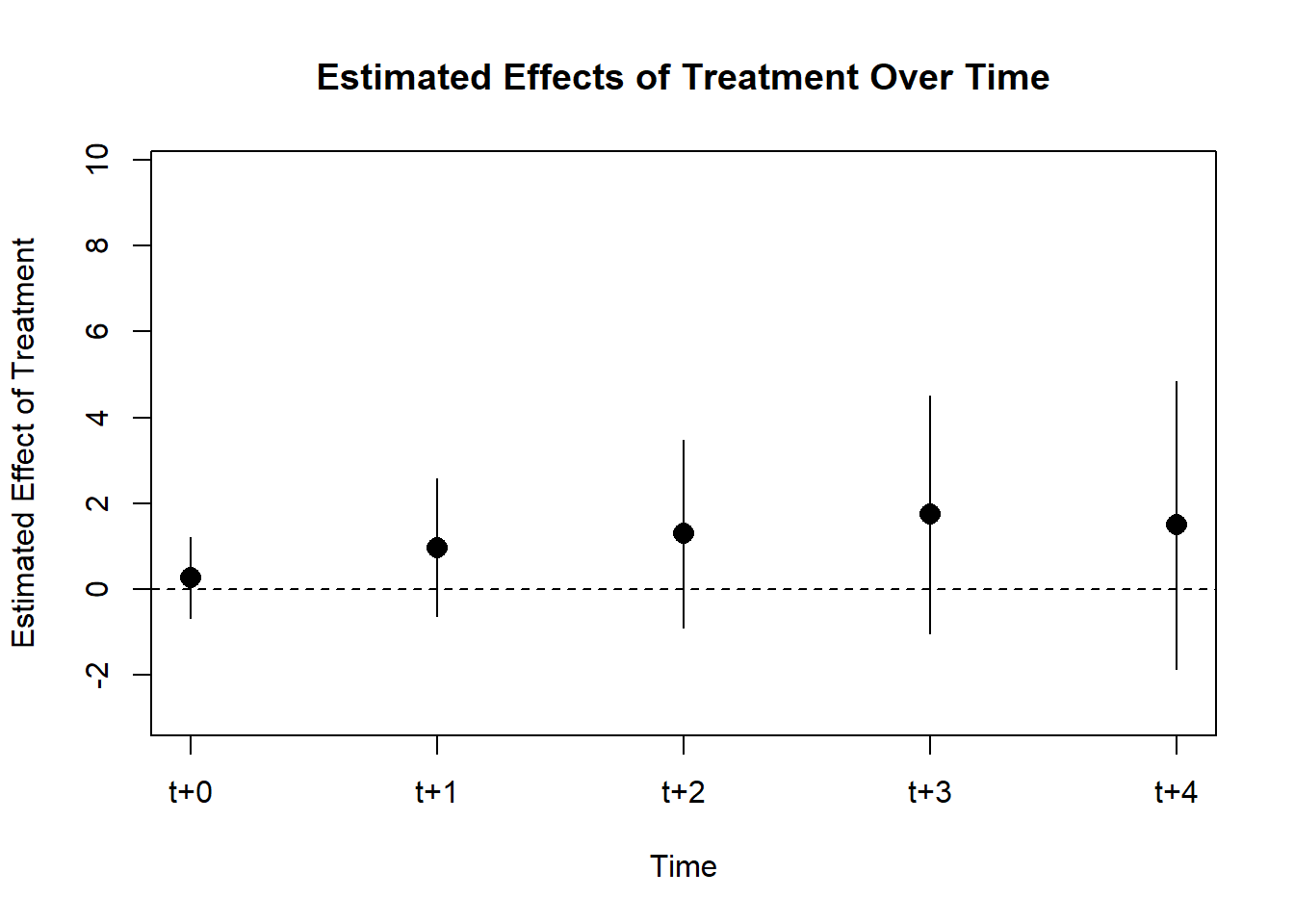

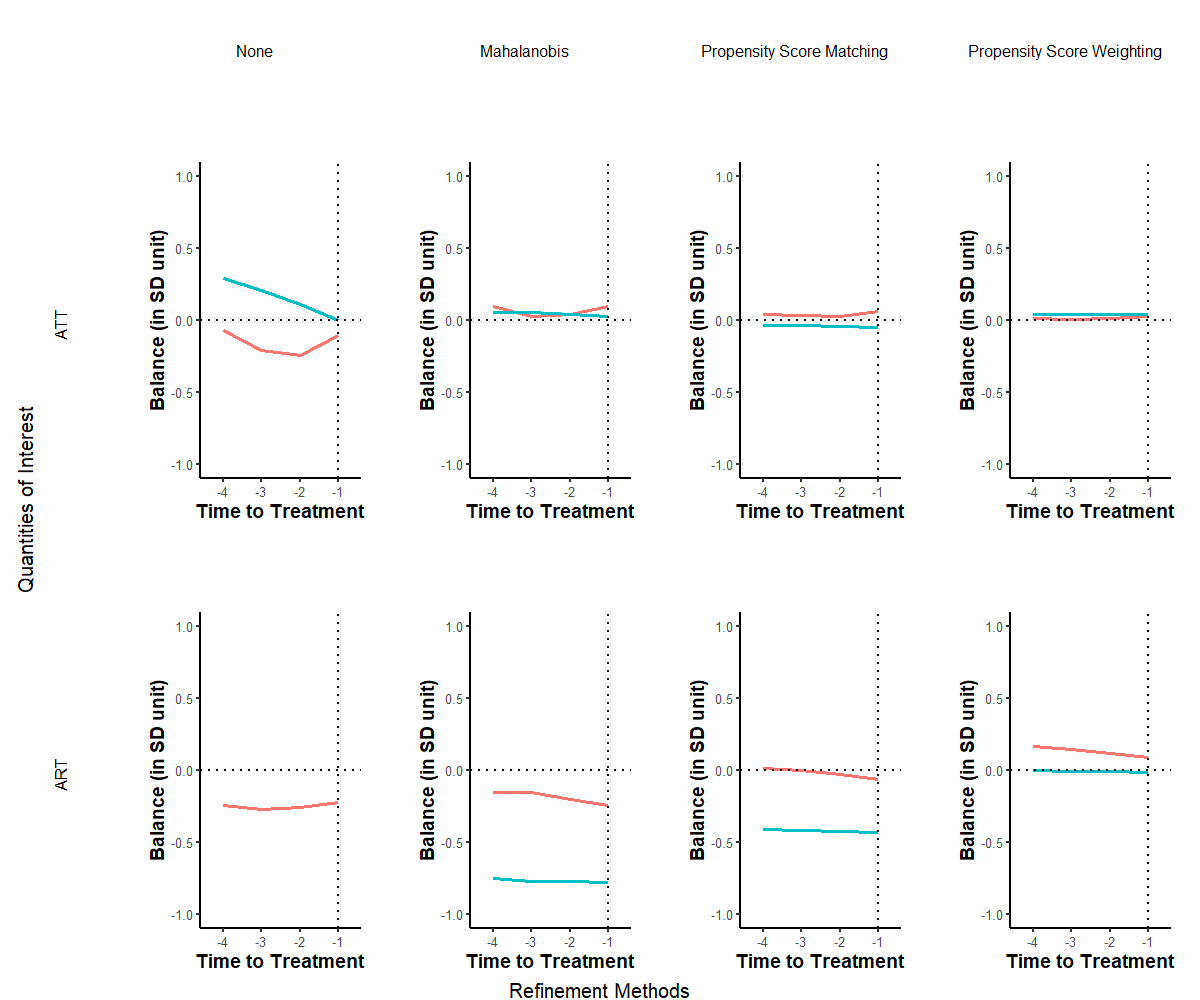

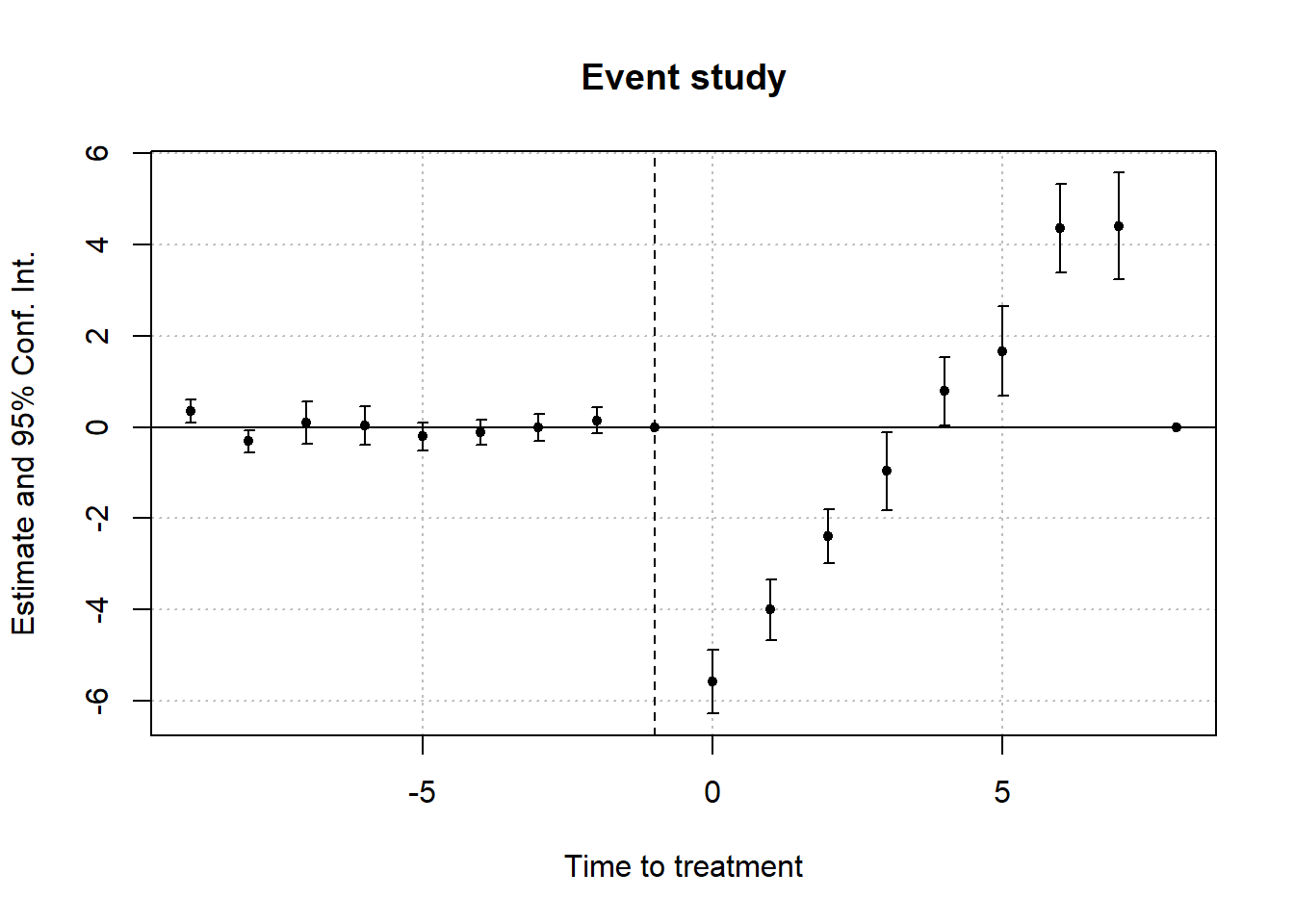

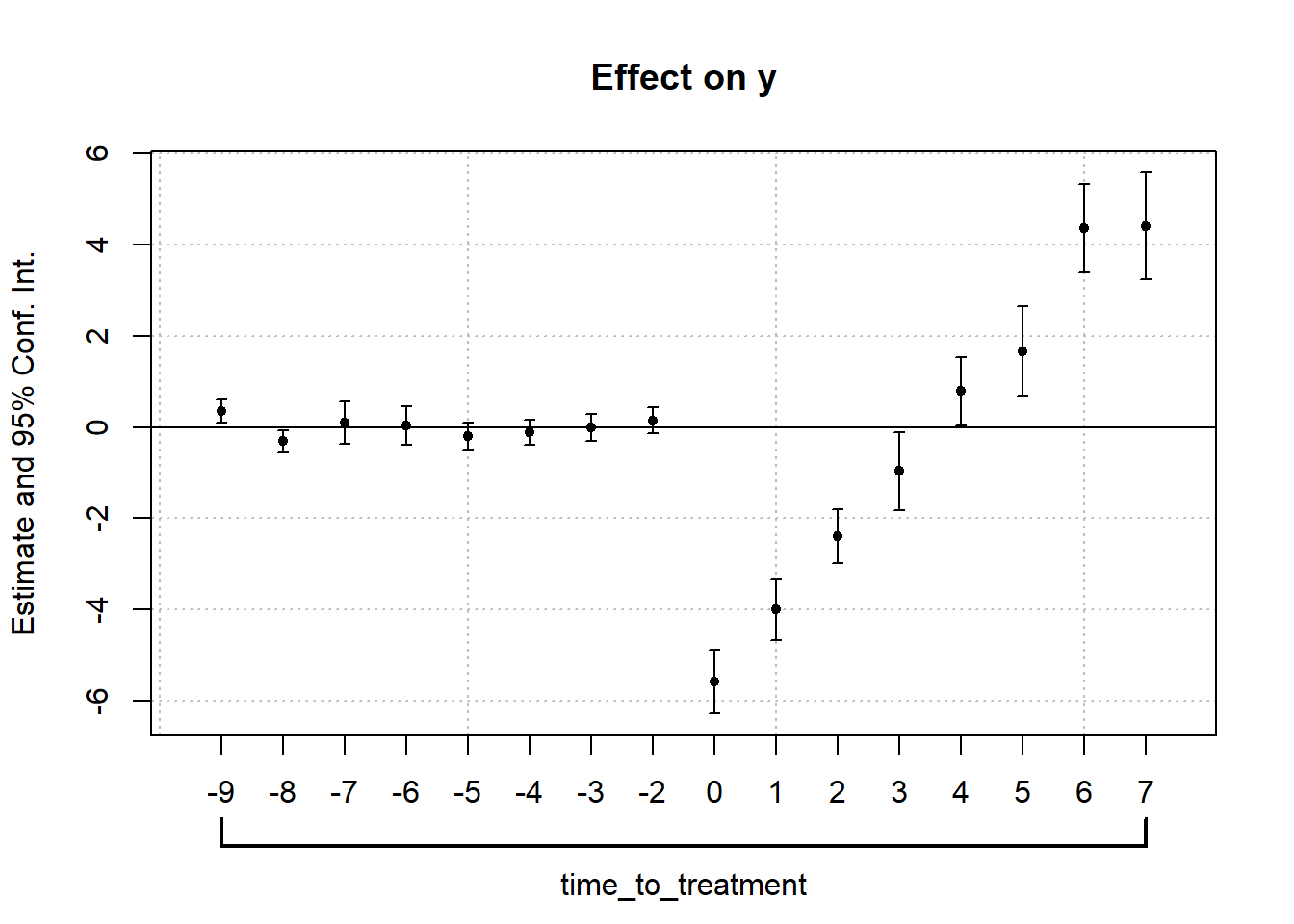

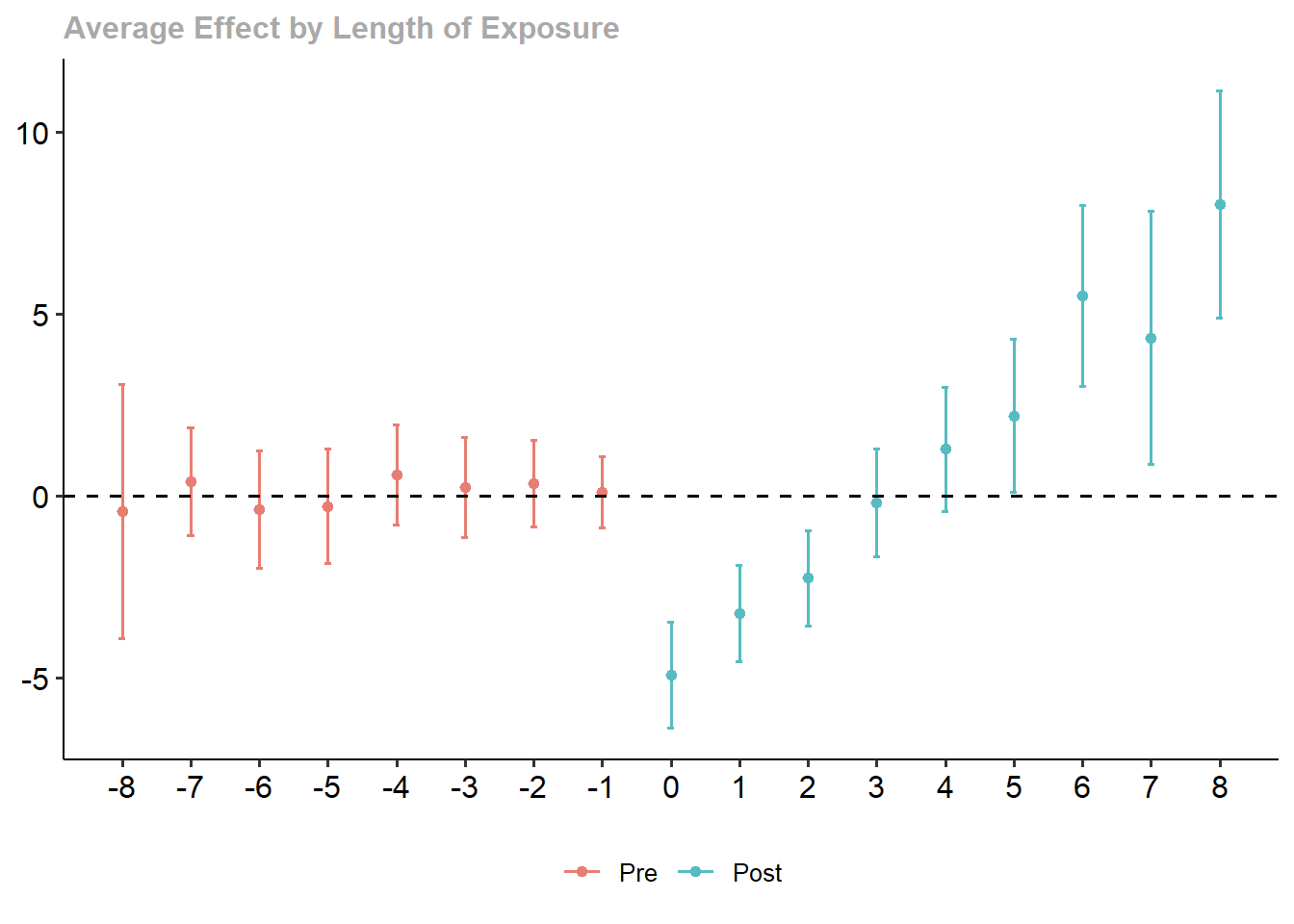

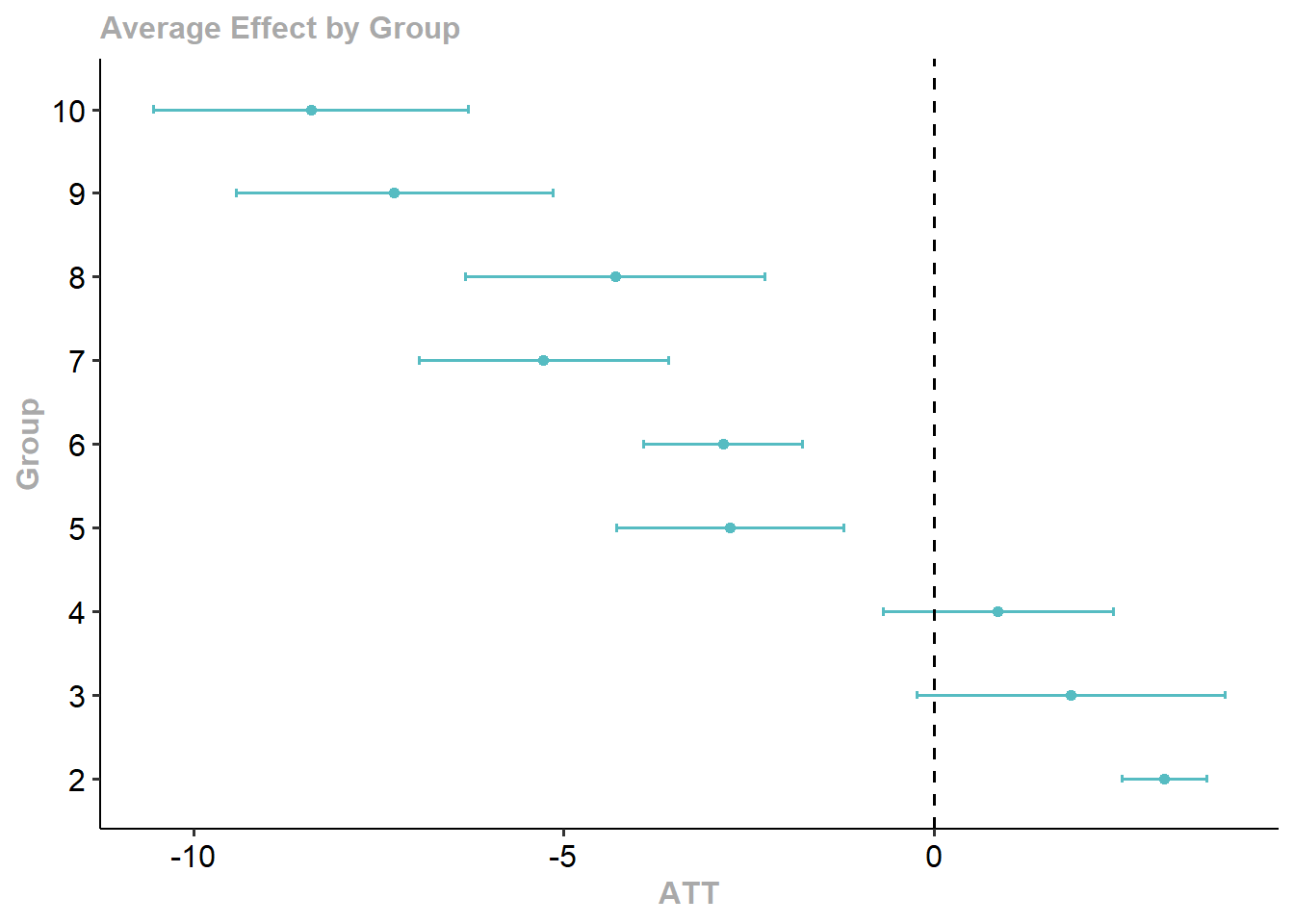

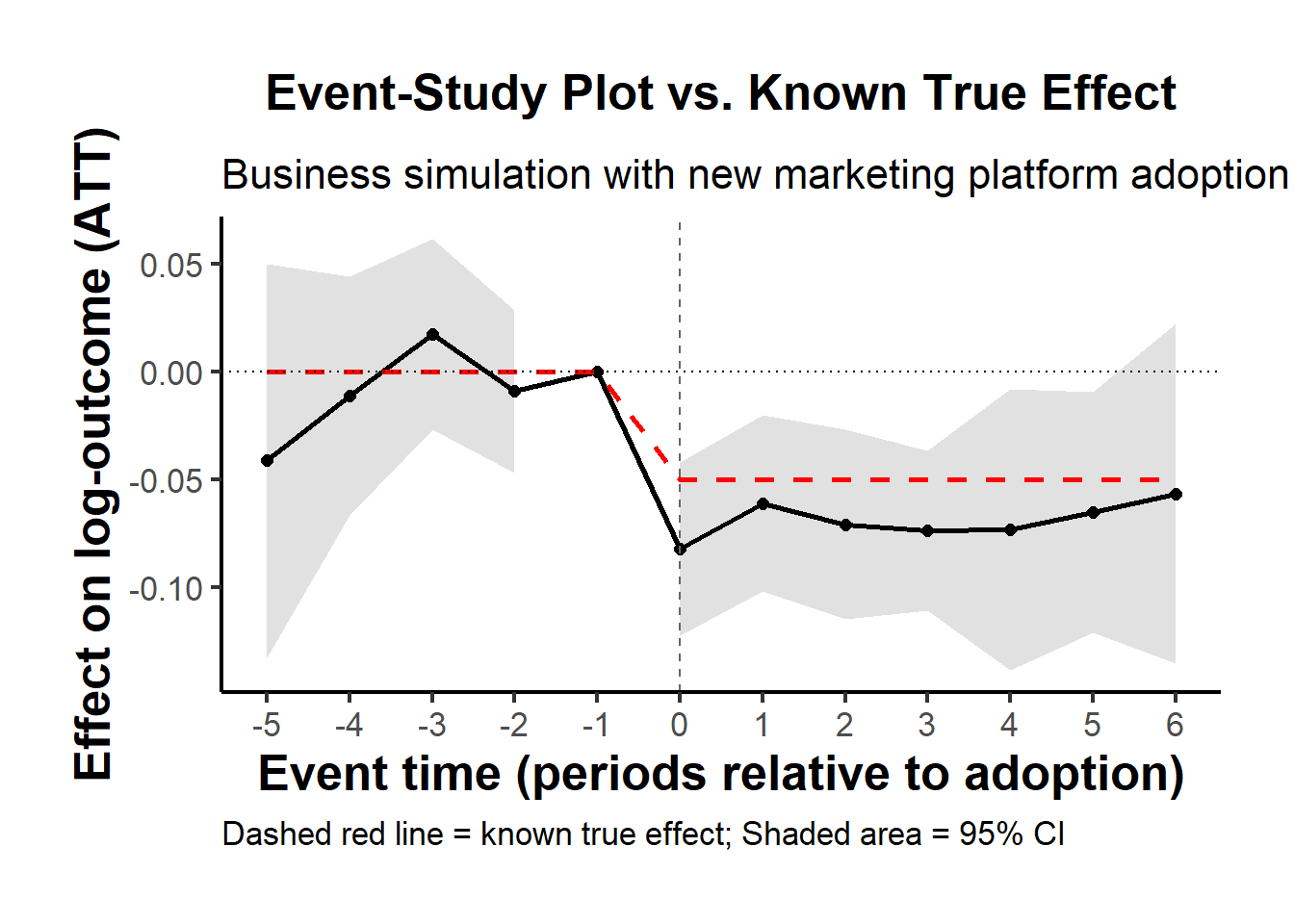

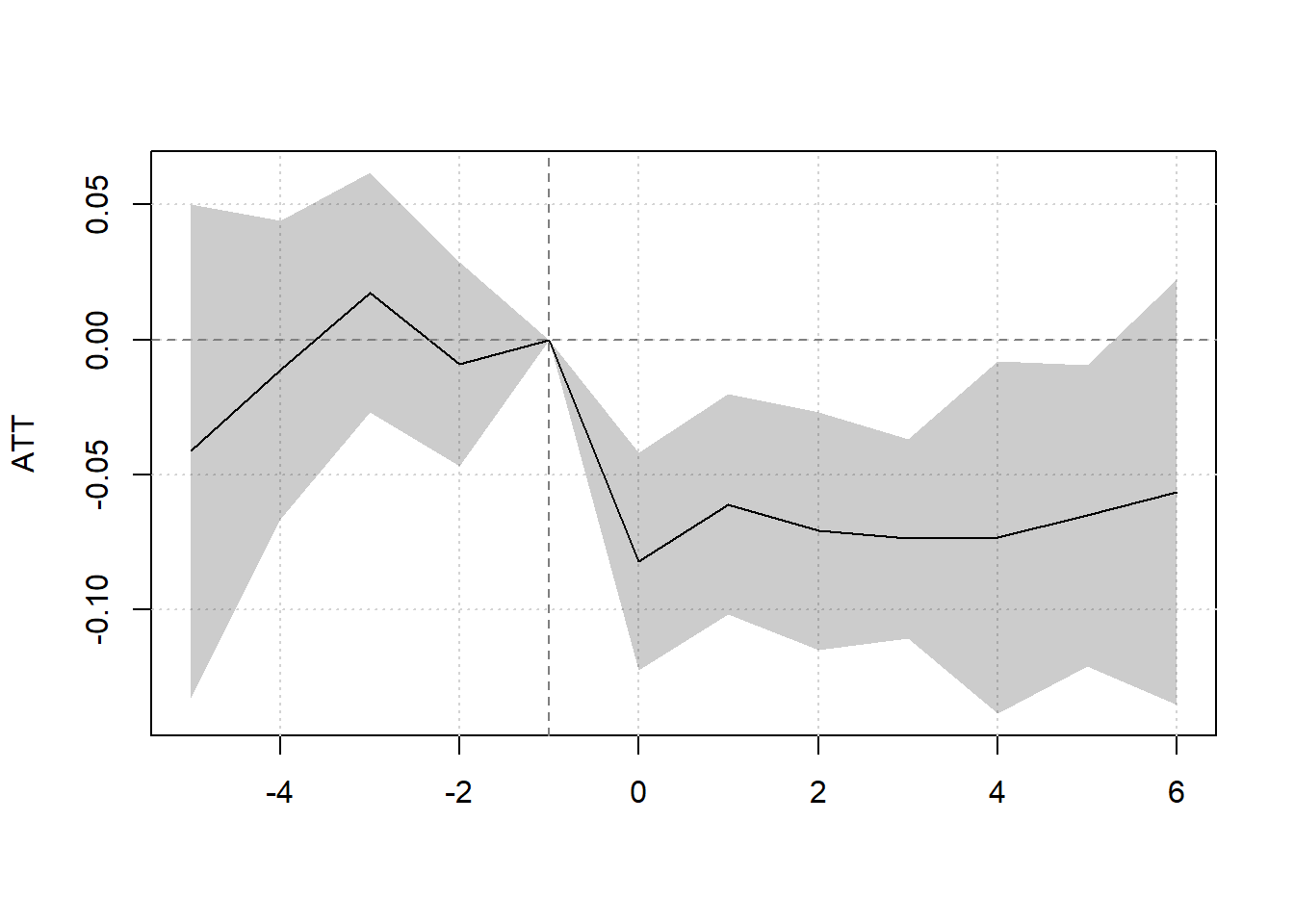

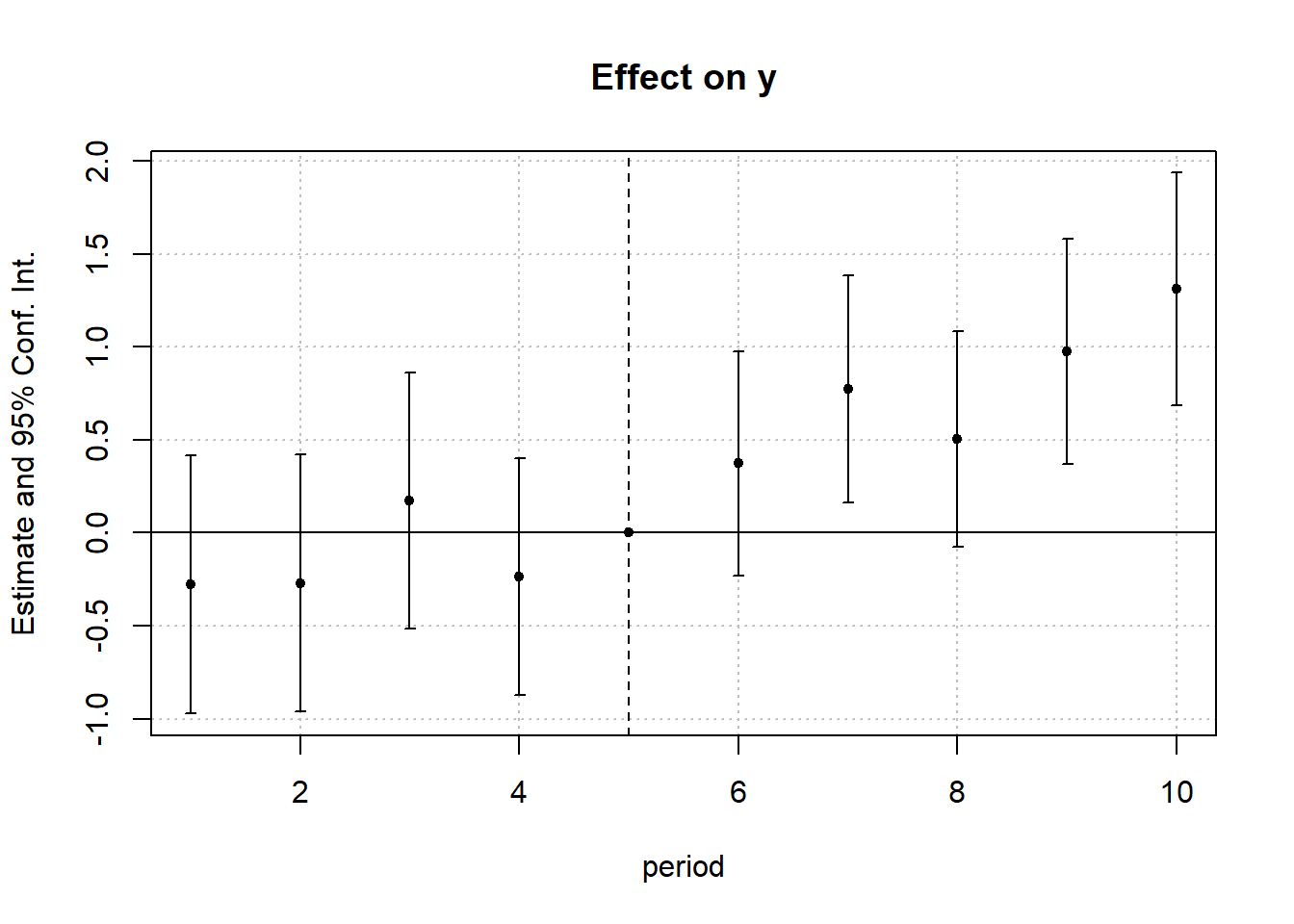

#> 1 -0.5861831 0.1768297 0.1770729To visualize treatment dynamics around the time of adoption, the event study specification estimates dynamic treatment effects relative to the time of treatment (Figure 30.9).

res <- staggered(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "eventstudy",

eventTime = -9:8

)

# Plotting the event study with pointwise confidence intervals

library(ggplot2)

library(dplyr)

ggplot(

res |> mutate(

ymin_ptwise = estimate - 1.96 * se,

ymax_ptwise = estimate + 1.96 * se

),

aes(x = eventTime, y = estimate)

) +

geom_pointrange(aes(ymin = ymin_ptwise, ymax = ymax_ptwise)) +

geom_hline(yintercept = 0, linetype = "dashed") +

xlab("Event Time") +

ylab("ATT Estimate") +

ggtitle("Event Study: Dynamic Treatment Effects") +

causalverse::ama_theme()

Figure 30.9: Event Study Dynamic Treatment Effects

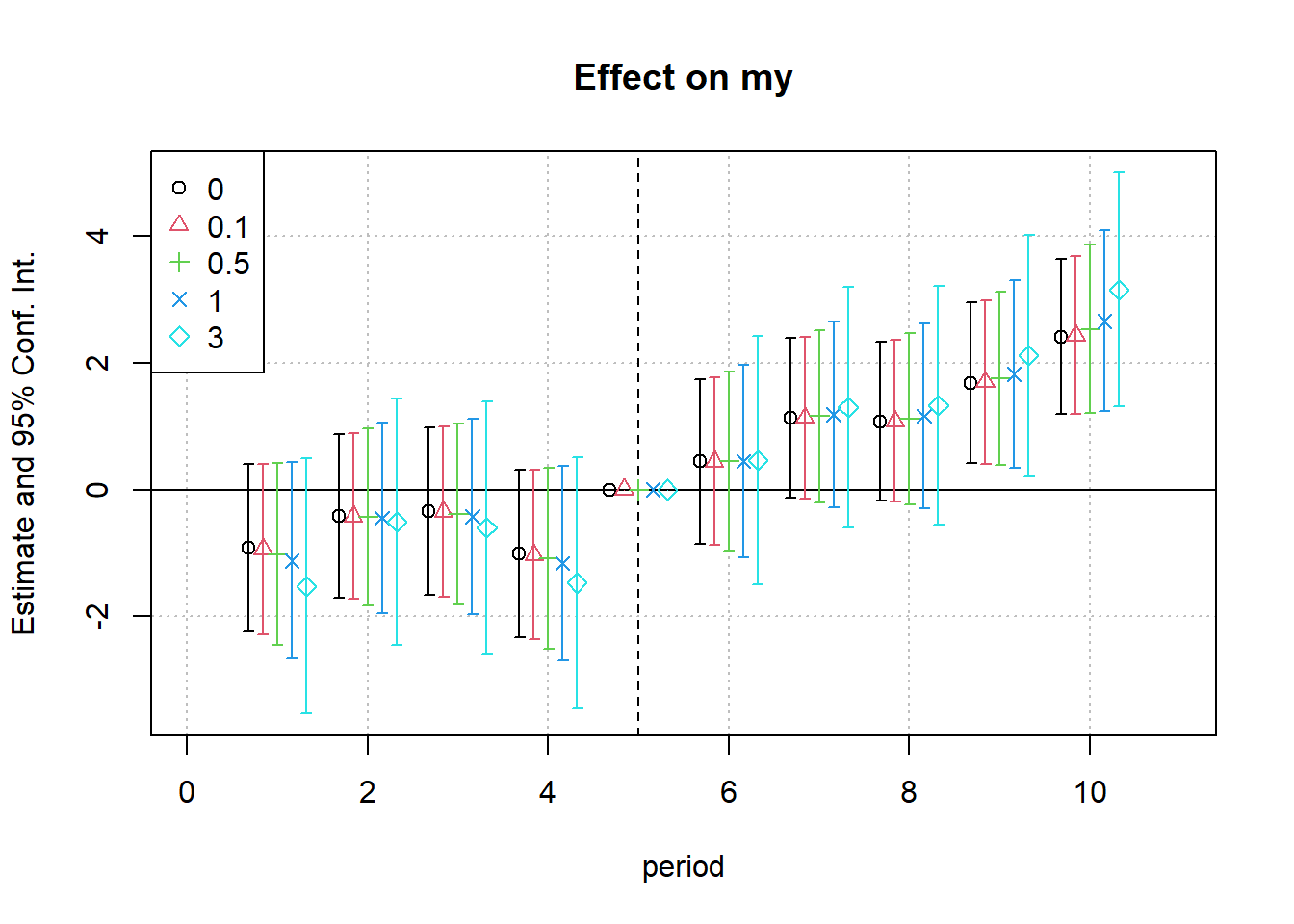

The staggered package also includes direct implementations of alternative estimators:

staggered_cs()implements the Callaway and Sant’Anna (2021) estimator.staggered_sa()implements the L. Sun and Abraham (2021) estimator, which adjusts for bias from comparisons involving already-treated units.

# Callaway and Sant’Anna estimator

staggered_cs(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "simple"

)

#> estimate se se_neyman

#> 1 -0.7994889 0.4484987 0.4486122

# Sun and Abraham estimator

staggered_sa(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "simple"

)

#> estimate se se_neyman

#> 1 -0.7551901 0.4407818 0.4409525To assess statistical significance under the sharp null hypothesis \(H_0: \text{TE} = 0\), the staggered package includes an option for Fisher’s randomization (permutation) test. This approach tests whether the observed estimate could plausibly occur under a random reallocation of treatment timings.

# Fisher Randomization Test

staggered(

df = base_stagg,

i = "id",

t = "year",

g = "year_treated",

y = "y",

estimand = "simple",

compute_fisher = TRUE,

num_fisher_permutations = 100

)

#> estimate se se_neyman fisher_pval fisher_pval_se_neyman

#> 1 -0.7110941 0.2211943 0.2214245 0 0

#> num_fisher_permutations

#> 1 100This test provides a non-parametric method for inference and is particularly useful when the number of groups is small or standard errors are unreliable due to clustering or heteroskedasticity.

30.8.2 Cohort Average Treatment Effects (L. Sun and Abraham 2021)

L. Sun and Abraham (2021) propose a solution to the TWFE problem in staggered adoption settings by introducing an interaction-weighted estimator for dynamic treatment effects. This estimator is based on the concept of Cohort Average Treatment Effects on the Treated (CATT), which accounts for variation in treatment timing and dynamic treatment responses.

Traditional TWFE estimators implicitly assume homogeneous treatment effects and often rely on treated units serving as controls for later-treated units. When treatment effects vary over time or across groups, this leads to contaminated comparisons, especially in event-study specifications.

L. Sun and Abraham (2021) address this issue by:

Estimating cohort-specific treatment effects relative to time since treatment.

Using never-treated units as controls, or in their absence, the last-treated cohort.

30.8.2.1 Defining the Parameter of Interest: CATT

Let \(E_i = e\) denote the period when unit \(i\) first receives treatment. The cohort-specific average treatment effect on the treated (CATT) is defined as: \[ CATT_{e, l} = \mathbb{E}[Y_{i, e + l} - Y_{i, e + l}^\infty \mid E_i = e] \] Where:

\(l\) is the relative period (e.g., \(l = -1\) is one year before treatment, \(l = 0\) is the treatment year).

\(Y_{i, e + l}^\infty\) is the potential outcome without treatment.

\(Y_{i, e + l}\) is the observed outcome.

This formulation allows one to trace out the dynamic effect of treatment for each cohort, relative to their treatment start time.

L. Sun and Abraham (2021) extend the interaction-weighted idea to panel settings, originally introduced by Gibbons, Suárez Serrato, and Urbancic (2018) in a cross-sectional context.

They propose regressing the outcome on:

Relative time indicators constructed by interacting treatment cohort (\(E_i\)) with time (\(t\)).

Unit and time fixed effects.

This method explicitly estimates \(CATT_{e, l}\) terms, avoiding the contaminating influence of already-treated units that TWFE models often suffer from.

Relative Period Bin Indicator

\[ D_{it}^l = \mathbb{1}(t - E_i = l) \]

- \(E_i\): The time period when unit \(i\) first receives treatment.

- \(l\): The relative time period: how many periods have passed since treatment began.

- Static Specification

\[ Y_{it} = \alpha_i + \lambda_t + \mu_g \sum_{l \ge 0} D_{it}^l + \epsilon_{it} \]

- \(\alpha_i\): Unit fixed effects.

- \(\lambda_t\): Time fixed effects.

- \(\mu_g\): Effect for group \(g\).

- Excludes periods prior to treatment.

- Dynamic Specification

\[ Y_{it} = \alpha_i + \lambda_t + \sum_{\substack{l = -K \\ l \neq -1}}^{L} \mu_l D_{it}^l + \epsilon_{it} \]

- Includes leads and lags of treatment indicators \(D_{it}^l\).

- Excludes one period (typically \(l = -1\)) to avoid perfect collinearity.

- Tests for pre-treatment parallel trends rely on the leads (\(l < 0\)).

30.8.2.2 Identifying Assumptions

- Parallel Trends

For identification, it is assumed that untreated potential outcomes follow parallel trends across cohorts in the absence of treatment: \[ \mathbb{E}[Y_{it}^\infty - Y_{i, t-1}^\infty \mid E_i = e] = \text{constant across } e \] This allows us to use never-treated or not-yet-treated units as valid counterfactuals.

- No Anticipatory Effects

Treatment should not influence outcomes before it is implemented. That is: \[ CATT_{e, l} = 0 \quad \text{for all } l < 0 \] This ensures that any pre-trends are not driven by behavioral changes in anticipation of treatment.

- Treatment Effect Homogeneity (Optional)

The treatment effect is consistent across cohorts for each relative period. Each adoption cohort should have the same path of treatment effects. In other words, the trajectory of each treatment cohort is similar.

Although L. Sun and Abraham (2021) allow treatment effect heterogeneity, some settings may assume homogeneous effects within cohorts and periods:

Each cohort has the same pattern of response over time.

This is relaxed in their design but assumed in simpler TWFE settings.

30.8.2.3 Comparison to Other Designs

Different DiD designs make distinct assumptions about how treatment effects vary (Table 30.7)

| Study | Vary Over Time | Vary Across Cohorts | Notes |

|---|---|---|---|

| L. Sun and Abraham (2021) | ✓ | ✓ | Allows full heterogeneity |

| Callaway and Sant’Anna (2021) | ✓ | ✓ | Estimates group × time ATTs |

| Borusyak, Jaravel, and Spiess (2024) | ✓ | ✗ |

Homogeneous across cohorts Heterogeneity over time |

| Athey and Imbens (2022) | ✗ | ✓ | Heterogeneity only across adoption cohorts |

| Clement De Chaisemartin and D’haultfœuille (2023) | ✓ | ✓ | Complete heterogeneity |

| Goodman-Bacon (2021) | ✓ or ✗ | ✗ or ✓ |

Restricts one dimension Heterogeneity either “vary across units but not over time” or “vary over time but not across units”. |

30.8.2.4 Sources of Treatment Effect Heterogeneity

Several forces can generate heterogeneous treatment effects:

Calendar Time Effects: Macro events (e.g., recessions, policy changes) affect cohorts differently.

Selection into Timing: Units self-select into early/late treatment based on anticipated effects.

Composition Differences: Adoption cohorts may differ in observed or unobserved ways.

Such heterogeneity can bias TWFE estimates, which often average effects across incomparable groups.

30.8.2.5 Technical Issues

When using an event-study TWFE regression to estimate dynamic treatment effects in staggered adoption settings, one must exclude certain relative time indicators to avoid perfect multicollinearity. This arises because relative period indicators are linearly dependent due to the presence of unit and time fixed effects.

Specifically, the following two terms must be addressed:

The period immediately before treatment (\(l = -1\)): This period is typically omitted and serves as the baseline for comparison. This normalization has been standard practice in event study regressions prior to L. Sun and Abraham (2021) .

A distant post-treatment period (e.g., \(l = +5\) or \(l = +10\)): L. Sun and Abraham (2021) clarified that in addition to the baseline period, at least one other relative time indicator, typically from the far tail of the post-treatment distribution, must be dropped, binned, or trimmed to avoid multicollinearity among the relative time dummies. This issue emerges because fixed effects absorb much of the within-unit and within-time variation, reducing the effective rank of the design matrix.

Dropping certain relative periods (especially pre-treatment periods) introduces an implicit normalization: the estimates for included periods are now interpreted relative to the omitted periods. If treatment effects are present in these omitted periods (e.g., due to anticipation or early effects), this will contaminate the estimates of included relative periods.

To avoid this contamination, researchers often assume that all pre-treatment periods have zero treatment effect, i.e.,

\[ CATT_{e, l} = 0 \quad \text{for all } l < 0 \]

This assumption ensures that excluded pre-treatment periods form a valid counterfactual, and estimates for \(l \geq 0\) are not biased due to normalization.