34 Instrumental Variables

In many empirical settings, we seek to estimate the causal effect of an explanatory variable \(X\) on an outcome variable \(Y\). A common starting point is the Ordinary Least Squares (OLS) regression:

\[ Y = \beta_0 + \beta_1 X + \varepsilon. \]

For OLS to provide an unbiased and consistent estimate of \(\beta_1\), the explanatory variable \(X\) must satisfy the exogeneity condition:

\[ \mathbb{E}[\varepsilon \mid X] = 0. \]

However, when \(X\) is correlated with the error term \(\varepsilon\), this assumption is violated, leading to endogeneity. As a result, the OLS estimator is biased and inconsistent. Common causes of endogeneity include:

- Omitted Variable Bias (OVB): When a relevant variable that is correlated with \(X\) is omitted from the regression, it becomes part of \(\varepsilon\), creating correlation between \(X\) and \(\varepsilon\).

- Simultaneity: When \(X\) and \(Y\) are jointly determined, such as in supply-and-demand models.

- Measurement Error: Errors in measuring \(X\) make the observed regressor correlated with the error term, biasing the estimate.

- Attenuation Bias in Errors-in-Variables: Under classical measurement error in the independent variable, the coefficient is biased toward zero, so the magnitude of the true effect is underestimated.
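The omitted-variable and measurement-error mechanisms are easy to see in a small simulation. The sketch below assumes a hypothetical data-generating process (all variable names and coefficients are made up for illustration): an omitted variable `w` pushes the OLS slope above its true value of 2, while classical measurement error in the regressor pulls it toward zero.

```r
# Hypothetical simulation: OLS bias from an omitted variable and from
# classical measurement error in the regressor. True slope is 2 in both cases.
set.seed(1)
n <- 1e5
beta <- 2

# Omitted variable: w drives both x and y but is left out of the regression
w <- rnorm(n)
x <- w + rnorm(n)
y <- 1 + beta * x + 3 * w + rnorm(n)
b_ovb <- unname(coef(lm(y ~ x))["x"])          # plim = 2 + 3 * Cov(x, w) / Var(x) = 3.5

# Classical measurement error: we only observe x_obs = x_true + noise
x_true <- rnorm(n)
y2 <- 1 + beta * x_true + rnorm(n)
x_obs <- x_true + rnorm(n)
b_me <- unname(coef(lm(y2 ~ x_obs))["x_obs"])  # plim = 2 * Var(x_true) / Var(x_obs) = 1

c(true = beta, omitted_variable = b_ovb, measurement_error = b_me)
```

Both estimates are far from 2 even with 100,000 observations: these biases do not shrink with sample size, which is why OLS is inconsistent here.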

Instrumental Variables (IV) estimation addresses endogeneity by introducing an instrument \(Z\) that affects \(Y\) only through \(X\). As in a randomized controlled trial (RCT), the goal is to use variation in the treatment that is as good as randomly assigned; IV achieves this by using only the variation in \(X\) that is driven by the instrument.

Logic of using an instrument:

- Use only the exogenous variation in the treatment (the part driven by \(Z\)) and discard all endogenous variation in the treatment.

- Use only the exogenous variation in the outcome (the part driven by \(Z\)) and discard all endogenous variation in the outcome.

- Relate the two: the relationship between these exogenous components of treatment and outcome, which are unrelated to omitted variables, identifies the causal effect.

For an instrument \(Z\) to be valid, it must satisfy two conditions:

Relevance Condition: The instrument \(Z\) must be correlated with the endogenous variable \(X\): \[ \text{Cov}(Z, X) \neq 0. \]

Exogeneity Condition (Exclusion Restriction): The instrument \(Z\) must be uncorrelated with the error term \(\varepsilon\) and affect \(Y\) only through \(X\): \[ \text{Cov}(Z, \varepsilon) = 0. \]

These conditions ensure that \(Z\) provides exogenous variation in \(X\), allowing us to isolate and estimate the causal effect of \(X\) on \(Y\). Random assignment of \(Z\) helps ensure that \(Z\) is uncorrelated with \(\varepsilon\), but it does not guarantee the exclusion restriction: we must also argue that \(Z\) influences \(Y\) only through \(X\).
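As a minimal sketch of the two conditions, assume a simulated data-generating process (all names and coefficients are hypothetical): `u` is an unobserved confounder that enters both `x` and the error term, so OLS is biased, while the instrument `z` is randomly generated (exogenous) and shifts `x` (relevant). The simple IV slope \(\text{Cov}(Z, Y)/\text{Cov}(Z, X)\) then lands close to the true effect of 2.

```r
# Hypothetical DGP: x is endogenous through u; z is a valid instrument.
set.seed(2)
n <- 1e5
u <- rnorm(n)                        # unobserved confounder (part of the error)
z <- rnorm(n)                        # instrument: random, affects y only via x
x <- 0.5 * z + u + rnorm(n)          # relevance: Cov(z, x) = 0.5
y <- 1 + 2 * x + 3 * u + rnorm(n)    # true effect of x is 2

cor(z, x)                            # relevance check: clearly nonzero
b_ols <- cov(x, y) / var(x)          # biased upward: x is correlated with u
b_iv  <- cov(z, y) / cov(z, x)       # uses only the variation in x driven by z
c(ols = b_ols, iv = b_iv)
```

Note that only relevance can be checked in the data this way; exogeneity of `z` holds here because the simulation builds it in.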

The IV approach dates back to early econometric research in the 1920s and 1930s, with a significant role in Cowles Commission studies on simultaneous equations. Key contributions include:

- P. G. Wright (1928): One of the earliest applications, estimating supply and demand for butter and flaxseed.

- J. D. Angrist and Krueger (1991): Popularized IV methods using quarter of birth as an instrument for education.

The credibility revolution in econometrics (1990s–2000s) led to widespread use of IVs in applied research, particularly in economics, political science, and epidemiology.

34.1 Challenges with Instrumental Variables

While IVs can provide a solution to endogeneity, several challenges arise:

- Exclusion Restriction Violations: If \(Z\) affects \(Y\) through any channel other than \(X\), the IV estimate is biased.

- Repeated Use of Instruments: Common instruments, such as weather or policy changes, may be invalid because of their widespread use across studies (Gallen 2020): each study that uses the same instrument for a different endogenous variable documents another channel through which the instrument may affect outcomes, threatening the exclusion restriction. Such instruments should be tested for validity (e.g., with a Hausman-like test).

- A notable example is Mellon (2021), who documents that 289 social science studies have used weather as an instrument for 195 variables, raising concerns about exclusion violations.

- Heterogeneous Treatment Effects: The Local Average Treatment Effect (LATE) estimated by IV applies only to compliers—units whose treatment status is affected by the instrument.

- Weak Instruments: Too little correlation with the endogenous regressor yields unstable estimates.

- Invalid Instruments: If the instrument violates exogeneity, the IV estimator is inconsistent.

- Interpretation Mistakes: Under heterogeneous treatment effects, the IV estimate is not the average effect for the whole population; it identifies only the effect for those “marginal” units whose treatment status is driven by the instrument.

34.2 Framework for Instrumental Variables

We consider a binary treatment framework where:

\(D_i \sim \text{Bernoulli}(p)\) is a dummy treatment variable.

\((Y_{0i}, Y_{1i})\) are the potential outcomes under control and treatment.

The observed outcome is: \[ Y_i = Y_{0i} + (Y_{1i} - Y_{0i}) D_i. \]

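We introduce an instrumental variable \(Z_i\) satisfying: \[ Z_i \perp (Y_{0i}, Y_{1i}, D_{0i}, D_{1i}), \] where \(D_{0i}\) and \(D_{1i}\) are the potential treatment statuses when \(Z_i = 0\) and \(Z_i = 1\) (defined formally in the LATE framework).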

- This means \(Z_i\) is independent of potential outcomes and potential treatment status.

- \(Z_i\) must also be correlated with \(D_i\) to satisfy the relevance condition.

34.2.1 Constant-Treatment-Effect Model

Under the constant treatment effect assumption (i.e., the treatment effect is the same for all individuals),

\[ \begin{aligned} Y_{0i} &= \alpha + \eta_i, \\ Y_{1i} - Y_{0i} &= \rho, \\ Y_i &= Y_{0i} + D_i (Y_{1i} - Y_{0i}) \\ &= \alpha + \eta_i + D_i \rho \\ &= \alpha + \rho D_i + \eta_i. \end{aligned} \]

where:

- \(\eta_i\) captures individual-level heterogeneity.

- \(\rho\) is the constant treatment effect.

The problem with OLS estimation is that \(D_i\) may be correlated with \(\eta_i\), leading to endogeneity bias.

34.2.2 Instrumental Variable Solution

A valid instrument \(Z_i\) allows us to estimate the causal effect \(\rho\) via:

\[ \begin{aligned} \rho &= \frac{\text{Cov}(Y_i, Z_i)}{\text{Cov}(D_i, Z_i)} \\ &= \frac{\text{Cov}(Y_i, Z_i) / V(Z_i) }{\text{Cov}(D_i, Z_i) / V(Z_i)} \\ &= \frac{\text{Reduced form estimate}}{\text{First-stage estimate}} \\ &= \frac{E[Y_i |Z_i = 1] - E[Y_i | Z_i = 0]}{E[D_i |Z_i = 1] - E[D_i | Z_i = 0 ]}. \end{aligned} \]

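This ratio measures the treatment effect only if \(Z_i\) is a valid instrument. To see why, take the covariance of both sides of \(Y_i = \alpha + \rho D_i + \eta_i\) with \(Z_i\): \(\text{Cov}(Y_i, Z_i) = \rho\,\text{Cov}(D_i, Z_i) + \text{Cov}(\eta_i, Z_i)\). Independence makes the last term zero, and relevance guarantees \(\text{Cov}(D_i, Z_i) \neq 0\), so we can divide. The last line of the derivation (the Wald estimator) applies when \(Z_i\) is binary.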
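The Wald form of the ratio can be checked on simulated data. This is a sketch under an assumed constant-effect data-generating process (the coefficients and the threshold rule for `d` are hypothetical): take-up depends on both the binary instrument and \(\eta_i\), so the naive treated-versus-untreated comparison is biased, while the reduced form divided by the first stage lands near \(\rho = 1.5\) and equals the covariance ratio exactly.

```r
# Hypothetical constant-effect DGP with a binary instrument; true rho = 1.5.
set.seed(3)
n <- 1e5
eta <- rnorm(n)                                   # individual heterogeneity
z <- rbinom(n, 1, 0.5)                            # randomly assigned instrument
d <- as.integer(0.8 * z + eta + rnorm(n) > 0.5)   # take-up depends on z and eta
y <- 1 + 1.5 * d + eta                            # alpha = 1, rho = 1.5

reduced_form <- mean(y[z == 1]) - mean(y[z == 0])
first_stage  <- mean(d[z == 1]) - mean(d[z == 0])
rho_wald <- reduced_form / first_stage            # Wald estimator
rho_cov  <- cov(y, z) / cov(d, z)                 # identical for a binary z

naive <- mean(y[d == 1]) - mean(y[d == 0])        # biased: d is related to eta
c(naive = naive, wald = rho_wald, cov_ratio = rho_cov)
```

The equality of the two IV ratios is algebraic: with a binary \(Z_i\), both sample covariances are proportional to the corresponding difference in group means, with the same factor.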

34.2.3 Heterogeneous Treatment Effects and the LATE Framework

In a more general framework, treatment effects vary across individuals. Define potential outcomes as: \[ Y_i(d,z) = \text{outcome for unit } i \text{ given } D_i = d, Z_i = z. \]

Define treatment status based on \(Z_i\): \[ D_i = D_{0i} + Z_i (D_{1i} - D_{0i}). \]

where:

- \(D_{1i}\) is the treatment status when \(Z_i = 1\).

- \(D_{0i}\) is the treatment status when \(Z_i = 0\).

- \(D_{1i} - D_{0i}\) is the causal effect of \(Z_i\) on \(D_i\).

34.2.4 Assumptions for LATE Identification

34.2.4.1 Independence (Instrument Randomization)

The instrument must be as good as randomly assigned:

\[ [\{Y_i(d,z); \forall d, z \}, D_{1i}, D_{0i} ] \perp Z_i. \]

This ensures that \(Z_i\) is uncorrelated with potential outcomes and potential treatment status.

This assumption implies that the first stage equals the average causal effect of \(Z_i\) on \(D_i\):

\[ \begin{aligned} E[D_i |Z_i = 1] - E[D_i | Z_i = 0] &= E[D_{1i} |Z_i = 1] - E[D_{0i} |Z_i = 0] \\ &= E[D_{1i} - D_{0i}] \end{aligned} \]

This assumption is also sufficient for a causal interpretation of the reduced form, the effect of the instrument \(Z_i\) on the outcome \(Y_i\):

\[ E[Y_i | Z_i = 1] - E[Y_i | Z_i = 0] = E[Y_i(D_{1i}, 1) - Y_i(D_{0i}, 0)] \]

34.2.4.2 Exclusion Restriction

This is also known as the existence of the instrument assumption (G. W. Imbens and Angrist 1994). The instrument should only affect \(Y_i\) through \(D_i\) (i.e., the treatment \(D_i\) fully mediates the effect of \(Z_i\) on \(Y_i\)):

\[ \begin{aligned} Y_{1i} &= Y_i (1,1) = Y_i (1,0)\\ Y_{0i} &= Y_i (0,1) = Y_i (0,0) \end{aligned} \]

Under this assumption (and assuming \(Y_{1i}\) and \(Y_{0i}\) already satisfy the independence assumption), the observed outcome \(Y_i\) can be rewritten as:

\[ \begin{aligned} Y_i &= Y_i (0, Z_i) + [Y_i (1 , Z_i) - Y_i (0, Z_i)] D_i \\ &= Y_{0i} + (Y_{1i} - Y_{0i}) D_i. \end{aligned} \]

This assumption lets us go from reduced-form causal effects to treatment effects (J. D. Angrist and Imbens 1995).

34.2.4.3 Monotonicity (No Defiers)

We assume that \(Z_i\) affects \(D_i\) in a monotonic way:

\[ D_{1i} \geq D_{0i}, \quad \forall i. \]

- Together with a nonzero first stage, this assumption implies that the first stage measures the proportion of the population whose treatment status \(D_i\) is driven by \(Z_i\). It implies that \(Z_i\) only moves individuals toward treatment, never away. This rules out “defiers” (i.e., individuals who would take the treatment when not assigned but refuse it when assigned).

- This assumption solves the problem of units that would switch from participation back to non-participation when the instrument is switched on.

Alternatively, one can solve the same problem by assuming constant (homogeneous) treatment effect (G. W. Imbens and Angrist 1994), but this is rather restrictive.

A third solution is to assume that there exists a value of the instrument at which the probability of participation is 0 (J. Angrist and Imbens 1991).

Under monotonicity, \(D_{1i} - D_{0i}\) can only be 0 or 1, so its mean equals the share of compliers:

\[ \begin{aligned} E[D_{1i} - D_{0i} ] = P[D_{1i} > D_{0i}]. \end{aligned} \]

34.2.5 Local Average Treatment Effect Theorem

Given Independence, Exclusion, and Monotonicity, we obtain the LATE result (J. D. Angrist and Pischke 2009, 4.4.1):

\[ \begin{aligned} \frac{E[Y_i | Z_i = 1] - E[Y_i | Z_i = 0]}{E[D_i |Z_i = 1] - E[D_i |Z_i = 0]} = E[Y_{1i} - Y_{0i} | D_{1i} > D_{0i}]. \end{aligned} \]

This states that the IV estimator recovers the causal effect only for compliers (also called switchers), the units whose treatment status changes because of \(Z_i\). Units are classified as follows:

| Switcher Type | Compliance Type | Definition |
|---|---|---|
| Switchers | Compliers | \(D_{1i} > D_{0i}\) (take treatment if \(Z_i = 1\), not if \(Z_i = 0\)) |
| Non-switchers | Always-Takers | \(D_{1i} = D_{0i} = 1\) (always take treatment) |
| Non-switchers | Never-Takers | \(D_{1i} = D_{0i} = 0\) (never take treatment) |

- IV reveals nothing about treatment effects for always-takers and never-takers, because their treatment status is unaffected by \(Z_i\). This is similar to fixed-effects models, which identify effects only from units whose regressor varies.
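A small simulation makes the LATE result concrete. In this sketch the population shares and type-specific effects are hypothetical: compliers gain 1, always-takers 3, and never-takers 5, there are no defiers, and always-takers also have a higher baseline outcome. The Wald ratio lands near the complier effect of 1, not the population average effect of about 2.6.

```r
# Hypothetical population of compliers, always-takers and never-takers.
set.seed(4)
n <- 2e5
type <- sample(c("complier", "always", "never"), n, replace = TRUE,
               prob = c(0.5, 0.2, 0.3))
effect <- unname(c(complier = 1, always = 3, never = 5)[type])  # heterogeneous effects
z <- rbinom(n, 1, 0.5)                                           # randomized instrument
d <- ifelse(type == "always", 1, ifelse(type == "never", 0, z))  # monotone: no defiers

y0 <- rnorm(n) + 2 * (type == "always")   # always-takers differ at baseline
y1 <- y0 + effect
y  <- ifelse(d == 1, y1, y0)              # observed outcome

wald <- (mean(y[z == 1]) - mean(y[z == 0])) / (mean(d[z == 1]) - mean(d[z == 0]))
late <- mean(effect[type == "complier"])  # effect among compliers (= 1)
ate  <- mean(effect)                      # population average (about 2.6)
c(wald = wald, late = late, ate = ate)
```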

34.2.6 IV in Randomized Trials (Noncompliance)

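- In randomized trials with imperfect (voluntary) compliance, some individuals assigned to treatment do not take it. Comparing units by the treatment they actually received reintroduces selection bias, while intention-to-treat (ITT) estimates remain valid causal effects of assignment but are diluted by noncompliance.

- IV estimation using random assignment (\(Z_i\)) as an instrument for actual treatment received (\(D_i\)) recovers the LATE.

With one-sided noncompliance, units assigned to control cannot obtain treatment, so \(E[D_i | Z_i = 0] = 0\) and the first stage reduces to the compliance rate:

\[ \begin{aligned} \frac{E[Y_i |Z_i = 1] - E[Y_i |Z_i = 0]}{E[D_i |Z_i = 1]} = \frac{\text{Intent-to-Treat Effect}}{\text{Compliance Rate}} = E[Y_{1i} - Y_{0i} |D_i = 1]. \end{aligned} \]

In this case there are no always-takers, every treated unit is a complier, and LATE equals the effect of treatment on the treated (TOT). Under full compliance, \(D_i = Z_i\), so the IV estimate, the ITT effect, and the TOT all coincide.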
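The ITT-over-compliance scaling can be checked by simulation. This sketch assumes a hypothetical design with one-sided noncompliance: 60% of those offered treatment take it up, compliers gain 2, and compliers also have a higher baseline outcome. Dividing the ITT by the take-up rate recovers the effect on the treated, while the as-treated comparison is contaminated by self-selection.

```r
# Hypothetical randomized offer with one-sided noncompliance; effect on compliers = 2.
set.seed(5)
n <- 2e5
z <- rbinom(n, 1, 0.5)                       # random assignment (offer)
complier <- rbinom(n, 1, 0.6) == 1           # would take up treatment if offered
d <- as.integer(z == 1 & complier)           # controls cannot get treatment
y <- rnorm(n) + 1 * complier + 2 * d         # compliers also differ at baseline

itt <- mean(y[z == 1]) - mean(y[z == 0])     # intention-to-treat effect
compliance <- mean(d[z == 1])                # take-up among those offered
tot_iv <- itt / compliance                   # ITT scaled by the compliance rate

as_treated <- mean(y[d == 1]) - mean(y[d == 0])  # biased by self-selection
c(itt = itt, compliance = compliance, iv = tot_iv, as_treated = as_treated)
```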

34.3 Estimation

34.3.1 Two-Stage Least Squares Estimation

Two-Stage Least Squares (2SLS) is the most widely used IV estimator and is a special case of IV-GMM. Consider the structural equation:

\[ Y_i = X_i \beta + \varepsilon_i, \]

where \(X_i\) is endogenous. We introduce an instrument \(Z_i\) satisfying:

- Relevance: \(Z_i\) is correlated with \(X_i\).

- Exogeneity: \(Z_i\) is uncorrelated with \(\varepsilon_i\).

2SLS Steps

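First-Stage Regression: Predict \(X_i\) using the instrument: \[ X_i = \pi_0 + \pi_1 Z_i + v_i. \]

- Obtain fitted values \(\hat{X}_i = \hat{\pi}_0 + \hat{\pi}_1 Z_i\).

Second-Stage Regression: Use \(\hat{X}_i\) in place of \(X_i\): \[ Y_i = \beta_0 + \beta_1 \hat{X}_i + \varepsilon_i. \]

- The estimated \(\hat{\beta}_1\) is our IV estimator. Running the second stage by hand with OLS gives the correct point estimate but incorrect standard errors, because the OLS residuals are computed with \(\hat{X}_i\) instead of \(X_i\); dedicated IV routines correct for this.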
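The two steps can be written out in base R. This is a sketch on simulated data from a hypothetical data-generating process (true slope 2). It checks that the manual two-step estimate equals the matrix formula \((X'P_Z X)^{-1}X'P_Z Y\), and contrasts the valid homoskedastic standard error, built from residuals \(Y_i - X_i\hat{\beta}\) with the original \(X_i\), against the naive one reported by the second-stage `lm()`.

```r
# Hypothetical DGP: x is endogenous through the confounder u; true slope = 2.
set.seed(6)
n <- 10000
z <- rnorm(n)
u <- rnorm(n)
x <- 0.8 * z + u + rnorm(n)
y <- 1 + 2 * x + 2 * u + rnorm(n)

# Stage 1: regress x on z and keep the fitted values
x_hat <- fitted(lm(x ~ z))
# Stage 2: regress y on the fitted values (correct point estimate)
stage2 <- lm(y ~ x_hat)
b_two_step <- unname(coef(stage2))

# Same estimator in matrix form: (X' P_Z X)^{-1} X' P_Z y
X <- cbind(1, x)
Z <- cbind(1, z)
PzX <- Z %*% solve(crossprod(Z), crossprod(Z, X))          # P_Z X
b_2sls <- unname(drop(solve(crossprod(PzX, X), crossprod(PzX, y))))

# Valid (homoskedastic) SEs use residuals computed with the ORIGINAL x
e_iv <- y - drop(X %*% b_2sls)
sigma2 <- sum(e_iv^2) / (n - ncol(X))
se_2sls <- unname(sqrt(diag(sigma2 * solve(crossprod(PzX)))))
se_naive <- unname(coef(summary(stage2))[, "Std. Error"])
rbind(estimate = b_2sls, se_2sls = se_2sls, se_naive = se_naive)
```

Here the naive standard error is roughly twice the valid one, because the second-stage residuals also absorb \(\hat{\beta}_1 (X_i - \hat{X}_i)\).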

library(fixest)

base = iris

names(base) = c("y", "x1", "x_endo_1", "x_inst_1", "fe")

set.seed(2)

base$x_inst_2 = 0.2 * base$y + 0.2 * base$x_endo_1 + rnorm(150, sd = 0.5)

base$x_endo_2 = 0.2 * base$y - 0.2 * base$x_inst_1 + rnorm(150, sd = 0.5)

# IV Estimation

est_iv = feols(y ~ x1 | x_endo_1 + x_endo_2 ~ x_inst_1 + x_inst_2, base)

summary(est_iv)

#> TSLS estimation - Dep. Var.: y

#> Endo. : x_endo_1, x_endo_2

#> Instr. : x_inst_1, x_inst_2

#> Second stage: Dep. Var.: y

#> Observations: 150

#> Standard-errors: IID

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 1.831380 0.411435 4.45121 1.6844e-05 ***

#> fit_x_endo_1 0.444982 0.022086 20.14744 < 2.2e-16 ***

#> fit_x_endo_2 0.639916 0.307376 2.08186 3.9100e-02 *

#> x1 0.565095 0.084715 6.67051 4.9180e-10 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#> RMSE: 0.398842 Adj. R2: 0.725033

#> F-test (1st stage), x_endo_1: stat = 903.1628, p < 2.2e-16 , on 2 and 146 DoF.

#> F-test (1st stage), x_endo_2: stat = 3.2583, p = 0.041268, on 2 and 146 DoF.

#> Wu-Hausman: stat = 6.7918, p = 0.001518, on 2 and 144 DoF.

Diagnostic Tests

To assess instrument validity:

fitstat(est_iv, type = c("n", "f", "ivf", "ivf1", "ivf2", "ivwald", "cd"))

#> Observations: 150

#> F-test: stat = 131.9612, p < 2.2e-16 , on 3 and 146 DoF.

#> F-test (1st stage), x_endo_1: stat = 903.1628, p < 2.2e-16 , on 2 and 146 DoF.

#> F-test (1st stage), x_endo_2: stat = 3.2583, p = 0.041268, on 2 and 146 DoF.

#> F-test (2nd stage): stat = 194.1967, p < 2.2e-16 , on 2 and 146 DoF.

#> Wald (1st stage), x_endo_1 : stat = 903.1628, p < 2.2e-16 , on 2 and 146 DoF, VCOV: IID.

#> Wald (1st stage), x_endo_2 : stat = 3.2583, p = 0.041268, on 2 and 146 DoF, VCOV: IID.

#> Cragg-Donald: 3.11162

To set default printing

# always add second-stage Wald test

setFixest_print(fitstat = ~ . + ivwald2)

est_iv

To see results from different stages

# first-stage

summary(est_iv, stage = 1)

# second-stage

summary(est_iv, stage = 2)

# both stages

etable(summary(est_iv, stage = 1:2), fitstat = ~ . + ivfall + ivwaldall.p)

etable(summary(est_iv, stage = 2:1), fitstat = ~ . + ivfall + ivwaldall.p)

# .p means p-value, not statistic

# `all` means IV only

34.3.2 IV-GMM

The Generalized Method of Moments (GMM) provides a flexible estimation framework that generalizes the Instrumental Variables (IV) approach, including 2SLS as a special case. The key idea behind GMM is to use moment conditions derived from economic models to estimate parameters efficiently, even in the presence of endogeneity.

Consider the standard linear regression model:

\[ Y = X\beta + u, \quad u \sim (0, \Omega) \]

where:

- \(Y\) is an \(N \times 1\) vector of the dependent variable.

- \(X\) is an \(N \times k\) matrix of regressors, some of which are endogenous.

- \(\beta\) is a \(k \times 1\) vector of coefficients.

- \(u\) is an \(N \times 1\) vector of error terms.

- \(\Omega\) is the variance-covariance matrix of \(u\).

To address endogeneity in \(X\), we introduce an \(N \times l\) matrix of instruments, \(Z\), where \(l \geq k\). The moment conditions are then given by:

\[ E[Z_i' u_i] = E[Z_i' (Y_i - X_i \beta)] = 0. \]

In practice, these expectations are replaced by their sample analogs. The empirical moment conditions are given by:

\[ \bar{g}(\beta) = \frac{1}{N} \sum_{i=1}^{N} Z_i' (Y_i - X_i \beta) = \frac{1}{N} Z' (Y - X\beta). \]

GMM estimates \(\beta\) by minimizing a quadratic function of these sample moments.

34.3.2.1 IV and GMM Estimators

- Exactly Identified Case (\(l = k\))

When the number of instruments equals the number of regressors (\(l = k\), counting each exogenous regressor as its own instrument), the moment conditions uniquely determine \(\beta\). In this case, the IV estimator is:

\[ \hat{\beta}_{IV} = (Z'X)^{-1}Z'Y. \]

Here, the 2SLS estimator reduces to this classical IV estimator.

- Overidentified Case (\(l > k\))

When there are more instruments than regressors (\(l > k\)), the system has more moment conditions than parameters. In this case, we project \(X\) onto the instrument space:

\[ \hat{X} = Z(Z'Z)^{-1} Z' X = P_Z X. \]

The 2SLS estimator is then given by:

\[ \begin{aligned} \hat{\beta}_{2SLS} &= (\hat{X}'X)^{-1} \hat{X}' Y \\ &= (X'P_Z X)^{-1} X' P_Z Y. \end{aligned} \]

However, when errors are heteroskedastic or correlated, 2SLS does not optimally weight the instruments in the overidentified case (\(l > k\)). The IV-GMM approach resolves this issue.

34.3.2.2 IV-GMM Estimation

The GMM estimator is obtained by minimizing the objective function:

\[ J (\hat{\beta}_{GMM} ) = N \bar{g}(\hat{\beta}_{GMM})' W \bar{g} (\hat{\beta}_{GMM}), \]

where \(W\) is an \(l \times l\) symmetric weighting matrix.

For the IV-GMM estimator, solving the first-order conditions yields:

\[ \hat{\beta}_{GMM} = (X'ZWZ' X)^{-1} X'ZWZ'Y. \]

For any weighting matrix \(W\), this is a consistent estimator. The optimal choice of \(W\) is \(S^{-1}\), where \(S\) is the covariance matrix of the moment conditions:

\[ S = E[Z' u u' Z] = \lim_{N \to \infty} N^{-1} [Z' \Omega Z]. \]

A feasible estimator replaces \(S\) with its sample estimate from the 2SLS residuals:

\[ \hat{\beta}_{FEGMM} = (X'Z \hat{S}^{-1} Z' X)^{-1} X'Z \hat{S}^{-1} Z'Y. \]

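When \(\Omega\) satisfies standard assumptions:

- Errors are independently and identically distributed, so \(\Omega = \sigma_u^2 I_N\).

- Then \(S = \sigma_u^2 \lim_{N \to \infty} N^{-1} Z'Z\).

- The optimal weighting matrix is therefore proportional to \((Z'Z)^{-1}\).

Substituting \(W = (Z'Z)^{-1}\) gives \(\hat{\beta}_{GMM} = (X'P_Z X)^{-1} X'P_Z Y\), so the IV-GMM estimator simplifies to the standard IV (or 2SLS) estimator.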
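The link between IV-GMM and 2SLS can be checked numerically. In this sketch, `gmm_linear()` is a hypothetical helper implementing \(\hat{\beta}_{GMM} = (X'ZWZ'X)^{-1}X'ZWZ'Y\) for a given \(W\), applied to simulated data: with \(W \propto (Z'Z)^{-1}\) it reproduces 2SLS in an overidentified model, and in the exactly identified case any nonsingular \(W\) gives \((Z'X)^{-1}Z'Y\).

```r
# Linear IV-GMM for a fixed weighting matrix W (illustrative helper)
gmm_linear <- function(y, X, Z, W) {
  XZ <- crossprod(X, Z)                              # X'Z  (k x l)
  drop(solve(XZ %*% W %*% t(XZ), XZ %*% W %*% crossprod(Z, y)))
}

set.seed(7)
n <- 2000
z1 <- rnorm(n); z2 <- rnorm(n); u <- rnorm(n)
x <- 0.6 * z1 + 0.4 * z2 + u + rnorm(n)              # hypothetical DGP
y <- 1 + 2 * x + u + rnorm(n)
X <- cbind(1, x)
Z <- cbind(1, z1, z2)                                # l = 3 > k = 2: overidentified

# 2SLS: (X' P_Z X)^{-1} X' P_Z y
PzX <- Z %*% solve(crossprod(Z), crossprod(Z, X))
b_2sls <- drop(solve(crossprod(PzX, X), crossprod(PzX, y)))

# W proportional to (Z'Z)^{-1} reproduces 2SLS (the scale of W does not matter)
b_gmm <- gmm_linear(y, X, Z, W = 5 * solve(crossprod(Z)))

# Exactly identified: any nonsingular W gives (Z'X)^{-1} Z'y
Z_ji <- cbind(1, z1)
b_iv <- drop(solve(crossprod(Z_ji, X), crossprod(Z_ji, y)))
b_gmm_identity <- gmm_linear(y, X, Z_ji, W = diag(2))

rbind(tsls = b_2sls, gmm = b_gmm, iv = b_iv, gmm_identity = b_gmm_identity)
```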

Comparison of 2SLS and IV-GMM

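| Feature | 2SLS | IV-GMM |
|---|---|---|
| Instrument usage | Uses all available instruments, combined through the projection \(P_Z\) | Uses all available instruments |
| Weighting | Fixed weighting matrix \(W \propto (Z'Z)^{-1}\) | Estimated optimal weighting matrix \(W = \hat{S}^{-1}\) |
| Efficiency | Efficient under homoskedasticity; suboptimal in overidentified models with heteroskedastic or clustered errors | Efficient when \(W = S^{-1}\) |
| Overidentification test | Sargan test (valid under homoskedasticity) | Hansen’s \(J\)-test (robust to heteroskedasticity) |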

Key Takeaways:

- Prefer IV-GMM when the model is overidentified (\(l > k\)) and errors are heteroskedastic or clustered.

- 2SLS is a special case of IV-GMM when the weighting matrix is proportional to \((Z'Z)^{-1}\).

- IV-GMM improves efficiency by optimally weighting the moment conditions.

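# Standard two-step IV-GMM (gmm package): unlike the fixest example, x1 is
# treated as endogenous here and instrumented by x_inst_1 and x_inst_2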

library(gmm)

gmm_model <- gmm(y ~ x1, ~ x_inst_1 + x_inst_2, data = base)

summary(gmm_model)

#>

#> Call:

#> gmm(g = y ~ x1, x = ~x_inst_1 + x_inst_2, data = base)

#>

#>

#> Method: twoStep

#>

#> Kernel: Quadratic Spectral(with bw = 0.72368 )

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 1.4385e+01 1.8960e+00 7.5871e+00 3.2715e-14

#> x1 -2.7506e+00 6.2101e-01 -4.4292e+00 9.4584e-06

#>

#> J-Test: degrees of freedom is 1

#> J-test P-value

#> Test E(g)=0: 7.9455329 0.0048206

#>

#> Initial values of the coefficients

#> (Intercept) x1

#> 16.117875 -3.360622

34.3.2.3 Overidentification Test: Hansen’s \(J\)-Statistic

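A key advantage of IV-GMM is that, in overidentified models, it allows testing of instrument validity through Hansen’s \(J\)-test (also known as the Sargan-Hansen test of overidentifying restrictions). A related statistic, Hayashi’s \(C\)-statistic (the GMM distance or difference-in-Sargan test), tests the validity of a subset of instruments. The \(J\) test statistic is: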

\[ J = N \bar{g}(\hat{\beta}_{GMM})' \hat{S}^{-1} \bar{g} (\hat{\beta}_{GMM}), \]

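which, under the null hypothesis that all instruments are valid, follows asymptotically a \(\chi^2\) distribution with degrees of freedom equal to the number of overidentifying restrictions (\(l - k\)). A significant \(J\)-statistic suggests that at least one instrument may be invalid (or that the model is misspecified).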
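A two-step version with the \(J\) statistic fits in a few lines of base R. This sketch assumes heteroskedasticity-robust moments, \(\hat{S} = N^{-1}\sum_i \hat{u}_i^2 Z_i'Z_i\) computed from first-step 2SLS residuals; `two_step_gmm()` and the simulated data are hypothetical. In the second outcome, `z2` also affects the outcome directly, which violates the exclusion restriction and should produce a large \(J\).

```r
# Two-step efficient IV-GMM with Hansen's J (illustrative helper)
two_step_gmm <- function(y, X, Z) {
  n <- nrow(Z)
  gmm_w <- function(W) {                         # (X'Z W Z'X)^{-1} X'Z W Z'y
    XZ <- crossprod(X, Z)
    drop(solve(XZ %*% W %*% t(XZ), XZ %*% W %*% crossprod(Z, y)))
  }
  b1 <- gmm_w(solve(crossprod(Z)))               # step 1: 2SLS
  u1 <- drop(y - X %*% b1)
  S  <- crossprod(Z * u1) / n                    # (1/N) sum u_i^2 Z_i' Z_i
  b2 <- gmm_w(solve(S))                          # step 2: W = S^{-1}
  g_bar <- colMeans(Z * drop(y - X %*% b2))      # sample moments at b2
  J  <- n * drop(t(g_bar) %*% solve(S) %*% g_bar)
  df <- ncol(Z) - ncol(X)                        # overidentifying restrictions
  list(coef = b2, J = J, df = df, p = pchisq(J, df, lower.tail = FALSE))
}

set.seed(8)
n <- 5000
z1 <- rnorm(n); z2 <- rnorm(n); u <- rnorm(n)
x <- 0.6 * z1 + 0.6 * z2 + u + rnorm(n)
e <- rnorm(n) * (1 + abs(z1))                    # heteroskedastic noise
y_valid   <- 1 + 2 * x + u + e                   # both instruments satisfy exclusion
y_invalid <- y_valid + z2                        # z2 also affects y directly
X <- cbind(1, x); Z <- cbind(1, z1, z2)

fit_valid   <- two_step_gmm(y_valid, X, Z)
fit_invalid <- two_step_gmm(y_invalid, X, Z)
rbind(valid = c(J = fit_valid$J, p = fit_valid$p),
      invalid = c(J = fit_invalid$J, p = fit_invalid$p))
```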

34.3.2.4 Cluster-Robust Standard Errors

In empirical applications, errors often exhibit heteroskedasticity or intra-group correlation (clustering), violating the assumption of independently and identically distributed errors. Standard IV-GMM estimators remain consistent, but they are not efficient if clustering is ignored, and their conventional standard errors are invalid.

To address this, we adjust the GMM weighting matrix by incorporating cluster-robust variance estimation. Specifically, the covariance matrix of the moment conditions \(S\) is estimated as:

\[ \hat{S} = \frac{1}{N} \sum_{c=1}^{C} \left( \sum_{i \in c} Z_i' u_i \right) \left( \sum_{i \in c} Z_i' u_i \right)', \]

where:

\(C\) is the number of clusters,

\(i \in c\) represents observations belonging to cluster \(c\),

\(u_i\) is the residual for observation \(i\),

\(Z_i\) is the vector of instruments.

Using this robust weighting matrix in the second step, we compute a clustered two-step GMM estimator that remains consistent and is efficient under within-cluster correlation; inference should then also use a cluster-robust variance.
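The clustered \(\hat{S}\) above can be computed directly with `rowsum()`, which adds up \(Z_i'\hat{u}_i\) within each cluster. This is a sketch on simulated data with a hypothetical cluster-level error component. It uses 2SLS residuals in the first step and reports standard errors from \((\hat{G}'\hat{S}^{-1}\hat{G})^{-1}/N\) with \(\hat{G} = N^{-1}Z'X\), which are valid for the efficient estimator as the number of clusters grows.

```r
# Hypothetical clustered data: 100 clusters of 20 observations
set.seed(9)
n_cl <- 100; m <- 20; n <- n_cl * m
cl <- rep(seq_len(n_cl), each = m)
a  <- rnorm(n_cl)[cl]                          # shared shock within each cluster
z1 <- rnorm(n); z2 <- rnorm(n); u <- rnorm(n)
x  <- 0.6 * z1 + 0.6 * z2 + u + rnorm(n)
y  <- 1 + 2 * x + u + a + rnorm(n)             # errors correlated within clusters
X  <- cbind(1, x); Z <- cbind(1, z1, z2)

gmm_w <- function(W) {                         # (X'Z W Z'X)^{-1} X'Z W Z'y
  XZ <- crossprod(X, Z)
  drop(solve(XZ %*% W %*% t(XZ), XZ %*% W %*% crossprod(Z, y)))
}

b1 <- gmm_w(solve(crossprod(Z)))               # step 1: 2SLS
u1 <- drop(y - X %*% b1)

# S_hat = (1/N) sum_c (sum_{i in c} Z_i' u_i)(sum_{i in c} Z_i' u_i)'
score_c <- rowsum(Z * u1, cl)                  # one row per cluster
S_cl <- crossprod(score_c) / n

b2 <- gmm_w(solve(S_cl))                       # step 2: W = S_hat^{-1}

G_hat <- crossprod(Z, X) / n                   # (1/N) Z'X
V <- solve(t(G_hat) %*% solve(S_cl) %*% G_hat) / n
cbind(estimate = b2, cluster_se = sqrt(diag(V)))
```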

# Load required packages

library(gmm)

library(dplyr)

library(MASS) # For generalized inverse if needed

# General IV-GMM function with clustering
gmmcl <- function(formula, instruments, data, cluster_var, lambda = 1e-6) {
  # Ensure cluster_var exists in data
  if (!(cluster_var %in% colnames(data))) {
    stop("Error: Cluster variable not found in data.")
  }

  # Step 1: Initial GMM estimation (identity weighting matrix)
  initial_gmm <- gmm(formula, instruments, data = data, vcov = "TrueFixed",
                     weightsMatrix = diag(ncol(model.matrix(instruments, data))))

  # Extract residuals
  u_hat <- residuals(initial_gmm)

  # Matrix of instruments
  Z <- model.matrix(instruments, data)

  # Ensure clusters are treated as a factor
  data[[cluster_var]] <- as.factor(data[[cluster_var]])

  # Compute clustered weighting matrix
  cluster_groups <- split(seq_along(u_hat), data[[cluster_var]])

  # Remove empty clusters (if any)
  cluster_groups <- cluster_groups[lengths(cluster_groups) > 0]

  # Initialize cluster-based covariance matrix
  S_cluster <- matrix(0, ncol(Z), ncol(Z)) # Zero matrix

  # Compute clustered weight matrix
  for (indices in cluster_groups) {
    if (length(indices) > 0) { # Ensure valid clusters
      u_cluster <- matrix(u_hat[indices], ncol = 1) # Convert to column matrix
      Z_cluster <- Z[indices, , drop = FALSE] # Keep matrix form
      S_cluster <- S_cluster + t(Z_cluster) %*% (u_cluster %*% t(u_cluster)) %*% Z_cluster
    }
  }

  # Normalize by sample size
  S_cluster <- S_cluster / nrow(data)

  # Ensure S_cluster is invertible
  S_cluster <- S_cluster + lambda * diag(ncol(S_cluster)) # Regularization

  # Compute inverse or generalized inverse if needed
  if (qr(S_cluster)$rank < ncol(S_cluster)) {
    S_cluster_inv <- ginv(S_cluster) # Use generalized inverse (MASS package)
  } else {
    S_cluster_inv <- solve(S_cluster)
  }

  # Step 2: GMM estimation using clustered weighting matrix
  final_gmm <- gmm(formula, instruments, data = data, vcov = "TrueFixed",
                   weightsMatrix = S_cluster_inv)

  return(final_gmm)
}

# Example: Simulated Data for IV-GMM with Clustering

set.seed(123)

n <- 200 # Total observations

C <- 50 # Number of clusters

data <- data.frame(

cluster = rep(1:C, each = n / C), # Cluster variable

z1 = rnorm(n),

z2 = rnorm(n),

x1 = rnorm(n),

y1 = rnorm(n)

)

data$x1 <- data$z1 + data$z2 + rnorm(n) # Regressor driven by the instruments (not actually correlated with the outcome error in this simulation)

data$y1 <- data$x1 + rnorm(n) # Outcome variable

# Run standard IV-GMM (without clustering)

gmm_results_standard <- gmm(y1 ~ x1, ~ z1 + z2, data = data)

# Run IV-GMM with clustering

gmm_results_clustered <- gmmcl(y1 ~ x1, ~ z1 + z2, data = data, cluster_var = "cluster")

# Display results for comparison

summary(gmm_results_standard)

#>

#> Call:

#> gmm(g = y1 ~ x1, x = ~z1 + z2, data = data)

#>

#>

#> Method: twoStep

#>

#> Kernel: Quadratic Spectral(with bw = 1.09893 )

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.4919e-02 6.5870e-02 6.8193e-01 4.9528e-01

#> x1 9.8409e-01 4.4215e-02 2.2257e+01 9.6467e-110

#>

#> J-Test: degrees of freedom is 1

#> J-test P-value

#> Test E(g)=0: 1.6171 0.2035

#>

#> Initial values of the coefficients

#> (Intercept) x1

#> 0.05138658 0.98580796

summary(gmm_results_clustered)

#>

#> Call:

#> gmm(g = formula, x = instruments, vcov = "TrueFixed", weightsMatrix = S_cluster_inv,

#> data = data)

#>

#>

#> Method: One step GMM with fixed W

#>

#> Kernel: Quadratic Spectral

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.9082e-02 7.0878e-05 6.9249e+02 0.0000e+00

#> x1 9.8238e-01 5.2798e-05 1.8606e+04 0.0000e+00

#>

#> J-Test: degrees of freedom is 1

#> J-test P-value

#> Test E(g)=0: 1247099 0

34.3.3 Limited Information Maximum Likelihood

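LIML is an alternative to 2SLS that tends to be less biased when instruments are weak, especially when there are many of them. It belongs to the k-class of estimators:

\[ \hat{\beta}(\kappa) = [X'(I - \kappa M_Z) X]^{-1} X'(I - \kappa M_Z) Y, \qquad M_Z = I - P_Z, \]

where \(\kappa = 0\) gives OLS and \(\kappa = 1\) gives 2SLS. LIML sets \(\kappa\) to the smallest eigenvalue that solves the least-variance-ratio problem

\[ \hat{\kappa}_{LIML} = \min_{b} \frac{(Y - X_2 b)' M_{Z_1} (Y - X_2 b)}{(Y - X_2 b)' M_{Z} (Y - X_2 b)}, \]

where \(X_2\) contains the endogenous regressors and \(Z_1\) the included exogenous regressors. Because the columns of \(Z_1\) are part of \(Z\), \(\hat{\kappa}_{LIML} \geq 1\), with equality (so that LIML coincides with 2SLS) in the exactly identified case.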
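LIML can be computed as a k-class estimator, \(\hat{\beta}(\kappa) = [X'(I - \kappa M_Z)X]^{-1}X'(I - \kappa M_Z)Y\), where \(\kappa\) is the smallest eigenvalue of \((W'M_Z W)^{-1} W'M_{Z_1}W\) with \(W = [Y, X_2]\). The base-R sketch below uses hypothetical simulated data; `liml()` and `resid_on()` are illustrative helpers, not package functions. A Cholesky transform turns the eigenproblem into a symmetric one. In the exactly identified model \(\kappa = 1\) and LIML matches IV; with two instruments \(\kappa \geq 1\).

```r
# M_A B: residuals from regressing each column of B on A (illustrative helper)
resid_on <- function(A, B) B - A %*% solve(crossprod(A), crossprod(A, B))

# LIML as a k-class estimator (illustrative helper, not a package function)
liml <- function(y, x_endo, Z1, Z) {
  W <- cbind(y, x_endo)                            # [Y, X_2]
  A <- crossprod(W, resid_on(Z, W))                # W' M_Z W
  B <- crossprod(W, resid_on(Z1, W))               # W' M_Z1 W
  Ri <- solve(chol(A))                             # A = R'R, Ri = R^{-1}
  # eigenvalues of A^{-1} B equal those of the symmetric R^{-T} B R^{-1}
  kappa <- min(eigen(t(Ri) %*% B %*% Ri, symmetric = TRUE)$values)
  X <- cbind(Z1, x_endo)
  MX <- resid_on(Z, X)                             # M_Z X
  lhs <- crossprod(X) - kappa * crossprod(X, MX)   # X'(I - kappa M_Z) X
  rhs <- crossprod(X, y) - kappa * crossprod(MX, y)  # X'(I - kappa M_Z) y
  list(kappa = kappa, coef = drop(solve(lhs, rhs)))
}

set.seed(10)
n <- 3000
z1 <- rnorm(n); z2 <- rnorm(n); u <- rnorm(n)
x <- 0.3 * z1 + 0.3 * z2 + u + rnorm(n)            # hypothetical DGP
y <- 1 + 2 * x + u + rnorm(n)
Z1 <- matrix(1, n, 1)                              # included exogenous: intercept

fit_just <- liml(y, x, Z1, cbind(1, z1))           # exactly identified
X <- cbind(1, x); Zj <- cbind(1, z1)
b_iv <- drop(solve(crossprod(Zj, X), crossprod(Zj, y)))

fit_over <- liml(y, x, Z1, cbind(1, z1, z2))       # overidentified
c(kappa_just = fit_just$kappa, kappa_over = fit_over$kappa)
```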

34.3.4 Jackknife IV

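JIVE reduces the small-sample bias of 2SLS by leaving each observation out when estimating its first-stage fitted value, and then using these fitted values as instruments:

\[ \begin{aligned} \hat{X}_i^{(-i)} &= Z_i (Z_{-i}'Z_{-i})^{-1} Z_{-i}'X_{-i}, \\ \hat{\beta}_{JIVE} &= (\hat{X}^{(-i)\prime} X)^{-1} \hat{X}^{(-i)\prime} Y, \end{aligned} \]

where \(\hat{X}^{(-i)}\) stacks the leave-one-out fitted values \(\hat{X}_i^{(-i)}\).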
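JIVE uses leave-one-out first-stage fitted values as instruments, \(\hat{\beta}_{JIVE} = (\hat{X}'X)^{-1}\hat{X}'Y\). Refitting the first stage \(N\) times is unnecessary: the leave-one-out fit equals \((\hat{X}_i - h_i X_i)/(1 - h_i)\), where \(h_i\) is the first-stage leverage. The sketch below (hypothetical simulated data; `jive()` is an illustrative helper) uses this identity and checks it against a brute-force loop.

```r
# JIVE (illustrative helper): leave-one-out first-stage fits used as instruments
jive <- function(y, X, Z) {
  h <- rowSums((Z %*% solve(crossprod(Z))) * Z)        # leverages h_i
  X_fit <- Z %*% solve(crossprod(Z), crossprod(Z, X))  # full-sample fitted X
  X_loo <- (X_fit - h * X) / (1 - h)                   # leave-one-out fitted X
  drop(solve(crossprod(X_loo, X), crossprod(X_loo, y)))
}

set.seed(11)
n <- 200
z1 <- rnorm(n); z2 <- rnorm(n); u <- rnorm(n)
x <- 0.5 * z1 + 0.5 * z2 + u + rnorm(n)                # hypothetical DGP
y <- 1 + 2 * x + u + rnorm(n)
X <- cbind(1, x); Z <- cbind(1, z1, z2)

b_jive <- jive(y, X, Z)

# Brute-force check: refit the first stage n times, dropping unit i each time
X_loo_bf <- t(sapply(seq_len(n), function(i) {
  pi_i <- solve(crossprod(Z[-i, ]), crossprod(Z[-i, ], X[-i, ]))
  drop(Z[i, , drop = FALSE] %*% pi_i)
}))
b_bf <- drop(solve(crossprod(X_loo_bf, X), crossprod(X_loo_bf, y)))
rbind(jive = b_jive, brute_force = b_bf)
```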

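library(AER)

# Caution: AER::ivreg() does not implement JIVE. `method = "jive"` is passed
# through to lm.fit(), which warns that the method is unsupported and falls
# back to QR, so the output below corresponds to ordinary 2SLS.
jive_model = ivreg(y ~ x_endo_1 | x_inst_1, data = base, method = "jive")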

summary(jive_model)

#>

#> Call:

#> ivreg(formula = y ~ x_endo_1 | x_inst_1, data = base, method = "jive")

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -1.2390 -0.3022 -0.0206 0.2772 1.0039

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.34586 0.08096 53.68 <2e-16 ***

#> x_endo_1 0.39848 0.01964 20.29 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 0.4075 on 148 degrees of freedom

#> Multiple R-Squared: 0.7595, Adjusted R-squared: 0.7578

#> Wald test: 411.6 on 1 and 148 DF, p-value: < 2.2e-16

34.3.5 Control Function Approach

The Control Function (CF) approach, also known as two-stage residual inclusion (2SRI), is a method used to address endogeneity in regression models. This approach is particularly suited for models with nonadditive errors, such as discrete choice models or cases where both the endogenous variable and the outcome are binary.

The control function approach is particularly useful in:

- Binary outcome and binary endogenous variable models:

- In rare events, the second stage typically uses a logistic model (E. Tchetgen Tchetgen 2014).

- In non-rare events, a risk ratio regression is often more appropriate.

- Marketing applications:

- Used in consumer choice models to account for endogeneity in demand estimation (Petrin and Train 2010).

The general model setup is:

\[ Y = g(X) + U \]

\[ X = \pi(Z) + V \]

with the key assumptions:

Conditional mean independence:

\[E(U |Z,V) = E(U|V)\]

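This implies that once we control for \(V\), the instrumental variable \(Z\) carries no additional information about \(U\).

First-stage mean independence:

\[E(V|Z) = 0\]

This holds by construction when \(\pi(Z) = E(X|Z)\). For \(Z\) to be a relevant instrument for \(X\), \(\pi(Z)\) must also vary with \(Z\).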

Under the control function approach, the expectation of \(Y\) conditional on \((Z,V)\) can be rewritten as:

\[ E(Y|Z,V) = g(X) + E(U|Z,V) = g(X) + E(U|V) = g(X) + h(V). \]

Here, \(h(V)\) is the control function that captures endogeneity through the first-stage residuals.

34.3.5.1 Implementation

Rather than replacing the endogenous variable \(X_i\) with its predicted value \(\hat{X}_i\), the CF approach explicitly incorporates the residuals from the first-stage regression:

Stage 1: Estimate First-Stage Residuals

Estimate the endogenous variable using its instrumental variables:

\[ X_i = Z_i \pi + v_i. \]

Obtain the residuals:

\[ \hat{v}_i = X_i - Z_i \hat{\pi}. \]

Stage 2: Include Residuals in Outcome Equation

Regress the outcome variable on \(X_i\) and the first-stage residuals:

\[ Y_i = X_i \beta + \gamma \hat{v}_i + \varepsilon_i. \]

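If endogeneity is present, \(\gamma \neq 0\); if \(\gamma = 0\), \(X\) is exogenous. A t-test of \(\gamma = 0\) is therefore a regression-based test of endogeneity (the Durbin-Wu-Hausman test). Because \(\hat{v}_i\) is itself estimated, the usual second-stage standard errors are not valid and should be corrected analytically or by bootstrapping both stages.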
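The two stages above can be sketched in base R. This assumes a hypothetical linear data-generating process with a single instrument; `cf_fit()` is an illustrative helper. In this linear, just-identified setting the control-function coefficient on `x` is numerically identical to the IV slope \(\text{Cov}(Z, Y)/\text{Cov}(Z, X)\), and the bootstrap re-runs both stages on each resample because \(\hat{v}_i\) is estimated.

```r
# Control function estimate (illustrative helper): stage-1 residuals enter stage 2
cf_fit <- function(d) {
  v_hat <- resid(lm(x ~ z, data = d))                       # stage 1 residuals
  coef(lm(y ~ x + v_hat, data = cbind(d, v_hat = v_hat)))   # stage 2
}

set.seed(12)
n <- 1000
u <- rnorm(n)                                  # unobserved confounder
dat <- data.frame(z = rnorm(n))
dat$x <- dat$z + u + rnorm(n)                  # endogenous regressor
dat$y <- 1 + 2 * dat$x + 2 * u + rnorm(n)      # true slope = 2

b_cf <- cf_fit(dat)
b_2sls <- cov(dat$z, dat$y) / cov(dat$z, dat$x)  # just-identified IV slope

# Bootstrap: resample rows and re-run BOTH stages, since v_hat is estimated
boot <- replicate(200, cf_fit(dat[sample.int(n, replace = TRUE), ]))
se_boot <- apply(boot, 1, sd)
rbind(estimate = b_cf, bootstrap_se = se_boot)
```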

34.3.5.2 Comparison to Two-Stage Least Squares

The control function method differs from 2SLS depending on whether the model is linear or nonlinear:

- Linear Endogenous Variables:

- When the model is linear in \(X\) and the first stage is linear, the CF estimate of \(\beta\) is numerically identical to 2SLS; only the naive second-stage standard errors differ.

- Nonlinear Endogenous Variables:

- If \(X\) is nonlinear (e.g., a binary treatment), CF differs from 2SLS and often performs better.

- Nonlinear in Parameters:

- In models where \(g(X)\) is nonlinear (e.g., logit/probit models), CF is typically superior to 2SLS because it explicitly models endogeneity via the control function \(h(V)\).

library(fixest)

library(tidyverse)

library(modelsummary)

# Set the seed for reproducibility

set.seed(123)

n = 1000

# Generate the exogenous variable from a normal distribution

exogenous <- rnorm(n, mean = 5, sd = 1)

# Generate the omitted variable (independent of the exogenous variable)

omitted <- rnorm(n, mean = 2, sd = 1)

# Generate the endogenous variable as a function of the omitted variable and the exogenous variable

endogenous <- 5 * omitted + 2 * exogenous + rnorm(n, mean = 0, sd = 1)

# nonlinear endogenous variable

endogenous_nonlinear <- 5 * omitted^2 + 2 * exogenous + rnorm(n, mean = 0, sd = 1)

unrelated <- rexp(n, rate = 1)

# Generate the response variable as a function of the endogenous variable and the omitted variable

response <- 4 + 3 * endogenous + 6 * omitted + rnorm(n, mean = 0, sd = 1)

response_nonlinear <- 4 + 3 * endogenous_nonlinear + 6 * omitted + rnorm(n, mean = 0, sd = 1)

response_nonlinear_para <- 4 + 3 * endogenous ^ 2 + 6 * omitted + rnorm(n, mean = 0, sd = 1)

# Combine the variables into a data frame

my_data <-

data.frame(

exogenous,

omitted,

endogenous,

response,

unrelated,

endogenous_nonlinear,

response_nonlinear,

response_nonlinear_para

)

# View the first few rows of the data frame

# head(my_data)

wo_omitted <- feols(response ~ endogenous + sw0(unrelated), data = my_data)

w_omitted <- feols(response ~ endogenous + omitted + unrelated, data = my_data)

# ivreg::ivreg(response ~ endogenous + unrelated | exogenous, data = my_data)

iv <- feols(response ~ 1 + sw0(unrelated) | endogenous ~ exogenous, data = my_data)

etable(

wo_omitted,

w_omitted,

iv,

digits = 2

# vcov = list("each", "iid", "hetero")

)

#> wo_omitted.1 wo_omitted.2 w_omitted iv.1

#> Dependent Var.: response response response response

#>

#> Constant -3.8*** (0.30) -3.6*** (0.31) 3.9*** (0.16) 12.0*** (1.4)

#> endogenous 4.0*** (0.01) 4.0*** (0.01) 3.0*** (0.01) 3.2*** (0.07)

#> unrelated -0.14. (0.08) -0.02 (0.03)

#> omitted 6.0*** (0.08)

#> _______________ ______________ ______________ _____________ _____________

#> S.E. type IID IID IID IID

#> Observations 1,000 1,000 1,000 1,000

#> R2 0.98756 0.98760 0.99817 0.11406

#> Adj. R2 0.98755 0.98757 0.99816 0.11317

#>

#> iv.2

#> Dependent Var.: response

#>

#> Constant 12.2*** (1.4)

#> endogenous 3.2*** (0.07)

#> unrelated -0.28. (0.16)

#> omitted

#> _______________ _____________

#> S.E. type IID

#> Observations 1,000

#> R2 0.11608

#> Adj. R2 0.11430

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Linear in parameter and linear in endogenous variable

# manual

# 2SLS (correct point estimate; the lm() standard errors from a manual second stage are not valid)

first_stage = lm(endogenous ~ exogenous, data = my_data)

new_data = cbind(my_data, new_endogenous = predict(first_stage, my_data))

second_stage = lm(response ~ new_endogenous, data = new_data)

summary(second_stage)

#>

#> Call:

#> lm(formula = response ~ new_endogenous, data = new_data)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -68.126 -14.949 0.608 15.099 73.842

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 11.9910 5.7671 2.079 0.0379 *

#> new_endogenous 3.2097 0.2832 11.335 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 21.49 on 998 degrees of freedom

#> Multiple R-squared: 0.1141, Adjusted R-squared: 0.1132

#> F-statistic: 128.5 on 1 and 998 DF, p-value: < 2.2e-16

new_data_cf = cbind(my_data, residual = resid(first_stage))

second_stage_cf = lm(response ~ endogenous + residual, data = new_data_cf)

summary(second_stage_cf)

#>

#> Call:

#> lm(formula = response ~ endogenous + residual, data = new_data_cf)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -5.1039 -1.0065 0.0247 0.9480 4.2521

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 11.99102 0.39849 30.09 <2e-16 ***

#> endogenous 3.20974 0.01957 164.05 <2e-16 ***

#> residual 0.95036 0.02159 44.02 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 1.485 on 997 degrees of freedom

#> Multiple R-squared: 0.9958, Adjusted R-squared: 0.9958

#> F-statistic: 1.175e+05 on 2 and 997 DF, p-value: < 2.2e-16

modelsummary(list(second_stage, second_stage_cf))

| | (1) | (2) |
|---|---|---|
| (Intercept) | 11.991 | 11.991 |
| | (5.767) | (0.398) |
| new_endogenous | 3.210 | |
| | (0.283) | |
| endogenous | | 3.210 |
| | | (0.020) |
| residual | | 0.950 |
| | | (0.022) |
| Num.Obs. | 1000 | 1000 |
| R2 | 0.114 | 0.996 |
| R2 Adj. | 0.113 | 0.996 |
| AIC | 8977.0 | 3633.5 |
| BIC | 8991.8 | 3653.2 |
| Log.Lik. | -4485.516 | -1812.768 |
| F | 128.483 | 117473.460 |
| RMSE | 21.47 | 1.48 |
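The manual second stage reproduces the 2SLS point estimate, but the standard errors that `lm()` reports for it are not 2SLS standard errors: they are built from residuals that use the fitted endogenous variable instead of the actual one. The sketch below, on simulated data (the data-generating process and the helper name `manual_2sls_se()` are assumptions for illustration), recomputes the homoskedastic 2SLS standard error from the structural residuals \(y - X\hat{\beta}\).

```r
# Hypothetical helper: manual 2SLS with homoskedastic SEs computed from the
# structural residuals y - X b (actual x), not from y - X_hat b (fitted x).
manual_2sls_se <- function(y, x, z) {
  X      <- cbind(1, x)                   # regressors with the actual x
  x_hat  <- fitted(lm(x ~ z))             # first-stage fitted values
  X_hat  <- cbind(1, x_hat)               # regressors used in the second stage
  b      <- solve(crossprod(X_hat), crossprod(X_hat, y))
  u      <- drop(y - X %*% b)             # structural residuals
  sigma2 <- sum(u^2) / (length(y) - ncol(X))
  list(coef       = drop(b),
       se_correct = sqrt(diag(sigma2 * solve(crossprod(X_hat)))),
       se_naive   = unname(summary(lm(y ~ x_hat))$coefficients[, "Std. Error"]))
}

set.seed(1)
n <- 1000
z <- rnorm(n); e <- rnorm(n)
x <- 0.5 * z + e + rnorm(n)               # x is endogenous (correlated with e)
y <- 1 + 3 * x + 2 * e
res <- manual_2sls_se(y, x, z)
res
```

The point estimates are identical to the naive second stage; only the standard errors change.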

Nonlinear in endogenous variable

# 2SLS

first_stage = lm(endogenous_nonlinear ~ exogenous, data = my_data)

new_data = cbind(my_data, new_endogenous_nonlinear = predict(first_stage, my_data))

second_stage = lm(response_nonlinear ~ new_endogenous_nonlinear, data = new_data)

summary(second_stage)

#>

#> Call:

#> lm(formula = response_nonlinear ~ new_endogenous_nonlinear, data = new_data)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -101.26 -53.01 -13.50 39.33 376.16

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 11.7539 21.6478 0.543 0.587

#> new_endogenous_nonlinear 3.1253 0.5993 5.215 2.23e-07 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 70.89 on 998 degrees of freedom

#> Multiple R-squared: 0.02653, Adjusted R-squared: 0.02555

#> F-statistic: 27.2 on 1 and 998 DF, p-value: 2.234e-07

new_data_cf = cbind(my_data, residual = resid(first_stage))

second_stage_cf = lm(response_nonlinear ~ endogenous_nonlinear + residual, data = new_data_cf)

summary(second_stage_cf)

#>

#> Call:

#> lm(formula = response_nonlinear ~ endogenous_nonlinear + residual,

#> data = new_data_cf)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -12.8559 -0.8337 0.4429 1.3432 4.3147

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 11.75395 0.67012 17.540 < 2e-16 ***

#> endogenous_nonlinear 3.12525 0.01855 168.469 < 2e-16 ***

#> residual 0.13577 0.01882 7.213 1.08e-12 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 2.194 on 997 degrees of freedom

#> Multiple R-squared: 0.9991, Adjusted R-squared: 0.9991

#> F-statistic: 5.344e+05 on 2 and 997 DF, p-value: < 2.2e-16

modelsummary(list(second_stage, second_stage_cf))

| | (1) | (2) |
|---|---|---|
| (Intercept) | 11.754 | 11.754 |
| | (21.648) | (0.670) |
| new_endogenous_nonlinear | 3.125 | |
| | (0.599) | |
| endogenous_nonlinear | | 3.125 |
| | | (0.019) |
| residual | | 0.136 |
| | | (0.019) |
| Num.Obs. | 1000 | 1000 |
| R2 | 0.027 | 0.999 |
| R2 Adj. | 0.026 | 0.999 |
| AIC | 11364.2 | 4414.7 |
| BIC | 11378.9 | 4434.4 |
| Log.Lik. | -5679.079 | -2203.371 |
| F | 27.196 | 534439.006 |
| RMSE | 70.82 | 2.19 |

Nonlinear in parameters

# 2SLS

first_stage = lm(endogenous ~ exogenous, data = my_data)

new_data = cbind(my_data, new_endogenous = predict(first_stage, my_data))

second_stage = lm(response_nonlinear_para ~ new_endogenous, data = new_data)

summary(second_stage)

#>

#> Call:

#> lm(formula = response_nonlinear_para ~ new_endogenous, data = new_data)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -1402.34 -462.21 -64.22 382.35 3090.62

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) -1137.875 173.811 -6.547 9.4e-11 ***

#> new_endogenous 122.525 8.534 14.357 < 2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 647.7 on 998 degrees of freedom

#> Multiple R-squared: 0.1712, Adjusted R-squared: 0.1704

#> F-statistic: 206.1 on 1 and 998 DF, p-value: < 2.2e-16

new_data_cf = cbind(my_data, residual = resid(first_stage))

second_stage_cf = lm(response_nonlinear_para ~ endogenous_nonlinear + residual, data = new_data_cf)

summary(second_stage_cf)

#>

#> Call:

#> lm(formula = response_nonlinear_para ~ endogenous_nonlinear +

#> residual, data = new_data_cf)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -904.77 -154.35 -20.41 143.24 953.04

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 492.2494 32.3530 15.21 < 2e-16 ***

#> endogenous_nonlinear 23.5991 0.8741 27.00 < 2e-16 ***

#> residual 30.5914 3.7397 8.18 8.58e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 245.9 on 997 degrees of freedom

#> Multiple R-squared: 0.8806, Adjusted R-squared: 0.8804

#> F-statistic: 3676 on 2 and 997 DF, p-value: < 2.2e-16

modelsummary(list(second_stage, second_stage_cf))

| | (1) | (2) |
|---|---|---|
| (Intercept) | -1137.875 | 492.249 |
| | (173.811) | (32.353) |
| new_endogenous | 122.525 | |
| | (8.534) | |
| endogenous_nonlinear | | 23.599 |
| | | (0.874) |
| residual | | 30.591 |
| | | (3.740) |
| Num.Obs. | 1000 | 1000 |
| R2 | 0.171 | 0.881 |
| R2 Adj. | 0.170 | 0.880 |
| AIC | 15788.6 | 13853.1 |
| BIC | 15803.3 | 13872.7 |
| Log.Lik. | -7891.307 | -6922.553 |
| F | 206.123 | 3676.480 |
| RMSE | 647.01 | 245.58 |

34.3.6 Fuller and Bias-Reduced IV

Fuller adjusts LIML for bias reduction.
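Fuller's estimator belongs to the k-class family \(\hat{\beta}(\kappa) = [X'(I - \kappa M_Z)X]^{-1} X'(I - \kappa M_Z)Y\), where \(M_Z = I - P_Z\): \(\kappa = 0\) gives OLS, \(\kappa = 1\) gives 2SLS, \(\kappa = \kappa_{LIML}\) gives LIML, and Fuller uses \(\kappa = \kappa_{LIML} - \alpha/(n - L)\), with \(L\) the number of instruments (including exogenous regressors) and \(\alpha = 1\) a common choice. As far as we know, neither `AER::ivreg()` nor `ivreg::ivreg()` implements Fuller through a `method = "fuller"` argument, so it is worth confirming that the output below is not plain 2SLS. The base-R sketch below (the helper `fuller_kclass()` and the simulated data are assumptions) computes the estimator directly.

```r
# Hypothetical helper: k-class estimator with Fuller's modification of LIML.
# x_exog must contain the intercept column; z_excl holds the excluded instruments.
fuller_kclass <- function(y, x_endo, x_exog, z_excl, alpha = 1) {
  n <- length(y)
  X <- cbind(x_exog, x_endo)                   # all regressors
  Z <- cbind(x_exog, z_excl)                   # full instrument set
  annihilator <- function(A) diag(n) - A %*% solve(crossprod(A), t(A))
  M_Z <- annihilator(Z)
  M_1 <- annihilator(x_exog)
  W   <- cbind(y, x_endo)                      # outcome and endogenous regressors
  # LIML kappa: smallest root of det(W'M_1W - kappa * W'M_ZW) = 0
  ratio <- solve(t(W) %*% M_Z %*% W, t(W) %*% M_1 %*% W)
  kappa_liml <- min(Re(eigen(ratio, only.values = TRUE)$values))
  kappa <- kappa_liml - alpha / (n - ncol(Z))  # Fuller adjustment
  A <- diag(n) - kappa * M_Z
  beta <- solve(t(X) %*% A %*% X, t(X) %*% A %*% y)
  list(kappa_liml = kappa_liml, kappa = kappa, coef = drop(beta))
}

set.seed(2)
n <- 200
z <- rnorm(n); e <- rnorm(n)
x <- 0.3 * z + 0.8 * e + rnorm(n)
y <- 1 + 0.5 * x + e
fuller_kclass(y, x, matrix(1, n, 1), z, alpha = 1)
```

In this just-identified example \(\kappa_{LIML} = 1\), so LIML equals 2SLS and Fuller's adjustment shrinks the estimate slightly toward OLS.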

fuller_model = ivreg(y ~ x_endo_1 | x_inst_1, data = base, method = "fuller", k = 1)

summary(fuller_model)

#>

#> Call:

#> ivreg(formula = y ~ x_endo_1 | x_inst_1, data = base, method = "fuller",

#> k = 1)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -1.2390 -0.3022 -0.0206 0.2772 1.0039

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.34586 0.08096 53.68 <2e-16 ***

#> x_endo_1 0.39848 0.01964 20.29 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 0.4075 on 148 degrees of freedom

#> Multiple R-Squared: 0.7595, Adjusted R-squared: 0.7578

#> Wald test: 411.6 on 1 and 148 DF, p-value: < 2.2e-16

34.4 Asymptotic Properties of the IV Estimator

IV estimation provides consistent and asymptotically normal estimates of structural parameters under a specific set of assumptions. Understanding the asymptotic properties of the IV estimator requires clarity on the identification conditions and the large-sample behavior of the estimator.

Consider the linear structural model:

\[ Y = X \beta + u \]

Where:

\(Y\) is the dependent variable (\(n \times 1\))

\(X\) is a matrix of endogenous regressors (\(n \times k\))

\(u\) is the error term

\(\beta\) is the parameter vector of interest (\(k \times 1\))

Suppose we have a matrix of instruments \(Z\) (\(n \times m\)), with \(m \ge k\).

When the model is exactly identified (\(m = k\)), the IV estimator of \(\beta\) is:

\[ \hat{\beta}_{IV} = (Z'X)^{-1} Z'Y \]

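More generally, when \(m \ge k\), 2SLS projects \(X\) onto the column space of the instruments; the resulting estimator reduces to \(\hat{\beta}_{IV}\) when \(m = k\):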

\[ \hat{\beta}_{2SLS} = (X'P_ZX)^{-1} X'P_ZY \]

Where:

- \(P_Z = Z (Z'Z)^{-1} Z'\) is the projection matrix onto the column space of \(Z\).
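To see the equivalence numerically, the sketch below simulates data (an assumed data-generating process) and evaluates both formulas with base-R matrix algebra; `iv_estimator()` and `tsls_estimator()` are hypothetical helper names. With \(m = k\) the two agree, while \((Z'X)^{-1}\) is undefined when \(m > k\) because \(Z'X\) is not square.

```r
# Simulated data (assumed DGP): x is endogenous, z1 and z2 are valid instruments.
set.seed(42)
n  <- 500
z1 <- rnorm(n); z2 <- rnorm(n); e <- rnorm(n)
x  <- 0.7 * z1 + 0.3 * z2 + 0.5 * e + rnorm(n)
y  <- 1 + 2 * x + e
X  <- cbind(1, x)

# Simple IV: (Z'X)^{-1} Z'y, which needs a square Z'X (m = k)
iv_estimator <- function(y, X, Z) drop(solve(crossprod(Z, X), crossprod(Z, y)))

# 2SLS via the projection matrix P_Z = Z (Z'Z)^{-1} Z'
tsls_estimator <- function(y, X, Z) {
  P <- Z %*% solve(crossprod(Z), t(Z))
  drop(solve(t(X) %*% P %*% X, t(X) %*% P %*% y))
}

Z_just <- cbind(1, z1)        # m = k = 2
Z_over <- cbind(1, z1, z2)    # m = 3 > k = 2
rbind(iv_just   = iv_estimator(y, X, Z_just),
      tsls_just = tsls_estimator(y, X, Z_just),
      tsls_over = tsls_estimator(y, X, Z_over))
```

Calling `iv_estimator(y, X, Z_over)` fails because `solve()` requires a square matrix, which is the matrix counterpart of the identification condition \(m = k\) for the simple IV formula.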

34.4.1 Consistency

For \(\hat{\beta}_{IV}\) to be consistent, the following conditions must hold as \(n \to \infty\):

- Instrument Exogeneity

\[ \mathbb{E}[Z'u] = 0 \]

Instruments must be uncorrelated with the structural error term.

Guarantees instrument validity.

- Instrument Relevance

\[ \mathrm{rank}(\mathbb{E}[Z'X]) = k \]

Instruments must be correlated with the endogenous regressors.

Ensures identification of \(\beta\).

If this fails, the model is underidentified, and \(\hat{\beta}_{IV}\) does not converge to the true \(\beta\).

- Random Sampling (IID Observations)

- \(\{(Y_i, X_i, Z_i)\}_{i=1}^n\) are independent and identically distributed (i.i.d.).

- In more general settings, stationarity and mixing conditions can relax this.

- Finite Moments

- \(\mathbb{E}[||Z||^2] < \infty\) and \(\mathbb{E}[||u||^2] < \infty\)

- Ensures that the Law of Large Numbers applies to the sample moments.

If these conditions are satisfied: \[ \hat{\beta}_{IV} \overset{p}{\to} \beta \] This means the IV estimator is consistent.
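A small simulation illustrates consistency. The data-generating process below is an assumption chosen so that \(\text{Cov}(X, u) = 1\) and \(\text{Var}(X) = 3\): as \(n\) grows, the IV estimate settles at the true \(\beta = 2\), while OLS converges to \(\beta + \text{Cov}(X, u)/\text{Var}(X) = 2 + 1/3\). The helper `simulate_iv_ols()` is hypothetical.

```r
# Hypothetical helper: one draw of OLS and IV estimates (no intercept; all means are 0).
simulate_iv_ols <- function(n, beta = 2) {
  z <- rnorm(n)                       # instrument
  e <- rnorm(n)                       # structural error
  x <- z + e + rnorm(n)               # x depends on e, so it is endogenous
  y <- beta * x + e
  c(ols = sum(x * y) / sum(x^2),      # plim = beta + Cov(x, e) / Var(x)
    iv  = sum(z * y) / sum(z * x))    # plim = beta
}

set.seed(123)
est <- sapply(c(1e2, 1e4, 1e6), simulate_iv_ols)
colnames(est) <- c("n=1e2", "n=1e4", "n=1e6")
est
```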

34.4.2 Asymptotic Normality

In addition to consistency conditions, we require:

- Homoskedasticity (Optional but Simplifying)

\[ \mathbb{E}[u u' | Z] = \sigma^2 I \]

Simplifies variance estimation.

If violated, heteroskedasticity-robust variance estimators must be used.

- Central Limit Theorem Conditions

- Sample moments must satisfy a CLT: \[ \sqrt{n} \left( \frac{1}{n} \sum_{i=1}^n Z_i u_i \right) \overset{d}{\to} N(0, \Omega) \] Where \(\Omega = \mathbb{E}[Z_i Z_i' u_i^2]\).

Under the above conditions, in the just-identified case (\(m = k\), so that \(Q_{ZX}\) below is square and invertible): \[ \sqrt{n}(\hat{\beta}_{IV} - \beta) \overset{d}{\to} N(0, V) \]

Where the asymptotic variance-covariance matrix \(V\) is: \[ V = (Q_{ZX})^{-1} Q_{Zuu} (Q_{ZX}')^{-1} \] With:

\(Q_{ZX} = \mathbb{E}[Z_i X_i']\)

\(Q_{Zuu} = \mathbb{E}[Z_i Z_i' u_i^2]\)
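The sample analogue of \(V\) is the heteroskedasticity-robust "sandwich" variance. The sketch below (hypothetical helper `iv_sandwich()`, simulated heteroskedastic data) replaces each population moment with its sample average and also computes the homoskedastic version \(\hat{\sigma}^2 Q_{ZX}^{-1} Q_{ZZ} (Q_{ZX}')^{-1}\), with \(Q_{ZZ} = \mathbb{E}[Z_i Z_i']\), for comparison.

```r
# Hypothetical helper for the just-identified case (Z and X both n x k).
iv_sandwich <- function(y, X, Z) {
  n <- nrow(Z)
  b <- solve(crossprod(Z, X), crossprod(Z, y))   # (Z'X)^{-1} Z'y
  u <- drop(y - X %*% b)                         # structural residuals
  Q_zx_inv <- solve(crossprod(Z, X) / n)         # sample analogue of Q_ZX^{-1}
  Omega <- crossprod(Z * u) / n                  # (1/n) sum Z_i Z_i' u_i^2
  V_robust <- Q_zx_inv %*% Omega %*% t(Q_zx_inv)
  V_homo <- mean(u^2) * Q_zx_inv %*% (crossprod(Z) / n) %*% t(Q_zx_inv)
  list(coef      = drop(b),
       se_robust = sqrt(diag(V_robust) / n),     # Var(b) is approximately V / n
       se_homo   = sqrt(diag(V_homo) / n))
}

set.seed(10)
n <- 2000
z <- rnorm(n); e <- rnorm(n) * (1 + abs(z))      # heteroskedastic errors
x <- 0.6 * z + 0.5 * e + rnorm(n)
y <- 1 + 2 * x + e
X <- cbind(1, x); Z <- cbind(1, z)
iv_sandwich(y, X, Z)
```

With errors whose variance depends on \(z\), the two standard errors differ; the robust one is the sample counterpart of \(V\) above.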

34.4.3 Asymptotic Efficiency

- Optimal Instrument Choice

- Under conditional homoskedasticity, 2SLS is the efficient estimator among those that use linear combinations of the instruments in \(Z\); in that case it coincides with efficient GMM.

- Generalized Method of Moments (GMM) can deliver efficiency gains in the presence of heteroskedasticity, by optimally weighting the moment conditions.

GMM Estimator

\[ \hat{\beta}_{GMM} = \arg \min_{\beta} \left( \frac{1}{n} \sum_{i=1}^n Z_i (Y_i - X_i' \beta) \right)' W \left( \frac{1}{n} \sum_{i=1}^n Z_i (Y_i - X_i' \beta) \right) \]

Where \(W\) is a positive definite weighting matrix. The optimal choice is

\[ W = \Omega^{-1}, \]

which in practice is estimated from first-step (e.g., 2SLS) residuals.

Result

- If \(Z\) is overidentified (\(m > k\)), GMM can be more efficient than 2SLS.

- When instruments are exactly identified (\(m = k\)), IV, 2SLS, and GMM coincide.
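A two-step efficient GMM estimator can be sketched in base R as follows (hypothetical helper `gmm_iv()`, simulated heteroskedastic data). The first step uses \(W = (Z'Z/n)^{-1}\), which reproduces 2SLS; the second step re-weights with \(\hat{\Omega}^{-1}\) built from the first-step residuals. In the exactly identified case both steps return the simple IV estimate whatever the weighting matrix, matching the result above.

```r
# Hypothetical helper: two-step efficient GMM for y = X b + u with instruments Z.
gmm_iv <- function(y, X, Z) {
  n  <- nrow(Z)
  ZX <- crossprod(Z, X) / n
  Zy <- crossprod(Z, y) / n
  solve_w <- function(W) solve(t(ZX) %*% W %*% ZX, t(ZX) %*% W %*% Zy)
  b1 <- solve_w(solve(crossprod(Z) / n))   # step 1: W = (Z'Z/n)^{-1}, i.e. 2SLS
  u1 <- drop(y - X %*% b1)
  Omega_hat <- crossprod(Z * u1) / n       # estimate of Omega from step-1 residuals
  b2 <- solve_w(solve(Omega_hat))          # step 2: W = Omega_hat^{-1}
  list(step1_2sls = drop(b1), gmm = drop(b2))
}

set.seed(3)
n  <- 1000
z1 <- rnorm(n); z2 <- rnorm(n); e <- rnorm(n) * (1 + abs(z1))
x  <- 0.5 * z1 + 0.5 * z2 + 0.5 * e + rnorm(n)
y  <- 1 + 2 * x + e
X  <- cbind(1, x)
gmm_iv(y, X, cbind(1, z1, z2))   # overidentified: m = 3 > k = 2
gmm_iv(y, X, cbind(1, z1))       # exactly identified: both steps coincide
```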

Summary Table of Conditions

| Condition | Requirement | Purpose |

|---|---|---|

| Instrument Exogeneity | \(\mathbb{E}[Z'u] = 0\) | Instrument validity |

| Instrument Relevance | \(\mathrm{rank}(\mathbb{E}[Z'X]) = k\) | Model identification |

| Random Sampling | IID (or stationary and mixing) | LLN and CLT applicability |

| Finite Second Moments | \(\mathbb{E}[\lVert Z \rVert^2] < \infty\), etc. | LLN and CLT applicability |

| Homoskedasticity (optional) | \(\mathbb{E}[u u' \mid Z] = \sigma^2 I\) | Simplifies variance formulas |

| Optimal Weighting | \(W = \Omega^{-1}\) in GMM | Asymptotic efficiency |

34.5 Inference

Inference in IV models, particularly when instruments are weak, presents serious challenges that can undermine standard testing and confidence interval procedures. In this section, we explore the core issues of IV inference under weak instruments, discuss the standard and alternative approaches, and outline practical guidelines for applied research.

Consider the just-identified linear IV model:

\[ Y = \beta X + u \]

where:

- \(X\) is endogenous: \(\text{Cov}(X, u) \neq 0\).

- \(Z\) is an instrumental variable satisfying:

  - Relevance: \(\text{Cov}(Z, X) \neq 0\).

  - Exogeneity: \(\text{Cov}(Z, u) = 0\).

The IV estimator of \(\beta\) is consistent under these assumptions.

A commonly used approach for inference is the t-ratio method, constructing a 95% confidence interval as:

\[ \hat{\beta} \pm 1.96 \sqrt{\hat{V}_N(\hat{\beta})} \]

However, this approach is invalid when instruments are weak. Specifically:

The t-ratio does not follow a standard normal distribution under weak instruments.

Confidence intervals based on this method can severely under-cover the true parameter.

Hypothesis tests can over-reject, even in large samples.

This problem was first systematically identified by Staiger and Stock (1997) and Dufour (1997). Weak instruments create distortions in the finite-sample distribution of \(\hat{\beta}\).
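The over-rejection can be seen in a small Monte Carlo. This is a sketch: the data-generating process, the hypothetical helper `weak_iv_rejection()`, and the parameter values are assumptions. It records how often a nominal 5% \(t\)-test of the true \(\beta\) rejects, once with a strong instrument and once with an irrelevant one (\(\pi = 0\)) under high endogeneity.

```r
# Hypothetical simulation: rejection rate of a nominal 5% t-test in a
# just-identified IV model (no intercept) with first-stage coefficient pi
# and endogeneity rho = Corr(u, v).
weak_iv_rejection <- function(pi, rho, n = 200, reps = 2000, beta = 0) {
  reject <- logical(reps)
  for (r in seq_len(reps)) {
    z <- rnorm(n)
    u <- rnorm(n)
    v <- rho * u + sqrt(1 - rho^2) * rnorm(n)               # first-stage error
    x <- pi * z + v
    y <- beta * x + u
    b <- sum(z * y) / sum(z * x)                            # IV estimate
    res <- y - b * x
    se <- sqrt(mean(res^2) * sum(z^2)) / abs(sum(z * x))    # homoskedastic SE
    reject[r] <- abs((b - beta) / se) > 1.96
  }
  mean(reject)
}

set.seed(2024)
c(strong     = weak_iv_rejection(pi = 1, rho = 0.9),
  irrelevant = weak_iv_rejection(pi = 0, rho = 0.9))
```

With the strong instrument the rejection rate should be close to 5%; with the irrelevant instrument it is far above the nominal level.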

Common Practices and Misinterpretations

- Overreliance on t-Ratio Tests

- Popular but problematic when instruments are weak.

- Known to over-reject null hypotheses and under-cover confidence intervals.

- Documented extensively by Nelson and Startz (1990), Bound, Jaeger, and Baker (1995), Dufour (1997), and Lee et al. (2022).

- Weak Instrument Diagnostics

- First-Stage F-Statistic:

- Rule of thumb: \(F > 10\) often used but simplistic and misleading.

- More accurate critical values provided by Stock and Yogo (2005).

- For the just-identified case, Stock and Yogo (2005) give the critical value \(F > 16.38\), which ensures that a nominal 5% Wald test has an actual size of at most 10%.

- Misinterpretations and Pitfalls

- Mistakenly interpreting \(\hat{\beta} \pm 1.96 \times \hat{SE}\) as a 95% CI when the instrument is weak. Even when \(F > 16.38\), the nominal 95% CI is only guaranteed to cover the true parameter at least 90% of the time.

- Pretesting for weak instruments can exacerbate inference problems (A. R. Hall, Rudebusch, and Wilcox 1996).

- Selective model specification based on weak instrument diagnostics may introduce additional distortions (I. Andrews, Stock, and Sun 2019).

34.5.1 Weak Instruments Problem

The conventional squared \(t\)-ratio can be rewritten in terms of the Anderson-Rubin (AR) statistic, which makes the source of the weak-instrument distortion explicit:

\[ \hat{t}^2 = \hat{t}^2_{AR} \times \frac{1}{1 - 2\hat{\rho} \frac{\hat{t}_{AR}}{\hat{f}} + \frac{\hat{t}^2_{AR}}{\hat{f}^2}} \]

Where:

\(\hat{t}^2_{AR} \sim \chi^2(1)\) under the null, even with weak instruments (T. W. Anderson and Rubin 1949).

\(\hat{t}_{AR} = \dfrac{\hat{\pi}(\hat{\beta} - \beta_0)}{\sqrt{\hat{V}_N (\hat{\pi} (\hat{\beta} - \beta_0))}} \sim N(0,1)\).

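\(\hat{f} = \dfrac{\hat{\pi}}{\sqrt{\hat{V}_N(\hat{\pi})}}\) is the first-stage \(t\)-ratio and measures instrument strength; its square is the first-stage F-statistic.

\(\hat{\pi}\) is the coefficient from the first-stage regression of \(X\) on \(Z\).

\(\hat{\rho}\) is the estimated correlation between \(Zv\) and \(Zu\), where \(v\) is the first-stage error (under homoskedasticity, the correlation between \(v\) and \(u\)); it captures the degree of endogeneity.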
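The decomposition is an algebraic identity in the just-identified case, where \(\hat{\beta} = \hat{\delta}/\hat{\pi}\) and \(\hat{\delta}\) is the reduced-form coefficient on \(Z\). The sketch below (hypothetical helper `t2_decomposition()`, made-up inputs) computes the delta-method \(t\)-ratio directly and through \(\hat{t}_{AR}\), \(\hat{f}\), and \(\hat{\rho}\), taking \(\hat{\rho}\) as the estimated correlation between \(\hat{\delta} - \beta_0 \hat{\pi}\) and \(\hat{\pi}\).

```r
# Hypothetical inputs: Sigma is the covariance matrix of (delta_hat, pi_hat).
t2_decomposition <- function(delta_hat, pi_hat, Sigma, beta0) {
  beta_hat <- delta_hat / pi_hat
  # Delta method: Var(beta_hat) = Var(delta_hat - beta_hat * pi_hat) / pi_hat^2
  g <- c(1, -beta_hat)
  v_beta <- drop(t(g) %*% Sigma %*% g) / pi_hat^2
  t2_direct <- (beta_hat - beta0)^2 / v_beta
  # AR pieces built from a = delta_hat - beta0 * pi_hat
  h <- c(1, -beta0)
  var_a <- drop(t(h) %*% Sigma %*% h)
  cov_a_pi <- drop(t(h) %*% Sigma %*% c(0, 1))
  t_ar <- (delta_hat - beta0 * pi_hat) / sqrt(var_a)
  f <- pi_hat / sqrt(Sigma[2, 2])
  rho <- cov_a_pi / sqrt(var_a * Sigma[2, 2])
  t2_formula <- t_ar^2 / (1 - 2 * rho * t_ar / f + t_ar^2 / f^2)
  c(t2_direct = t2_direct, t2_formula = t2_formula, t_ar = t_ar, f = f, rho = rho)
}

Sigma <- matrix(c(0.04, 0.01, 0.01, 0.02), 2, 2)
t2_decomposition(delta_hat = 0.3, pi_hat = 0.2, Sigma = Sigma, beta0 = 0)
```

Both routes give the same \(\hat{t}^2\); the formula shows that it can be far from \(\hat{t}^2_{AR}\) when \(\hat{f}\) is small.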

Implications

- Even in large samples, \(\hat{t}^2 \neq \hat{t}^2_{AR}\) unless the instrument is strong: the denominator converges to one only when \(\hat{f}\) grows without bound, so with a weak instrument the adjustment term does not vanish.

- The distribution of \(\hat{t}\) does not match the standard normal but follows a more complex distribution described by Staiger and Stock (1997) and Stock and Yogo (2005).

The divergence between \(\hat{t}^2\) and \(\hat{t}^2_{AR}\) depends on:

- Instrument Strength (\(\pi\)): Higher correlation between \(Z\) and \(X\) mitigates the problem.

- First-Stage F-statistic (\(E(F)\)): A weak first-stage regression increases the bias and distortion.

- Endogeneity Level (\(|\rho|\)): Greater correlation between \(X\) and \(u\) exacerbates inference errors.

| Scenario | Conditions | Inference Quality |

|---|---|---|

| Worst Case | \(\pi = 0\), \(\lvert\rho\rvert = 1\) | \(\hat{\beta} \pm 1.96 \times SE\) fails; Type I error = 100% |

| Best Case | \(\rho = 0\) (No endogeneity) or very large \(\hat{f}\) (strong \(Z\)) | Standard inference works; intervals cover \(\beta\) with correct rate |

| Intermediate Case | Moderate \(\pi\), \(\rho\), and \(F\) | Coverage and Type I error lie between extremes; standard inference risky |

34.5.2 Solutions and Approaches for Valid Inference

- Assume the Problem Away (Risky Assumptions)

  - High First-Stage F-statistic:

    - Require \(E(F) > 142.6\) for near-validity (Lee et al. 2022).

    - While the first-stage \(F\) is observable, this threshold is high and often impractical.

  - Low Endogeneity:

    - Assume \(|\rho| < 0.565\) (Lee et al. 2022). In other words, we assume endogeneity is below a moderate level.

    - This undermines the motivation for IV in the first place, which exists precisely because of suspected endogeneity.

- Confront the Problem Directly (Robust Methods)

  - Anderson-Rubin (AR) Test (T. W. Anderson and Rubin 1949):

    - Valid under weak instruments.

    - Tests whether \(Z\) explains variation in \(Y - \beta_0 X\).

  - tF Procedure (Lee et al. 2022):

    - Combines t-statistics and F-statistics in a unified testing framework.

    - Offers valid inference in the presence of weak instruments.

  - Angrist-Kolesár (AK) Approach (J. Angrist and Kolesár 2023):

    - Reappraises conventional just-identified IV inference rather than proposing a new test.

    - Argues that conventional \(t\)-tests and confidence intervals are reliable when endogeneity is moderate.

    - Recommends screening on the sign of the first-stage estimate to reduce median bias.

34.5.3 Anderson-Rubin Approach

The Anderson-Rubin (AR) test, originally proposed by T. W. Anderson and Rubin (1949), remains one of the most robust inferential tools in the context of instrumental variable estimation, particularly when instruments are weak or endogenous regressors exhibit complex error structures.

The AR test directly evaluates the joint null hypothesis that:

\[ H_0: \beta = \beta_0 \]

by testing whether the instruments explain any variation in the residuals \(Y - \beta_0 X\). Under the null, the model becomes:

\[ Y - \beta_0 X = u \]

Given that \(\text{Cov}(Z, u) = 0\) (by the IV exogeneity assumption), the test regresses \((Y - \beta_0 X)\) on \(Z\). The test statistic is constructed as:

\[ AR(\beta_0) = \frac{(Y - \beta_0 X)' P_Z (Y - \beta_0 X)}{\hat{\sigma}^2} \]

- \(P_Z\) is the projection matrix onto the column space of \(Z\): \(P_Z = Z (Z'Z)^{-1} Z'\).

- \(\hat{\sigma}^2\) is an estimate of the error variance (under homoskedasticity).

Under \(H_0\), the statistic follows a chi-squared distribution:

\[ AR(\beta_0) \sim \chi^2(q) \]

where \(q\) is the number of instruments (1 in a just-identified model).

Key Properties of the AR Test

- Robust to Weak Instruments:

- The AR test does not rely on the strength of the instruments.

- Its distribution under the null hypothesis remains valid even when the instruments are weak (Staiger and Stock 1997).

- Valid under Non-Normal, Homoskedastic Errors:

- Maintains correct Type I error rates even under non-normal errors (Staiger and Stock 1997).

- Optimality properties under homoskedastic errors are established in D. W. Andrews, Moreira, and Stock (2006) and M. J. Moreira (2009).

- Robust to Heteroskedasticity, Clustering, and Autocorrelation:

- The AR test has been generalized to account for heteroskedasticity, clustered errors, and autocorrelation (Stock and Wright 2000; H. Moreira and Moreira 2019).

- Valid inference is possible when combined with heteroskedasticity-robust variance estimators or cluster-robust techniques.

| Setting | Validity | Reference |

|---|---|---|

| Non-Normal, Homoskedastic Errors | Valid without distributional assumptions | (Staiger and Stock 1997) |

| Heteroskedastic Errors | Generalized AR test remains valid; robust variance estimation recommended | (Stock and Wright 2000) |

| Clustered or Autocorrelated Errors | Extensions available using cluster-robust and HAC variance estimators | (H. Moreira and Moreira 2019) |

| Optimality under Homoskedasticity | AR test minimizes Type II error among invariant tests | (D. W. Andrews, Moreira, and Stock 2006; M. J. Moreira 2009) |

The AR test is relatively simple to implement and is available in most econometric software. Here’s an intuitive step-by-step breakdown:

- Specify the null hypothesis value \(\beta_0\).

- Compute the residual \(u = Y - \beta_0 X\).

- Regress \(u\) on \(Z\) and obtain the \(R^2\) from this regression.

- Compute the test statistic:

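\[ AR(\beta_0) = n \cdot R^2 \]

(This is the LM form of the statistic, asymptotically \(\chi^2(q)\) under homoskedasticity; for a just-identified model with a single instrument, \(q=1\). Dividing by \(q\) gives the equivalent \(F\)-form.)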

- Compare \(AR(\beta_0)\) to the \(\chi^2(q)\) distribution to determine significance.
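The steps above can be sketched in base R for a single endogenous regressor with an intercept and no other controls (an assumption; with controls, partial them out first). The helper names `ar_test()` and `ar_confidence_set()` are hypothetical; the statistic uses the \(nR^2\) form under homoskedasticity, and the confidence set is obtained by inverting the test over a grid of candidate values \(\beta_0\).

```r
# Hypothetical helper: AR test of H0: beta = beta0 (one endogenous regressor,
# intercept only, homoskedastic errors), using the n * R^2 form.
ar_test <- function(y, x, z, beta0) {
  n <- length(y)
  r <- y - beta0 * x                     # residual implied by H0
  fit <- lm(r ~ z)                       # intercept absorbs the constant term
  stat <- n * summary(fit)$r.squared     # approximately chi^2(q) under H0
  q <- NCOL(z)                           # number of excluded instruments
  c(stat = stat, p = pchisq(stat, df = q, lower.tail = FALSE))
}

# Invert the test over a grid: keep every beta0 that is not rejected.
ar_confidence_set <- function(y, x, z, grid, level = 0.95) {
  p <- vapply(grid, function(b0) ar_test(y, x, z, b0)[["p"]], numeric(1))
  grid[p > 1 - level]
}

set.seed(7)
n <- 500
z <- rnorm(n); e <- rnorm(n)
x <- 1 + z + 0.5 * e + rnorm(n)
y <- 2 + 1.5 * x + e                     # true beta = 1.5
ar_test(y, x, z, beta0 = 1.5)
cs <- ar_confidence_set(y, x, z, grid = seq(0, 3, by = 0.01))
range(cs)
```

`range(cs)` summarizes the set only when it is a single interval; with weak instruments the AR set can be a union of intervals or unbounded, which is why the grid itself is returned.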

library(ivDiag)

# AR test (robust to weak instruments)

# example by the package's authors

ivDiag::AR_test(

data = rueda,

Y = "e_vote_buying",

# treatment

D = "lm_pob_mesa",

# instruments

Z = "lz_pob_mesa_f",

controls = c("lpopulation", "lpotencial"),

cl = "muni_code",

CI = FALSE

)

#> $Fstat

#> F df1 df2 p

#> 48.4768 1.0000 4350.0000 0.0000

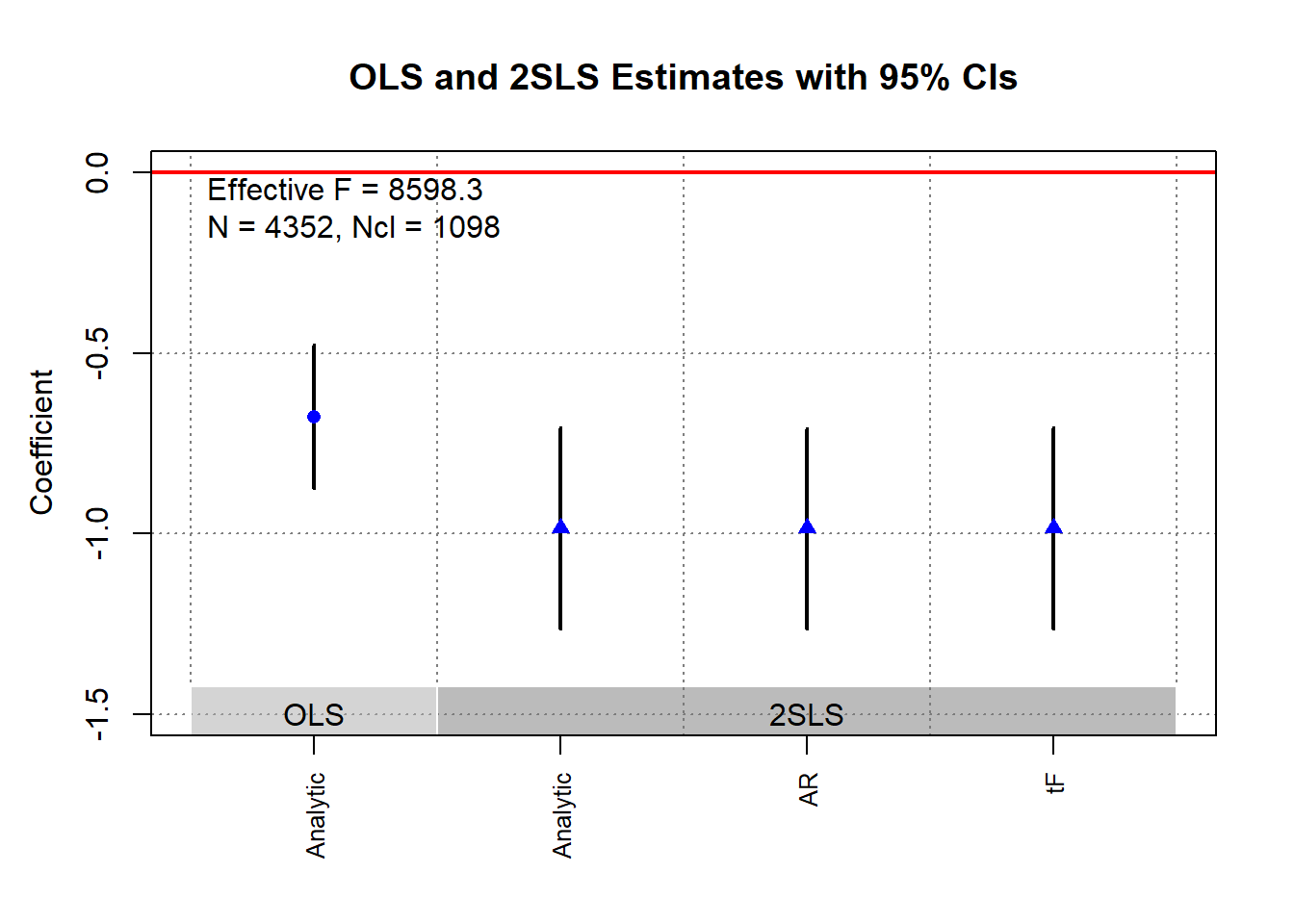

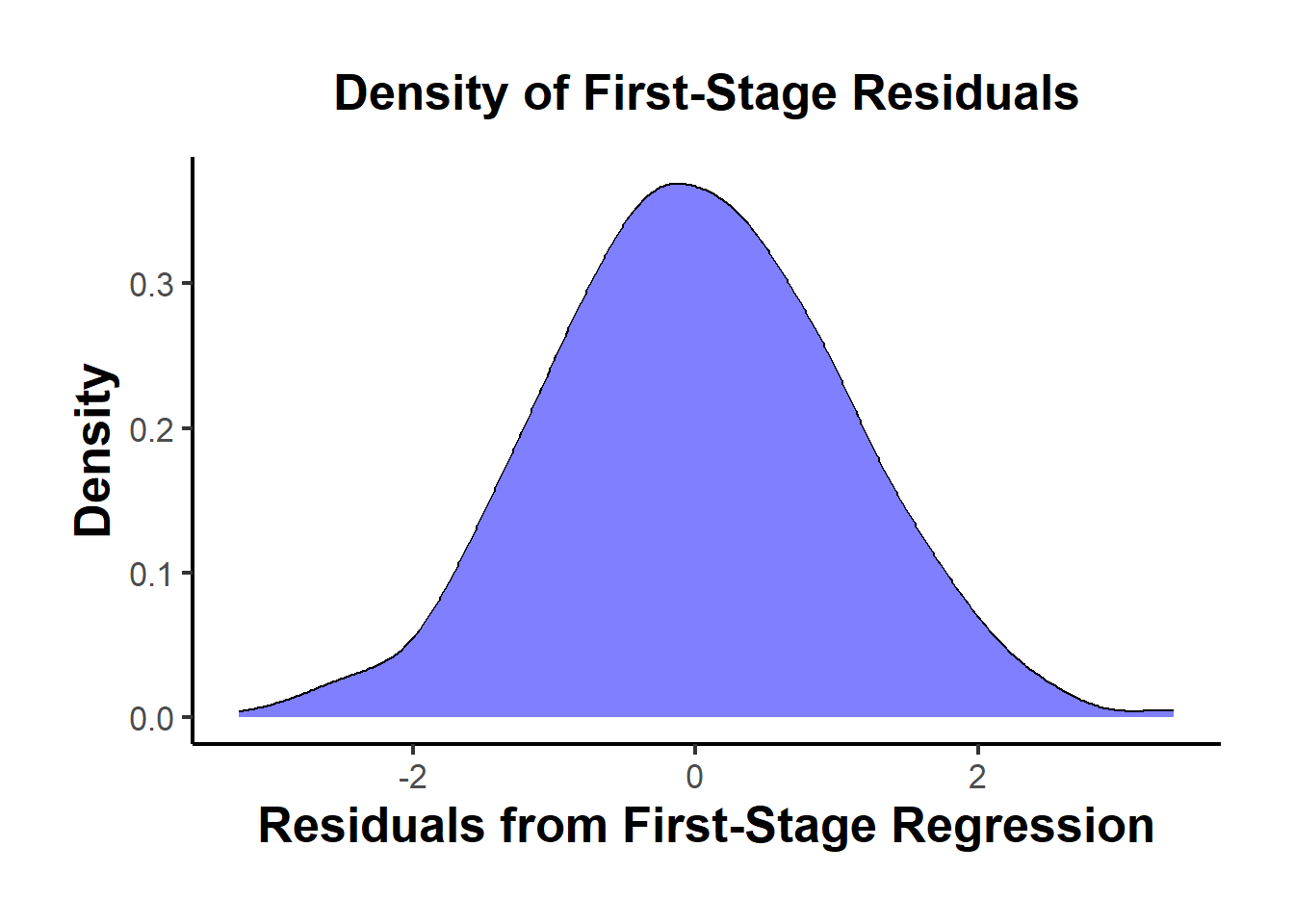

g <- ivDiag::ivDiag(

data = rueda,

Y = "e_vote_buying",

D = "lm_pob_mesa",

Z = "lz_pob_mesa_f",

controls = c("lpopulation", "lpotencial"),

cl = "muni_code",

cores = 4,

bootstrap = FALSE

)

g$AR

#> $Fstat

#> F df1 df2 p

#> 48.4768 1.0000 4350.0000 0.0000

#>

#> $ci.print

#> [1] "[-1.2626, -0.7073]"

#>

#> $ci

#> [1] -1.2626 -0.7073

#>

#> $bounded

#> [1] TRUE

ivDiag::plot_coef(g)

34.5.4 tF Procedure

Lee et al. (2022) introduce the tF procedure, an inference method specifically designed for just-identified IV models (single endogenous regressor and single instrument). It addresses the shortcomings of traditional 2SLS \(t\)-tests under weak instruments and offers a solution that is conceptually familiar to researchers trained in standard econometric practices.

Unlike the Anderson-Rubin test, which inverts hypothesis tests to form confidence sets, the tF procedure adjusts standard \(t\)-statistics and standard errors directly, making it a more intuitive extension of traditional hypothesis testing.

The tF procedure is widely applicable in settings where just-identified IV models arise, including:

- Randomized controlled trials with imperfect compliance (e.g., Local Average Treatment Effects in G. W. Imbens and Angrist (1994)).

- Fuzzy Regression Discontinuity Designs (e.g., Lee and Lemieux (2010)).

- Fuzzy Regression Kink Designs (e.g., Card et al. (2015)).

A comparison of the AR approach and the tF procedure can be found in I. Andrews, Stock, and Sun (2019).

| Feature | Anderson-Rubin | tF Procedure |

|---|---|---|

| Robustness to Weak IV | Yes (valid under weak instruments) | Yes (valid under weak instruments) |

| Finite Confidence Intervals | No (interval becomes infinite for \(F \le 3.84\)) | Yes (finite intervals for all \(F\) values) |

| Interval Length | Often longer, especially when \(F\) is moderate (e.g., \(F = 16\)) | Typically shorter than AR intervals for \(F > 3.84\) |

| Ease of Interpretation | Requires inverting tests; less intuitive | Directly adjusts \(t\)-based standard errors; more intuitive |

| Computational Simplicity | Moderate (inversion of hypothesis tests) | Simple (multiplicative adjustment to standard errors) |

- With \(F > 3.84\), the AR test’s expected interval length is infinite, whereas the tF procedure guarantees finite intervals, making it superior in practical applications with weak instruments.

The tF procedure adjusts the conventional 2SLS \(t\)-ratio for the first-stage F-statistic strength. Instead of relying on a pre-testing threshold (e.g., \(F > 10\)), the tF approach provides a smooth adjustment to the standard errors.

Key Features:

- Adjusts the 2SLS \(t\)-ratio based on the observed first-stage F-statistic.

- Applies different adjustment factors for different significance levels (e.g., 95% and 99%).

- Remains valid even when the instrument is weak, offering finite confidence intervals even when the first-stage F-statistic is low.

Advantages of the tF Procedure

- Smooth Adjustment for First-Stage Strength

  - The tF procedure smoothly adjusts inference based on the observed first-stage F-statistic, avoiding the need for arbitrary pre-testing thresholds (e.g., \(F > 10\)).

  - It produces finite and usable confidence intervals even when the first-stage F-statistic is low:

    \[ F > 3.84 \]

  - This threshold aligns with the critical value of 3.84 for a 95% Anderson-Rubin confidence interval, but with a crucial advantage:

    - The AR interval becomes unbounded (i.e., infinite length) when \(F \le 3.84\).

    - The tF procedure, in contrast, still provides a finite confidence interval, making it more practical in weak instrument cases.

- Clear and Interpretable Confidence Levels

  - The tF procedure offers transparent confidence intervals that:

    - Directly incorporate the impact of first-stage instrument strength on the critical values used for inference.

    - Mirror the distortion-free properties of robust methods like the Anderson-Rubin test, but remain closer in spirit to conventional \(t\)-based inference.

  - Researchers can interpret tF-based 95% and 99% confidence intervals using familiar econometric tools, without needing to invert hypothesis tests or construct confidence sets.

- Robustness to Common Error Structures

  - The tF procedure remains robust in the presence of:

    - Heteroskedasticity

    - Clustering

    - Autocorrelation

  - No additional adjustments are necessary beyond the use of a robust variance estimator for both:

    - The first-stage regression

    - The second-stage IV regression

  - As long as the same robust variance estimator is applied consistently, the tF adjustment maintains valid inference without imposing additional computational complexity.

- Applicability to Published Research

  - One of the most powerful features of the tF procedure is its flexibility for re-evaluating published studies:

    - Researchers only need the reported first-stage F-statistic and standard errors from the 2SLS estimates.

    - No access to the original data is required to recalculate confidence intervals or test statistical significance using the tF adjustment.

  - This makes the tF procedure particularly valuable for meta-analyses, replications, and robustness checks of published IV studies, where:

    - Raw data may be unavailable, or

    - Replication costs are high.

Consider the linear IV model with additional covariates \(W\):

\[ Y = X \beta + W \gamma + u \]

\[ X = Z \pi + W \xi + \nu \]

Where:

\(Y\): Outcome variable.

\(X\): Endogenous regressor of interest.

\(Z\): Instrumental variable (single instrument case).

\(W\): Vector of exogenous controls, possibly including an intercept.

\(u\), \(\nu\): Error terms.

Key Statistics:

- \(t\)-ratio for the IV estimator:

  \[ \hat{t} = \frac{\hat{\beta} - \beta_0}{\sqrt{\hat{V}_N (\hat{\beta})}} \]

- \(t\)-ratio for the first-stage coefficient:

  \[ \hat{f} = \frac{\hat{\pi}}{\sqrt{\hat{V}_N (\hat{\pi})}} \]

- First-stage F-statistic:

  \[ \hat{F} = \hat{f}^2 \]

where

- \(\hat{\beta}\): Instrumental variable estimator.

- \(\hat{V}_N (\hat{\beta})\): Estimated variance of \(\hat{\beta}\), possibly robust to deal with non-iid errors.

- \(\hat{t}\): \(t\)-ratio under the null hypothesis.

- \(\hat{f}\): \(t\)-ratio under the null hypothesis of \(\pi=0\).

Under traditional (strong-instrument) large-sample asymptotics, the \(t\)-ratio statistic follows:

\[ \hat{t}^2 \to^d t^2, \quad t \sim N(0, 1) \]

With critical values:

\(\pm 1.96\) for a 5% significance test.

\(\pm 2.58\) for a 1% significance test.

However, in IV settings (particularly with weak instruments):

The distribution of the \(t\)-statistic is distorted (i.e., it is not approximately standard normal), even in large samples.

The distortion arises because the strength of the instrument (\(F\)) and the degree of endogeneity (\(\rho\)) affect the \(t\)-distribution.

Stock and Yogo (2005) provide a formula that quantifies this distortion for the 2SLS Wald statistic in the just-identified case:

\[ t^2 = \frac{t^2_{AR}}{1 - 2\rho \dfrac{t_{AR}}{f} + \dfrac{t^2_{AR}}{f^2}} \]

Where:

\(\hat{f} \to^d f\)

\(\bar{f} = \dfrac{\pi}{\sqrt{\dfrac{1}{N} AV(\hat{\pi})}}\) and \(AV(\hat{\pi})\) is the asymptotic variance of \(\hat{\pi}\)

\(t_{AR}\) is asymptotically standard normal (\(AR = t^2_{AR}\))

\(\rho\) measures the correlation (degree of endogeneity) between \(Zu\) and \(Z\nu\) (when data are homoskedastic, \(\rho\) is the correlation between \(u\) and \(\nu\)).

Implications:

- For low \(\rho\) (\(\rho \in [0, 0.5]\)), rejection probabilities can be below nominal levels.

- For high \(\rho\) (\(\rho = 0.8\)), rejection rates can be inflated, e.g., 13% rejection at a nominal 5% significance level.

- Reliance on standard \(t\)-ratios leads to incorrect test sizes and invalid confidence intervals.

The tF procedure corrects for these distortions by adjusting the standard error of the 2SLS estimator based on the observed first-stage F-statistic.

Steps:

- Estimate \(\hat{\beta}\) and its conventional SE from 2SLS.

- Compute the first-stage \(\hat{F}\).

- Multiply the conventional SE by an adjustment factor, which depends on \(\hat{F}\) and the desired confidence level.

- Compute new \(t\)-ratios and construct confidence intervals using standard critical values (e.g., \(\pm 1.96\) for 95% CI).

Lee et al. (2022) refer to the adjusted standard errors as “0.05 tF SE” (for a 5% significance level) and “0.01 tF SE” (for 1%).
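The last two steps can be sketched as follows, assuming the critical value \(c_F\) has already been looked up for the observed first-stage F (the lookup table from Lee et al. (2022) is not reproduced here). The helper `apply_tf()` is hypothetical; the inputs reuse the coefficient, standard error, and \(c_F = 18.66\) reported in the fixest example later in this section. Because the "0.05 tF SE" is defined so that \(1.96\) times it equals \(c_F\) times the conventional SE, the interval matches the `CI2.5.` and `CI97.5.` columns reported there.

```r
# Hypothetical helper: apply a tF critical value cF to a 2SLS estimate.
apply_tf <- function(coef, se, cF, z_crit = 1.96) {
  t_stat <- coef / se
  c(t           = t_stat,
    tF_se       = se * cF / z_crit,              # "0.05 tF SE", used with +/- 1.96
    ci_lower    = coef - cF * se,
    ci_upper    = coef + cF * se,
    reject_5pct = as.numeric(abs(t_stat) > cF))  # tF test: compare |t| with cF
}

# Coefficient and SE of fit_x_endo_1 from the fixest example; cF as reported there
apply_tf(coef = 0.444982, se = 0.022086, cF = 18.66)
```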

Lee et al. (2022) conducted a review of recent single-instrument studies in the American Economic Review.

Key Findings:

- For at least 25% of the examined specifications:

- tF-adjusted confidence intervals were 49% longer at the 5% level.

- tF-adjusted confidence intervals were 136% longer at the 1% level.

- Even among specifications with \(F > 10\) and \(t > 1.96\):

- Approximately 25% became statistically insignificant at the 5% level after applying the tF adjustment.

Takeaway:

- The tF procedure can substantially alter inference conclusions.

- Published studies can be re-evaluated with the tF method using only the reported first-stage F-statistics, without requiring access to the underlying microdata.

library(ivDiag)

g <- ivDiag::ivDiag(

data = rueda,

Y = "e_vote_buying",

D = "lm_pob_mesa",

Z = "lz_pob_mesa_f",

controls = c("lpopulation", "lpotencial"),

cl = "muni_code",

cores = 4,

bootstrap = FALSE

)

g$tF

#> F cF Coef SE t CI2.5% CI97.5% p-value

#> 8598.3264 1.9600 -0.9835 0.1424 -6.9071 -1.2626 -0.7044 0.0000

# example in fixest package

library(fixest)

library(tidyverse)

base = iris

names(base) = c("y", "x1", "x_endo_1", "x_inst_1", "fe")

set.seed(2)

base$x_inst_2 = 0.2 * base$y + 0.2 * base$x_endo_1 + rnorm(150, sd = 0.5)

base$x_endo_2 = 0.2 * base$y - 0.2 * base$x_inst_1 + rnorm(150, sd = 0.5)

est_iv = feols(y ~ x1 | x_endo_1 + x_endo_2 ~ x_inst_1 + x_inst_2, base)

est_iv

#> TSLS estimation - Dep. Var.: y

#> Endo. : x_endo_1, x_endo_2

#> Instr. : x_inst_1, x_inst_2

#> Second stage: Dep. Var.: y

#> Observations: 150

#> Standard-errors: IID

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 1.831380 0.411435 4.45121 1.6844e-05 ***

#> fit_x_endo_1 0.444982 0.022086 20.14744 < 2.2e-16 ***

#> fit_x_endo_2 0.639916 0.307376 2.08186 3.9100e-02 *

#> x1 0.565095 0.084715 6.67051 4.9180e-10 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#> RMSE: 0.398842 Adj. R2: 0.725033

#> F-test (1st stage), x_endo_1: stat = 903.1628, p < 2.2e-16 , on 2 and 146 DoF.

#> F-test (1st stage), x_endo_2: stat = 3.2583, p = 0.041268, on 2 and 146 DoF.

#> Wu-Hausman: stat = 6.7918, p = 0.001518, on 2 and 144 DoF.

res_est_iv <- est_iv$coeftable |>

rownames_to_column()

coef_of_interest <-

res_est_iv[res_est_iv$rowname == "fit_x_endo_1", "Estimate"]

se_of_interest <-

res_est_iv[res_est_iv$rowname == "fit_x_endo_1", "Std. Error"]

fstat_1st <- fitstat(est_iv, type = "ivf1")[[1]]$stat

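# Adjust inference for the first-stage F-statistic (with F this large, the tF result is close to the unadjusted one)

# Note: tF is derived for one endogenous regressor and one instrument, so applying it to this two-endogenous-regressor model is only illustrative

# The output reports the tF critical value (cF), the adjusted CIs, and the p-value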

tF(coef = coef_of_interest, se = se_of_interest, Fstat = fstat_1st) |>

causalverse::nice_tab(5)

#> F cF Coef SE t CI2.5. CI97.5. p.value

#> 1 903.1628 1.96 0.445 0.0221 20.1474 0.4017 0.4883 0

# We can try to see a different 1st-stage F-stat and how it changes the results

tF(coef = coef_of_interest, se = se_of_interest, Fstat = 2) |>

causalverse::nice_tab(5)

#> F cF Coef SE t CI2.5. CI97.5. p.value

#> 1 2 18.66 0.445 0.0221 20.1474 0.0329 0.8571 0.0343

34.5.5 AK Approach

J. Angrist and Kolesár (2024) offer a reappraisal of just-identified IV models, focusing on the finite-sample properties of conventional inference in cases where a single instrument is used for a single endogenous variable. Their findings challenge some of the more pessimistic views about weak instruments and inference distortions in microeconometric applications.

Rather than propose a new estimator or test, Angrist and Kolesár provide a framework and rationale supporting the validity of traditional just-ID IV inference in many practical settings. Their insights clarify when conventional t-tests and confidence intervals can be trusted, and they offer practical guidance on first-stage pretesting, bias reduction, and endogeneity considerations.

AK apply their framework to three canonical studies:

- J. D. Angrist and Krueger (1991) - Education returns

- J. D. Angrist and Evans (1998) - Family size and female labor supply

- J. D. Angrist and Lavy (1999) - Class size effects

Findings:

Endogeneity (\(\rho\)) in these studies is moderate (typically \(|\rho| < 0.47\)).

Conventional t-tests and confidence intervals work reasonably well.

In many micro applications, theoretical bounds on causal effects and plausible OVB scenarios limit \(\rho\), supporting the validity of conventional inference.

Key Contributions of the AK Approach

Reassessing Bias and Coverage:

- AK demonstrate that conventional IV estimates and t-tests in just-ID IV models often perform better than theory might suggest, provided the degree of endogeneity (\(\rho\)) is moderate and the first stage is not extremely weak.

First-Stage Sign Screening:

- They propose sign screening as a simple, costless strategy to halve the median bias of IV estimators.

- Screening on the sign of the estimated first-stage coefficient (i.e., using only samples where the first-stage estimate has the correct sign) improves the finite-sample performance of just-ID IV estimates without degrading confidence interval coverage.


Bias-Minimizing Screening Rule:

- AK show that setting the first-stage t-statistic threshold \(c = 0\), i.e., requiring only the correct sign of the first-stage estimate, minimizes median bias while preserving conventional coverage properties.


Practical Implication:

- They argue that conventional just-ID IV inference, including t-tests and confidence intervals, is likely valid in most microeconometric applications, especially where theory or institutional knowledge suggests the direction of the first-stage relationship.

34.5.5.1 Model Setup and Notation

AK adopt a reduced-form and first-stage specification for just-ID IV models:

\[ \begin{aligned} Y_i &= Z_i \delta + X_i' \psi_1 + u_i \\ D_i &= Z_i \pi + X_i' \psi_2 + v_i \end{aligned} \] where

- \(Y_i\): Outcome variable

- \(D_i\): Endogenous treatment variable

- \(Z_i\): Instrumental variable (single instrument)

- \(X_i\): Control variables

- \(u_i, v_i\): Error terms

Parameter of Interest:

\[ \beta = \frac{\delta}{\pi} \]
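The ratio \(\beta = \delta/\pi\) can be checked numerically. The R sketch below uses simulated data with hypothetical coefficients (\(\pi = 0.5\), \(\beta = 2\)) and a single control. By the Frisch-Waugh-Lovell theorem, the ratio of the reduced-form and first-stage coefficients on \(Z_i\) coincides exactly with the IV estimate computed from \(Z_i\) after partialling out \(X_i\).

```r
# Minimal sketch on simulated data; all coefficients are hypothetical.
set.seed(1)
n <- 5000
x <- rnorm(n)                              # control X_i
z <- rnorm(n)                              # instrument Z_i
e <- rnorm(n)                              # shock shared by D_i and Y_i (source of endogeneity)
d <- 0.5 * z + 0.3 * x + e + rnorm(n)      # first stage, pi = 0.5
y <- 2.0 * d + 0.7 * x - e + rnorm(n)      # structural equation, beta = 2

delta_hat  <- coef(lm(y ~ z + x))[["z"]]   # reduced-form coefficient on Z
pi_hat     <- coef(lm(d ~ z + x))[["z"]]   # first-stage coefficient on Z
beta_ratio <- delta_hat / pi_hat

# Same estimate from the IV covariance formula after partialling X (and the intercept) out of Z
z_tilde <- resid(lm(z ~ x))
beta_iv <- sum(z_tilde * y) / sum(z_tilde * d)

c(ratio = beta_ratio, iv = beta_iv)
```

The two numbers agree to floating-point precision, and both should be close to the true value of 2.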

34.5.5.2 Endogeneity and Instrument Strength

AK characterize the two key parameters governing finite-sample inference:

Instrument Strength:

\[ E[F] = \frac{\pi^2}{\sigma^2_{\hat{\pi}}} + 1 \]

(Expected value of the first-stage F-statistic.)

Endogeneity:

\[ \rho = \text{cor}(\hat{\delta} - \hat{\pi} \beta, \hat{\pi}) \]

Measures the correlation between \(\hat{\delta} - \hat{\pi}\beta\) and \(\hat{\pi}\). Under homoskedasticity, this equals the correlation between the structural error \(u_i - v_i\beta\) and the first-stage error \(v_i\).
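A small Monte Carlo sketch makes both quantities concrete. Under hypothetical settings (homoskedastic normal errors, no controls, an instrument held fixed across replications, \(\pi = 0.1\), and correlation 0.5 between the structural and first-stage errors), the simulated correlation between \(\hat\delta - \hat\pi\beta\) and \(\hat\pi\) should be close to 0.5, and the average F-statistic should be close to \(\pi^2/\sigma^2_{\hat\pi} + 1\). For simplicity the F-statistic uses the known variance \(\sigma^2_{\hat\pi} = 1/\sum_i Z_i^2\) rather than an estimate.

```r
# Hypothetical design: homoskedastic errors, no controls, fixed instrument.
set.seed(42)
n    <- 200
reps <- 2000
beta <- 1      # true causal effect (hypothetical)
pi0  <- 0.1    # true first-stage coefficient (hypothetical, weak)
r    <- 0.5    # cor(structural error, first-stage error)
z    <- rnorm(n)   # instrument, held fixed across replications
sxx  <- sum(z^2)

draws <- replicate(reps, {
  v   <- rnorm(n)                                 # first-stage error
  eps <- r * v + sqrt(1 - r^2) * rnorm(n)         # structural error, cor(eps, v) = r
  d <- pi0 * z + v
  y <- beta * d + eps
  delta_hat <- sum(z * y) / sxx                   # reduced-form slope
  pi_hat    <- sum(z * d) / sxx                   # first-stage slope
  c(delta_hat - pi_hat * beta, pi_hat)
})

rho_mc <- cor(draws[1, ], draws[2, ])             # should be close to r
F_mc   <- draws[2, ]^2 * sxx                      # F = pi_hat^2 / Var(pi_hat), with Var(pi_hat) = 1 / sxx
c(rho = rho_mc, mean_F = mean(F_mc), E_F = pi0^2 * sxx + 1)
```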

Key Insight:

For \(|\rho| < 0.76\), the coverage of conventional 95% confidence intervals falls short of nominal by less than 5 percentage points, regardless of the first-stage F-statistic.

34.5.5.3 First-Stage Sign Screening

AK argue that pre-screening based on the sign of the first-stage estimate (\(\hat{\pi}\)) offers bias reduction without compromising confidence interval coverage.

Screening Rule:

- Retain the IV estimate only if \(\hat{\pi} > 0\) (or \(\hat{\pi} < 0\) if the theoretical sign of the first stage is negative).

Results:

- Halves median bias of the IV estimator.

- No degradation of confidence interval coverage.

This screening approach:

- Avoids the pitfalls of pretesting on first-stage F-statistics (which can exacerbate bias and distort inference).

- Provides a “free lunch”: bias reduction with no coverage cost.
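Sign screening is easy to simulate in the bivariate normal model that underlies this kind of analysis. The R sketch below is a hypothetical illustration, not AK's own code: it draws \(\hat\pi\) and \(\hat\delta - \hat\pi\beta\) as bivariate normal with unit variances, \(\rho = 0.5\) and \(E[F] = 2\), then compares the median of \(\hat\beta - \beta\) across all draws with its median among draws whose first-stage estimate has the correct (positive) sign.

```r
# Hypothetical parameters; units are standard errors of pi_hat and delta_hat - pi_hat * beta
set.seed(123)
reps <- 1e5
pi0  <- 1      # true first stage / its standard error, so E[F] = pi0^2 + 1 = 2
rho  <- 0.5    # cor(delta_hat - pi_hat * beta, pi_hat)

eta    <- rnorm(reps)                                 # first-stage estimation error
pi_hat <- pi0 + eta
e      <- rho * eta + sqrt(1 - rho^2) * rnorm(reps)   # delta_hat - pi_hat * beta
bias   <- e / pi_hat                                  # beta_hat - beta, since beta_hat = delta_hat / pi_hat

med_all      <- median(bias)                  # no screening
med_screened <- median(bias[pi_hat > 0])      # keep only correct-sign first stages
c(no_screen = med_all, sign_screen = med_screened)
```

With these settings the screened median bias should come out noticeably smaller than the unscreened one; the exact ratio depends on \(E[F]\) and \(\rho\).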

34.5.5.4 Rejection Rates and Confidence Interval Coverage

- Rejection rates of conventional t-tests stay close to the nominal level (5%) if \(|\rho| < 0.76\), independent of instrument strength.

- For \(|\rho| < 0.565\), conventional t-tests exhibit no over-rejection, aligning with findings from Lee et al. (2022).
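These rejection-rate statements can be explored with the same normal model. The R sketch below uses hypothetical settings (\(\beta = 0\), unit variances, \(E[F] = 2\)); the helper rej_rate() is illustrative, not from any package. It computes the conventional just-ID t-statistic with the delta-method standard error \(\sqrt{1 - 2\rho\hat\beta + \hat\beta^2}/|\hat\pi|\) and returns the share of draws rejecting \(H_0: \beta = 0\) at the 5% level.

```r
# Hypothetical helper: Monte Carlo rejection rate of the conventional t-test
rej_rate <- function(pi0, rho, reps = 1e5, seed = 1) {
  set.seed(seed)
  eta       <- rnorm(reps)                                # first-stage estimation error
  pi_hat    <- pi0 + eta
  delta_hat <- rho * eta + sqrt(1 - rho^2) * rnorm(reps)  # beta = 0, so this is delta_hat - pi_hat * beta
  beta_hat  <- delta_hat / pi_hat
  # Conventional (delta-method) standard error evaluated at beta_hat
  se <- sqrt(1 - 2 * rho * beta_hat + beta_hat^2) / abs(pi_hat)
  mean(abs(beta_hat / se) > qnorm(0.975))
}

# Rejection rates of H0: beta = 0 with E[F] = 2, for several endogeneity levels
sapply(c(0.3, 0.5, 0.9), function(r) rej_rate(pi0 = 1, rho = r))
```

For \(|\rho|\) below 0.565 the simulated rate should not exceed 5% beyond Monte Carlo noise; varying pi0 and rho shows where over-rejection begins.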

Comparison with AR and tF Procedures:

| Approach | Bias Reduction | Coverage | CI Length |
|---|---|---|---|
| AK Sign Screening | Halves median bias | Near-nominal | Finite |
| AR Test | Not applicable (test inversion; no point estimate) | Exact | Unbounded when F ≤ 3.84 |
| tF Procedure | None (adjusts the critical value, not the estimate) | Near-nominal | Longer than AK (especially for moderate F) |

34.6 Testing Assumptions

We are interested in estimating the causal effect of an endogenous regressor \(X_2\) on an outcome variable \(Y\), using instrumental variables \(Z\) to address endogeneity.

The structural model of interest is:

\[ Y = \beta_1 X_1 + \beta_2 X_2 + \epsilon \]

- \(X_1\): Exogenous regressors

- \(X_2\): Endogenous regressor(s)

- \(Z\): Instrumental variables

If \(Z\) satisfies the relevance and exogeneity assumptions, then with a single instrument (and \(X_1\) partialled out of all variables) we can identify \(\beta_2\) as:

\[ \beta_2 = \frac{Cov(Z, Y)}{Cov(Z, X_2)} \]

Alternatively, in terms of reduced form and first stage estimates:

- Reduced Form (effect of \(Z\) on \(Y\)):

\[ \rho = \frac{Cov(Y, Z)}{Var(Z)} \]

- First Stage (effect of \(Z\) on \(X_2\)):

\[ \pi = \frac{Cov(X_2, Z)}{Var(Z)} \]

- IV Estimate:

\[ \beta_2 = \frac{Cov(Y,Z)}{Cov(X_2, Z)} = \frac{\rho}{\pi} \]
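The covariance formulas above translate directly into code. The R sketch below uses simulated data with hypothetical coefficients and an unobserved confounder c0, ignores \(X_1\) for simplicity, and checks that the ratio of the reduced-form slope to the first-stage slope equals \(Cov(Y, Z)/Cov(X_2, Z)\), while OLS of \(Y\) on \(X_2\) is pulled away from the true value by the confounder.

```r
set.seed(10)
n  <- 10000
z  <- rnorm(n)                         # instrument
c0 <- rnorm(n)                         # unobserved confounder (hypothetical)
x2 <- 0.6 * z + c0 + rnorm(n)          # first stage
y  <- 1.5 * x2 + 2 * c0 + rnorm(n)     # true beta_2 = 1.5

rho_hat  <- cov(y, z) / var(z)         # reduced-form slope
pi_hat   <- cov(x2, z) / var(z)        # first-stage slope
beta_iv  <- rho_hat / pi_hat           # equals cov(y, z) / cov(x2, z)
beta_ols <- coef(lm(y ~ x2))[["x2"]]   # biased upward because c0 is omitted

c(iv = beta_iv, cov_ratio = cov(y, z) / cov(x2, z), ols = beta_ols)
```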

To interpret \(\beta_2\) as the causal effect of \(X_2\) on \(Y\), the following assumptions must hold:

34.6.1 Relevance Assumption

In IV estimation, instrument relevance ensures that the instrument(s) \(Z\) can explain sufficient variation in the endogenous regressor(s) \(X_2\) to identify the structural equation:

\[ Y = \beta_1 X_1 + \beta_2 X_2 + \epsilon \]

The relevance condition requires that the instrument(s) \(Z\) be correlated with the endogenous variable(s) \(X_2\), conditional on other covariates \(X_1\). Formally:

\[ Cov(Z, X_2) \ne 0 \]

With multiple instruments and endogenous regressors, the requirement becomes a rank condition: the matrix \(E[Z'X_2]\), computed after partialling out \(X_1\), must have full column rank, which in turn requires at least as many instruments as endogenous regressors. This guarantees that \(Z\) has non-trivial explanatory power for every endogenous regressor in \(X_2\).

An equivalent statement in terms of population moments uses \(\tilde{Z}\), the residual from projecting \(Z\) on \(X_1\) (including a constant):

\[ E[\tilde{Z}' X_2] \ne 0 \]

This condition ensures the identification of \(\beta_2\). Without it, the denominator of the IV ratio is zero in the population, so \(\beta_2\) is not identified and the sample analogue has no well-defined probability limit:

\[ \hat{\beta}_2^{IV} = \frac{\widehat{Cov}(Z, Y)}{\widehat{Cov}(Z, X_2)} \]

The first-stage regression operationalizes the relevance assumption:

\[ X_2 = Z \pi + X_1 \gamma + u \]

- \(\pi\): Vector of first-stage coefficients, measuring the effect of instruments on the endogenous regressor(s).

- \(u\): First-stage residual.

Identification of \(\beta_2\) requires that \(\pi \ne 0\). If \(\pi = 0\), the instrument has no explanatory power for \(X_2\), and the IV procedure collapses.

34.6.1.1 Weak Instruments

Even when \(Cov(Z, X_2) \ne 0\), weak instruments pose a serious problem in finite samples:

- Bias: The IV estimator becomes biased in the direction of the OLS estimator.

- Size distortion: Hypothesis tests can have inflated Type I error rates.

- Variance: Estimates become highly variable and unreliable.

Asymptotic vs. Finite Sample Problems

- IV estimators are consistent as \(n \to \infty\) if the relevance condition holds.

- With weak instruments, convergence can be so slow that finite-sample behavior is practically indistinguishable from inconsistency.

The distinction between relevance (\(\pi \ne 0\)) and strength (\(\pi\) large relative to its sampling error) is therefore critical in applied work.
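The pull toward OLS under weak instruments shows up clearly in a small simulation. The R sketch below uses hypothetical settings (n = 100, correlation 0.8 between the structural and first-stage errors, true effect 1) and compares median IV and OLS estimates for a strong first stage (\(\pi = 1\)) and a very weak one (\(\pi = 0.05\)). Medians are reported because the just-identified IV estimator has no finite mean. The function sim_medians() is a hypothetical helper.

```r
# Hypothetical helper: median IV and OLS estimates across Monte Carlo replications
sim_medians <- function(pi0, n = 100, reps = 5000, rho = 0.8, beta = 1) {
  set.seed(7)
  iv <- ols <- numeric(reps)
  for (r in seq_len(reps)) {
    z   <- rnorm(n)
    v   <- rnorm(n)
    eps <- rho * v + sqrt(1 - rho^2) * rnorm(n)  # cor(eps, v) = rho
    d <- pi0 * z + v
    y <- beta * d + eps
    iv[r]  <- sum(z * y) / sum(z * d)   # just-ID IV (no intercept; all means are zero)
    ols[r] <- sum(d * y) / sum(d * d)   # OLS slope through the origin
  }
  c(iv = median(iv), ols = median(ols))
}

res_strong <- sim_medians(pi0 = 1)
res_weak   <- sim_medians(pi0 = 0.05)
rbind(strong = res_strong, weak = res_weak)
```

With the strong instrument the median IV estimate sits near the true value of 1; with the weak one it moves most of the way toward the OLS estimate.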

34.6.1.2 First-Stage F-Statistic

With a single endogenous regressor, the first-stage F-statistic is the standard test of instrument strength.

First-Stage Regression:

\[ X_2 = Z \pi + X_1 \gamma + u \]

We test:

\[ \begin{aligned} H_0&: \pi = 0 \quad \text{(Instruments have no explanatory power)} \\ H_1&: \pi \ne 0 \quad \text{(Instruments explain variation in $X_2$)} \end{aligned} \]

F-Statistic Formula:

\[ F = \frac{(SSR_r - SSR_{ur}) / q}{SSR_{ur} / (n - k - 1)} \]

- \(SSR_r\): Sum of squared residuals from the restricted model (no instruments).

- \(SSR_{ur}\): Sum of squared residuals from the unrestricted model (with instruments).

- \(q\): Number of excluded instruments (restrictions tested).

- \(n\): Number of observations.

- \(k\): Number of slope coefficients in the unrestricted model (excluded instruments plus control variables, excluding the intercept).
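The F formula can be checked against R's built-in nested-model comparison. The simulated first stage below is hypothetical, with \(q = 2\) excluded instruments and one control. Here \(k\) counts every slope coefficient in the unrestricted model (instruments plus controls, so \(k = 3\)), which makes the denominator degrees of freedom \(n - k - 1\) match the residual degrees of freedom of the unrestricted lm() fit.

```r
set.seed(2)
n  <- 500
x1 <- rnorm(n)                                   # control
z1 <- rnorm(n); z2 <- rnorm(n)                   # excluded instruments (q = 2)
x2 <- 0.2 * z1 + 0.1 * z2 + 0.5 * x1 + rnorm(n)  # endogenous regressor

unrestricted <- lm(x2 ~ z1 + z2 + x1)            # first stage with instruments
restricted   <- lm(x2 ~ x1)                      # instruments excluded

ssr_ur <- sum(resid(unrestricted)^2)
ssr_r  <- sum(resid(restricted)^2)
q <- 2                                           # restrictions tested
k <- 3                                           # slope coefficients in the unrestricted model
F_manual <- ((ssr_r - ssr_ur) / q) / (ssr_ur / (n - k - 1))
F_anova  <- anova(restricted, unrestricted)$F[2] # built-in nested-model F test

c(manual = F_manual, anova = F_anova)
```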

Interpretation:

- A rule of thumb (Staiger and Stock 1997): If \(F < 10\), instruments are weak.

- However, Lee et al. (2022) criticize this threshold, advocating for model-specific diagnostics.

- M. J. Moreira (2003) proposes the Conditional Likelihood Ratio test for inference under weak instruments (D. W. Andrews, Moreira, and Stock 2008).

Use linearHypothesis() from the car package on the first-stage regression to test instrument relevance.
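A minimal sketch, assuming the car package is installed (it supplies linearHypothesis()). The data are simulated with hypothetical coefficients and heteroskedastic first-stage errors. The classical F-statistic matches the nested-model comparison, while white.adjust = "hc1" returns a heteroskedasticity-robust version, which is usually the more appropriate first-stage diagnostic when errors are not homoskedastic.

```r
library(car)   # provides linearHypothesis()

set.seed(3)
n  <- 400
x1 <- rnorm(n)                                  # control
z1 <- rnorm(n); z2 <- rnorm(n)                  # excluded instruments
# Hypothetical first stage with heteroskedastic errors (error sd grows with z1)
x2 <- 0.6 * z1 + 0.5 * z2 + 0.5 * x1 + rnorm(n, sd = exp(0.5 * z1))
first_stage <- lm(x2 ~ z1 + z2 + x1)

# Joint test of H0: both instrument coefficients are zero
lh_classic <- linearHypothesis(first_stage, c("z1 = 0", "z2 = 0"))
lh_robust  <- linearHypothesis(first_stage, c("z1 = 0", "z2 = 0"),
                               white.adjust = "hc1")  # heteroskedasticity-robust
c(classic_F = lh_classic$F[2], robust_F = lh_robust$F[2])
```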

34.6.1.3 Cragg-Donald Test

The Cragg-Donald statistic is essentially the same as the Wald statistic of the joint significance of the instruments in the first stage (Cragg and Donald 1993), and it’s used specifically when you have multiple endogenous regressors. It’s calculated as:

\[ CD = n \times (R_{ur}^2 - R_r^2) \]

where:

- \(R_{ur}^2\) and \(R_r^2\) are the R-squared values from the unrestricted and restricted models, respectively.

- \(n\) is the number of observations.

For one endogenous variable, the Cragg-Donald test results should align closely with those from Stock and Yogo. The Anderson canonical correlation test, a likelihood ratio test, also works under similar conditions, contrasting with Cragg-Donald’s Wald statistic approach. Both are valid with one endogenous variable and at least one instrument.

library(cragg)
library(AER) # for the WeakInstrument dataset

data("WeakInstrument")

cragg_donald(
  # control variables
  X = ~ 1,
  # endogenous variables
  D = ~ x,
  # instrument variables
  Z = ~ z,
  data = WeakInstrument
)
#> Cragg-Donald test for weak instruments:
#>
#> Data: WeakInstrument
#> Controls: ~1
#> Treatments: ~x
#> Instruments: ~z
#>
#> Cragg-Donald Statistic: 4.566136
#> Df: 198

A large CD statistic indicates strong instruments, which is not the case here. To judge the statistic against a formal critical value, however, we need the Stock-Yogo critical values.

34.6.1.4 Stock-Yogo

The Stock-Yogo test does not directly compute a statistic like the F-test or Cragg-Donald, but rather uses pre-computed critical values to assess the strength of instruments. It often uses the eigenvalues derived from the concentration matrix: