39.1 Bad Controls

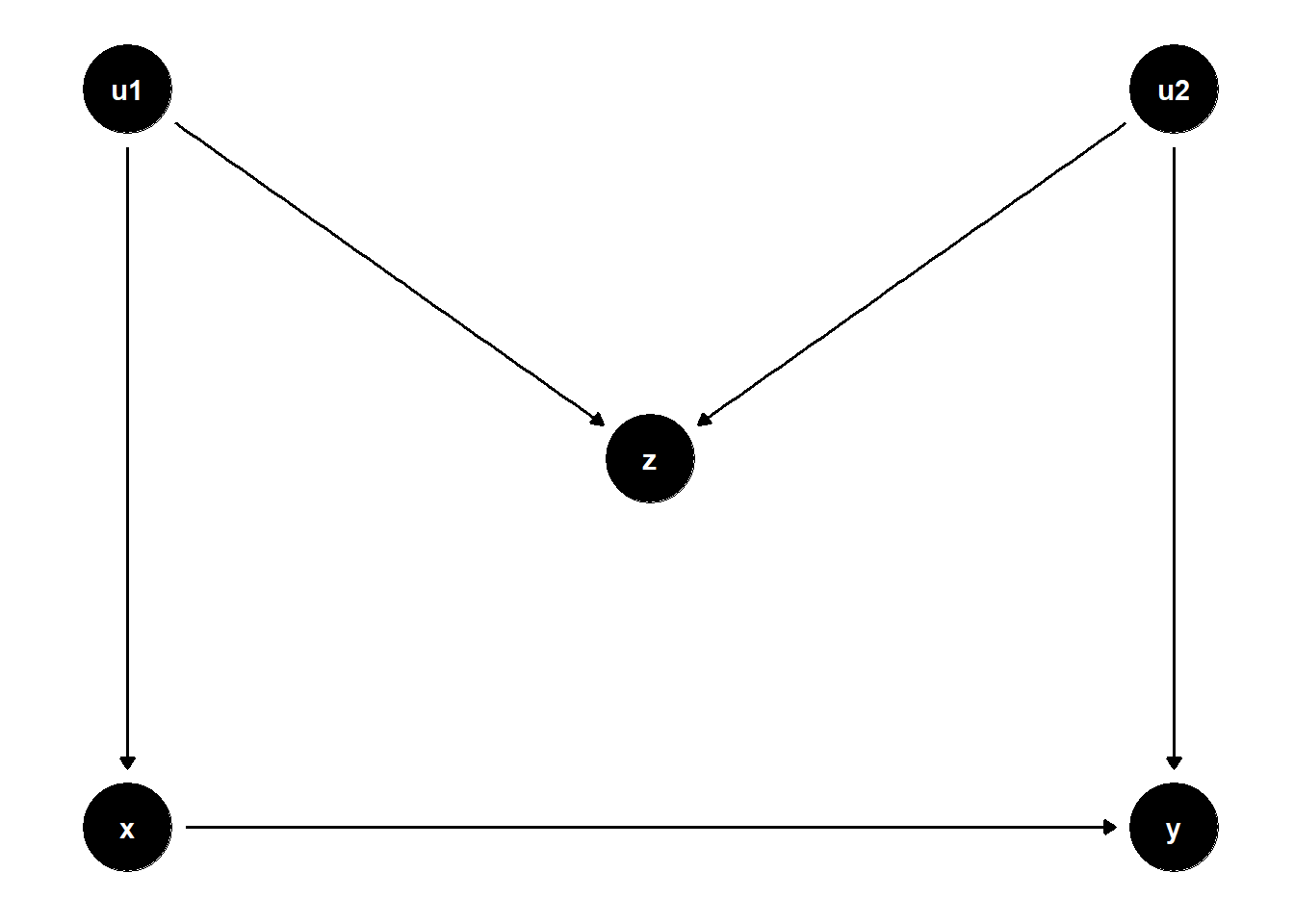

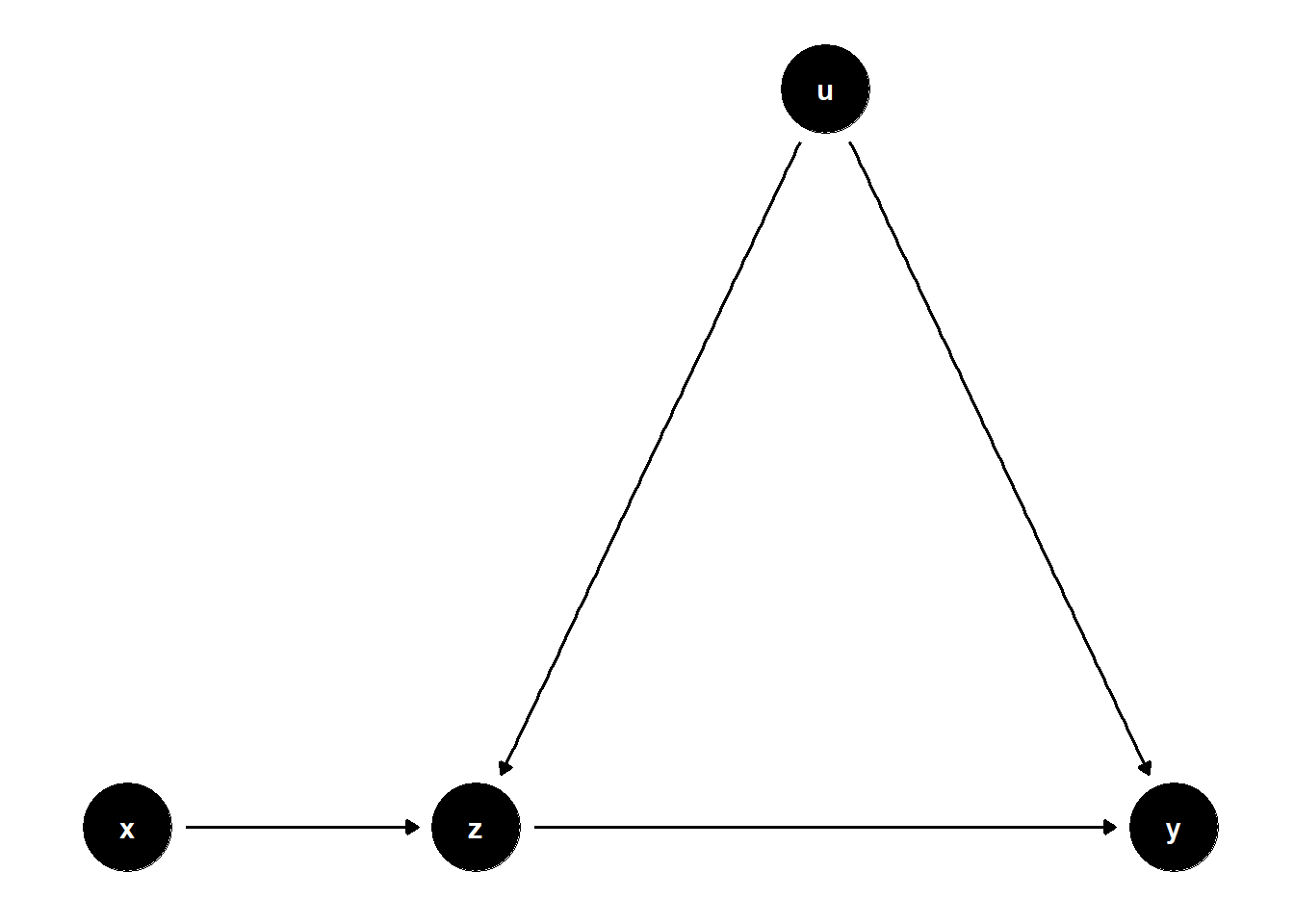

39.1.1 M-bias

Traditional textbooks (G. W. Imbens and Rubin 2015; J. D. Angrist and Pischke 2009) treat \(Z\) as a good control because it is a pre-treatment variable that correlates with both the treatment and the outcome.

This advice is most prevalent in Matching Methods, where we are told to include all “pre-treatment” variables.

However, \(Z\) is a bad control here because conditioning on it opens the back-door path \(X \leftarrow U_1 \to Z \leftarrow U_2 \to Y\).

# cleans workspace

rm(list = ls())

# load the packages used for the DAGs below

library(dagitty)

library(ggdag)

# DAG

## specify edges

model <- dagitty("dag{x->y; u1->x; u1->z; u2->z; u2->y}")

# set u as latent

latents(model) <- c("u1", "u2")

## coordinates for plotting

coordinates(model) <- list(x = c(

x = 1,

u1 = 1,

z = 2,

u2 = 3,

y = 3

),

y = c(

x = 1,

u1 = 2,

z = 1.5,

u2 = 2,

y = 1

))

## ggplot

ggdag(model) + theme_dag()

Even though \(Z\) can correlate strongly with both \(X\) and \(Y\), it is not a confounder.

Controlling for \(Z\) can bias the \(X \to Y\) estimate because \(Z\) is a collider: conditioning on it opens the path \(X \leftarrow U_1 \rightarrow Z \leftarrow U_2 \rightarrow Y\).

n <- 1e4

u1 <- rnorm(n)

u2 <- rnorm(n)

z <- u1 + u2 + rnorm(n)

x <- u1 + rnorm(n)

causal_coef <- 2

y <- causal_coef * x - 4*u2 + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | -0.07 | -0.07 * |
| | (0.04) | (0.03) |
| x | 1.99 *** | 2.78 *** |
| | (0.03) | (0.03) |
| z | | -1.59 *** |
| | | (0.02) |
| N | 10000 | 10000 |
| R2 | 0.31 | 0.57 |

*** p < 0.001; ** p < 0.01; * p < 0.05.
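The collider mechanism behind this result can be checked directly. The sketch below uses base R only and the same data-generating process for `u1`, `u2`, and `z` as the simulation above; the seed and the larger sample size are arbitrary choices for illustration. It compares the marginal correlation of the two latent variables with their partial correlation given `z`. Under these unit-variance assumptions, the partial correlation should be close to -0.5, even though `u1` and `u2` are independent by construction.

```r
# Collider check for the M-bias DAG: u1 -> z <- u2 (base R only)
set.seed(123)  # arbitrary seed, for reproducibility
n  <- 1e5
u1 <- rnorm(n)
u2 <- rnorm(n)
z  <- u1 + u2 + rnorm(n)

# Marginal correlation: u1 and u2 are independent by construction
cor_marginal <- cor(u1, u2)

# Partial correlation given z: correlate the residuals left after
# regressing each latent variable on z
r1 <- resid(lm(u1 ~ z))
r2 <- resid(lm(u2 ~ z))
cor_given_z <- cor(r1, r2)

round(c(marginal = cor_marginal, given_z = cor_given_z), 2)
```

Because `u1` drives `x` and `u2` drives `y`, this induced dependence is what carries the spurious association into the coefficient on `x` once `z` enters the regression.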

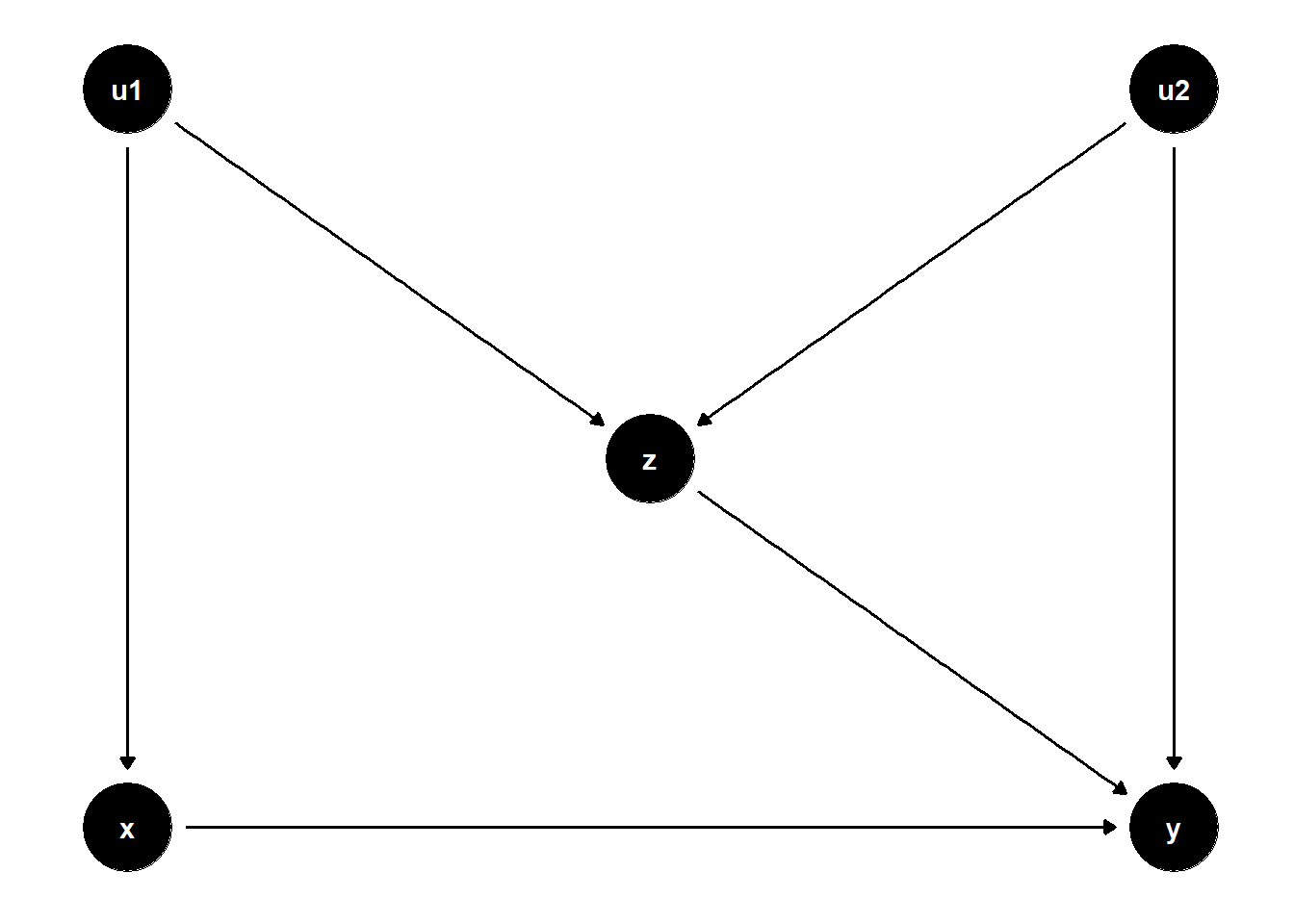

A worse variation adds a direct effect of \(Z\) on \(Y\):

# cleans workspace

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->y; u1->x; u1->z; u2->z; u2->y; z->y}")

# set u as latent

latents(model) <- c("u1", "u2")

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, u1=1, z=2, u2=3, y=3),

y = c(x=1, u1=2, z=1.5, u2=2, y=1))

## ggplot

ggdag(model) + theme_dag()

You can’t do much in this case.

If you don’t control for \(Z\), the back-door path \(X \leftarrow U_1 \to Z \to Y\) stays open, and the unadjusted estimate is biased.

If you control for \(Z\), you open the back-door path \(X \leftarrow U_1 \to Z \leftarrow U_2 \to Y\), and the adjusted estimate is also biased.

Hence, we cannot identify the causal effect in this case.
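A small simulation can illustrate that neither specification recovers the truth. This is only a sketch: the coefficients on `z` and `u2` in the outcome equation (both set to 1) are assumptions chosen for illustration and do not come from the text, while the rest mirrors the M-bias simulation above.

```r
# M-bias with an added direct effect z -> y (base R only)
set.seed(123)  # arbitrary seed, for reproducibility
n  <- 1e5
u1 <- rnorm(n)
u2 <- rnorm(n)
z  <- u1 + u2 + rnorm(n)
x  <- u1 + rnorm(n)
causal_coef <- 2

# Assumed coefficients: z -> y is 1 and u2 -> y is 1 (illustrative only)
y <- causal_coef * x + z + u2 + rnorm(n)

# Coefficient on x without and with adjustment for z
b_unadjusted <- coef(lm(y ~ x))[["x"]]
b_adjusted   <- coef(lm(y ~ x + z))[["x"]]

round(c(truth = causal_coef,
        unadjusted = b_unadjusted,
        adjusted = b_adjusted), 2)
```

With these particular coefficients, the unadjusted estimate lands above the true value of 2 and the adjusted estimate lands below it, so both models miss the causal coefficient.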

We can do sensitivity analyses (Cinelli et al. 2019; Cinelli and Hazlett 2020) to examine:

- the plausible bounds on the strength of the direct effect of \(Z \to Y\)

- the strength of the effects of the latent variables

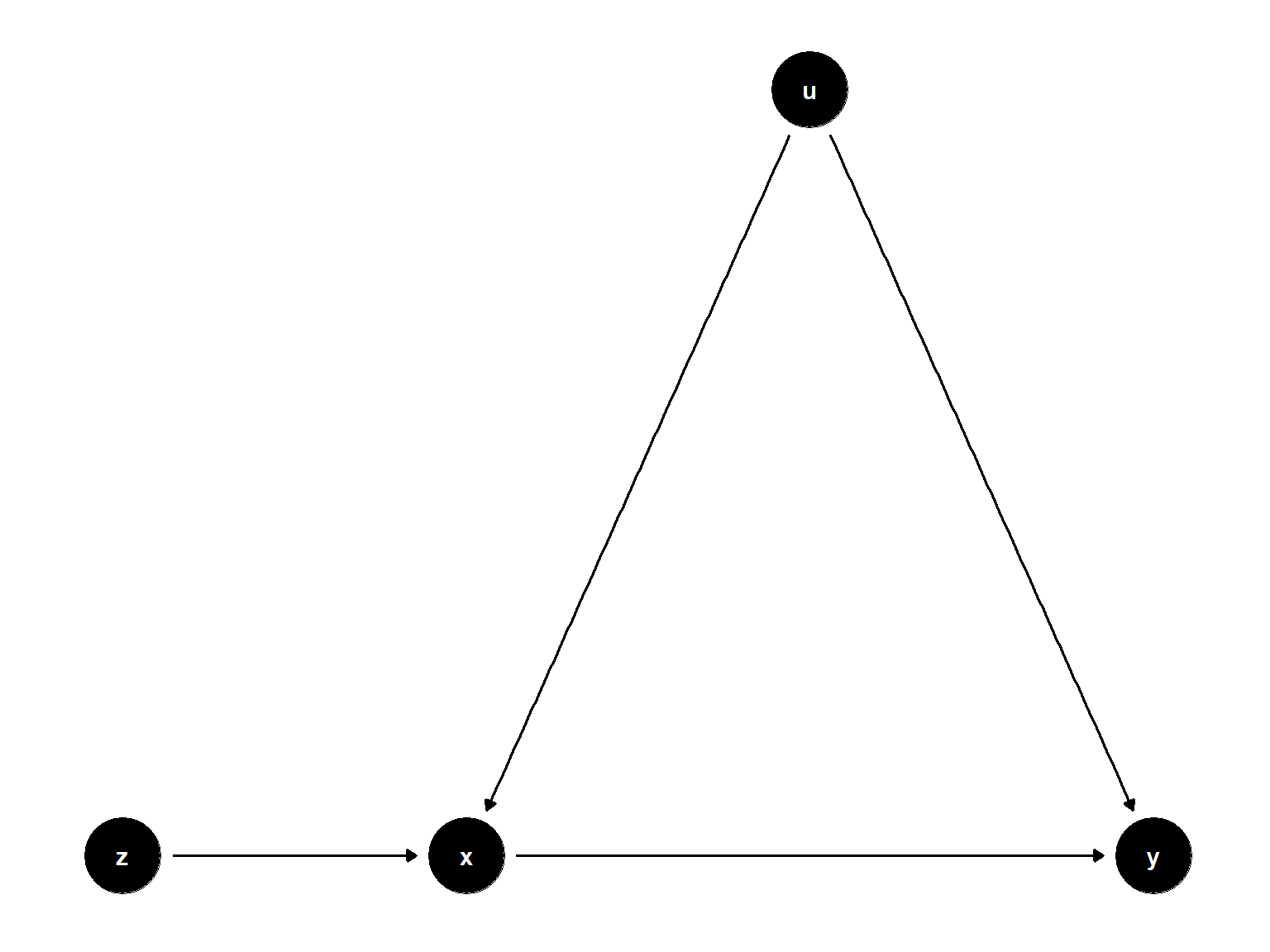

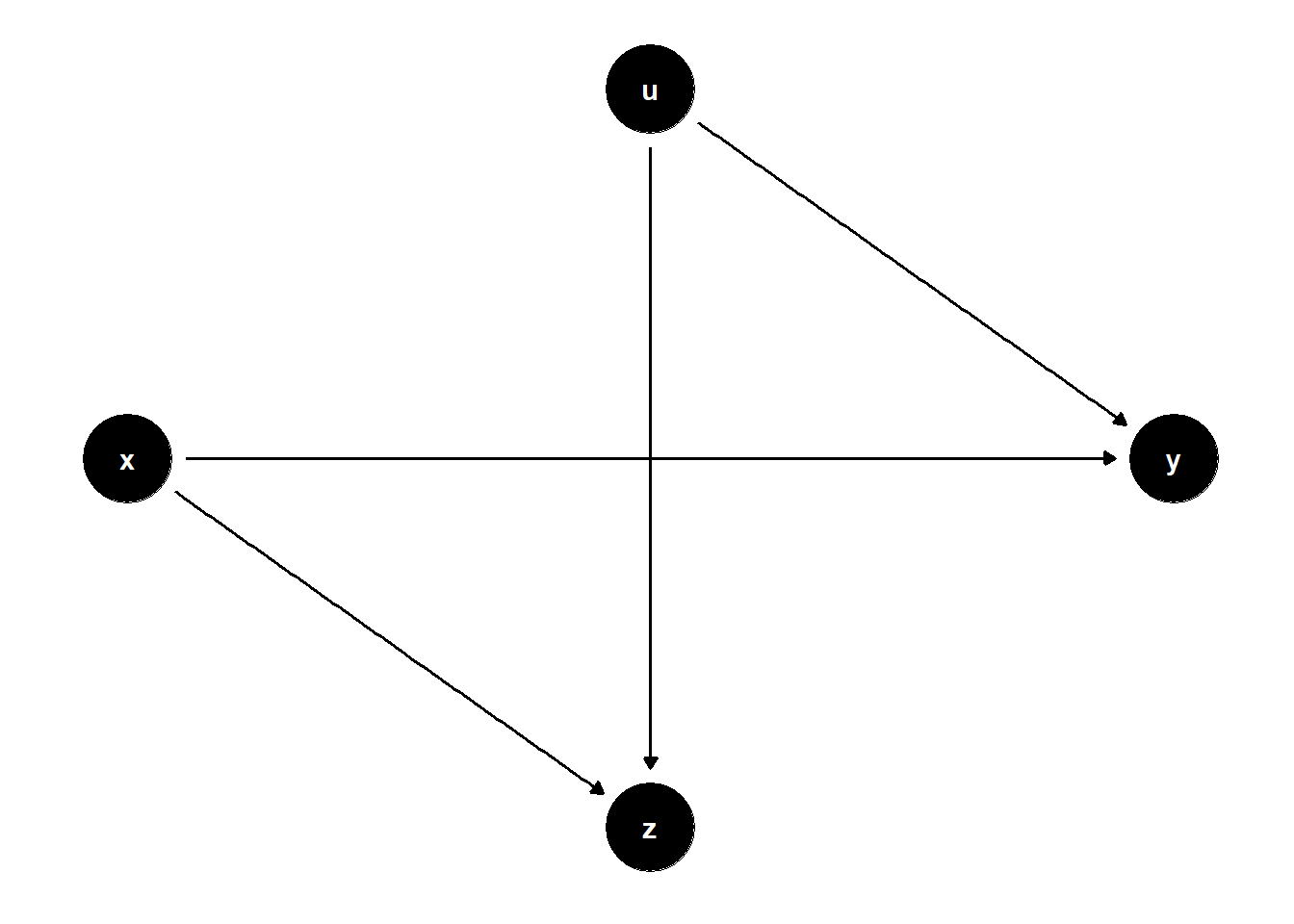

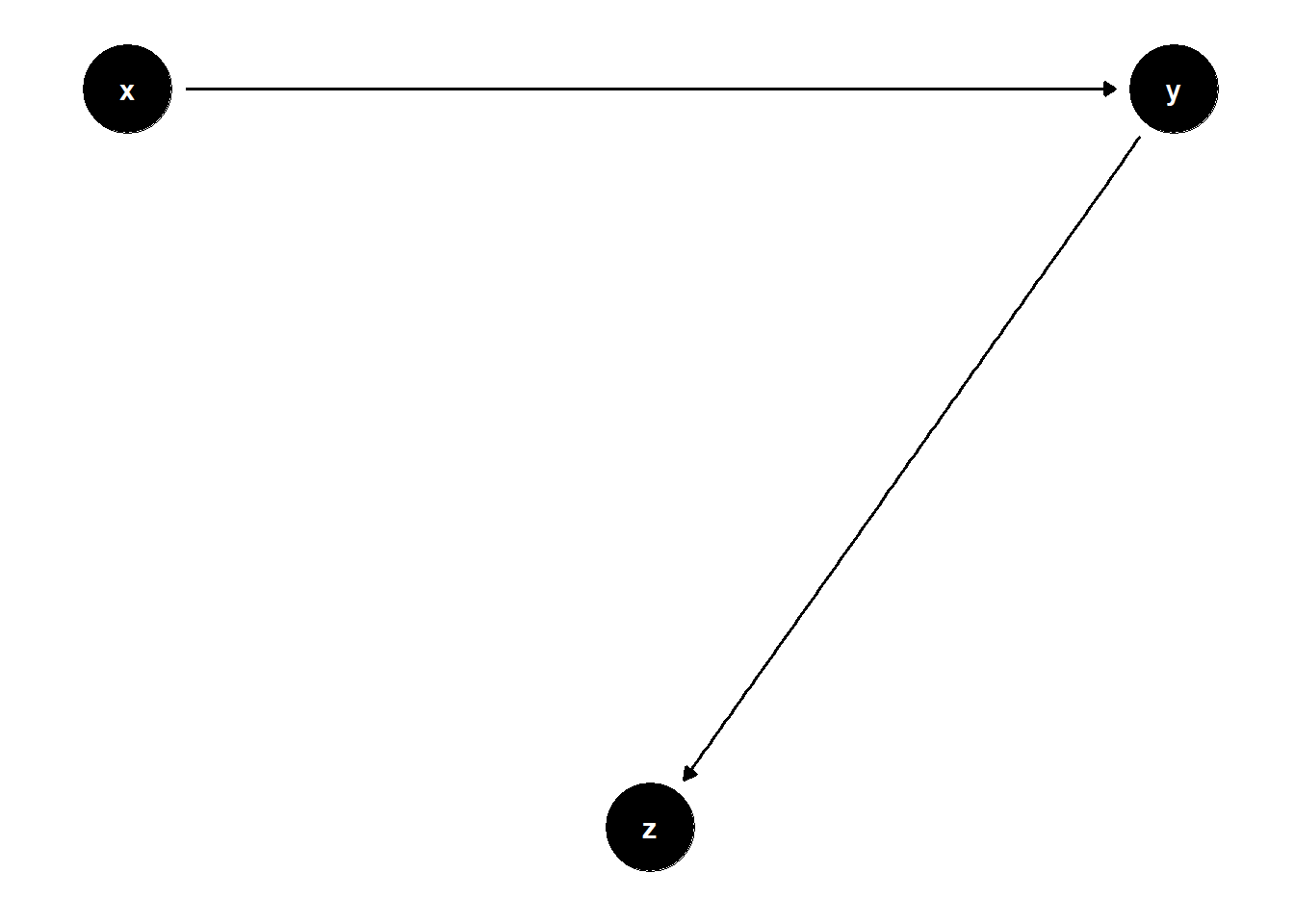

39.1.2 Bias Amplification

# cleans workspace

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->y; u->x; u->y; z->x}")

# set u as latent

latents(model) <- c("u")

## coordinates for plotting

coordinates(model) <- list(

x = c(z=1, x=2, u=3, y=4),

y = c(z=1, x=1, u=2, y=1))

## ggplot

ggdag(model) + theme_dag()

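Controlling for \(Z\) amplifies the omitted variable bias: \(Z\) affects \(Y\) only through \(X\), so adjusting for it removes variation in \(X\) that is unrelated to \(U\) and leaves the confounded variation. In the simulation below, the true coefficient on \(X\) is 1; the unadjusted estimate is 1.34 and the estimate adjusted for \(Z\) is 1.99.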

n <- 1e4

z <- rnorm(n)

u <- rnorm(n)

x <- 2*z + u + rnorm(n)

y <- x + 2*u + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | -0.02 | -0.01 |
| | (0.02) | (0.02) |
| x | 1.34 *** | 1.99 *** |
| | (0.01) | (0.01) |
| z | | -1.98 *** |
| | | (0.03) |
| N | 10000 | 10000 |
| R2 | 0.72 | 0.80 |

*** p < 0.001; ** p < 0.01; * p < 0.05.
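The size of the amplification follows from the usual omitted-variable-bias formula. As a minimal sketch (base R only, same data-generating process as above, with an arbitrary seed and a larger sample), the bias is the effect of `u` on `y` times `cov(x, u) / var(x)`. Adjusting for `z` leaves the numerator essentially unchanged but shrinks the denominator, because only the part of `x` not explained by `z` remains.

```r
# Omitted-variable bias before and after adjusting for z (base R only)
set.seed(123)  # arbitrary seed, for reproducibility
n <- 1e5
z <- rnorm(n)
u <- rnorm(n)
x <- 2 * z + u + rnorm(n)
y <- x + 2 * u + rnorm(n)   # true effect of x on y is 1

# Bias = (effect of u on y) * cov(x, u) / var(x)
bias_unadjusted <- 2 * cov(x, u) / var(x)

# After adjusting for z, only the residual variation in x is used
x_res <- resid(lm(x ~ z))
bias_adjusted <- 2 * cov(x_res, u) / var(x_res)

round(c(unadjusted = bias_unadjusted, adjusted = bias_adjusted), 2)
```

Under these assumptions the bias is roughly 1/3 without `z` and roughly 1 with `z`, which lines up with the coefficients 1.34 and 1.99 in the table.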

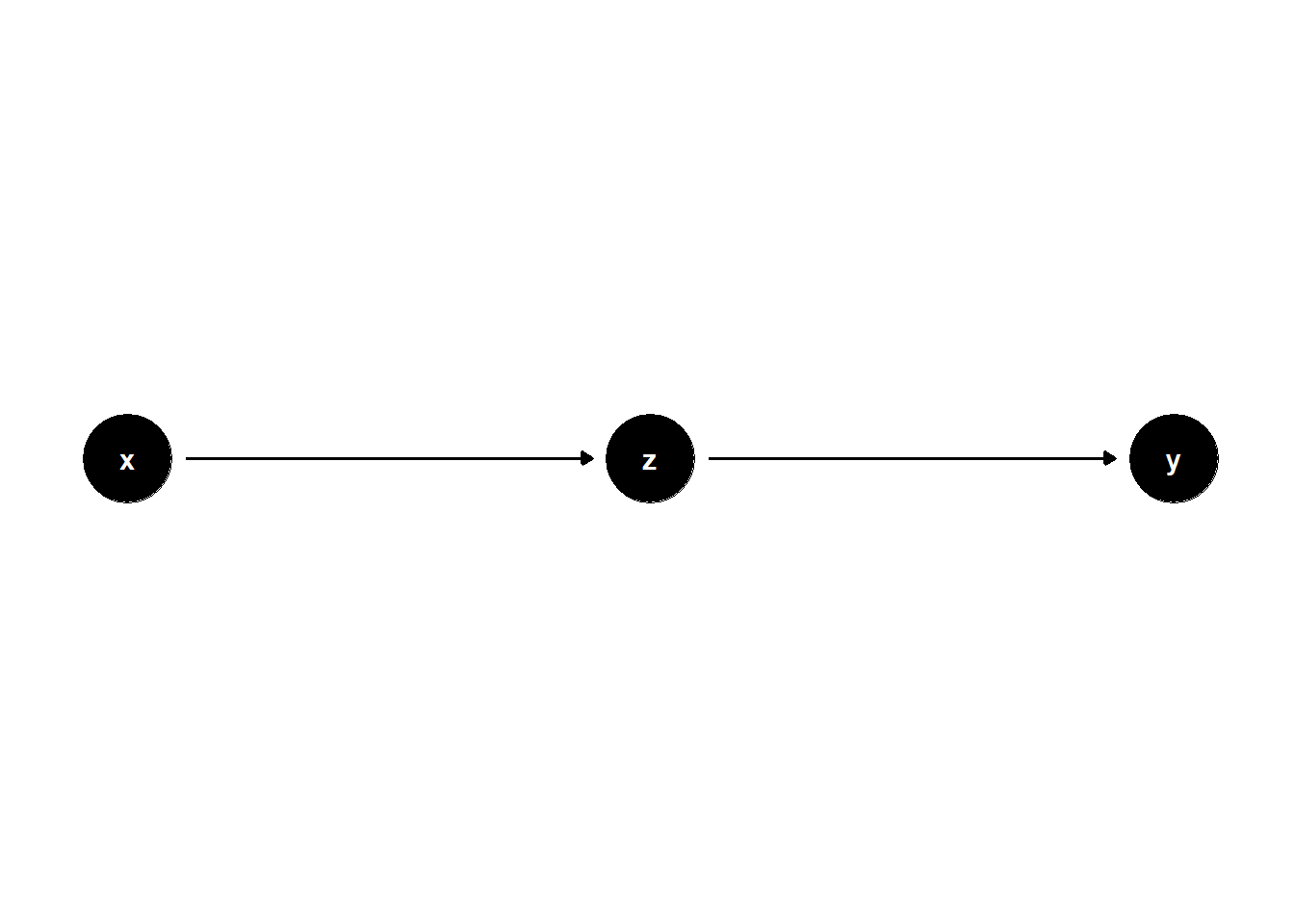

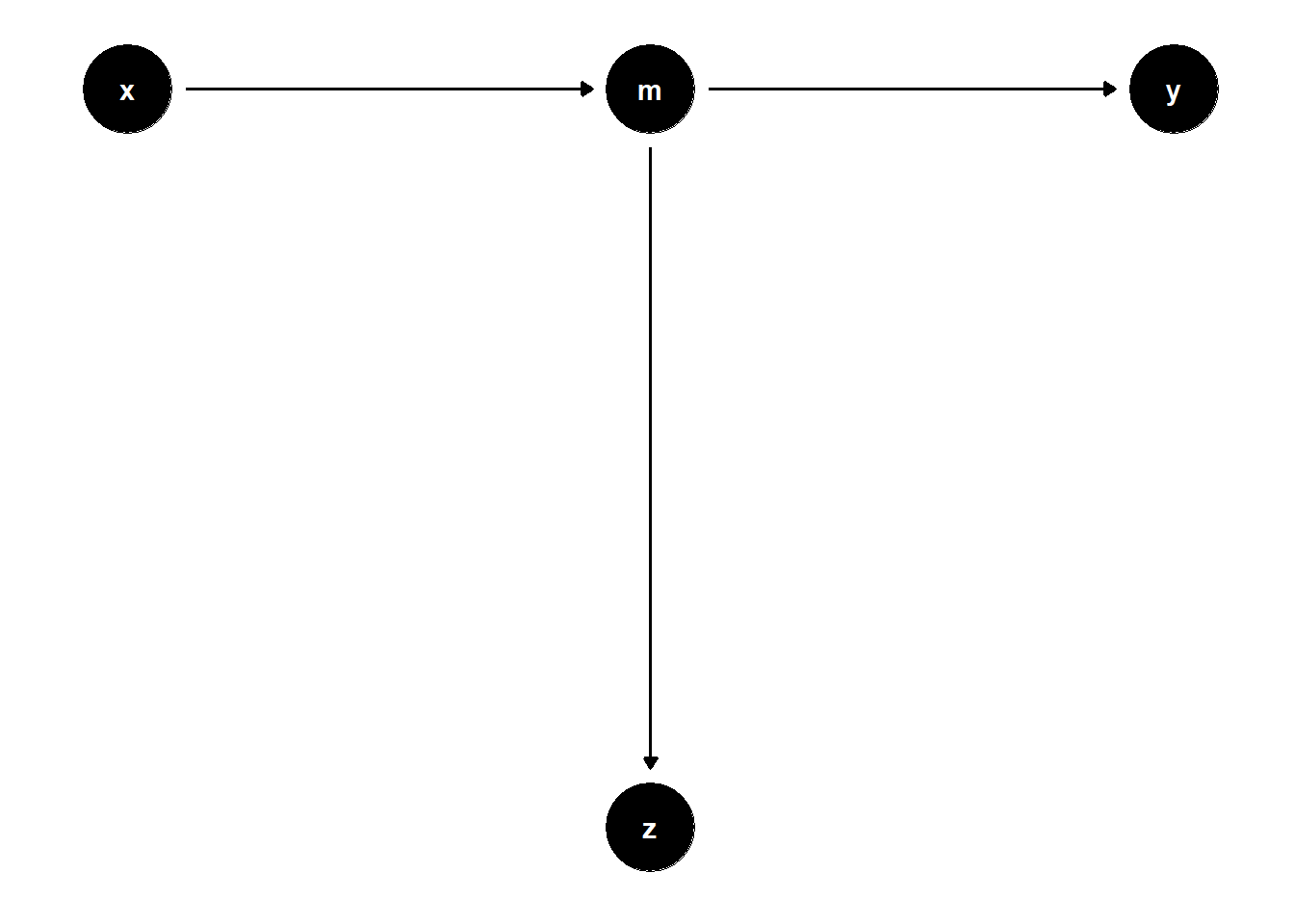

39.1.3 Overcontrol bias

Sometimes, this is similar to controlling for variables that are proxies for the dependent variable.

# cleans workspace

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->z; z->y}")

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, z=2, y=3),

y = c(x=1, z=1, y=1))

## ggplot

ggdag(model) + theme_dag()

If \(Z\) is a mediator between \(X\) and \(Y\), controlling for \(Z\) is bad because it blocks the causal path \(X \to Z \to Y\) through which \(X\) affects \(Y\).

n <- 1e4

x <- rnorm(n)

z <- x + rnorm(n)

y <- z + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | 0.01 | 0.00 |
| | (0.01) | (0.01) |
| x | 1.01 *** | 0.00 |
| | (0.01) | (0.01) |
| z | | 1.01 *** |
| | | (0.01) |
| N | 10000 | 10000 |
| R2 | 0.33 | 0.67 |

*** p < 0.001; ** p < 0.01; * p < 0.05.

Now \(Z\) is significant, which is technically correct, but we are interested in the causal effect of \(X\) on \(Y\), and the coefficient on \(X\) drops to 0 once \(Z\) is included.
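To see where the effect of \(X\) went, it can help to decompose the total effect. The sketch below (base R only, same data-generating process as the mediator simulation above, with an arbitrary seed) relies on a standard OLS identity: the coefficient on `x` in the short regression equals the coefficient on `x` in the long regression plus the `x -> z` slope times the coefficient on `z`.

```r
# Total effect = direct part + (x -> z) * (z -> y) in the mediator DAG
set.seed(123)  # arbitrary seed, for reproducibility
n <- 1e4
x <- rnorm(n)
z <- x + rnorm(n)
y <- z + rnorm(n)

total <- coef(lm(y ~ x))[["x"]]   # total effect of x on y
long  <- coef(lm(y ~ x + z))      # model that overcontrols for z
a     <- coef(lm(z ~ x))[["x"]]   # slope of x -> z

# Short coefficient = long coefficient on x + a * coefficient on z
reconstructed <- long[["x"]] + a * long[["z"]]

c(total = total, reconstructed = reconstructed)
```

The two numbers agree up to floating-point error, which shows that the overcontrolled model has not lost the effect of `x`; it has reassigned it to the mediator `z`.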

Another setting for overcontrol bias is when \(Z\) is a descendant of the mediator \(M\):

# cleans workspace

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->m; m->z; m->y}")

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, m=2, z=2, y=3),

y = c(x=2, m=2, z=1, y=2))

## ggplot

ggdag(model) + theme_dag()

n <- 1e4

x <- rnorm(n)

m <- x + rnorm(n)

z <- m + rnorm(n)

y <- m + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | 0.01 | 0.00 |
| | (0.01) | (0.01) |
| x | 0.99 *** | 0.48 *** |
| | (0.01) | (0.01) |
| z | | 0.51 *** |
| | | (0.01) |
| N | 10000 | 10000 |
| R2 | 0.33 | 0.50 |

*** p < 0.001; ** p < 0.01; * p < 0.05.

Another setting for this bias is when an unobserved \(U\) affects both the mediator \(Z\) and \(Y\):

# cleans workspace

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->z; z->y; u->z; u->y}")

# set u as latent

latents(model) <- "u"

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, z=2, u=3, y=4),

y = c(x=1, z=1, u=2, y=1))

## ggplot

ggdag(model) + theme_dag()

set.seed(1)

n <- 1e4

x <- rnorm(n)

u <- rnorm(n)

z <- x + u + rnorm(n)

y <- z + u + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | -0.01 | -0.01 |
| | (0.02) | (0.01) |
| x | 1.01 *** | -0.47 *** |
| | (0.02) | (0.01) |
| z | | 1.48 *** |
| | | (0.01) |
| N | 10000 | 10000 |
| R2 | 0.15 | 0.78 |

*** p < 0.001; ** p < 0.01; * p < 0.05.

The total effect of \(X\) on \(Y\) is not biased in the unadjusted model (i.e., \(1.01 \approx 1.48 - 0.47\)).

Controlling for \(Z\) fails to identify the direct effect of \(X\) on \(Y\) because it opens the biasing path \(X \rightarrow Z \leftarrow U \rightarrow Y\).
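One way to confirm that \(U\) is the source of this bias is a hypothetical check in which \(U\) is treated as observed. This is purely illustrative, since \(U\) is latent in the DAG and could not be included in practice. The sketch uses base R only and the same data-generating process as above, with a larger sample.

```r
# Hypothetical check: what if the latent u could be adjusted for?
set.seed(1)
n <- 1e5
x <- rnorm(n)
u <- rnorm(n)
z <- x + u + rnorm(n)
y <- z + u + rnorm(n)

# Hypothetical: u is treated as observed here, which it is not in the DAG
fit_with_u <- lm(y ~ x + z + u)
b <- coef(fit_with_u)

round(b, 2)
```

With `u` included, the path through the collider is blocked again, and the coefficient on `x` is close to 0, which is the direct effect of `x` on `y` in this DAG.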

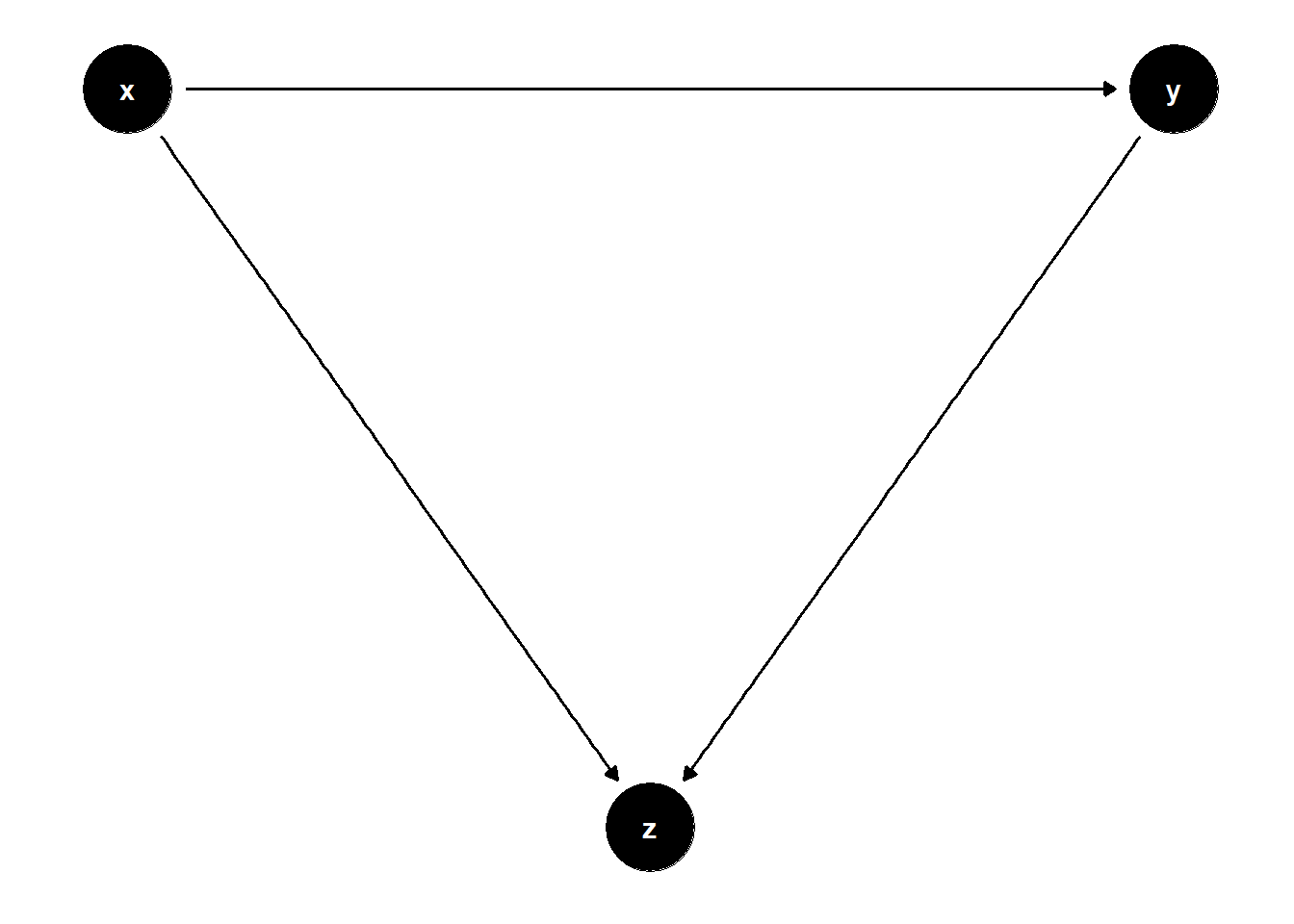

39.1.4 Selection Bias

Also known as “collider stratification bias”.

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->y; x->z; u->z;u->y}")

# set u as latent

latents(model) <- "u"

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, z=2, u=2, y=3),

y = c(x=3, z=2, u=4, y=3))

## ggplot

ggdag(model) + theme_dag()

Adjusting for \(Z\) opens the colliding path \(X \to Z \leftarrow U \to Y\).

n <- 1e4

x <- rnorm(n)

u <- rnorm(n)

z <- x + u + rnorm(n)

y <- x + 2*u + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | -0.01 | 0.01 |
| | (0.02) | (0.02) |
| x | 0.97 *** | -0.03 |
| | (0.02) | (0.02) |
| z | | 1.00 *** |
| | | (0.01) |
| N | 10000 | 10000 |
| R2 | 0.16 | 0.49 |

*** p < 0.001; ** p < 0.01; * p < 0.05.
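The same bias appears when \(Z\) determines who enters the sample rather than appearing as a regressor, which is where the name "selection bias" comes from. The sketch below uses base R only and the same data-generating process as above. The selection rule `z > 1` is a hypothetical threshold chosen for illustration.

```r
# Selecting the sample on the collider z instead of adjusting for it
set.seed(123)  # arbitrary seed, for reproducibility
n <- 1e5
x <- rnorm(n)
u <- rnorm(n)
z <- x + u + rnorm(n)
y <- x + 2 * u + rnorm(n)   # true effect of x on y is 1

# Hypothetical selection rule: only units with z > 1 are observed
selected <- z > 1

b_full     <- coef(lm(y ~ x))[["x"]]
b_selected <- coef(lm(y ~ x, subset = selected))[["x"]]

round(c(full = b_full, selected = b_selected), 2)
```

In the full sample the estimate is close to the true value of 1, while in the selected subsample it falls well below 1, because restricting to high values of `z` induces a negative association between `x` and `u`.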

Another setting is when \(Z\) is a common effect of \(X\) and \(Y\):

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->y; x->z; y->z}")

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, z=2, y=3),

y = c(x=2, z=1, y=2))

## ggplot

ggdag(model) + theme_dag()

Controlling for \(Z\) opens the colliding path \(X \to Z \leftarrow Y\).

n <- 1e4

x <- rnorm(n)

y <- x + rnorm(n)

z <- x + y + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | 0.00 | 0.00 |
| | (0.01) | (0.01) |
| x | 1.03 *** | -0.00 |
| | (0.01) | (0.01) |
| z | | 0.51 *** |
| | | (0.00) |
| N | 10000 | 10000 |
| R2 | 0.51 | 0.76 |

*** p < 0.001; ** p < 0.01; * p < 0.05.

39.1.5 Case-control Bias

rm(list = ls())

# DAG

## specify edges

model <- dagitty("dag{x->y; y->z}")

## coordinates for plotting

coordinates(model) <- list(

x = c(x=1, z=2, y=3),

y = c(x=2, z=1, y=2))

## ggplot

ggdag(model) + theme_dag()

Controlling for \(Z\) opens a virtual collider: \(Z\) is a descendant of \(Y\), which acts as a collider between \(X\) and the unobserved error term of \(Y\).

However, if \(X\) truly has no causal effect on \(Y\), then controlling for \(Z\) is valid for testing whether the effect of \(X\) on \(Y\) is 0, because \(X\) is d-separated from \(Y\) regardless of whether we adjust for \(Z\).

n <- 1e4

x <- rnorm(n)

y <- x + rnorm(n)

z <- y + rnorm(n)

jtools::export_summs(lm(y ~ x), lm(y ~ x + z))

| | Model 1 | Model 2 |
|---|---|---|
| (Intercept) | -0.00 | -0.00 |
| | (0.01) | (0.01) |
| x | 1.00 *** | 0.50 *** |
| | (0.01) | (0.01) |
| z | | 0.50 *** |
| | | (0.00) |
| N | 10000 | 10000 |
| R2 | 0.50 | 0.75 |

*** p < 0.001; ** p < 0.01; * p < 0.05.
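The null case mentioned above can be checked with a variant of the same simulation. In this sketch (base R only, arbitrary seed), `y` is generated independently of `x`, which is an assumption made here to represent a true effect of zero; everything else follows the case-control DAG.

```r
# Case-control DAG under the null: x has no effect on y
set.seed(123)  # arbitrary seed, for reproducibility
n <- 1e5
x <- rnorm(n)
y <- rnorm(n)          # assumption: x has no causal effect on y
z <- y + rnorm(n)

# Coefficient on x without and with adjustment for z
b_unadjusted <- coef(lm(y ~ x))[["x"]]
b_adjusted   <- coef(lm(y ~ x + z))[["x"]]

round(c(unadjusted = b_unadjusted, adjusted = b_adjusted), 2)
```

Both coefficients are close to 0, so adjusting for `z` does not create a spurious effect under the null, even though it attenuates a nonzero effect as shown in the table above.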