31 Day 31 (July 19)

31.1 Announcements

- Work day on tuesday

- Work days next week?

- Up next

- Model selection

- Random effects/mixed model

- Generalized linear models

- Machine learning (e.g., regression trees, random forest)

- Generalized additive models

31.2 Model selection

Recall that all inference is conditional on the statistical model that we have chosen to use.

- Components of a statistical model

- Are the assumptions met?

- Are there better models we could have used?

- What should you do if you have two or more competing models?

Information criterion

- Motivation: Adjusted \(R^2\)

- Recall \[\hat{R}^{2}=1-\frac{(\mathbf{y}-\mathbf{X}\hat{\boldsymbol{\beta}})^{\prime}(\mathbf{y}-\mathbf{X}\hat{\boldsymbol{\beta})}}{(\mathbf{y}-\mathbf{1}\hat{\beta}_{0})^{\prime}(\mathbf{y}-\mathbf{1}\hat{\beta}_{0})}\]

- As we add more predictors to \(\mathbf{X}\), \(R^{2}\) monotonically increases when calculated using in-sample data.

- Calculating \(R^{2}\) using in-sample data results in a biased estimator of the \(R^{2}\) calculated using “out-of-sample” (i.e., \(\text{E}(\hat{R}_{\text{in-sample}}^{2})\neq R_{\text{out-of-sample}}^{2}\))

- Adjusted \(R^{2}\) is a biased corrected version of \(R^{2}\). \[\hat{R}^{2}_{\text{adjusted}}=1-\bigg{(}\frac{n-1}{n-p}\bigg{)}\frac{(\mathbf{y}-\mathbf{X}\hat{\boldsymbol{\beta}})^{\prime}(\mathbf{y}-\mathbf{X}\hat{\boldsymbol{\beta})}}{(\mathbf{y}-\mathbf{1}\hat{\beta}_{0})^{\prime}(\mathbf{y}-\mathbf{1}\hat{\beta}_{0})}\]

- Many different estimators exist for adjusted \(R^{2}\)

- Different assumptions lead to different estimators

- Gelman, Hwang, and Vehtari (2014) on estimating out-of-sample predictive accuracy using in-sample data

- “We know of no approximation that works in general….Each of these methods has flaws, which tells us that any predictive accuracy measure that we compute will be only approximate”

- “One difficulty is that all the proposed measures are attempting to perform what is, in general, an impossible task: to obtain an unbiased (or approximately unbiased) and accurate measure of out-of-sample prediction error that will be valid over a general class of models…”

- Motivation: Adjusted \(R^2\)

Akaike’s Information Criterion

- The log-likelihood as a scoring rule \[\mathcal{l}(\boldsymbol{\theta}|\mathbf{y})\]

- The log-likelihood for the linear model \[\mathcal{l}(\boldsymbol{\beta},\sigma^2|\mathbf{y})=-\frac{n}{2}\text{ log}(2\pi\sigma^2) - \frac{1}{2\sigma^2}\sum_{i=1}^{n}(y_{i} - \mathbf{x}_{i}^{\prime}\boldsymbol{\beta})^2.\]

- Like \(R^2\), \(\mathcal{l}(\boldsymbol{\beta},\sigma^2|\mathbf{y})\) can be used to measure predictive accuracy on in-sample or out of sample data.

- Unlike \(R^2\), the log-likelihood is a general scoring rule. For example, let \(\mathbf{y} \sim \text{Bernoulli}(p)\) then \[\sum_{i=1}^{n}y_{i}\text{log}(p)+(1-y_{i})\text{log}({1-p}).\]

- The log-likelihood is a relative score and can be difficult to interpret.

- AIC uses the in-sample log-likelihood, but achieves an (asymptotically) unbiased estimate the log-likelihood on the out-of-sample data by adding a bias correction. The statistic is then multiplied by negative two \[AIC=-2\mathcal{l}(\boldsymbol{\theta}|\mathbf{y}) + 2q\]

- What is a meaninful difference in AIC?

- AIC is a derived quanitity. Just like \(R^2\) we can and should get measures of uncertainty (e.g., 95% CI).

Example

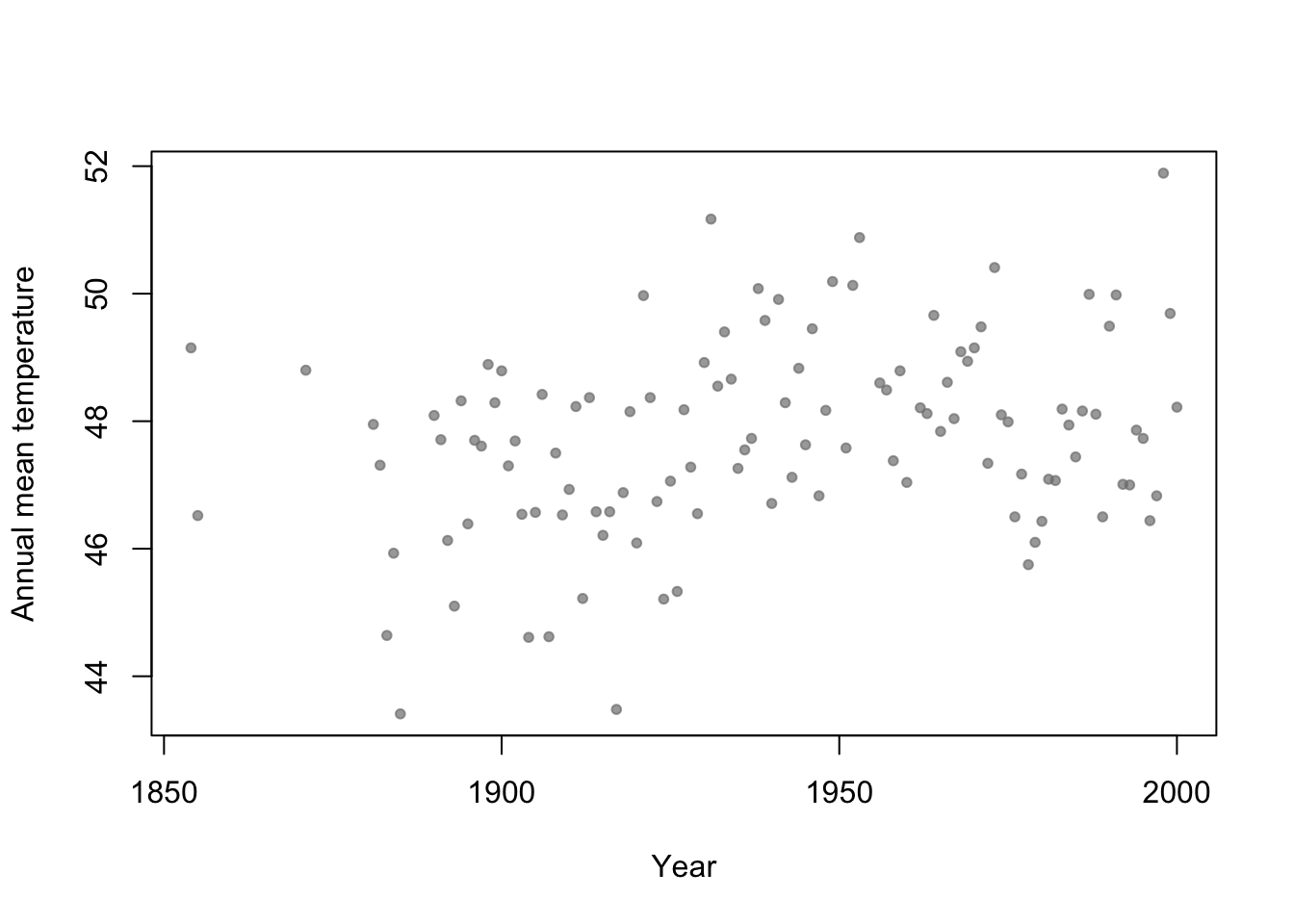

- Motivation: Annual mean temperature in Ann Arbor Michigan

library(faraway) plot(aatemp$year,aatemp$temp,xlab="Year",ylab="Annual mean temperature",pch=20,col=rgb(0.5,0.5,0.5,0.7))

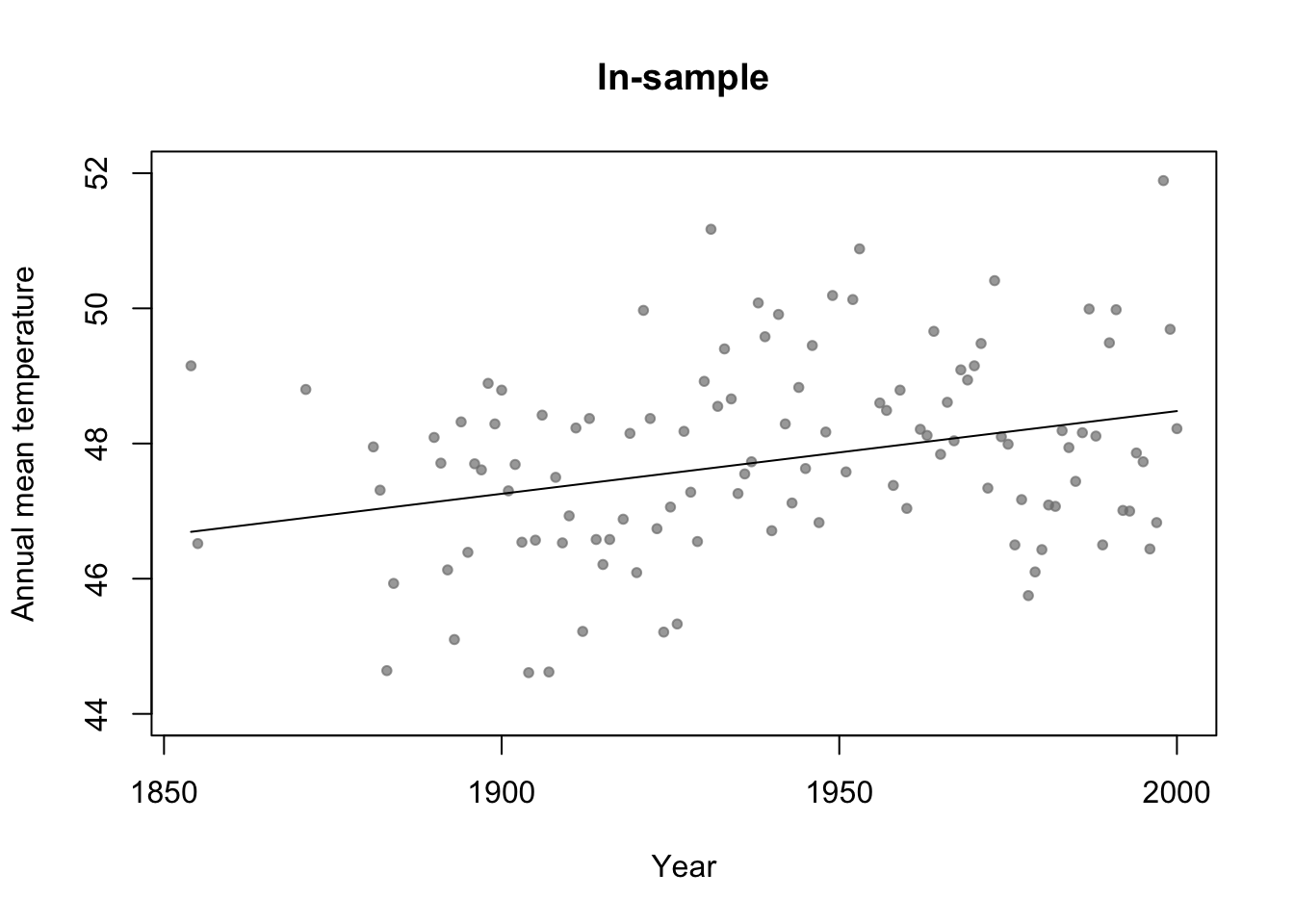

df.in_sample <- aatemp # Use all years for in-sample data # Intercept only model m1 <- lm(temp ~ 1,data=df.in_sample) # Simple regression model m2 <- lm(temp ~ year,data=df.in_sample) par(mfrow=c(1,1)) # Plot predictions (in-sample) plot(df.in_sample$year,df.in_sample$temp,main="In-sample",xlab="Year",ylab="Annual mean temperature",ylim=c(44,52),pch=20,col=rgb(0.5,0.5,0.5,0.7)) lines(df.in_sample$year,predict(m2))

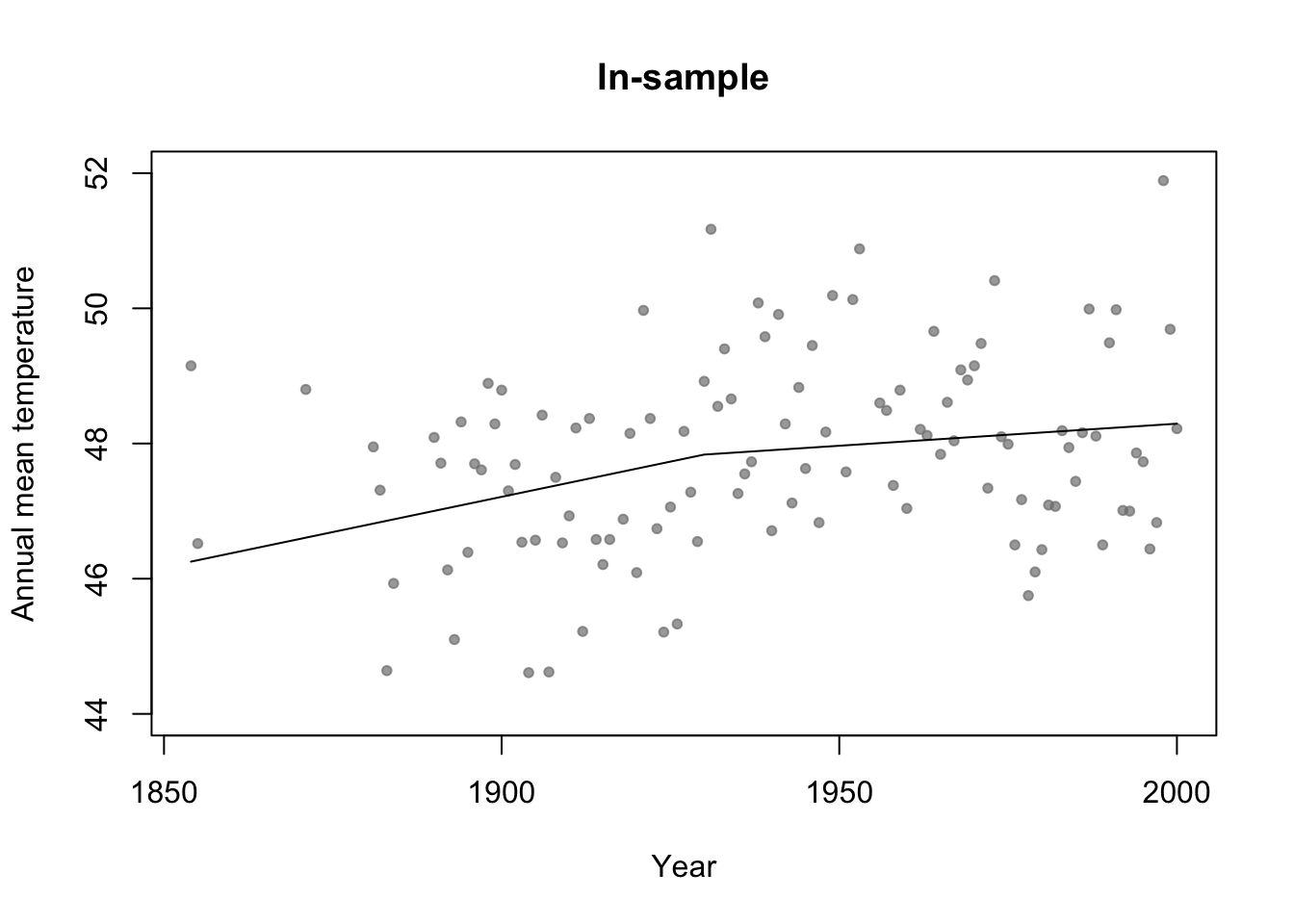

# Broken stick basis function f1 <- function(x,c){ifelse(x<c,c-x,0)} f2 <- function(x,c){ifelse(x>=c,x-c,0)} m3 <- lm(temp ~ f1(year,1930) + f2(year,1930),data=df.in_sample) par(mfrow=c(1,1)) # Plot predictions (in-sample) plot(df.in_sample$year,df.in_sample$temp,main="In-sample",xlab="Year",ylab="Annual mean temperature",ylim=c(44,52),pch=20,col=rgb(0.5,0.5,0.5,0.7)) lines(df.in_sample$year,predict(m3))

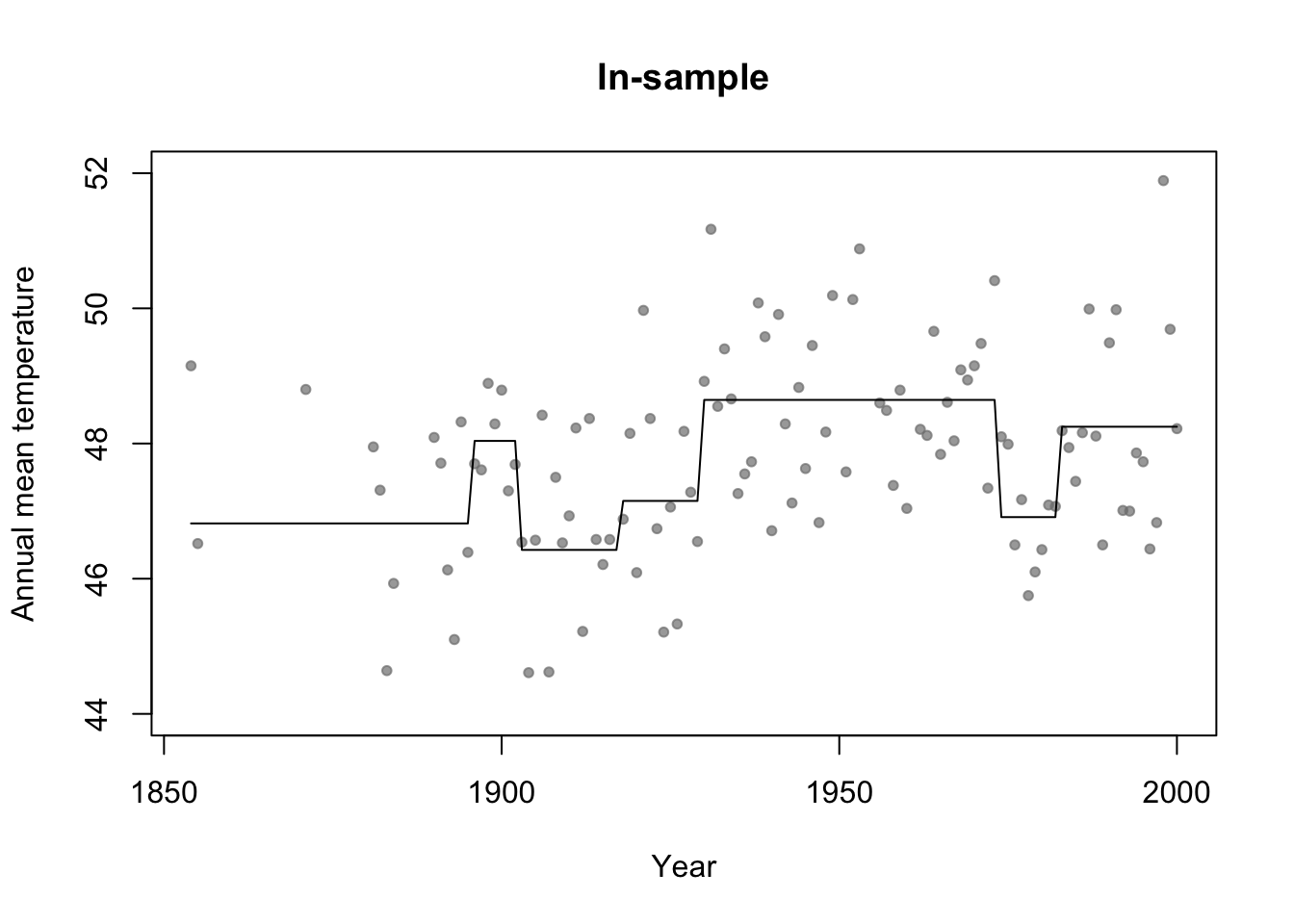

library(rpart) # Fit regression tree model m4 <- rpart(temp ~ year,data=df.in_sample) par(mfrow=c(1,1)) # Plot predictions (in-sample) plot(df.in_sample$year,df.in_sample$temp,main="In-sample",xlab="Year",ylab="Annual mean temperature",ylim=c(44,52),pch=20,col=rgb(0.5,0.5,0.5,0.7)) lines(df.in_sample$year,predict(m4))

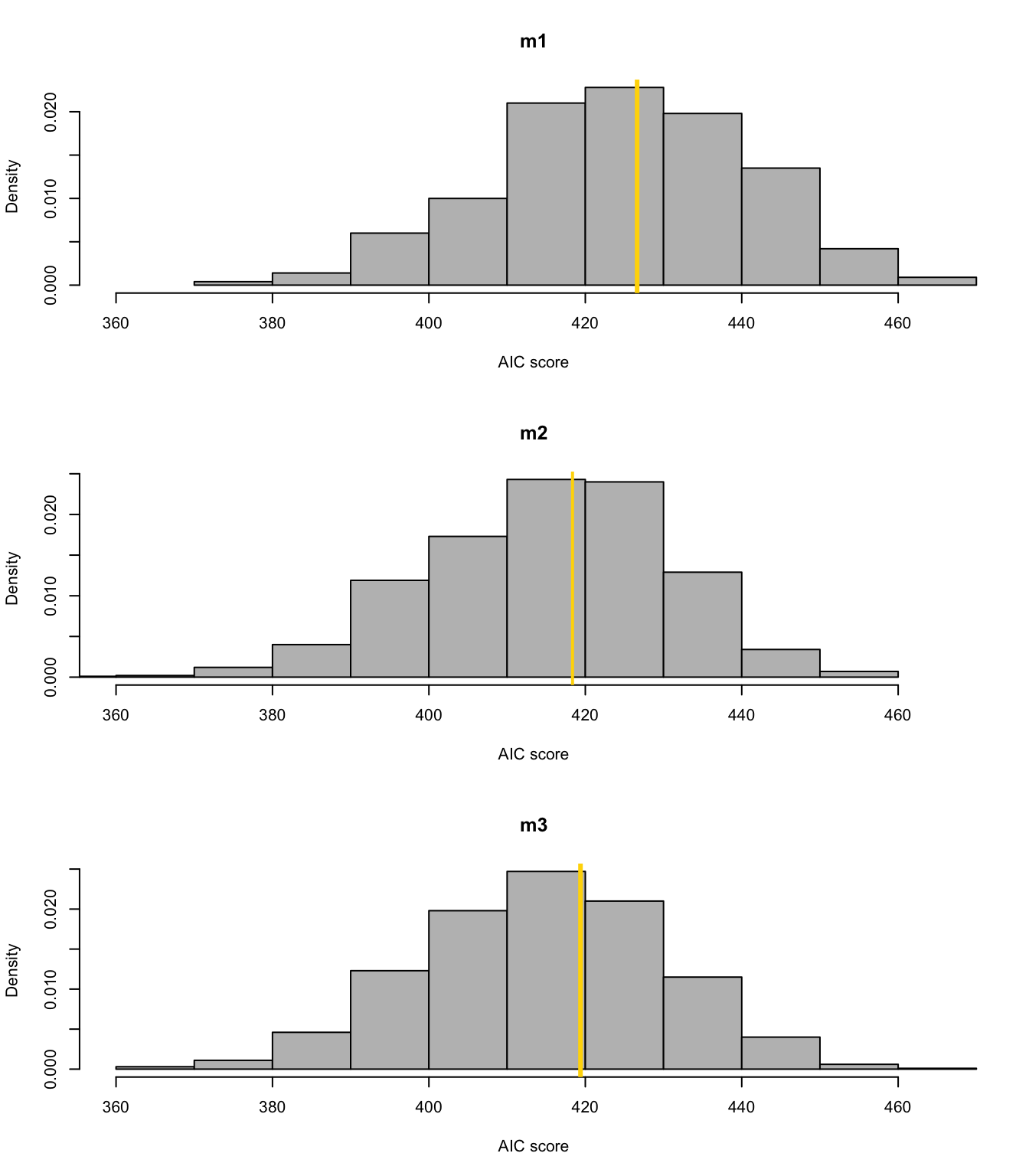

#Adjusted R-squared summary(m1)$adj.r.square## [1] 0summary(m2)$adj.r.square## [1] 0.0772653summary(m3)$adj.r.square## [1] 0.07692598# Not clear how to calculate adjusted R^2 for m4 (regression tree) # AIC y <- df.in_sample$temp y.hat_m1 <- predict(m1,newdata=df.in_sample) y.hat_m2 <- predict(m2,newdata=df.in_sample) y.hat_m3 <- predict(m3,newdata=df.in_sample) y.hat_m4 <- predict(m4,newdata=df.in_sample) sigma2.hat_m1 <- summary(m1)$sigma^2 sigma2.hat_m2 <- summary(m2)$sigma^2 sigma2.hat_m3 <- summary(m3)$sigma^2 # m1 #log-likelihood ll <- sum(dnorm(y,y.hat_m1,sigma2.hat_m1^0.5,log=TRUE)) # same as logLik(m1) q <- 1 + 1 # number of estimated betas + number estimated sigma2s #AIC -2*ll+2*q #Same as AIC(m1)## [1] 426.6276# m2 #log-likelihood ll <- sum(dnorm(y,y.hat_m2,sigma2.hat_m2^0.5,log=TRUE)) # same as logLik(m2) q <- 2 + 1 # number of estimated betas + number estimated sigma2s #AIC -2*ll+2*q #Same as AIC(m2)## [1] 418.38# m3 #log-likelihood ll <- sum(dnorm(y,y.hat_m3,sigma2.hat_m3^0.5,log=TRUE)) # same as logLik(m3) q <- 3 + 1 # number of estimated betas + number estimated sigma2s #AIC -2*ll+2*q #Same as AIC(m3)## [1] 419.4223# m4 n <- length(y) q <- 7 + 6 + 1 # number of estimated "intercept" terms in each termimal node + number of estimated "breaks" + number estimated sigma2s sigma2.hat_m4 <- t(y - y.hat_m4)%*%(y - y.hat_m4)/(n - q - 1) # log-likelihood ll <- sum(dnorm(y,y.hat_m4,sigma2.hat_m4^0.5,log=TRUE)) #AIC -2*ll+2*q## [1] 406.5715Example: assessing uncertainity in model selection statistics

library(mosaic) boot <- function(){ m1 <- lm(temp ~ 1,data=resample(df.in_sample)) m2 <- lm(temp ~ year,data=resample(df.in_sample)) m3 <- lm(temp ~ f1(year,1930) + f2(year,1930),data=resample(df.in_sample)) cbind(AIC(m1),AIC(m2),AIC(m3))} bs.aic.samplles <- do(1000)*boot() par(mfrow=c(3,1)) hist(bs.aic.samplles[,1],col="grey",main="m1",freq=FALSE,xlab="AIC score",xlim=range(bs.aic.samplles)) abline(v=AIC(m1),col="gold",lwd=3) hist(bs.aic.samplles[,2],col="grey",main="m2",freq=FALSE,xlab="AIC score",xlim=range(bs.aic.samplles)) abline(v=AIC(m2),col="gold",lwd=2) hist(bs.aic.samplles[,3],col="grey",main="m3",freq=FALSE,xlab="AIC score",xlim=range(bs.aic.samplles)) abline(v=AIC(m3),col="gold",lwd=3)

Summary

- Making statistical inference after model selection?

- Discipline specific views (e.g., AIC in wildlife ecology).

- There are many choices for model selection using in-sample data. Knowing the “best” model selection approach would require you to know a lot about all other procedures.

- AIC (Akaike 1973)

- BIC (Swartz 1978)

- CAIC (Vaida & Blanchard 2005)

- DIC (Spiegelhalter et al. 2002)

- ERIC (Hui et al. 2015)

- And the list goes on

- I would recommend using methods that you understand.

- In my experience it takes a larger data set to do estimation and model selection

- If you have a large data set, split it and use out-of-sample data for estimation

- If you don’t have a large data set, make sure you assess the uncertainty. You might not have enough data to do both estimation and model selection.

References

Gelman, Andrew, Jessica Hwang, and Aki Vehtari. 2014. “Understanding Predictive Information Criteria for Bayesian Models.” Statistics and Computing 24 (6): 997–1016.