7 Lab 3 (R)

7.1 Lab Goals & Instructions

Goals

- Produce three key plots to evaluate your linear regression model

- Run through all the ways to evaluate each assumption of the linear regression model

- Learn how to save predicted values and residuals from your model

Research Question: What features are associated with how wealthy a billionaire is?

Instructions

- Download the data and the script file from the lab files below.

- Run through the script file and reference the explanations on this page if you get stuck.

- If you have time, complete the challenge activity on your own.

Jump Links to Commands in this Lab:

Plot 1: Residuals vs Fitted (Predicted) Values

Plot 2: Scale Location Plot

Plot 3: Q-Q Plot

Saving predicted values

Saving residuals

Linearity

Homoskedasticity

Normal Errors

Uncorrelated Errors

X is not invariant

X is independent of the error

7.3 Three Key Plots to Test Assumptions

After you conduct a linear regression, I recommend that you produce these three plots to test whether or not the assumptions of a linear regression model are violated. There are many different ways to test the assumptions of linear regression, but I find these three plots to be relatively simple, and they cover most of the assumptions. In the following sections we’ll go through a variety of ways to test each assumption.

I also recommend using this plot because they take advantage of some built-in plots that R will produce after you run a linear regression.

Make sure you run the linear regression code first. In this regression, we’re still looking at whether gender and age are associated with wealth of billionaires.

# Turn female into a factor variable

mydata <- mydata %>%

mutate(female = factor(female))

# Run the model and save it

fit_lm <- lm(wealthworthinbillions ~ female + age, data = mydata)Plot 1: Residuals vs Predicted (Fitted) Values

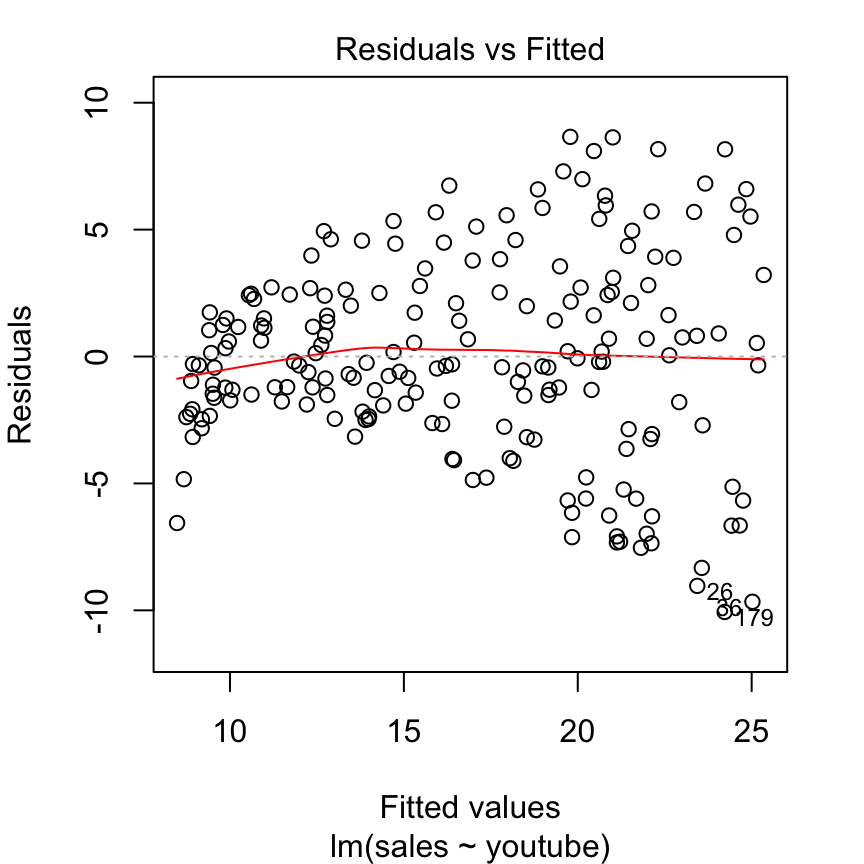

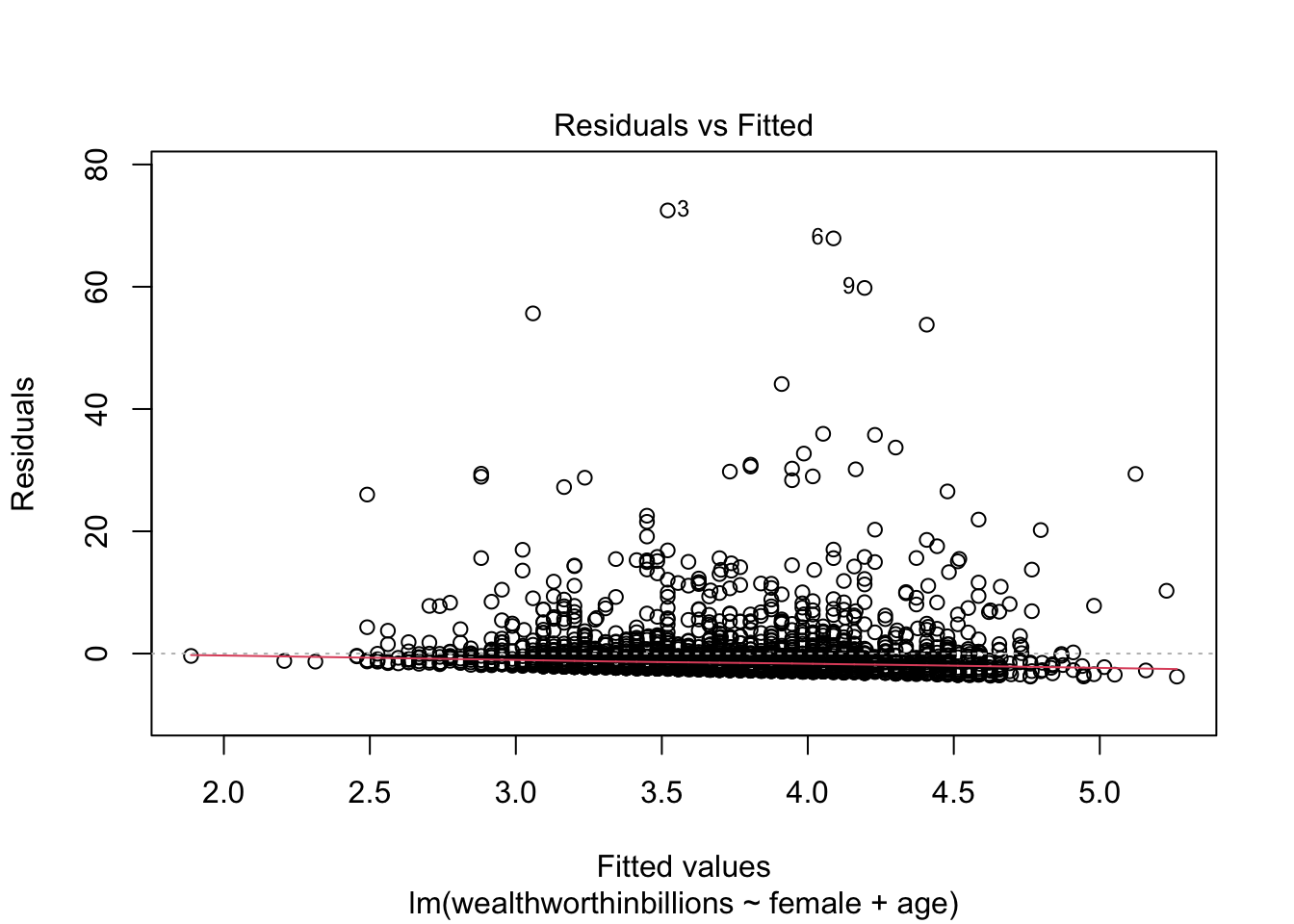

This plot puts the residuals on the y axis and your predicted values on the x-axis. So the logic is: for each predicted value, how big is the residual or error between the predicted and observed values? We then look to see if there is a pattern in those errors in our model, which would indicate our problem.

This plot can help us detect:

- Linearity: The residuals should form a horizontal band around 0, which is approximated by the red line. If linearity does not hold, there will be a curve to the line.

- Homoskedasticity: If this assumption holds, the spread of the points will be approximately the same from left to right. If it does not hold, there may be a cone shaped pattern to the points.

This is an example of a residuals vs fitted plot where the linearity assumption is met, but there is evidence of heteroskedasticity (that classic cone shape to the error points).

Let’s produce this plot for our model. Again, we’re making use of some built-in plots that R makes for us. We specify which plot we want R to show by putting which = 1 as an option.

plot(fit_lm, which = 1)

It can be difficult to detect violations of the homoskedasticity assumption with this plot, so we move on two our second plot.

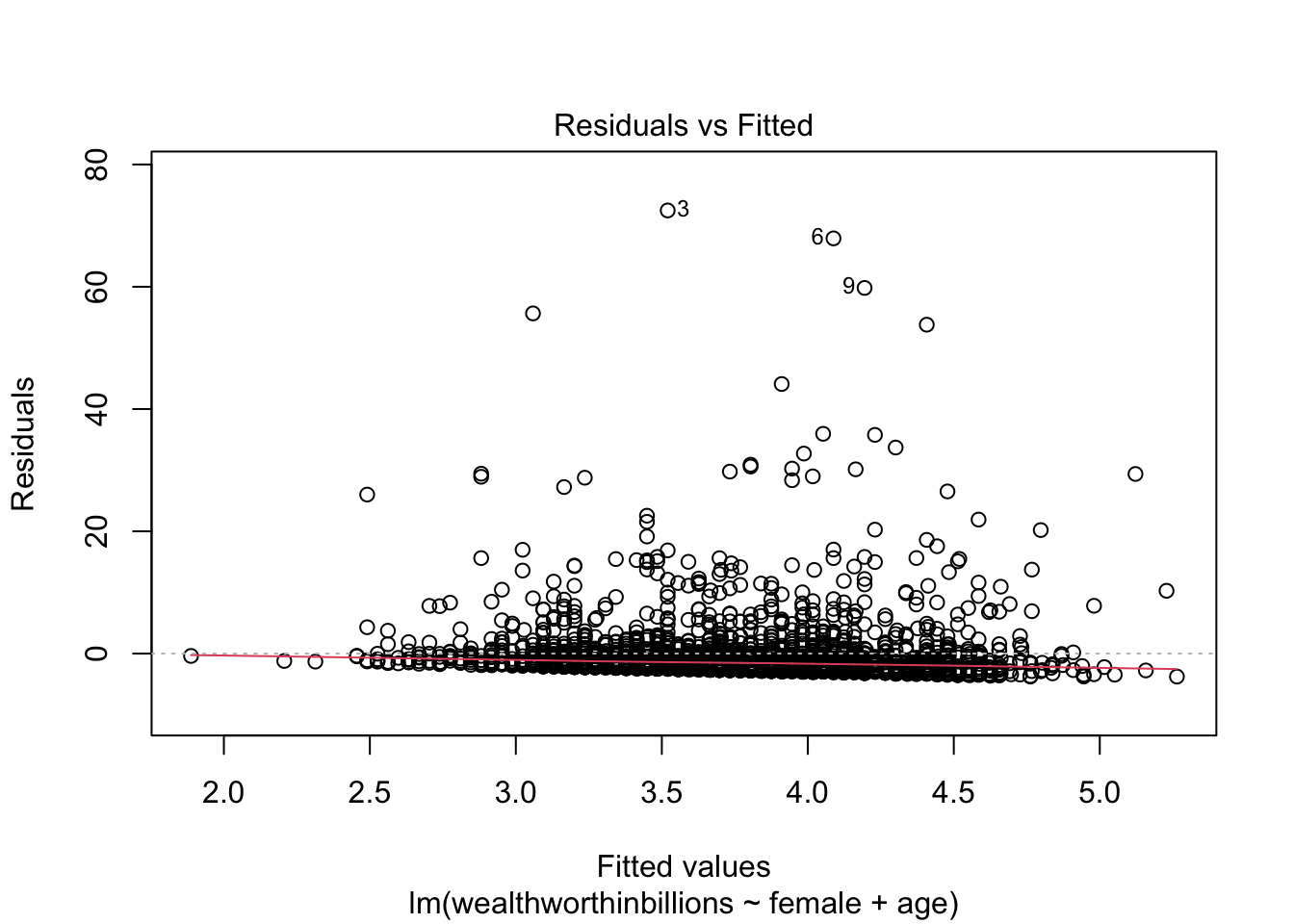

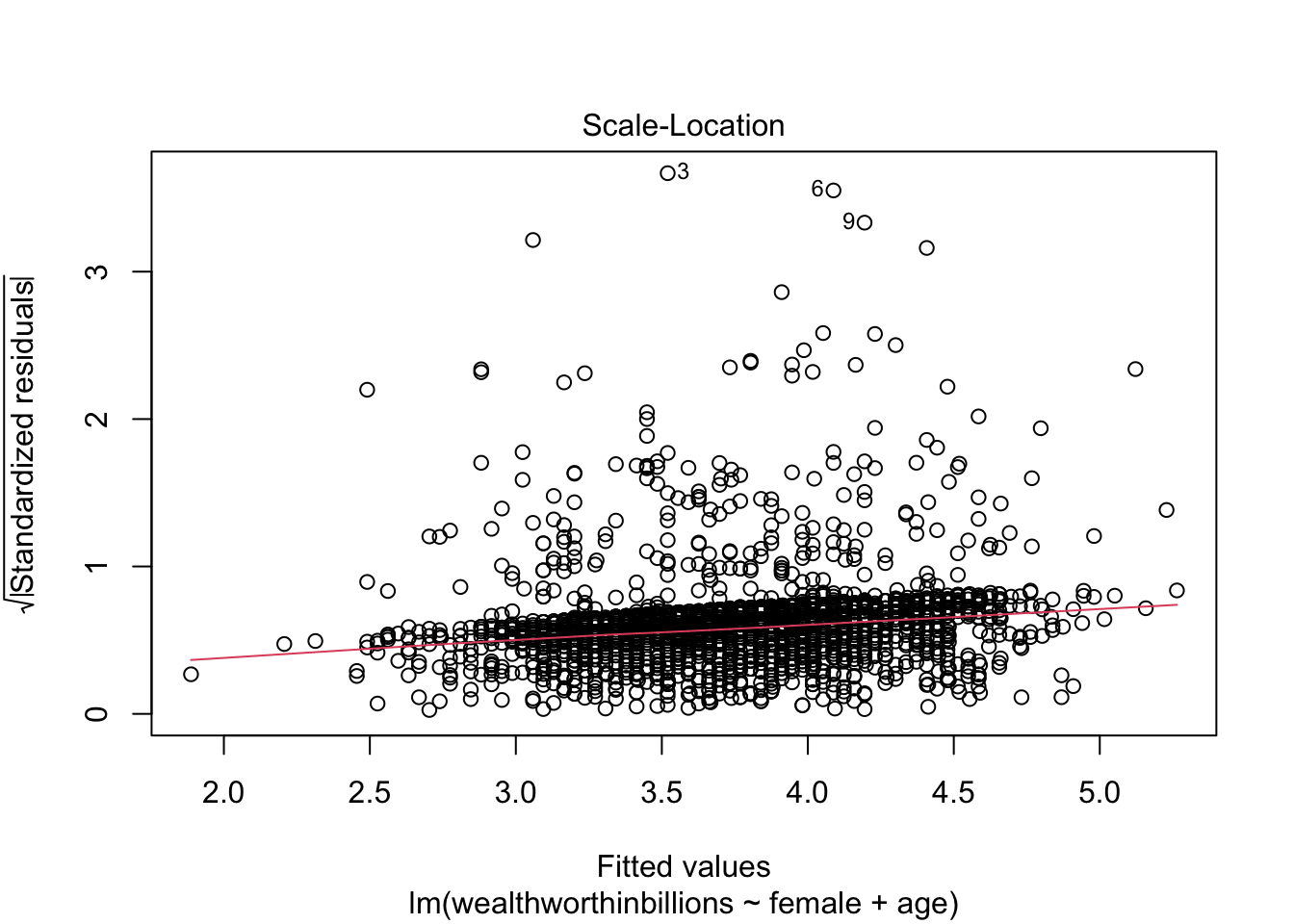

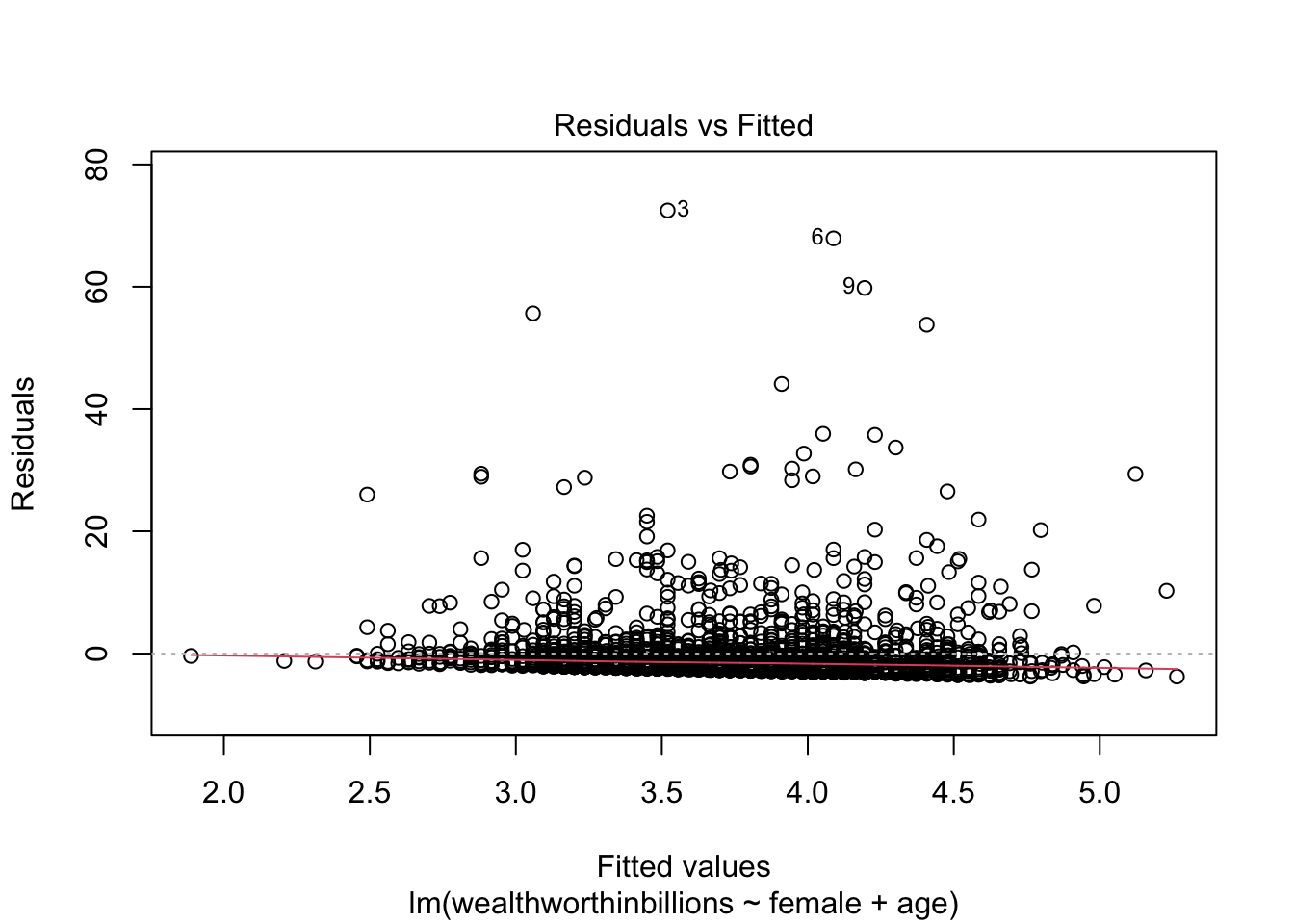

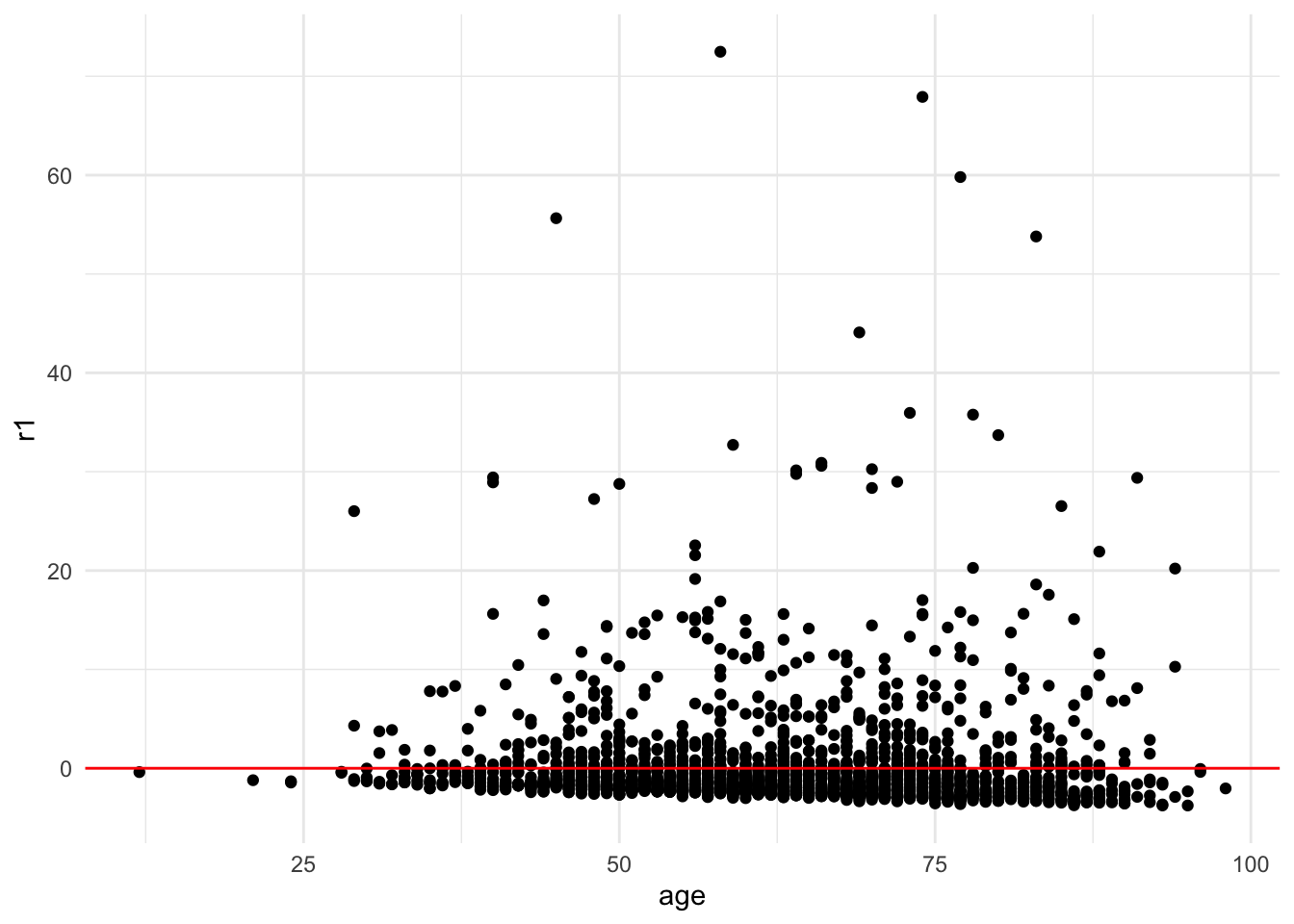

Plot 2: Scale Location Plot

This plot is very similar to our previous one, but it makes it easier to detect heteroskedasticity. This time we plot the square root of standardized residuals on the y axis and the fitted values again on our x axis. This lets us look at a line representing the mean of the √standardized residuals, or the spread of the error across values.

This plot is primarily good for evaluating:

- Homoskedasticity: If this assumption holds, the standardized residuals will be roughly horizontal, aka the red line should be approximately horizontal. The residuals should also appear randomly scattered around the plot.

Let’s take a look at this plot for our model. This time we’re selecting the third plot in R’s linear regression repotoire.

plot(fit_lm, which = 3)

In this case we can more clearly see that the line is NOT horizontal, and that the variance of our errors increases across our predicted values.

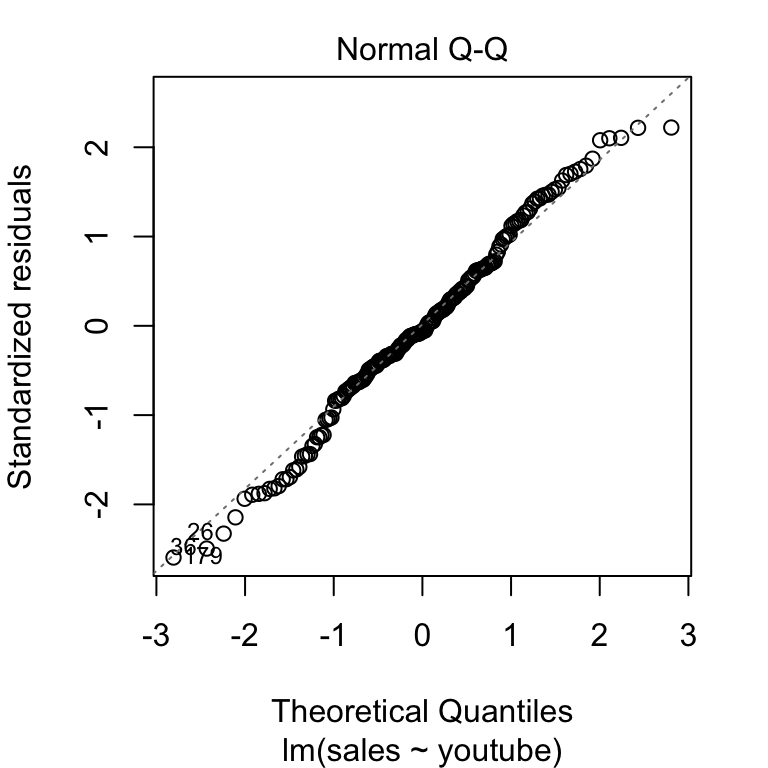

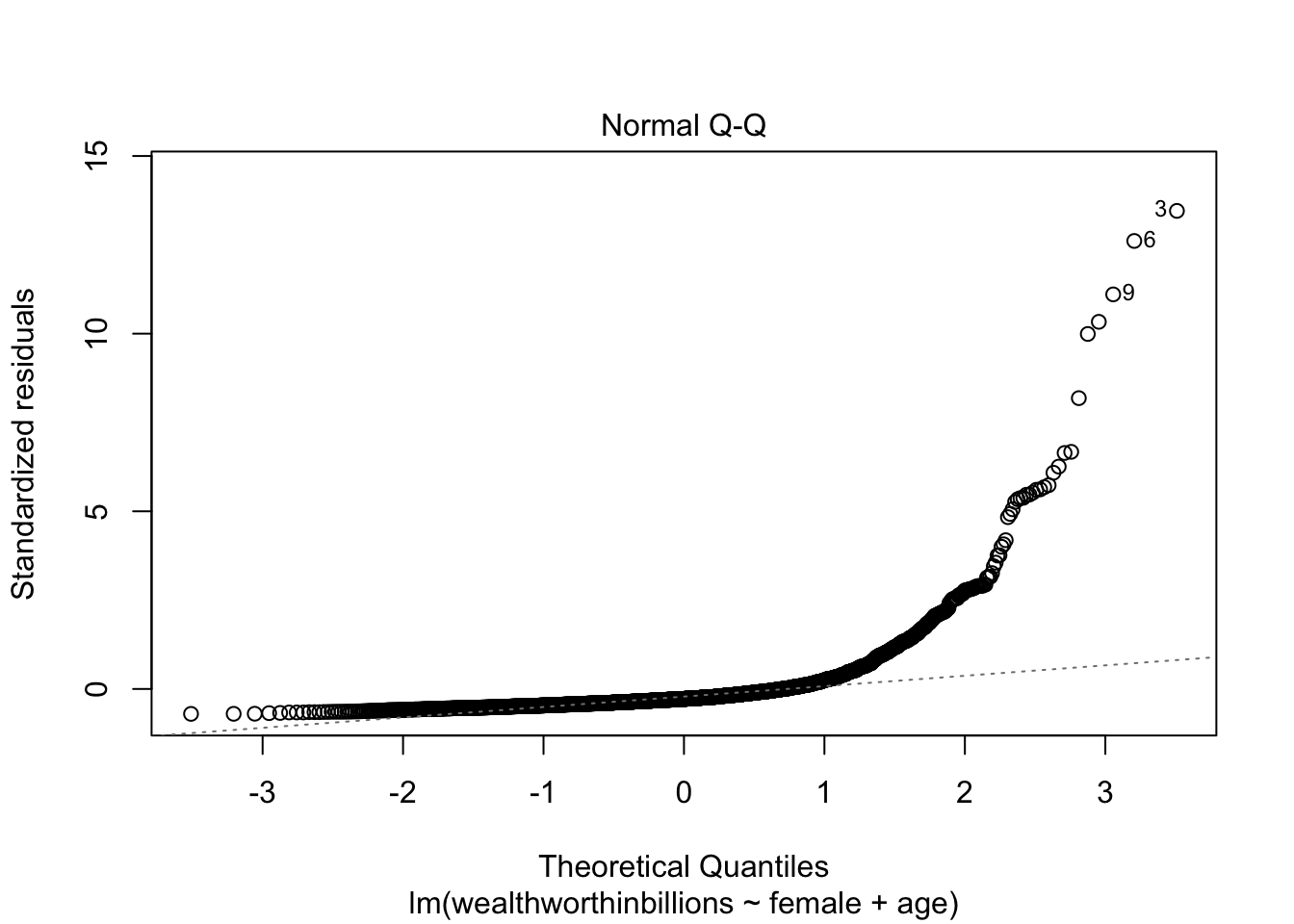

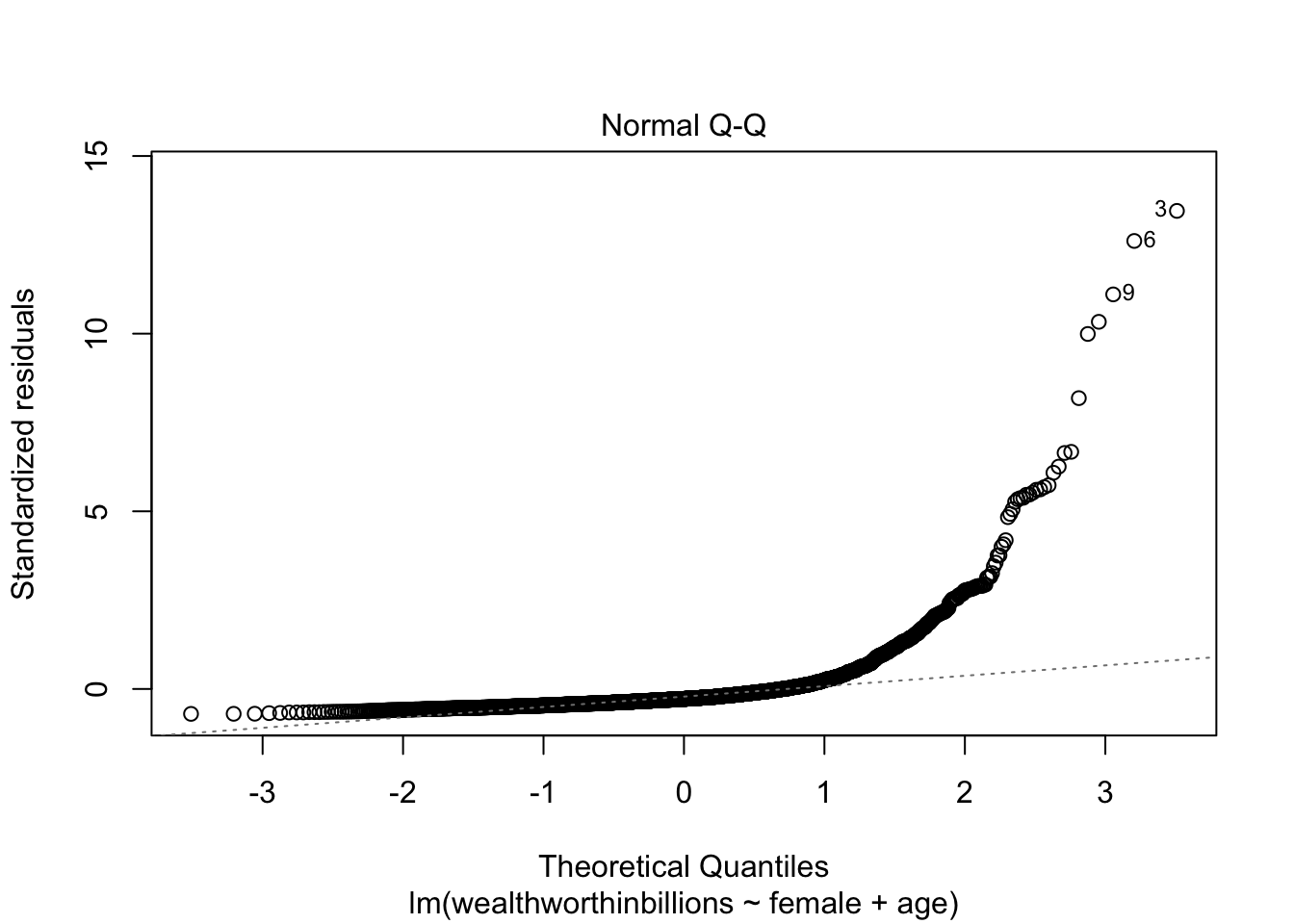

Plot 3: Normal Q-Q Plot

The final key plot that helps us test our assumptions is a normal Q-Q plot (or quantile-quantile plot). A Normal Q-Q plot takes your data, sorts it into ascending order, and puts those points into quantiles. It then plots those quantiles against what we would expect a normal distribution to look like. In more plain terms, we are plotting our error values and seeing how close they fall to the normal distribution. This graph plots the normal distribution as a straight line and the farther our points fall from that line, the less normal the errors are.

Here’s an example of a Q-Q plot with errors that follow the normal distribution. You can see that the plotted points fall close to the dotted line representing normality.

Now let’s look at this plot in our model. We are selecting the second plot in the sequence.

plot(fit_lm, which = 2)

You can clearly see that our errors do NOT follow the normal distribution.

7.4 Saving Predicted Values and Residuals

Before we go through each assumption, you should know how to save predicted values and residuals from a linear regression model. Because we can only look at residuals and predicted values that are in our model, you want to first limit the dataset to the values in the model. In our case, we’re dropping all values that are missing on any of our three variables:

mydata_lm <- mydata %>%

drop_na(wealthworthinbillions, female, age)Let’s check how many cases we dropped, which you can verify is the same as the number of observations in our model.

nrow(mydata) - nrow(mydata_lm)[1] 385Predicted Values

When we run a linear regression, we produce a linear equation that describes the relationships between our variables. In this lab, our linear equation is:

\[ y_{wealth} = A + \beta_1 x_{gender} + \beta_2 x_{age} + e_i\]

Our predicted value is what this equation would produce if we ingore the error term. The error term bumps the value up or down to what is actually observed. But if we just went by the equation, the predicted value is what the model estimates would occur. Once we run a regression and save the results, we can very simply add those predicted values to our dataset:

mydata_lm$yhat <- predict(fit_lm)Note: I have labeled the predicted values as “yhat” because it is common in statistics to represent the predicted value with the symbol \(\hat y\) (i.e. a y with a little hat on top).

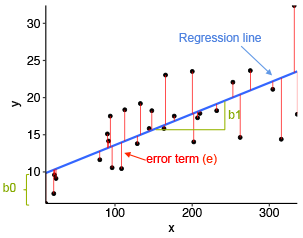

Residuals

As I mentioned above, there is often a difference between our predicted value and our observed value of Y in our dataset. The residual or error represents that difference. Here’s a graph I like that visualizes what the residuals are:

Just like the predicted value, we can easily save the residuals onto our dataset.

mydata_lm$r1 <- residuals(fit_lm)Then you can look at the residuals (or the predicted values) like any other variable.

summary(mydata_lm$r1) # descriptive statistics Min. 1st Qu. Median Mean 3rd Qu. Max.

-3.76422 -2.21700 -1.50716 0.00000 -0.09109 72.47989 head(mydata_lm$r1) # first 6 observations 1 2 3 4 5 6

15.61875 55.64129 72.47989 11.23145 28.35398 67.91202 7.5 Testing each assumption

With the three plots above, you can assess most of the assumptions. However, you also have a whole slew of tools at your disposal to look more at each assumption (at least the ones that are testable). Let’s go through each one by one. I’ll also remind you what each assumption means.

7.5.1 Linearity

Assumption: The relationship between whatever units our X and Y variables are measured in can be reasonably predicted by a linear line.

With this assumption, we have to make sure that the relationship between our X and Y variables falls along a straight line, and not a curved one. We discussed some initial ways to evaluate this assumption last week, which we’ll review here along with looking at means of X on y and our Residuals vs. Predicted plot.

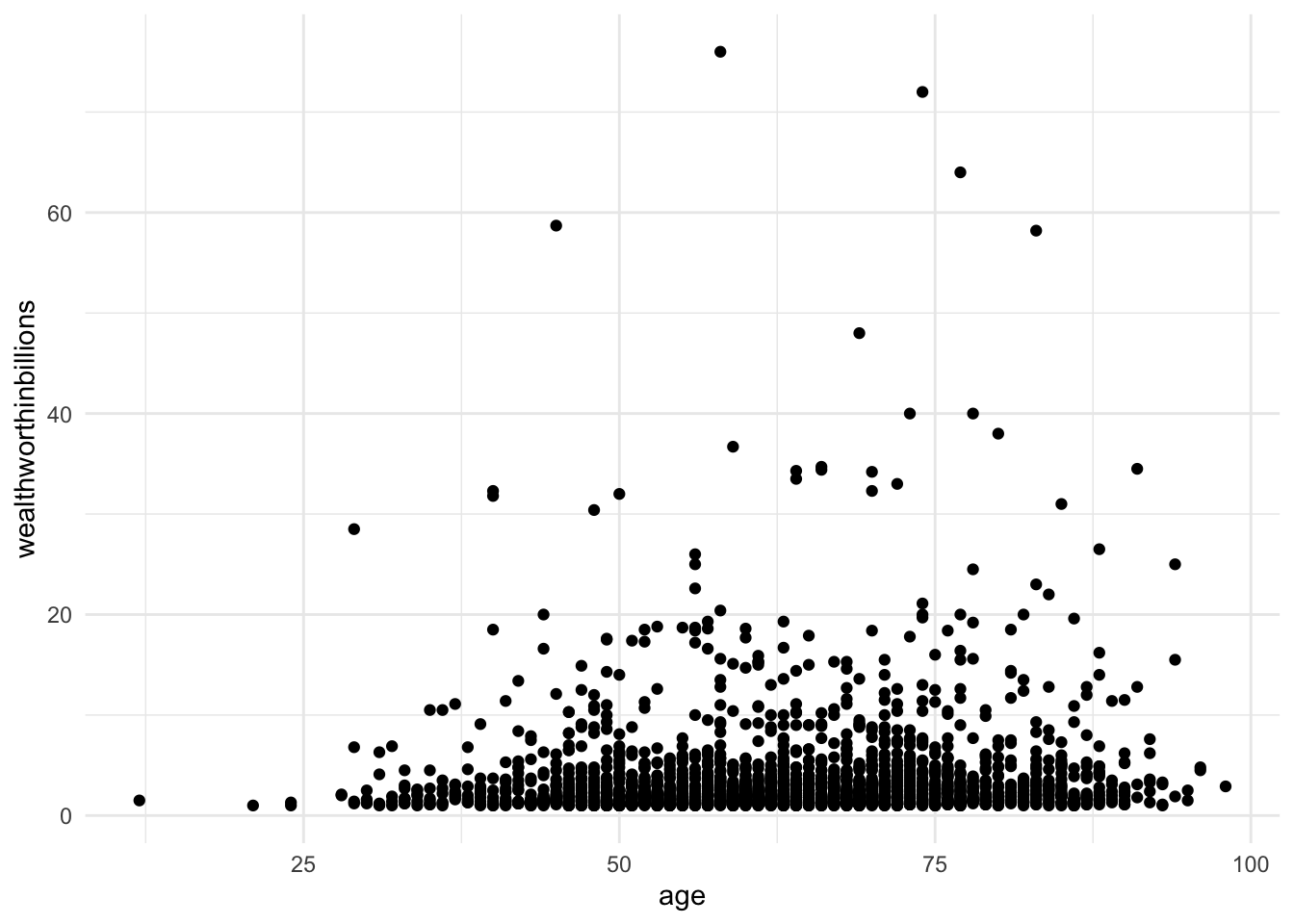

Method 1: Scatterplot of y on x

A simple way of testing this assumption is to look at a scatterplot, but this method can only look at one X as a time.

ggplot(data = mydata_lm, aes(y = wealthworthinbillions, x = age)) +

geom_point() +

theme_minimal()

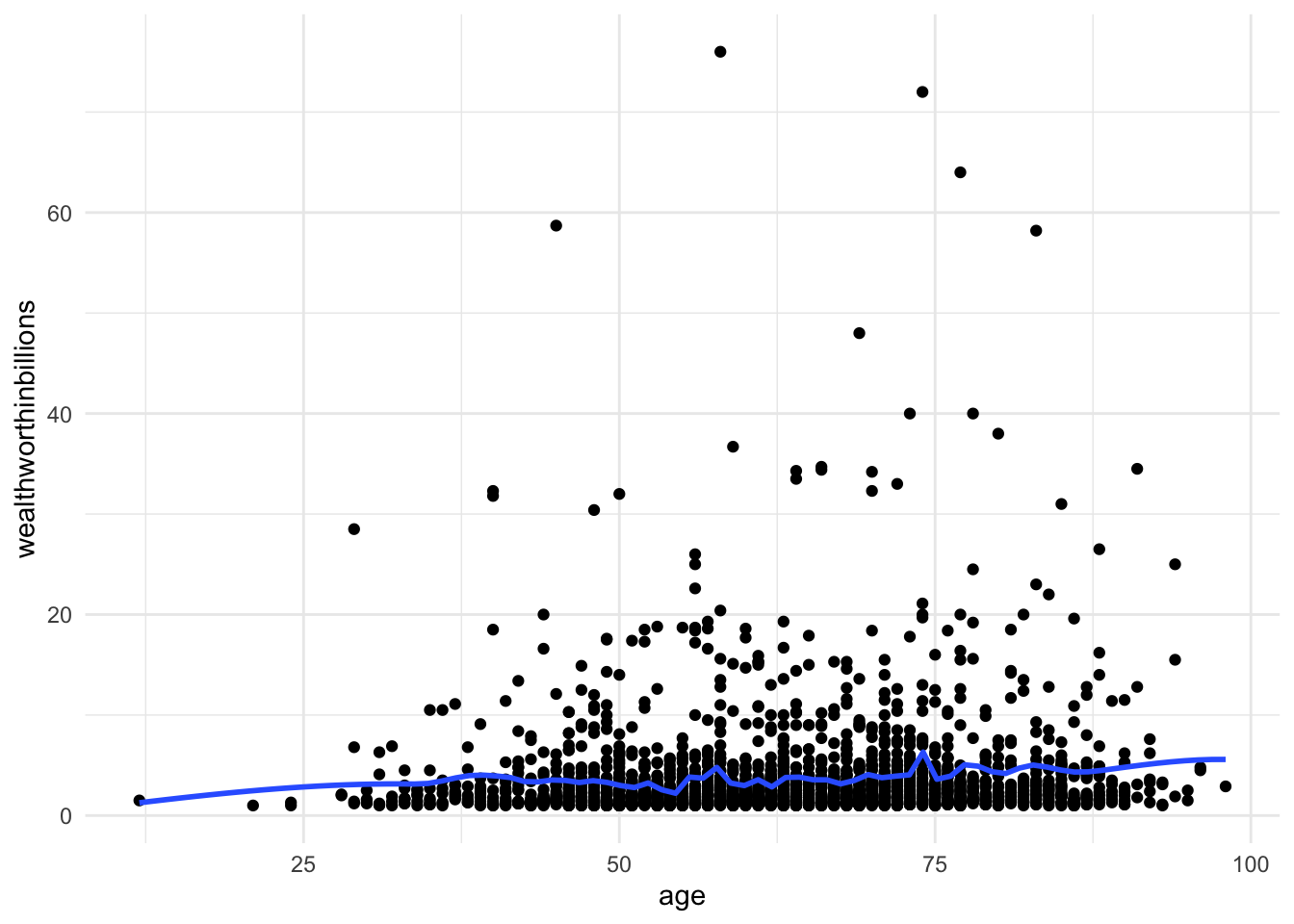

Method 2: Scatterplot with lowess smoothing line

Adding a lowess smoothing line can make the pattern more visible in busy scatterplots. But again,t his can only look at one X at a time.

ggplot(data = mydata_lm, aes(y = wealthworthinbillions, x = age)) +

geom_point() +

geom_smooth(method = "loess", se = FALSE, span = 0.1) +

# se = FALSE removes the confidence interval around the line.

theme_minimal()

Method 3: Means of X on Y

This is a method we went over in class. To make sure a continuous X variable has a linear relationship with our Y, we can break it up into categories, and then look at the means of those categories. Here are the steps in R.

Step 1: Create a categorical variable from your X

# Create the categories

mydata_lm <- mydata_lm %>%

mutate(age2 = ifelse(age > 20 & age <= 40, "21-40",

ifelse(age >40 & age <= 60, "41-60",

ifelse(age > 60 & age <= 80, "61-80",

ifelse(age >80 & age <= 100, "81-100",

NA)))))

# Now let's turn that into a factor

mydata_lm <- mydata_lm %>%

mutate(age2 = factor(age2,

levels = c("21-40", "41-60", "61-80", "81-100")))Step 2: Calculate the means for each category and save as dataframe

age_means <- mydata_lm %>%

group_by(age2) %>%

summarise(mean = mean(wealthworthinbillions)) %>%

drop_na() # dropping the NA category, which we don't need to visualize Step 3: Plot the means

ggplot(data = age_means, aes(x = age2, y = mean, group =1)) +

geom_line() +

geom_point() +

labs(

title = "Means of X on Y",

x = "Age",

y = "Mean of Wealth"

) +

theme_minimal() A couple notes on the code. I added both a line and a point geom because I think adding the points makes the graph easier to read. On “group = 1,” for line graphs, the data points must be grouped so that it knows which points to connect. In this case, it is simple – all points should be connected, so group=1.

A couple notes on the code. I added both a line and a point geom because I think adding the points makes the graph easier to read. On “group = 1,” for line graphs, the data points must be grouped so that it knows which points to connect. In this case, it is simple – all points should be connected, so group=1.

Method 4: Residuals vs. Fitted (Predicted) Values

This is our first plot above. Again you should be looking for non-linear patterns in this plot. The residuals should form a horizontal band around 0, and the red line should be roughly horizontal.

plot(fit_lm, which = 1)

7.5.2 Homoskedasticity

Assumption: The variance of the error term is the same for all X’s.

With this assumption we are concerned about the size of the residuals (i.e. errors) for each observation. We expect there to be some differences between the predicted values and the observed values. However, we assume that those differences don’t follow any noticeable pattern. For example, if we get a lot bigger errors for people with greater ages, that would be a problem. We call that problem heteroskedasticity. The opposite of our assumption of homoskedasticity. These are some of my favorite words to pronounce in statistics.

There are three methods we’ll go over to detect hetereoskedasticity.

Method 1: Residuals vs Predicted Values Plot

Back to our favorite key plot. To evaluate this plot for heteroskedasticity, you want to look for any identifable pattern in the residuals. A cone shape is often indicative of hetereoskedasticity. If the residuals appear randomly scattered, that is an indicator that our assumption of homoskedasticity has been met.

plot(fit_lm, which = 1)

Method 2: Standardized Residuals vs. Fitted Values

Now we move to our second key plot. There should be no discernable pattern in the residuals, and the points should occupy the same space above and below the red line. The red line should be roughly horizontal.

plot(fit_lm, which = 3)

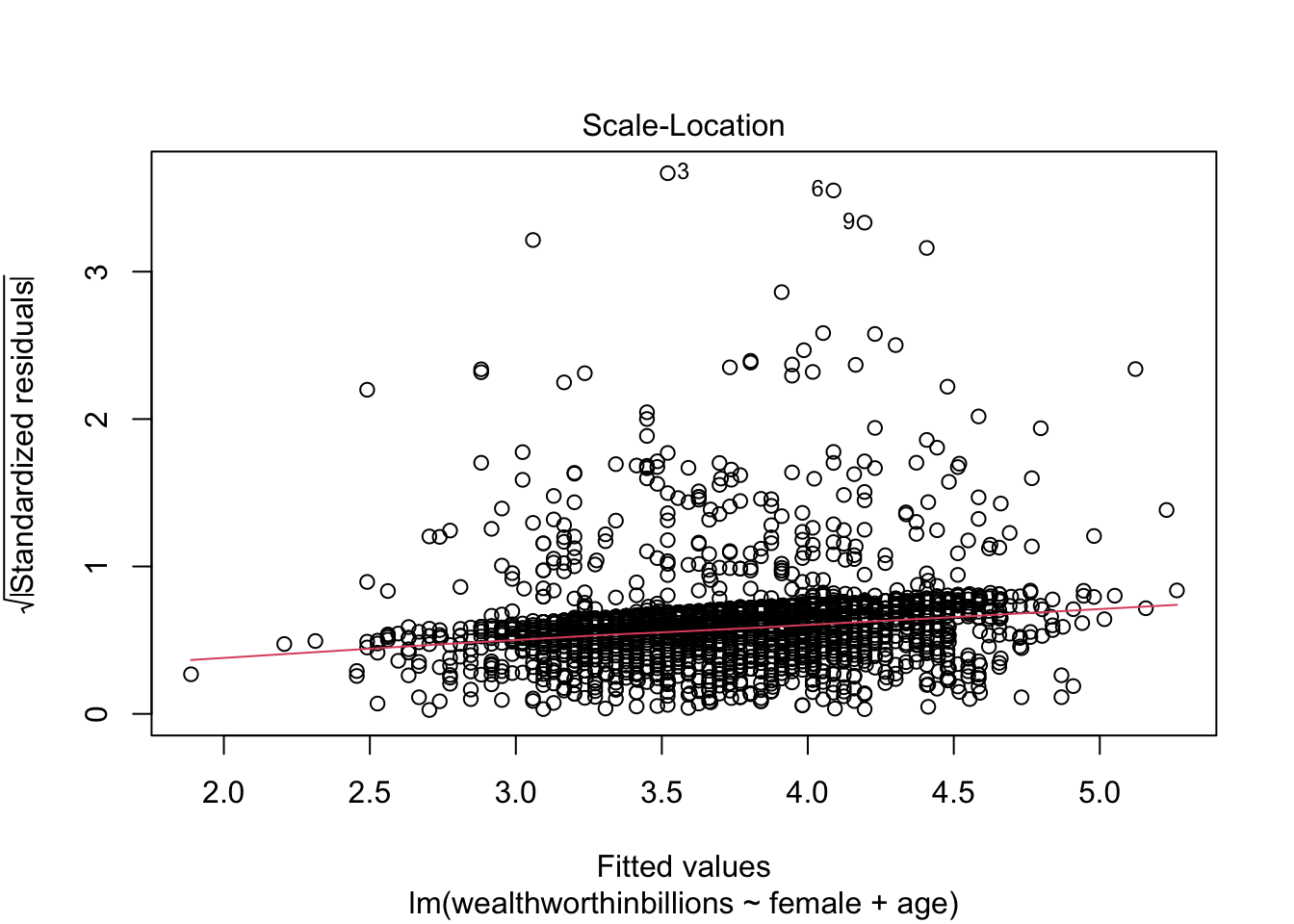

Method 3: Scatter Plot of residuals vs continuous x variable

Again with this method we are looking for a random pattern of errors. By choosing a specific x variable, you can see whether the errors follow a pattern along the distribution of any given variable in your model.

ggplot(mydata_lm, aes(x = age, y = r1)) +

geom_point() +

geom_hline(yintercept = 0, color = "red") +

theme_minimal()

7.5.3 Normally Distributed Errors

Assumption: The errors (i.e. residuals) follow a normal distribution.

Like our last assumption, a linear regression model assumes that the errors don’t have any particular pattern. In fact, we expect the errors to follow the normal distribution. The normal distribution is one of the most common distributions that describes the probability of event occurring. It is relatively straightforward to detect whether or not this assumption is violated. If our sample is large (\(n \geq40\)), then we don’t have to worry too much about violating this assumption. If our sample is small then we cannot trust the results of the model.

Here are three ways to detect non-normally distributed errors.

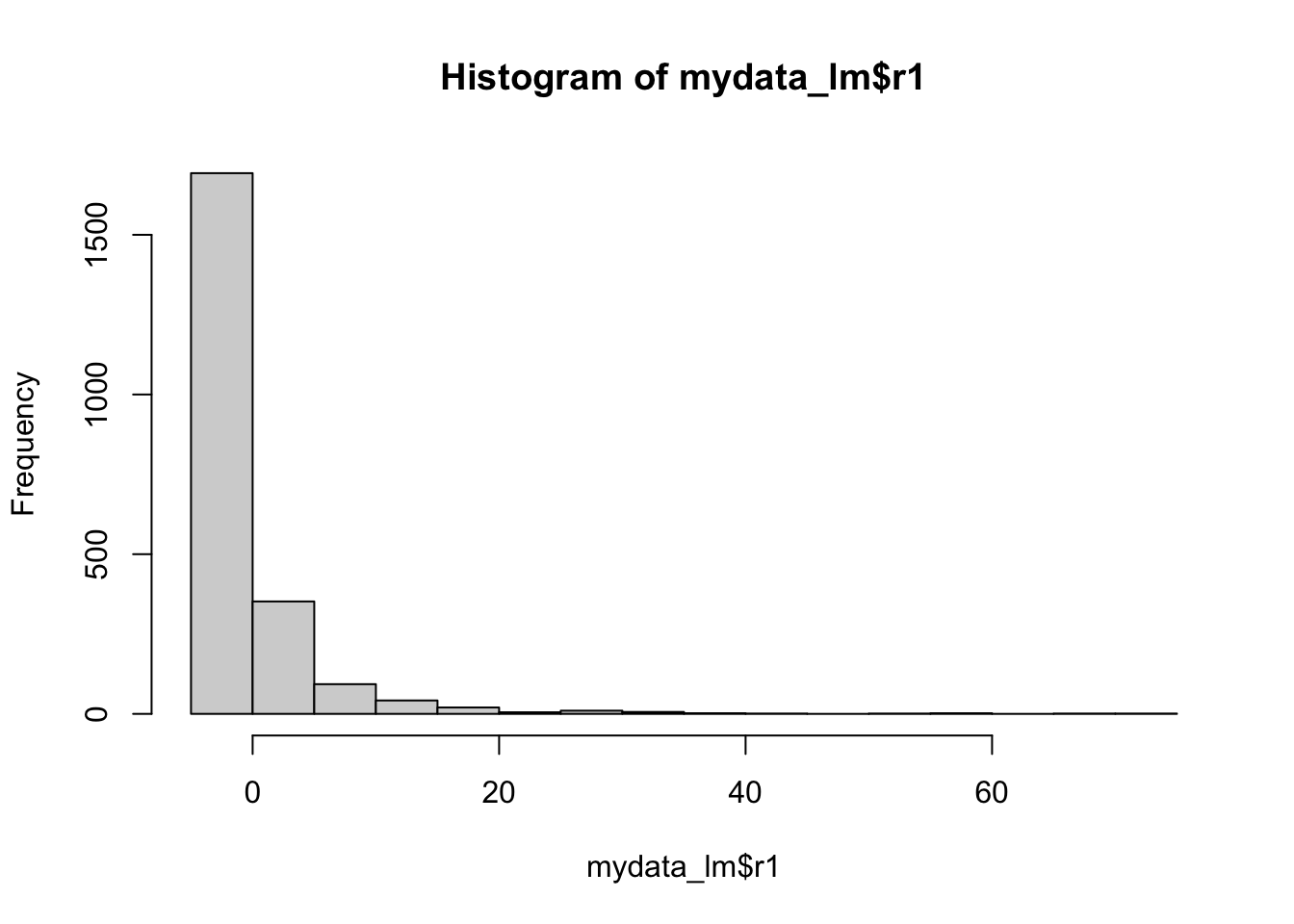

Method 1: Histogram of Residuals

Here you want to make sure that histogram looks roughly like a normal distribution. You can google what that looks like if you forget, but it is your classic bell curve.

hist(mydata_lm$r1)

Method 2: Skew of residuals

We haven’t touched on skew as a descriptive statistics in class. It is automatically produced in Stata descriptives, but not in R. However, we can very easily pull out just the skew using a command from the moments library. You are looking out for a skew greater than 1 to indicate heavy skew.

skewness(mydata_lm$r1)[1] 5.946823Method 3: Q-Q Plot

This is our third key plot. Again, it plots the normal distribution as a straight line and the farther the points from the line, the less normal the errors.

plot(fit_lm, which=2)

7.5.4 Uncorrelated Errors

Assumption: The errors (i.e. residuals) are not correlated with each other.

There is no “method” per se to ensure that your errors are not correlated with each other. This is something you have to recognize about the structure of the dataset. Ask yourself: Are there groupings of observations in my dataset? Would observations in those groupings potentially look more like each other than those in other groups?

Some examples would be students from the same school, observations from different years, people from the same city, patients in the same hospital, and so on. There may be unobservable factors related to being in that group that make errors correlated with each other. We will talk ways to account for this in the future.

A look at year reveals we might have this problem in our model:

mydata_lm %>%

group_by(year) %>%

count()# A tibble: 3 × 2

# Groups: year [3]

year n

<dbl> <int>

1 1996 223

2 2001 416

3 2014 1590We can see that people were collected from waves. This means we have a time series. If you delve in further (i.e. look at rank==1) you’ll notice an example of the time series danger: most people who were extremely wealthy in the first waves were likely to be the top of the pile in future assessments, even if their wealth changed.

In this situation, we’d have to ask ourselves what the best approach is to address the correlated errors.

7.5.5 X is not invariant

Assumption: X is not invariant.

Again, there’s no method to detect this one other than making sure you have variation in your independent variables. Is everyone in your study the same age, well then you can’t detect any effect of different ages! Make sure you have different values for your independent variables.

7.5.6 X is independent of the error terms

Assumption: The errors (i.e. residuals) are independent of X. Meaning, there is no ommitted variable biasing our model.

This assumption all has to do with unseen variables. It cannot be tested for. In the script file, I had you do some correlations with the error terms. That doesn’t work because you want to know whether those errors are related to variables NOT in your dataset.Therefore, you just have to read in your area, get feedback, and think through what might be affecting the relationship you are studying that you do not have measures for.

7.6 Challenge Activity

Run another regression with wealth as your outcome and one or two new variables. It can be the same one as last week if you got this far.

Create the three key plots and determine whether or the assumptions of linearity, homoskedasticity, and normality of errors are violated.

This activity is not in any way graded, but if you’d like me to give you feedback email me your script file and a few sentences interpreting your results.