13 Conditional Process Analysis with a Multicategorical Antecedent

With his opening lines, Hayes prepared us:

At the end of any great fireworks show is the grand finale, where the pyrotechnicians throw everything remaining in their arsenal at you at once, leaving you amazed, dazed, and perhaps temporarily a little hard of hearing. Although this is not the final chapter of this book, I am now going to throw everything at you at once with an example of the most complicated conditional process model I will cover in this book. (Andrew F. Hayes, 2018, p. 469)

Enjoy the fireworks. 🎆

13.1 Revisiting sexual discrimination in the workplace

Here we load a couple necessary packages, load the data, and take a glimpse().

library(tidyverse)

protest <- read_csv("data/protest/protest.csv")

glimpse(protest)## Rows: 129

## Columns: 6

## $ subnum <dbl> 209, 44, 124, 232, 30, 140, 27, 64, 67, 182, 85, 109, 122, 69, 45, 28, 170, 66, 1…

## $ protest <dbl> 2, 0, 2, 2, 2, 1, 2, 0, 0, 0, 2, 2, 0, 1, 1, 0, 1, 2, 2, 1, 2, 1, 1, 2, 2, 0, 1, …

## $ sexism <dbl> 4.87, 4.25, 5.00, 5.50, 5.62, 5.75, 5.12, 6.62, 5.75, 4.62, 4.75, 6.12, 4.87, 5.8…

## $ angry <dbl> 2, 1, 3, 1, 1, 1, 2, 1, 6, 1, 2, 5, 2, 1, 1, 1, 2, 1, 3, 4, 1, 1, 1, 5, 1, 5, 1, …

## $ liking <dbl> 4.83, 4.50, 5.50, 5.66, 6.16, 6.00, 4.66, 6.50, 1.00, 6.83, 5.00, 5.66, 5.83, 6.5…

## $ respappr <dbl> 4.25, 5.75, 4.75, 7.00, 6.75, 5.50, 5.00, 6.25, 3.00, 5.75, 5.25, 7.00, 4.50, 6.2…With a little ifelse(), we can make the d1 and d2 contrast-coded dummies.

protest <- protest %>%

mutate(d1 = ifelse(protest == 0, -2/3, 1/3),

d2 = ifelse(protest == 0, 0,

ifelse(protest == 1, -1/2, 1/2)))Now load brms.

library(brms)Our statistical model follows two primary equations,

\[\begin{align*} M & = i_M + a_1 D_1 + a_2 D_2 + a_3 W + a_4 D_1 W + a_5 D_2 W + e_M \\ Y & = i_Y + c_1' D_1 + c_2' D_2 + c_3' W + c_4' D_1 W + c_5' D_2 W + b M + e_Y. \end{align*}\]

Here’s how we might specify the sub-model formulas with bf().

m_model <- bf(respappr ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism)

y_model <- bf(liking ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism + respappr)Now we’re ready to fit our primary model, the conditional process model with a multicategorical antecedent.

model13.1 <- brm(

data = protest,

family = gaussian,

m_model + y_model + set_rescor(FALSE),

chains = 4, cores = 4,

file = "fits/model13.01")Here’s the model summary, which coheres reasonably well with the output in Table 13.1.

print(model13.1, digits = 3)## Family: MV(gaussian, gaussian)

## Links: mu = identity; sigma = identity

## mu = identity; sigma = identity

## Formula: respappr ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism

## liking ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism + respappr

## Data: protest (Number of observations: 129)

## Draws: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## respappr_Intercept 4.596 0.665 3.265 5.894 1.001 5371 3217

## liking_Intercept 3.464 0.637 2.233 4.720 1.000 5679 2883

## respappr_d1 -2.878 1.441 -5.779 -0.080 1.003 3292 2691

## respappr_d2 1.628 1.623 -1.556 4.785 1.001 4002 2756

## respappr_sexism 0.044 0.128 -0.202 0.298 1.001 5393 3059

## respappr_d1:sexism 0.845 0.279 0.308 1.397 1.002 3334 2693

## respappr_d2:sexism -0.235 0.312 -0.860 0.369 1.002 4007 2797

## liking_d1 -2.722 1.176 -5.092 -0.390 1.001 3623 2491

## liking_d2 0.046 1.326 -2.597 2.659 1.000 3438 2622

## liking_sexism 0.074 0.105 -0.130 0.281 1.000 6357 2603

## liking_respappr 0.367 0.075 0.223 0.514 1.000 5468 2925

## liking_d1:sexism 0.524 0.233 0.059 0.989 1.001 3595 2696

## liking_d2:sexism -0.038 0.256 -0.542 0.468 1.000 3411 2593

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma_respappr 1.146 0.075 1.015 1.301 1.000 4786 3092

## sigma_liking 0.917 0.059 0.812 1.038 1.001 4828 2869

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

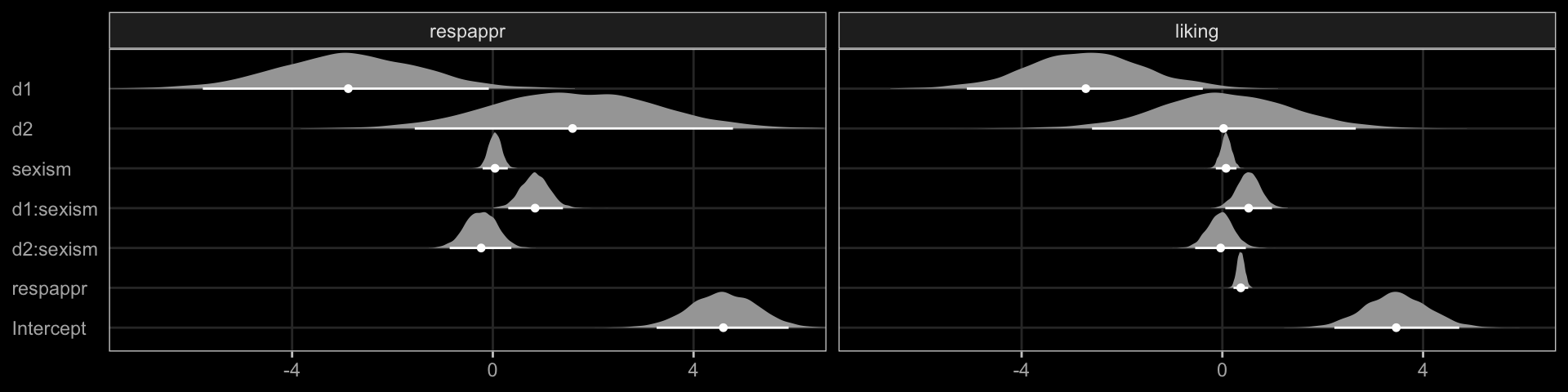

## scale reduction factor on split chains (at convergence, Rhat = 1).Instead of the table format of Hayes’s Table 3 (p. 475), why not display the parameter summaries in a coefficient plot?

library(ggdark)

library(tidybayes)

draws <- as_draws_df(model13.1)

draws %>%

pivot_longer(starts_with("b_")) %>%

mutate(name = str_remove(name, "b_")) %>%

separate(name, into = c("criterion", "predictor"), sep = "_") %>%

mutate(criterion = factor(criterion, levels = c("respappr", "liking")),

predictor = factor(predictor,

levels = c("Intercept", "respappr", "d2:sexism", "d1:sexism", "sexism", "d2", "d1"))) %>%

ggplot(aes(x = value, y = predictor, group = predictor)) +

stat_halfeye(.width = .95, normalize = "xy",

color = "white", size = 1/3) +

coord_cartesian(xlim = c(-7, 6)) +

labs(x = NULL, y = NULL) +

dark_theme_bw() +

theme(axis.text.y = element_text(hjust = 0),

axis.ticks.y = element_blank(),

panel.grid.major = element_line(color = "grey20"),

panel.grid.minor = element_blank()) +

facet_wrap(~ criterion)

Note our use of dark_theme_bw() from the ggdark package.

The Bayesian \(R^2\) distributions are reasonably close to the estimates in the text.

bayes_R2(model13.1) %>% round(digits = 3)## Estimate Est.Error Q2.5 Q97.5

## R2respappr 0.322 0.053 0.214 0.421

## R2liking 0.297 0.056 0.183 0.39913.2 Looking at the components of the indirect effect of \(X\)

A mediation process contains at least two “stages.” The first stage is the effect of the presumed causal antecedent variable \(X\) on the proposed mediator \(M\), and the second stage is the effect of the mediator \(M\) on the final consequent variable \(Y\). More complex models, such as the serial mediation model, will contain more stages. In a model such as the one that is the focus of this chapter with only a single mediator, the indirect effect of \(X\) on \(Y\) through \(M\) is quantified as the product of the effects in these two stages. When one or both of the stages of a mediation process is moderated, making sense of the indirect effect requires getting intimate with each of the stages, so that when they are integrated or multiplied together, you can better understand how differences or changes in \(X\) map on to differences in \(Y\) through a mediator differently depending on the value of a moderator. (p. 480)

13.2.1 Examining the first stage of the mediation process.

When making a newdata object to feed into fitted() with more complicated models, it can be useful to review the model formula like so.

model13.1$formula## respappr ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism

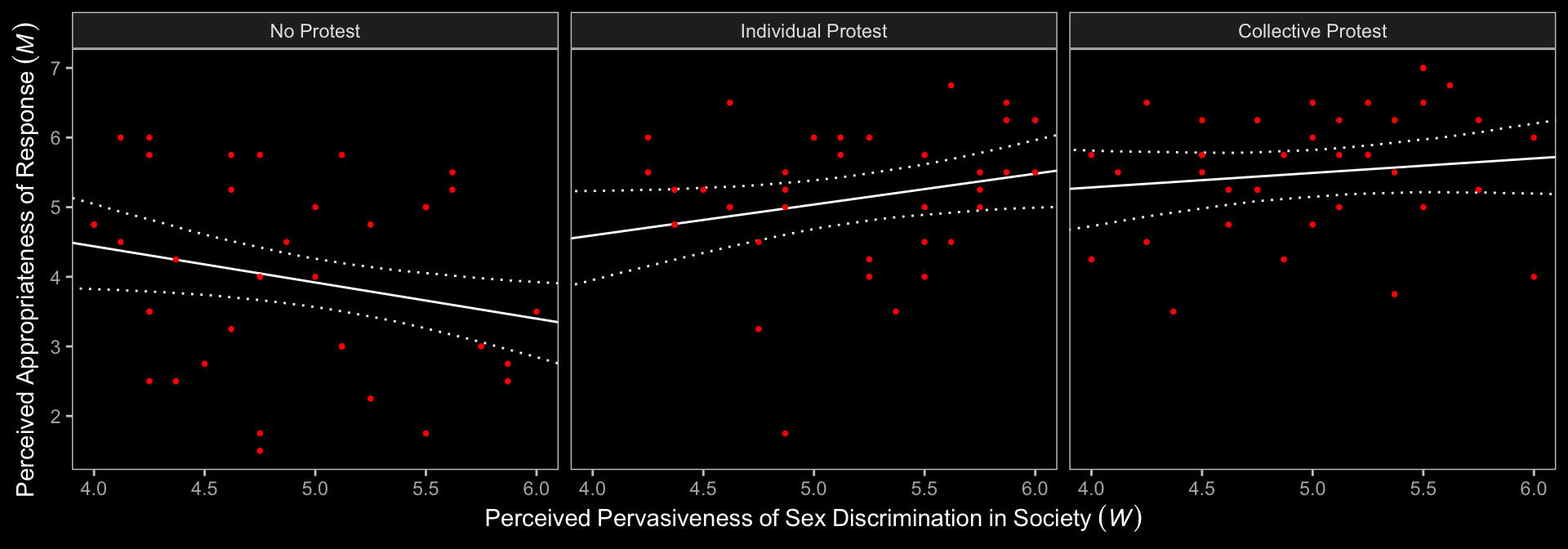

## liking ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism + respapprNow we’ll prep for and make our version of Figure 13.3.

nd <- tibble(d1 = c(1/3, -2/3, 1/3),

d2 = c(1/2, 0, -1/2)) %>%

expand_grid(sexism = seq(from = 3.5, to = 6.5, length.out = 30))

f1 <- fitted(model13.1,

newdata = nd,

resp = "respappr") %>%

data.frame() %>%

bind_cols(nd) %>%

mutate(condition = ifelse(d2 == 0, "No Protest",

ifelse(d2 == -1/2, "Individual Protest", "Collective Protest"))) %>%

mutate(condition = factor(condition, levels = c("No Protest", "Individual Protest", "Collective Protest")))

protest <- protest %>%

mutate(condition = ifelse(protest == 0, "No Protest",

ifelse(protest == 1, "Individual Protest", "Collective Protest"))) %>%

mutate(condition = factor(condition, levels = c("No Protest", "Individual Protest", "Collective Protest")))

f1 %>%

ggplot(aes(x = sexism, group = condition)) +

geom_ribbon(aes(ymin = Q2.5, ymax = Q97.5),

linetype = 3, color = "white", fill = "transparent") +

geom_line(aes(y = Estimate),

color = "white") +

geom_point(data = protest,

aes(x = sexism, y = respappr),

color = "red", size = 2/3) +

coord_cartesian(xlim = c(4, 6)) +

labs(x = expression(Perceived~Pervasiveness~of~Sex~Discrimination~"in"~Society~(italic(W))),

y = expression(Perceived~Appropriateness~of~Response~(italic(M)))) +

dark_theme_bw() +

theme(panel.grid = element_blank()) +

facet_wrap(~condition)

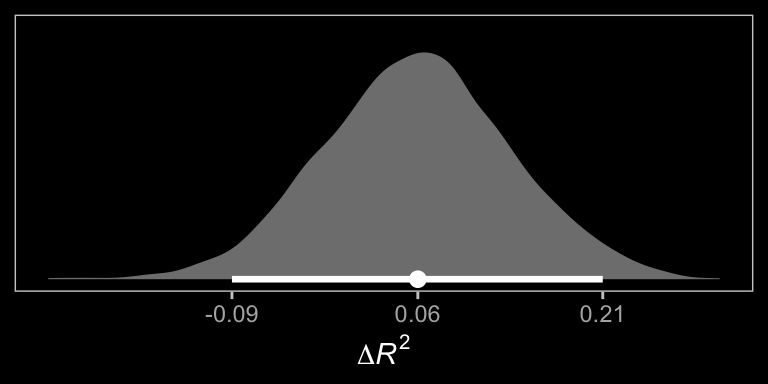

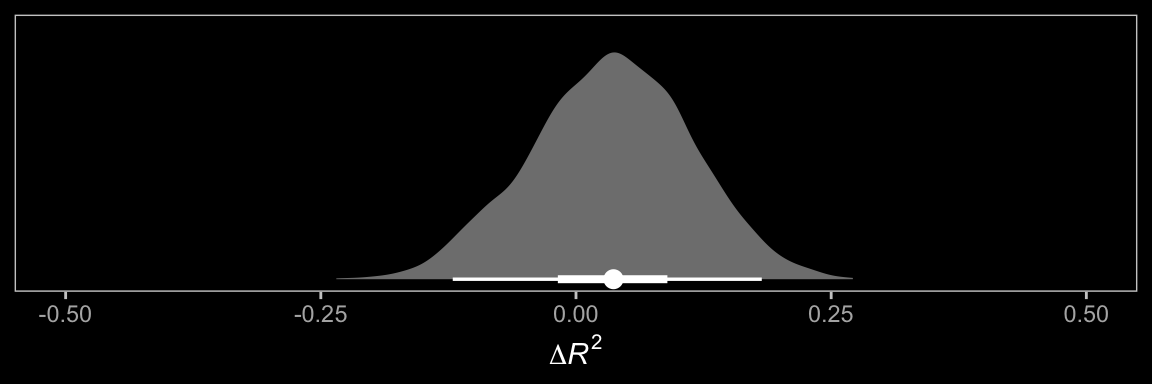

In order to get the \(\Delta R^2\) distribution analogous to the change in \(R^2\) \(F\)-test Hayes discussed on page 482, we’ll have to first refit the model without the interaction for the \(M\) criterion. Here are the sub-models.

m_model <- bf(respappr ~ 1 + d1 + d2 + sexism)

y_model <- bf(liking ~ 1 + d1 + d2 + respappr + sexism + d1:sexism + d2:sexism)Now we fit model13.2.

model13.2 <- brm(

data = protest,

family = gaussian,

m_model + y_model + set_rescor(FALSE),

chains = 4, cores = 4,

file = "fits/model13.02")With model13.2 in hand, we’re ready to compare \(R^2\) distributions.

# extract the R2 draws and wrangle

r2 <- tibble(model13.1 = bayes_R2(model13.1, resp = "respappr", summary = F)[, 1],

model13.2 = bayes_R2(model13.2, resp = "respappr", summary = F)[, 1]) %>%

mutate(difference = model13.1 - model13.2)

# breaks

breaks <- median_qi(r2$difference, .width = .95) %>%

pivot_longer(starts_with("y")) %>%

pull(value)

# plot!

r2 %>%

ggplot(aes(x = difference, y = 0)) +

stat_halfeye(fill = "grey50", color = "white",

point_interval = median_qi, .width = 0.95) +

scale_x_continuous(expression(Delta*italic(R)^2),

breaks = breaks, labels = round(breaks, digits = 2)) +

scale_y_continuous(NULL, breaks = NULL) +

dark_theme_bw() +

theme(panel.grid = element_blank())

And we might also compare the models by their information criteria.

model13.1 <- add_criterion(model13.1, criterion = c("waic", "loo"))

model13.2 <- add_criterion(model13.2, criterion = c("waic", "loo"))

loo_compare(model13.1, model13.2, criterion = "loo") %>%

print(simplify = F)## elpd_diff se_diff elpd_loo se_elpd_loo p_loo se_p_loo looic se_looic

## model13.1 0.0 0.0 -380.1 14.8 16.1 2.7 760.3 29.6

## model13.2 -2.6 4.0 -382.8 14.9 14.1 2.5 765.5 29.7loo_compare(model13.1, model13.2, criterion = "waic") %>%

print(simplify = F)## elpd_diff se_diff elpd_waic se_elpd_waic p_waic se_p_waic waic se_waic

## model13.1 0.0 0.0 -379.9 14.8 15.9 2.7 759.9 29.5

## model13.2 -2.7 4.0 -382.7 14.8 14.0 2.5 765.3 29.7The Bayesian \(R^2\), the LOO-CV, and the WAIC all suggest there’s little difference between the two models with respect to their predictive utility. In such a case, I’d lean on theory to choose between them. If inclined, one could also do Bayesian model averaging.

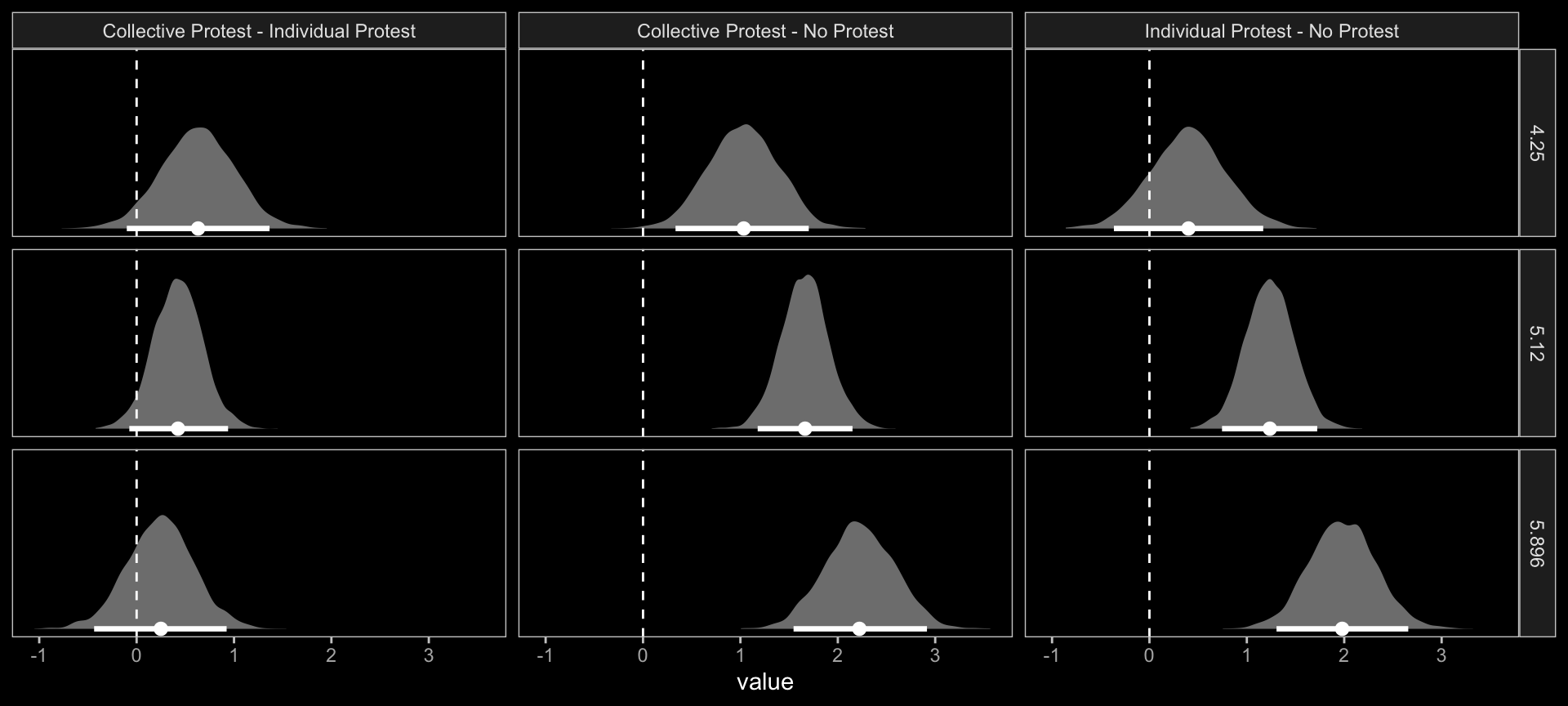

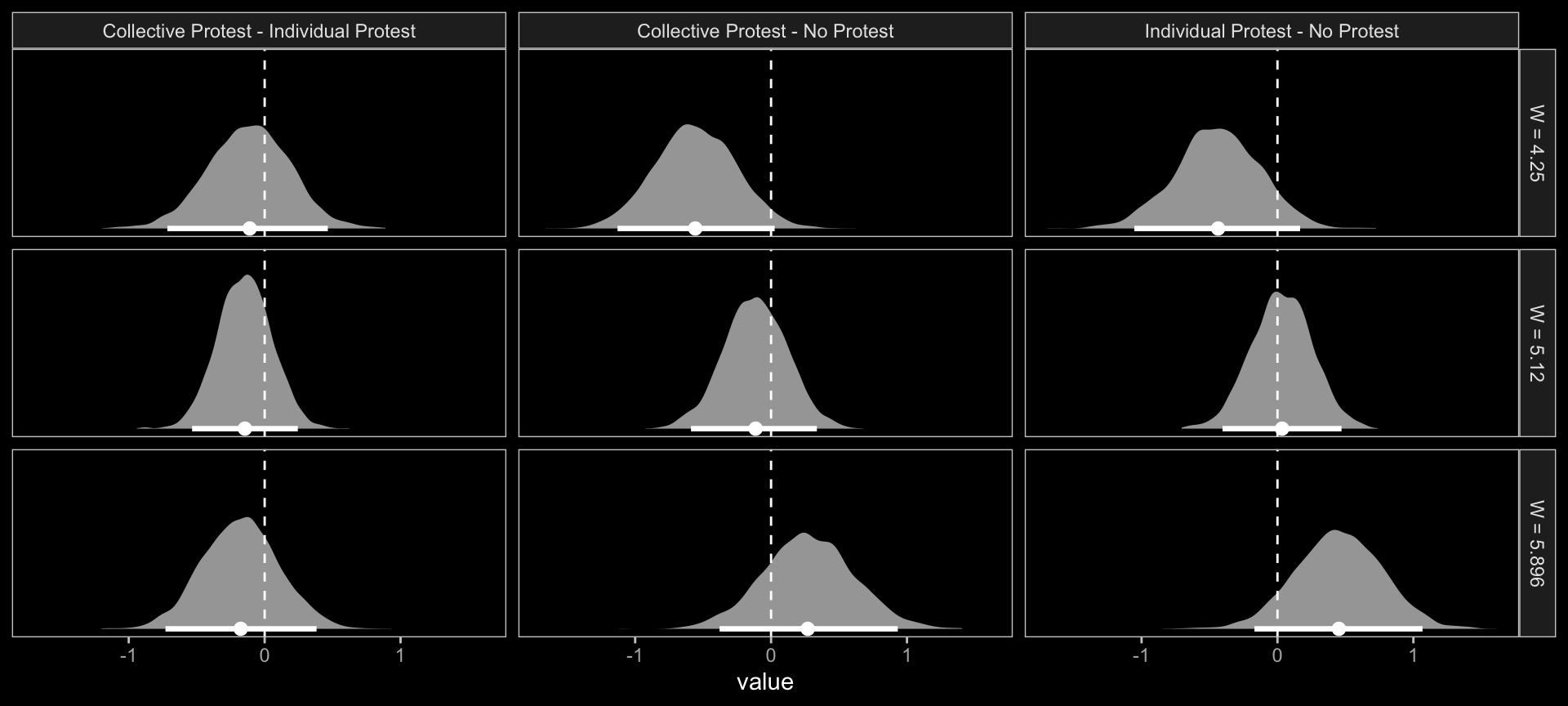

Within our Bayesian modeling paradigm, we don’t have a direct analogue to the \(F\)-tests Hayes presented on page 483. We can just extract the fitted draws and wrangle to get the difference scores.

# we need new `nd` data

nd <- protest %>%

distinct(d1, d2, condition) %>%

expand_grid(sexism = c(4.250, 5.120, 5.896))

# extract the fitted draws

f1 <- add_epred_draws(model13.1,

newdata = nd,

resp = "respappr") %>%

ungroup() %>%

select(sexism, condition, .epred, .draw) %>%

pivot_wider(names_from = condition, values_from = .epred) %>%

mutate(`Individual Protest - No Protest` = `Individual Protest` - `No Protest`,

`Collective Protest - No Protest` = `Collective Protest` - `No Protest`,

`Collective Protest - Individual Protest` = `Collective Protest` - `Individual Protest`)

# a tiny bit more wrangling and we're ready

f1 %>%

pivot_longer(cols = contains("-")) %>%

# plot the difference distributions!

ggplot(aes(x = value, y = 0)) +

stat_halfeye(point_interval = median_qi, .width = .95,

fill = "grey50", color = "white") +

geom_vline(xintercept = 0, linetype = 2) +

scale_y_continuous(NULL, breaks = NULL) +

facet_grid(sexism ~ name) +

dark_theme_bw() +

theme(panel.grid = element_blank())

Did you notice our use of the add_epred_draws() function? add_epred_draws() is a convenience function from tidybayes, that works in a similar way to brms::fitted(). The advantage of the add_epred_draws() approach is it returns the output in a tidy tibble format and, if you use the newdata argument, it will automatically add those predictor values to the output. To learn more about add_epred_draws() and other similar functions, check out Kay’s (2021) tutorial, Extracting and visualizing tidy draws from brms models.

Now we have f1, it’s easy to get the typical numeric summaries for all of the differences.

f1 %>%

select(sexism, contains("-")) %>%

pivot_longer(-sexism) %>%

group_by(name, sexism) %>%

mean_qi() %>%

mutate_if(is.double, round, digits = 3) %>%

select(name:.upper) %>%

rename(mean = value)## # A tibble: 9 × 5

## name sexism mean .lower .upper

## <chr> <dbl> <dbl> <dbl> <dbl>

## 1 Collective Protest - Individual Protest 4.25 0.63 -0.101 1.36

## 2 Collective Protest - Individual Protest 5.12 0.425 -0.075 0.939

## 3 Collective Protest - Individual Protest 5.90 0.243 -0.436 0.924

## 4 Collective Protest - No Protest 4.25 1.03 0.334 1.70

## 5 Collective Protest - No Protest 5.12 1.66 1.18 2.15

## 6 Collective Protest - No Protest 5.90 2.23 1.55 2.92

## 7 Individual Protest - No Protest 4.25 0.398 -0.365 1.17

## 8 Individual Protest - No Protest 5.12 1.24 0.745 1.72

## 9 Individual Protest - No Protest 5.90 1.98 1.30 2.66The three levels of Collective Protest - Individual Protest correspond nicely with some of the analyses Hayes presented on pages 484–486. For example, consider this snip from page 485: “the difference in perceived appropriateness between those told she collectively protested and those told she individually protested is not quite statistically significant, \(\theta_{D_{2}\rightarrow M} | (W = 4.250) = 0.634,\) \(t(123) = 1.705,\) \(p = .091,\) \(95\%\ \text{CI} = -0.102\) to \(1.370\).” That corresponds very nicely to the top row of our last bit of output.

However, these don’t get at the differences Hayes expressed as \(\theta_{D_{1}\rightarrow M}\) on pages 484–486. For those, we’ll have to work directly with the as_draws_df() output.

draws <- as_draws_df(model13.1)

draws %>%

transmute(`4.250` = b_respappr_d1 + `b_respappr_d1:sexism` * 4.250,

`5.210` = b_respappr_d1 + `b_respappr_d1:sexism` * 5.120,

`5.896` = b_respappr_d1 + `b_respappr_d1:sexism` * 5.896) %>%

pivot_longer(everything()) %>%

group_by(name) %>%

mean_qi(value) %>%

mutate_if(is.double, round, digits = 3) %>%

select(name:.upper) %>%

rename(mean = value,

`Difference in Catherine's perceived behavior between being told she protested or not when W` = name)## # A tibble: 3 × 4

## Difference in Catherine's perceived behavior between being told she proteste…¹ mean .lower .upper

## <chr> <dbl> <dbl> <dbl>

## 1 4.250 0.713 0.079 1.34

## 2 5.210 1.45 1.03 1.88

## 3 5.896 2.10 1.51 2.71

## # … with abbreviated variable name

## # ¹`Difference in Catherine's perceived behavior between being told she protested or not when W`In the same way, here are the corresponding posterior summaries for the various combinations of \(\theta_{D_{2}\rightarrow M}\) conditional on three levels of \(W\).

draws %>%

transmute(`4.250` = b_respappr_d2 + `b_respappr_d2:sexism` * 4.250,

`5.210` = b_respappr_d2 + `b_respappr_d2:sexism` * 5.120,

`5.896` = b_respappr_d2 + `b_respappr_d2:sexism` * 5.896) %>%

pivot_longer(everything()) %>%

group_by(name) %>%

mean_qi(value) %>%

mutate_if(is.double, round, digits = 3) %>%

select(name:.upper) %>%

rename(mean = value,

`Difference in Catherine's perceived behavior between being told she protested or not when W` = name)## # A tibble: 3 × 4

## Difference in Catherine's perceived behavior between being told she proteste…¹ mean .lower .upper

## <chr> <dbl> <dbl> <dbl>

## 1 4.250 0.63 -0.101 1.36

## 2 5.210 0.425 -0.075 0.939

## 3 5.896 0.243 -0.436 0.924

## # … with abbreviated variable name

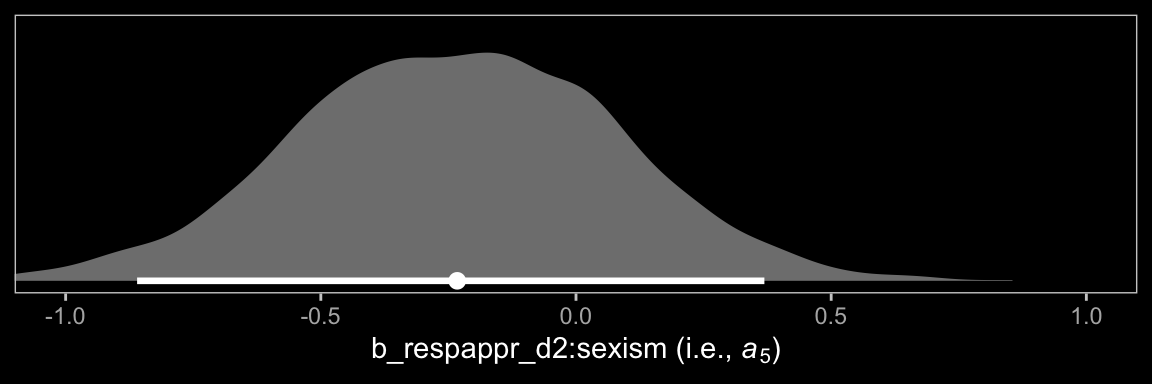

## # ¹`Difference in Catherine's perceived behavior between being told she protested or not when W`At the end of the subsection, Hayes highlighted \(a_5\). Here it is.

draws %>%

ggplot(aes(x = `b_respappr_d2:sexism`, y = 0)) +

stat_halfeye(point_interval = median_qi, .width = 0.95,

fill = "grey50", color = "white") +

scale_y_continuous(NULL, breaks = NULL) +

coord_cartesian(xlim = c(-1, 1)) +

xlab(expression("b_respappr_d2:sexism (i.e., "*italic(a)[5]*")")) +

dark_theme_bw() +

theme(panel.grid = element_blank())

Turns out \(a_5\) has a wide posterior.

13.2.2 Estimating the second stage of the mediation process.

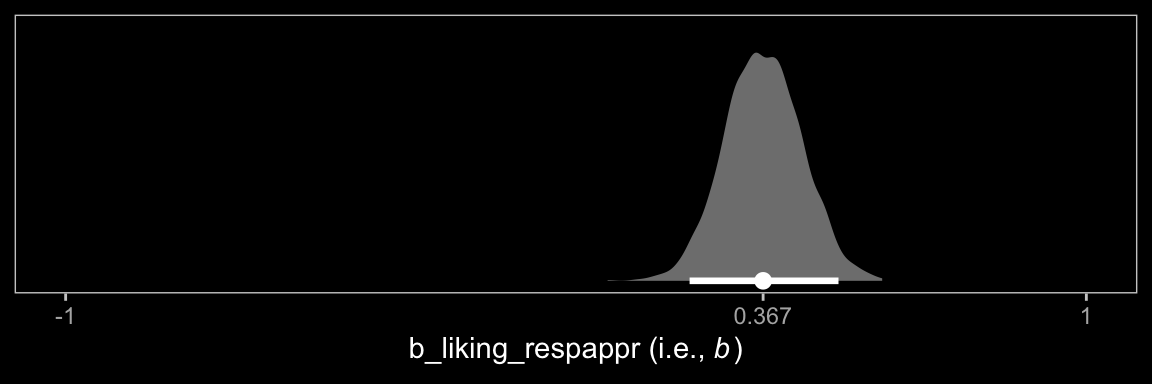

Now here’s \(b\).

draws %>%

ggplot(aes(x = b_liking_respappr, y = 0)) +

stat_halfeye(point_interval = median_qi, .width = 0.95,

fill = "grey50", color = "white") +

scale_x_continuous(expression("b_liking_respappr (i.e., "*italic(b)*")"),

breaks = c(-1, median(draws$b_liking_respappr), 1),

labels = c(-1, median(draws$b_liking_respappr) %>% round(3), 1)) +

scale_y_continuous(NULL, breaks = NULL) +

coord_cartesian(xlim = c(-1, 1)) +

dark_theme_bw() +

theme(panel.grid = element_blank())

Note how we plotted \(b\) on the same range of the \(x\)-axis as we did \(a_5\), above. This posterior is much more narrow.

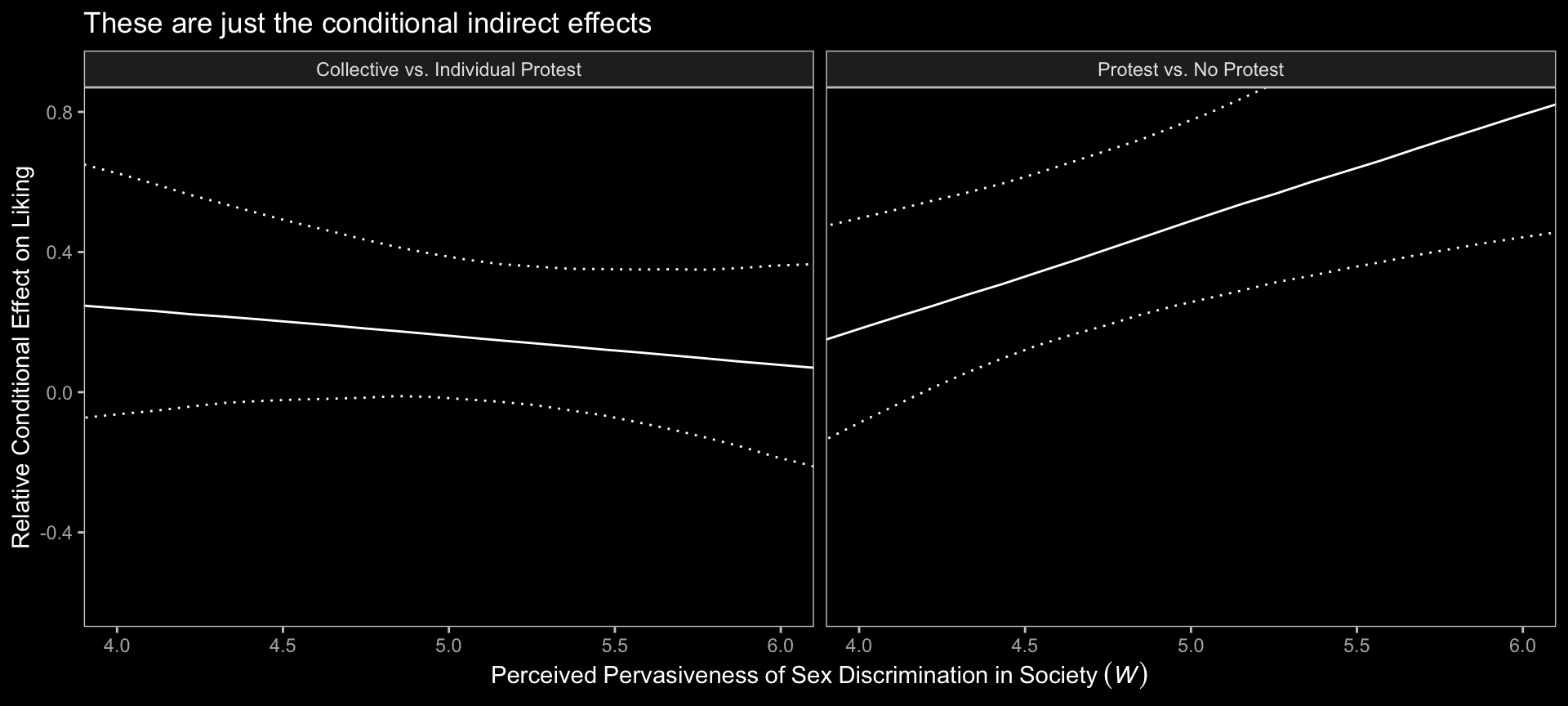

13.3 Relative conditional indirect effects

When \(X\) is a multicategorical variable representing \(g = 3\) groups, there are two indirect effects, which we called relative indirect effects in Chapter 10. But these relative indirect effects are still products of effects. In this example, because one of these effects is a function, then the relative indirect effects become a function as well. (p. 487, emphasis in the original)

Before we use Hayes’s formulas at the top of page 488 to re-express the posterior in terms of the relative conditional indirect effects, we might want to clarify which of the draws columns correspond to the relevant parameters.

- \(a_1\) =

b_respappr_d1 - \(a_2\) =

b_respappr_d2 - \(a_4\) =

b_respappr_d1:sexism - \(a_5\) =

b_respappr_d2:sexism - \(b\) =

b_liking_respappr

To get our posterior transformations, we’ll use the expand_grid()-based approach from Chapter 12. Here’s the preparatory data wrangling.

indirect <- draws %>%

expand_grid(sexism = seq(from = 3.5, to = 6.5, length.out = 30)) %>%

mutate(`Protest vs. No Protest` = (b_respappr_d1 + `b_respappr_d1:sexism` * sexism) * b_liking_respappr,

`Collective vs. Individual Protest` = (b_respappr_d2 + `b_respappr_d2:sexism` * sexism) * b_liking_respappr) %>%

pivot_longer(contains("Protest")) %>%

select(sexism:value) %>%

group_by(name, sexism) %>%

median_qi(value)

head(indirect)## # A tibble: 6 × 8

## name sexism value .lower .upper .width .point .interval

## <chr> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 Collective vs. Individual Protest 3.5 0.281 -0.120 0.770 0.95 median qi

## 2 Collective vs. Individual Protest 3.60 0.273 -0.108 0.738 0.95 median qi

## 3 Collective vs. Individual Protest 3.71 0.264 -0.0963 0.707 0.95 median qi

## 4 Collective vs. Individual Protest 3.81 0.255 -0.0840 0.678 0.95 median qi

## 5 Collective vs. Individual Protest 3.91 0.246 -0.0716 0.646 0.95 median qi

## 6 Collective vs. Individual Protest 4.02 0.238 -0.0616 0.621 0.95 median qiNow we’ve saved our results in indirect, we just need to plug them into ggplot() to make our version of Figure 13.4.

indirect %>%

ggplot(aes(x = sexism, y = value, ymin = .lower, ymax = .upper, group = name)) +

geom_ribbon(color = "white", fill = "transparent", linetype = 3) +

geom_line(color = "white") +

coord_cartesian(xlim = c(4, 6),

ylim = c(-.6, .8)) +

labs(title = "These are just the conditional indirect effects",

x = expression(Perceived~Pervasiveness~of~Sex~Discrimination~'in'~Society~(italic(W))),

y = "Relative Conditional Effect on Liking") +

dark_theme_bw() +

theme(legend.position = "none",

panel.grid = element_blank()) +

facet_grid(~ name)

Do not that unlike the figure in the text, we’re only displaying the conditional indirect effects. Once you include the 95% intervals, things get too cluttered to add in other effects. Here’s how we might make our version of Table 13.2 based on posterior means.

draws %>%

expand_grid(w = c(4.250, 5.125, 5.896)) %>%

rename(b = b_liking_respappr) %>%

mutate(`relative effect of d1` = (b_respappr_d1 + `b_respappr_d1:sexism` * w),

`relative effect of d2` = (b_respappr_d2 + `b_respappr_d2:sexism` * w)) %>%

mutate(`conditional indirect effect of d1` = `relative effect of d1` * b,

`conditional indirect effect of d2` = `relative effect of d2` * b) %>%

pivot_longer(cols = c(contains("of d"), b)) %>%

group_by(w, name) %>%

summarise(mean = mean(value) %>% round(digits = 3)) %>%

pivot_wider(names_from = name, values_from = mean) %>%

select(w, `relative effect of d1`, `relative effect of d2`, everything())## # A tibble: 3 × 6

## # Groups: w [3]

## w `relative effect of d1` `relative effect of d2` b conditional indirect effect …¹ condi…²

## <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 4.25 0.713 0.63 0.367 0.263 0.231

## 2 5.12 1.45 0.424 0.367 0.534 0.156

## 3 5.90 2.10 0.243 0.367 0.773 0.089

## # … with abbreviated variable names ¹`conditional indirect effect of d1`,

## # ²`conditional indirect effect of d2`13.4 Testing and probing moderation of mediation

Surely by now you knew we weren’t going to be satisfied with summarizing the model with a bunch of posterior means.

13.4.1 A test of moderation of the relative indirect effect.

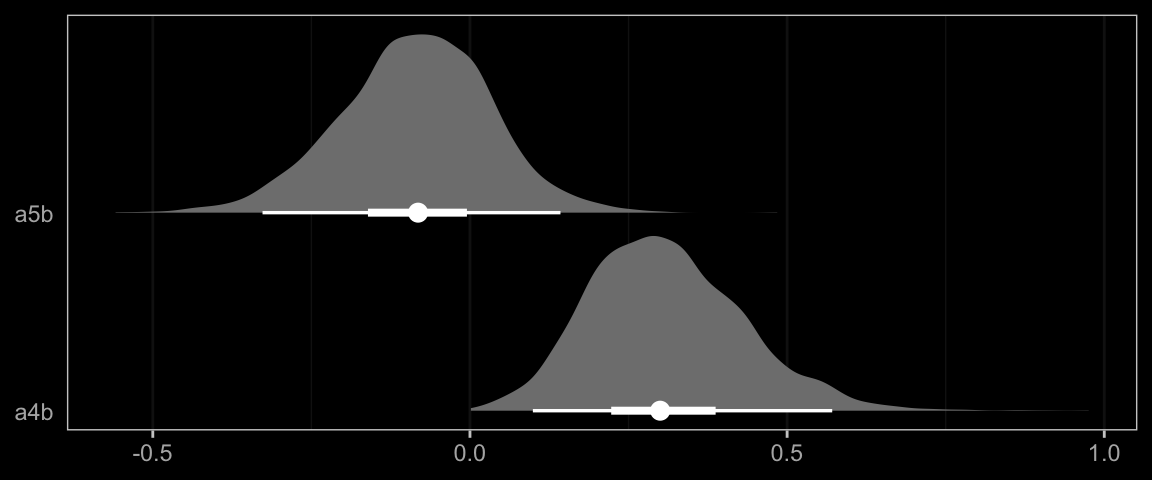

In this section Hayes referred to \(a_4 b\) and \(a_5b\) as the indexes of moderated mediation of the indirect effects of Protest vs. No Protest and Collective vs. Individual Protest, respectively. To express their uncertainty we’ll just work directly with the as_draws_df() output, which we’ve saved as draws.

draws <- draws %>%

mutate(a4b = `b_respappr_d1:sexism` * b_liking_respappr,

a5b = `b_respappr_d2:sexism` * b_liking_respappr)

draws %>%

pivot_longer(a4b:a5b, names_to = "parameter") %>%

group_by(parameter) %>%

mean_qi(value) %>%

mutate_if(is.double, round, digits = 3) %>%

select(parameter:.upper)## # A tibble: 2 × 4

## parameter value .lower .upper

## <chr> <dbl> <dbl> <dbl>

## 1 a4b 0.31 0.099 0.571

## 2 a5b -0.086 -0.327 0.143Here they are in a stat_halfeye() plot.

draws %>%

pivot_longer(a4b:a5b, names_to = "parameter") %>%

ggplot(aes(x = value, y = parameter)) +

stat_halfeye(point_interval = median_qi, .width = c(0.95, 0.5),

fill = "grey50", color = "white") +

scale_y_discrete(NULL, expand = expansion(add = 0.1)) +

xlab(NULL) +

dark_theme_bw() +

theme(axis.ticks.y = element_blank(),

panel.grid.major.y = element_blank(),

panel.grid.minor.y = element_blank())

13.4.2 Probing moderation of mediation.

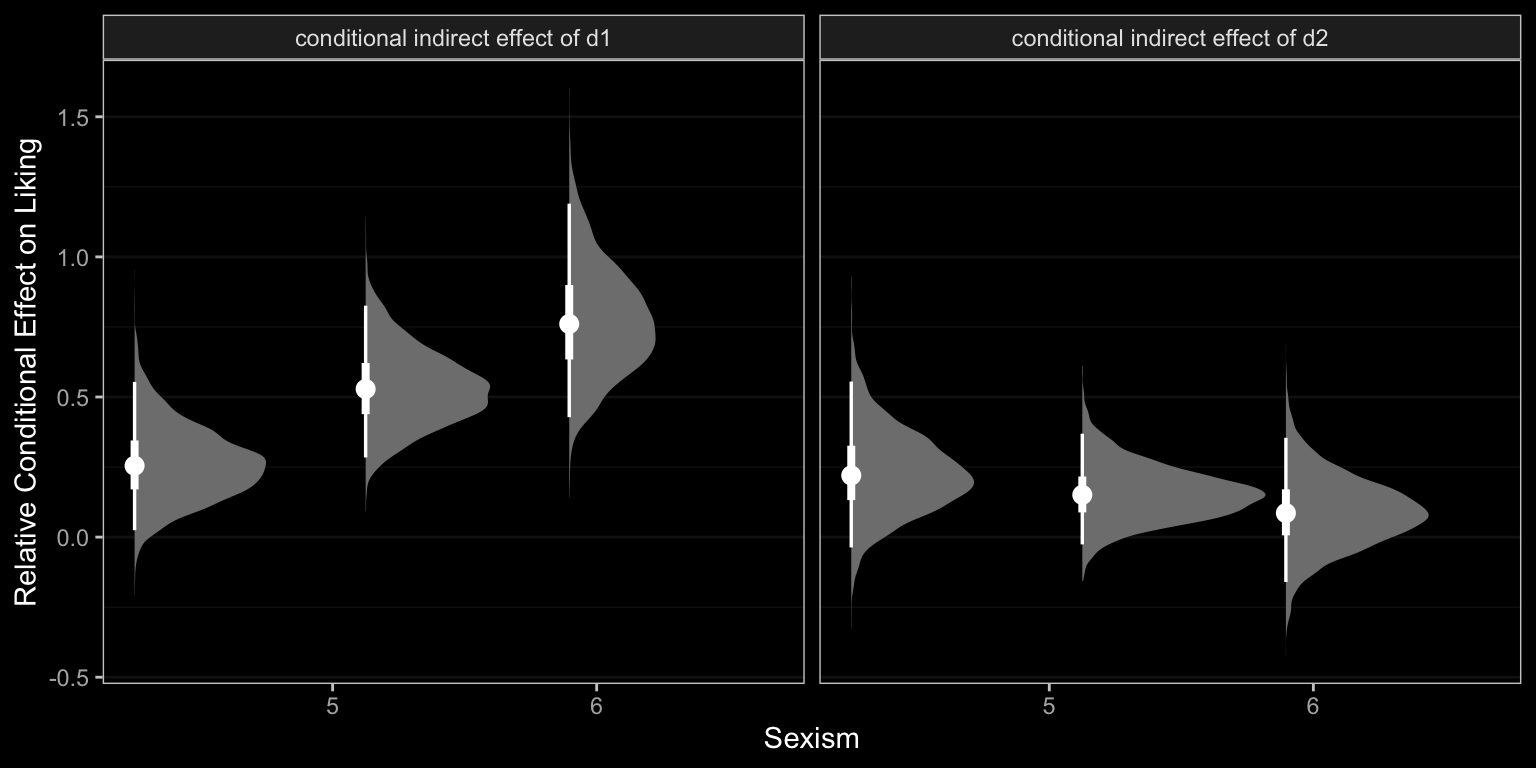

We already computed the relevant 95% credible intervals at the end of Section 13.3. We could inspect those in a stat_halfeye() plot, too.

# we did this all before

draws %>%

expand_grid(w = c(4.250, 5.125, 5.896)) %>%

rename(b = b_liking_respappr) %>%

mutate(`relative effect of d1` = (b_respappr_d1 + `b_respappr_d1:sexism` * w),

`relative effect of d2` = (b_respappr_d2 + `b_respappr_d2:sexism` * w)) %>%

mutate(`conditional indirect effect of d1` = `relative effect of d1` * b,

`conditional indirect effect of d2` = `relative effect of d2` * b) %>%

pivot_longer(contains("conditional")) %>%

# now plot instead of summarizing

ggplot(aes(x = w, y = value)) +

stat_halfeye(point_interval = median_qi, .width = c(0.95, 0.5),

fill = "grey50", color = "white") +

labs(x = "Sexism",

y = "Relative Conditional Effect on Liking") +

dark_theme_bw() +

theme(panel.grid.minor.x = element_blank(),

panel.grid.major.x = element_blank()) +

facet_wrap(~ name)

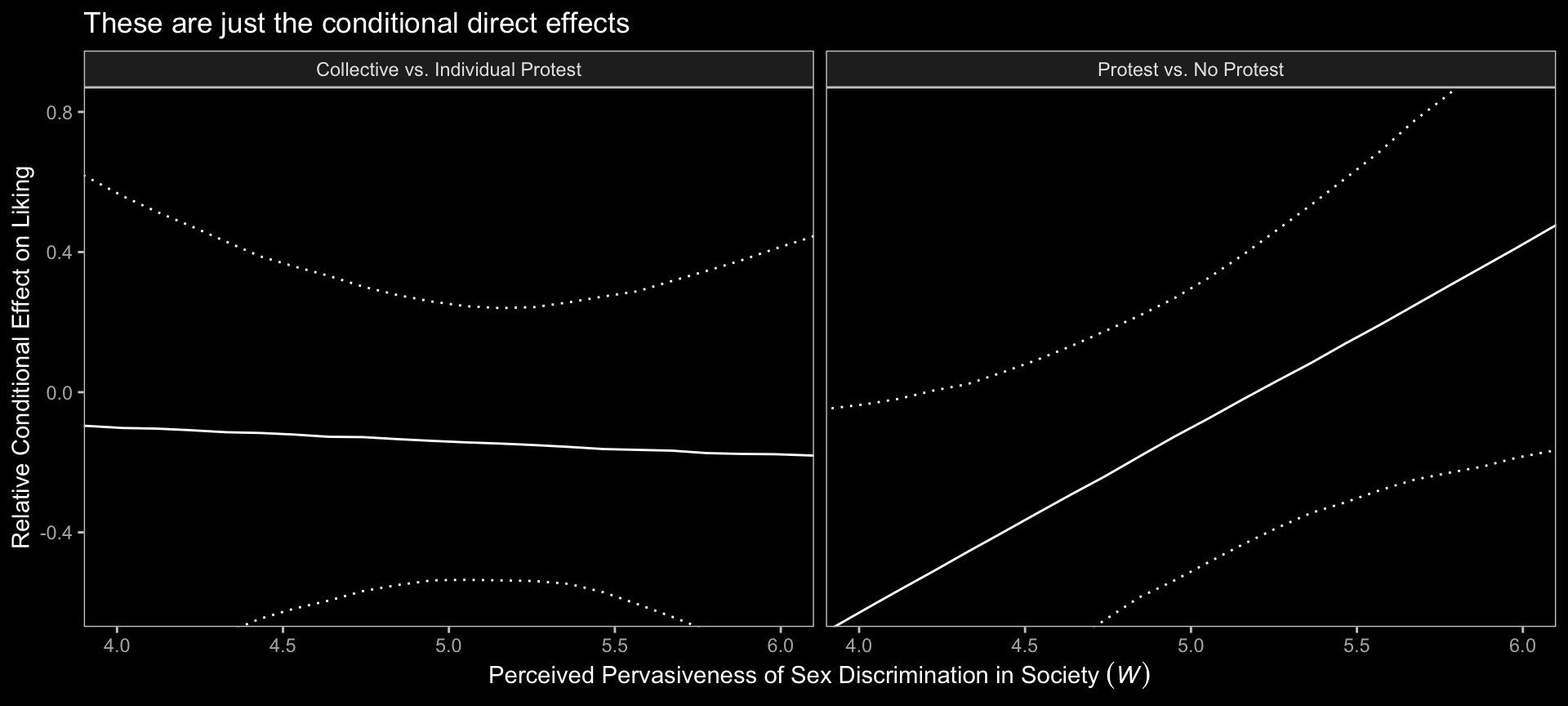

13.5 Relative conditional direct effects

In order to get the \(R^2\) difference distribution analogous to the change in \(R^2\) \(F\)-test Hayes discussed on pages 495–496, we’ll have to first refit the model without the interaction for the \(Y\) criterion, liking.

m_model <- bf(respappr ~ 1 + d1 + d2 + sexism + d1:sexism + d2:sexism)

y_model <- bf(liking ~ 1 + d1 + d2 + respappr + sexism)

model13.3 <- brm(

data = protest,

family = gaussian,

m_model + y_model + set_rescor(FALSE),

chains = 4, cores = 4,

file = "fits/model13.03")Here’s the \(\Delta R^2\) density for our \(Y\), liking.

# wrangle

tibble(model13.1 = bayes_R2(model13.1, resp = "liking", summary = F)[, 1],

model13.3 = bayes_R2(model13.3, resp = "liking", summary = F)[, 1]) %>%

mutate(difference = model13.1 - model13.3) %>%

# plot

ggplot(aes(x = difference, y = 0)) +

stat_halfeye(point_interval = median_qi, .width = c(0.95, 0.5),

fill = "grey50", color = "white") +

scale_y_continuous(NULL, breaks = NULL) +

coord_cartesian(xlim = c(-.5, .5)) +

xlab(expression(Delta*italic(R)^2)) +

dark_theme_bw() +

theme(panel.grid = element_blank())

We’ll also compare the models by their information criteria.

model13.3 <- add_criterion(model13.3, criterion = c("waic", "loo"))

loo_compare(model13.1, model13.3, criterion = "loo") %>% print(simplify = F)## elpd_diff se_diff elpd_loo se_elpd_loo p_loo se_p_loo looic se_looic

## model13.1 0.0 0.0 -380.1 14.8 16.1 2.7 760.3 29.6

## model13.3 -0.7 2.8 -380.8 15.6 14.6 2.7 761.6 31.2loo_compare(model13.1, model13.3, criterion = "waic") %>% print(simplify = F)## elpd_diff se_diff elpd_waic se_elpd_waic p_waic se_p_waic waic se_waic

## model13.1 0.0 0.0 -379.9 14.8 15.9 2.7 759.9 29.5

## model13.3 -0.7 2.8 -380.6 15.5 14.4 2.6 761.3 31.1As when we went through these steps for resp = "respappr", above, the Bayesian \(R^2\), the LOO-CV, and the WAIC all suggest there’s little difference between the two models with respect to predictive utility. In such a case, I’d lean on theory to choose between them. If inclined, one could also do Bayesian model averaging.

Our approach to plotting the relative conditional direct effects will mirror what we did for the relative conditional indirect effects, above. Here are the brm() parameters that correspond to the parameter names of Hayes’s notation.

- \(c_1\) =

b_liking_d1 - \(c_2\) =

b_liking_d2 - \(c_4\) =

b_liking_d1:sexism - \(c_5\) =

b_liking_d2:sexism

With all clear, we’re ready to make our version of Figure 13.4 with respect to the conditional direct effects.

# wrangle

draws %>%

expand_grid(sexism = seq(from = 3.5, to = 6.5, length.out = 30)) %>%

mutate(`Protest vs. No Protest` = b_liking_d1 + `b_liking_d1:sexism` * sexism,

`Collective vs. Individual Protest` = b_liking_d2 + `b_liking_d2:sexism` * sexism) %>%

pivot_longer(contains("Protest")) %>%

group_by(name, sexism) %>%

median_qi(value) %>%

# plot

ggplot(aes(x = sexism, y = value, ymin = .lower, ymax = .upper)) +

geom_ribbon(color = "white", fill = "transparent", linetype = 3) +

geom_line() +

coord_cartesian(xlim = c(4, 6),

ylim = c(-.6, .8)) +

labs(title = "These are just the conditional direct effects",

x = expression("Perceived Pervasiveness of Sex Discrimination in Society "*(italic(W))),

y = "Relative Conditional Effect on Liking") +

dark_theme_bw() +

theme(legend.position = "none",

panel.grid = element_blank()) +

facet_grid(~ name)

Holy smokes, them are some wide 95% CIs! No wonder the information criteria and \(R^2\) comparisons were so uninspiring.

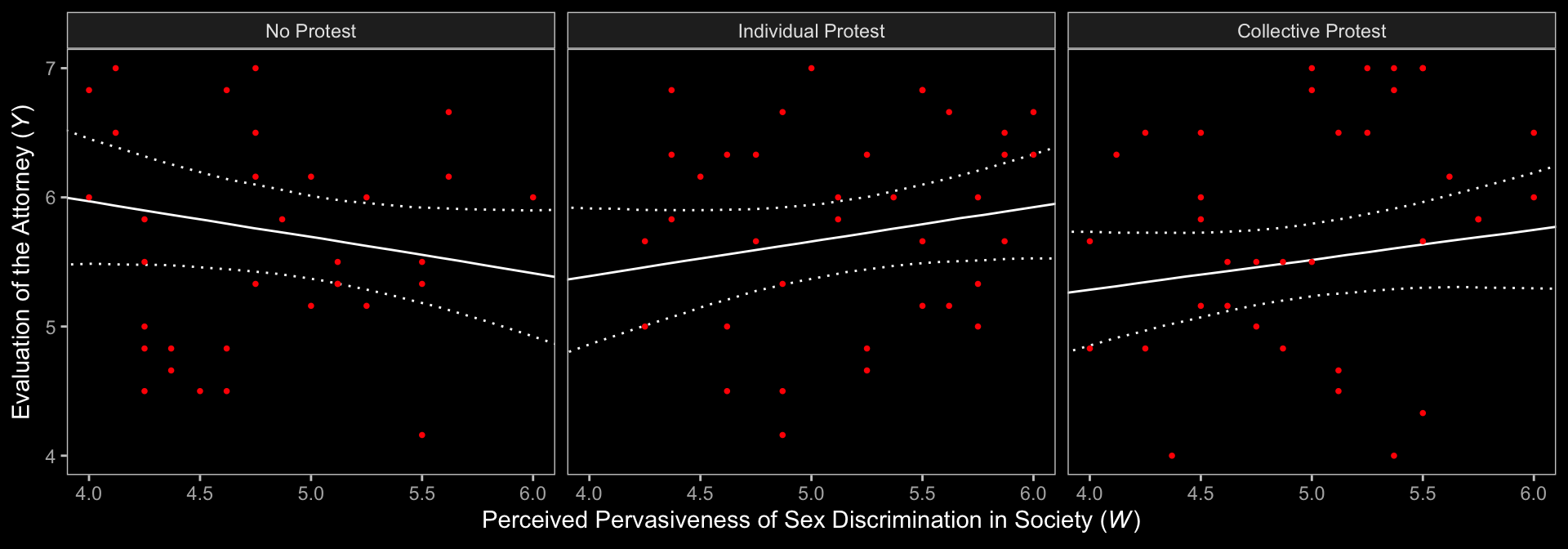

Notice that the \(y\)-axis is on the parameter space. When Hayes made his Figure 13.5, he put the \(y\)-axis on the liking space, instead. When we want things in the parameter space, we work with the output of as_draws_df(); when we want them in the criterion space, we typically use fitted(). This time, however, we’ll practice again with tidbayes::add_epred_draws().

# we need new `nd` data

nd <- protest %>%

distinct(d1, d2, condition) %>%

expand_grid(sexism = seq(from = 3.5, to = 6.5, length.out = 30)) %>%

mutate(respappr = mean(protest$respappr))

# feed `nd` into `add_epred_draws()` and then summarize with `median_qi()`

f <- add_epred_draws(model13.1,

newdata = nd,

resp = "liking") %>%

median_qi(.epred)

# plot!

f %>%

ggplot(aes(x = sexism)) +

geom_ribbon(aes(ymin = .lower, ymax = .upper),

linetype = 3, color = "white", fill = "transparent") +

geom_line(aes(y = .epred)) +

geom_point(data = protest,

aes(y = liking),

color = "red", size = 2/3) +

coord_cartesian(xlim = c(4, 6),

ylim = c(4, 7)) +

labs(x = expression(paste("Perceived Pervasiveness of Sex Discrimination in Society (", italic(W), ")")),

y = expression(paste("Evaluation of the Attorney (", italic(Y), ")"))) +

dark_theme_bw() +

theme(panel.grid = element_blank()) +

facet_wrap(~ condition)

Relative to the text, we expanded the range of the \(y\)-axis a bit to show more of that data (and there’s even more data outside of our expanded range). Also note how after doing so and after including the 95% CI bands, the crossing regression line effect in Hayes’s Figure 13.5 isn’t as impressive looking any more.

On pages 497 and 498, Hayes discussed more omnibus \(F\)-tests. Much like with the \(M\) criterion, we won’t come up with Bayesian \(F\)-tests, but we might go ahead and make pairwise comparisons at the three percentiles Hayes prefers.

# we need new `nd` data

nd <- protest %>%

distinct(d1, d2, condition) %>%

expand_grid(sexism = c(4.250, 5.120, 5.896)) %>%

mutate(respappr = mean(protest$respappr))

# define f

f <- add_epred_draws(model13.1,

newdata = nd,

resp = "liking") %>%

ungroup() %>%

select(condition, sexism, .draw, .epred) %>%

pivot_wider(names_from = condition, values_from = .epred) %>%

mutate(`Individual Protest - No Protest` = `Individual Protest` - `No Protest`,

`Collective Protest - No Protest` = `Collective Protest` - `No Protest`,

`Collective Protest - Individual Protest` = `Collective Protest` - `Individual Protest`)

# a tiny bit more wrangling and we're ready to plot the difference distributions

f %>%

select(sexism, contains("-")) %>%

pivot_longer(-sexism) %>%

mutate(sexism = str_c("W = ", sexism)) %>%

ggplot(aes(x = value, y = 0)) +

stat_halfeye(point_interval = median_qi, .width = .95,

color = "white") +

geom_vline(xintercept = 0, linetype = 2) +

scale_y_continuous(NULL, breaks = NULL) +

dark_theme_bw() +

theme(panel.grid = element_blank()) +

facet_grid(sexism ~ name)

Now we have f, it’s easy to get the typical numeric summaries for the differences.

f %>%

select(sexism, contains("-")) %>%

pivot_longer(-sexism) %>%

group_by(name, sexism) %>%

mean_qi(value) %>%

mutate_if(is.double, round, digits = 3) %>%

select(name:.upper) %>%

rename(mean = value)## # A tibble: 9 × 5

## name sexism mean .lower .upper

## <chr> <dbl> <dbl> <dbl> <dbl>

## 1 Collective Protest - Individual Protest 4.25 -0.114 -0.716 0.465

## 2 Collective Protest - Individual Protest 5.12 -0.147 -0.534 0.244

## 3 Collective Protest - Individual Protest 5.90 -0.176 -0.73 0.382

## 4 Collective Protest - No Protest 4.25 -0.554 -1.13 0.026

## 5 Collective Protest - No Protest 5.12 -0.115 -0.589 0.337

## 6 Collective Protest - No Protest 5.90 0.277 -0.379 0.933

## 7 Individual Protest - No Protest 4.25 -0.44 -1.05 0.166

## 8 Individual Protest - No Protest 5.12 0.032 -0.405 0.471

## 9 Individual Protest - No Protest 5.90 0.453 -0.17 1.07We don’t have \(p\)-values, but who needs them? All the differences are small in magnitude and have wide 95% intervals straddling zero.

To get the difference scores Hayes presented on pages 498–500, one might execute something like this.

draws %>%

transmute(d1_4.250 = b_liking_d1 + `b_liking_d1:sexism` * 4.250,

d1_5.120 = b_liking_d1 + `b_liking_d1:sexism` * 5.120,

d1_5.896 = b_liking_d1 + `b_liking_d1:sexism` * 5.896,

d2_4.250 = b_liking_d2 + `b_liking_d2:sexism` * 4.250,

d2_5.120 = b_liking_d2 + `b_liking_d2:sexism` * 5.120,

d2_5.896 = b_liking_d2 + `b_liking_d2:sexism` * 5.896) %>%

pivot_longer(everything(),

names_sep = "_",

names_to = c("protest dummy", "sexism")) %>%

group_by(`protest dummy`, sexism) %>%

mean_qi() %>%

mutate_if(is.double, round, digits = 3) %>%

select(`protest dummy`:.upper) %>%

rename(mean = value)## # A tibble: 6 × 5

## `protest dummy` sexism mean .lower .upper

## <chr> <chr> <dbl> <dbl> <dbl>

## 1 d1 4.250 -0.497 -1.01 0.011

## 2 d1 5.120 -0.042 -0.453 0.371

## 3 d1 5.896 0.365 -0.208 0.949

## 4 d2 4.250 -0.114 -0.716 0.465

## 5 d2 5.120 -0.147 -0.534 0.244

## 6 d2 5.896 -0.176 -0.73 0.382Each of those was our Bayesian version of an iteration of what you might call \(\theta_{D_i \rightarrow Y} | W\).

Session info

sessionInfo()## R version 4.2.2 (2022-10-31)

## Platform: x86_64-apple-darwin17.0 (64-bit)

## Running under: macOS Big Sur ... 10.16

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRlapack.dylib

##

## locale:

## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

##

## attached base packages:

## [1] stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] tidybayes_3.0.2 ggdark_0.2.1 brms_2.18.0 Rcpp_1.0.9 forcats_0.5.1 stringr_1.4.1

## [7] dplyr_1.0.10 purrr_1.0.1 readr_2.1.2 tidyr_1.2.1 tibble_3.1.8 ggplot2_3.4.0

## [13] tidyverse_1.3.2

##

## loaded via a namespace (and not attached):

## [1] readxl_1.4.1 backports_1.4.1 plyr_1.8.7 igraph_1.3.4

## [5] svUnit_1.0.6 splines_4.2.2 crosstalk_1.2.0 TH.data_1.1-1

## [9] rstantools_2.2.0 inline_0.3.19 digest_0.6.31 htmltools_0.5.3

## [13] fansi_1.0.3 magrittr_2.0.3 checkmate_2.1.0 googlesheets4_1.0.1

## [17] tzdb_0.3.0 modelr_0.1.8 RcppParallel_5.1.5 matrixStats_0.63.0

## [21] vroom_1.5.7 xts_0.12.1 sandwich_3.0-2 prettyunits_1.1.1

## [25] colorspace_2.0-3 rvest_1.0.2 ggdist_3.2.1 haven_2.5.1

## [29] xfun_0.35 callr_3.7.3 crayon_1.5.2 jsonlite_1.8.4

## [33] lme4_1.1-31 survival_3.4-0 zoo_1.8-10 glue_1.6.2

## [37] gtable_0.3.1 gargle_1.2.0 emmeans_1.8.0 distributional_0.3.1

## [41] pkgbuild_1.3.1 rstan_2.21.8 abind_1.4-5 scales_1.2.1

## [45] mvtnorm_1.1-3 emo_0.0.0.9000 DBI_1.1.3 miniUI_0.1.1.1

## [49] xtable_1.8-4 bit_4.0.4 stats4_4.2.2 StanHeaders_2.21.0-7

## [53] DT_0.24 htmlwidgets_1.5.4 httr_1.4.4 threejs_0.3.3

## [57] arrayhelpers_1.1-0 posterior_1.3.1 ellipsis_0.3.2 pkgconfig_2.0.3

## [61] loo_2.5.1 farver_2.1.1 sass_0.4.2 dbplyr_2.2.1

## [65] utf8_1.2.2 labeling_0.4.2 tidyselect_1.2.0 rlang_1.0.6

## [69] reshape2_1.4.4 later_1.3.0 munsell_0.5.0 cellranger_1.1.0

## [73] tools_4.2.2 cachem_1.0.6 cli_3.6.0 generics_0.1.3

## [77] broom_1.0.2 evaluate_0.18 fastmap_1.1.0 processx_3.8.0

## [81] knitr_1.40 bit64_4.0.5 fs_1.5.2 nlme_3.1-160

## [85] mime_0.12 projpred_2.2.1 xml2_1.3.3 compiler_4.2.2

## [89] bayesplot_1.10.0 shinythemes_1.2.0 rstudioapi_0.13 gamm4_0.2-6

## [93] reprex_2.0.2 bslib_0.4.0 stringi_1.7.8 highr_0.9

## [97] ps_1.7.2 Brobdingnag_1.2-8 lattice_0.20-45 Matrix_1.5-1

## [101] nloptr_2.0.3 markdown_1.1 shinyjs_2.1.0 tensorA_0.36.2

## [105] vctrs_0.5.1 pillar_1.8.1 lifecycle_1.0.3 jquerylib_0.1.4

## [109] bridgesampling_1.1-2 estimability_1.4.1 httpuv_1.6.5 R6_2.5.1

## [113] bookdown_0.28 promises_1.2.0.1 gridExtra_2.3 codetools_0.2-18

## [117] boot_1.3-28 colourpicker_1.1.1 MASS_7.3-58.1 gtools_3.9.4

## [121] assertthat_0.2.1 withr_2.5.0 shinystan_2.6.0 multcomp_1.4-20

## [125] mgcv_1.8-41 parallel_4.2.2 hms_1.1.1 grid_4.2.2

## [129] minqa_1.2.5 coda_0.19-4 rmarkdown_2.16 googledrive_2.0.0

## [133] shiny_1.7.2 lubridate_1.8.0 base64enc_0.1-3 dygraphs_1.1.1.6