Chapter 2 R Lab 1 - 22/03/2023

In this lecture we will learn how to implement the K-nearest neighbors (KNN) method for classification and regression problems.

The following packages are required: tidyverseand tidymodels. You already know the tidyverse package from the Coding for Data Science course (module 1 of this course). The tidymodels package is briefly described in the following section.

library(tidyverse)## ── Attaching packages ──────────────────── tidyverse 1.3.2 ──

## ✔ ggplot2 3.3.6 ✔ purrr 0.3.4

## ✔ tibble 3.1.7 ✔ dplyr 1.0.9

## ✔ tidyr 1.2.0 ✔ stringr 1.4.0

## ✔ readr 2.1.2 ✔ forcats 0.5.1

## ── Conflicts ─────────────────────── tidyverse_conflicts() ──

## ✖ dplyr::filter() masks stats::filter()

## ✖ dplyr::lag() masks stats::lag()library(tidymodels)## ── Attaching packages ─────────────────── tidymodels 1.0.0 ──

## ✔ broom 1.0.4 ✔ rsample 1.1.1

## ✔ dials 1.1.0 ✔ tune 1.0.1

## ✔ infer 1.0.4 ✔ workflows 1.1.3

## ✔ modeldata 1.1.0 ✔ workflowsets 1.0.0

## ✔ parsnip 1.0.4 ✔ yardstick 1.1.0

## ✔ recipes 1.0.1

## ── Conflicts ────────────────────── tidymodels_conflicts() ──

## ✖ scales::discard() masks purrr::discard()

## ✖ dplyr::filter() masks stats::filter()

## ✖ recipes::fixed() masks stringr::fixed()

## ✖ dplyr::lag() masks stats::lag()

## ✖ yardstick::spec() masks readr::spec()

## ✖ recipes::step() masks stats::step()

## • Use tidymodels_prefer() to resolve common conflicts.2.1 The tidymodels package

The tidymodels package is a collection of packages for statistical analysis and modeling. It shares the same philosophy, grammar and data structure of the tidyverse package (see https://tidymodels.tidymodels.org for more information).

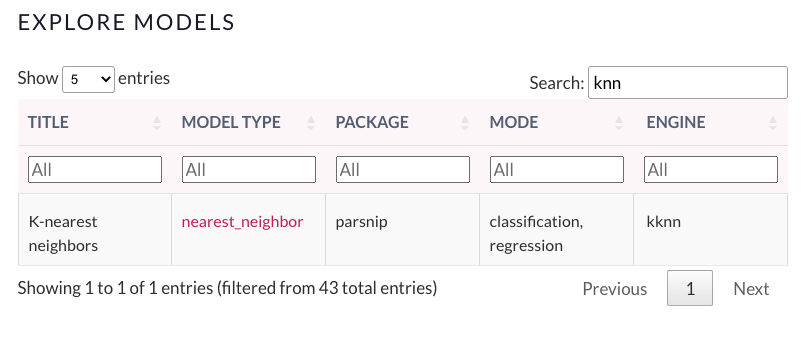

In R there could be several packages that fit the same type of model. For example KNN can be implemented by using the kknn and FNN package. Note that each package is unique in the way it defines the function (and its arguments) for model fitting. tidymodels represents an interface to these packages (named engine) implementing the same model. At the link https://www.tidymodels.org/find/parsnip/ you can find all the models (and corresponding engines) that can be implemented using tidymodels. In this lab we are interested in KNN, thus we searched for KNN at the previous link. The following picture shows that the tidymodels function we will need is called nearest_neighbor and the engine that will be used is kknn. This means that the kknn package is also required for this lab.

library(kknn)2.2 KNN for regression problems

For KNN regression we will use data regarding bike sharing (link). The data are stored in the file named bikesharing.csv which is available in the e-learning. The data regard the bike sharing counts aggregated on daily basis.

We start by importing and exploring the data. Please, note that in the following code my file path is shown; obviously for your computer it will be different:

bike = read.csv("./files/bikesharing.csv")

head(bike)## instant dteday season yr mnth holiday weekday

## 1 1 2011-01-01 1 0 1 0 6

## 2 2 2011-01-02 1 0 1 0 0

## 3 3 2011-01-03 1 0 1 0 1

## 4 4 2011-01-04 1 0 1 0 2

## 5 5 2011-01-05 1 0 1 0 3

## 6 6 2011-01-06 1 0 1 0 4

## workingday weathersit temp atemp hum windspeed

## 1 0 2 0.344167 0.363625 0.805833 0.1604460

## 2 0 2 0.363478 0.353739 0.696087 0.2485390

## 3 1 1 0.196364 0.189405 0.437273 0.2483090

## 4 1 1 0.200000 0.212122 0.590435 0.1602960

## 5 1 1 0.226957 0.229270 0.436957 0.1869000

## 6 1 1 0.204348 0.233209 0.518261 0.0895652

## casual registered cnt

## 1 331 654 985

## 2 131 670 801

## 3 120 1229 1349

## 4 108 1454 1562

## 5 82 1518 1600

## 6 88 1518 1606glimpse(bike)## Rows: 731

## Columns: 16

## $ instant <int> 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 1…

## $ dteday <chr> "2011-01-01", "2011-01-02", "2011-01-03"…

## $ season <int> 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1…

## $ yr <int> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0…

## $ mnth <int> 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1…

## $ holiday <int> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0…

## $ weekday <int> 6, 0, 1, 2, 3, 4, 5, 6, 0, 1, 2, 3, 4, 5…

## $ workingday <int> 0, 0, 1, 1, 1, 1, 1, 0, 0, 1, 1, 1, 1, 1…

## $ weathersit <int> 2, 2, 1, 1, 1, 1, 2, 2, 1, 1, 2, 1, 1, 1…

## $ temp <dbl> 0.344167, 0.363478, 0.196364, 0.200000, …

## $ atemp <dbl> 0.363625, 0.353739, 0.189405, 0.212122, …

## $ hum <dbl> 0.805833, 0.696087, 0.437273, 0.590435, …

## $ windspeed <dbl> 0.1604460, 0.2485390, 0.2483090, 0.16029…

## $ casual <int> 331, 131, 120, 108, 82, 88, 148, 68, 54,…

## $ registered <int> 654, 670, 1229, 1454, 1518, 1518, 1362, …

## $ cnt <int> 985, 801, 1349, 1562, 1600, 1606, 1510, …The dataset is composed by 731 rows (i.e. days) and 16 variables. We are in particular interested in the following quantitative variables (in this case \(p=3\) regressors):

atemp: normalized feeling temperature in Celsiushum: normalized humiditywindspeed: normalized wind speedcnt: count of total rental bikes (response variable)

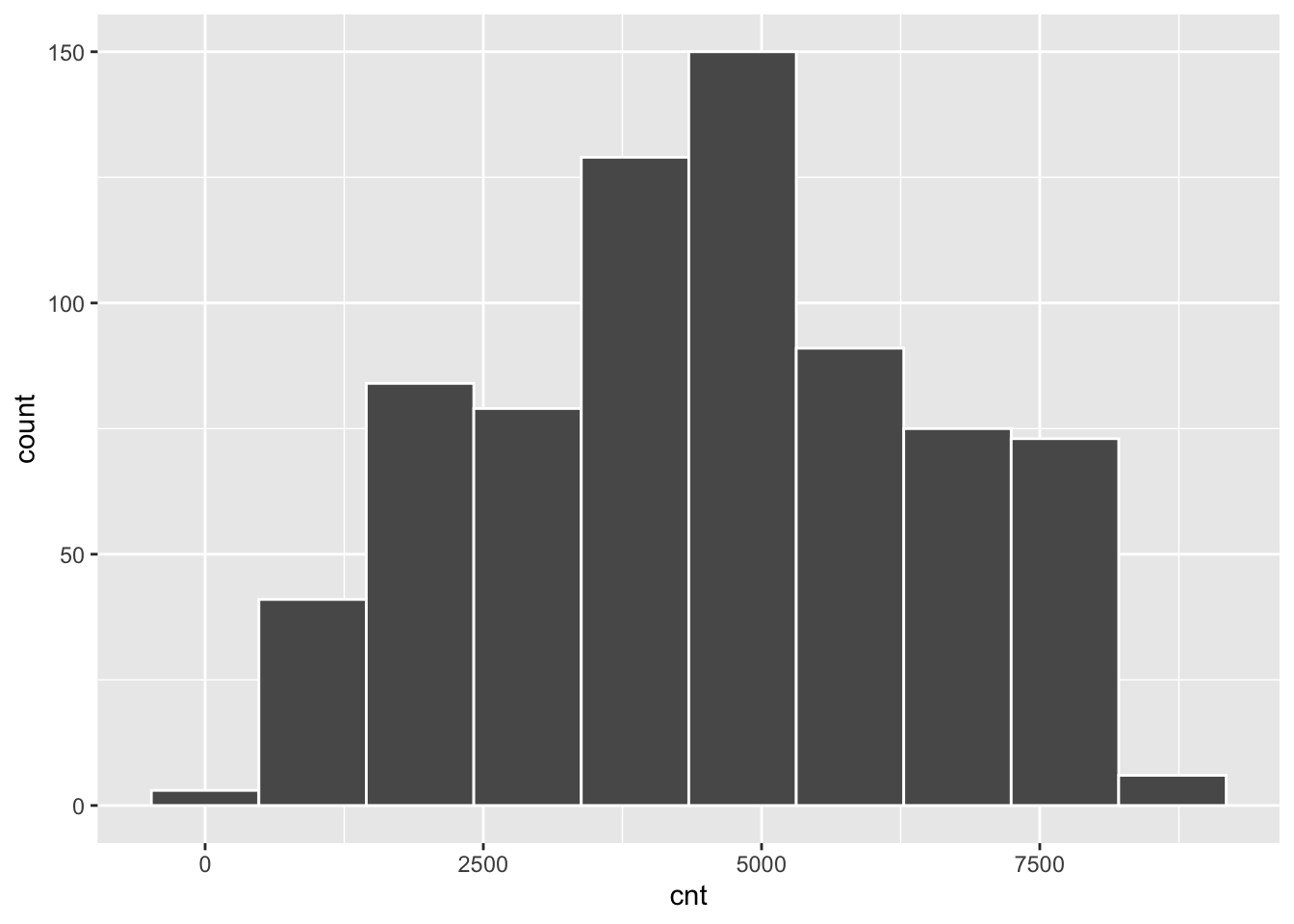

The response variable cnt can be summarized as follows:

summary(bike$cnt)## Min. 1st Qu. Median Mean 3rd Qu. Max.

## 22 3152 4548 4504 5956 8714bike %>%

ggplot()+

geom_histogram(aes(cnt),

bins=10, col="white")

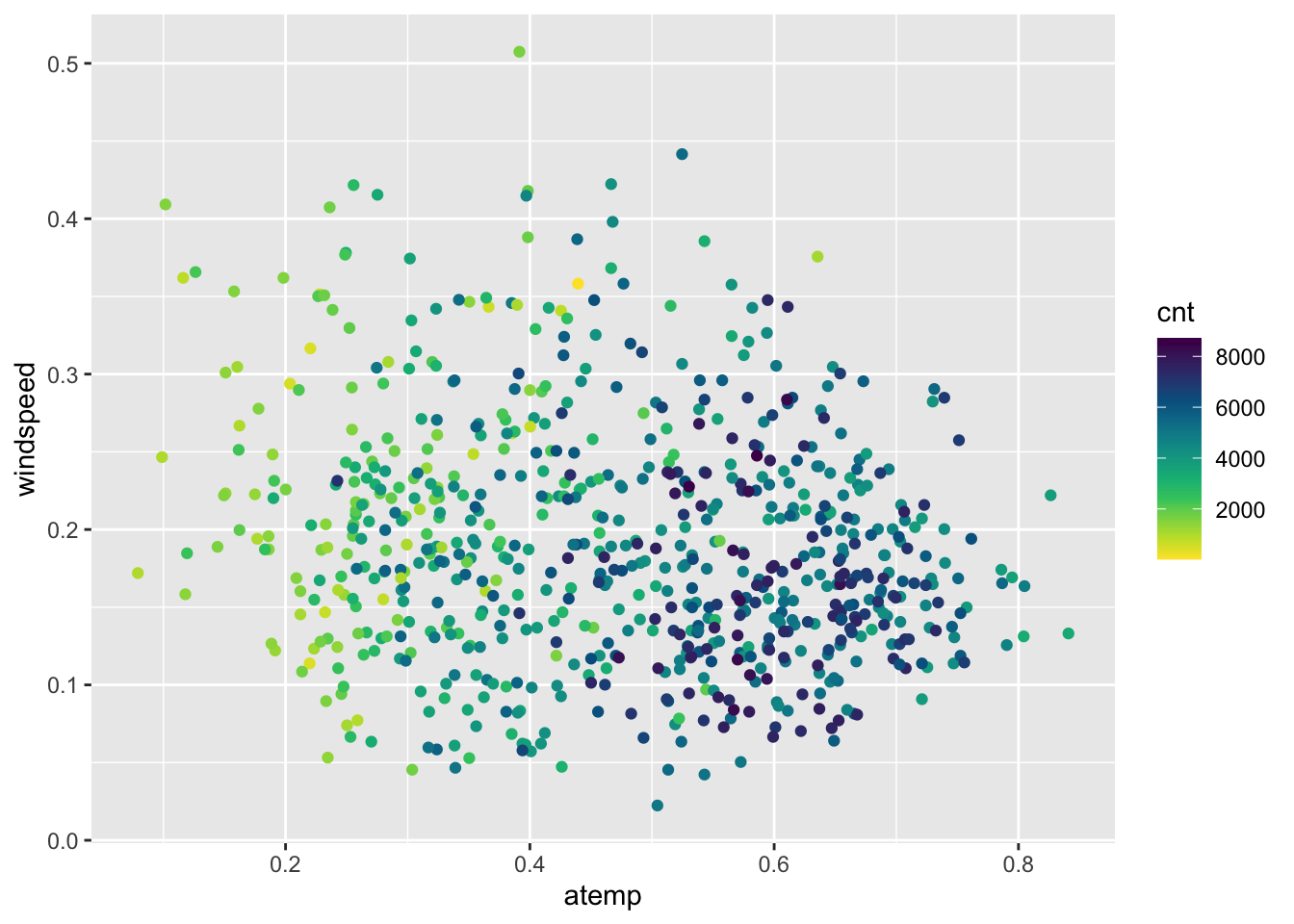

Obviously, the number of bike rentals depends strongly on weather conditions. In the following plot we represent cnt as a function of atemp and windspeed, specifying a scale color from yellow to dark blue with 12 levels:

bike %>%

ggplot()+

geom_point(aes(x=atemp,y=windspeed,col=cnt))+

scale_colour_gradientn(colours = rev(hcl.colors(12)))

It can be observed that the number of bike rentals increases with temperature.

2.2.1 Creation of the training, validation and test set: method 1

We divide the dataset into 3 subsets:

- training set: containing 70% of the observations

- validating set: containing 15% of the observations

- test set: containing 15% of the observations

The procedure is done randomly by using the function sample. In order to have reproducible results, it is necessary to set the seed.

set.seed(1, sample.kind = "Rejection")

# Sample the indexes for the training observations

index <- sample(c(1,2,3),

size=nrow(bike),

prob=c(0.7,0.15,0.15),

replace=T) # the same unit (1,2 or 3) must be resampled!

head(index)## [1] 1 1 1 2 1 2# Create a new dataframe selecting the training observations

bike_training <- bike[index==1,]

head(bike_training)## instant dteday season yr mnth holiday weekday

## 1 1 2011-01-01 1 0 1 0 6

## 2 2 2011-01-02 1 0 1 0 0

## 3 3 2011-01-03 1 0 1 0 1

## 5 5 2011-01-05 1 0 1 0 3

## 8 8 2011-01-08 1 0 1 0 6

## 9 9 2011-01-09 1 0 1 0 0

## workingday weathersit temp atemp hum windspeed

## 1 0 2 0.344167 0.363625 0.805833 0.160446

## 2 0 2 0.363478 0.353739 0.696087 0.248539

## 3 1 1 0.196364 0.189405 0.437273 0.248309

## 5 1 1 0.226957 0.229270 0.436957 0.186900

## 8 0 2 0.165000 0.162254 0.535833 0.266804

## 9 0 1 0.138333 0.116175 0.434167 0.361950

## casual registered cnt

## 1 331 654 985

## 2 131 670 801

## 3 120 1229 1349

## 5 82 1518 1600

## 8 68 891 959

## 9 54 768 822# Create a new test dataframe selecting the validation observation

bike_validation <- bike[index==2,]

head(bike_validation)## instant dteday season yr mnth holiday weekday

## 4 4 2011-01-04 1 0 1 0 2

## 6 6 2011-01-06 1 0 1 0 4

## 7 7 2011-01-07 1 0 1 0 5

## 18 18 2011-01-18 1 0 1 0 2

## 21 21 2011-01-21 1 0 1 0 5

## 29 29 2011-01-29 1 0 1 0 6

## workingday weathersit temp atemp hum

## 4 1 1 0.200000 0.212122 0.590435

## 6 1 1 0.204348 0.233209 0.518261

## 7 1 2 0.196522 0.208839 0.498696

## 18 1 2 0.216667 0.232333 0.861667

## 21 1 1 0.177500 0.157833 0.457083

## 29 0 1 0.196522 0.212126 0.651739

## windspeed casual registered cnt

## 4 0.1602960 108 1454 1562

## 6 0.0895652 88 1518 1606

## 7 0.1687260 148 1362 1510

## 18 0.1467750 9 674 683

## 21 0.3532420 75 1468 1543

## 29 0.1453650 123 975 1098# Create a new test dataframe selecting the validation observation

bike_test <- bike[index==3,]

head(bike_test)## instant dteday season yr mnth holiday weekday

## 15 15 2011-01-15 1 0 1 0 6

## 17 17 2011-01-17 1 0 1 1 1

## 20 20 2011-01-20 1 0 1 0 4

## 35 35 2011-02-04 1 0 2 0 5

## 37 37 2011-02-06 1 0 2 0 0

## 39 39 2011-02-08 1 0 2 0 2

## workingday weathersit temp atemp hum

## 15 0 2 0.233333 0.248112 0.498750

## 17 0 2 0.175833 0.176771 0.537500

## 20 1 2 0.261667 0.255050 0.538333

## 35 1 2 0.211304 0.228587 0.585217

## 37 0 1 0.285833 0.291671 0.568333

## 39 1 1 0.220833 0.198246 0.537917

## windspeed casual registered cnt

## 15 0.157963 222 1026 1248

## 17 0.194017 117 883 1000

## 20 0.195904 83 1844 1927

## 35 0.127839 88 1620 1708

## 37 0.141800 354 1269 1623

## 39 0.361950 64 1466 1530The validation set will be used to tune the model, i.e. to choose the best value of the tuning parameter (the number of neighbors) which minimizes the validation error. Finally, we will rejoin together training and validation datasets and we will calculate the Test MSE .

2.2.2 Implementation of KNN regression with \(K=1\)

We start by considering the case when \(K=1\), corresponding to the most flexible model, when only one neighbor is considered.

As mention in Section 2.1 we use the nearest_neighbor() function (see https://parsnip.tidymodels.org/reference/nearest_neighbor.html) to create a “KNN” regression model specification with \(K = 1\) (the number of neighbors) and weight_func = "rectangular" (uniform weights for neighbors). We then set the engine to kknn (which is the used package) and the mode to regression (this specifies which is prediction outcome mode). We use select() from the dplyr package to choose the relevant columns for our training and validation datasets. We finally use the fit() function to fit the KNN regression model to the training data, using the formula cnt ~ atemp + windspeed + hum.

# Specify the KNN regression model with K = 1

knn_spec <- nearest_neighbor(neighbors = 1, weight_func = "rectangular") %>%

set_engine("kknn") %>%

set_mode("regression")

# Prepare the training and validation datasets

bike_training <- bike_training %>%

select(atemp, windspeed, hum, cnt)

bike_validation <- bike_validation %>%

select(atemp, windspeed, hum, cnt)

# Fit the KNN regression model to the training data using the specified model

knn_fit <- knn_spec %>%

fit(cnt ~ atemp + windspeed + hum, data = bike_training)then using the predict() function and based on the fitted model, we make predictions on the validation dataset. The predictions are stored in the KNNpred1 object.

# Make predictions on the validation data

KNNpred1 <- knn_fit %>%

predict(bike_validation)

head(KNNpred1)## # A tibble: 6 × 1

## .pred

## <dbl>

## 1 2431

## 2 1746

## 3 1204

## 4 2177

## 5 822

## 6 1985We are in particular interested in the variable .pred which contains the predictions \(\hat y_0\) for the validation observations. It is then possible to compute the validation mean square error (MSE):

\[

\text{mean}(y_0-\hat y_0)^2

\]

where average is computed over all the obsevations in the validation set.

# Compute the MSE

mean((bike_validation$cnt-KNNpred1$.pred)^2)## [1] 3171956We can also compute the metrics using metrics() function from yardstick package by specifying the truth and estimate arguments.

# Bind the true values from bike_validation to the predictions in KNNpred1

bike_validation <- bike_validation %>%

bind_cols(KNNpred1) %>%

rename(knn1_pred = .pred)

# Calculate the metrics

bike_validation %>%

metrics(truth = cnt, estimate = knn1_pred) ## # A tibble: 3 × 3

## .metric .estimator .estimate

## <chr> <chr> <dbl>

## 1 rmse standard 1781.

## 2 rsq standard 0.323

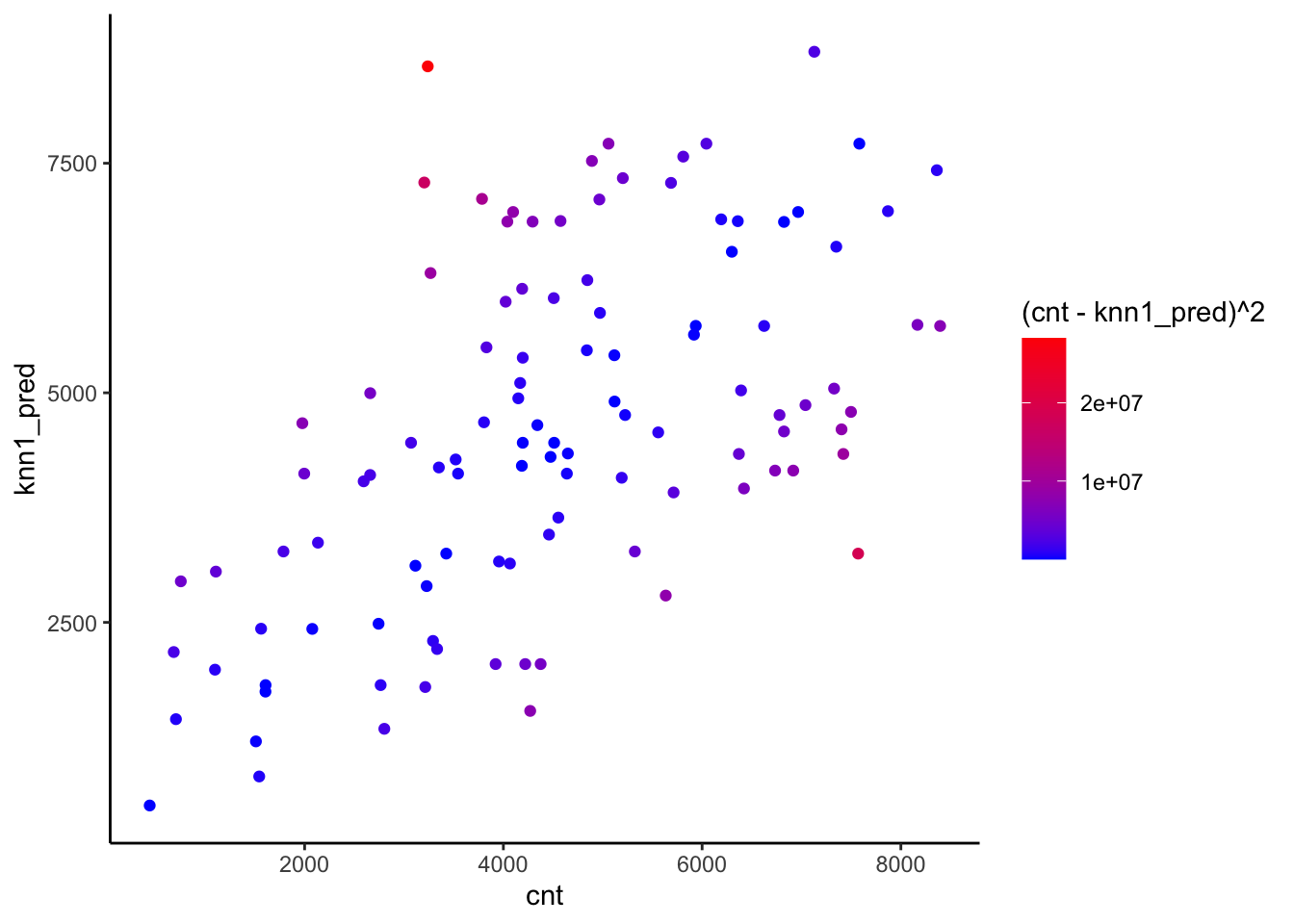

## 3 mae standard 1440.It is also possible to plot observed and predicted values.

To do this we represent graphically the observed and predicted values and assign to the points a color given by the values of the squared error:

bike_validation %>%

ggplot() +

geom_point(aes(x=cnt,y=knn1_pred,col=(cnt-knn1_pred)^2)) +

scale_color_gradient(low="blue",high="red") +

theme_classic() Blue observations are characterized by a smaller squared error meaning that predictions are close to observed values.

Blue observations are characterized by a smaller squared error meaning that predictions are close to observed values.

2.2.3 Implementation of KNN regression with different values of \(K\)

We now use a for loop to implement automatically the KNN regression for different values of \(K\) . In particular, we consider the values 1, 10, 25, 50,100, 200 and 500. Each step of the loop, indexed by a variable i, considers a different value of \(K\). We want to save in a vector all the values of the test MSE.

# Create an empty vector where values of the MSE will be saved

mse_vec <- c()

# Create a vector with the values of K

K_vec <- c(1, 10, 25, 50, 100, 200, 500)

# Start the for loop

for (i in 1:length(K_vec)) {

# Define the KNN model specification

knn_spec <- nearest_neighbor(neighbors = K_vec[i], weight_func = "rectangular") %>%

set_engine("kknn") %>%

set_mode("regression")

# Fit the KNN model

knn_fit <- knn_spec %>%

fit(cnt ~ atemp + windspeed + hum, data = bike_training)

# Make predictions using the KNN model

knn_preds <- knn_fit %>%

predict(new_data = bike_validation)

# Calculate the MSE

mse_vec[i] <- mean((bike_validation$cnt - knn_preds$.pred)^2)

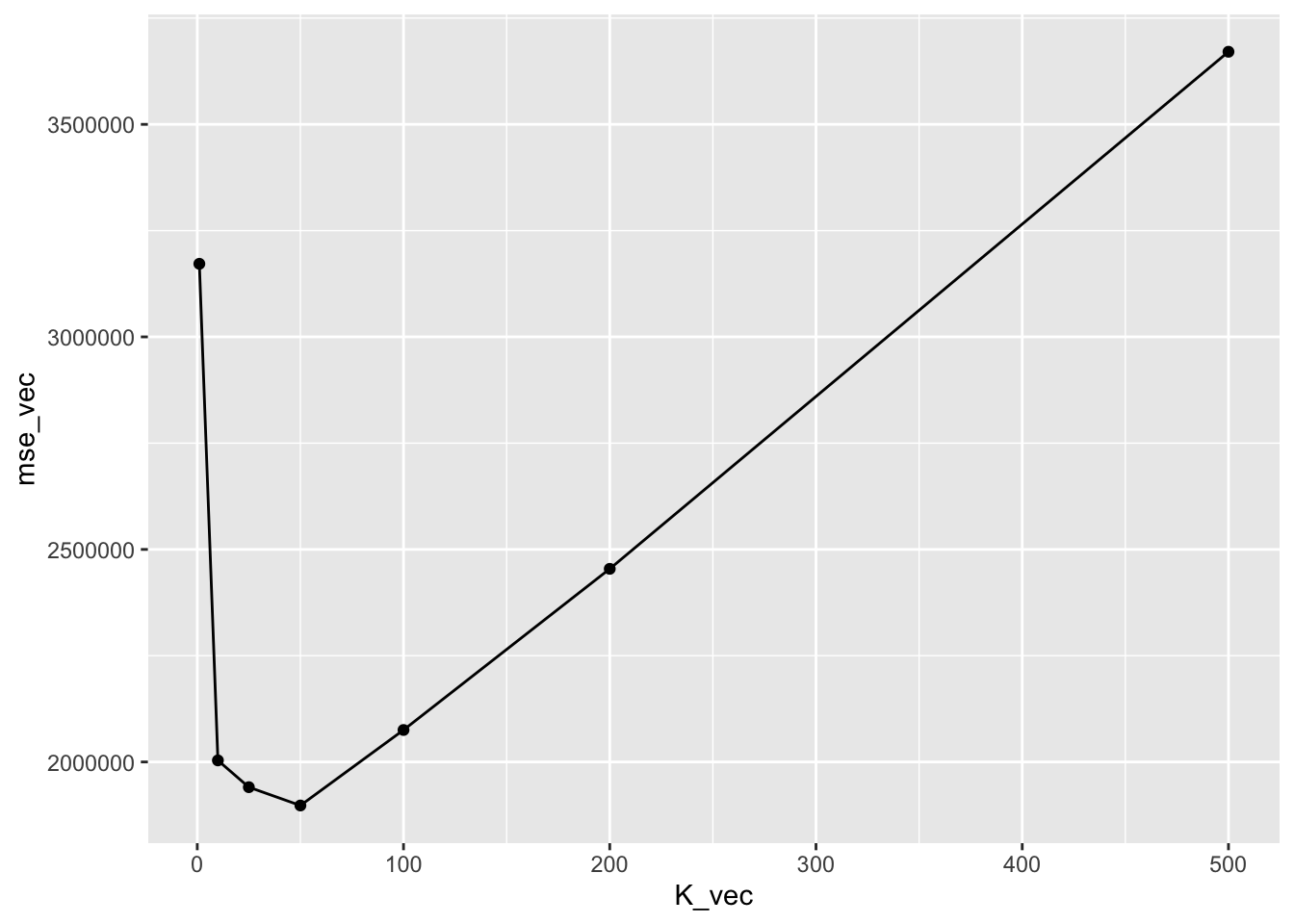

}The value of the validation MSE are the following:

mse_vec## [1] 3171956 2003670 1940664 1897585 2075204 2454330 3670818It is useful to plot the values of the test MSE as a function of \(k\):

data.frame(K_vec,mse_vec) %>%

ggplot(aes(K_vec,mse_vec)) +

geom_point()+

geom_line()

Note the U-shape of the plotted line which is typical of the test error.

The value of \(K\) which minimizes the MSE is in position 4 and is given by \(K\) equal to 50:

# Smallest MSE

MSE_KNN = min(mse_vec)

MSE_KNN## [1] 1897585# Its position

which.min(mse_vec)## [1] 4# Corresponding value of k

K_vec[which.min(mse_vec)]## [1] 50K_final <- K_vec[which.min(mse_vec)]2.2.4 Assessment of the tuned model

Now we can finally calculate the Test MSE with the selected parameter. Recall to rejoin together the training and the validation data for a new larger training set.

bike_validation = bike_validation %>% select(-knn1_pred)

bike_training = rbind(bike_validation, bike_training) # Define a nearest neighbors model specification

knn_spec <- nearest_neighbor(neighbors = K_final, weight_func = "rectangular") %>%

set_engine("kknn") %>%

set_mode("regression")

# Fit the final KNN model on the combined training data

knn_final_fit <- knn_spec %>%

fit(cnt ~ atemp + hum + windspeed, data = bike_training)

# Make predictions on the bike_test dataset

predictions <- predict(knn_final_fit, bike_test) %>%

bind_cols(bike_test)

# Calculate the mean squared error for the final KNN model

mse_knn_final <- mean((predictions$.pred - predictions$cnt)^2)

mse_knn_final## [1] 17666872.2.5 Comparison of KNN with the multiple linear model

We consider for the same set of data also the multiple linear model with 3 regressors: \[ Y = \beta_0+\beta_1 X_1+\beta_2 X_2+\beta_3 X_3 +\epsilon \]

This is usually implemented by using the lm function. Here we instead use the linear_reg function as implemented in the tidymodels style; however note that the engine is the usual lm function (see https://parsnip.tidymodels.org/reference/details_linear_reg_lm.html):

# Define a linear regression model specification

lm_spec <- linear_reg() %>% # Create a linear regression model specification

set_engine("lm")

# Fit the linear regression model on the bike_training dataset

lm_bike_fit <- lm_spec %>%

fit(cnt ~ atemp + windspeed + hum, data = bike_training) # Fit the model using the specified formula and data

# Print the summary of the fitted linear regression model

summary(lm_bike_fit$fit)##

## Call:

## stats::lm(formula = cnt ~ atemp + windspeed + hum, data = data)

##

## Residuals:

## Min 1Q Median 3Q Max

## -4928.3 -1036.5 -86.7 1116.6 3554.3

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 3858.7 367.3 10.505 < 2e-16 ***

## atemp 7552.0 360.2 20.968 < 2e-16 ***

## windspeed -4658.7 763.7 -6.100 1.87e-09 ***

## hum -3268.4 410.8 -7.957 8.50e-15 ***

## ---

## Signif. codes:

## 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 1418 on 617 degrees of freedom

## Multiple R-squared: 0.4646, Adjusted R-squared: 0.462

## F-statistic: 178.5 on 3 and 617 DF, p-value: < 2.2e-16The corresponding predictions and test MSE can be obtained as follows by making use of the predict function:

# Make predictions using the linear regression model

pred_lm <- predict(lm_bike_fit, new_data = bike_test)

# Calculate the mean squared error (MSE)

MSE_lm <- mean((bike_test$cnt - pred_lm$.pred)^2)

MSE_lm## [1] 2090156Note that the test MSE of the linear regression model is higher than the KNN MSE with \(k=50\).

2.2.6 Comparison of KNN with the multiple linear model with quadratic terms

As a sort of “tuning”, we try to extend the previous linear model by including quadratic terms of the regressors: \[ Y = \beta_0+\beta_1 X_1+\beta_2 X_2+\beta_3 X_3 + \beta_4 X_1^2 + \beta_5 X_2^2 + \beta_6 X_3^2+\epsilon \]

This is still a linear model in the parameters (not in the variables). Polynomial regression models are able to produce non-linear fitted functions. It is worth mentioning that also the order of the polynomial d should be tuned but, due to time constraints, we don’t do it.

This model can be implemented by using the poly function (with degree=2 in this case) in the formula specification. This specifies a model including \(X\) and \(X^2\).

# we use the same lm_spec that we used for simple linear regression

# Fit the model using the training data

lm_poly_fit <- fit(lm_spec,

cnt ~ poly(atemp, 2) + poly(windspeed, 2) + poly(hum, 2),

data = bike_training)As before we compute the test MSE:

pred_lm2 <- predict(lm_poly_fit, new_data = bike_test)

# Calculate the mean squared error (MSE)

MSE_lm2 <- mean((bike_test$cnt - pred_lm2$.pred)^2)

MSE_lm2## [1] 18643832.2.7 Final comparison

We can now compare the MSE of the best KNN model and of the 2 linear regression models:

mse_knn_final## [1] 1766687MSE_lm## [1] 2090156MSE_lm2## [1] 1864383Results show that the multiple linear regression model has the worst performance and the KNN with \(k=50\) have the best performance.

2.3 KNN for classification problems

For KNN classification we will use the iris dataset which is included in R (see ?iris). It contains the measurements in centimeters of the variables sepal length and width and petal length and width, respectively, for 50 flowers from each of 3 species of iris. The species are Iris setosa, versicolor, and virginica.

glimpse(iris)## Rows: 150

## Columns: 5

## $ Sepal.Length <dbl> 5.1, 4.9, 4.7, 4.6, 5.0, 5.4, 4.6, 5.0…

## $ Sepal.Width <dbl> 3.5, 3.0, 3.2, 3.1, 3.6, 3.9, 3.4, 3.4…

## $ Petal.Length <dbl> 1.4, 1.4, 1.3, 1.5, 1.4, 1.7, 1.4, 1.5…

## $ Petal.Width <dbl> 0.2, 0.2, 0.2, 0.2, 0.2, 0.4, 0.3, 0.2…

## $ Species <fct> setosa, setosa, setosa, setosa, setosa…summary(iris)## Sepal.Length Sepal.Width Petal.Length

## Min. :4.300 Min. :2.000 Min. :1.000

## 1st Qu.:5.100 1st Qu.:2.800 1st Qu.:1.600

## Median :5.800 Median :3.000 Median :4.350

## Mean :5.843 Mean :3.057 Mean :3.758

## 3rd Qu.:6.400 3rd Qu.:3.300 3rd Qu.:5.100

## Max. :7.900 Max. :4.400 Max. :6.900

## Petal.Width Species

## Min. :0.100 setosa :50

## 1st Qu.:0.300 versicolor:50

## Median :1.300 virginica :50

## Mean :1.199

## 3rd Qu.:1.800

## Max. :2.500The variable Species is the response categorical variables with 3 categories.

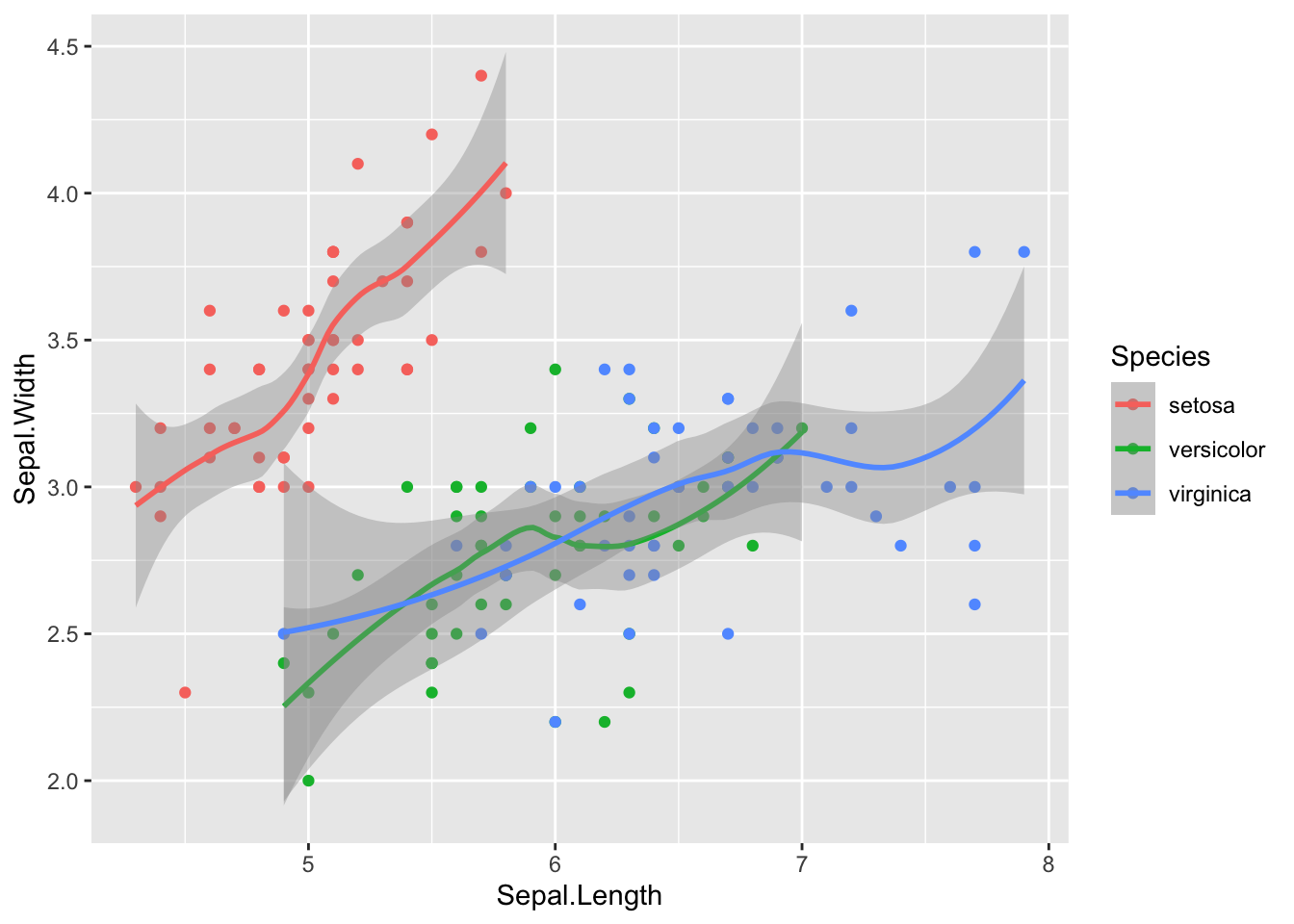

As usual we start with visualizing the data with a plot. In this case we represent Species as a function of Sepal.Length and Sepal.Width:

iris %>%

ggplot(aes(Sepal.Length, Sepal.Width,col=Species))+

geom_point()+

geom_smooth()## `geom_smooth()` using method = 'loess' and formula 'y ~ x' The following plot instead considers

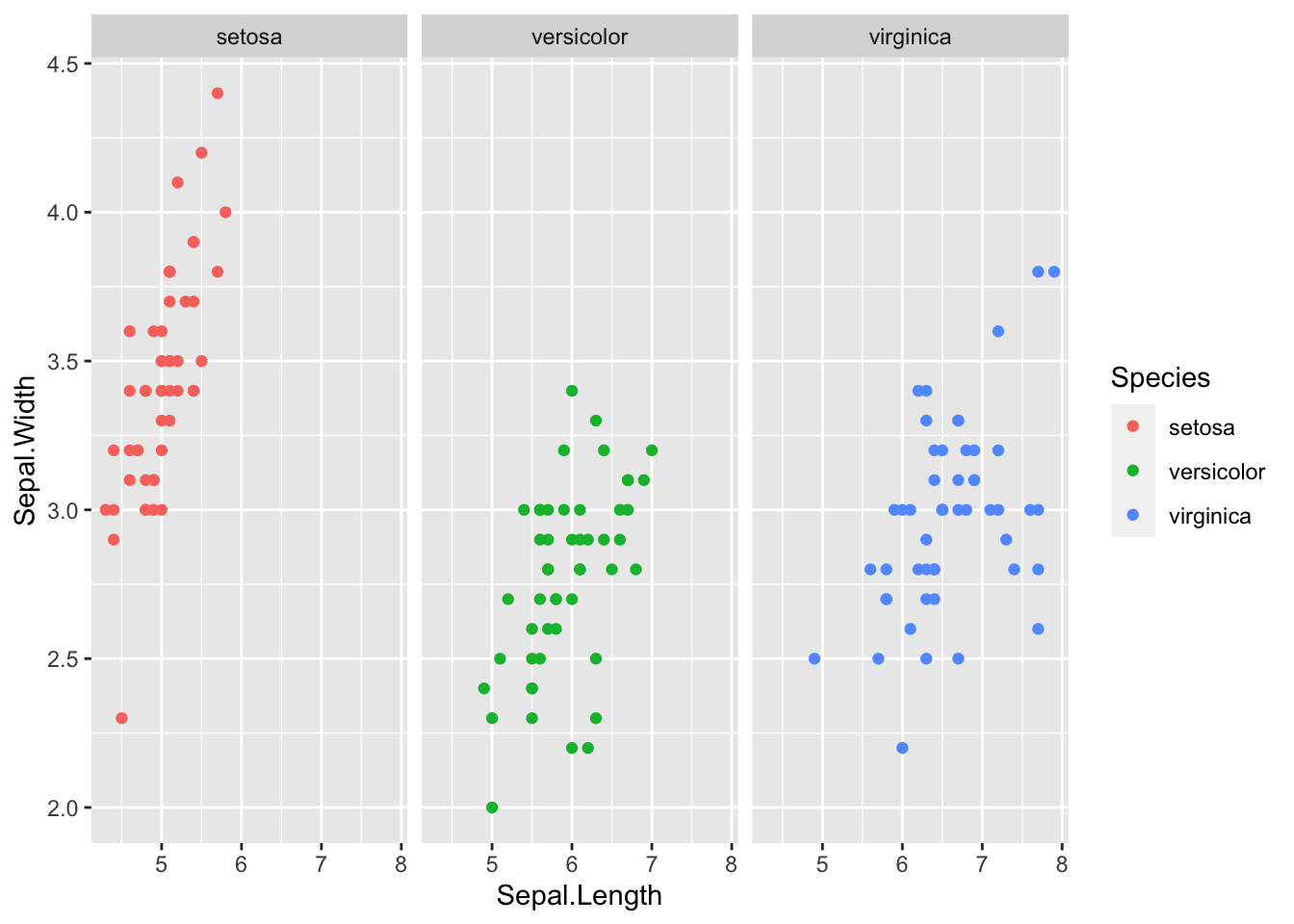

The following plot instead considers Species as a function of Petal.Length and Petal.Width by using separate plots:

iris %>%

ggplot(aes(Sepal.Length, Sepal.Width,col=Species))+

geom_point()+

facet_wrap(~Species)

We split the dataset into 2 subsets. We don’t need three datasets because we are only interested in the tuning and not in model comparison in this section. The datasets will be:

- training set: containing 70% of the observations

- test set: containing 30% of the observations

The procedure is done randomly by using the function slice_sample. In order to have reproducible results, it is necessary to set the seed.

set.seed(1, sample.kind="Rejection")

# sample a vector of indexes/positions

iris <- iris %>% mutate(id = row_number()) #create a new column with the row numbers

iris_training <- iris %>% slice_sample(prop=0.7)

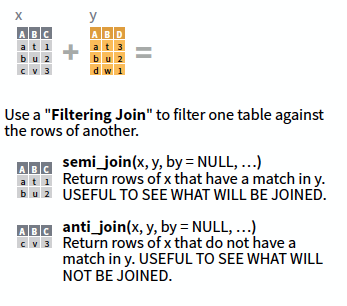

iris_test <- anti_join(x=iris, y=iris_training, by="id")For a graphical description of the anti_join function have a look at the following picture:

2.3.1 KNN classification with \(K=1\)

We now implement KNN classification with \(K=1\) by using the nearest_neighbor() as previously done for the regression KNN. As before we have to specify the regressors for the training and test observations and the response variable vector. The only difference with respect to KNN regression is that we have to set the mode to classification.

# Create a k-Nearest Neighbors model specification

knn_spec <- nearest_neighbor(neighbors = 1, weight_func = "rectangular") %>%

set_engine("kknn") %>%

set_mode("classification")

# Fit the model using the training data

knn_fit <- knn_spec %>%

fit(Species ~ ., data = iris_training)

# Make predictions using the test data

out1 <- predict(knn_fit, iris_test, type = "class") # set the type to 'prob' to see

#the probability of each class

#set it to 'class' to see only the predictionPredictions are contained in the out1$.pred_class object:

# Print the output

out1$.pred_class## [1] setosa setosa setosa setosa setosa

## [6] setosa setosa setosa setosa setosa

## [11] setosa setosa setosa setosa setosa

## [16] versicolor versicolor versicolor versicolor versicolor

## [21] versicolor versicolor versicolor versicolor versicolor

## [26] versicolor versicolor versicolor versicolor versicolor

## [31] versicolor versicolor virginica versicolor virginica

## [36] virginica virginica virginica virginica virginica

## [41] virginica virginica virginica virginica virginica

## Levels: setosa versicolor virginicaTo compare predictions \(\hat y_0\) and observed values \(y_0\) it is useful to create the confusion matrix which is a double-entry matrix with predicted and observed categories and the corresponding frequencies.

To create the confusion matrix we use the conf_mat() function from the yardstick package.

iris_test = iris_test %>%

mutate(pred_knn = out1$.pred_class)

confusion_matrix <- iris_test %>%

conf_mat(truth = Species, estimate = pred_knn)In the main diagonal we can read the number of correctly classified observations. Outside from the main diagonal we have the number of missclassified observations.

The percentage test error rate is computed as follows:

#test error rate (1 - accuracy)

mean(out1$.pred_class != iris_test$Species)*100## [1] 2.222222# we can also use the accuracy function

test_err = iris_test %>%

accuracy(truth = Species, estimate = pred_knn) # accuracy

(1 - test_err$.estimate) * 100 # error rate## [1] 2.222222In this case we see that 2.22% of the observations are missclassified.

2.3.2 KNN classification with different values of \(K\)

We now consider different values for \(K\). In particular we run the KNN algorithm for all the values of \(K\) from 1 to 100 and save the test error rate in a vector:

maxK <- 100

errorrate <- c()

for (i in 1:maxK) {

# KNN classification with tidymodels

knn_spec <- nearest_neighbor(weight_func = "rectangular", neighbors = i) %>%

set_engine("kknn") %>%

set_mode("classification")

knn_fit <- knn_spec %>%

fit(Species ~ ., data = iris_training)

# Make predictions

out <- predict(knn_fit, iris_test, type = "class")

# Test error rate (save in the i-th position of the vector)

errorrate[i] <- mean(out$.pred_class != iris_test$Species)

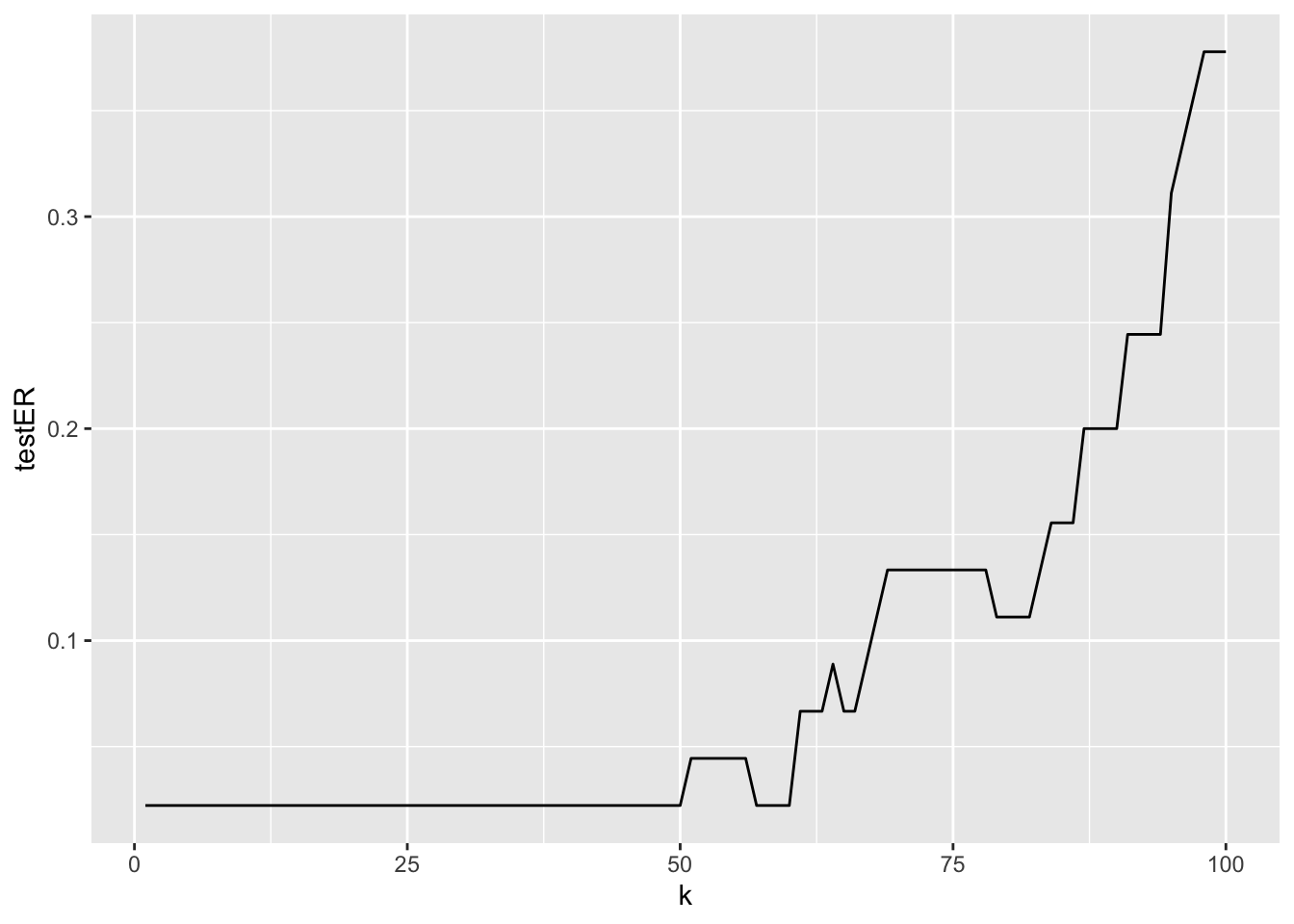

}To plot the test error rate as a function of \(K\) by using ggplot we can proceed as follows:

data.frame(k=1:maxK,testER = errorrate) %>%

ggplot() +

geom_line(aes(x=k,y=testER))

The best value of \(K\) which minimizes the test error rate is given by \(K=\) 1:

which.min(errorrate) ## [1] 1Note that there are other bigger values of \(K\) which provide the same lowest error rate (0.0222222):

which(errorrate==min(errorrate))## [1] 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19

## [20] 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38

## [39] 39 40 41 42 43 44 45 46 47 48 49 50 57 58 59 60This gives us the possibility to choose a different level of flexibility without worsening the test error.

2.4 Exercises Lab 1

2.4.1 Exercise 1

Use the Weekly data set contained in the ISLR package. See ?Weekly.

library(ISLR)

library(tidyverse)

library(tidymodels)

?Weekly

head(Weekly)## Year Lag1 Lag2 Lag3 Lag4 Lag5 Volume Today

## 1 1990 0.816 1.572 -3.936 -0.229 -3.484 0.1549760 -0.270

## 2 1990 -0.270 0.816 1.572 -3.936 -0.229 0.1485740 -2.576

## 3 1990 -2.576 -0.270 0.816 1.572 -3.936 0.1598375 3.514

## 4 1990 3.514 -2.576 -0.270 0.816 1.572 0.1616300 0.712

## 5 1990 0.712 3.514 -2.576 -0.270 0.816 0.1537280 1.178

## 6 1990 1.178 0.712 3.514 -2.576 -0.270 0.1544440 -1.372

## Direction

## 1 Down

## 2 Down

## 3 Up

## 4 Up

## 5 Up

## 6 Downglimpse(Weekly)## Rows: 1,089

## Columns: 9

## $ Year <dbl> 1990, 1990, 1990, 1990, 1990, 1990, 1990,…

## $ Lag1 <dbl> 0.816, -0.270, -2.576, 3.514, 0.712, 1.17…

## $ Lag2 <dbl> 1.572, 0.816, -0.270, -2.576, 3.514, 0.71…

## $ Lag3 <dbl> -3.936, 1.572, 0.816, -0.270, -2.576, 3.5…

## $ Lag4 <dbl> -0.229, -3.936, 1.572, 0.816, -0.270, -2.…

## $ Lag5 <dbl> -3.484, -0.229, -3.936, 1.572, 0.816, -0.…

## $ Volume <dbl> 0.1549760, 0.1485740, 0.1598375, 0.161630…

## $ Today <dbl> -0.270, -2.576, 3.514, 0.712, 1.178, -1.3…

## $ Direction <fct> Down, Down, Up, Up, Up, Down, Up, Up, Up,…- How many data are available for each year? What is the temporal frequency of the data?

- Produce the boxplot which represents

Todayreturns as a function ofYear. Do you observe any difference across years? - Provide the scatterplot matrix for

TodayandLag1. Do you observe strong correlations? - Plot the distribution of the variable

Direction(with a barplot) for year 2010. Use percentage and not absolute frequencies. - Plot the distribution of the variable

Direction(with a barplot) separately for all the available years. Use percentage and not absolute frequencies. Attention: percentages should be computed by considering the number of observations available for each year and not the total number of observations. - Consider as training set the data available from 1990 to 2008 with

Lag1,Lag2andLag3as predictors andDirectionas response variable. How many data do you have in the training and test datasets? - Compute the test error rate for the KNN classifier with \(k=1\).

- Compute the test error rate for the KNN classifier for \(k\) from 1 to 50. Use the

forloop and save the results in a vector. - Plot the error rate as a function of \(k\). Which value of \(k\) do you suggest to use?

2.4.2 Exercise 2

In this exercise we will develop a classifier to predict if a given car gets high or low gas mileage using the Auto dataset. The data are included in the ISLR package (see Auto).

library(ISLR)

?Auto- Explore the data.

- Create a binary variable, named

mpg01, that is equal to 1 ifmpgis bigger than its median, and a 0 otherwise. Add the variablempg01to the existing dataframe. How many observations are classified with 1? - Study how

cylinders,weight,displacementandhorsepowervary according to the factormpg01. - Consider as training set the cars released in even years. Use as regressor the following variables:

cylinders,weight,displacementandhorsepower. How many data do you have in the training and test datasets? Suggestion: use the modulus operator%%to check if a year is even (e.g.4%%2and5%%2). - Run KNN with \(k=1\). Which is the value of the test error rate?

- Perform KNN with \(k\) from 1 to 50 using a

forloop. - Plot the test error rate as a function of \(k\).

- For which value of \(k\) do we get the lowest test error rate?

2.4.3 Exercise 3

Consider the Carseats data set available from the ISLR library. Read the help to understand which variables are available (and if they are qualitative or quantitative).

library(ISLR)

library(tidyverse)

library(tidymodels)

?CarseatsPlot

Salesas a function ofPrice.Split the dataset in two separate sets: the training set with 70% of the observations and 30% for the test set. Use 14 as seed.

Fit a regression model to predict

SalesusingPrice.Compute the MSE for the model with

Salesas dependent variable andPriceas regressor.Now implement KNN regression using the same variables you used for the linear regression model above. Consider all the values of \(k\) between 1 and 200. Which is the best value of \(k\) in terms of validation error?

Compare the regression model and the KNN regression (with the chosen value of \(k\)). Which is the best model?

2.4.4 Exercise 4

This exercise uses simulated data.

Use the

rnormfunction to create a vector namedxcontaining \(n=100\) values simulated from a N(0,1) distribution. Set the seed equal to 1. These values represent the predictor’s values.Use the

rnormfunction to create a vector namedepscontaining \(n=100\) values simulated from a N(0,0.025) distribution (variance=0.025). Set the seed equal to 2. These values represent the error’s values (\(\epsilon\)).Using

xandeps, obtain a vectory(the response values) according to the following (true) model. Create also a data frame containing the values ofxandy. \[ Y = -1+0.5X+\epsilon \]Which is the length of

y? What are the values of \(\beta_0\) and \(\beta_1\) in this model?Plot the simulated data.

Fit a least squares linear model to predict

yusingx. Compare the true values of the parameters with the corresponding estimates.Display the least squares line in the scatterplot obtained before. Add to the plot also the straight line corresponding to the true model (suggestion: use

geom_abline).

Fit a polynomial regression model to predict

yusingxandx^2. Is there evidence that the quadratic term improves the model fit?Repeat points 1.-3. changing the data generation process in such a way that there is less noise in the data (the values of

xdon’t change). Consider for example a variance for the error equal to 0.001 (use a new name for the response data frame, for exampledata2). Describe your results.Repeat points 1.-3. changing the data generation process in such a way that there is more noise in the data (the values of

xdon’t change). Consider for example a variance for the error equal to 1 (use a new name for the response data, for exampledata3). Describe your results.