Chapter 3 Interval Estimation via Simulation

3.1 Sampling Variability and Margin of Error

3.1.1 Estimation From a Sample

We are interested in estimating the proportion of blues among all M&M’s produced.

If this seems trivial, imagine estimating:

The proportion of patients who experience pain relief after taking a certain medication.

The proportion of defective car batteries among all car batteries produced by a manufacturer.

The proportion of all voters who support a particular candidate.

In each situation, it is not realistic to believe we could observe all individuals of interest (i.e. our population).

Instead, we observe only a sample and try to make inferences about the population based on the sample.

3.1.2 A Sample of M&M

In a fun-sized pack of M&M’s we observe the following colors:

Using our sample, we estimate that the proportion of blues is \(\hat{p}=\frac{6}{14}\approx 0.429\).

3.1.3 Estimating Sampling Variability

Of course, each sample would be different, so we shouldn’t expect the proportion of blue’s among all M&M’s to exactly match the proportion in our sample. Instead, we can use our sample estimate (\(\hat{p}\)) to get a reasonable range for where the population parameter \(p\) could lie.

We need to know how much the proportion of blues could vary from one sample to the next. (The more variability, the wider our interval will need to be).

3.1.4 Estimating Sampling Variability

In order to estimate how much sample-to-sample variability is associated with \(\hat{p}\), we’ll follow these steps:

- Start with the sample of 14 “M&M’s.”

- Randomly draw a single “M&M” and record the color. Put the “M&M” back in the bag.

- Draw another “M&M.” Again record the color and put it back.

- Repeat steps 2-3 12 more times to create a sample of size 14

- Record the proportion of blue M&M’s in your sample of 14 in the spreadsheet, next to your name, and sample 1.

- Repeat steps 1-5 2 more times, and record the proportion of blue’s next to your name, and samples 2 and 3.

3.1.5 Reading In Our Results

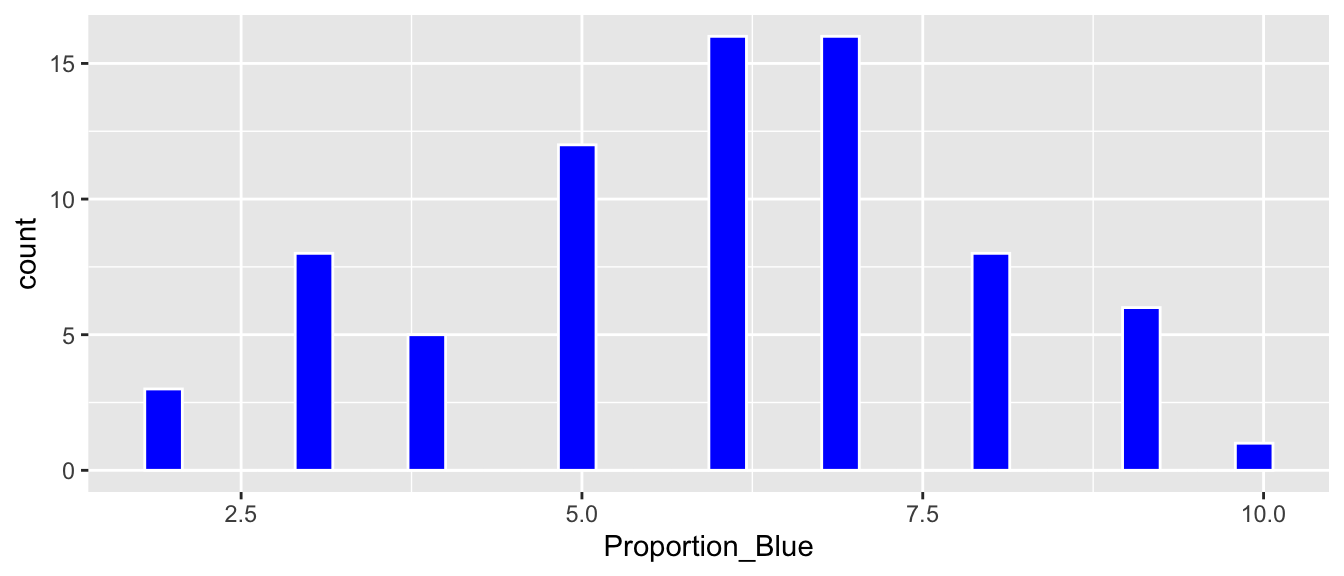

head(Class_Bootstrap_Results)## [1] 6 6 8 5 9 73.1.6 Distribution of Proportion Blue in Simulations

ggplot(data=Class_Bootstrap_Results, aes(x=Proportion_Blue)) +

geom_histogram(fill="blue", color="white")

3.1.7 Percentile Confidence Interval

c(q.025, q.975)## 2.5% 97.5%

## 2 9Based on our simulation, we are 95% confident that the proportion of blues among all M&M’s is between 2 and 9.

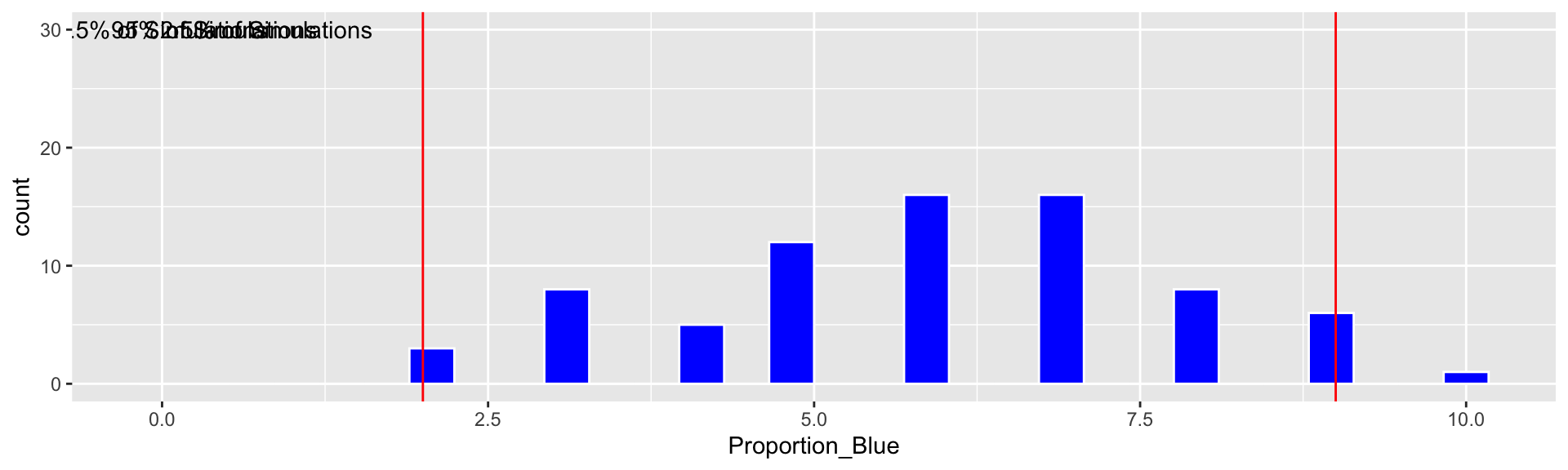

3.1.8 Simulation Using R

Although our results give us some sense of how much the proportion of blues could vary from one sample to the next, it would be better if we had LOTS of samples. We’ll use R to simulate doing this 10,000 times.

Color <- c(rep("Blue", 6), rep("Orange", 3), rep("Green", 2), rep("Yellow", 2),

rep("Red", 1))

Sample <- data.frame(Color)

BootstrapSample <- sample_n(Sample, 14, replace=TRUE)

t(BootstrapSample)## [,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9]

## Color "Yellow" "Blue" "Yellow" "Blue" "Blue" "Orange" "Red" "Blue" "Orange"

## [,10] [,11] [,12] [,13] [,14]

## Color "Yellow" "Blue" "Orange" "Green" "Green"3.1.9 Simulating Many Samples in R

Proportion_Blue <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(Sample, 14, replace=TRUE)

Proportion_Blue[i] <- sum(BootstrapSample$Color=="Blue")/14

}

Candy_Bootstrap_Results <- data.frame(Proportion_Blue)3.1.10 Bootstrap Results

Proportion of blues in first 6 samples

head(Candy_Bootstrap_Results)## Proportion_Blue

## 1 0.2857143

## 2 0.2142857

## 3 0.5000000

## 4 0.4285714

## 5 0.6428571

## 6 0.35714293.2 Bootstrap Confidence Intervals

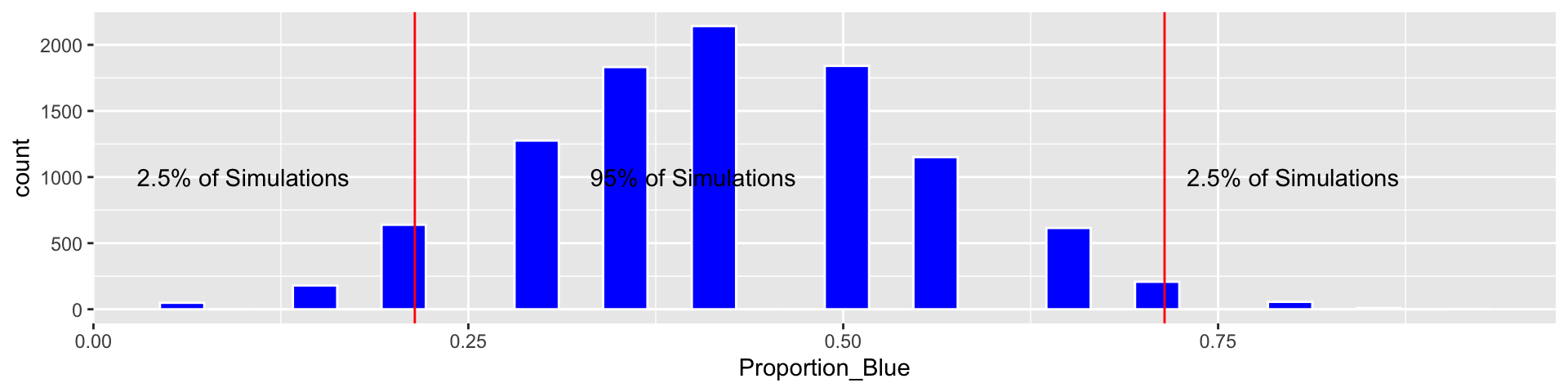

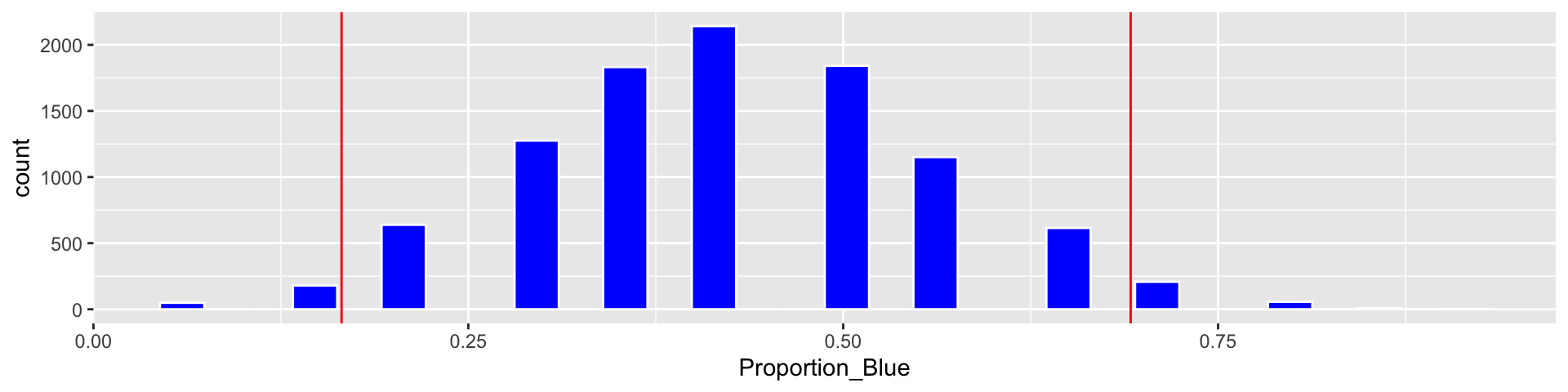

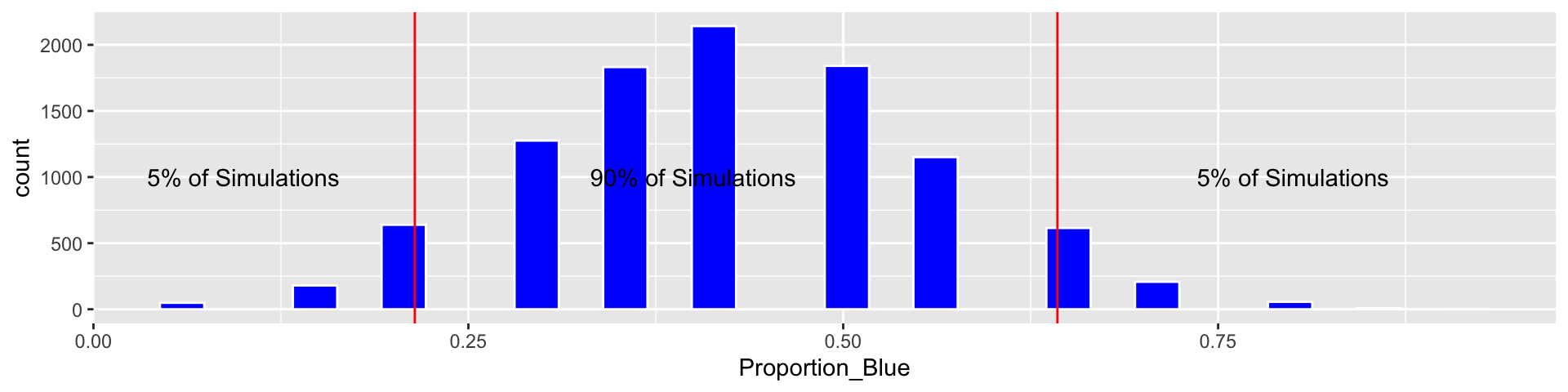

3.2.1 Bootstrap Distribution from R Simulation

c(q.025, q.975)## 2.5% 97.5%

## 0.2142857 0.7142857Based on our simulation, we are 95% confident that the proportion of blues among all M&M’s is between 0.2142857 and 0.7142857.

We call this a percentile bootstrap confidence interval.

3.2.2 Standard Error

The bootstrap standard error is the standard deviation of the bootstrap distribution. It measures the amount of variability in a statistic (in this cases difference in proportions) between samples of the given size.

SE <- sd(Candy_Bootstrap_Results$Proportion_Blue)

SE## [1] 0.1315613Based on our simulation, we estimate that the standard error in proportion of blues in a sample of 14 is 0.1315613

3.2.3 Bootstrap Standard Error Confidence Intervals

- When the sampling distribution of a statistic is symmetric and bell-shaped, approximately 95% of all samples will lead to a statistic that is within 2 standard errors of the desired population quantity.

95% bootstrap standard error confidence interval:

\[ \text{Statistic} \pm 2\times\text{Standard Error} \]

- it is only appropriate to use the bootstrap standard error confidence interval method when a sampling distribution is symmetric and bell-shaped

3.2.4 Calculating Standard Error Confidence Interval

Estimate <- 6/14

c(Estimate - 2*SE, Estimate + 2*SE)## [1] 0.1654488 0.6916941We add the cutoff points for the standard error confidence interval

Based on our simulation, we are 95% confident that the proportion of blues among all M&M’s is between 0.1654488 and 0.6916941.

3.2.5 Comparison of Bootstrap Confidence Intervals

A 95% bootstrap percentile confidence interval is determined by finding the 0.025 and 0.975 quantiles of the bootstrap distribution (i.e. by taking the middle 95% of the distribution).

A 95% standard error confidence interval is determined using

\[ \text{Statistic} \pm 2\times\text{Standard Error} \]

When the bootstrap distribution is symmetric and bell-shaped, these intervals will be approximately the same.

The \(\pm\) \(2\times\text{SE}\) is called a margin of error, and the resulting range of plausible values for the population parameter \(p\) is called a confidence interval.

A confidence interval gives a reasonable range for a value pertaining to the entire population, using information from a sample.

3.2.6 90% Bootstrap Percentile Confidence Interval

q.05 <- quantile(Candy_Bootstrap_Results$Proportion_Blue, 0.05)

q.95 <- quantile(Candy_Bootstrap_Results$Proportion_Blue, 0.95)

c(q.05, q.95)## 5% 95%

## 0.2142857 0.6428571Based on our simulation, we are 90% confident that the proportion of blues among all M&M’s is between 0.2142857 and 0.6428571.

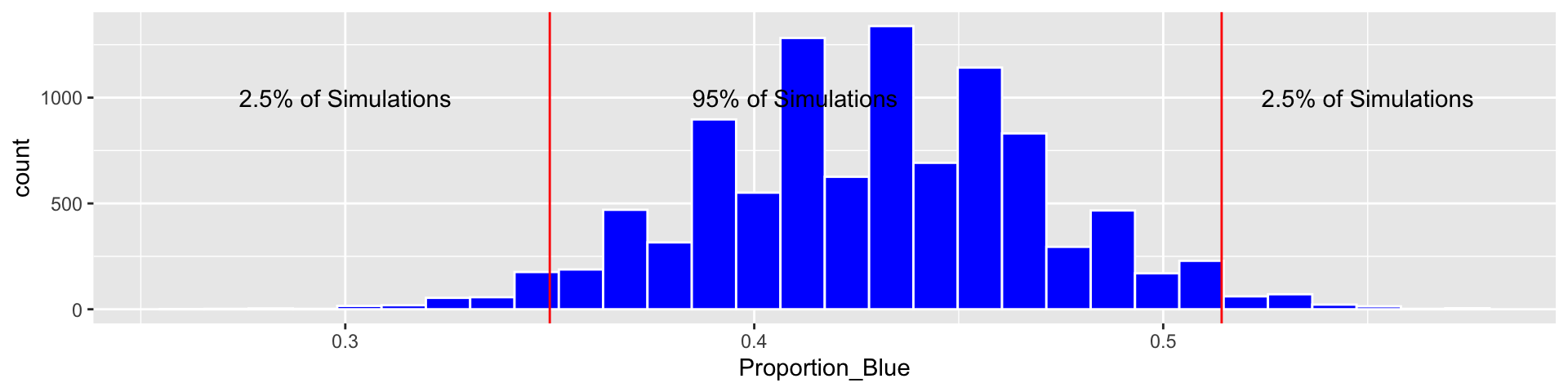

3.2.7 Impact of Sample Size

Suppose, hypothetically, that instead of a sample of 14, we had a sample of 140, with the same proportions for each color.

Color <- c(rep("Blue", 60), rep("Orange", 30), rep("Green", 20), rep("Yellow", 20),

rep("Red", 10))

Sample <- data.frame(Color)

Proportion_Blue <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(Sample, 140, replace=TRUE)

Proportion_Blue[i] <- sum(BootstrapSample$Color=="Blue")/140

}

Candy_Bootstrap_Results <- data.frame(Proportion_Blue)3.2.8 Bootstrap CI for \(n=140\)

c(q.025, q.975)## 2.5% 97.5%

## 0.3500000 0.5142857Based on our simulation, we are 95% confident that the proportion of blues among all M&M’s is between 0.35 and 0.5142857.

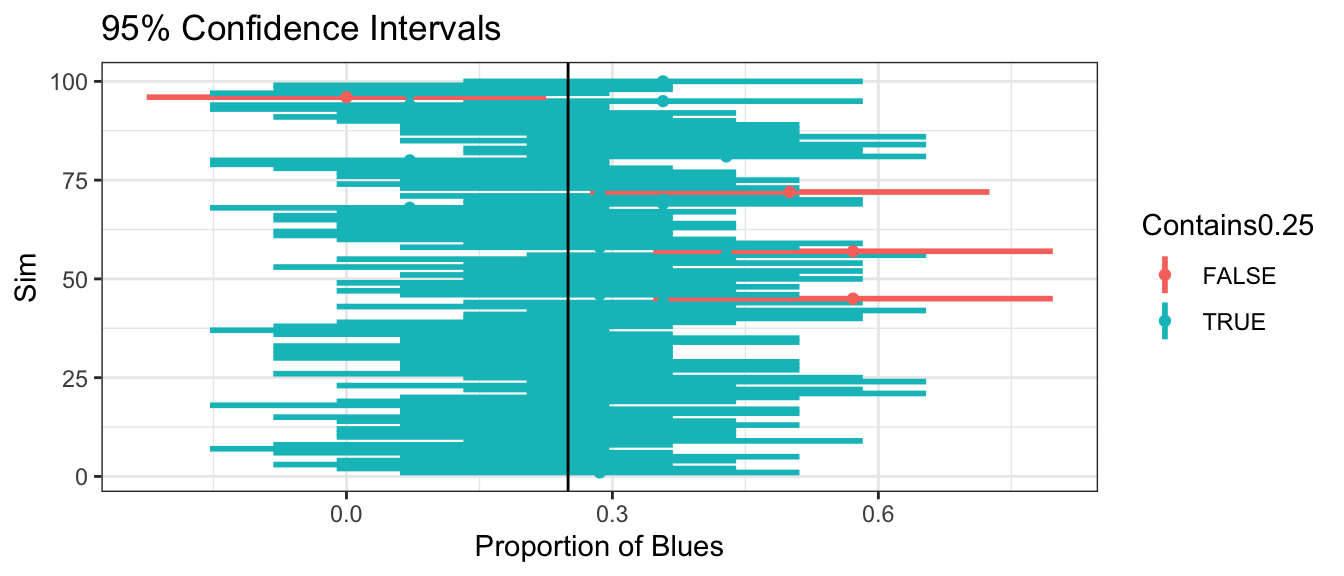

3.2.9 What does “95% Confidence” Mean?

95% confidence means that if we were to repeat our procedure on many, random samples, then in the long run, 95% of them would contain the true population parameter.

Suppose that in reality 25% of all M&M’s were blue. We simulate 100 different samples of 14 M&M’s and calculate confidence intervals for the percentage of blue’s, based on each sample. Intervals are colored according to whether or not the contain the true (typically unknown) parameter value \(p=0.25\)

96 of the samples result in intervals that contain the true proportion \(p=0.25\).

3.3 Bootstrapping Distribution of Sample Mean

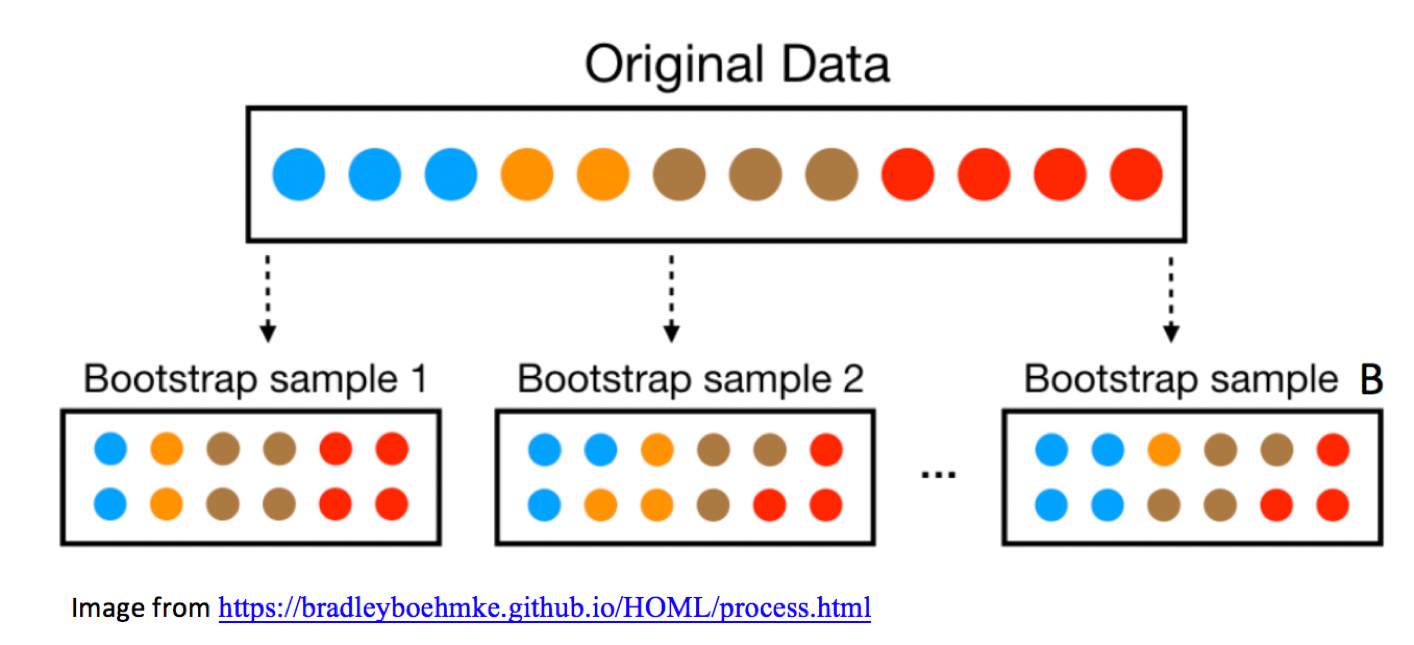

3.3.1 General Bootstrapping Procedure

The bootstrap procedure can be applied to quantify uncertainty associated with a wide range of statistics (for example, sample proportions, means, medians, standard deviations, regression coefficients, F-statistics, etc.)

Given a statistic that was calculated from a sample…

Procedure:

Take a sample of the same size as the original sample, by sampling cases from the original sample, with replacement.

Calculate the statistic of interest in the bootstrap sample.

Repeat steps 2 and 3 many (say 10,000) times, keeping track of the statistic calculated in each bootstrap sample.

Look at the distribution of statistics calculated from the bootstrap samples. The variability in this distribution can be used to approximate the variability in the sampling distribution for the statistic of interest.

3.3.2 Bootstrap Illustration

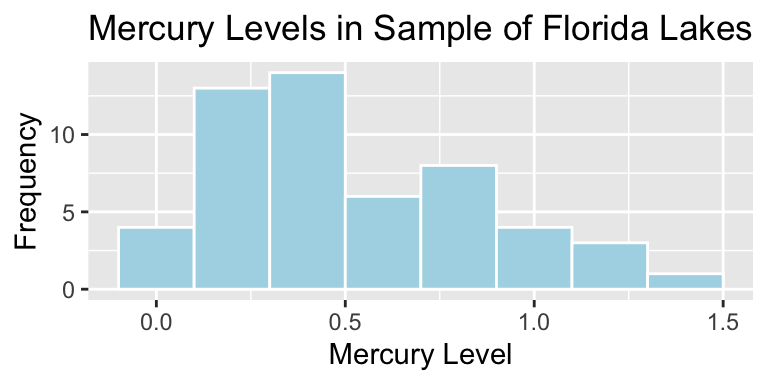

3.3.3 Mercury Levels in Florida Lakes

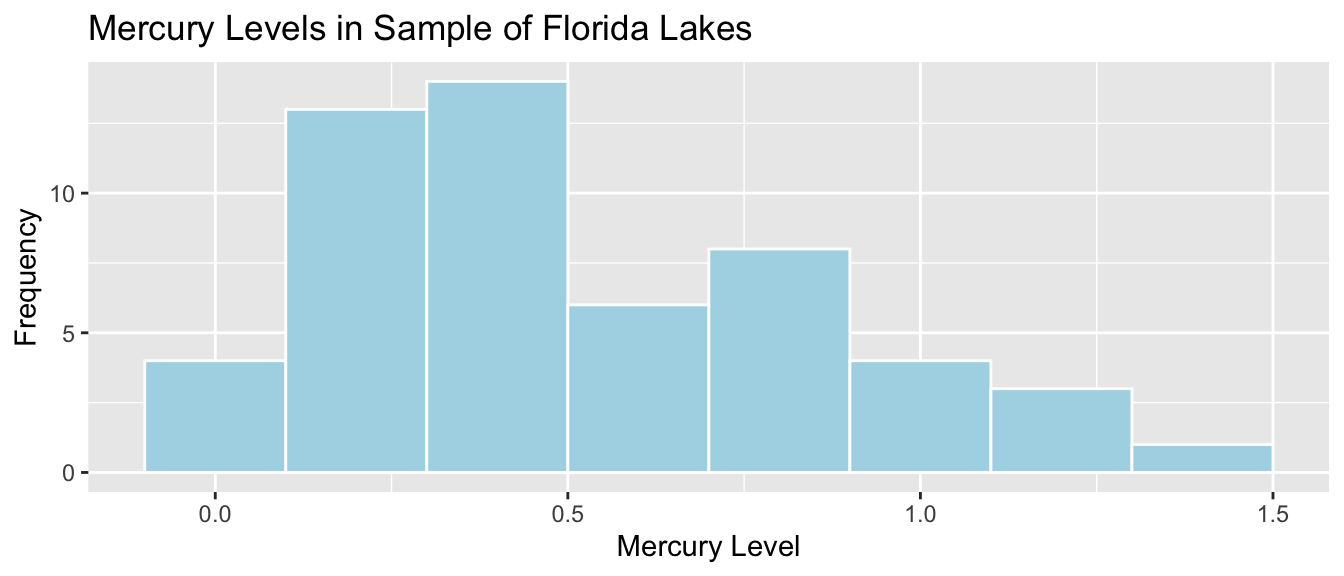

A 2004 study by Lange, T., Royals, H. and Connor, L. examined Mercury accumulation in large-mouth bass, taken from a sample of 53 Florida Lakes. If Mercury accumulation exceeds 0.5 ppm, then there are environmental concerns. In fact, the legal safety limit in Canada is 0.5 ppm, although it is 1 ppm in the United States.

3.3.4 Florida Lakes Dataset

data("FloridaLakes")

glimpse(FloridaLakes)## Rows: 53

## Columns: 12

## $ ID <int> 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 1…

## $ Lake <chr> "Alligator", "Annie", "Apopka", "Blue Cypress", "Bri…

## $ Alkalinity <dbl> 5.9, 3.5, 116.0, 39.4, 2.5, 19.6, 5.2, 71.4, 26.4, 4…

## $ pH <dbl> 6.1, 5.1, 9.1, 6.9, 4.6, 7.3, 5.4, 8.1, 5.8, 6.4, 5.…

## $ Calcium <dbl> 3.0, 1.9, 44.1, 16.4, 2.9, 4.5, 2.8, 55.2, 9.2, 4.6,…

## $ Chlorophyll <dbl> 0.7, 3.2, 128.3, 3.5, 1.8, 44.1, 3.4, 33.7, 1.6, 22.…

## $ AvgMercury <dbl> 1.23, 1.33, 0.04, 0.44, 1.20, 0.27, 0.48, 0.19, 0.83…

## $ NumSamples <int> 5, 7, 6, 12, 12, 14, 10, 12, 24, 12, 12, 12, 7, 43, …

## $ MinMercury <dbl> 0.85, 0.92, 0.04, 0.13, 0.69, 0.04, 0.30, 0.08, 0.26…

## $ MaxMercury <dbl> 1.43, 1.90, 0.06, 0.84, 1.50, 0.48, 0.72, 0.38, 1.40…

## $ ThreeYrStdMercury <dbl> 1.53, 1.33, 0.04, 0.44, 1.33, 0.25, 0.45, 0.16, 0.72…

## $ AgeData <int> 1, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 1, 1, 1…3.3.5 Mercury Level in Sample of 53 Florida Lakes

## Lake AvgMercury

## Alligator 1.23

## Annie 1.33

## Apopka 0.04

## Blue Cypress 0.44

## Brick 1.20

## Bryant 0.27

## Cherry 0.48

## Crescent 0.19

## Deer Point 0.83

## Dias 0.81

## Dorr 0.71

## Down 0.50

## Eaton 0.49

## East Tohopekaliga 1.16

## Farm-13 0.05

## George 0.15

## Griffin 0.19## Lake AvgMercury

## Harney 0.77

## Hart 1.08

## Hatchineha 0.98

## Iamonia 0.63

## Istokpoga 0.56

## Jackson 0.41

## Josephine 0.73

## Kingsley 0.34

## Kissimmee 0.59

## Lochloosa 0.34

## Louisa 0.84

## Miccasukee 0.50

## Minneola 0.34

## Monroe 0.28

## Newmans 0.34

## Ocean Pond 0.87

## Ocheese Pond 0.56

## Okeechobee 0.17## Lake AvgMercury

## Orange 0.18

## Panasoffkee 0.19

## Parker 0.04

## Placid 0.49

## Puzzle 1.10

## Rodman 0.16

## Rousseau 0.10

## Sampson 0.48

## Shipp 0.21

## Talquin 0.86

## Tarpon 0.52

## Tohopekaliga 0.65

## Trafford 0.27

## Trout 0.94

## Tsala Apopka 0.40

## Weir 0.43

## Wildcat 0.25

## Yale 0.27Sample Mean:

mean(FloridaLakes$AvgMercury)## [1] 0.52716983.3.6 Distribution of Mercury Levels

## # A tibble: 1 x 5

## MeanHg MedianHg StDevHG PropOver1 N

## <dbl> <dbl> <dbl> <dbl> <int>

## 1 0.527 0.48 0.341 0.113 533.3.7 Bootstrapping for Mean Mercury Level in All Lakes

In our sample of 53 lakes, the mean mercury level is 0.527 ppm. However, this was just a random sample of lakes. We are interested in a confidence interval for the mean mercury level for all Florida Lakes.

Bootstrapping Procedure

Take a bootstrap sample of size 53, by sampling lakes with replacement.

Calculate the mean mercury concentration in the bootstrap sample.

Repeat steps 2 and 3 10,000 times, keeping track of the mean mercury concentrations in each bootstrap sample.

Look at the distribution of mean concentrations from the bootstrap samples. The variability in this distribution can be used to approximate the variability in the sampling distribution for the sample mean.

3.3.8 Original Sample

## Lake AvgMercury

## Alligator 1.23

## Annie 1.33

## Apopka 0.04

## Blue Cypress 0.44

## Brick 1.20

## Bryant 0.27

## Cherry 0.48

## Crescent 0.19

## Deer Point 0.83

## Dias 0.81

## Dorr 0.71

## Down 0.50

## Eaton 0.49

## East Tohopekaliga 1.16

## Farm-13 0.05

## George 0.15

## Griffin 0.19## Lake AvgMercury

## Harney 0.77

## Hart 1.08

## Hatchineha 0.98

## Iamonia 0.63

## Istokpoga 0.56

## Jackson 0.41

## Josephine 0.73

## Kingsley 0.34

## Kissimmee 0.59

## Lochloosa 0.34

## Louisa 0.84

## Miccasukee 0.50

## Minneola 0.34

## Monroe 0.28

## Newmans 0.34

## Ocean Pond 0.87

## Ocheese Pond 0.56

## Okeechobee 0.17## Lake AvgMercury

## Orange 0.18

## Panasoffkee 0.19

## Parker 0.04

## Placid 0.49

## Puzzle 1.10

## Rodman 0.16

## Rousseau 0.10

## Sampson 0.48

## Shipp 0.21

## Talquin 0.86

## Tarpon 0.52

## Tohopekaliga 0.65

## Trafford 0.27

## Trout 0.94

## Tsala Apopka 0.40

## Weir 0.43

## Wildcat 0.25

## Yale 0.27Sample Mean:

mean(FloridaLakes$AvgMercury)## [1] 0.52716983.3.9 Five Bootstrap Samples

The sample_n() function samples the specified number rows from a data frame, with or without replacement.

BootstrapSample1 <- sample_n(FloridaLakes, 53, replace=TRUE) %>% arrange(Lake)BootstrapSample2 <- sample_n(FloridaLakes, 53, replace=TRUE) %>% arrange(Lake)BootstrapSample3 <- sample_n(FloridaLakes, 53, replace=TRUE) %>% arrange(Lake)BootstrapSample4 <- sample_n(FloridaLakes, 53, replace=TRUE) %>% arrange(Lake)BootstrapSample5 <- sample_n(FloridaLakes, 53, replace=TRUE) %>% arrange(Lake)3.3.10 Bootstrap Sample 1

## Lake AvgMercury

## Alligator 1.23

## Annie 1.33

## Apopka 0.04

## Bryant 0.27

## Bryant 0.27

## Cherry 0.48

## Cherry 0.48

## Deer Point 0.83

## Deer Point 0.83

## Dias 0.81

## Dias 0.81

## Farm-13 0.05

## Farm-13 0.05

## Farm-13 0.05

## Griffin 0.19

## Hart 1.08

## Hatchineha 0.98## Lake AvgMercury

## Hatchineha 0.98

## Iamonia 0.63

## Istokpoga 0.56

## Josephine 0.73

## Josephine 0.73

## Kingsley 0.34

## Kingsley 0.34

## Kissimmee 0.59

## Kissimmee 0.59

## Lochloosa 0.34

## Miccasukee 0.50

## Newmans 0.34

## Newmans 0.34

## Newmans 0.34

## Okeechobee 0.17

## Okeechobee 0.17

## Parker 0.04

## Parker 0.04## Lake AvgMercury

## Placid 0.49

## Rodman 0.16

## Rousseau 0.10

## Sampson 0.48

## Sampson 0.48

## Sampson 0.48

## Talquin 0.86

## Talquin 0.86

## Talquin 0.86

## Tarpon 0.52

## Trout 0.94

## Trout 0.94

## Trout 0.94

## Tsala Apopka 0.40

## Wildcat 0.25

## Wildcat 0.25

## Wildcat 0.25

## Yale 0.27Bootstrap Sample Mean:

mean(BootstrapSample1$AvgMercury)## [1] 0.51094343.3.11 Bootstrap Sample 2

## Lake AvgMercury

## Annie 1.33

## Apopka 0.04

## Brick 1.20

## Cherry 0.48

## Cherry 0.48

## Cherry 0.48

## Crescent 0.19

## Deer Point 0.83

## Dorr 0.71

## Down 0.50

## East Tohopekaliga 1.16

## East Tohopekaliga 1.16

## Eaton 0.49

## Farm-13 0.05

## George 0.15

## Griffin 0.19

## Griffin 0.19## Lake AvgMercury

## Iamonia 0.63

## Iamonia 0.63

## Istokpoga 0.56

## Josephine 0.73

## Kissimmee 0.59

## Kissimmee 0.59

## Louisa 0.84

## Miccasukee 0.50

## Minneola 0.34

## Ocean Pond 0.87

## Okeechobee 0.17

## Okeechobee 0.17

## Orange 0.18

## Orange 0.18

## Orange 0.18

## Orange 0.18

## Panasoffkee 0.19

## Panasoffkee 0.19## Lake AvgMercury

## Panasoffkee 0.19

## Puzzle 1.10

## Puzzle 1.10

## Puzzle 1.10

## Rodman 0.16

## Rodman 0.16

## Rodman 0.16

## Shipp 0.21

## Talquin 0.86

## Talquin 0.86

## Talquin 0.86

## Tarpon 0.52

## Tohopekaliga 0.65

## Trafford 0.27

## Trout 0.94

## Weir 0.43

## Weir 0.43

## Yale 0.27Bootstrap Sample Mean:

mean(BootstrapSample2$AvgMercury)## [1] 0.52113213.3.12 Bootstrap Sample 3

## Lake AvgMercury

## Annie 1.33

## Annie 1.33

## Annie 1.33

## Annie 1.33

## Apopka 0.04

## Apopka 0.04

## Blue Cypress 0.44

## Blue Cypress 0.44

## Blue Cypress 0.44

## Blue Cypress 0.44

## Cherry 0.48

## Down 0.50

## Eaton 0.49

## Eaton 0.49

## George 0.15

## Hart 1.08

## Hart 1.08## Lake AvgMercury

## Hatchineha 0.98

## Iamonia 0.63

## Jackson 0.41

## Kissimmee 0.59

## Louisa 0.84

## Miccasukee 0.50

## Minneola 0.34

## Newmans 0.34

## Ocean Pond 0.87

## Ocean Pond 0.87

## Ocheese Pond 0.56

## Ocheese Pond 0.56

## Orange 0.18

## Orange 0.18

## Panasoffkee 0.19

## Rodman 0.16

## Rodman 0.16

## Rousseau 0.10## Lake AvgMercury

## Sampson 0.48

## Sampson 0.48

## Shipp 0.21

## Talquin 0.86

## Tarpon 0.52

## Trafford 0.27

## Trafford 0.27

## Trafford 0.27

## Trout 0.94

## Tsala Apopka 0.40

## Weir 0.43

## Wildcat 0.25

## Wildcat 0.25

## Wildcat 0.25

## Yale 0.27

## Yale 0.27

## Yale 0.27

## Yale 0.27Bootstrap Sample Mean:

mean(BootstrapSample3$AvgMercury)## [1] 0.50660383.3.13 Bootstrap Sample 4

## Lake AvgMercury

## Alligator 1.23

## Alligator 1.23

## Annie 1.33

## Annie 1.33

## Apopka 0.04

## Bryant 0.27

## Cherry 0.48

## Crescent 0.19

## Deer Point 0.83

## Down 0.50

## Farm-13 0.05

## George 0.15

## Griffin 0.19

## Harney 0.77

## Hart 1.08

## Hatchineha 0.98

## Hatchineha 0.98## Lake AvgMercury

## Hatchineha 0.98

## Istokpoga 0.56

## Jackson 0.41

## Kingsley 0.34

## Miccasukee 0.50

## Miccasukee 0.50

## Minneola 0.34

## Minneola 0.34

## Minneola 0.34

## Monroe 0.28

## Newmans 0.34

## Ocean Pond 0.87

## Ocean Pond 0.87

## Okeechobee 0.17

## Orange 0.18

## Orange 0.18

## Orange 0.18

## Panasoffkee 0.19## Lake AvgMercury

## Placid 0.49

## Placid 0.49

## Placid 0.49

## Puzzle 1.10

## Rousseau 0.10

## Rousseau 0.10

## Sampson 0.48

## Shipp 0.21

## Talquin 0.86

## Tarpon 0.52

## Tarpon 0.52

## Tohopekaliga 0.65

## Trafford 0.27

## Trafford 0.27

## Weir 0.43

## Wildcat 0.25

## Yale 0.27

## Yale 0.27Bootstrap Sample Mean:

mean(BootstrapSample4$AvgMercury)## [1] 0.50886793.3.14 Bootstrap Sample 5

## Lake AvgMercury

## Alligator 1.23

## Alligator 1.23

## Annie 1.33

## Apopka 0.04

## Brick 1.20

## Bryant 0.27

## Cherry 0.48

## Down 0.50

## Down 0.50

## East Tohopekaliga 1.16

## Eaton 0.49

## Farm-13 0.05

## Farm-13 0.05

## Farm-13 0.05

## Harney 0.77

## Harney 0.77

## Hart 1.08## Lake AvgMercury

## Hatchineha 0.98

## Lochloosa 0.34

## Lochloosa 0.34

## Louisa 0.84

## Miccasukee 0.50

## Miccasukee 0.50

## Minneola 0.34

## Monroe 0.28

## Monroe 0.28

## Monroe 0.28

## Monroe 0.28

## Newmans 0.34

## Newmans 0.34

## Newmans 0.34

## Okeechobee 0.17

## Orange 0.18

## Orange 0.18

## Placid 0.49## Lake AvgMercury

## Placid 0.49

## Puzzle 1.10

## Puzzle 1.10

## Rodman 0.16

## Rousseau 0.10

## Shipp 0.21

## Shipp 0.21

## Talquin 0.86

## Talquin 0.86

## Tarpon 0.52

## Trafford 0.27

## Trafford 0.27

## Trafford 0.27

## Trafford 0.27

## Tsala Apopka 0.40

## Weir 0.43

## Weir 0.43

## Wildcat 0.25Bootstrap Sample Mean:

mean(BootstrapSample5$AvgMercury)## [1] 0.49811323.3.15 Code for Florida Lakes Bootstrap for Sample Mean

MeanHg <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(FloridaLakes, 53, replace=TRUE)

MeanHg[i] <- mean(BootstrapSample$AvgMercury)

}

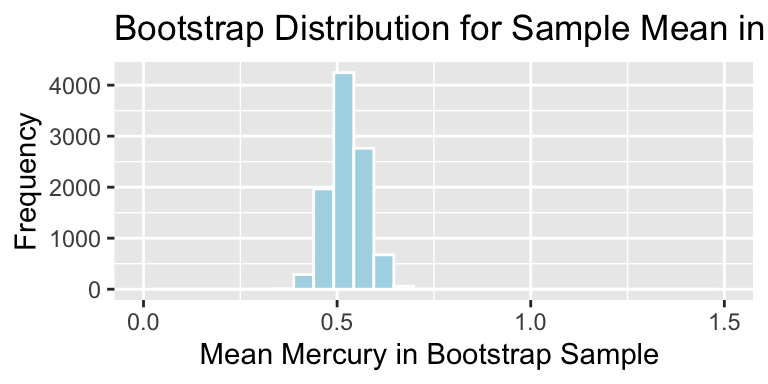

Lakes_Bootstrap_Results_Mean <- data.frame(MeanHg)3.3.16 Florida Lakes Bootstrap Distribution for Mean

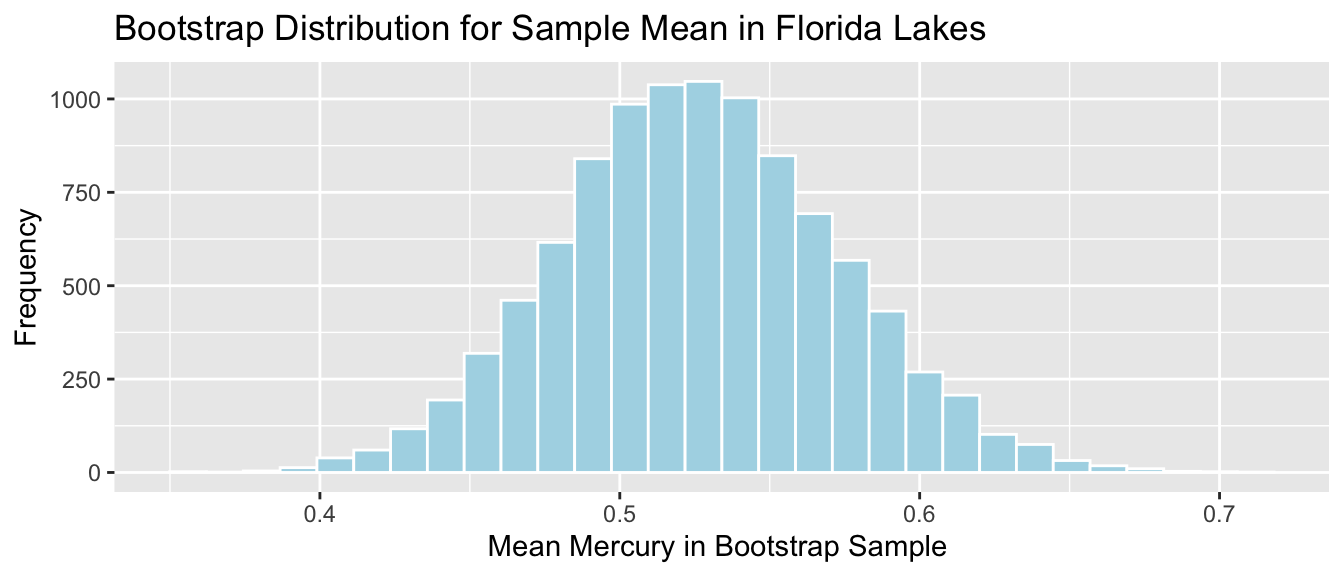

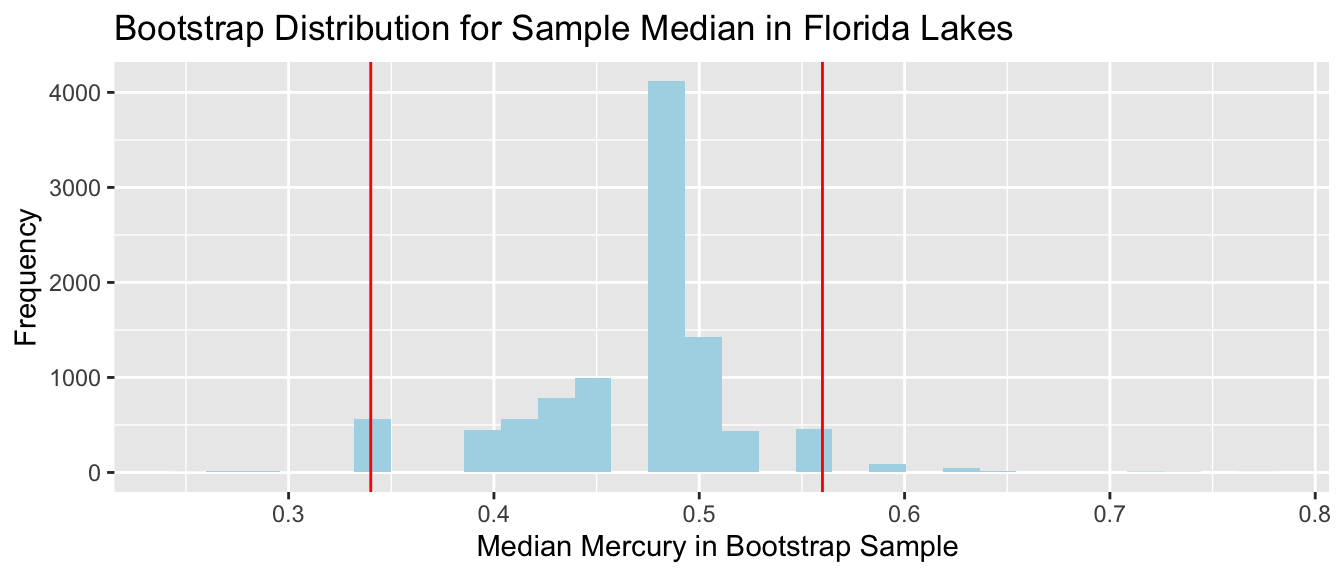

Lakes_Bootstrap_Mean <- ggplot(data=Lakes_Bootstrap_Results_Mean, aes(x=MeanHg)) +

geom_histogram(color="white", fill="lightblue") +

xlab("Mean Mercury in Bootstrap Sample ") + ylab("Frequency") +

ggtitle("Bootstrap Distribution for Sample Mean in Florida Lakes") +

theme(legend.position = "none")

Lakes_Bootstrap_Mean

3.3.17 Bootstrap Percentile Interval for Mean Hg Level

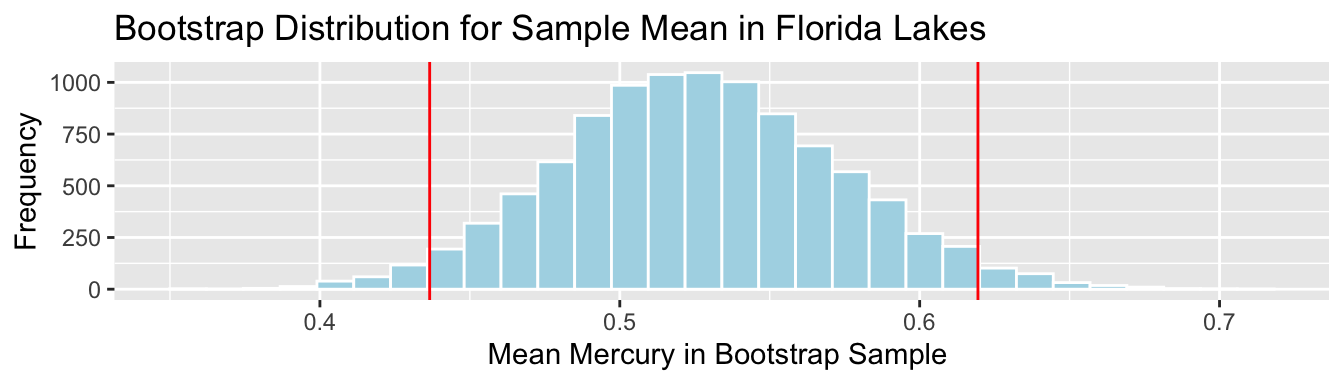

q.025 <- quantile(Lakes_Bootstrap_Results_Mean$MeanHg, 0.025)

q.975 <- quantile(Lakes_Bootstrap_Results_Mean$MeanHg, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 0.4366038 0.6194340Lakes_Bootstrap_Mean + geom_vline(xintercept=c(q.025,q.975), color="red")

We are 95% confident that the mean mercury level is all Florida Lakes is between 0.44 and 0.62 ppm.

3.3.18 Bootstrap SE Interval for Mean Hg Level

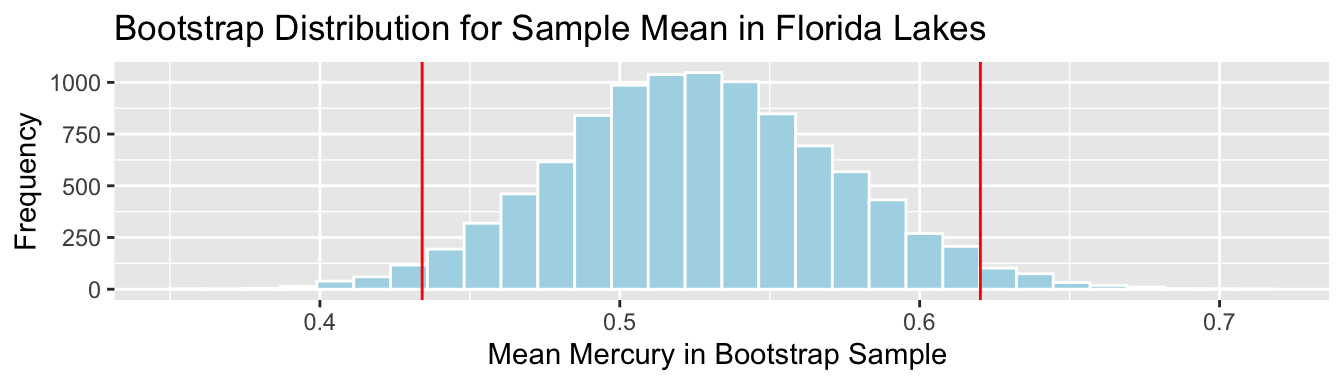

SampMean <- mean(FloridaLakes$AvgMercury)

SE <- sd(Lakes_Bootstrap_Results_Mean$MeanHg)

c(SampMean-2*SE, SampMean+2*SE)## [1] 0.4340912 0.6202485Lakes_Bootstrap_Mean + geom_vline(xintercept=c(c(SampMean-2*SE, SampMean+2*SE)), color="red")

We are 95% confident that the mean mercury level is all Florida Lakes is between 0.43 and 0.62 ppm.

Again, the percentile and standard error intervals closely agree.

3.3.19 Standard Deviation and Standard Error

- Standard error is the standard deviation of the distribution of a statistic, across many samples of the given size. This is different than the sample standard deviation, which pertains to the amount of variability between individuals in the sample

Standard Deviation:

sd(FloridaLakes$AvgMercury)## [1] 0.3410356The standard deviation in mercury levels between individual lakes is 0.341 ppm.

Standard Error for Mean:

SE <- sd(Lakes_Bootstrap_Results_Mean$MeanHg); SE## [1] 0.04653932The standard deviation in the distribution for mean mercury levels between different samples of 53 lakes is approximately 0.0465393 ppm.

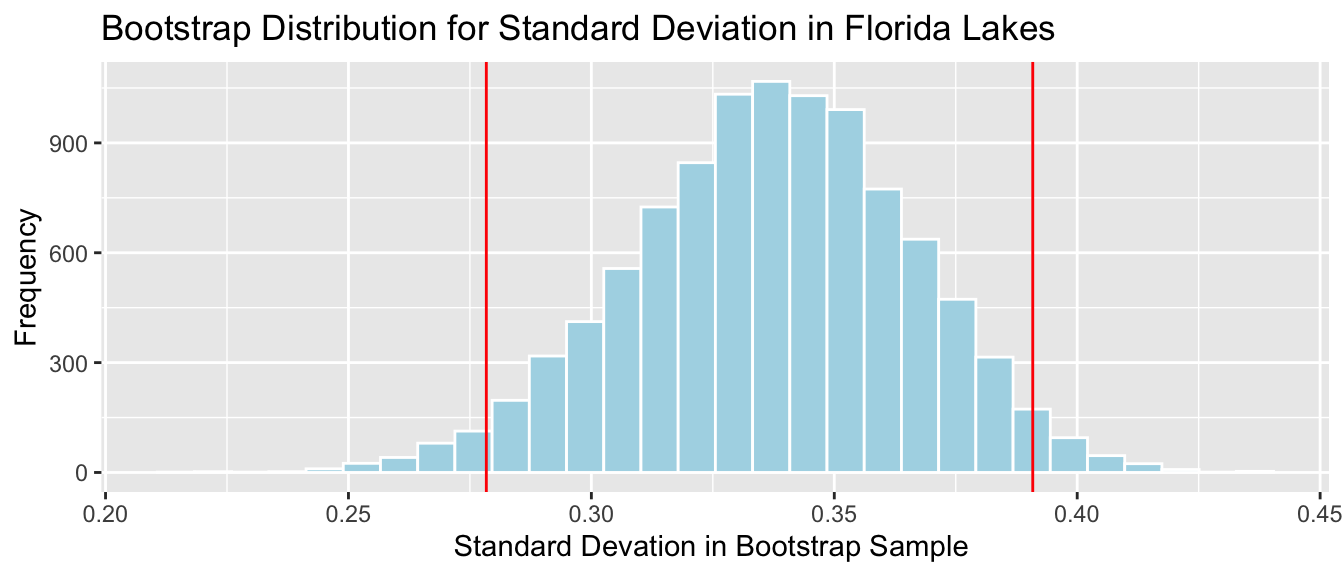

3.4 Bootstrapping Distribution for Statistics other than Mean

3.4.1 Other Quanitites for Florida Lakes

We might be interested in quantities other than mean mercury level for the lakes. For example:

- standard deviation in mercury level

sd(FloridaLakes$AvgMercury)## [1] 0.3410356- percentage of lakes with mercury level exceeding 1 ppm

mean(FloridaLakes$AvgMercury>1)## [1] 0.1132075- median mercury level

median(FloridaLakes$AvgMercury)## [1] 0.483.4.2 Bootstrapping for Other Quantities

We can also use bootstrapping to obtain confidence intervals for the median and standard deviation in mercury levels in Florida lakes.

StDevHg <- rep(NA, 10000)

PropOver1 <- rep(NA, 10000)

MedianHg <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(FloridaLakes, 53, replace=TRUE)

StDevHg[i] <- sd(BootstrapSample$AvgMercury)

PropOver1[i] <- mean(BootstrapSample$AvgMercury>1)

MedianHg[i] <- median(BootstrapSample$AvgMercury)

}

Lakes_Bootstrap_Results_Other <- data.frame(MedianHg, PropOver1, StDevHg)3.4.3 Lakes Bootstrap Percentile CI for St. Dev.

q.025 <- quantile(Lakes_Bootstrap_Results_Other$StDevHg, 0.025)

q.975 <- quantile(Lakes_Bootstrap_Results_Other$StDevHg, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 0.2783774 0.3908507

We are 95% confident that the standard deviation in mercury level for all Florida Lakes is between 0.28 and 0.39 ppm.

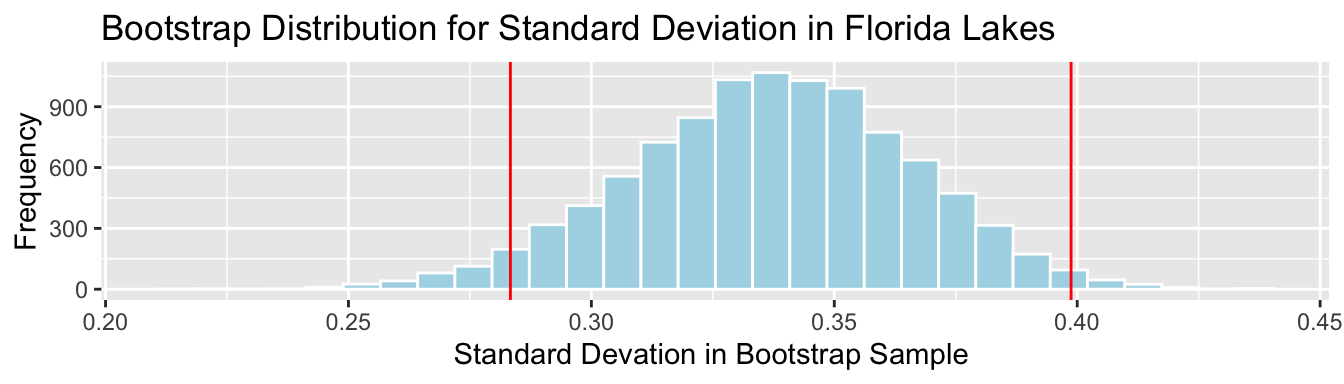

3.4.4 Bootstrap SE Interval for St.Dev. in Hg Level

SampSD <- sd(FloridaLakes$AvgMercury)

SE <- sd(Lakes_Bootstrap_Results_Other$StDevHg)

c(SampSD-2*SE, SampSD+2*SE)## [1] 0.2833343 0.3987369Lakes_Bootstrap_SD + geom_vline(xintercept=c(c(SampSD-2*SE, SampSD+2*SE)), color="red")

We are 95% confident that the standard deviation in mercury level is all Florida Lakes is between 0.28 and 0.4 ppm.

Again, the percentile and standard error intervals closely agree.

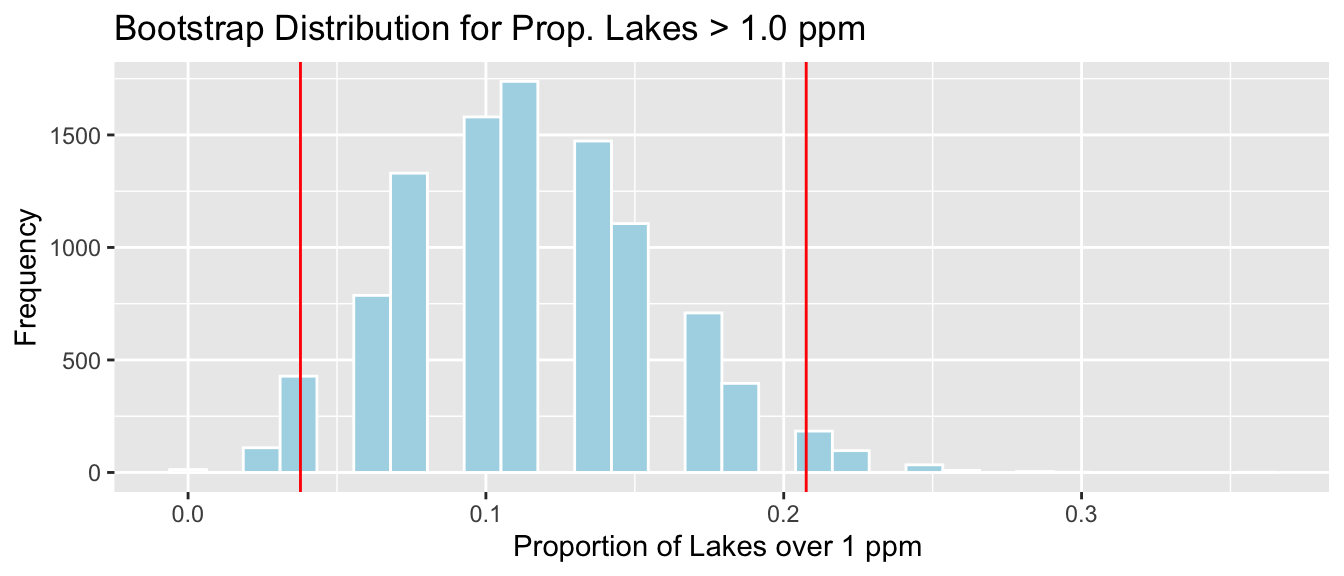

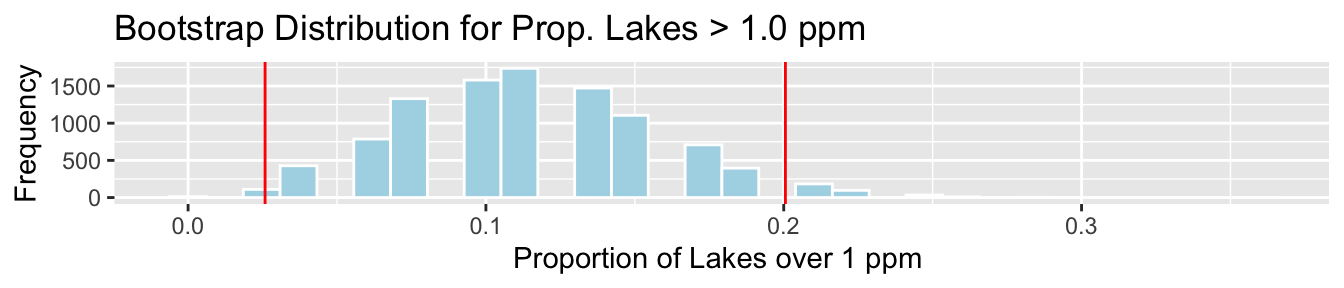

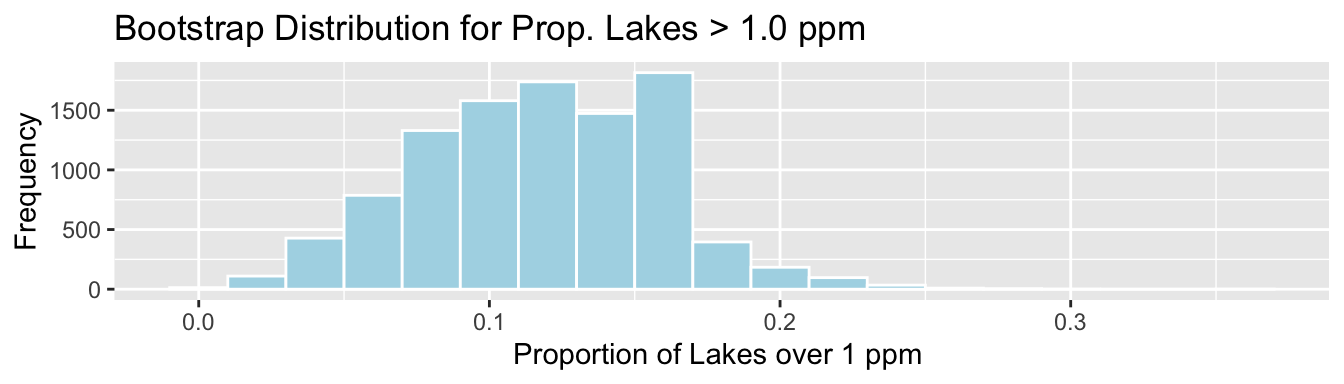

3.4.5 Bootstrap Percentile CI for Prop. > 1 ppm

q.025 <- quantile(Lakes_Bootstrap_Results_Other$PropOver1, 0.025)

q.975 <- quantile(Lakes_Bootstrap_Results_Other$PropOver1, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 0.03773585 0.20754717

We are 95% confident that the proportion of all Florida lakes with mercury level over 1 ppm is between 0.04 and 0.21.

3.4.6 Bootstrap SE Interval for Prop > 1 ppm

PropOver1 <- mean(FloridaLakes$AvgMercury>1)

SE <- sd(Lakes_Bootstrap_Results_Other$PropOver1)

c(PropOver1-2*SE, PropOver1+2*SE)## [1] 0.02585519 0.20055991Lakes_Bootstrap_PropOver1 + geom_vline(xintercept=c(c(PropOver1-2*SE, PropOver1+2*SE)), color="red")

We are 95% confident that the standard deviation in mercury level is all Florida Lakes is between 0.03 and 0.2 ppm.

There are small differences between the bootstrap percentile interval and bootstrap standard error intervals. This is due to slight right-skewness in the bootstrap situations. When this happens, the bootstrap percentile interval is usually more reliable.

3.4.7 Bootstrap Percentile CI for Median

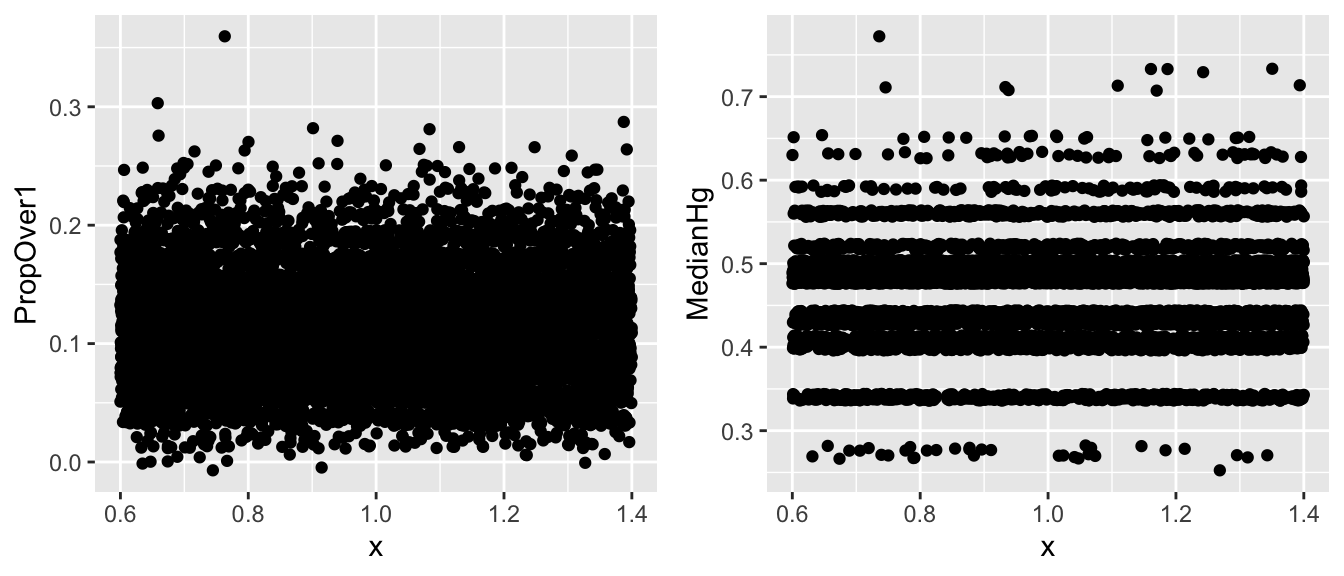

We should not draw conclusions from this bootstrap distribution. The bootstrap is unreliable when we see the same values coming up repeatedly in clusters, with large gaps in between.

This can be an issue for statistics that are a single value from the dataset (for example median)

3.4.8 When Gaps are/ aren’t OK

- Sometimes,

ggplot()shows gaps in a histogram, due mainly to binwidth. If the points seems to follow a fairly smooth trend (such as for prop > 1), then bootstrapping is ok. If there are large clusters and gaps (such as for median), bootstrapping is inadvisable.

Jitter plots can help us look for clusters and gaps.

V1 <- ggplot(data=Lakes_Bootstrap_Results_Other, aes(y=PropOver1, x=1)) + geom_jitter()

V2 <- ggplot(data=Lakes_Bootstrap_Results_Other, aes(y=MedianHg, x=1)) + geom_jitter()

grid.arrange(V1, V2, ncol=2)

3.4.9 Changing Binwidth in Histogram

Sometimes, default settings in

geom_histogram()lead to less that optimal graphs. ( For example, oddly-placed gaps that do not accurately represent the shape of the data)When a histogram shows undesired gaps, that are not really indivative of large gaps in the data, we can sometimes get rid of them by adjusting the binwidth.

Before you do this, explore the data, such as through jitter plots. Do not change binwidth to intentionally manipulate or hide undesirable information. Your goal should be to find a plot that accurately displays the shape/trend in the data.

ggplot(data=Lakes_Bootstrap_Results_Other, aes(x=PropOver1)) +

geom_histogram(color="white", fill="lightblue", binwidth=0.02) +

xlab("Proportion of Lakes over 1 ppm ") + ylab("Frequency") +

ggtitle("Bootstrap Distribution for Prop. Lakes > 1.0 ppm")

3.4.10 What Bootstrapping Does and Doesn’t Do

The purpose of bootsrapping is to quantify the amount of uncertainty associated with a statistic that was calculated from a sample.

A common misperception is that bootstrapping somehow increases the size of a sample by creating copies (or sampling with replacement). This is wrong!!!! Bootstrap samples are obtained by sampling from the original data, so they contain no new information and do not increase sample size. They simply help us understand how much our sample result could reasonably differ from that of the full population.

3.5 Bootstrap Distribution for Difference in Sample Means

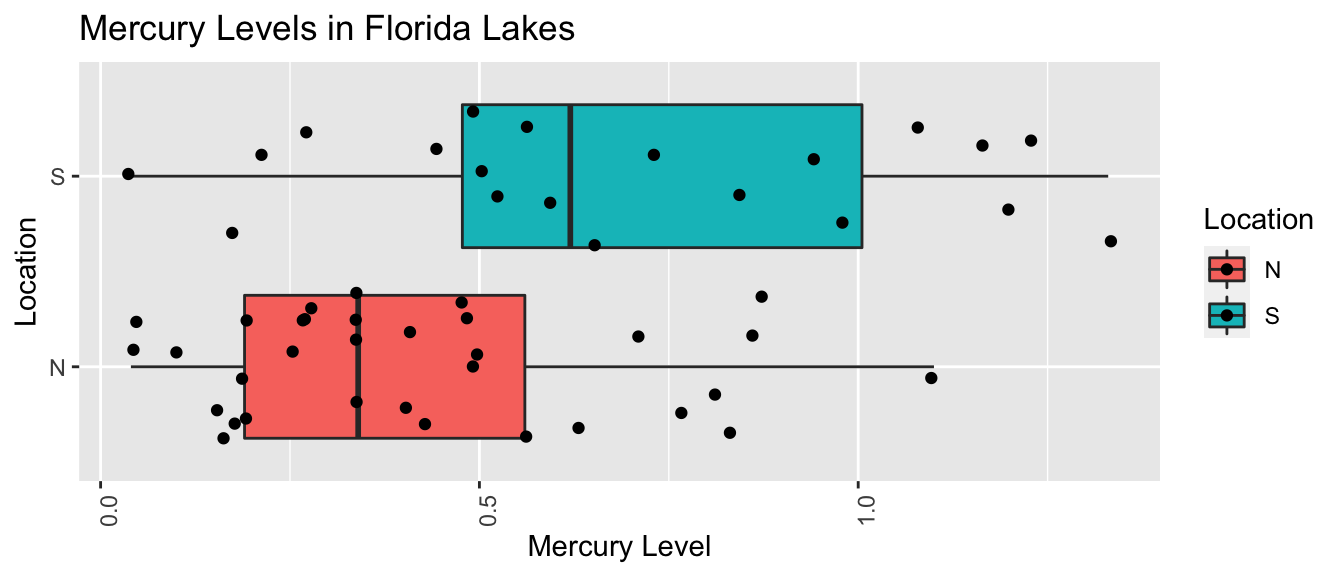

3.5.1 Mercury Levels in Northern vs Southern Florida Lakes

We previously estimated the mean mercury level in Florida Lakes. We might be interested in whether mercury levels are higher or lower, on average, in Northern Florida compared to Southern Florida.

We’ll divide the state along route 50, which runs East-West, passing through Northern Orlando.

Figure 3.2: from Google Maps

3.5.2 Comparing Northern and Southern Lakes

We add a variable indicating whether each lake lies in the northern or southern part of the state.

library(Lock5Data)

data(FloridaLakes)

#Location relative to rt. 50

FloridaLakes$Location <- as.factor(c("S","S","N","S","S","N","N","N","N","N","N","S","N","S","N","N","N","N","S","S","N","S","N","S","N","S","N","S","N","N","N","N","N","N","S","N","N","S","S","N","N","N","N","S","N","S","S","S","S","N","N","N","N"))

head(FloridaLakes %>% select(Lake, AvgMercury, Location))## # A tibble: 6 x 3

## Lake AvgMercury Location

## <chr> <dbl> <fct>

## 1 Alligator 1.23 S

## 2 Annie 1.33 S

## 3 Apopka 0.04 N

## 4 Blue Cypress 0.44 S

## 5 Brick 1.2 S

## 6 Bryant 0.27 N3.5.3 Comparing Lakes in North and South Florida

LakesBP <- ggplot(data=FloridaLakes, aes(x=Location, y=AvgMercury, fill=Location)) +

geom_boxplot() + geom_jitter() + ggtitle("Mercury Levels in Florida Lakes") +

xlab("Location") + ylab("Mercury Level") + theme(axis.text.x = element_text(angle = 90)) + coord_flip()

LakesBP

3.5.4 Comparing Lakes in North and South Florida (cont.)

LakesTable <- FloridaLakes %>% group_by(Location) %>% summarize(MeanHg=mean(AvgMercury),

StDevHg=sd(AvgMercury),

N=n())

LakesTable## # A tibble: 2 x 4

## Location MeanHg StDevHg N

## <fct> <dbl> <dbl> <int>

## 1 N 0.425 0.270 33

## 2 S 0.696 0.384 203.5.5 Model for Northern and Southern Lakes

\(\widehat{\text{Hg}} = b_0 +b_1\text{I}_{\text{South}}\)

- \(b_0\) represents the mean mercury level for lakes in North Florida, and

- \(b_1\) represents the mean difference in mercury level for lakes in South Florida, compared to North Florida

3.5.6 Model for Lakes R Output

Lakes_M <- lm(data=FloridaLakes, AvgMercury ~ Location)

summary(Lakes_M)##

## Call:

## lm(formula = AvgMercury ~ Location, data = FloridaLakes)

##

## Residuals:

## Min 1Q Median 3Q Max

## -0.65650 -0.23455 -0.08455 0.24350 0.67545

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 0.42455 0.05519 7.692 0.000000000441 ***

## LocationS 0.27195 0.08985 3.027 0.00387 **

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.3171 on 51 degrees of freedom

## Multiple R-squared: 0.1523, Adjusted R-squared: 0.1357

## F-statistic: 9.162 on 1 and 51 DF, p-value: 0.0038683.5.7 Interpreting Lakes Regression Output

\(\widehat{\text{Hg}} = 0.4245455 +0.2719545\text{I}_{\text{South}}\)

\(b_1 = 0.27915= 0.6965 - 0.4245\) is equal to the difference in mean mercury levels between Northern and Southern lakes. (We’ve already seen that for categorical variables, the least-squares estimate is the mean, so this makes sense.)

We can use \(b_1\) to assess the size of the difference in mean mercury concentration levels.

Since the lakes we observed are only a sample of all lakes, we cannot assume the difference in mercury concentrations is exactly 0.4245 for all Northern vs Southern Florida lakes. We can use bootstrapping to find a reasonable range for this difference.

3.5.8 Bootstrapping for Northern vs Southern Lakes

Bootstrapping Procedure

Take bootstrap samples of 33 northern Lakes, and 20 southern Lakes, by sampling with replacement.

Fit a model and record regression coefficient \(b_1\).

Repeat steps 2 and 3 10,000 times, keeping track of the regression coefficient estimates in each bootstrap sample.

Look at the distribution of regression coefficients in the bootstrap samples. The variability in this distribution can be used to approximate the variability in the sampling distributions for the \(b_1\).

3.5.9 Code for Bootstrapping for N vs S Lakes

b1 <- rep(NA, 10000) #vector to store b1 values

for (i in 1:10000){

NLakes <- sample_n(FloridaLakes %>% filter(Location=="N"), 33, replace=TRUE) ## sample 33 northern lakes

SLakes <- sample_n(FloridaLakes %>% filter(Location=="S"), 20, replace=TRUE) ## sample 20 southern lakes

BootstrapSample <- rbind(NLakes, SLakes) ## combine Northern and Southern Lakes

M <- lm(data=BootstrapSample, AvgMercury ~ Location) ## fit linear model

b1[i] <- coef(M)[2] ## record b1

}

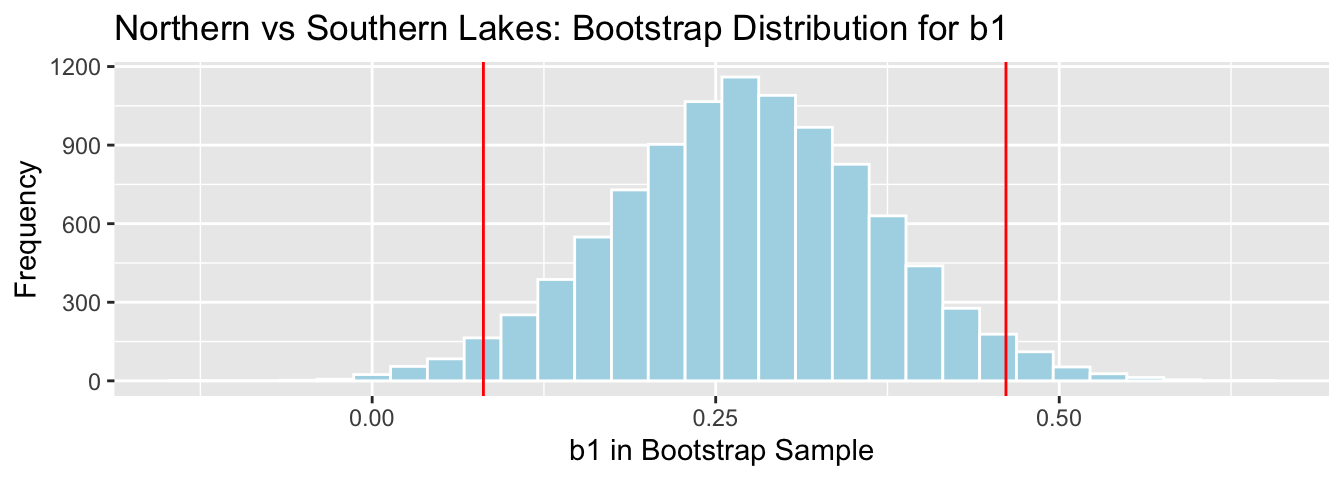

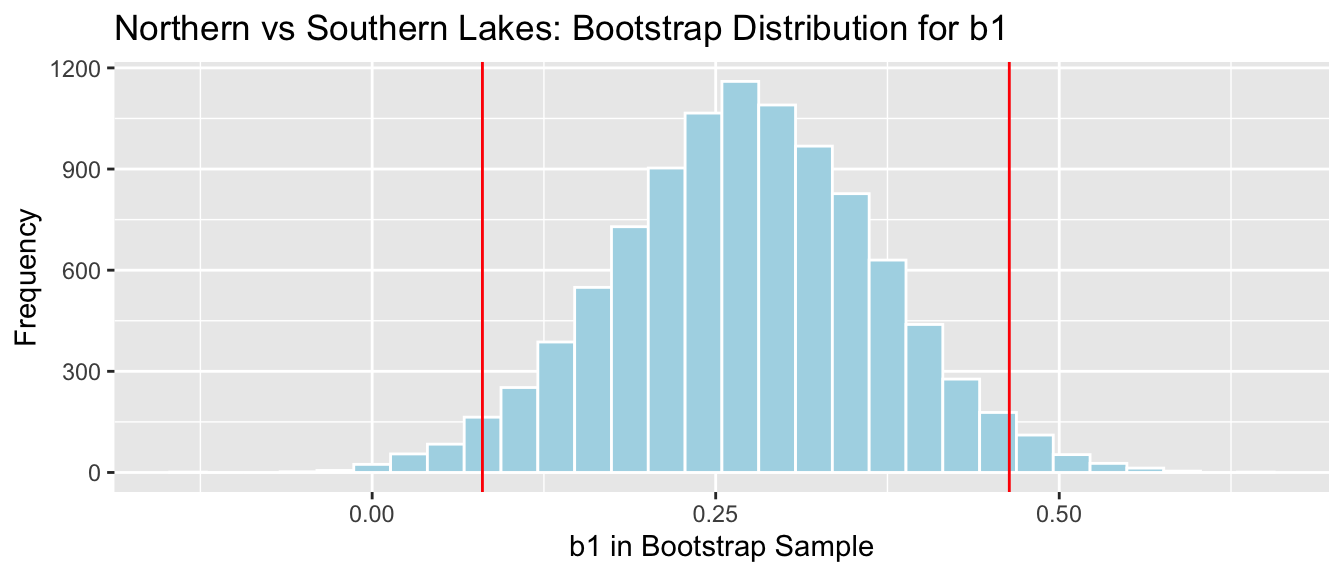

NS_Lakes_Bootstrap_Results <- data.frame(b1) #save results as dataframe3.5.10 Lakes: Bootstrap Percentile CI for Avg. Diff.

q.025 <- quantile(NS_Lakes_Bootstrap_Results$b1, 0.025)

q.975 <- quantile(NS_Lakes_Bootstrap_Results$b1, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 0.08095682 0.46122992

We are 95% confident the average mercury level in Southern Lakes is between 0.08 and 0.46 ppm higher than in Northern Florida.

3.5.11 Lakes: Bootstrap SE CI for Avg. Difference

SE <- sd(NS_Lakes_Bootstrap_Results$b1)

SE## [1] 0.09585589Note that this is fairly close to the standard error for \(b_1\) reported in the R model output, but does not match exactly. R is calculating standard errors using a different approach that we’ll discuss later.

MeanDiff <- coef(Lakes_M)[2]

c(MeanDiff-2*SE, MeanDiff+2*SE)## LocationS LocationS

## 0.08024277 0.463666333.5.12 Lakes: Bootstrap SE CI for Avg. Difference (cont.)

NS_Lakes_Bootstrap_Plot_b1 + geom_vline(xintercept=c(MeanDiff-2*SE, MeanDiff+2*SE), color="red")

We are 95% confident that the standard deviation in mercury level is all Florida Lakes is between 0.08 and 0.46 ppm.

3.6 Bootstrap Distribution for Simple Linear Regression Coefficients

3.6.1 Bootstrapping for Cars Regression Coefficients

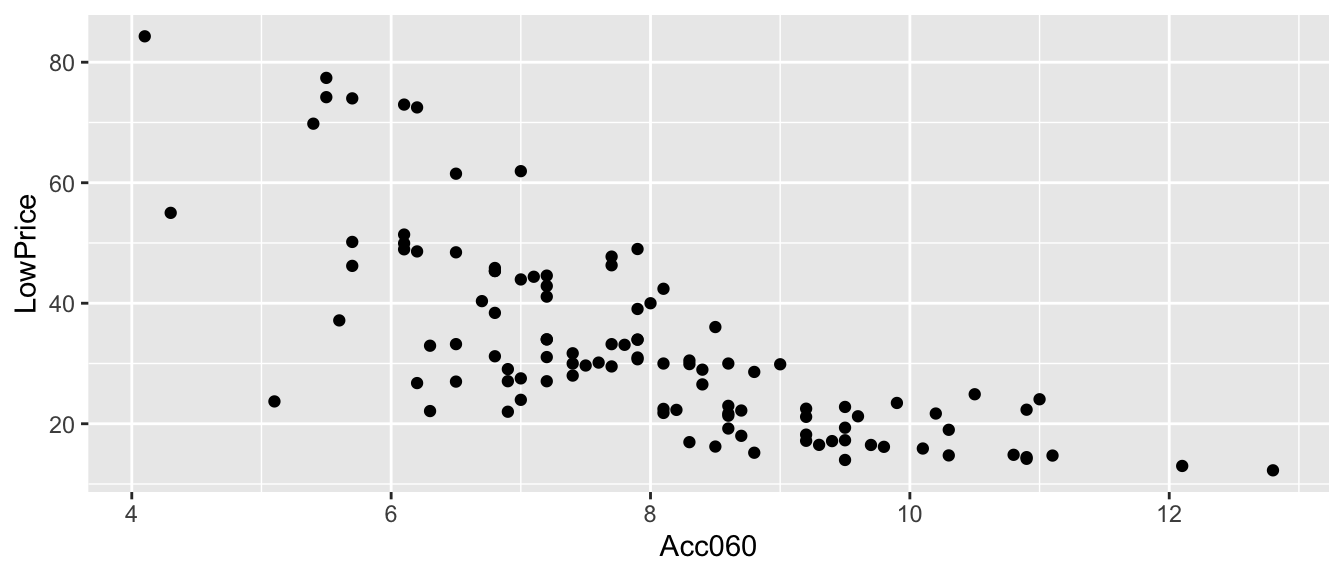

Recall the regression line estimating the relationship between a car’s price and acceleration time.

This line was calculated using a sample of 110 cars, released in 2015.

\(\widehat{Price} = 89.90 - 7.19\times\text{Acc. Time}\)

CarsA060

3.6.2 Cars Acc060 Model Output

summary(Cars_M_A060)##

## Call:

## lm(formula = LowPrice ~ Acc060, data = Cars2015)

##

## Residuals:

## Min 1Q Median 3Q Max

## -29.512 -6.544 -1.265 4.759 27.195

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 89.9036 5.0523 17.79 <0.0000000000000002 ***

## Acc060 -7.1933 0.6234 -11.54 <0.0000000000000002 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 10.71 on 108 degrees of freedom

## Multiple R-squared: 0.5521, Adjusted R-squared: 0.548

## F-statistic: 133.1 on 1 and 108 DF, p-value: < 0.000000000000000223.6.3 Bootstrapping Regression Slope for All Cars

Since \(b_0\) and \(b_1\) were calculated from a sample of 110 new 2015 cars, we do not expect them to be exactly the same as what we would have obtained if we had data on all 2015 new cars. We’ll use bootstrapping to get a sense for how much variability is associated with the slope and intercept of the regression line.

Bootstrapping Procedure

Take a bootstrap sample of size 110, by sampling cars with replacement.

Fit a linear regression model to the bootstrap sample with price as the response variable, and Acc060 as the explanatory variable. Record \(b_0\) and \(b_1\).

Repeat steps 2 and 3 10,000 times, keeping track of the values on \(b_0\) and \(b_1\) in each bootstrap sample.

Look at the distribution of \(b_0\) and \(b_1\) from the bootstrap samples. The variability in these distributions can be used to approximate the variability in the sampling distribution for the intercept and slope.

3.6.4 Code for Cars Regression Bootstrap

b0 <- rep(NA, 10000)

b1 <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(Cars2015, 110, replace=TRUE)

Model_Bootstrap <- lm(data=BootstrapSample, LowPrice~Acc060)

b0[i] <- Model_Bootstrap$coeff[1]

b1[i] <-Model_Bootstrap$coeff[2]

}

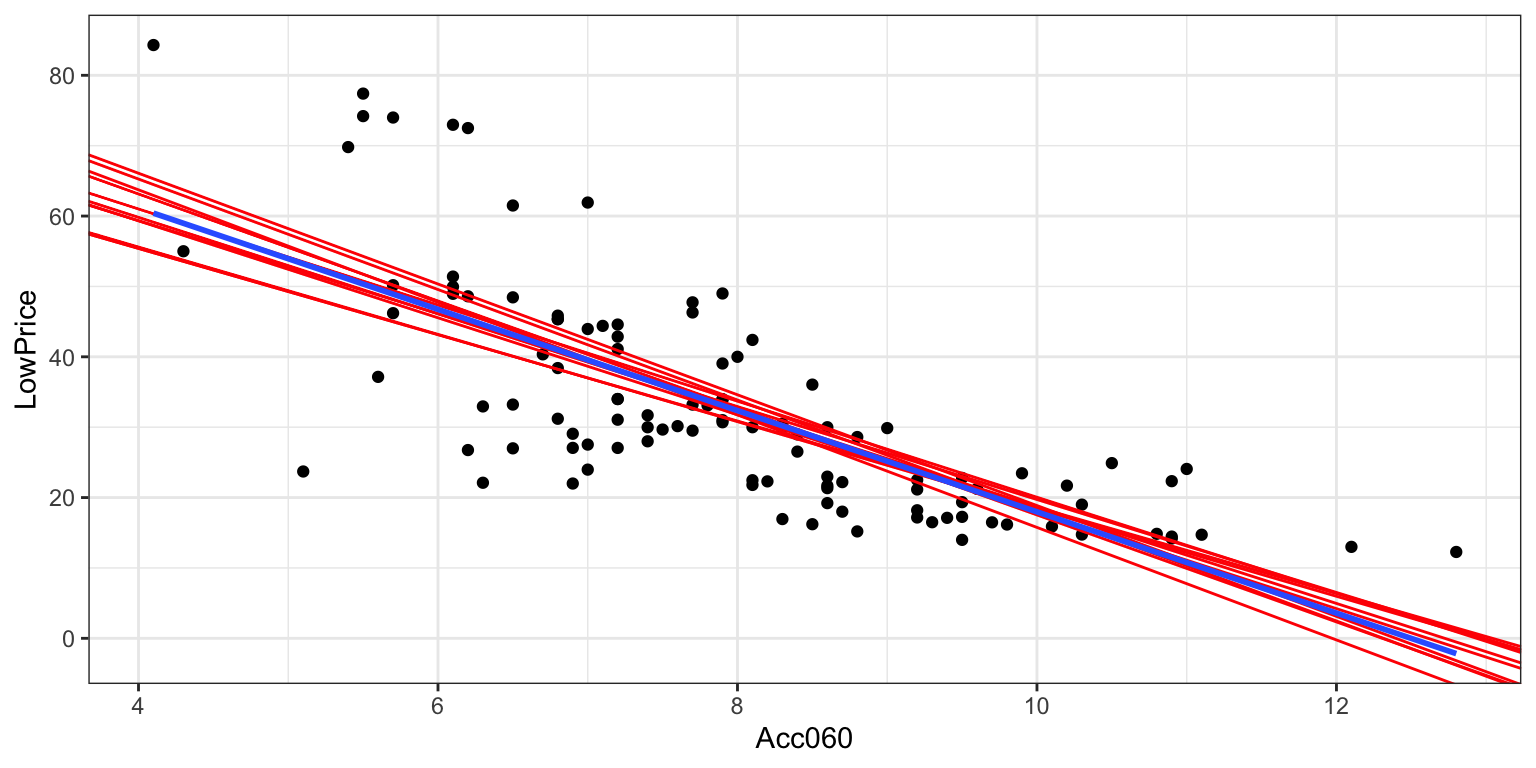

Cars_Bootstrap_Results_Acc060 <- data.frame(b0, b1)3.6.5 First 10 Bootstrap Regression Lines

ggplot(data=Cars2015, aes(x=Acc060,y=LowPrice))+ geom_point()+

theme_bw() +

geom_abline(data=Cars_Bootstrap_Results_Acc060[1:10, ],aes(slope=b1,intercept=b0),color="red") +

stat_smooth( method="lm", se=FALSE)

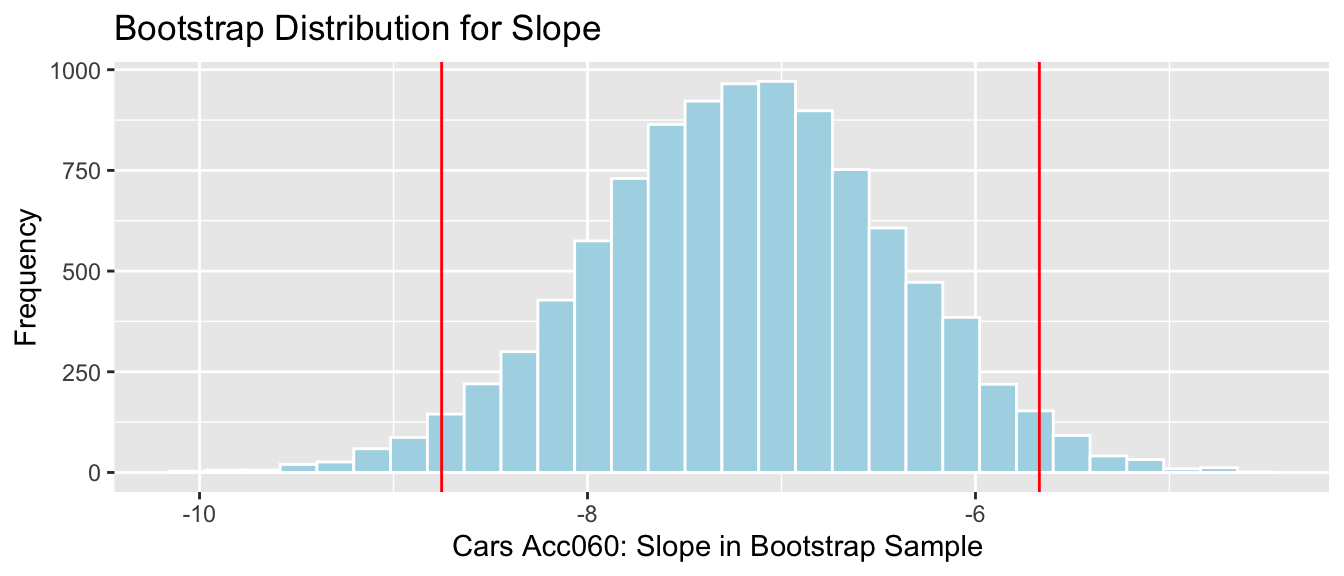

3.6.6 Bootstrap Percentile CI for Slope of Cars Reg. Line

q.025 <- quantile(Cars_Bootstrap_Results_Acc060$b1, 0.025)

q.975 <- quantile(Cars_Bootstrap_Results_Acc060$b1, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## -8.751976 -5.670654

We are 95% confident that the average price of a new 2015 car decreases between -8.75 and -5.67 thousand dollars for each additional second it takes to accelerate from 0 to 60 mph.

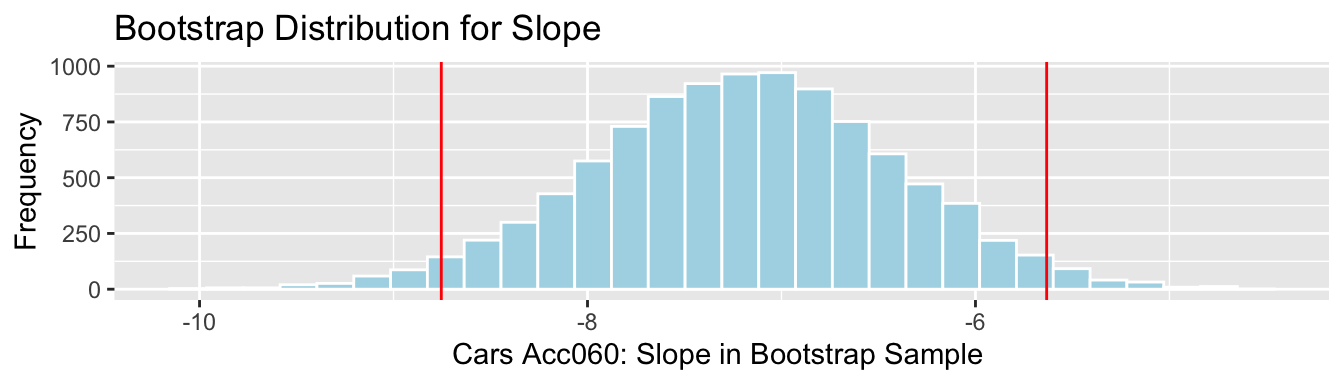

3.6.7 Cars: Bootstrap SE CI Slope

SE <- sd(Cars_Bootstrap_Results_Acc060$b1)

SE## [1] 0.7802172The bootstrap standard error is higher than the standard error in Acc060 line of the regression output.

Slope <- coef(Cars_M_A060)[2]

c(Slope-2*SE, Slope+2*SE)## Acc060 Acc060

## -8.753774 -5.6329053.6.8 Cars: Bootstrap SE CI Slope (cont.)

Cars_Acc060_B_Slope_Plot + geom_vline(xintercept=c(Slope-2*SE, Slope+2*SE), color="red")

We are 95% confident that the the average price of a new 2015 car decreases between -8.75 and -5.63 thousand dollars for each additional second it takes to accelerate from 0 to 60 mph.

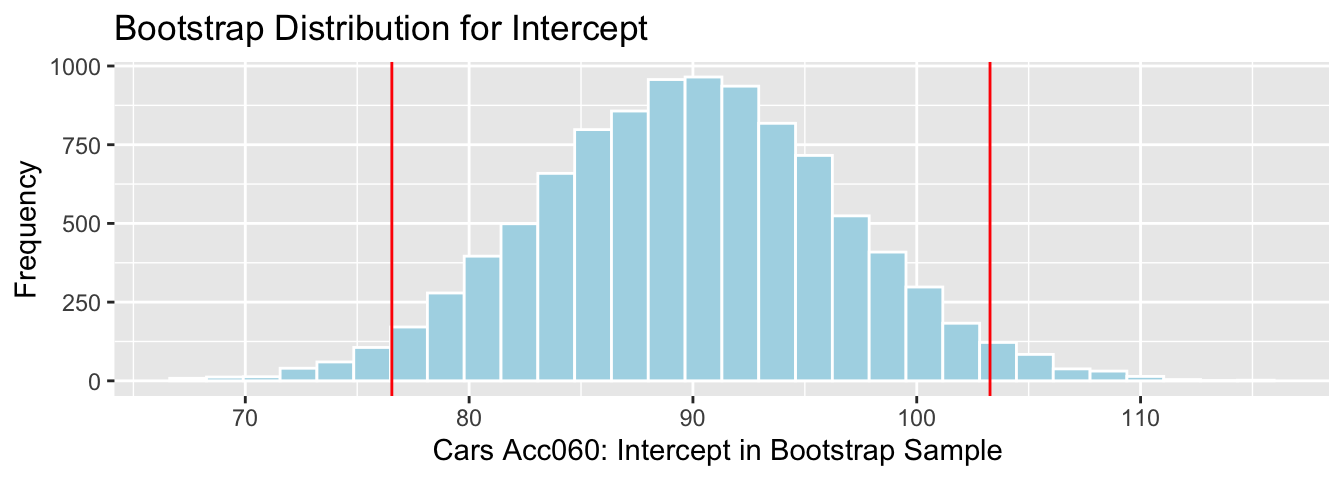

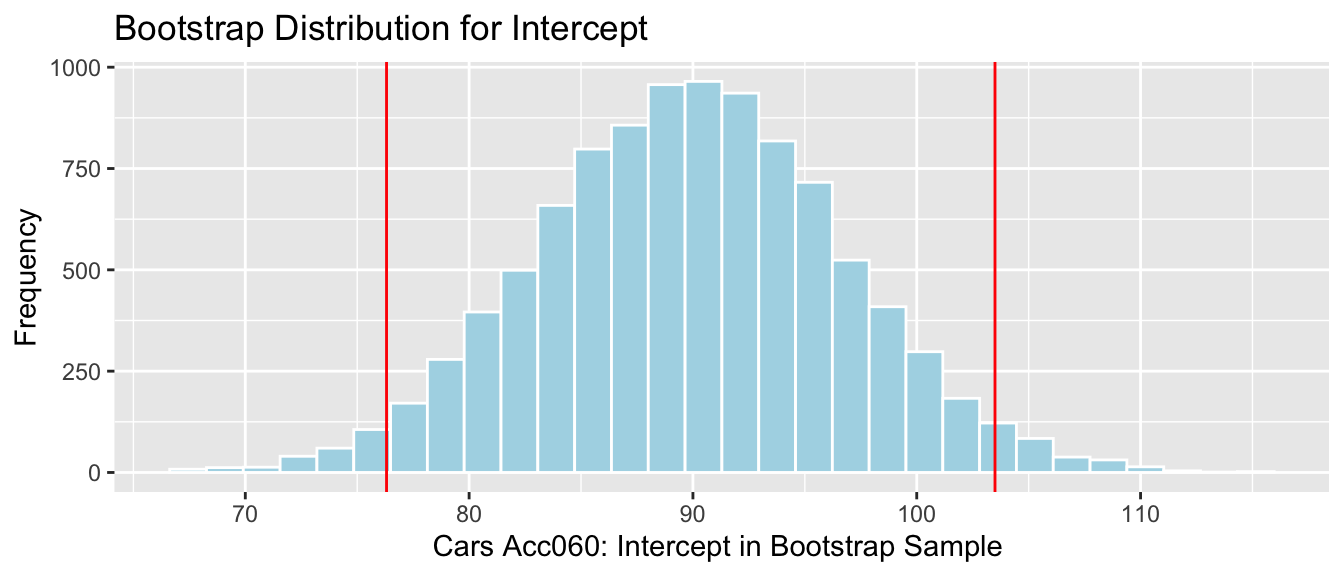

3.6.9 Bootstrap Percentile CI for Int. of Cars Reg. Line

q.025 <- quantile(Cars_Bootstrap_Results_Acc060$b0, 0.025)

q.975 <- quantile(Cars_Bootstrap_Results_Acc060$b0, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 76.54801 103.27401

We are 95% confident that the intercept for the regression line relating price to acceleration time is between 76.55 and 103.27 thousand dollars. This intercept does not have a meaningful interpretation in context.

3.6.10 Cars: Bootstrap SE CI Intercept

SE <- sd(Cars_Bootstrap_Results_Acc060$b0)

SE## [1] 6.796352The bootstrap standard error is higher than the standard error for the intercept in the regression output.

Int <- coef(Cars_M_A060)[1]

c(Int-2*SE, Int+2*SE)## (Intercept) (Intercept)

## 76.31088 103.496283.6.11 Cars: Bootstrap SE CI Intercept (cont.)

Cars_Acc060_B_Intercept_Plot + geom_vline(xintercept=c(Int-2*SE, Int+2*SE), color="red")

We are 95% confident that the intercept for the regression line relating price to acceleration time is between76.31 and 103.5 thousand dollars. This intercept does not have a meaningful interpretation in context.

3.7 Bootstrap Distribution for Multiple Regression Coefficients

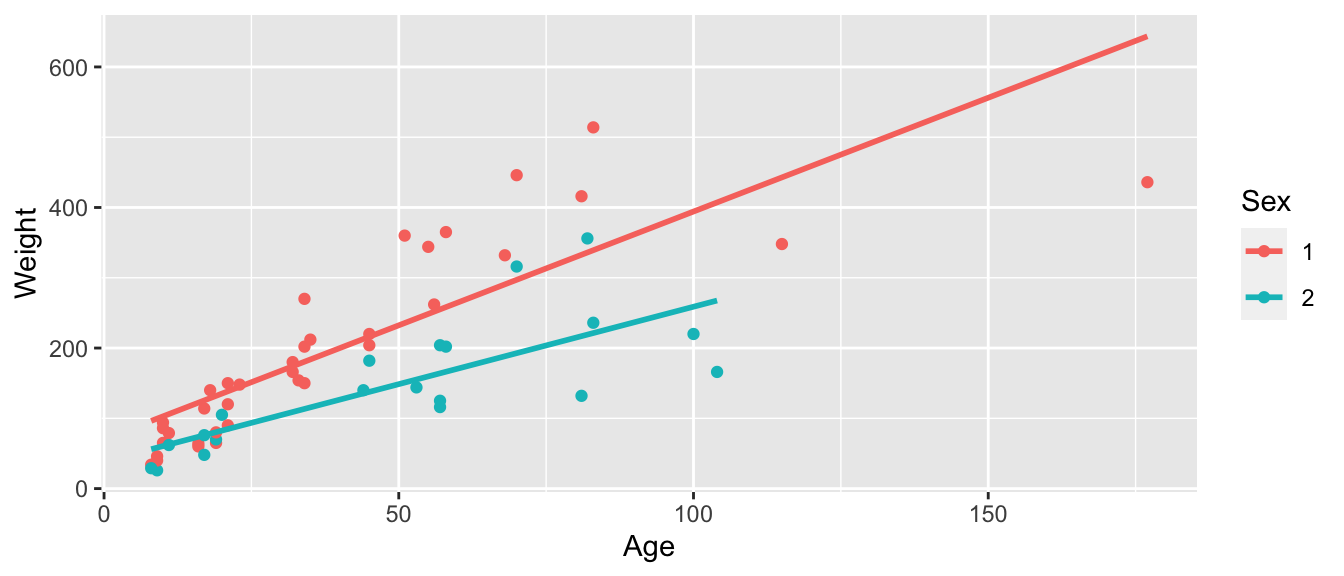

3.7.1 Model for Predicting Bear Weights

We previously used a linear regression model to predict the weights of wild bears, using a sample of 97 bears. Recall the model and its interpretations.

ggplot(data=Bears_Subset, aes(x=Age, y=Weight, color=Sex)) +

geom_point() + stat_smooth(method="lm", se=FALSE)

3.7.2 Model for Predicting Bear Weights (cont.)

Bear_M_Age_Sex_Int <- lm(data=Bears_Subset, Weight~ Age*Sex)

summary(Bear_M_Age_Sex_Int)##

## Call:

## lm(formula = Weight ~ Age * Sex, data = Bears_Subset)

##

## Residuals:

## Min 1Q Median 3Q Max

## -207.583 -38.854 -9.574 23.905 174.802

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 70.4322 17.7260 3.973 0.000219 ***

## Age 3.2381 0.3435 9.428 0.000000000000765 ***

## Sex2 -31.9574 35.0314 -0.912 0.365848

## Age:Sex2 -1.0350 0.6237 -1.659 0.103037

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 70.18 on 52 degrees of freedom

## (41 observations deleted due to missingness)

## Multiple R-squared: 0.6846, Adjusted R-squared: 0.6664

## F-statistic: 37.62 on 3 and 52 DF, p-value: 0.00000000000045523.7.3 Model Interpretations in Bears Interaction Model

\(\widehat{\text{Weight}}= 70.43+ 3.24 \times\text{Age}- 31.95\times\text{I}_{Female} -1.04\times\text{Age}\times\text{I}_{Female}\)

This model was fit using a sample of 97 wild bears. If we were to take a different sample, and fit a regression model, we would obtain different values for \(b_0\), \(b_1\), \(b_2\), and \(b_3\), as well as relevant quantities \(b_0-b_2\) and \(b_1-b_3\), due to sampling variability.

Questions of interest: Find a reasonable range for the following quantities:

- the mean monthly weight gain among all male bears

- the mean monthly weight gain among all female bears

- the mean weight among all 24 month old male bears

- the mean weight among all 24 month old female bears

Just as we did for sample means and proportions, we can answer these questions via bootstrapping.

3.7.4 Quantities of Interest

First, we need to find expressions for the quantities we are interested in, in terms of the model coefficients.

\(\widehat{\text{Weight}}= b_0+ b_1 \times\text{Age} - b_2\times\text{I}_{Female} + b_3\times\text{Age}\times\text{I}_{Female}\)

Expected Weight for Female Bears:

\[ \begin{aligned} \widehat{\text{Weight}} & = b_0+ b_1 \times\text{Age}+ b_2 + b_3\times\text{Age} \\ & (b_0 + b_2) + (b_1+b_3)\times\text{Age} \end{aligned} \]

Expected Weight for Male Bears:

\[ \begin{aligned} \widehat{\text{Weight}}= b_0 + b_1 \times\text{Age} \end{aligned} \]

Questions of interest: Find a reasonable range for the following quantities:

- the mean weight gain per month among all male bears (\(b_1\))

- the mean weight gain per month among all female bears (\(b_1 + b_3\))

- the mean weight among all 24 month old male bears (\(b_0 + 24b_1\))

- the mean weight among all 24 month old female bears (\(b_0 + b_2 + 24(b_1+b_3)\))

3.7.5 Bootstrapping for Bears Regression Coefficients

Bootstrapping Procedure

Take a bootstrap sample of size 97, by sampling bears with replacement.

Fit a model and record regression coefficients \(b_0\), \(b_1\), \(b_2\), \(b_3\).

Repeat steps 2 and 3 10,000 times, keeping track of the regression coefficient estimates in each bootstrap sample.

Look at the distribution of regression coefficients in the bootstrap samples. The variability in this distribution can be used to approximate the variability in the sampling distributions for the regression coefficients.

3.7.6 Bootstrap Code for Bears Regression Coefficients

b0 <- rep(NA, 10000)

b1 <- rep(NA, 10000)

b2 <- rep(NA, 10000)

b3 <- rep(NA, 10000)

for (i in 1:10000){

BootstrapSample <- sample_n(Bears_Subset, 97, replace=TRUE) #take bootstrap sample

M <- lm(data=BootstrapSample, Weight ~ Age*Sex) ## fit linear model

b0[i] <- coef(M)[1] ## record b0

b1[i] <- coef(M)[2] ## record b1

b2[i] <- coef(M)[3] ## record b2

b3[i] <- coef(M)[4] ## record b3

}

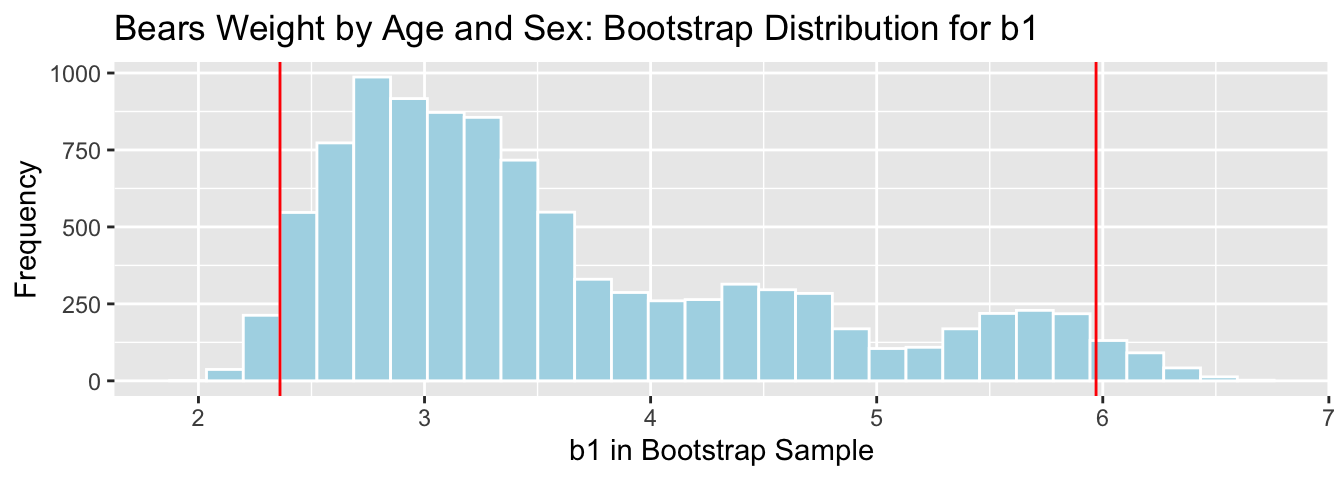

Bears_Bootstrap_Results <- data.frame(b0, b1, b2, b3)3.7.7 Bootstrap Percentile CI for \(b_1\) in Bears Model

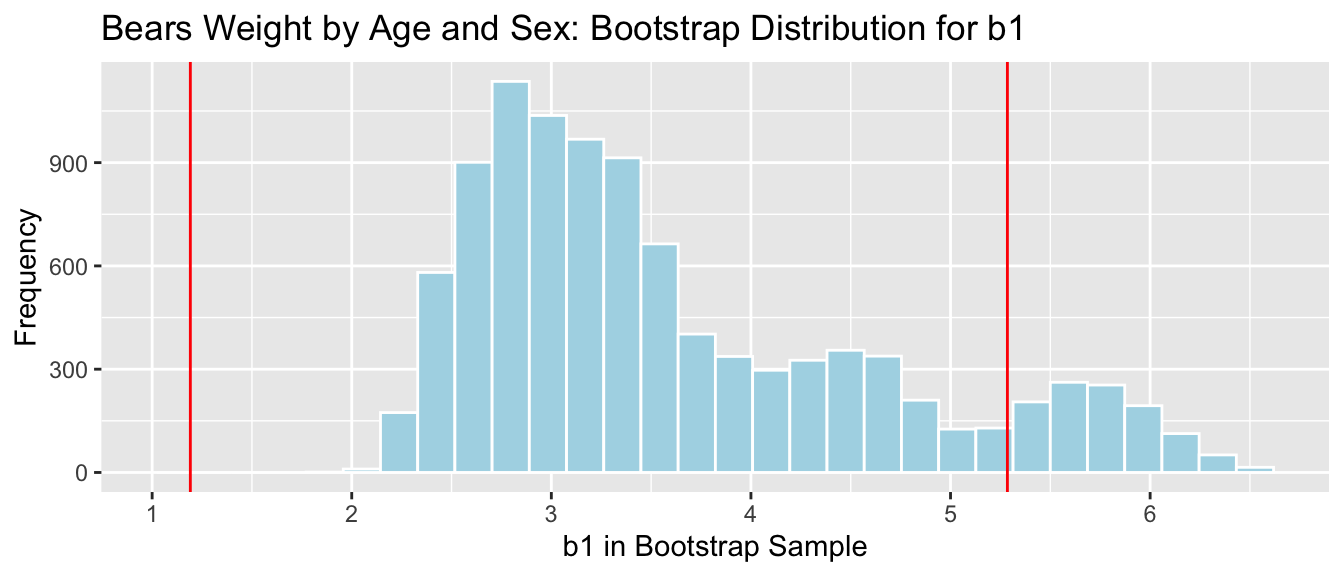

The average weight gain per month for male bears is represented by \(b_1\).

q.025 <- quantile(Bears_Bootstrap_Results$b1, 0.025)

q.975 <- quantile(Bears_Bootstrap_Results$b1, 0.975)

c(q.025, q.975)## 2.5% 97.5%

## 2.360746 5.970272

We are 95% confident that male bears gain between 2.36 and 5.97 pounds per month, on average.

3.7.8 Bootstrap SE CI for \(b_1\) in Bears Model

b1 <- coef(Bear_M_Age_Sex_Int)[2]

SE <- sd(Bears_Bootstrap_Results$b1)

SE## [1] 1.023381c(b1-2*SE, b1+2*SE)## Age Age

## 1.191375 5.2849003.7.9 Bootstrap SE CI for \(b_1\) in Bears Model

Bears_plot_b1 + geom_vline(xintercept=c(b1-2*SE, b1+2*SE), color="red")

There is a considerable discrepancy between the percentile and standard error confidence intervals. Since the bootstrap distribution for \(b_1\) is not symmetric, only the percentile interval is reliable.

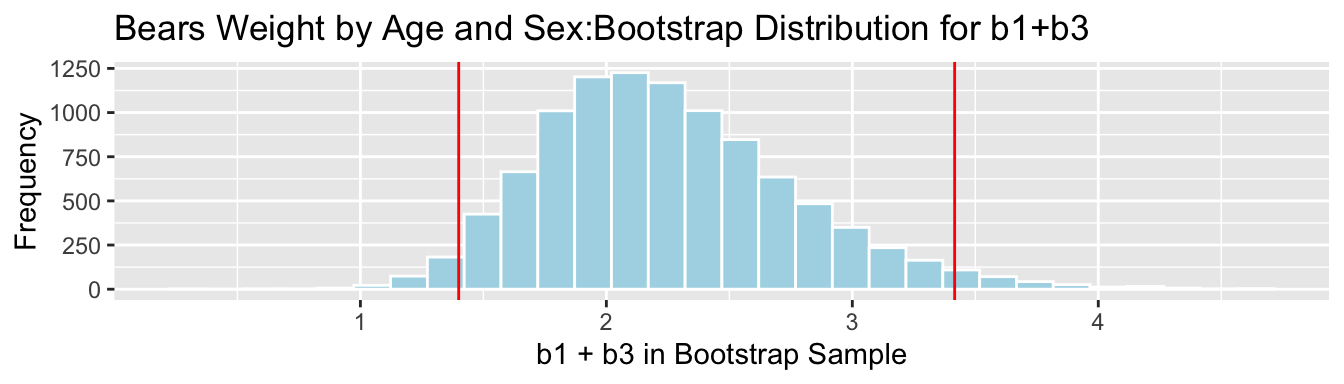

3.7.10 Bootstrap Percentile CI for \(b_1 + b_3\)

The average weight gain per month for female bears is represented by \(b_1 + b3\).

q.025 <- quantile(Bears_Bootstrap_Results$b1 + Bears_Bootstrap_Results$b3, 0.025)

q.975 <- quantile(Bears_Bootstrap_Results$b1 + Bears_Bootstrap_Results$b3, 0.975)c(q.025, q.975)## 2.5% 97.5%

## 1.399662 3.416039

We are 95% confident that female bears gain between 1.3996618 and 3.416039 pounds per month, on average.

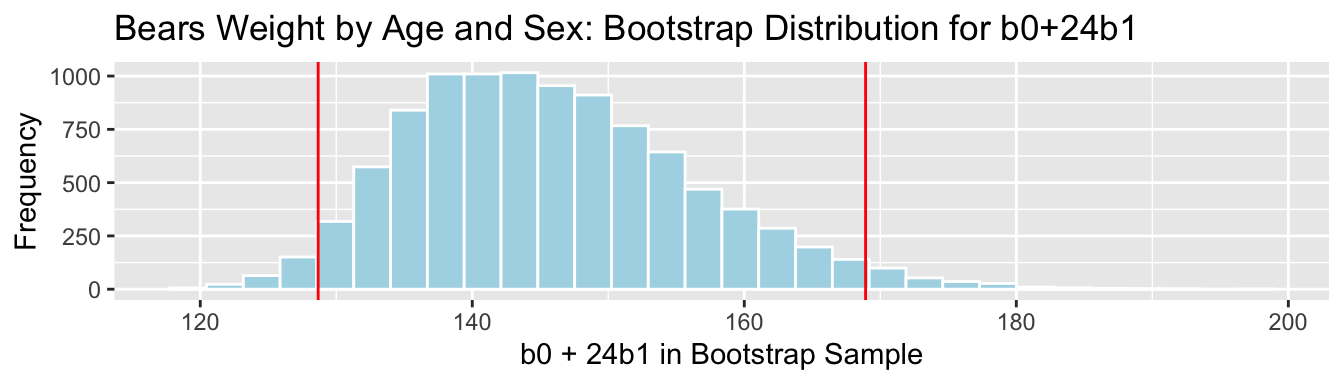

3.7.11 Bootstrap Percentile CI for \(b_0 + 24b_1\)

The mean weight of all 24 month old male bears is represented by \(b_0 + 24b_1\).

q.025 <- quantile(Bears_Bootstrap_Results$b0 + 24*Bears_Bootstrap_Results$b1, 0.025)

q.975 <- quantile(Bears_Bootstrap_Results$b0 + 24*Bears_Bootstrap_Results$b1, 0.975)c(q.025, q.975)## 2.5% 97.5%

## 128.6692 168.9187

We are 95% confident that the mean weight of all 24 month old bears is between 128.6692134 and 168.9186551 pounds.

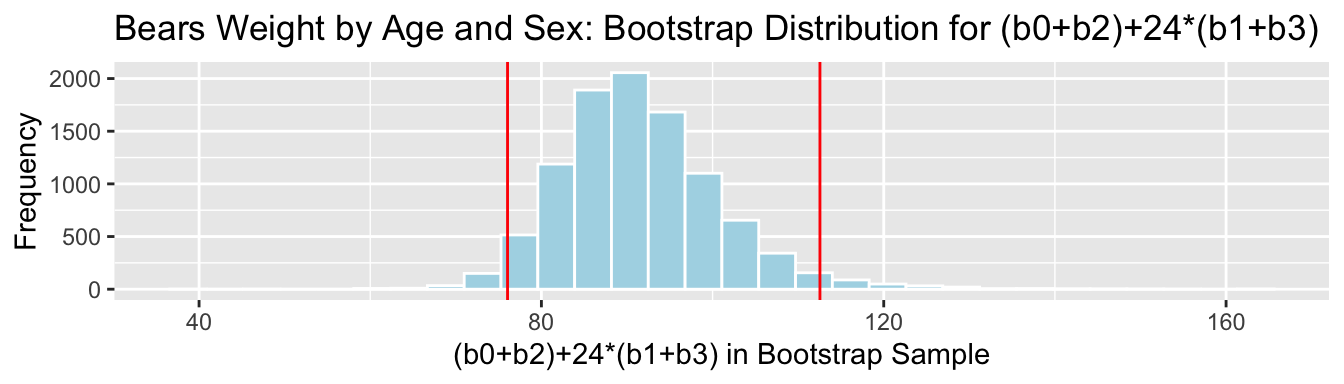

3.7.12 Bootstrap Percentile CI for \((b_0 + b_2) + 24(b_1+b_3)\)

The mean weight of all 24 month old female bears is represented by \((b_0 + b_2) + 24(b_1+b_3)\).

q.025 <- quantile(Bears_Bootstrap_Results$b0 + Bears_Bootstrap_Results$b2 +

24*(Bears_Bootstrap_Results$b1 + Bears_Bootstrap_Results$b3), 0.025)

q.975 <- quantile(Bears_Bootstrap_Results$b0 + Bears_Bootstrap_Results$b2 +

24*(Bears_Bootstrap_Results$b1 + Bears_Bootstrap_Results$b3), 0.975)c(q.025, q.975)## 2.5% 97.5%

## 76.04432 112.546053.7.13 Bootstrap Percentile CI for \((b_0 + b_2) + 24(b_1+b_3)\)

We are 95% confident that the mean weight of all 24 month old female bears is between 76.0443239 and 112.5460455 pounds.