12 Further Examples of Conditional Process Analysis

In this chapter [Hayes built] on the foundation laid by stepping through an analysis of a more complicated conditional process model that include[d] moderation of both the direct and indirect effects in a simple mediation model. [He did] so by first using a piecemeal approach that focuse[d] on each pathway in the model. With some understanding gained by this examination of the components in the process, [he brought] the pieces together into an integrated conditional process analysis…

When an indirect effect of \(X\) on \(Y\) through \(M\) is moderated, we call this phenomenon moderated mediation. In such a scenario, the mechanism represented by the \(X \rightarrow M \rightarrow Y\) chain of events operates to varying degrees (or not at all) for certain people or in certain contexts. A similar-sounding phenomenon is mediated moderation, which refers to the scenario in which an interaction between \(X\) and some moderator \(W\) on \(Y\) is carried through a mediator \(M\). [We’ll see] in this chapter that a mediated moderation analysis is really nothing other than a mediation analysis with the product of two variables serving as the causal agent of focus. (p. 432)

12.1 Revisiting the disaster framing study

Here we load a couple of necessary packages, load the data, and take a glimpse().

## Observations: 211

## Variables: 5

## $ id <dbl> 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, …

## $ frame <dbl> 1, 1, 1, 1, 1, 0, 0, 1, 0, 0, 1, 1, 0, 0, 1, 1, 1, 1, 0, 0, 1, 0, 1, 0, 1, 1, 0, 0, 0, 1, 0…

## $ donate <dbl> 5.6, 4.2, 4.2, 4.6, 3.0, 5.0, 4.8, 6.0, 4.2, 4.4, 5.8, 6.2, 6.0, 4.2, 4.4, 5.8, 5.4, 3.4, 7…

## $ justify <dbl> 2.95, 2.85, 3.00, 3.30, 5.00, 3.20, 2.90, 1.40, 3.25, 3.55, 1.55, 1.60, 1.65, 2.65, 3.15, 2…

## $ skeptic <dbl> 1.8, 5.2, 3.2, 1.0, 7.6, 4.2, 4.2, 1.2, 1.8, 8.8, 1.0, 5.4, 2.2, 3.6, 7.8, 1.6, 1.0, 6.4, 3…

Load brms.

At the top of page 433, Hayes fit a simple univariable model

\[Y = b_0 + b_1 X + e_Y,\]

where \(X\) is frame and \(Y\) is donate. Here’s the model.
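The code chunk that fit this model does not appear at this point. A minimal sketch, consistent with the summary output for this model (formula donate ~ 1 + frame, data disaster, 4 chains) and with the brm() calls used for the other models in this chapter, might look like the following. The object name f12.1 and the availability guard are illustrative assumptions, not part of the original.

```r
# Formula implied by the summary output: donate regressed on frame.
# (`f12.1` is a hypothetical name used only for this sketch.)
f12.1 <- donate ~ 1 + frame

# Fit only when brms is installed and the `disaster` data are in the session;
# otherwise this block does nothing.
if (requireNamespace("brms", quietly = TRUE) && exists("disaster")) {
  model12.1 <-
    brms::brm(data = disaster,
              family = gaussian,
              formula = f12.1,
              chains = 4, cores = 4)
}
```

The guard only keeps the sketch from failing in a session without brms or the data; in a prepared session the brm() call inside it is all that matters.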

Check the summary.

## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: donate ~ 1 + frame

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 4.563 0.128 4.312 4.817 1.001 3632 2727

## frame 0.084 0.181 -0.262 0.433 1.001 3158 3003

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 1.328 0.066 1.208 1.465 1.002 3384 2761

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

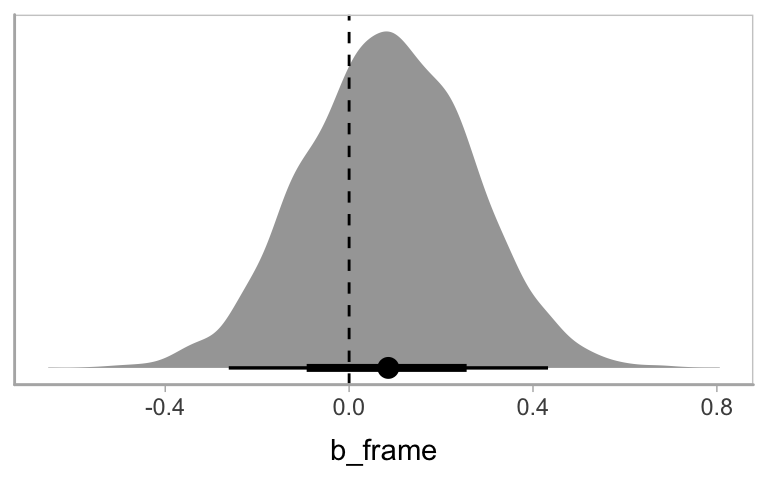

## scale reduction factor on split chains (at convergence, Rhat = 1).Hayes interpteted the coefficient for frame through the lens of a \(t\) statistic and accompanying \(p\)-value. We’ll just plot the posterior. For the figures in this chapter, we’ll take theme cues from the vignettes from Matthew Kay’s tidybayes package.

library(tidybayes)

theme_set(theme_tidybayes() + cowplot::panel_border())

posterior_samples(model12.1) %>%

ggplot(aes(x = b_frame, y = 0)) +

geom_halfeyeh() +

geom_vline(xintercept = 0, linetype = 2) +

scale_y_continuous(NULL, breaks = NULL)

Now we fit the moderation model

\[Y = b_0 + b_1 X + b_2 W + b_3 XW + e_Y,\]

where skeptic is the \(W\) variable.

model12.2 <-

brm(data = disaster,

family = gaussian,

donate ~ 1 + frame + skeptic + frame:skeptic,

chains = 4, cores = 4)

Our model12.2 summary matches nicely with the text.

## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: donate ~ 1 + frame + skeptic + frame:skeptic

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 5.028 0.227 4.585 5.477 1.003 2191 2424

## frame 0.681 0.331 0.019 1.328 1.004 1823 2369

## skeptic -0.138 0.058 -0.251 -0.026 1.002 2231 2822

## frame:skeptic -0.171 0.084 -0.334 -0.010 1.004 1766 2023

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 1.243 0.061 1.130 1.369 1.001 3268 2555

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

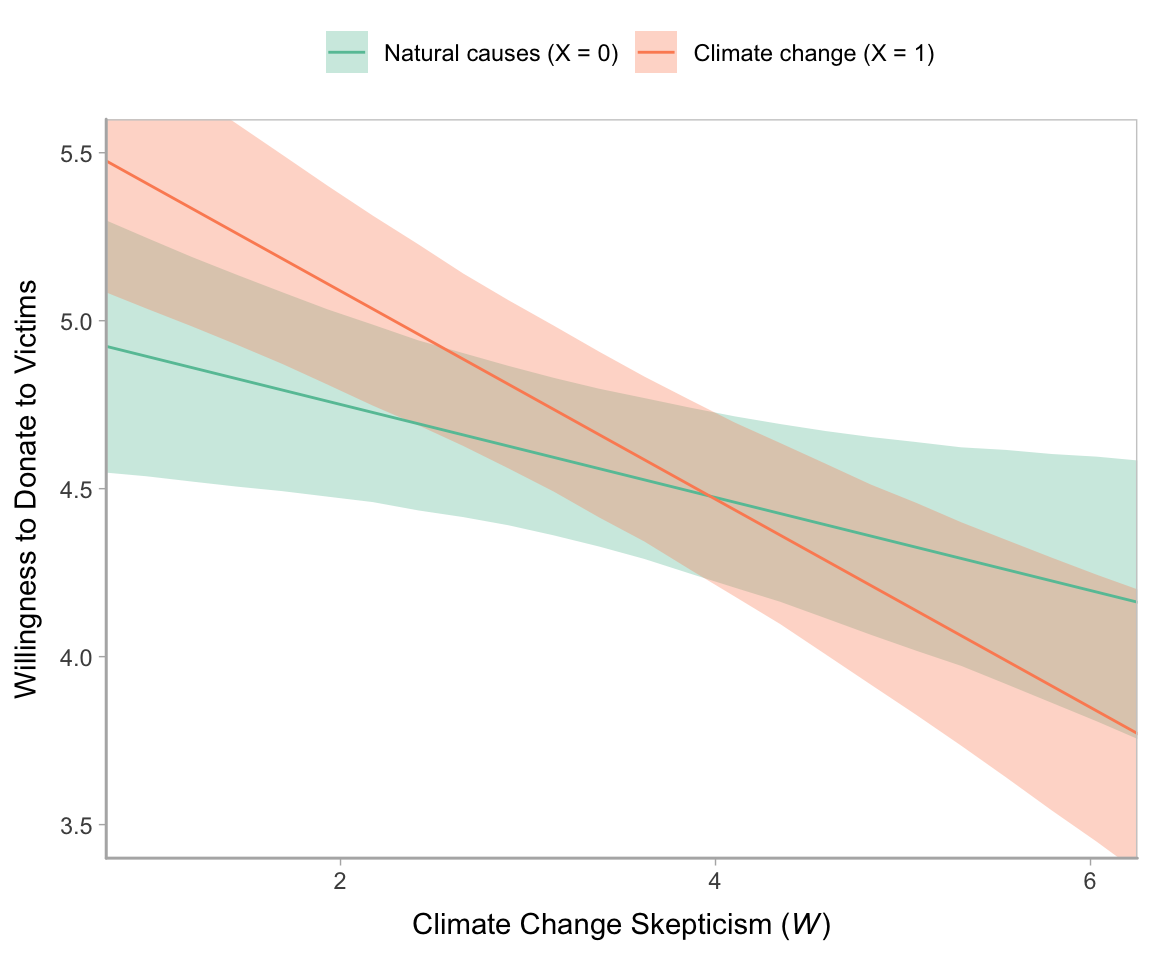

## scale reduction factor on split chains (at convergence, Rhat = 1).Here’s our Figure 12.2.

nd <-

tibble(frame = rep(0:1, each = 30),

skeptic = rep(seq(from = 0, to = 7, length.out = 30),

times = 2))

fitted(model12.2, newdata = nd) %>%

as_tibble() %>%

bind_cols(nd) %>%

mutate(frame = ifelse(frame == 0, str_c("Natural causes (X = ", frame, ")"),

str_c("Climate change (X = ", frame, ")"))) %>%

mutate(frame = factor(frame,

levels = c("Natural causes (X = 0)",

"Climate change (X = 1)"))) %>%

ggplot(aes(x = skeptic, y = Estimate)) +

geom_ribbon(aes(ymin = Q2.5, ymax = Q97.5, fill = frame),

alpha = 1/3) +

geom_line(aes(color = frame)) +

scale_fill_brewer(palette = "Set2") +

scale_color_brewer(palette = "Set2") +

coord_cartesian(xlim = c(1, 6),

ylim = c(3.5, 5.5)) +

labs(x = expression(paste("Climate Change Skepticism (", italic(W), ")")),

y = "Willingness to Donate to Victims") +

theme(legend.position = "top",

legend.direction = "horizontal",

legend.title = element_blank())
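The vertical gap between the two lines in this plot is the conditional effect of frame, \(b_1 + b_3 W\). As a rough plug-in sketch (it is not part of the original analysis and ignores posterior uncertainty), the posterior means from the model12.2 summary can be evaluated at the three skeptic values this chapter uses for probing, 1.592, 2.800, and 5.200. The object names below are made up for illustration.

```r
# Posterior means copied from the model12.2 summary
b1 <-  0.681   # frame
b3 <- -0.171   # frame:skeptic

# Skeptic values used for probing elsewhere in the chapter
w <- c(1.592, 2.800, 5.200)

# Conditional effect of frame on donate at each value of W
theta <- b1 + b3 * w
round(theta, 3)
# 0.409 0.202 -0.208

# Value of W at which the plug-in conditional effect is zero
w_zero <- -b1 / b3
round(w_zero, 2)
# 3.98
```

The sign change near a skepticism score of 4 corresponds to the point where the two fitted lines cross.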

In Hayes’s Figure 12.2, he emphasized the differences at the three levels of skeptic. If you want the full difference score distributions in a pick-a-point-approach sort of way, you might plot the densities with tidybayes::geom_halfeyeh(), which places coefficient plots at the base of the densities. In this case, we show the posterior medians with the dots, the 50% intervals with the thick horizontal lines, and the 95% intervals with the thinner horizontal lines.

nd <-

crossing(frame = 0:1,

skeptic = quantile(disaster$skeptic, probs = c(.16, .5, .84)))

fitted(model12.2, summary = FALSE,

newdata = nd) %>%

as_tibble() %>%

gather() %>%

bind_cols(

nd %>%

expand(nesting(frame, skeptic),

iter = 1:4000)

) %>%

select(-key) %>%

pivot_wider(names_from = frame, values_from = value) %>%

mutate(difference = `1` - `0`) %>%

ggplot(aes(x = difference, y = skeptic, fill = skeptic %>% as.character())) +

geom_halfeyeh(point_interval = median_qi, .width = c(0.95, 0.5)) +

scale_fill_brewer() +

scale_y_continuous(breaks = quantile(disaster$skeptic, probs = c(.16, .5, .84)),

labels = quantile(disaster$skeptic, probs = c(.16, .5, .84)) %>% round(2)) +

theme(legend.position = "none")

Here’s our simple mediation model, model12.3, using the multivariate syntax right in the brm() function.

model12.3 <-

brm(data = disaster,

family = gaussian,

bf(justify ~ 1 + frame) +

bf(donate ~ 1 + frame + justify) +

set_rescor(FALSE),

chains = 4, cores = 4)

## Family: MV(gaussian, gaussian)

## Links: mu = identity; sigma = identity

## mu = identity; sigma = identity

## Formula: justify ~ 1 + frame

## donate ~ 1 + frame + justify

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## justify_Intercept 2.802 0.088 2.631 2.980 1.001 6148 2617

## donate_Intercept 7.233 0.233 6.780 7.693 1.001 6654 3125

## justify_frame 0.133 0.128 -0.122 0.385 1.000 5654 2656

## donate_frame 0.214 0.134 -0.049 0.472 1.000 6514 3247

## donate_justify -0.953 0.074 -1.100 -0.806 1.000 6443 2862

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma_justify 0.934 0.047 0.849 1.030 1.002 6558 2948

## sigma_donate 0.986 0.048 0.897 1.086 1.000 6022 3470

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).

Consider the Bayesian \(R^2\) summaries.

## Estimate Est.Error Q2.5 Q97.5

## R2justify 0.010 0.011 0.000 0.041

## R2donate 0.449 0.038 0.366 0.518

If you want the indirect effect with its intervals, use posterior_samples() and wrangle the draws, as usual.

## # A tibble: 1 x 6

## ab .lower .upper .width .point .interval

## <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 -0.126 -0.363 0.117 0.95 mean qi

12.2 Moderation of the direct and indirect effects in a conditional process model

Our conditional process model follows the form

\[\begin{align*} M & = i_M + a_1 X + a_2 W + a_3 XW + e_M \\ Y & = i_Y + c_1' X + c_2' W + c_3' XW + b M + e_Y. \end{align*}\]

We don’t need to do anything particularly special to fit a model like this with brms. It just requires we do a careful job specifying the formulas in our bf() arguments. If you find this syntax a little too cumbersome, you can always specify the formulas outside of brm(), save them as one or multiple objects, and plug those objects into brm().
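As a sketch of that second option, the two formulas can be stored as ordinary R formula objects before they are handed to brm(). The names m_formula, y_formula, and model12.4_alt are hypothetical, and the guard simply skips fitting when brms or the disaster data are not available in the session.

```r
# Store the mediator and outcome formulas as plain R objects
m_formula <- justify ~ 1 + frame + skeptic + frame:skeptic
y_formula <- donate  ~ 1 + frame + justify + skeptic + frame:skeptic

# Inspect the terms each equation will estimate
attr(terms(m_formula), "term.labels")
attr(terms(y_formula), "term.labels")

# Plug the saved formulas into brm() (runs only if brms and the data exist)
if (requireNamespace("brms", quietly = TRUE) && exists("disaster")) {
  model12.4_alt <-
    brms::brm(data = disaster,
              family = gaussian,
              brms::bf(m_formula) + brms::bf(y_formula) + brms::set_rescor(FALSE),
              chains = 4, cores = 4)
}
```

Saving the formulas this way makes it easy to check that both equations contain the frame:skeptic product before spending time on sampling. The chapter's own call, which keeps the formulas inline, follows.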

model12.4 <-

brm(data = disaster,

family = gaussian,

bf(justify ~ 1 + frame + skeptic + frame:skeptic) +

bf(donate ~ 1 + frame + justify + skeptic + frame:skeptic) +

set_rescor(FALSE),

chains = 4, cores = 4)

The model summary:

## Family: MV(gaussian, gaussian)

## Links: mu = identity; sigma = identity

## mu = identity; sigma = identity

## Formula: justify ~ 1 + frame + skeptic + frame:skeptic

## donate ~ 1 + frame + justify + skeptic + frame:skeptic

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## justify_Intercept 2.453 0.149 2.159 2.743 1.000 3284 3055

## donate_Intercept 7.290 0.277 6.760 7.847 1.002 3854 3312

## justify_frame -0.561 0.215 -0.997 -0.136 1.000 2945 2751

## justify_skeptic 0.105 0.038 0.030 0.181 1.000 3207 2944

## justify_frame:skeptic 0.200 0.055 0.092 0.307 1.000 2755 2553

## donate_frame 0.165 0.271 -0.380 0.707 1.000 3349 2550

## donate_justify -0.922 0.086 -1.089 -0.755 1.004 4814 2861

## donate_skeptic -0.042 0.047 -0.132 0.052 1.001 3608 3336

## donate_frame:skeptic 0.014 0.071 -0.123 0.147 1.000 3134 2858

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma_justify 0.818 0.041 0.742 0.902 1.002 5502 2832

## sigma_donate 0.988 0.047 0.900 1.087 1.001 5336 3242

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).

Here are the Bayesian \(R^2\) summaries.

## Estimate Est.Error Q2.5 Q97.5

## R2justify 0.2478896 0.04512928 0.1567011 0.3321230

## R2donate 0.4523112 0.03782308 0.3716471 0.5206738

12.2.1 Estimation using PROCESS.

We just fit that model. Next!

12.2.2 Quantifying direct and indirect effects.

Here are summaries for \(a_1\) through \(a_3\).

## Estimate Est.Error Q2.5 Q97.5

## justify_frame -0.561 0.215 -0.997 -0.136

## justify_skeptic 0.105 0.038 0.030 0.181

## justify_frame:skeptic 0.200 0.055 0.092 0.307

This is \(b\).

## Estimate Est.Error Q2.5 Q97.5

## -0.922 0.086 -1.089 -0.755

We’ll need to employ posterior_samples() to compute \((a_1 + a_3 W)b\), as shown in Table 12.2.

post <-

posterior_samples(model12.4) %>%

mutate(`indirect effect when W is 1.592` = (b_justify_frame + `b_justify_frame:skeptic` * 1.592) * b_donate_justify,

`indirect effect when W is 2.800` = (b_justify_frame + `b_justify_frame:skeptic` * 2.800) * b_donate_justify,

`indirect effect when W is 5.200` = (b_justify_frame + `b_justify_frame:skeptic` * 5.200) * b_donate_justify)

post %>%

pivot_longer(starts_with("indirect")) %>%

group_by(name) %>%

median_qi(value, .width = .95) %>%

mutate_if(is.double, round, digits = 3)

## # A tibble: 3 x 7

## name value .lower .upper .width .point .interval

## <chr> <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 indirect effect when W is 1.592 0.224 -0.05 0.51 0.95 median qi

## 2 indirect effect when W is 2.800 0.003 -0.215 0.223 0.95 median qi

## 3 indirect effect when W is 5.200 -0.434 -0.735 -0.158 0.95 median qi

And if you really want that full-on Table 12.2 layout, try this.

post %>%

mutate(a1 = b_justify_frame,

a3 = `b_justify_frame:skeptic`,

b = b_donate_justify,

c1 = b_donate_frame,

c3 = `b_donate_frame:skeptic`) %>%

expand(nesting(a1, a3, b, c1, c3),

w = c(1.592, 2.800, 5.200)) %>%

mutate(`a1 + a3w` = a1 + a3 * w,

`(a1 + a3w)b` = (a1 + a3 * w) * b,

`direct effect` = c1 + c3 * w) %>%

select(-(a1:a3), -(c1:c3)) %>%

pivot_longer(-w) %>%

group_by(w, name) %>%

summarise(mean = mean(value) %>% round(digits = 3)) %>%

pivot_wider(names_from = name,

values_from = mean) %>%

select(w, `a1 + a3w`, b, everything())## # A tibble: 3 x 5

## # Groups: w [3]

## w `a1 + a3w` b `(a1 + a3w)b` `direct effect`

## <dbl> <dbl> <dbl> <dbl> <dbl>

## 1 1.59 -0.243 -0.922 0.225 0.187

## 2 2.8 -0.002 -0.922 0.002 0.204

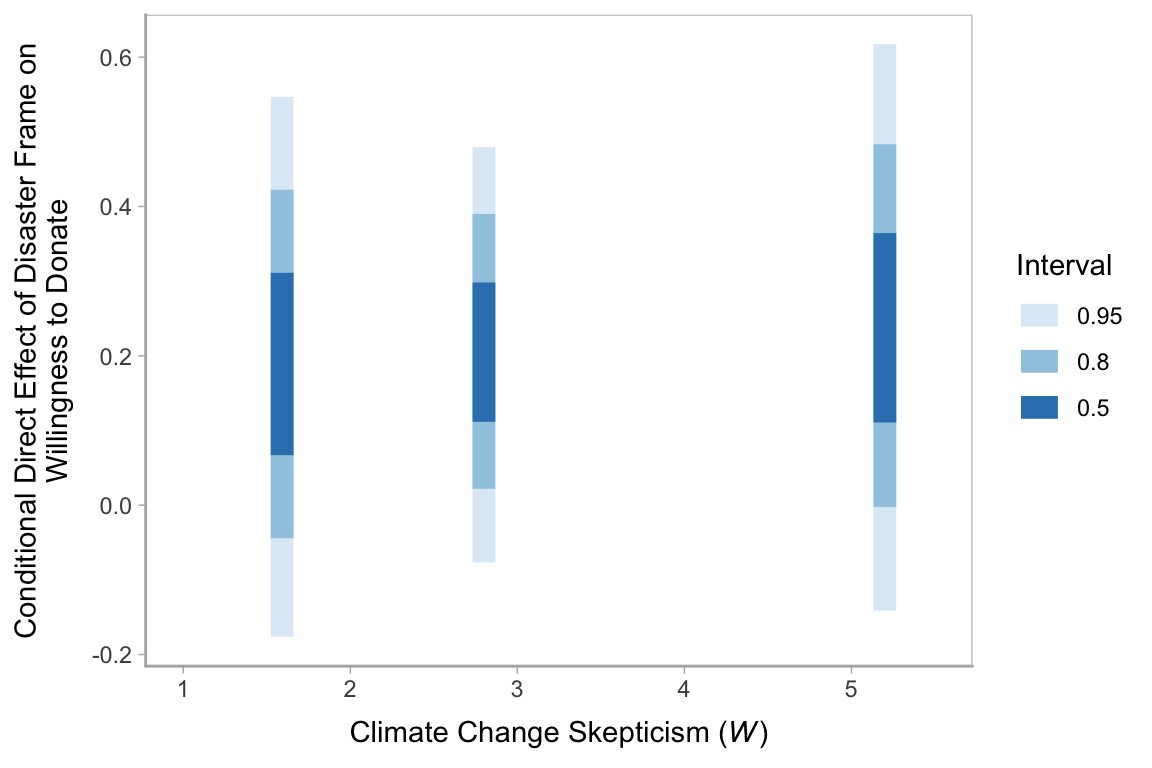

## 3 5.2 0.477 -0.922 -0.44 0.23912.2.2.1 The conditional direct effect of \(X\).

We already computed this in the last code block, above. But since we just focused on the posterior means, here’s a summary of their medians and 95% intervals.

post <-

post %>%

mutate(`direct effect when W is 1.592` = b_donate_frame + `b_donate_frame:skeptic` * 1.592,

`direct effect when W is 2.800` = b_donate_frame + `b_donate_frame:skeptic` * 2.800,

`direct effect when W is 5.200` = b_donate_frame + `b_donate_frame:skeptic` * 5.200)

post %>%

pivot_longer(starts_with("direct")) %>%

group_by(name) %>%

median_qi(value, .width = .95) %>%

mutate_if(is.double, round, digits = 3) %>%

select(name:.upper)

## # A tibble: 3 x 4

## name value .lower .upper

## <chr> <dbl> <dbl> <dbl>

## 1 direct effect when W is 1.592 0.181 -0.176 0.547

## 2 direct effect when W is 2.800 0.204 -0.077 0.48

## 3 direct effect when W is 5.200 0.239 -0.141 0.617

We can always plot, too.

w <- c(1.592, 2.800, 5.200)

post %>%

expand(nesting(b_donate_frame, `b_donate_frame:skeptic`),

w = w) %>%

mutate(`conditional direct effect` = b_donate_frame + `b_donate_frame:skeptic` * w) %>%

ggplot(aes(x = `conditional direct effect`, y = w, fill = w %>% as.character())) +

geom_halfeyeh(point_interval = median_qi, .width = c(0.95, 0.5)) +

scale_y_continuous(breaks = w) +

scale_fill_brewer() +

theme(legend.position = "none")

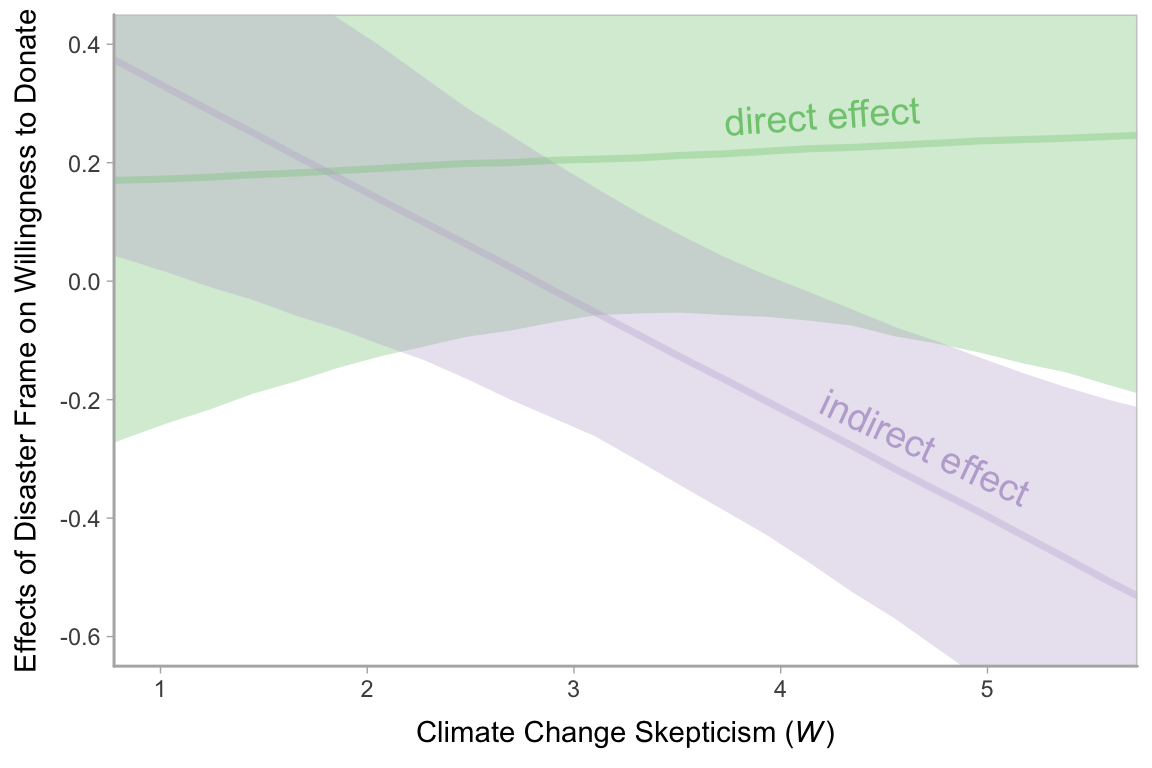
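As a rough cross-check on the conditional effects in this section, one can plug the posterior means from the model12.4 summary into \((a_1 + a_3 W)b\) and \(c_1' + c_3' W\). This is only a plug-in approximation in base R, not the draw-wise computation used above, and the object name plug_in is made up for illustration.

```r
# Posterior means copied from the model12.4 summary
a1 <- -0.561   # justify_frame
a3 <-  0.200   # justify_frame:skeptic
b  <- -0.922   # donate_justify
c1 <-  0.165   # donate_frame
c3 <-  0.014   # donate_frame:skeptic

# The three skeptic values used for probing
w <- c(1.592, 2.800, 5.200)

# Plug-in conditional effects at each value of W
plug_in <- data.frame(
  w        = w,
  a_path   = a1 + a3 * w,         # conditional effect of frame on justify
  indirect = (a1 + a3 * w) * b,   # conditional indirect effect
  direct   = c1 + c3 * w          # conditional direct effect
)

round(plug_in, 3)
```

The results land close to the posterior means in the Table 12.2 layout (0.225, 0.002, and -0.44 for the indirect effect). Small discrepancies are expected because the summary values are rounded and the mean of a product of draws need not equal the product of the means.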

12.2.3 Visualizing the direct and indirect effects.

We’ll need to do some post wrangling before we’re ready to make our Figure 12.7. We’ll save the results as effects.

effects <-

post %>%

expand(nesting(b_justify_frame, `b_justify_frame:skeptic`, b_donate_justify, b_donate_frame, `b_donate_frame:skeptic`),

w = seq(from = 0, to = 6, length.out = 30)) %>%

mutate(`direct effect` = b_donate_frame + `b_donate_frame:skeptic` * w,

`indirect effect` = (b_justify_frame + `b_justify_frame:skeptic` * w) * b_donate_justify) %>%

pivot_longer(c(`direct effect`, `indirect effect`)) %>%

mutate(name = factor(name,

levels = c("direct effect", "indirect effect"))) %>%

select(w:value)

head(effects)

## # A tibble: 6 x 3

## w name value

## <dbl> <fct> <dbl>

## 1 0 direct effect -0.442

## 2 0 indirect effect 1.32

## 3 0.207 direct effect -0.410

## 4 0.207 indirect effect 1.25

## 5 0.414 direct effect -0.377

## 6 0.414 indirect effect 1.18

Now we plot.

# we'll need this for `geom_text()`

text <-

tibble(x = c(4.2, 4.7),

y = c(.28, -.28),

angle = c(3.6, 335),

name = factor(c("direct effect", "indirect effect"),

levels = c("direct effect", "indirect effect")))

# plot!

effects %>%

ggplot(aes(x = w, color = name, fill = name)) +

stat_lineribbon(aes(y = value),

.width = .95, alpha = 1/3) +

geom_text(data = text,

aes(x = x, y = y,

angle = angle,

label = name),

size = 5) +

scale_fill_brewer(type = "qual") +

scale_color_brewer(type = "qual") +

coord_cartesian(xlim = c(1, 5.5),

ylim = c(-.6, .4)) +

labs(x = expression(paste("Climate Change Skepticism (", italic(W), ")")),

y = "Effects of Disaster Frame on Willingness to Donate") +

theme(legend.position = "none")

Note how wide those 95% intervals are relative to the scale of the y-axis, which I specifically kept within the same range as Figure 12.7 in the text. To me the message is clear: include credible-interval ribbons in your regression slope plots. They help depict how uncertain the posterior is in a way simple line slopes just don’t.

12.3 Statistical inference

12.3.1 Inference about the direct effect.

We’ve already computed the 95% intervals for the direct effect, \(\theta_{X \rightarrow Y}\), conditional on the three levels of \(W\). Here’s a different look at those intervals, superimposed on the 80% and 50% intervals, using the tidybayes::stat_interval() function.

post %>%

pivot_longer(starts_with("direct")) %>%

mutate(name = str_remove(name, "direct effect when W is ") %>% as.double()) %>%

ggplot(aes(x = name, y = value, group = name)) +

stat_interval(.width = c(.95, .80, .5)) +

scale_color_brewer("Interval") +

coord_cartesian(xlim = c(1, 5.5)) +

labs(x = expression(paste("Climate Change Skepticism (", italic(W), ")")),

y = "Conditional Direct Effect of Disaster Frame on\nWillingness to Donate")

12.3.2 Inference about the indirect effect.

12.3.2.1 A statistical test of moderated mediation.

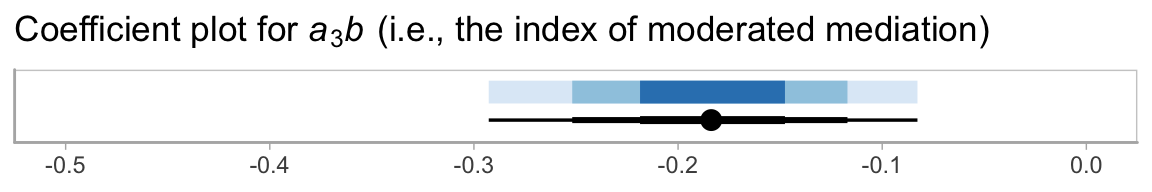

To get a sense of \(a_3 b\), the index of moderated mediation, we just multiply the posterior draws for \(a_3\) and \(b\):

post <-

post %>%

mutate(a3b = `b_justify_frame:skeptic` * b_donate_justify)

post %>%

select(a3b) %>%

median_qi(a3b) %>%

mutate_if(is.double, round, digits = 3)

## # A tibble: 1 x 6

## a3b .lower .upper .width .point .interval

## <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 -0.184 -0.293 -0.083 0.95 median qi

Here we’ll combine stat_intervalh() and stat_pointintervalh() to visualize \(a_3 b\) with a coefficient plot.

post %>%

ggplot(aes(x = a3b, y = 1)) +

stat_intervalh(.width = c(.95, .8, .5)) +

stat_pointintervalh(point_interval = median_qi, .width = c(.95, .8, .5),

position = position_nudge(y = -.75)) +

scale_color_brewer("Interval") +

scale_y_discrete(NULL, breaks = NULL) +

coord_cartesian(xlim = c(-.5, 0)) +

labs(title = expression(paste("Coefficient plot for ", italic(a)[3], italic(b), " (i.e., the index of moderated mediation)")),

x = NULL) +

theme(legend.position = "none")
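It may help to see why \(a_3 b\) earns the name index of moderated mediation. Expanding the conditional indirect effect from the model in section 12.2 shows that it is a linear function of \(W\) whose slope is \(a_3 b\). Here \(W_1\) and \(W_2\) are just notation for any two values of the moderator, introduced only for this sketch.

\[\begin{align*} (a_1 + a_3 W) b & = a_1 b + a_3 b W \\ \left[ (a_1 + a_3 W_2) b \right] - \left[ (a_1 + a_3 W_1) b \right] & = a_3 b (W_2 - W_1). \end{align*}\]

With the posterior median of about -0.184 reported above, each one-unit increase in skeptic lowers the conditional indirect effect by roughly 0.18. Because the 95% interval for \(a_3 b\) excludes zero, the indirect effect appears to depend on \(W\).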

12.3.2.2 Probing moderation of mediation.

As we discussed in Chapter 11, our Bayesian version of the JN technique should be fine because HMC does not impose the normality assumption on the parameter posteriors. In this instance, I’ll leave the JN technique plot as an exercise for the interested reader. Here we’ll just follow along with the text and pick a few points.

We computed and inspected these 95% intervals, above. Here’s another way we might use geom_halfeyeh() to look at their entire densities.

post %>%

pivot_longer(starts_with("indirect")) %>%

rename(`conditional indirect effect` = value) %>%

mutate(W = str_remove(name, "indirect effect when W is ") %>% as.double()) %>%

ggplot(aes(x = `conditional indirect effect`, y = W, fill = W %>% as.character())) +

geom_vline(xintercept = 0, linetype = 2) +

geom_halfeyeh(point_interval = median_qi, .width = 0.95) +

scale_fill_brewer() +

scale_y_continuous(breaks = c(1.592, 2.8, 5.2),

labels = c(1.6, 2.8, 5.2)) +

coord_flip() +

# coord_cartesian(xlim = -1:1) +

theme(legend.position = "none",

panel.grid.minor.y = element_blank())

12.3.3 Pruning the model.

Fitting the model without the frame:skeptic interaction term in the donate equation is just a small change to one of our formula arguments.

model12.5 <-

brm(data = disaster,

family = gaussian,

bf(justify ~ 1 + frame + skeptic + frame:skeptic) +

bf(donate ~ 1 + frame + justify + skeptic) +

set_rescor(FALSE),

chains = 4, cores = 4)

Here are the results.

## Family: MV(gaussian, gaussian)

## Links: mu = identity; sigma = identity

## mu = identity; sigma = identity

## Formula: justify ~ 1 + frame + skeptic + frame:skeptic

## donate ~ 1 + frame + justify + skeptic

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## justify_Intercept 2.458 0.150 2.173 2.756 1.000 3631 3491

## donate_Intercept 7.264 0.230 6.812 7.714 1.000 5233 2990

## justify_frame -0.568 0.221 -0.996 -0.135 1.000 3310 3230

## justify_skeptic 0.104 0.038 0.028 0.177 1.000 3501 3304

## justify_frame:skeptic 0.202 0.055 0.092 0.311 1.001 3085 3041

## donate_frame 0.208 0.136 -0.060 0.467 1.000 5063 2999

## donate_justify -0.918 0.080 -1.075 -0.764 1.000 4733 3281

## donate_skeptic -0.037 0.037 -0.110 0.036 1.002 4771 3219

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma_justify 0.816 0.040 0.743 0.897 1.001 5608 3152

## sigma_donate 0.988 0.049 0.897 1.089 1.001 4733 2958

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).

Since we’re altering the model, we may as well use information criteria to compare the two versions.

model12.4 <- add_criterion(model12.4, "loo")

model12.5 <- add_criterion(model12.5, "loo")

loo_compare(model12.4, model12.5) %>%

print(simplify = FALSE)

## elpd_diff se_diff elpd_loo se_elpd_loo p_loo se_p_loo looic se_looic

## model12.5 0.0 0.0 -557.5 16.5 10.8 1.3 1115.0 33.1

## model12.4 -1.3 0.3 -558.8 16.6 12.0 1.5 1117.6 33.2

The difference in LOO-CV values for the two models was modest. There’s little predictive reason to choose one over the other. You could argue in favor of model12.5 because it’s simpler than model12.4. Since we’ve got a complex model either way, one might also consider which one was of primary theoretical interest.
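To make the columns of that comparison easier to read, here is a small base R sketch of how they relate to one another, using the elpd_loo values copied from the output above. The object names are illustrative only.

```r
# elpd_loo values copied from the loo_compare() output
elpd_loo <- c(model12.5 = -557.5, model12.4 = -558.8)

# looic is elpd_loo expressed on the deviance scale
looic <- -2 * elpd_loo

# Differences relative to the best-fitting model
elpd_diff  <- elpd_loo - max(elpd_loo)
looic_diff <- looic - min(looic)

round(rbind(elpd_loo, elpd_diff, looic, looic_diff), 1)
```

The looic values reproduce the 1115.0 and 1117.6 in the table, and the 1.3-point gap in elpd_loo is the same comparison as the 2.6-point gap in looic.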

12.4 Mediated moderation

Mediation is moderated if the indirect effect of \(X\) on \(Y\) through one or more mediators is contingent on a moderator. With evidence of moderated mediation, one can claim that the \(X \rightarrow M \rightarrow Y\) chain of events functions differently or to varying degrees for different people, in different contexts or conditions, or whatever the moderator variable represents. Although similar in name and pronunciation to moderated mediation, the term mediated moderation refers to the phenomenon in which an interaction between \(X\) and a moderator \(W\) in a model of \(Y\) is carried through a mediator. (p. 459, emphasis in the original)

Hayes later opined:

Although there is an abundance of published examples of mediated moderation analysis, their frequency of occurrence in the literature should not be confused with meaningfulness of the procedure itself. I will argue toward the end of this section that rarely is the phenomenon of mediated moderation interesting when interpreted as such. It is almost always substantively more meaningful to conceptualize a mediated moderation process in terms of moderated mediation. But before doing this, I will describe how a mediated moderation analysis is undertaken. (p. 460)

12.4.1 Mediated moderation as the indirect effect of a product.

Hayes explains this in the next subsection, but we’ve already fit the model presented in this subsection. We called it model12.4. Here’s the summary.

## Family: MV(gaussian, gaussian)

## Links: mu = identity; sigma = identity

## mu = identity; sigma = identity

## Formula: justify ~ 1 + frame + skeptic + frame:skeptic

## donate ~ 1 + frame + justify + skeptic + frame:skeptic

## Data: disaster (Number of observations: 211)

## Samples: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup samples = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## justify_Intercept 2.453 0.149 2.159 2.743 1.000 3284 3055

## donate_Intercept 7.290 0.277 6.760 7.847 1.002 3854 3312

## justify_frame -0.561 0.215 -0.997 -0.136 1.000 2945 2751

## justify_skeptic 0.105 0.038 0.030 0.181 1.000 3207 2944

## justify_frame:skeptic 0.200 0.055 0.092 0.307 1.000 2755 2553

## donate_frame 0.165 0.271 -0.380 0.707 1.000 3349 2550

## donate_justify -0.922 0.086 -1.089 -0.755 1.004 4814 2861

## donate_skeptic -0.042 0.047 -0.132 0.052 1.001 3608 3336

## donate_frame:skeptic 0.014 0.071 -0.123 0.147 1.000 3134 2858

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma_justify 0.818 0.041 0.742 0.902 1.002 5502 2832

## sigma_donate 0.988 0.047 0.900 1.087 1.001 5336 3242

##

## Samples were drawn using sampling(NUTS). For each parameter, Eff.Sample

## is a crude measure of effective sample size, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).

12.4.2 Why mediated moderation is neither interesting nor meaningful.

Mediated moderation and moderated mediation are statistically the same.

The only difference between them is how they are interpreted, and on what part of the model your attention is focused.

Moderated mediation focuses on the conditional nature of an indirect effect–how an indirect effect is moderated. If you think of the terms “mediation” and “indirect effect” as essentially synonymous conceptually, then moderated mediation means a moderated indirect effect. Interpretive focus in a moderated mediation analysis is directed at estimating the indirect effect and how that effect varies as a function of a moderator. Mediated moderation, by contrast, asks about the mechanism through which an interaction between \(X\) and a moderator \(W\) operates, where the product of \(X\) and \(W\) is construed as the causal agent sending its effect to \(Y\) through \(M\). Focus in mediated moderation is the estimation of the indirect effect of the product of \(X\) and \(W\). (p. 465)

Hayes later concluded that we should avoid

the articulation of hypotheses or research questions in terms of the mediation of the effect of a product, abandoning the term mediated moderation entirely, and instead [reframe] such hypotheses and research questions in terms of the contingencies of an indirect effect–moderated mediation. (p. 467, emphasis in the original)

Session info

## R version 3.6.0 (2019-04-26)

## Platform: x86_64-apple-darwin15.6.0 (64-bit)

## Running under: macOS High Sierra 10.13.6

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRlapack.dylib

##

## locale:

## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

##

## attached base packages:

## [1] stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] tidybayes_1.1.0 brms_2.10.3 Rcpp_1.0.2 forcats_0.4.0 stringr_1.4.0 dplyr_0.8.3

## [7] purrr_0.3.3 readr_1.3.1 tidyr_1.0.0 tibble_2.1.3 ggplot2_3.2.1 tidyverse_1.2.1

##

## loaded via a namespace (and not attached):

## [1] colorspace_1.4-1 ellipsis_0.3.0 ggridges_0.5.1 rsconnect_0.8.15

## [5] ggstance_0.3.2 markdown_1.1 base64enc_0.1-3 rstudioapi_0.10

## [9] rstan_2.19.2 svUnit_0.7-12 DT_0.9 fansi_0.4.0

## [13] lubridate_1.7.4 xml2_1.2.0 bridgesampling_0.7-2 knitr_1.23

## [17] shinythemes_1.1.2 zeallot_0.1.0 bayesplot_1.7.0 jsonlite_1.6

## [21] broom_0.5.2 shiny_1.3.2 compiler_3.6.0 httr_1.4.0

## [25] backports_1.1.5 assertthat_0.2.1 Matrix_1.2-17 lazyeval_0.2.2

## [29] cli_1.1.0 later_1.0.0 htmltools_0.4.0 prettyunits_1.0.2

## [33] tools_3.6.0 igraph_1.2.4.1 coda_0.19-3 gtable_0.3.0

## [37] glue_1.3.1.9000 reshape2_1.4.3 cellranger_1.1.0 vctrs_0.2.0

## [41] nlme_3.1-139 crosstalk_1.0.0 xfun_0.10 ps_1.3.0

## [45] rvest_0.3.4 mime_0.7 miniUI_0.1.1.1 lifecycle_0.1.0

## [49] gtools_3.8.1 zoo_1.8-6 scales_1.0.0 colourpicker_1.0

## [53] hms_0.4.2 promises_1.1.0 Brobdingnag_1.2-6 parallel_3.6.0

## [57] inline_0.3.15 RColorBrewer_1.1-2 shinystan_2.5.0 gridExtra_2.3

## [61] loo_2.1.0 StanHeaders_2.19.0 stringi_1.4.3 dygraphs_1.1.1.6

## [65] pkgbuild_1.0.5 rlang_0.4.1 pkgconfig_2.0.3 matrixStats_0.55.0

## [69] evaluate_0.14 lattice_0.20-38 rstantools_2.0.0 htmlwidgets_1.5

## [73] labeling_0.3 cowplot_0.9.4 tidyselect_0.2.5 processx_3.4.1

## [77] plyr_1.8.4 magrittr_1.5 R6_2.4.0 generics_0.0.2

## [81] pillar_1.4.2 haven_2.1.0 withr_2.1.2 xts_0.11-2

## [85] abind_1.4-5 modelr_0.1.4 crayon_1.3.4 arrayhelpers_1.0-20160527

## [89] utf8_1.1.4 rmarkdown_1.13 grid_3.6.0 readxl_1.3.1

## [93] callr_3.3.2 threejs_0.3.1 digest_0.6.21 xtable_1.8-4

## [97] httpuv_1.5.2 stats4_3.6.0 munsell_0.5.0 shinyjs_1.0