11 Null Hypothesis Significance Testing

It’s worth repeating a couple paragraphs from page 298 (emphasis in the original):

The logic of conventional NHST goes like this. Suppose the coin is fair (i.e., \(\theta\) = 0.50). Then, when we flip the coin, we expect that about half the flips should come up heads. If the actual number of heads is far greater or fewer than half the flips, then we should reject the hypothesis that the coin is fair. To make this reasoning precise, we need to figure out the exact probabilities of all possible outcomes, which in turn can be used to figure out the probability of getting an outcome as extreme as (or more extreme than) the actually observed outcome. This probability, of getting an outcome from the null hypothesis that is as extreme as (or more extreme than) the actual outcome, is called a “\(p\) value.” If the \(p\) value is very small, say less than 5%, then we decide to reject the null hypothesis.

Notice that this reasoning depends on defining a space of all possible outcomes from the null hypothesis, because we have to compute the probabilities of each outcome relative to the space of all possible outcomes. The space of all possible outcomes is based on how we intend to collect data. For example, was the intention to flip the coin exactly \(N\) times? In that case, the space of possible outcomes contains all sequences of exactly \(N\) flips. Was the intention to flip until the \(z\)th head appeared? In that case, the space of possible outcomes contains all sequences for which the \(z\)th head appears on the last flip. Was the intention to flip for a fixed duration? In that case, the space of possible outcomes contains all combinations of \(N\) and \(z\) that could be obtained in that fixed duration. Thus, a more explicit definition of a \(p\) value is the probability of getting a sample outcome from the hypothesized population that is as extreme as or more extreme than the actual outcome when using the intended sampling and testing procedures.

11.1 Paved with good intentions

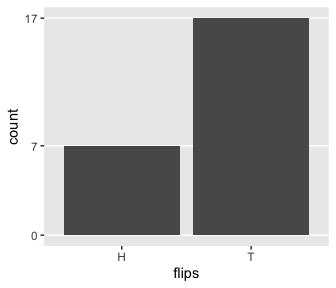

This is a little silly, but I wanted to challenge myself to randomly generate a series of 24 H and T characters for which there were 7 Hs. The base R sample() function gets us part of the way there.

## [1] "T" "T" "T" "T" "T" "T" "T" "T" "H" "T" "H" "H" "H" "T" "T" "T" "H"

## [18] "T" "H" "H" "H" "H" "H" "H"I wanted the solution to be reproducible, which required I find the appropriate seed for set.seed(). To do that, I made a custom h_counter() function into which I could input an arbitrary seed value and retrieve the count of H. I then fed a sequence of integers into h_counter() and filtered the output to find which seed produces the desirable outcome.

library(tidyverse)

h_counter <- function(seed) {

set.seed(seed)

coins <- sample(c("H", "T"), size = 24, replace = T)

length(which(coins == "H"))

}

coins <-

tibble(seed = 1:200) %>%

mutate(n_heads = map_dbl(seed, h_counter))

coins %>%

filter(n_heads == 7)## # A tibble: 2 x 2

## seed n_heads

## <int> <dbl>

## 1 115 7

## 2 143 7Looks like set.seed(115) will work.

## [1] "H" "T" "H" "T" "T" "T" "T" "H" "T" "H" "T" "T" "H" "T" "T" "H" "T"

## [18] "T" "T" "T" "T" "H" "T" "T"The sequence isn’t in the exact order as the data from page 300, but they do have the crucial ratio of heads to tails.

set.seed(115)

tibble(flips = sample(c("H", "T"), size = 24, replace = T)) %>%

ggplot(aes(x = flips)) +

geom_bar() +

scale_y_continuous(breaks = c(0, 7, 17)) +

theme(panel.grid.major.x = element_blank(),

panel.grid.minor.y = element_blank())

11.1.1 Definition of \(p\) value.

In summary, the likelihood function defines the probability for a single measurement, and the intended sampling process defines the cloud of possible sample outcomes. The null hypothesis is the likelihood function with its specific value for parameter \(\theta\), and the cloud of possible samples is defined by the stopping and testing intentions, denoted \(I\). Each imaginary sample generated from the null hypothesis is summarized by a descriptive statistic, denoted \(D_{\theta, I}\). In the case of a sample of coin flips, the descriptive summary statistic is \(z / N\) , the proportion of heads in the sample. Now, imagine generating infinitely many samples from the null hypothesis using stopping and testing intention \(I\) ; this creates a cloud of possible summary values \(D_{\theta, I}\), each of which has a particular probability. The probability distribution over the cloud of possibilities is the sampling distribution: \(p (D_{\theta, I} | \theta, I )\).

To compute the \(p\) value, we want to know how much of that cloud is as extreme as, or more extreme than, the actually observed outcome. To define “extremeness” we must determine the typical value of \(D_{\theta, I}\), which is usually defined as the expected value, \(E [D_{\theta, I}]\) (recall Equations 4.5 and 4.6). This typical value is the center of the cloud of possibilities. An outcome is more “extreme” when it is farther away from the central tendency. The \(p\) value of the actual outcome is the probability of getting a hypothetical outcome that is as or more extreme. Formally, we can express this as

\[p \text{ value} = p (D_{\theta, I} \succcurlyeq D_\text{actual} | \theta, I)\]

where “\(\succcurlyeq\)” in this context means “as extreme as or more extreme than, relative to the expected value from the hypothesis.” Most introductory applied statistics textbooks suppress the sampling intention \(I\) from the definition, but precedents for making the sampling intention explicit can be found in Wagenmakers (2007, Online Supplement A) and additional references cited therein. (p. 301)

11.1.2 With intention to fix \(N\).

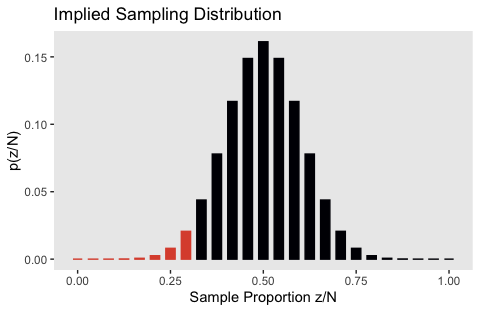

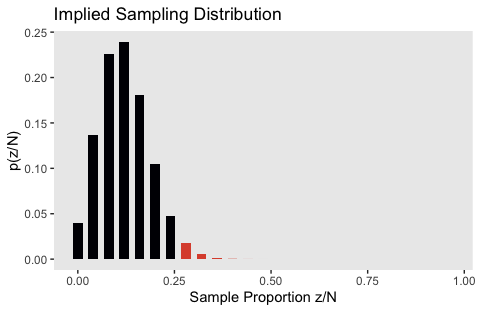

In this subsection, “the space of possible outcomes is restricted to combinations of \(z\) and \(N\) for which \(N\) is fixed at \(N = 24\)” (p. 302).

What is the probability of getting a particular number of heads when N is fixed? The answer is provided by the binomial probability distribution, which states that the probability of getting z heads out of N flips is

\[ p(z | N, \theta) = \begin{pmatrix} N \\ z \end{pmatrix} \theta^z (1 - \theta)^{N - z}\]

where the notation \(\begin{pmatrix} N \\ z \end{pmatrix}\) [is a shorthand notation defined in more detail in the text. It has to do with factorials and, getting more to the point,] the number of ways of allocating \(z\) heads among \(N\) flips, without duplicate counting of equivalent allocations, is \(N !/[(N − z)!z!]\). This factor is also called the number of ways of choosing \(z\) items from \(N\) possibilities, or “\(N\) choose \(z\)” for short, and is denoted \(\begin{pmatrix} N \\ z \end{pmatrix}\). Thus, the overall probability of getting \(z\) heads in \(N\) flips is the probability of any particular sequence of \(z\) heads in \(N\) flips times the number of ways of choosing \(z\) slots from among the \(N\) possible flips. (p. 303, emphasis in the original)

To do factorials in R, use the factorial() function. E.g., we can use the formula \(N! / [(N − z)!z!]\) like so:

## [1] 346104That value, recall, is a count, “the number of ways of allocating \(z\) heads among \(N\) flips, without duplicate counting of equivalent allocations” (p. 303). That formula’s a little cumbersome to work with. We can make our programming lives easier by wrapping it into a function.

Now we can employ our n_choose_z() function to help make the data we’ll use for Figure 11.3.b. Here are the data.

flips <-

tibble(z = 0:24) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n)

head(flips, n = 10)## # A tibble: 10 x 4

## z n_choose_z `p(z/N)` `Sample Proportion z/N`

## <int> <dbl> <dbl> <dbl>

## 1 0 1 0.0000000596 0

## 2 1 24.0 0.00000143 0.0417

## 3 2 276 0.0000165 0.0833

## 4 3 2024 0.000121 0.125

## 5 4 10626 0.000633 0.167

## 6 5 42504 0.00253 0.208

## 7 6 134596 0.00802 0.25

## 8 7 346104 0.0206 0.292

## 9 8 735471 0.0438 0.333

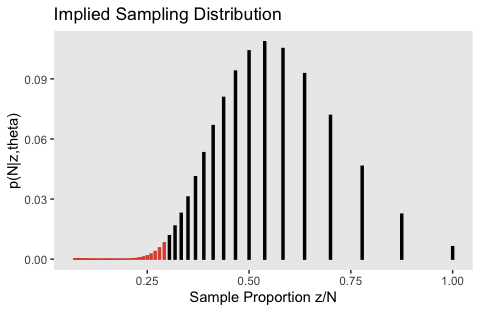

## 10 9 1307504 0.0779 0.375Now here’s the histogram of that sampling distribution.

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(z/N)`,

fill = z <= 7, color = z <= 7)) +

geom_col(width = .025) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

ggtitle("Implied Sampling Distribution") +

theme(panel.grid = element_blank(),

legend.position = "none")

We can get the one-sided \(p\)-value with a quick filter() and summarise().

## # A tibble: 1 x 1

## p_value

## <dbl>

## 1 0.0320Here’s Figure 11.3.a.

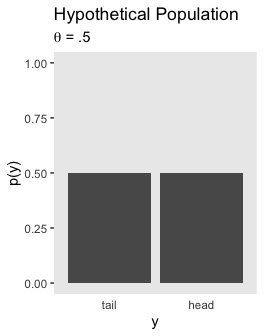

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = .5) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .5"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

As Kruschke wrote on page 304, “It is important to understand that the sampling distribution is a probability distribution over samples of data, and is not a probability distribution over parameter values.”

Here is the probability “of getting exactly \(z = 7\) heads in \(N = 24\) flips” (p. 304, emphasis in the original):

## # A tibble: 1 x 4

## z n_choose_z `p(z/N)` `Sample Proportion z/N`

## <int> <dbl> <dbl> <dbl>

## 1 7 346104 0.0206 0.292It was already sitting there in our p(z/N) column.

Here’s the conclusion for our particular case. The actual observation was \(z/N = 7/24\). The one-tailed probability is \(p = 0.032\), which was computed from Equation 11.4, and is shown in Figure 11.3. Because the \(p\) value is not less than 2.5%, we do not reject the null hypothesis that \(\theta = 0.5\). In NHST parlance, we would say that the result “has failed to reach significance.” This does not mean we accept the null hypothesis; we merely suspend judgment regarding rejection of this particular hypothesis. Notice that we have not determined any degree of belief in the hypothesis that \(\theta = 0.5\). The hypothesis might be true or might be false; we suspend judgment. (p. 305, emphasis in the original)

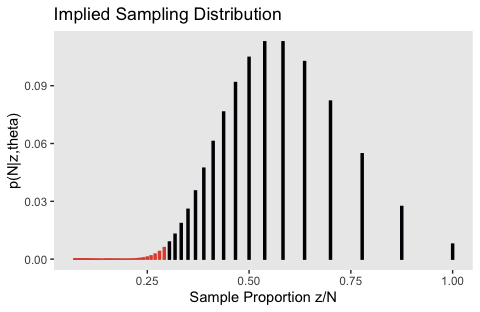

11.1.3 With intention to fix \(z\).

In this subsection, “\(z\) is fixed in advance and \(N\) is the random variable. We don’t talk about the probability of getting \(z\) heads out of \(N\) flips, we instead talk about the probability of taking \(N\) flips to get \(z\) heads” (p. 306).

This time we’re interested in

What is the probability of taking \(N\) flips to get \(z\) heads? To answer this question, consider this: We know that the \(N\)th flip is the \(z\)th head, because that is what caused flipping to stop. Therefore the previous \(N - 1\) flips had \(z - 1\) heads in some random sequence. The probability of getting \(z - 1\) heads in \(N - 1\) flips is \(\begin{pmatrix} N-1 \\ z-1 \end{pmatrix} \theta^{z-1} (1 - \theta)^{N - z}\). The probability that the last flip comes up heads is \(\theta\). Therefore, the probability that it takes \(N\) flips to get \(z\) heads is

\[\begin{align*} p(N | z, \theta) & = \begin{pmatrix} N-1 \\ z-1 \end{pmatrix} \theta^{z-1} (1 - \theta)^{N - z} \cdot \theta \\ & = \begin{pmatrix} N-1 \\ z-1 \end{pmatrix} \theta^z (1 - \theta)^{N - z} \\ & = \frac{z}{N} \begin{pmatrix} N \\ z \end{pmatrix} \theta^z (1 - \theta)^{N - z} \end{align*}\]

(This distribution is sometimes called the “negative binomial” but that term sometimes refers to other formulations and can be confusing, so I will not use it here.) This is a sampling distribution, like the binomial distribution, because it specifies the relative probabilities of all the possible data outcomes for the hypothesized fixed value of \(\theta\) and the intended stopping rule. (p. 306, emphasis added)

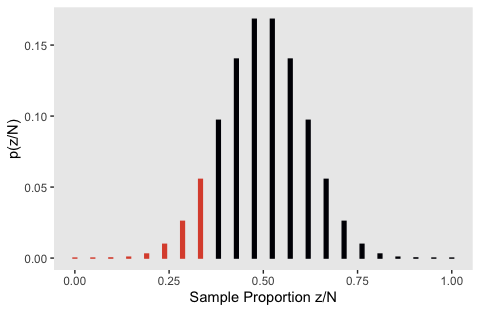

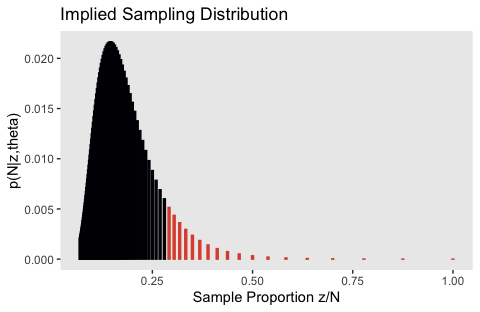

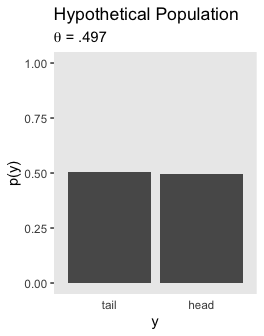

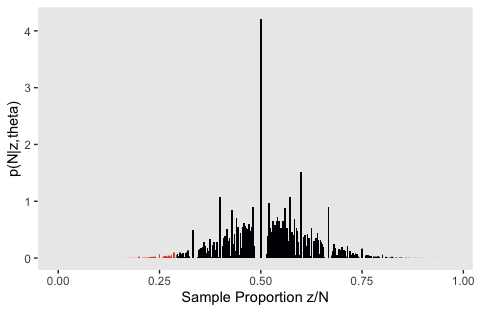

With that formula in hand, here’s how to generate the data for Figure 11.4.b.

theta <- .5

# we have to stop somewhere. where should we stop?

highest_n <- 100

flips <-

tibble(n = 7:highest_n) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n,

`p(N|z,theta)` = (z / n) * n_choose_z * (theta^z) * (1 - theta)^(n - z))To keep things simple, we just went up to \(N = 100\).

At the bottom of page 306, Kruschke described the probability “spikes” for various values of \(N\) when \(z/N = 7/7\), \(z/N = 7/8\), and \(z/N = 7/9\). We have those spike values in the Sample Proportion z/N column of our flips data. Here are those first three spikes.

## # A tibble: 3 x 5

## n n_choose_z `p(z/N)` `Sample Proportion z/N` `p(N|z,theta)`

## <int> <dbl> <dbl> <dbl> <dbl>

## 1 7 1 4.95e-12 1 0.00781

## 2 8 8 3.96e-11 0.875 0.0273

## 3 9 36 1.78e-10 0.778 0.0547Those values correspond to the rightmost vertical lines in our Figure 11.4.b, below.

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(N|z,theta)`,

fill = n >= 24, color = n >= 24)) +

geom_col(width = .005) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

ggtitle("Implied Sampling Distribution") +

theme(panel.grid = element_blank(),

legend.position = "none")

Our Figure 11.4.a is the same as Figure 11.3.a, above. I won’t repeat it, here.

We got the formula for that last variable, p(N|z,theta), from formula 11.6 on page 306. You’ll note how Kruschke continued to refer to it as \(p(z|N)\) in his Figure 11.4. It’s entirely opaque, to me, how \(p(z|N) = p(N|z, \theta)\). I’m just going with it.

Here’s the \(p\)-value.

## # A tibble: 1 x 1

## p_value

## <dbl>

## 1 0.017If you experiment a bit with the highest_n value from above, you’ll see that the exact value for the \(p\)-value is dependent on what \(N\) you go up to.

11.1.4 With intention to fix duration.

In this subsection,

neither \(N\) nor \(z\) is fixed…

The key to analyzing this scenario is specifying how various combinations of \(z\) and \(N\) can arise when sampling for a fixed duration. There is no single, uniquely “correct” specification, because there are many different real-world constraints on sampling through time. But one approach is to think of the sample size \(N\) as a random value. (p. 308).

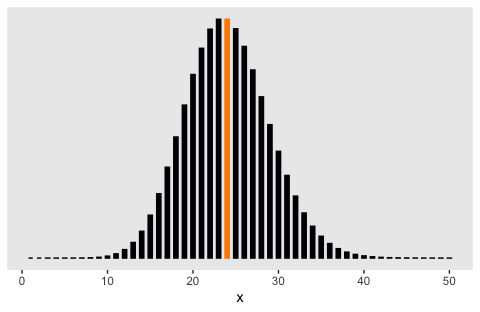

Here’s a glance at the Poisson distribution for which \(\lambda = 24\). The mean is colored orange.

tibble(x = 1:50) %>%

mutate(y = dpois(x = x, lambda = 24)) %>%

ggplot(aes(x = x, y = y,

fill = x == 24, color = x == 24)) +

geom_col(width = .5) +

scale_fill_viridis_d(option = "B", end = .75) +

scale_color_viridis_d(option = "B", end = .75) +

scale_y_continuous(NULL, breaks = NULL) +

theme(panel.grid = element_blank(),

legend.position = "none")

In the note for Figure 11.5, Kruschke explained the “sample sizes are drawn randomly from a Poisson distribution with mean \(\lambda\)”. Earlier in the prose he explained “\(\lambda\) was set to 24 merely to match \(N\) and make the example most comparable to the preceding examples” (p. 309). To do such a simulation, one must choose how many draws to take from \(\operatorname{Poisson}(24)\). Here’s an example where we take just one.

set.seed(11)

n_iter <- 1

flips <-

tibble(iter = 1:n_iter,

n = rpois(n_iter, lambda = 24)) %>%

mutate(z = map(n, ~seq(from = 0, to = ., by = 1))) %>%

unnest(z) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n)

flips## # A tibble: 22 x 6

## iter n z n_choose_z `p(z/N)` `Sample Proportion z/N`

## <int> <int> <dbl> <dbl> <dbl> <dbl>

## 1 1 21 0 1 0.000000477 0

## 2 1 21 1 21 0.0000100 0.0476

## 3 1 21 2 210 0.000100 0.0952

## 4 1 21 3 1330 0.000634 0.143

## 5 1 21 4 5985 0.00285 0.190

## 6 1 21 5 20349 0.00970 0.238

## 7 1 21 6 54264 0.0259 0.286

## 8 1 21 7 116280 0.0554 0.333

## 9 1 21 8 203490 0.0970 0.381

## 10 1 21 9 293930 0.140 0.429

## # … with 12 more rowsAs indicate in our n column, by chance we drew a 21. We then computed the same values for all possible values of z, ranging from 0 to 21. But this doesn’t make for a very interesting plot, nor does it make for the same kind of plot Kruschke made in Figure 11.5.b.

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(z/N)`,

fill = z <= 7, color = z <= 7)) +

geom_col(width = .01) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

theme(panel.grid = element_blank(),

legend.position = "none")

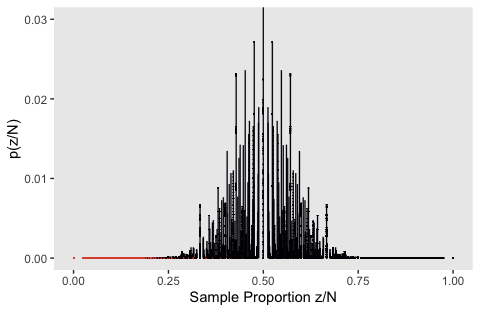

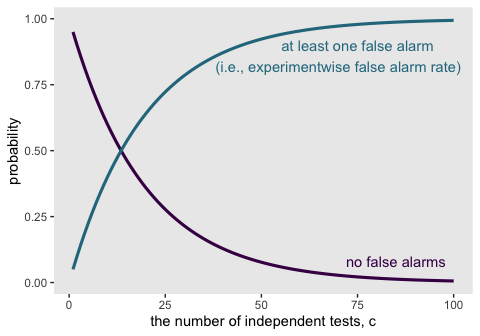

Instead we have to take many draws to take from \(\operatorname{Poisson}(24)\). Here’s what it looks like when we take 10,000.

n_iter <- 10000

set.seed(11)

flips <-

tibble(iter = 1:n_iter,

n = rpois(n_iter, lambda = 24)) %>%

mutate(z = map(n, ~seq(from = 0, to = ., by = 1))) %>%

unnest(z) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n)

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(z/N)`,

fill = z <= 7, color = z <= 7)) +

geom_col(width = .003, size = 1/15) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

coord_cartesian(ylim = c(0, 0.03)) +

theme(panel.grid = element_blank(),

legend.position = "none")

I played around with the simulation a bit, and this is about as good as I’ve gotten. If you have a solution that more faithfully reproduces what Kruschke did, please share your code.

Here’s my attempt at the \(p\) value.

## # A tibble: 1 x 1

## p_value

## <dbl>

## 1 0.000218It’s unclear, to me, why it’s so much lower than the one Kruschke reported in the text.

11.1.5 With intention to make multiple tests.

I’m not sure how to do the simulation for this section. This, for example, doesn’t get the job done.

flips <-

crossing(z = 0:24,

coin = letters[1:2]) %>%

group_by(coin) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n)

head(flips, n = 10)## # A tibble: 10 x 5

## # Groups: coin [2]

## z coin n_choose_z `p(z/N)` `Sample Proportion z/N`

## <int> <chr> <dbl> <dbl> <dbl>

## 1 0 a 1 0.0000000596 0

## 2 0 b 1 0.0000000596 0

## 3 1 a 24.0 0.00000143 0.0417

## 4 1 b 24.0 0.00000143 0.0417

## 5 2 a 276 0.0000165 0.0833

## 6 2 b 276 0.0000165 0.0833

## 7 3 a 2024 0.000121 0.125

## 8 3 b 2024 0.000121 0.125

## 9 4 a 10626 0.000633 0.167

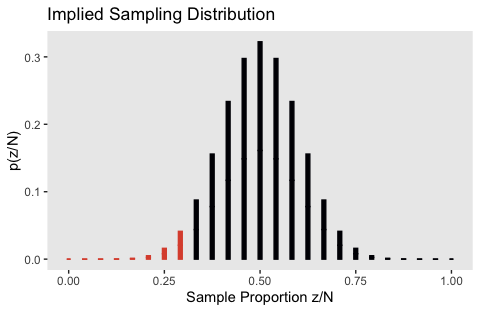

## 10 4 b 10626 0.000633 0.167The result is a failed attempt at Figure 11.6:

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(z/N)`,

fill = z <= 7, color = z <= 7)) +

geom_col(width = .01) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

ggtitle("Implied Sampling Distribution") +

theme(panel.grid = element_blank(),

legend.position = "none")

If you know how to do the simulation properly, please share your code.

11.1.6 Soul searching.

Within the context of NHST, the solution is to establish the true intention of the researcher. This is the approach taken explicitly when applying corrections for multiple tests. The analyst determines what the truly intended tests are, and determines whether those testing intentions were honestly conceived a priori or post hoc (i.e., motivated only after seeing the data), and then computes the appropriate \(p\) value. The same approach should be taken for stopping rules: The data analyst should determine what the truly intended stopping rule was, and then compute the appropriate \(p\) value. Unfortunately, determining the true intentions can be difficult. Therefore, perhaps researchers who use \(p\) values to make decisions should be required to publicly pre-register their intended stopping rule and tests, before collecting the data. (p. 314, emphasis in the original)

11.1.7 Bayesian analysis.

Happily for us, “the Bayesian interpretation of data does not depend on the covert sampling and testing intentions of the data collector” (p. 314).

11.2 Prior knowledge

Nothing for us, here.

11.2.1 NHST analysis.

More nothing.

11.2.2 Bayesian analysis.

If you recall from Chapter 6, we need a function to compute the Bernoulli likelihood.

bernoulli_likelihood <- function(theta, data) {

n <- length(data)

z <- sum(data)

return(theta^z * (1 - theta)^(n - sum(data)))

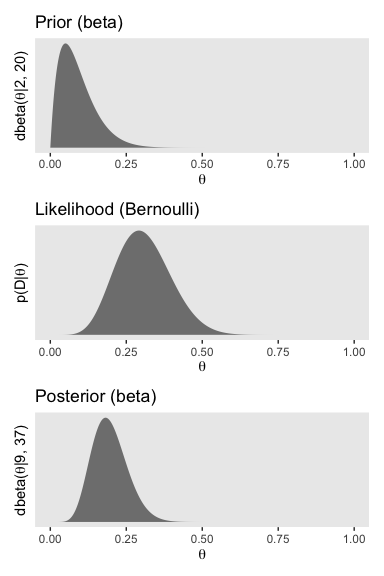

}There are a handful of steps before we can use our bernoulli_likelihood() function to make the plot data. All these are repeats from Chapter 6.

# we need these to compute the likelihood

n <- 24

z <- 7

trial_data <- c(rep(0, times = n - z), rep(1, times = z)) # (i.e., data)

d_nail <-

tibble(theta = seq(from = 0, to = 1, length.out = 1000)) %>% # (i.e., theta)

mutate(Prior = dbeta(x = theta, shape1 = 2, shape2 = 20)) %>%

mutate(Likelihood = bernoulli_likelihood(theta = theta, # (i.e., p(D | theta))

data = trial_data)) %>%

mutate(evidence = sum(Likelihood * Prior)) %>% # (i.e., p(D))

mutate(Posterior = Likelihood * Prior / evidence) # (i.e., p(theta | D))

glimpse(d_nail)## Observations: 1,000

## Variables: 5

## $ theta <dbl> 0.000000000, 0.001001001, 0.002002002, 0.003003003, 0…

## $ Prior <dbl> 0.0000000, 0.4124961, 0.8094267, 1.1912097, 1.5582533…

## $ Likelihood <dbl> 0.000000e+00, 9.900280e-22, 1.245822e-19, 2.092598e-1…

## $ evidence <dbl> 5.260882e-05, 5.260882e-05, 5.260882e-05, 5.260882e-0…

## $ Posterior <dbl> 0.000000e+00, 7.762627e-18, 1.916792e-15, 4.738222e-1…Here’s the left column of Figure 11.7.

p1 <-

d_nail %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Prior),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Prior (beta)",

x = expression(theta),

y = expression(paste("dbeta(", theta, "|2, 20)"))) +

theme(panel.grid = element_blank())

p2 <-

d_nail %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Likelihood),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Likelihood (Bernoulli)",

x = expression(theta),

y = expression(paste("p(D|", theta, ")"))) +

theme(panel.grid = element_blank(),

axis.text.y = element_text())

p3 <-

d_nail %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Posterior),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Posterior (beta)",

x = expression(theta),

y = expression(paste("dbeta(", theta, "|9, 37)"))) +

theme(panel.grid = element_blank())

library(patchwork)

p1 / p2 / p3

If we’d like the 95% HDIs, we’ll need to redifine the hdi_of_icdf() function.

hdi_of_icdf <- function(name = qbeta, width = .95, tol = 1e-8, ... ) {

incredible_mass <- 1.0 - width

interval_width <- function(low_tail_prob, name, width, ...) {

name(width + low_tail_prob, ...) - name(low_tail_prob, ...)

}

opt_info <- optimize(interval_width, c(0, incredible_mass),

name = name, width = width,

tol = tol, ...)

hdi_lower_tail_prob <- opt_info$minimum

return(c(name(hdi_lower_tail_prob, ...),

name(width + hdi_lower_tail_prob, ...)))

}Note that this time we made the qbeta() function the default setting for the name argument. Here are the HDIs for the prior and posterior, above.

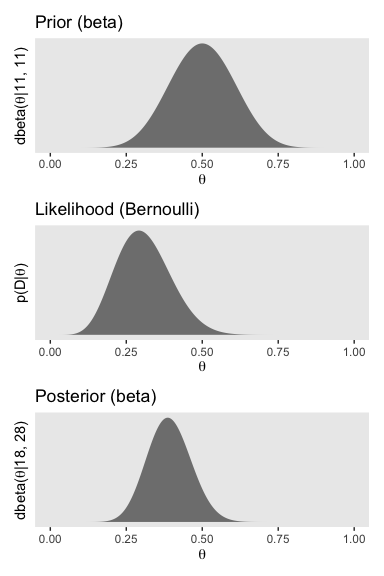

## [1] 0.002600585 0.208030932## [1] 0.08839668 0.31043265To get the left column of Figure 11.7, we have to update the data with our new prior, \(\operatorname{beta} (11, 11)\).

# here are the data based on our updated beta(11, 11) prior

d_coin <-

tibble(theta = seq(from = 0, to = 1, length.out = 1000)) %>%

mutate(Prior = dbeta(x = theta, shape1 = 11, shape2 = 11)) %>%

mutate(Likelihood = bernoulli_likelihood(theta = theta,

data = trial_data)) %>%

mutate(Posterior = Likelihood * Prior / sum(Likelihood * Prior))

# The updated plots:

p1 <-

d_coin %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Prior),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Prior (beta)",

x = expression(theta),

y = expression(paste("dbeta(", theta, "|11, 11)"))) +

theme(panel.grid = element_blank())

p2 <-

d_coin %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Likelihood),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Likelihood (Bernoulli)",

x = expression(theta),

y = expression(paste("p(D|", theta, ")"))) +

theme(panel.grid = element_blank(),

axis.text.y = element_text())

p3 <-

d_coin %>%

ggplot(aes(x = theta)) +

geom_ribbon(aes(ymin = 0, ymax = Posterior),

fill = "grey50") +

scale_y_continuous(breaks = NULL) +

labs(title = "Posterior (beta)",

x = expression(theta),

y = expression(paste("dbeta(", theta, "|18, 28)"))) +

theme(panel.grid = element_blank())

p1 / p2 / p3

Here are the corresponding HDIs for \(\operatorname{beta} (11, 11)\) and \(\operatorname{beta} (11 + 7, 11 + 24 - 7)\).

## [1] 0.2978068 0.7021932## [1] 0.2539378 0.531268511.2.2.1 Priors are overt and relevant.

In this subsection’s opening paragraph, Kruschke opined:

Prior beliefs are overt, explicitly debated, and founded on publicly accessible previous research. A Bayesian analyst might have personal priors that differ from what most people think, but if the analysis is supposed to convince an audience, then the analysis must use priors that the audience finds palatable. It is the job of the Bayesian analyst to make cogent arguments for the particular prior that is used. (p. 317)

11.3 Confidence interval and highest density interval

This section defines CIs and provides examples. It shows that, while CIs ameliorate some of the problems of \(p\) values, ultimately CIs suffer the same problems as \(p\) values because CIs are defined in terms of \(p\) values. Bayesian posterior distributions, on the other hand, provide the needed information. (p. 318)

11.3.1 CI depends on intention.

The primary goal of NHST is determining whether a particular “null” value of a parameter can be rejected. One can also ask what range of parameter values would not be rejected. This range of nonrejectable parameter values is called the CI. (There are different ways of defining an NHST CI; this one is conceptually the most general and coherent with NHST precepts.) The 95% CI consists of all values of \(\theta\) that would not be rejected by a (two-tailed) significance test that allows 5% false alarms. (p. 318, emphasis in the original)

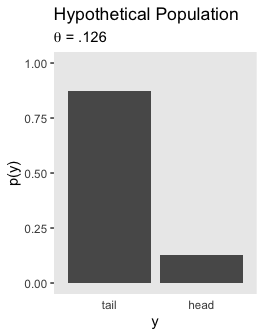

Here’s the upper left panel of Figure 11.8.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .126, .126)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .126"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

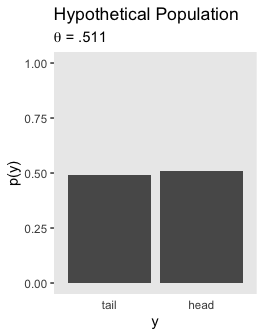

Here’s the lower left panel of Figure 11.8.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .511, .511)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .511"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

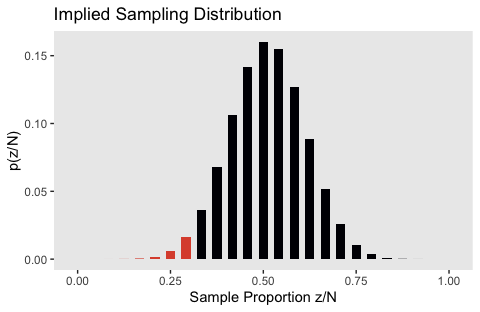

Here’s the upper right panel, the sampling distribution for when \(\theta = .126\).

tibble(z = 0:24,

y = dbinom(0:24, size = 24, prob = .126)) %>%

ggplot(aes(x = z/25, y = y,

fill = z >= 7)) +

geom_col(width = .025) +

scale_fill_viridis_d(option = "B", end = .6) +

labs(title = "Implied Sampling Distribution",

x = "Sample Proportion z/N",

y = "p(z/N)") +

theme(panel.grid = element_blank(),

legend.position = "none")

And here’s the lower right, the sampling distribution for when \(\theta = .511\).

tibble(z = 0:24,

y = dbinom(0:24, size = 24, prob = .511)) %>%

ggplot(aes(x = z / 24, y = y,

fill = z <= 7)) +

geom_col(width = .025) +

scale_fill_viridis_d(option = "B", end = .6) +

labs(title = "Implied Sampling Distribution",

x = "Sample Proportion z/N",

y = "p(z/N)") +

theme(panel.grid = element_blank(),

legend.position = "none")

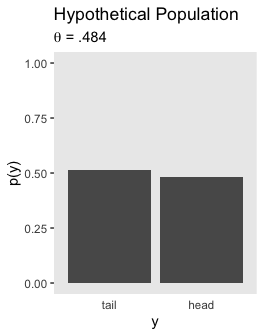

The upper left panel in Figure 11.9 is the same as 11.8, which you can see above. Here’s the lower left panel.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .484, .484)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .484"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

We’ll need fresh data for the upper right panel of Figure 11.9.

theta <- .126

flips <-

tibble(n = 7:100) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n,

`p(N|z,theta)` = (z / n) * n_choose_z * (theta^z) * (1 - theta)^(n - z))

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(N|z,theta)`,

fill = n <= 24, color = n <= 24)) +

geom_col(width = .005) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

ggtitle("Implied Sampling Distribution") +

theme(panel.grid = element_blank(),

legend.position = "none")

And once again, we’ll renew theta and the data for the lower right panel.

theta <- .484

flips <-

tibble(n = 7:100) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n,

`p(N|z,theta)` = (z / n) * n_choose_z * (theta^z) * (1 - theta)^(n - z))

flips %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(N|z,theta)`,

fill = n >= 24, color = n >= 24)) +

geom_col(width = .005) +

scale_fill_viridis_d(option = "B", end = .6) +

scale_color_viridis_d(option = "B", end = .6) +

ggtitle("Implied Sampling Distribution") +

theme(panel.grid = element_blank(),

legend.position = "none")

Here’s the lower left panel for Figure 11.10.

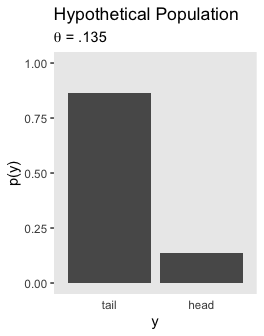

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .135, .135)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .135"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

After switching out a few theta values, here’s the lower right panel of Figure 11.10.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .497, .497)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta, " = .497"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

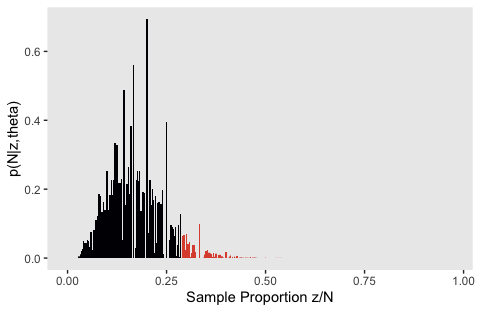

Like with Figure 11.5.b, my attempts for the right panels of Figure 11.10 just aren’t quite right. If you understand where I’m going wrong with the simulation, please share your code.

z_maker <- function(i) {

set.seed(i)

n <- rpois(n = 1, lambda = 24)

seq(from = 0, to = n, by = 1)

}

theta <- .135

tibble(seed = 1:100) %>%

mutate(z = map(seed, z_maker)) %>%

unnest(z) %>%

group_by(seed) %>%

mutate(n = n()) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n,

`p(N|z,theta)` = (z / n) * n_choose_z * (theta^z) * (1 - theta)^(n - z)) %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(N|z,theta)`,

fill = `Sample Proportion z/N` >= 7 / 24)) +

geom_col(width = .004) +

scale_fill_viridis_d(option = "B", end = .6) +

theme(panel.grid = element_blank(),

legend.position = "none")

theta <- .497

tibble(seed = 1:100) %>%

mutate(z = map(seed, z_maker)) %>%

unnest(z) %>%

group_by(seed) %>%

mutate(n = n()) %>%

mutate(n_choose_z = n_choose_z(n, z)) %>%

mutate(`p(z/N)` = n_choose_z / sum(n_choose_z),

`Sample Proportion z/N` = z / n,

`p(N|z,theta)` = (z / n) * n_choose_z * (theta^z) * (1 - theta)^(n - z)) %>%

ggplot(aes(x = `Sample Proportion z/N`, y = `p(N|z,theta)`,

fill = `Sample Proportion z/N` <= 7 / 24)) +

geom_col(width = .004) +

scale_fill_viridis_d(option = "B", end = .6) +

theme(panel.grid = element_blank(),

legend.position = "none")

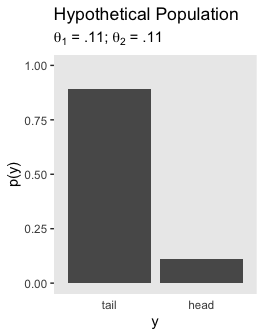

Let’s leave failure behind. Here’s the upper left panel for Figure 11.11.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .11, .11)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta[1], " = .11; ", theta[2], " = .11"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

And the lower panel for Figure 11.11.

tibble(y = factor(c("tail", "head"), levels = c("tail", "head")),

`p(y)` = c(1 - .539, .539)) %>%

ggplot(aes(x = y, y = `p(y)`)) +

geom_col() +

coord_cartesian(ylim = 0:1) +

labs(title = "Hypothetical Population",

subtitle = expression(paste(theta[1], " = .539; ", theta[2], " = .539"))) +

theme(panel.grid = element_blank(),

axis.ticks.x = element_blank())

Much like with Figure 11.6, I don’t understand how to do the simulation properly for the right panels of Figure 11.11. If you’ve got it, please share your code.

11.3.1.1 CI is not a distribution.

A CI is merely two end points. A common misconception of a confidence interval is that it indicates some sort of probability distribution over values of \(\theta\). It is very tempting to think that values of \(\theta\) in the middle of a CI should be more believable than values of \(\theta\) at or beyond the limits of the CI.

… Methods for imposing a distribution upon a CI seem to be motivated by a natural Bayesian intuition: Parameter values that are consistent with the data should be more credible than parameter values that are not consistent with the data (subject to prior credibility). If we were confined to frequentist methods, then the various proposals outlined above would be expressions of that intuition. But we are not confined to frequentist methods. Instead, we can express our natural Bayesian intuitions in fully Bayesian formalisms. (pp. 323–324)

11.3.2 Bayesian HDI.

“The 95% HDI consists of those values of \(\theta\) that have at least some minimal level of posterior credibility, such that the total probability of all such \(\theta\) values is 95%” (p. 324).

Once again, here’s how to analytically compute the 95% HDIs for our example of \(z = 7, N = 24\) and the prior of \(\operatorname{beta} (11, 11)\).

## [1] 0.2539378 0.531268511.4 Multiple comparisons

It’s worth quoting Kruschke at length:

When comparing multiple conditions, a key goal in NHST is to keep the overall false alarm rate down to a desired maximum such as 5%. Abiding by this constraint depends on the number of comparisons that are to be made, which in turn depends on the intentions of the experimenter. In a Bayesian analysis, however, there is just one posterior distribu- tion over the parameters that describe the conditions. That posterior distribution is unaffected by the intentions of the experimenter, and the posterior distribution can be examined from multiple perspectives however is suggested by insight and curiosity. (p. 325)

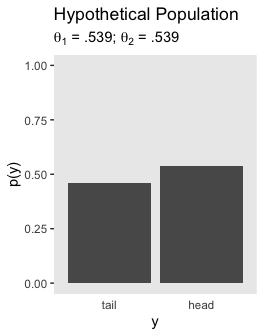

11.4.1 NHST correction for experiment wise error.

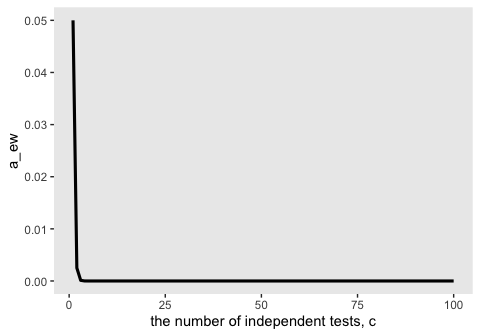

In NHST, we have to take into account all comparisons we intend for the whole experiment. Suppose we set a criterion for rejecting the null such that each decision has a “per-comparison” (PC) false alarm rate of \(\alpha_\text{PC}\), e.g., 5%. Our goal is to determine the overall false alarm rate when we conduct several comparisons. To get there, we do a little algebra. First, suppose the null hypothesis is true, which means that the groups are identical, and we get apparent differences in the samples by chance alone. This means that we get a false alarm on a proportion \(\alpha_\text{PC}\) of replications of a comparison test. Therefore, we do not get a false alarm on the complementary proportion \(1 - \alpha_\text{PC}\) of replications. If we run c independent comparison tests, then the probability of not getting a false alarm on any of the tests is \((1 - \alpha_\text{PC})^c\). Consequently, the probability of getting at least one false alarm is \(1 - (1 - \alpha_\text{PC})^c\). We call that probability of getting at least one false alarm, across all the comparisons in the experiment, the “experimentwise” false alarm rate, denoted \(\alpha_\text{EW}\). (pp. 325–326, emphasis in the original)

Here’s what this looks like in action.

alpha_pc <- .05

c <- 36

# the probability of not getting a false alarm on any of the tests

(1 - alpha_pc)^c## [1] 0.1577792## [1] 0.8422208For kicks and giggles, it might be interesting to plot this.

tibble(c = 1:100) %>%

mutate(p1 = (1 - alpha_pc)^c,

p2 = 1 - (1 - alpha_pc)^c) %>%

gather(key, probability, -c) %>%

ggplot(aes(x = c, y = probability, color = key)) +

geom_line(size = 1.1) +

geom_text(data = tibble(

c = c(85, 75, 70),

probability = c(.08, .9, .82),

label = c("no false alarms",

"at least one false alarm",

"(i.e., experimentwise false alarm rate)"),

key = c("p1", "p2", "p2")

),

aes(label = label)) +

scale_color_viridis_d(option = "D", end = .4) +

xlab("the number of independent tests, c") +

theme(panel.grid = element_blank(),

legend.position = "none")

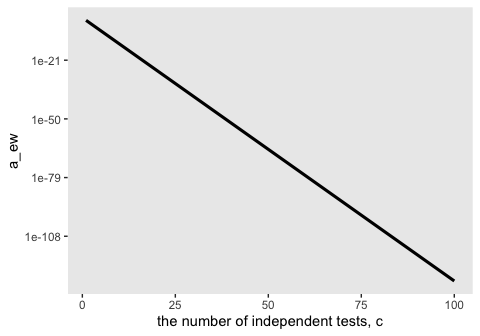

One way to keep the experimentwise false alarm rate down to 5% is by reducing the permitted false alarm rate for the individual comparisons, i.e., setting a more stringent criterion for rejecting the null hypothesis in individual comparisons. One often-used re- setting is the Bonferonni correction, which sets \(\alpha_\text{PC} = \alpha_\text{EW}^\text{desired} / c\).

Here’s how to apply the Bonferonni correction to our example if the desired false-alarm rate is .05.

## [1] 0.001388889Again, it might be useful to plot the consequence of Bonferonni’s correction on \(\alpha\) for different levels of \(c\).

tibble(c = 1:100) %>%

mutate(a_ew = alpha_pc^c) %>%

ggplot(aes(x = c, y = a_ew)) +

geom_line(size = 1.1) +

xlab("the number of independent tests, c") +

theme(panel.grid = element_blank())

A little shocking, isn’t it? If you put it on a log scale, you’ll see the relationship is linear.

tibble(c = 1:100) %>%

mutate(a_ew = alpha_pc^c) %>%

ggplot(aes(x = c, y = a_ew)) +

geom_line(size = 1.1) +

scale_y_log10() +

xlab("the number of independent tests, c") +

theme(panel.grid = element_blank())

But just look at how low the values on the y-axis get.

11.4.2 Just one Bayesian posterior no matter how you look at it.

In a Bayesian analysis, the interpretation of the data is not influenced by the experimenter’s stopping and testing intentions (assuming that those intentions do not affect the data). A Bayesian analysis yields a posterior distribution over the parameters of the model. The posterior distribution is the complete implication of the data. The posterior distribution can be examined in as many different ways as the analyst deems interesting; various comparisons of groups are merely different perspectives on the posterior distribution. (p. 328)

11.4.3 How Bayesian analysis mitigates false alarms.

From page 329: “How, then, does a Bayesian analysis address the problem of false alarms? By incorporating prior knowledge into the structure of the model.” In addition, we use heirarchical models when possible (e.g., Gelman, Hill, & Yajima, 2009).

11.5 What a sampling distribution is good for

“Sampling distributions tell us the probability of imaginary outcomes given a parameter value and an intention, \(p(D_{\theta, I}|\theta, I)\), instead of the probability of parameter values given the actual data, \((\theta|D_\text{actual})\).”

11.5.1 Planning an experiment.

Gelman touched on these sensibilities in a recent blog post.

Session info

## R version 3.6.0 (2019-04-26)

## Platform: x86_64-apple-darwin15.6.0 (64-bit)

## Running under: macOS High Sierra 10.13.6

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRlapack.dylib

##

## locale:

## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

##

## attached base packages:

## [1] stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] patchwork_1.0.0 forcats_0.4.0 stringr_1.4.0 dplyr_0.8.3

## [5] purrr_0.3.3 readr_1.3.1 tidyr_1.0.0 tibble_2.1.3

## [9] ggplot2_3.2.1 tidyverse_1.2.1

##

## loaded via a namespace (and not attached):

## [1] tidyselect_0.2.5 xfun_0.10 haven_2.1.0

## [4] lattice_0.20-38 colorspace_1.4-1 vctrs_0.2.0

## [7] generics_0.0.2 htmltools_0.4.0 viridisLite_0.3.0

## [10] yaml_2.2.0 utf8_1.1.4 rlang_0.4.1

## [13] pillar_1.4.2 glue_1.3.1.9000 withr_2.1.2

## [16] modelr_0.1.4 readxl_1.3.1 lifecycle_0.1.0

## [19] munsell_0.5.0 gtable_0.3.0 cellranger_1.1.0

## [22] rvest_0.3.4 evaluate_0.14 labeling_0.3

## [25] knitr_1.23 fansi_0.4.0 broom_0.5.2

## [28] Rcpp_1.0.2 scales_1.0.0 backports_1.1.5

## [31] jsonlite_1.6 hms_0.4.2 digest_0.6.21

## [34] stringi_1.4.3 grid_3.6.0 cli_1.1.0

## [37] tools_3.6.0 magrittr_1.5 lazyeval_0.2.2

## [40] crayon_1.3.4 pkgconfig_2.0.3 zeallot_0.1.0

## [43] ellipsis_0.3.0 xml2_1.2.0 lubridate_1.7.4

## [46] assertthat_0.2.1 rmarkdown_1.13 httr_1.4.0

## [49] rstudioapi_0.10 R6_2.4.0 nlme_3.1-139

## [52] compiler_3.6.0