14 Inference for comparing two independent means

We now extend the methods from Chapter 13 to apply confidence intervals and hypothesis tests to differences in population means that come from two groups, Group 1 and Group 2: \(\mu_1 - \mu_2.\)

In our investigations, we’ll identify a reasonable point estimate of \(\mu_1 - \mu_2\) based on the sample, and you may have already guessed its form: \(\bar{x}_1 - \bar{x}_2.\) Then we’ll look at the inferential analysis in three different ways: using a randomization test, applying bootstrapping for interval estimates, and, if we verify that the point estimate can be modeled using a normal distribution, computing the estimate’s standard error and applying the mathematical framework.

In this section we consider a difference in two population means, \(\mu_1 - \mu_2,\) under the condition that the data are not paired. Just as with a single sample, we identify conditions to ensure we can use the \(t\)-distribution with a point estimate of the difference, \(\bar{x}_1 - \bar{x}_2,\) and a new standard error formula.

The details for working through inferential problems in the two independent means setting are strikingly similar to those applied to the two independent proportions setting. We first cover a randomization test where the observations are shuffled under the assumption that the null hypothesis is true. Then we bootstrap the data (with no imposed null hypothesis) to create a confidence interval for the true difference in population means, \(\mu_1 - \mu_2.\) The mathematical model, here the \(t\)-distribution, is able to describe both the randomization test and the bootstrapping as long as the conditions are met.

The inferential tools are applied to three different data contexts: determining whether stem cells can improve heart function, exploring the relationship between pregnant women’s smoking habits and birth weights of newborns, and exploring whether there is convincing evidence that one variation of an exam is harder than another variation. This section is motivated by questions like “Is there convincing evidence that newborns from mothers who smoke have a different average birth weight than newborns from mothers who don’t smoke?”

14.1 Randomization test for the difference in means

An instructor decided to run two slight variations of the same exam. Prior to passing out the exams, they shuffled the exams together to ensure each student received a random version. Anticipating complaints from students who took Version B, they would like to evaluate whether the difference observed in the groups is so large that it provides convincing evidence that Version B was more difficult (on average) than Version A.

14.1.1 Observed data

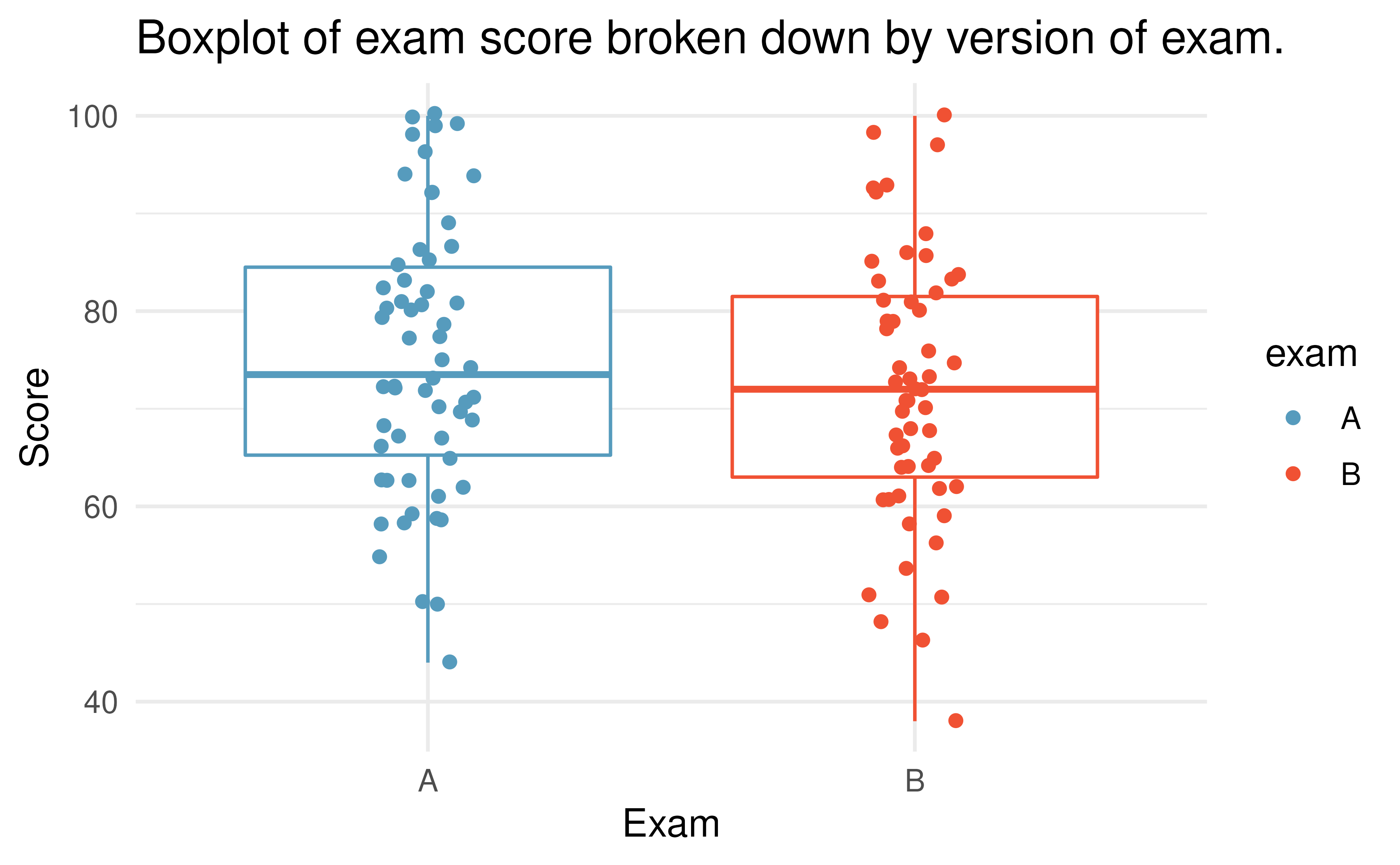

Summary statistics for how students performed on these two exams are shown in Table 14.1 and plotted in Figure 14.1.

| Group | n | Mean | SD | Min | Max |
|---|---|---|---|---|---|
| A | 58 | 75.1 | 13.9 | 44 | 100 |
| B | 55 | 72.0 | 13.8 | 38 | 100 |

Figure 14.1: Exam scores for students given one of two different exams.

Construct hypotheses to evaluate whether the observed difference in sample means, \(\bar{x}_A - \bar{x}_B=3.1,\) is likely to have happened due to chance, if the null hypothesis is true. We will later evaluate these hypotheses using \(\alpha = 0.01.\)

Before moving on to evaluate the hypotheses in the previous Guided Practice, let’s think carefully about the dataset. Are the observations across the two groups independent? Are there any concerns about outliers?

14.1.2 Variability of the statistic

In Section 6, the variability of the statistic (previously: \(\hat{p}_1 - \hat{p}_2)\) was visualized after shuffling the observations across the two treatment groups many times. The shuffling process implements the null hypothesis model (that there is no effect of the treatment). In the exam example, the null hypothesis is that exam A and exam B are equally difficult, so the average scores across the two tests should be the same. If the exams were equally difficult, due to natural variability, we would sometimes expect students to do slightly better on exam A \((\bar{x}_A > \bar{x}_B)\) and sometimes expect students to do slightly better on exam B \((\bar{x}_B > \bar{x}_A).\) The question at hand is: does \(\bar{x}_A - \bar{x}_B=3.1\) indicate that exam A is easier than exam B?

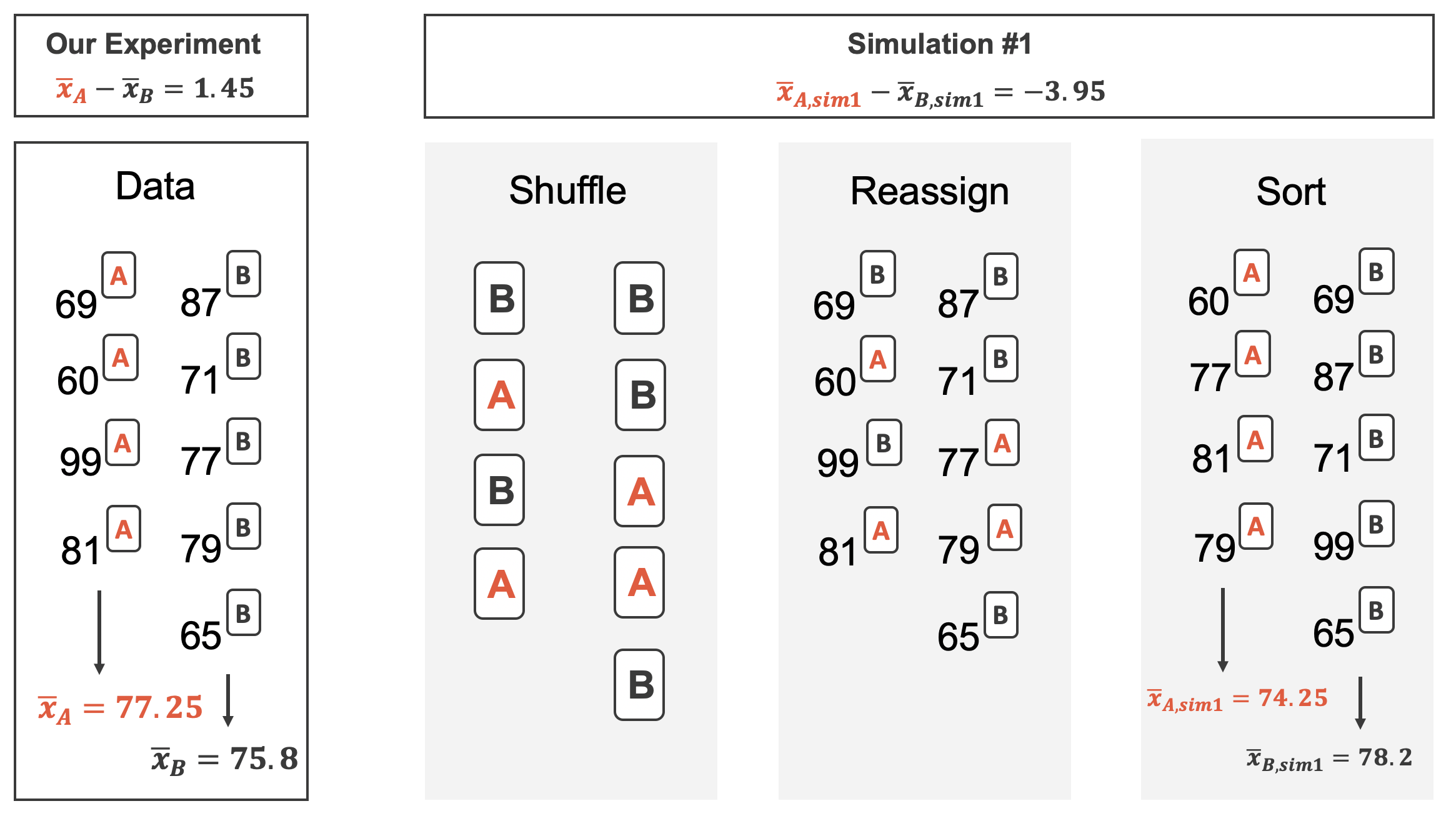

Figure 14.2 shows the process of randomizing the exam to the observed exam scores. If the null hypothesis is true, then the score on each exam should represent the true student ability on that material. It shouldn’t matter whether they were given exam A or exam B. By reallocating which student got which exam, we are able to understand how the difference in average exam scores changes due only to natural variability. There is only one iteration of the randomization process in Figure 14.2, leading to one simulated difference in average scores.

Figure 14.2: The version of the test (A or B) is randomly allocated to the test scores, under the null assumption that the tests are equally difficult.

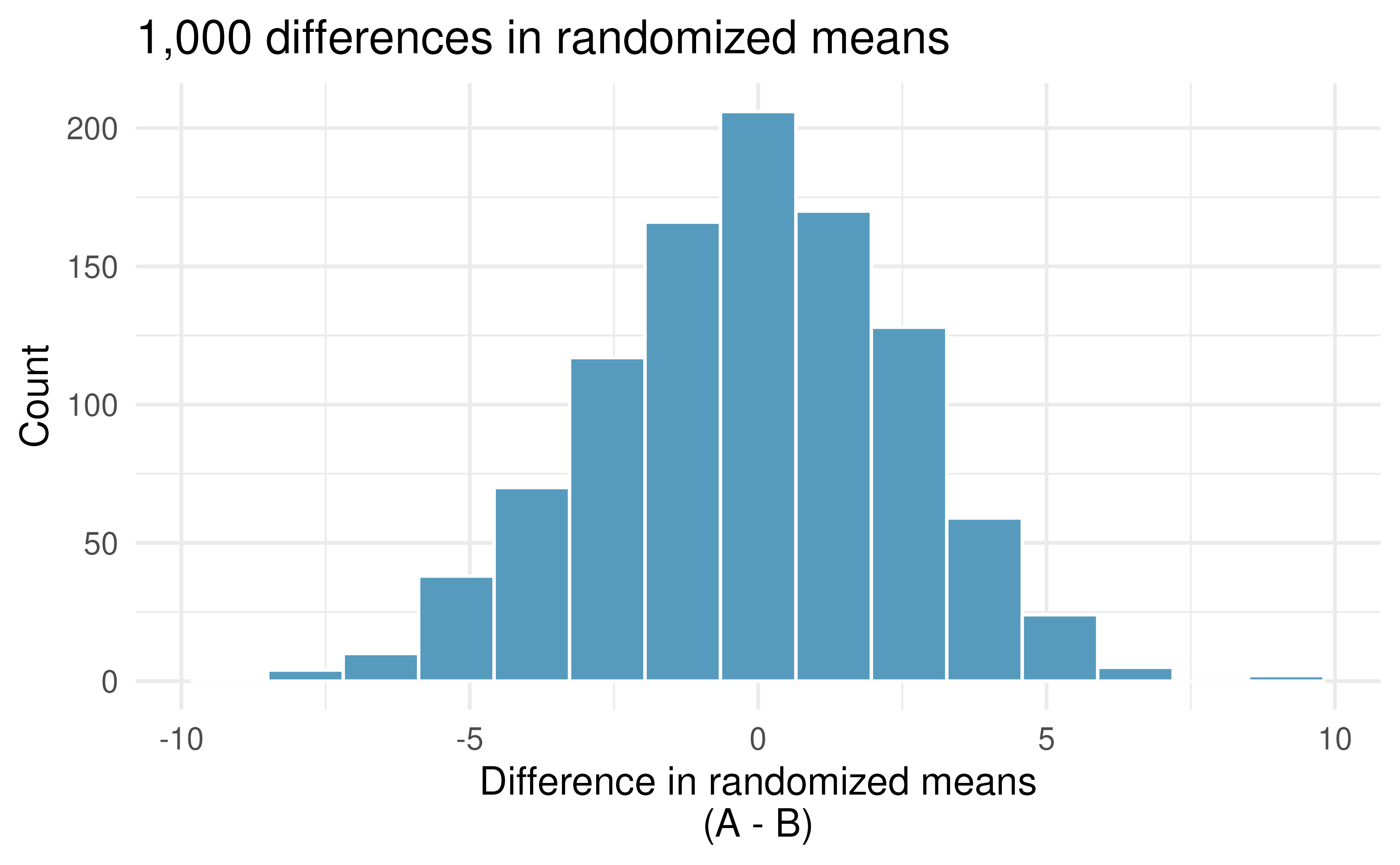

Building on Figure 14.2, Figure 14.3 shows the values of the simulated statistics \(\bar{x}_{1, sim} - \bar{x}_{2, sim}\) over 1,000 random simulations. We see that, just by chance, the difference in scores can range anywhere from -10 points to +10 points.

Figure 14.3: Histogram of differences in means, calculated from 1,000 different randomizations of the exam types.
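The shuffling procedure behind Figures 14.2 and 14.3 can be sketched in a few lines of Python. This is a minimal illustration, not the code used to produce the figures: the raw exam scores are not listed in the text, so `scores_a` and `scores_b` are hypothetical values simulated to resemble Table 14.1, and the function name `randomization_diffs` is our own.

```python
import random
from statistics import mean


def randomization_diffs(group_a, group_b, reps=1000, seed=0):
    """Shuffle the pooled scores `reps` times and return the simulated
    differences in means (first n_a shuffled values minus the rest)."""
    rng = random.Random(seed)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    diffs = []
    for _ in range(reps):
        # Under the null hypothesis the exam label does not matter,
        # so any reallocation of scores to versions is equally plausible.
        rng.shuffle(pooled)
        diffs.append(mean(pooled[:n_a]) - mean(pooled[n_a:]))
    return diffs


# Hypothetical scores (NOT the instructor's data), sized like Table 14.1.
data_rng = random.Random(1)
scores_a = [data_rng.gauss(75, 14) for _ in range(58)]
scores_b = [data_rng.gauss(72, 14) for _ in range(55)]

null_diffs = randomization_diffs(scores_a, scores_b)
print(min(null_diffs), max(null_diffs))
```

Each entry of `null_diffs` corresponds to one iteration like the one pictured in Figure 14.2; a histogram of the list plays the role of Figure 14.3.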

14.1.3 Observed statistic vs. null statistics

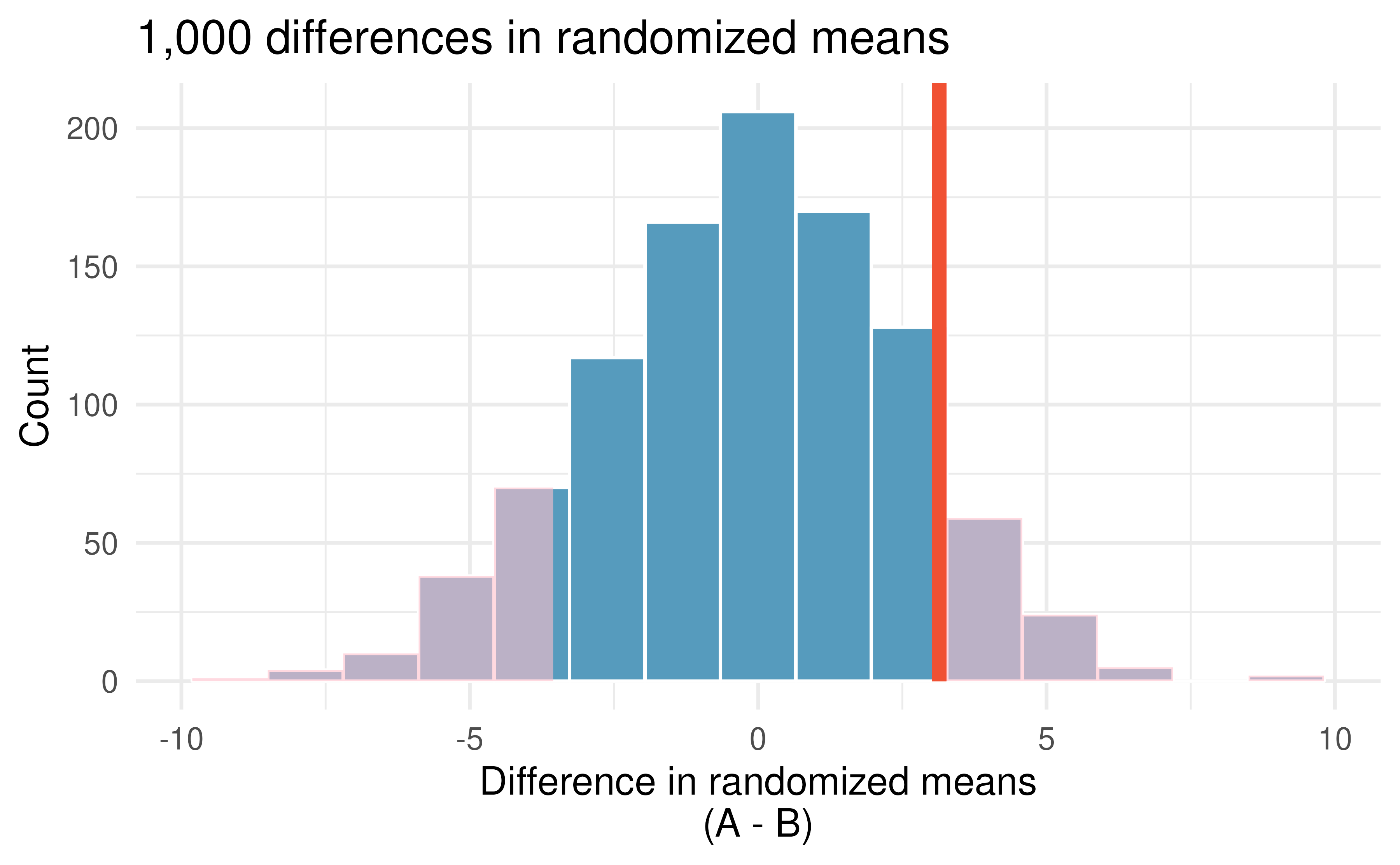

The goal of the randomization test is to assess the observed data; here the statistic of interest is \(\bar{x}_A - \bar{x}_B=3.1.\) The randomization distribution allows us to identify whether a difference of 3.1 points is more than one would expect by natural variability of the scores if the two tests were equally difficult. By plotting the value of 3.1 on Figure 14.4, we can measure how different or similar 3.1 is to the randomized differences which were generated under the null hypothesis.

Figure 14.4: Histogram of differences in means, calculated from 1,000 different randomizations of the exam types. The observed difference of 3.1 points is plotted as a vertical line, and the area more extreme than 3.1 is shaded to represent the p-value.

Approximate the p-value depicted in Figure 14.4, and provide a conclusion in the context of the case study.

Using software, we find that the number of shuffled differences in means that are greater than the observed difference (of 3.14) is 100 (out of 1,000 randomizations). So 10% of the simulations are larger than the observed difference. To get the p-value, we double the proportion of randomized differences which are larger than the observed difference: p-value = 0.2.

Previously, we specified that we would use \(\alpha = 0.01.\) Since the p-value is larger than \(\alpha,\) we do not reject the null hypothesis. That is, the data do not convincingly show that one exam version is more difficult than the other, and the teacher should not be convinced that they should add points to the Version B exam scores.
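The doubling step used to obtain the p-value can be written as a small helper. This is a sketch under stated assumptions: the function name `two_sided_p_value` is ours, it assumes the observed difference lies in the upper tail, and `toy_null` is an artificial list built so that 10% of its values exceed 3.14 (it is not the simulated distribution from Figure 14.4).

```python
def two_sided_p_value(null_diffs, observed):
    """Double the proportion of simulated differences that are at least
    as large as the observed (positive) difference, capped at 1."""
    n_extreme = sum(1 for d in null_diffs if d >= observed)
    return min(1.0, 2 * n_extreme / len(null_diffs))


# Artificial null distribution: 100 of 1,000 values lie above 3.14.
toy_null = [4.0] * 100 + [0.0] * 900
p_value = two_sided_p_value(toy_null, 3.14)
print(p_value)

# Compare with the significance level chosen earlier in the section.
alpha = 0.01
print("reject H0" if p_value < alpha else "do not reject H0")
```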

The large p-value and consistency of \(\bar{x}_A - \bar{x}_B=3.1\) with the randomized differences leads us to not reject the null hypothesis. Said differently, there is no evidence to think that one of the tests is easier than the other. One might be inclined to conclude that the tests have the same level of difficulty, but that conclusion would be wrong. The hypothesis testing framework is set up only to reject a null claim; it is not set up to validate a null claim. As we concluded, the data are consistent with exams A and B being equally difficult, but the data are also consistent with exam A being 3.1 points “easier” than exam B. The data are not able to adjudicate on whether the exams are equally hard or whether one of them is slightly easier. Indeed, conclusions where the null hypothesis is not rejected often seem unsatisfactory. However, in this case, the teacher and class are probably all relieved that there is no evidence to demonstrate that one of the exams is more difficult than the other.

14.2 Bootstrap confidence interval for the difference in means

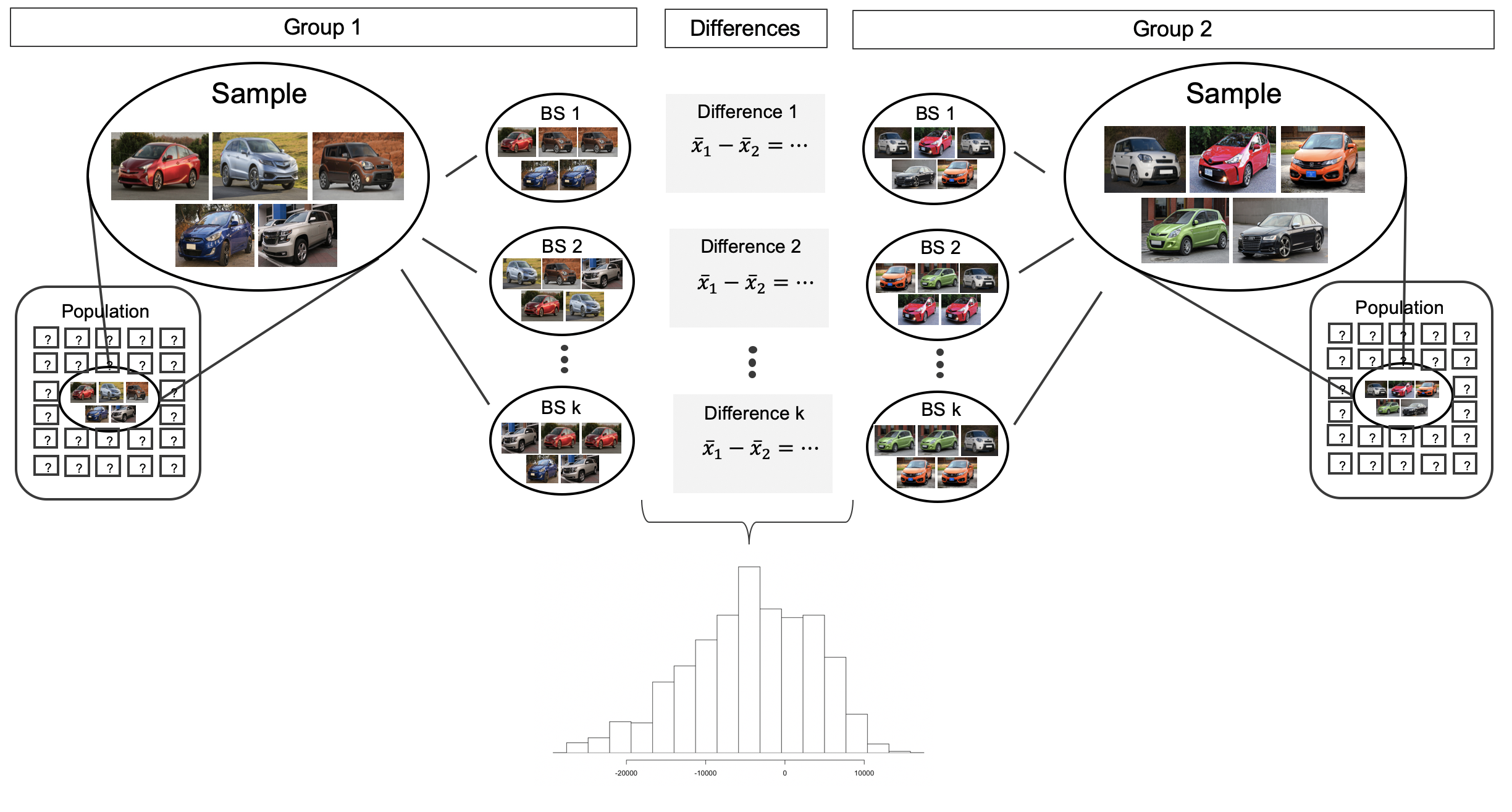

Before providing a full example working through a bootstrap analysis on actual data, we return to the fictional Awesome Auto example as a way to visualize the two sample bootstrap setting. Consider an expanded scenario where the research question centers on comparing the average price of a car at one Awesome Auto franchise (Group 1) to the average price of a car at a different Awesome Auto franchise (Group 2). The process of bootstrapping can be applied to each Group separately, and the differences of means recalculated each time. Figure 14.5 visually describes the bootstrap process when interest is in a statistic computed on two separate samples. The analysis proceeds as in the one sample case, but now the (single) statistic of interest is the difference in sample means. That is, a bootstrap resample is done on each of the groups separately, but the results are combined to have a single bootstrapped difference in means. Repetition will produce \(k\) bootstrapped differences in means, and the histogram will describe the natural sampling variability associated with the difference in means.

Figure 14.5: For the two group comparison, the bootstrap resampling is done separately on each group, but the statistic is calculated as a difference. The set of k differences is then analyzed as the statistic of interest with conclusions drawn on the parameter of interest.

14.2.1 Observed data

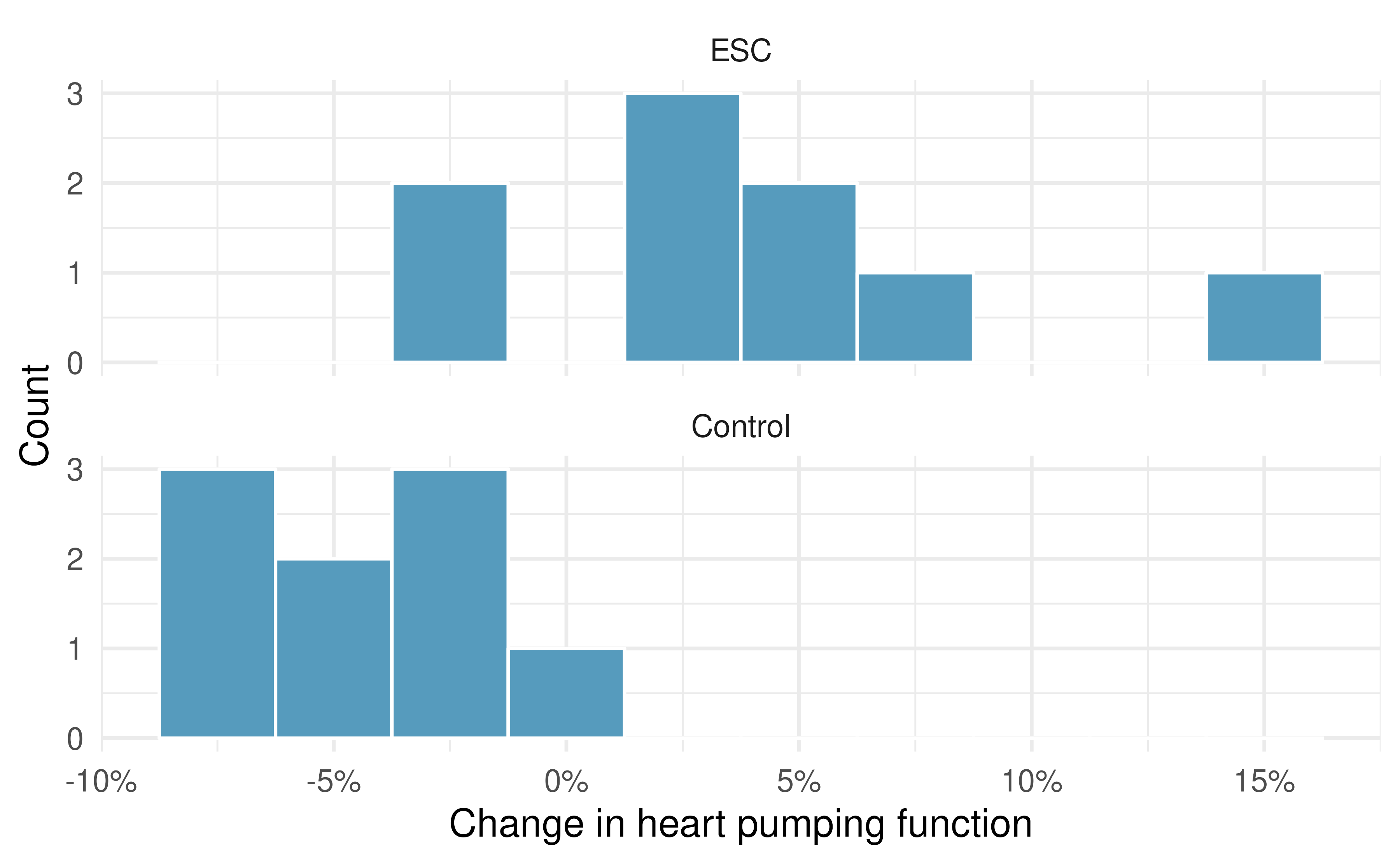

Does treatment using embryonic stem cells (ESCs) help improve heart function following a heart attack? Table 14.2 contains summary statistics for an experiment to test ESCs in sheep that had a heart attack. Each of these sheep was randomly assigned to the ESC or control group, and the change in their hearts’ pumping capacity was measured in the study. (Ménard et al. 2005) Figure 14.8 provides histograms of the two datasets. A positive value corresponds to increased pumping capacity, which generally suggests a stronger recovery. Our goal will be to identify a 95% confidence interval for the effect of ESCs on the change in heart pumping capacity relative to the control group.

| Group | n | Mean | SD |
|---|---|---|---|
| ESC | 9 | 3.50 | 5.17 |
| Control | 9 | -4.33 | 2.76 |

The point estimate of the difference in the heart pumping variable is straightforward to find: it is the difference in the sample means.

\[\bar{x}_{esc} - \bar{x}_{control}\ =\ 3.50 - (-4.33)\ =\ 7.83\]

14.2.2 Variability of the statistic

As we saw in Section 12.2, we will use bootstrapping to estimate the variability associated with the difference in sample means when taking repeated samples. In a method akin to two proportions, a separate sample is taken with replacement from each group (here ESCs and control), the sample means are calculated, and their difference is taken. The entire process is repeated multiple times to produce a bootstrap distribution of the difference in sample means (without the null hypothesis assumption).

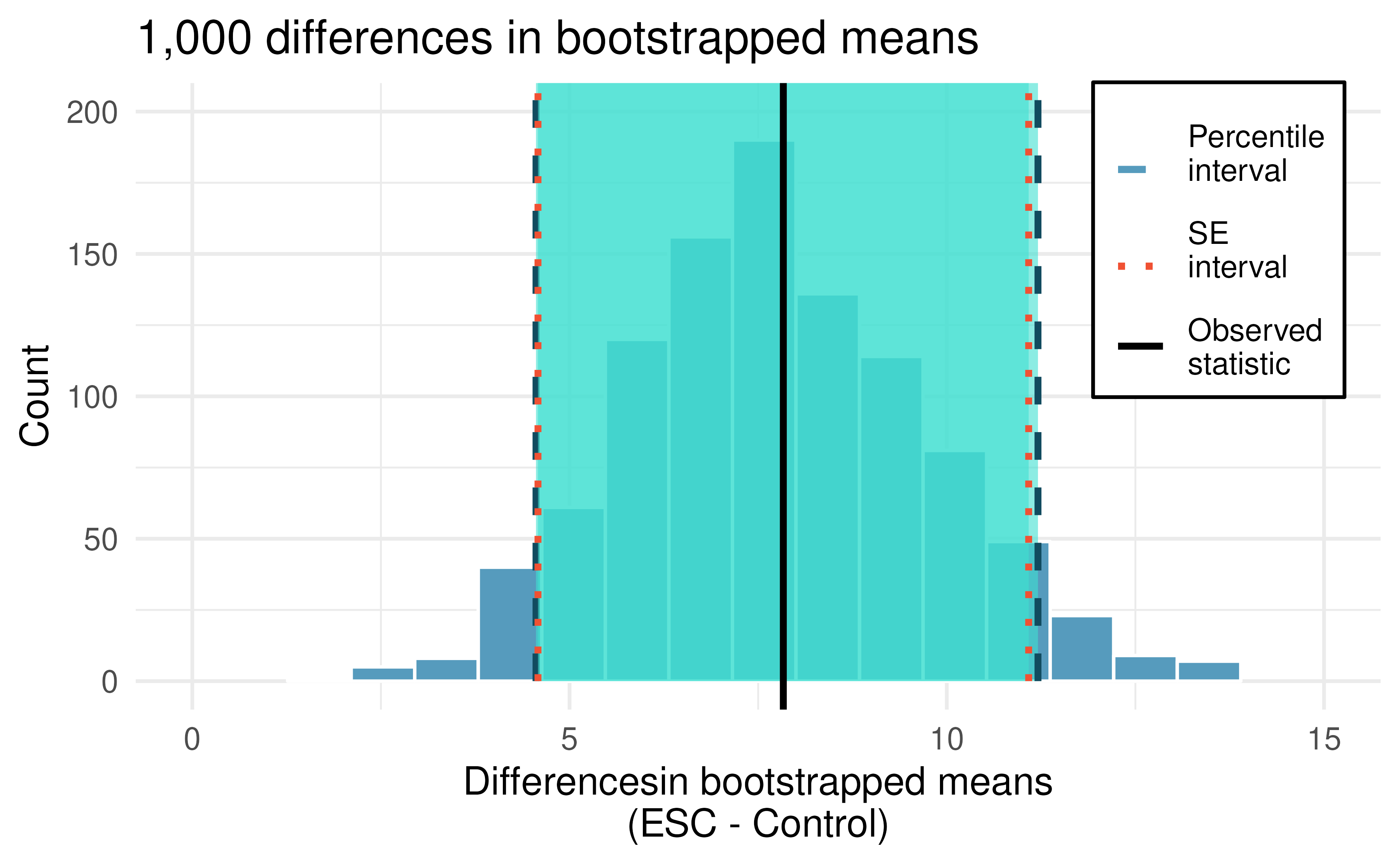

Figure 14.6 displays the variability of the differences in means with the 90% percentile and SE confidence intervals superimposed.

Figure 14.6: Histogram of differences in means after 1,000 bootstrap samples from each of the two groups. The observed difference is plotted as a black vertical line at 7.83. The blue dashed and red dotted lines provide the bootstrap percentile and bootstrap SE confidence intervals, respectively, for the difference in true population means.
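The two-group bootstrap and the two interval types shown in Figure 14.6 can be sketched as follows. Several pieces here are assumptions made for illustration: the sheep measurements are not listed in the text, so `esc` and `control` are invented values; the function names are ours; and the multiplier 1.645 for the SE interval is the usual normal critical value for 90% confidence, which may not be exactly how the figure was built.

```python
import random
from statistics import mean, stdev


def bootstrap_diffs(group_1, group_2, reps=1000, seed=0):
    """Resample each group separately (with replacement) and record
    the difference in resampled means."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(reps):
        resample_1 = rng.choices(group_1, k=len(group_1))
        resample_2 = rng.choices(group_2, k=len(group_2))
        diffs.append(mean(resample_1) - mean(resample_2))
    return diffs


def percentile_interval(diffs, level=0.90):
    """Cut off equal shares of the sorted bootstrap differences in each tail."""
    ordered = sorted(diffs)
    n_tail = round((1 - level) / 2 * len(ordered))
    return ordered[n_tail], ordered[len(ordered) - n_tail - 1]


def se_interval(observed, diffs, multiplier):
    """Observed difference plus or minus multiplier times the bootstrap SE."""
    se = stdev(diffs)
    return observed - multiplier * se, observed + multiplier * se


# Invented measurements for illustration only (NOT the study data).
esc = [-5.0, -1.5, 1.0, 2.5, 3.0, 4.5, 6.0, 9.0, 12.0]
control = [-8.0, -7.0, -6.0, -5.5, -4.0, -3.5, -3.0, -1.5, -0.5]

observed = mean(esc) - mean(control)
boot = bootstrap_diffs(esc, control)
pct_low, pct_high = percentile_interval(boot, level=0.90)
se_low, se_high = se_interval(observed, boot, multiplier=1.645)
print(pct_low, pct_high)
print(se_low, se_high)
```

No null hypothesis is imposed anywhere in this sketch: each group is resampled from itself, so the bootstrap differences are centered near the observed difference rather than near zero.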

Using the histogram of bootstrapped difference in means, estimate the standard error of the differences in sample means, \(\bar{x}_{ESC} - \bar{x}_{Control}.\)

Choose one of the bootstrap confidence intervals for the true difference in average pumping capacity, \(\mu_{ESC} - \mu_{Control}.\) Does the interval show that there is a difference across the two treatments?

Because neither of the 90% intervals (either percentile or SE) above overlaps zero (note that zero is never one of the bootstrapped differences so 95% and 99% intervals would have given the same conclusion!), we conclude that the ESC treatment is substantially better with respect to heart pumping capacity than the control.

Because the study is a randomized controlled experiment, we can conclude that it is the treatment (ESC) which is causing the change in pumping capacity.

14.3 Mathematical model for testing the difference in means

Every year, the US releases to the public a large data set containing information on births recorded in the country. This data set has been of interest to medical researchers who are studying the relation between habits and practices of expectant mothers and the birth of their children. We will work with a random sample of 1,000 cases from the data set released in 2014.

14.3.1 Observed data

Four cases from this dataset are represented in Table 14.3.

We are particularly interested in two variables: weight and habit.

The weight variable represents the weights of the newborns and the habit variable describes which mothers smoked during pregnancy.

| fage | mage | weeks | visits | gained | weight | sex | habit |
|---|---|---|---|---|---|---|---|
| 34 | 34 | 37 | 14 | 28 | 6.96 | male | nonsmoker |
| 36 | 31 | 41 | 12 | 41 | 8.86 | female | nonsmoker |
| 37 | 36 | 37 | 10 | 28 | 7.51 | female | nonsmoker |
| | 16 | 38 | | 29 | 6.19 | male | nonsmoker |

We would like to know, is there convincing evidence that newborns from mothers who smoke have a different average birth weight than newborns from mothers who don’t smoke? We will use data from this sample to try to answer this question.

Set up appropriate hypotheses to evaluate whether there is a relationship between a mother smoking and average birth weight.

The null hypothesis represents the case of no difference between the groups.

- \(H_0:\) There is no difference in average birth weight for newborns from mothers who did and did not smoke. In statistical notation: \(\mu_{n} - \mu_{s} = 0,\) where \(\mu_{n}\) represents non-smoking mothers and \(\mu_s\) represents mothers who smoked.

- \(H_A:\) There is some difference in average newborn weights from mothers who did and did not smoke \((\mu_{n} - \mu_{s} \neq 0).\)

Table 14.4 displays sample statistics from the data. We can see that the average birth weight of babies born to smoker moms is lower than those born to nonsmoker moms.

| Habit | n | Mean | SD |
|---|---|---|---|
| nonsmoker | 867 | 7.27 | 1.23 |
| smoker | 114 | 6.68 | 1.60 |

14.3.2 Variability of the statistic

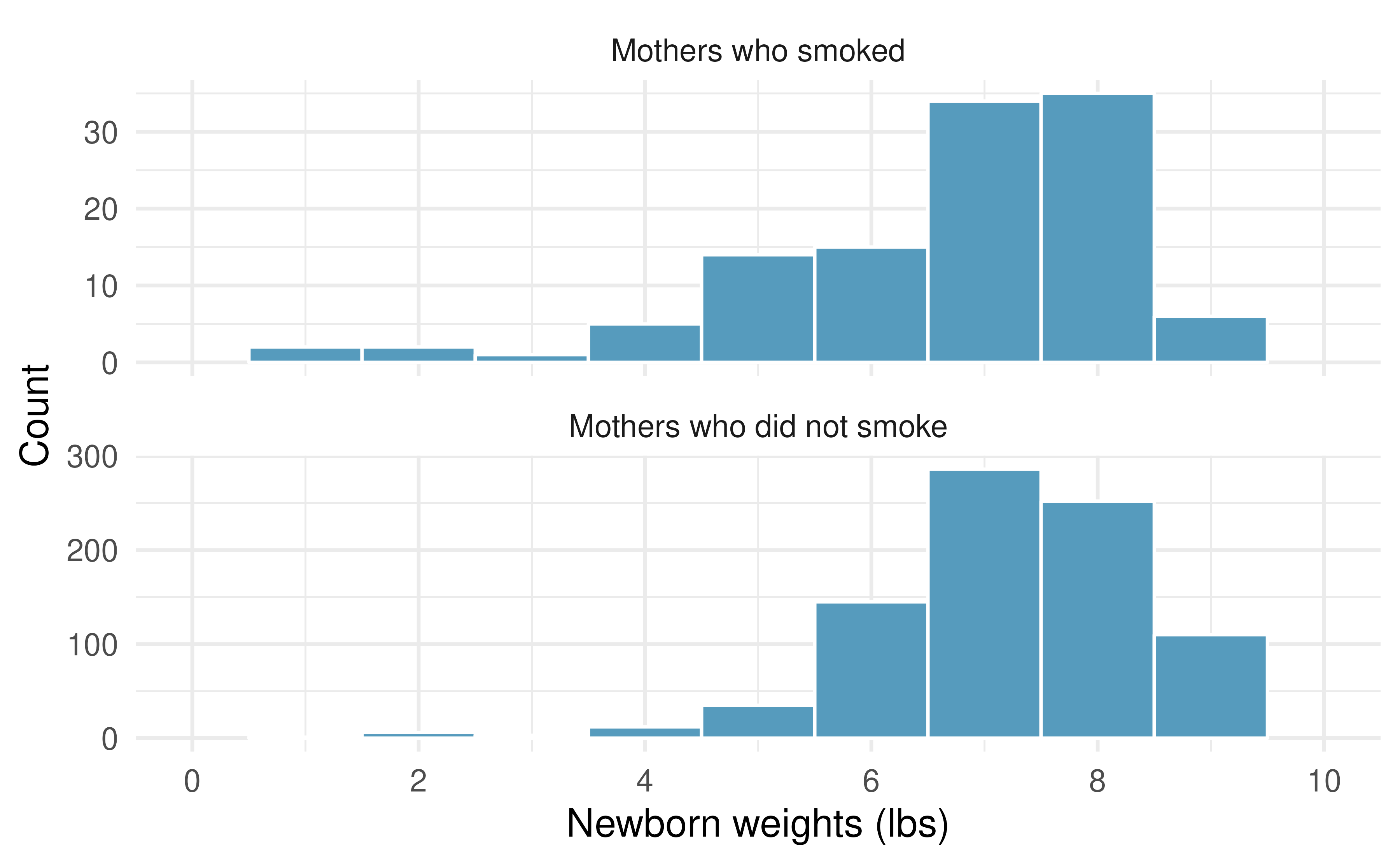

There are three conditions we need to check before we can model the difference in sample means using the \(t\)-distribution. The first two are the independence and normality conditions, like we had to check before using the \(t\)-distribution to model a single sample mean. The third condition is new and relates to the relative degree of variability in the two samples.

- Because the data come from a simple random sample, the observations are independent, both within and between samples.

- Both groups have a reasonably large sample size (over 30 observations) and we don’t find any particularly extreme outliers when we inspect the data in Figure 14.7.

- Both groups have similar standard deviations, so the amount of variability within each group is similar.

Since all three conditions are satisfied, the difference in sample means may be modeled using a \(t\)-distribution.

Figure 14.7: The top panel represents birth weights for infants whose mothers smoked during pregnancy. The bottom panel represents the birth weights for infants whose mothers did not smoke during pregnancy.

14.3.3 Observed statistic vs. null statistics

The test statistic for comparing two means is a T.

The T score is a ratio of how the groups differ as compared to how the observations within groups vary.

\[T = \frac{(\bar{x}_1 - \bar{x}_2) - 0}{s_{\textit{pool}} \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}}\] where \(s_{\textit{pool}}\) is the pooled standard deviation between the two groups:

\[ s_{\textit{pool}} = \sqrt{\frac{(n_1 - 1) s^2_1 + (n_2 - 1) s^2_2}{n_1 + n_2 - 2}} \]

When the null hypothesis is true and the conditions are met, T has a t-distribution with \(df = n_1 + n_2 - 2\).

Conditions:

- Independent observations within and between groups.

- Large samples and no extreme outliers.

- Similar variability in both groups.

Like the normality condition, “similar variability” is rather vague, so we introduce a rule of thumb.

General rule for performing the similar variability check.

As a rule of thumb for whether two samples have similar variability, calculate their sample standard deviations (\(s_1\) and \(s_2\)) and then find their ratio \(\frac{s_1}{s_2}\). If the ratio is between 0.5 and 2, the two samples can be said to have similar amounts of variability.
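The rule of thumb translates directly into a one-line check. The helper name `similar_variability` is ours, and treating the endpoints 0.5 and 2 as acceptable is an assumption, since the text does not say whether the bounds are inclusive.

```python
def similar_variability(s1, s2, low=0.5, high=2.0):
    """Return True when the ratio of sample standard deviations s1 / s2
    falls between `low` and `high` (endpoints included by assumption)."""
    ratio = s1 / s2
    return low <= ratio <= high


# Standard deviations from Table 14.4 (nonsmoker vs. smoker birth weights).
print(similar_variability(1.23, 1.60))

# Standard deviations from Table 14.2 (ESC vs. control sheep).
print(similar_variability(5.17, 2.76))
```

Because the interval from 0.5 to 2 is symmetric under taking reciprocals, the order in which the two groups are supplied does not change the answer.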

Compute the standard error of the point estimate for the average difference between the weights of babies born to nonsmoker and smoker mothers.

Complete the hypothesis test started in the previous Example and Guided Practice on the births14 dataset and research question.

Use a significance level of \(\alpha=0.05.\) For reference, \(\bar{x}_{n} - \bar{x}_{s} = 0.593,\) \(SE = 0.128,\) and the sample sizes were \(n_n = 867\) and \(n_s = 114\).

We can find the test statistic for this test using the previous information:

\[T = \frac{\ 0.593 - 0\ }{0.128} = 4.647\]

We find the single tail area using software. The degrees of freedom are \(df = n_n + n_s - 2 = 867 + 114 - 2 = 979\). The one tail area is roughly 0.0000019; doubling this value gives the two-tail area and p-value, 0.0000038.

The p-value is much smaller than the significance value, 0.05, so we reject the null hypothesis. The data provide convincing evidence that the difference in the average weights of babies born to mothers who smoked during pregnancy and those who did not is not a result of chance alone.
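The calculation above can be reproduced from the summary statistics in Table 14.4. The sketch below uses only the Python standard library, so the tail area is approximated by numerically integrating the \(t\) density with Simpson's rule; that numerical shortcut and all function names are our own choices, not the software used in the text. Because the inputs are rounded summary statistics, the results may differ from the reported values in the last digit or two.

```python
import math


def pooled_sd(n1, s1, n2, s2):
    """Pooled standard deviation of two groups."""
    return math.sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))


def pooled_se(n1, s1, n2, s2):
    """Standard error of the difference in means using the pooled SD."""
    return pooled_sd(n1, s1, n2, s2) * math.sqrt(1 / n1 + 1 / n2)


def t_upper_tail(t, df, width=60.0, steps=6000):
    """Approximate P(T > t) for t >= 0 by Simpson's rule on the t density.
    Intended for moderate or large df, where the tail beyond t + width
    is negligible."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))

    def pdf(x):
        return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

    h = width / steps
    total = pdf(t) + pdf(t + width)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * pdf(t + i * h)
    return total * h / 3


n_n, s_n = 867, 1.23   # nonsmoker group
n_s, s_s = 114, 1.60   # smoker group
diff = 0.593           # difference in sample means reported in the text

se = pooled_se(n_n, s_n, n_s, s_s)
t_stat = (diff - 0) / se
df = n_n + n_s - 2
p_value = 2 * t_upper_tail(t_stat, df)
print(se, t_stat, df, p_value)
```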

This result is likely not surprising. We all know that smoking is bad for you and you’ve probably also heard that smoking during pregnancy is not just bad for the mother but also for the baby as well. In fact, some in the tobacco industry actually had the audacity to tout that as a benefit of smoking:

It’s true. The babies born from women who smoke are smaller, but they’re just as healthy as the babies born from women who do not smoke. And some women would prefer having smaller babies. - Joseph Cullman, Philip Morris’ Chairman of the Board on CBS’ Face the Nation, Jan 3, 1971

Furthermore, health differences between babies born to mothers who smoke and those who do not are not limited to weight differences.

14.4 Mathematical model for estimating the difference in means

14.4.1 Observed data

As with hypothesis testing, for the question of whether we can model the difference using a \(t\)-distribution, we’ll need to check some conditions. Like the 2-proportion cases, we will require a more robust version of independence so we are confident the two groups are also independent. Second, we also check for normality in each group separately, which in practice is a check for outliers. Finally, we check whether the two groups have similar amounts of variability.

Using the \(t\)-distribution for a difference in means.

The \(t\)-distribution can be used for inference when working with the standardized difference of two means if

- Independence (extended). The data are independent within and between the two groups, e.g., the data come from independent random samples or from a randomized experiment.

- Normality. We check the outliers for each group separately.

- Similar variability. The samples should have standard deviations that are not too different.

The standard error may be computed as \[ SE = s_{\textit{pool}} \sqrt{\frac{1}{n_1} + \frac{1}{n_2}} \] where \(s_{\textit{pool}}\) is the pooled standard deviation between the two groups:

\[ s_{\textit{pool}} = \sqrt{\frac{(n_1 - 1) s^2_1 + (n_2 - 1) s^2_2}{n_1 + n_2 - 2}} \]

The degrees of freedom are given by \(df = n_1 + n_2 - 2\).

Recall that the margin of error is defined by the standard error. The margin of error for \(\bar{x}_1 - \bar{x}_2\) can be directly obtained from \(SE(\bar{x}_1 - \bar{x}_2).\)

Margin of error for \(\bar{x}_1 - \bar{x}_2.\)

The margin of error is \(t^\star_{df} \times s_{\textit{pool}} \sqrt{\frac{1}{n_1} + \frac{1}{n_2}}\) where \(t^\star_{df}\) is calculated from a specified percentile on the t-distribution with df degrees of freedom.

14.4.2 Variability of the statistic

Can the \(t\)-distribution be used to make inference using the point estimate, \(\bar{x}_{ESC} - \bar{x}_{control} = 7.83\) in the example above?

First, we check for independence. Because the sheep were randomized into the groups, independence within and between groups is satisfied.

Figure 14.8 does not reveal any clear outliers in either group.

Although the ESC group has more variability, it is not so much more that we violate the condition of similar variability (\(s_{ESC} / s_{control} = 5.17 / 2.76 = 1.87\)).

With all conditions met, we can use the \(t\)-distribution to model the difference of sample means.

Figure 14.8: Histograms for the difference in heart pumping function after a heart attack for both the treatment group (ESC, which received the embryonic stem cell treatment) and the control group (which did not receive the treatment).

Calculate a 95% confidence interval for the effect of ESCs on the change in heart pumping capacity of sheep after they’ve suffered a heart attack.

We already computed the point estimate of the difference in means as \(\bar{x}_{esc} - \bar{x}_{control} = 7.83\).

The pooled standard deviation is \[ \begin{aligned} s_{\textit{pool}} & = \sqrt{\frac{(n_1 - 1) s^2_1 + (n_2 - 1) s^2_2}{n_1 + n_2 - 2}} \\ & = \sqrt{\frac{(9 - 1) 5.17^2 + (9 - 1) 2.76^2}{9 + 9 - 2}} \\ & = \sqrt{\frac{274.8}{16}} \\ & = 4.14 \end{aligned} \] The standard error is \(SE = s_{\textit{pool}} \sqrt{1 / n_1 + 1 / n_2} = 4.14 \sqrt{1 / 9 + 1 / 9} = 1.95\).

Using \(df = n_1 + n_2 - 2 = 9 + 9 - 2 = 16,\) we can identify the critical value of \(t^{\star}_{16} = 2.12\) for a 95% confidence interval. Finally, we can enter the values into the confidence interval formula:

\[ \begin{aligned} \text{point estimate} \ &\pm\ t^{\star} \times SE \\ 7.83 \ &\pm\ 2.12\times 1.95 \\ (3.70 \ &, \ 11.96) \end{aligned} \]

We are 95% confident that the heart pumping function in sheep that received embryonic stem cells is between 3.70% and 11.96% higher than for sheep that did not receive the stem cell treatment.
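The interval can also be computed directly from the summary statistics in Table 14.2. In this sketch the critical value \(t^{\star}_{16} = 2.12\) is taken from the text rather than computed, and the function name `pooled_t_interval` is ours. The code keeps full precision in the intermediate steps, so its endpoints can differ from the hand calculation (which rounds \(s_{\textit{pool}}\) and \(SE\)) by about 0.01.

```python
import math


def pooled_t_interval(mean1, s1, n1, mean2, s2, n2, t_star):
    """Confidence interval for mu_1 - mu_2 using the pooled standard
    deviation; `t_star` is the critical value for df = n1 + n2 - 2."""
    s_pool = math.sqrt(((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2))
    se = s_pool * math.sqrt(1 / n1 + 1 / n2)
    estimate = mean1 - mean2
    margin = t_star * se
    return estimate - margin, estimate + margin


# ESC group first, control group second; t_star is quoted from the text.
lower, upper = pooled_t_interval(3.50, 5.17, 9, -4.33, 2.76, 9, t_star=2.12)
print(round(lower, 2), round(upper, 2))
```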

14.5 Chapter review

14.5.1 Summary

In this chapter we extended the single mean inferential methods to questions of differences in means. You may have seen parallels from the chapters that extended a single proportion (Chapter 11) to differences in proportions (Chapter 12). When considering differences in sample means (indeed, when considering many quantitative statistics), we use the t-distribution to describe the sampling distribution of the T score (the standardized difference in sample means). Ideas of confidence level and type of error which might occur from a hypothesis test conclusion are similar to those seen in other chapters (see Section 9).

14.5.2 Terms

We introduced the following terms in the chapter. If you’re not sure what some of these terms mean, we recommend you go back in the text and review their definitions. We are purposefully presenting them in alphabetical order, instead of in order of appearance, so they will be a little more challenging to locate. However you should be able to easily spot them as bolded text.

| difference in means | SE difference in means | t-test |
| point estimate | T score | |
| pooled standard deviation | t-CI | |

14.6 Exercises

Answers to odd numbered exercises can be found in Appendix A.13.


Experimental baker. A baker working on perfecting their bagel recipe is experimenting with active dry (AD) and instant (I) yeast. They bake a dozen bagels with each type of yeast and score each bagel on a scale of 1 to 10 on how well the bagels rise. They come up with the following set of hypotheses for evaluating whether there is a difference in the average rise of bagels baked with active dry and instant yeast. What is wrong with the hypotheses as stated?

\[H_0: \bar{x}_{AD} \leq \bar{x}_{I} \quad \quad H_A: \bar{x}_{AD} > \bar{x}_{I}\]

Fill in the blanks. We use a ___ to evaluate if data provide convincing evidence of a difference between two population means and we use a ___ to estimate this difference.


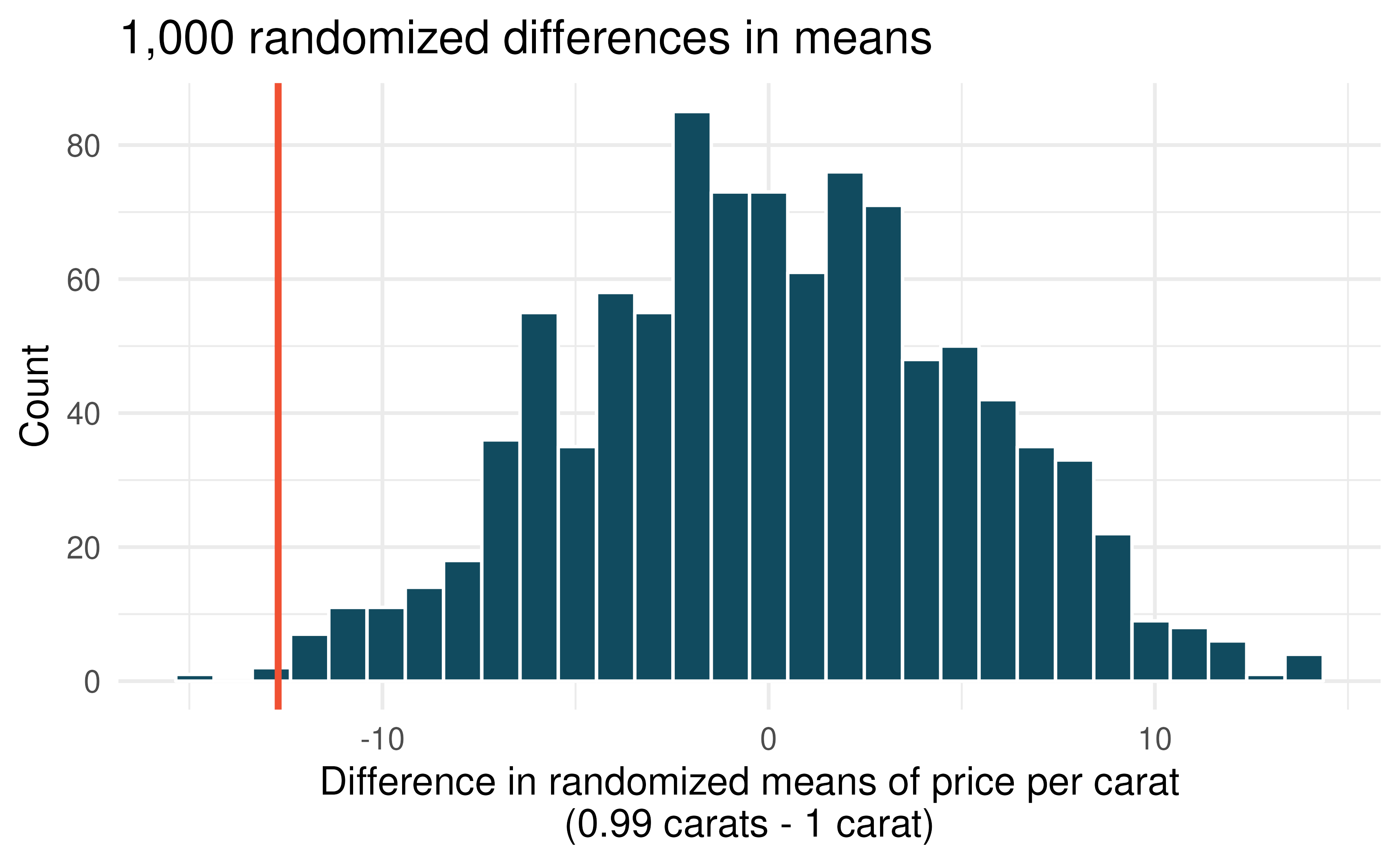

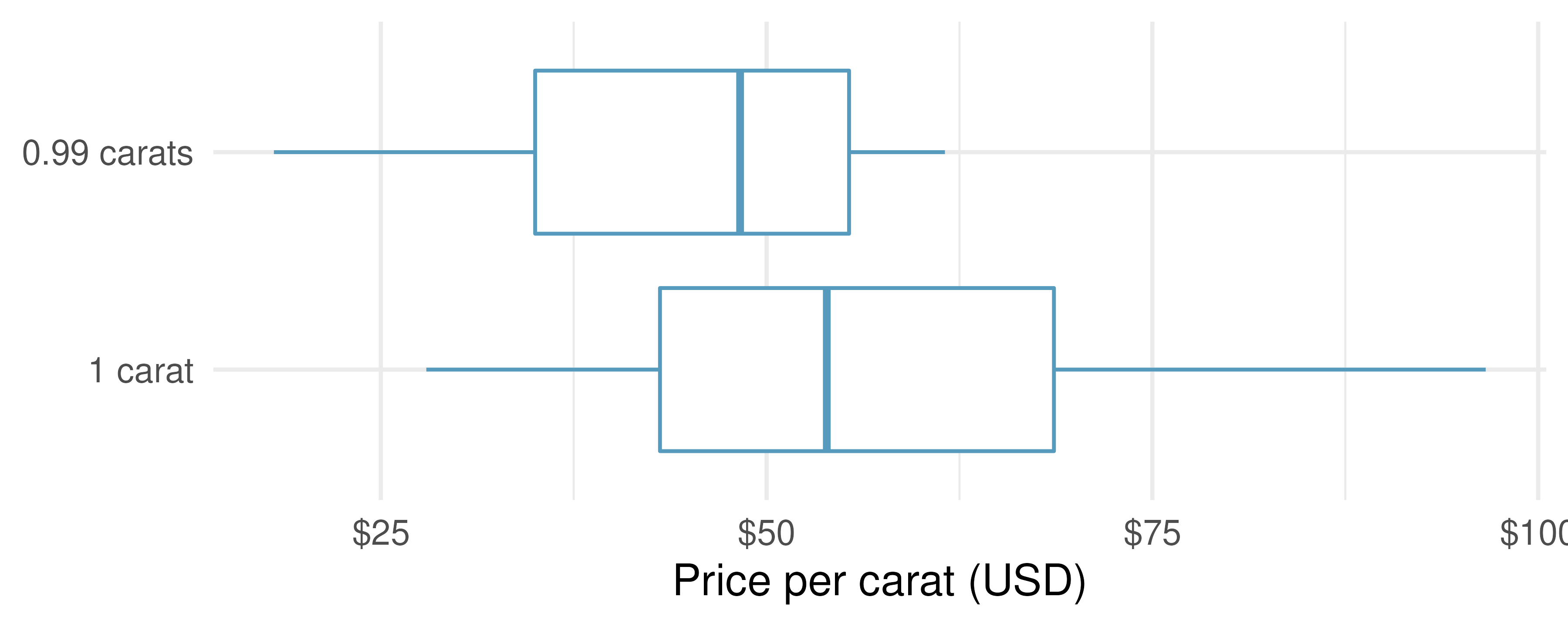

Diamonds, randomization test. The prices of diamonds go up as the carat weight increases, but the increase is not smooth. For example, the difference between the size of a 0.99 carat diamond and a 1 carat diamond is undetectable to the naked human eye, but the price of a 1 carat diamond tends to be much higher than the price of a 0.99 carat diamond. In this question we use two random samples of diamonds, 0.99 carats and 1 carat, each sample of size 23, and randomize the carat weight to the price values in order to compare the average prices of the diamonds to a null distribution. In order to be able to compare equivalent units, we first divide the price for each diamond by 100 times its weight in carats. That is, for a 0.99 carat diamond, we divide the price by 99. For a 1 carat diamond, we divide the price by 100. The randomization distribution (with 1,000 repetitions) below describes the null distribution of the difference in sample means (of price per carat) if there really was no difference in the population from which these diamonds came. (Wickham 2016)

Using the randomization distribution of the difference in average price per carat (1,000 randomizations were run), conduct a hypothesis test to evaluate if there is a difference between the prices per carat of diamonds that weigh 0.99 carats and diamonds that weigh 1 carat. Make sure to state your hypotheses clearly and interpret your results in context of the data. (Wickham 2016)


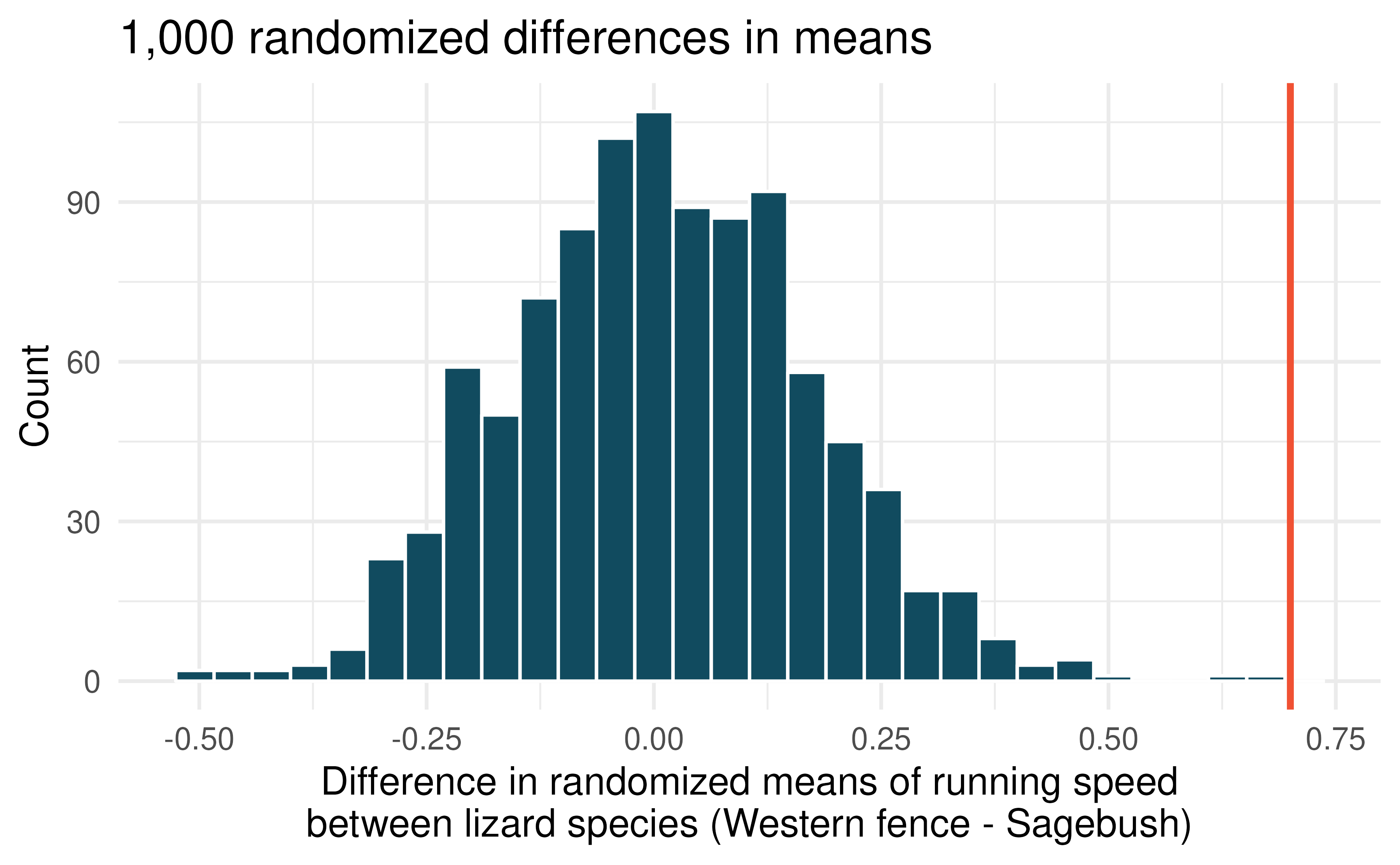

Lizards running, randomization test. In order to assess physiological characteristics of common lizards, data on top speeds (in m/sec) were measured on a laboratory race track for two species of lizards: Western fence lizard (Sceloporus occidentalis) and Sagebrush lizard (Sceloporus graciosus). The original observed difference in lizard speeds is \(\bar{x}_{Western fence} - \bar{x}_{Sagebrush} = 0.7 \mbox{m/sec}.\) The histogram below shows the distribution of average differences when speed has been randomly allocated across lizard species 1,000 times. (Adolph 1987)

Using the randomization distribution, conduct a hypothesis test to evaluate if there is a difference between the average speed of the Western fence lizard as compared to the Sagebrush lizard. Make sure to state your hypotheses clearly and interpret your results in context of the data.


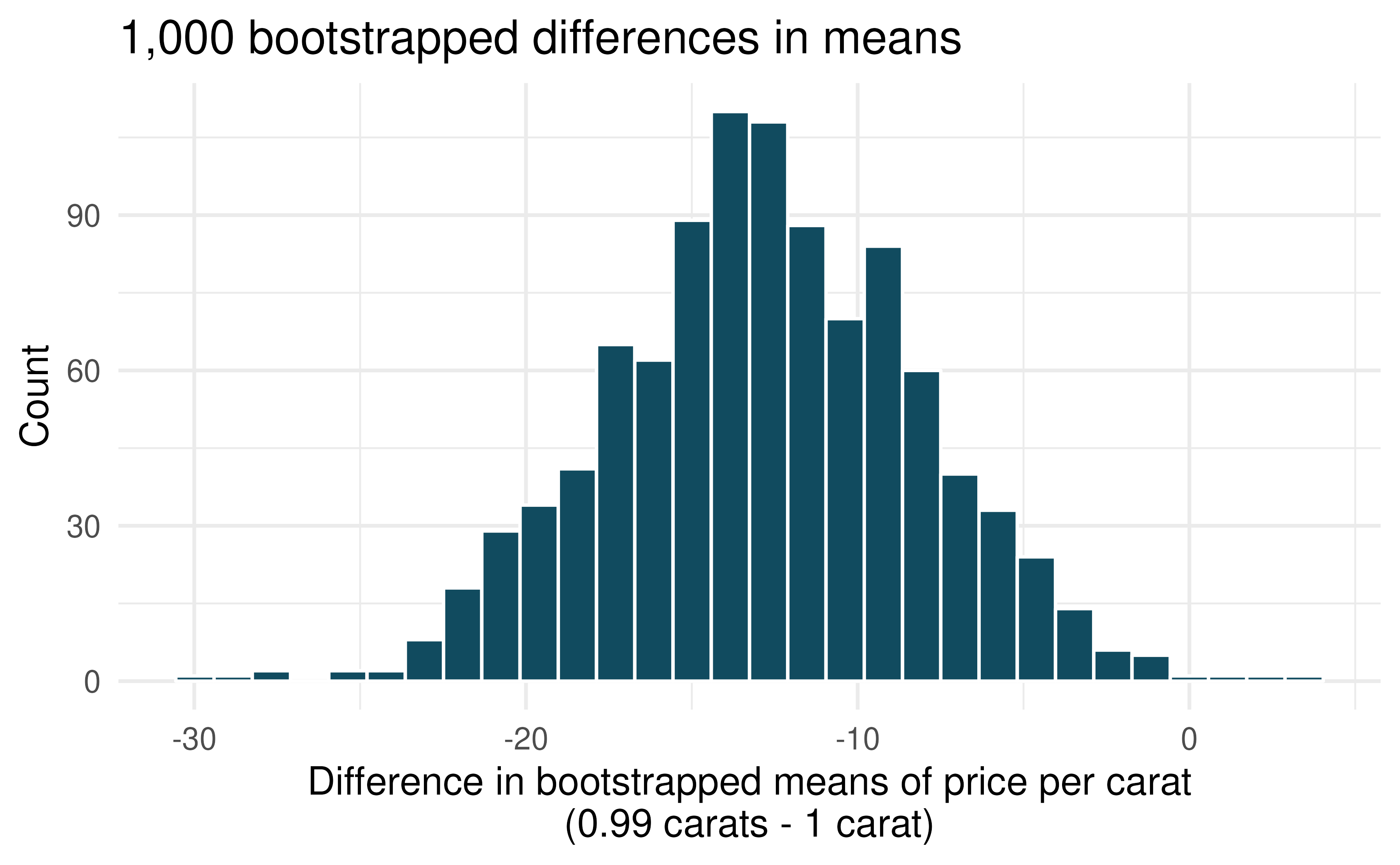

Diamonds, bootstrap interval. We have data on two random samples of diamonds: one with diamonds that weigh 0.99 carats and one with diamonds that weigh 1 carat. Each sample has 23 diamonds. Provided below is a histogram of bootstrap differences in means of price per carat of diamonds that weigh 0.99 carats and diamonds that weigh 1 carat. (Wickham 2016)

Using the bootstrap distribution, create a (rough) 95% bootstrap percentile confidence interval for the true population difference in prices per carat of diamonds that weigh 0.99 carats and 1 carat.

Using the bootstrap distribution, create a (rough) 95% bootstrap SE confidence interval for the true population difference in prices per carat of diamonds that weigh 0.99 carats and 1 carat.


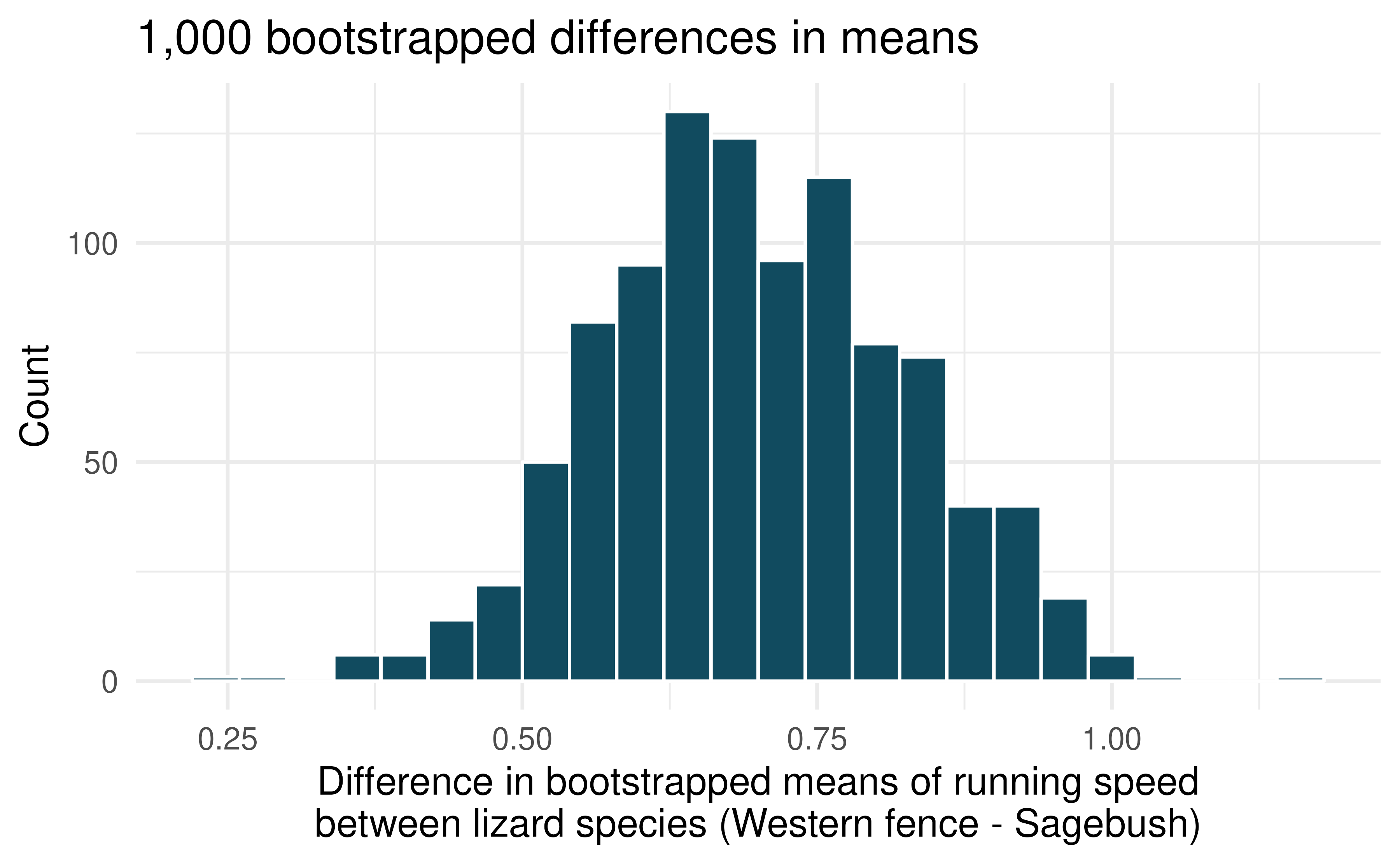

Lizards running, bootstrap interval. We have data on top speeds (in m/sec) measured on a laboratory race track for two species of lizards: Western fence lizard (Sceloporus occidentalis) and Sagebrush lizard (Sceloporus graciosus). The bootstrap distribution below describes the variability of difference in means captured from 1,000 bootstrap samples of the lizard data. (Adolph 1987)

Using the bootstrap distribution, create a (rough) 90% percentile bootstrap confidence interval for the true population difference in average top speed for Western fence lizard as compared with Sagebrush lizard.

Using the bootstrap distribution, create a (rough) 90% bootstrap SE confidence interval for the true population difference in average top speed for Western fence lizard as compared with Sagebrush lizard.

Weight loss. You are reading an article in which the researchers have created a 95% confidence interval for the difference in average weight loss for two diets. They are 95% confident that the true difference in average weight loss over 6 months for the two diets is somewhere between (1 lb, 25 lbs). The authors claim that, “therefore diet A (\(\bar{x}_A\) = 20 lbs average loss) results in a much larger average weight loss as compared to diet B (\(\bar{x}_B\) = 7 lbs average loss).” Comment on the authors’ claim.

Possible randomized means. Data were collected from two groups (A and B). There were three measurements taken on Group A and two measurements taken on Group B.

| Group | Measurement 1 | Measurement 2 | Measurement 3 |
|---|---|---|---|
| A | 1 | 15 | 5 |
| B | 7 | 3 | |

If the data are (repeatedly) randomly allocated across the two conditions, provide the following: (1) the values which are assigned to group A, (2) the values which are assigned to group B, and (3) the difference in averages \((\bar{x}_A - \bar{x}_B)\) for each of the following:

When the randomized difference in averages is as big as possible.

When the randomized difference in averages is as small as possible (a negative number that is large in magnitude).

When the randomized difference in averages is as close to zero as possible.

When the observed values are randomly assigned to the two groups, to which of the previous parts would you expect the difference in means to fall closest? Explain your reasoning.

Diamonds, mathematical test. We have data on two random samples of diamonds: one with diamonds that weigh 0.99 carats and one with diamonds that weigh 1 carat. Each sample has 23 diamonds. Sample statistics for the price per carat of diamonds in each sample are provided below. Conduct a hypothesis test using a mathematical model to evaluate if there is a difference between the prices per carat of diamonds that weigh 0.99 carats and diamonds that weigh 1 carat. Make sure to state your hypotheses clearly, check relevant conditions, and interpret your results in context of the data. (Wickham 2016)

| | Mean | SD | n |
|---|---|---|---|
| 0.99 carats | $44.51 | $13.32 | 23 |
| 1 carat | $57.20 | $18.19 | 23 |

A/B testing. A/B testing is a user experience research methodology where two variants of a page are shown to users at random. A company wants to evaluate whether users will spend more time, on average, on Page A or Page B using an A/B test. Two user experience designers at the company, Lucie and Müge, are tasked with conducting the analysis of the data collected. They agree on how the null hypothesis should be set: on average, users spend the same amount of time on Page A and Page B. Lucie believes that Page B will provide a better experience for users and hence wants to use a one-tailed test, while Müge believes that a two-tailed test would be a better choice. Which designer do you agree with, and why?


Diamonds, mathematical interval. We have data on two random samples of diamonds: one with diamonds that weigh 0.99 carats and one with diamonds that weigh 1 carat. Each sample has 23 diamonds. Sample statistics for the price per carat of diamonds in each sample are provided below. Assuming that the conditions for conducting inference using a mathematical model are satisfied, construct a 95% confidence interval for the true population difference in prices per carat of diamonds that weigh 0.99 carats and 1 carat. (Wickham 2016)

| | Mean | SD | n |
|---|---|---|---|
| 0.99 carats | $44.51 | $13.32 | 23 |
| 1 carat | $57.20 | $18.19 | 23 |
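A confidence interval of this kind has the form point estimate \(\pm\ t^{\star}_{df} \times SE\). The sketch below shows that structure with the summary statistics from the table. The function name `diff_means_ci` is hypothetical, and the critical value is passed in rather than computed: the value 2.07 used here is the approximate 95% cutoff for 22 degrees of freedom, which assumes the conservative choice \(df = \min(n_1 - 1, n_2 - 1)\).

```python
import math


def diff_means_ci(mean1, sd1, n1, mean2, sd2, n2, t_star):
    """Return (lower, upper) bounds of a t-interval for mu1 - mu2."""
    # Standard error of the difference in sample means.
    se = math.sqrt(sd1**2 / n1 + sd2**2 / n2)
    point_estimate = mean1 - mean2
    margin_of_error = t_star * se
    return point_estimate - margin_of_error, point_estimate + margin_of_error


# Assumption: df = min(23 - 1, 23 - 1) = 22, whose 95% cutoff is about 2.07.
T_STAR_95_DF22 = 2.07

# Group 1 = 0.99 carats, group 2 = 1 carat (price per carat, in dollars).
lower, upper = diff_means_ci(44.51, 13.32, 23, 57.20, 18.19, 23, T_STAR_95_DF22)
print(f"95% CI for mu_0.99 - mu_1: ({lower:.2f}, {upper:.2f})")
```

Passing `t_star` explicitly keeps the sketch free of external libraries and makes it easy to try other confidence levels by swapping in a different cutoff from a \(t\)-table.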

True / False: comparing means. Determine if the following statements are true or false, and explain your reasoning for statements you identify as false.

As the degrees of freedom increase, the \(t\)-distribution approaches the standard normal distribution.

If a 95% confidence interval for the difference between two population means contains 0, a 99% confidence interval calculated based on the same two samples will also contain 0.

If a 95% confidence interval for the difference between two population means contains 0, a 90% confidence interval calculated based on the same two samples will also contain 0.

Difference of means. Suppose we will collect two random samples from the following distributions. In each of the parts below, consider the sample means \(\bar{x}_1\) and \(\bar{x}_2\) that we might observe from these two samples.

| | Mean | Standard deviation | Sample size |
|---|---|---|---|
| Sample 1 | 15 | 20 | 50 |
| Sample 2 | 20 | 10 | 30 |

What are the associated mean and standard deviation of \(\bar{x}_1\)?

What are the associated mean and standard deviation of \(\bar{x}_2\)?

Calculate and interpret the mean and standard deviation associated with the difference in sample means for the two groups, \(\bar{x}_2 - \bar{x}_1\).

How are the standard deviations from parts (a), (b), and (c) related?
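The relationship these parts point toward can be checked numerically. The sketch below is an illustration under the assumption that the two samples are independent, so the variances of the sample means add. The helper names `sd_of_sample_mean` and `sd_of_difference` are hypothetical.

```python
import math


def sd_of_sample_mean(sigma, n):
    """Standard deviation (standard error) of a sample mean: sigma / sqrt(n)."""
    return sigma / math.sqrt(n)


def sd_of_difference(sigma1, n1, sigma2, n2):
    """Standard deviation of a difference in sample means, assuming independent samples."""
    return math.sqrt(sigma1**2 / n1 + sigma2**2 / n2)


# Values from the table: Sample 1 (mean 15, SD 20, n 50), Sample 2 (mean 20, SD 10, n 30).
sd_xbar1 = sd_of_sample_mean(20, 50)
sd_xbar2 = sd_of_sample_mean(10, 30)
sd_diff = sd_of_difference(20, 50, 10, 30)
mean_diff = 20 - 15  # mean of xbar_2 - xbar_1

# Variances add for independent samples, so the squared SDs satisfy this identity.
assert math.isclose(sd_diff**2, sd_xbar1**2 + sd_xbar2**2)

print(f"SD(xbar_1) = {sd_xbar1:.3f}, SD(xbar_2) = {sd_xbar2:.3f}")
print(f"mean of difference = {mean_diff}, SD of difference = {sd_diff:.3f}")
```

Note that the standard deviations themselves do not add; only their squares do, which is why the standard deviation of the difference is smaller than the sum of the two individual standard deviations.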

Gaming, distracted eating, and intake. A group of researchers who are interested in the possible effects of distracting stimuli during eating, such as an increase or decrease in the amount of food consumption, monitored food intake for a group of 44 patients who were randomized into two equal groups. The treatment group ate lunch while playing solitaire, and the control group ate lunch without any added distractions. Patients in the treatment group ate an average of 52.1 grams of biscuits, with a standard deviation of 45.1 grams, and patients in the control group ate an average of 27.1 grams of biscuits, with a standard deviation of 26.4 grams. Do these data provide convincing evidence that the average food intake (measured in amount of biscuits consumed) is different for the patients in the treatment group compared to the control group? Assume that conditions for conducting inference using mathematical models are satisfied. (Oldham-Cooper et al. 2011)

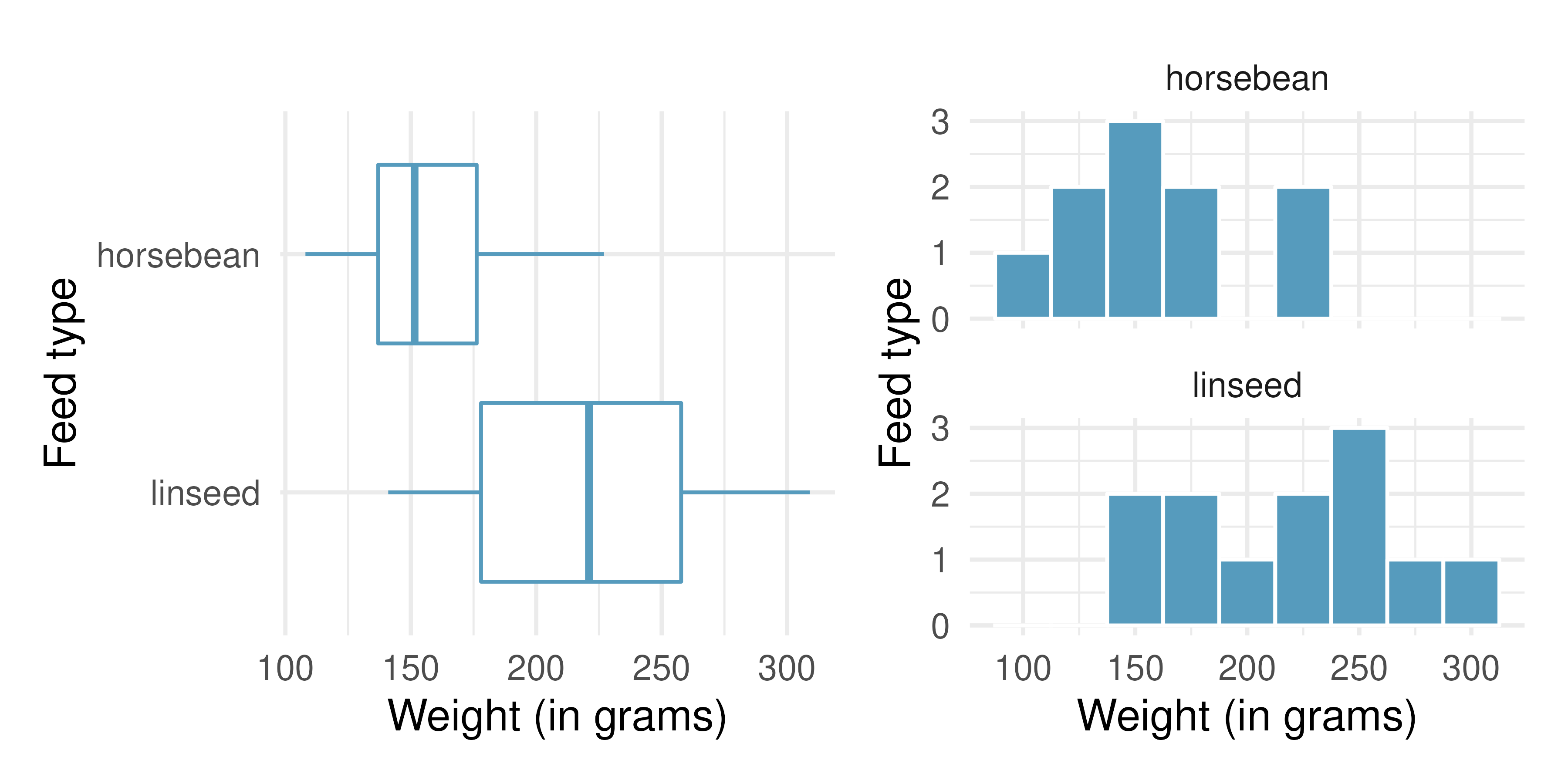

Chicken diet: horsebean vs. linseed. Chicken farming is a multi-billion dollar industry, and any methods that increase the growth rate of young chicks can reduce consumer costs while increasing company profits, possibly by millions of dollars. An experiment was conducted to measure and compare the effectiveness of various feed supplements on the growth rate of chickens. Newly hatched chicks were randomly allocated into six groups, and each group was given a different feed supplement. In this exercise we consider chicks that were fed horsebean and linseed. Below are some summary statistics from this dataset along with box plots showing the distribution of weights by feed type. (McNeil 1977)

| Feed type | Mean | SD | n |
|---|---|---|---|
| horsebean | 160.20 | 38.63 | 10 |
| linseed | 218.75 | 52.24 | 12 |

Describe the distributions of weights of chickens that were fed horsebean and linseed.

Do these data provide strong evidence that the average weights of chickens that were fed linseed and horsebean are different? Use a 5% significance level.

What type of error might we have committed? Explain.

Would your conclusion change if we used \(\alpha = 0.01\)?

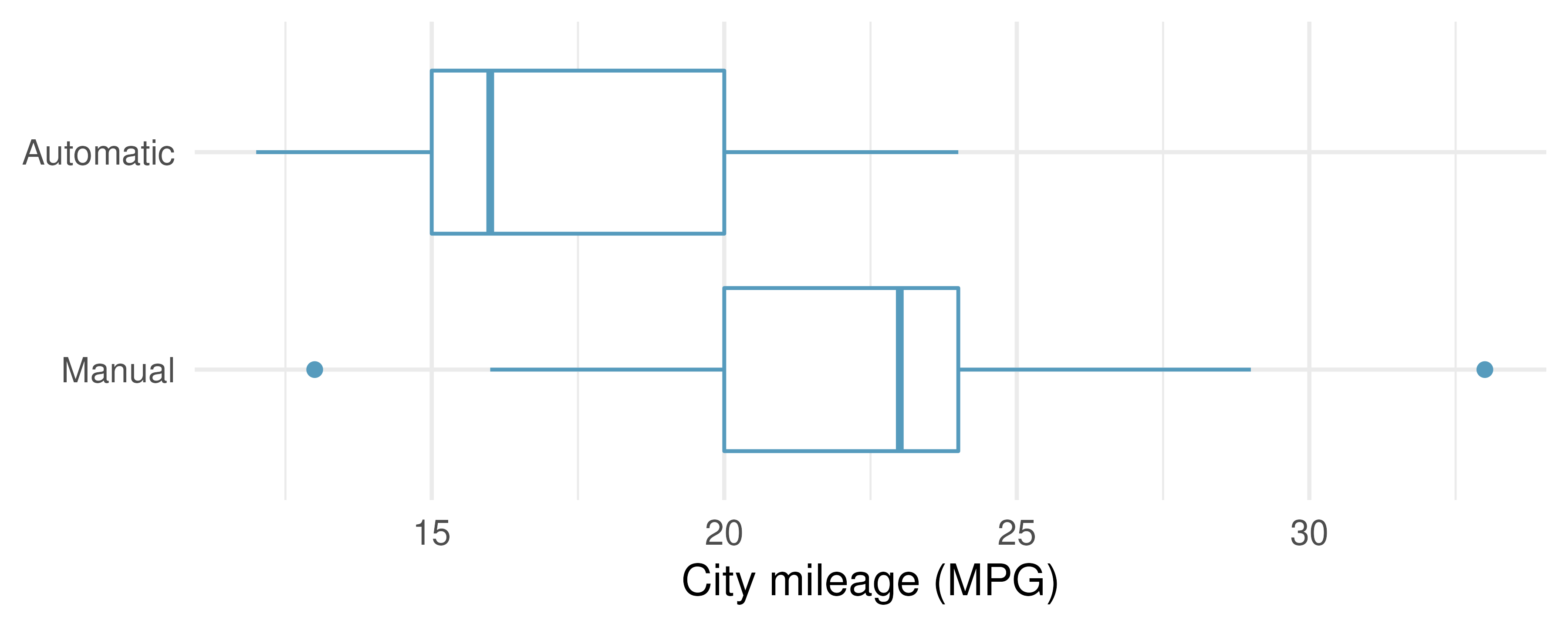

Fuel efficiency in the city. Each year the US Environmental Protection Agency (EPA) releases fuel economy data on cars manufactured in that year. Below are summary statistics on fuel efficiency (in miles/gallon) from random samples of cars with manual and automatic transmissions manufactured in 2021. Do these data provide strong evidence of a difference between the average fuel efficiency of cars with manual and automatic transmissions in terms of their average city mileage? (US DOE EPA 2021)

| CITY | Mean | SD | n |
|---|---|---|---|
| Automatic | 17.4 | 3.44 | 25 |
| Manual | 22.7 | 4.58 | 25 |

Chicken diet: casein vs. soybean. Casein is a common weight gain supplement for humans. Does it have an effect on chickens? An experiment was conducted to measure and compare the effectiveness of various feed supplements on the growth rate of chickens. Newly hatched chicks were randomly allocated into six groups, and each group was given a different feed supplement. In this exercise we consider chicks that were fed casein and soybean. Assume that the conditions for conducting inference using mathematical models are met, and using the data provided below, test the hypothesis that the average weight of chickens that were fed casein is different than the average weight of chickens that were fed soybean. If your hypothesis test yields a statistically significant result, discuss whether or not the higher average weight of chickens can be attributed to the casein diet. (McNeil 1977)

| Feed type | Mean | SD | n |
|---|---|---|---|
| casein | 323.58 | 64.43 | 12 |
| soybean | 246.43 | 54.13 | 14 |

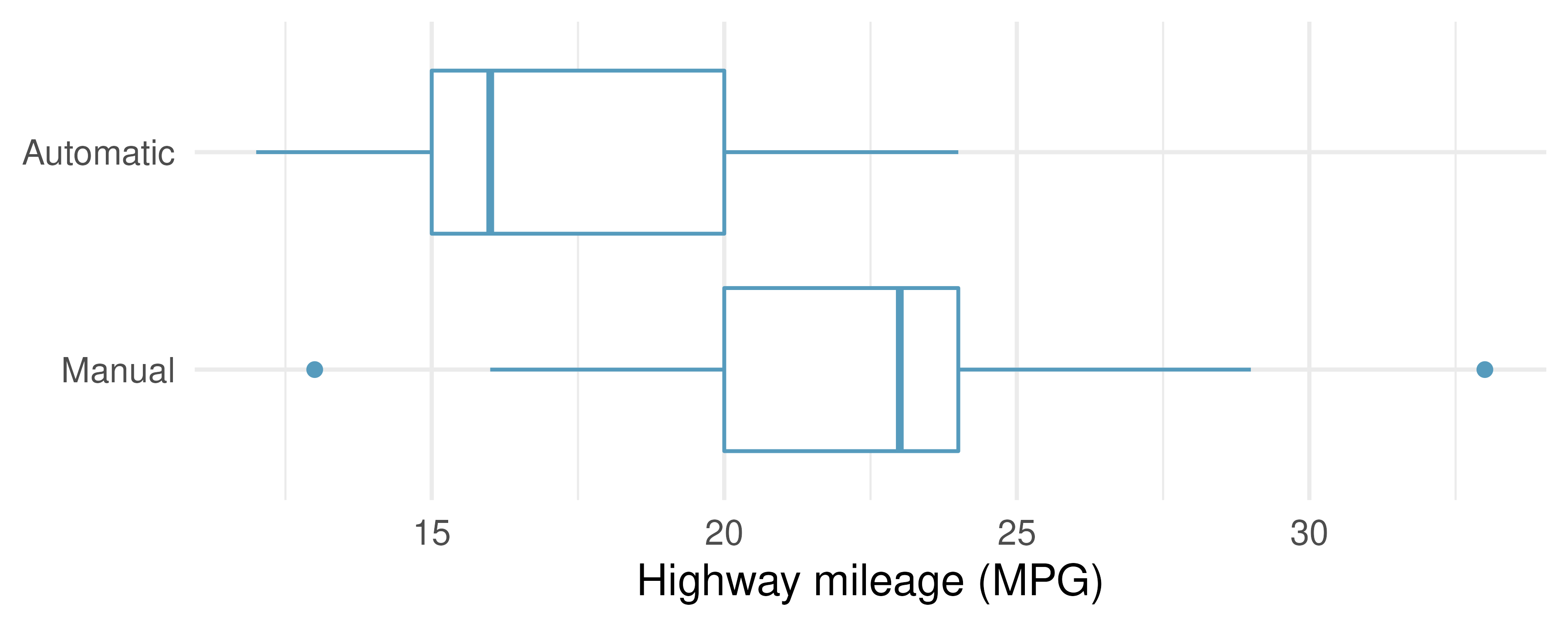

Fuel efficiency on the highway. Each year the US Environmental Protection Agency (EPA) releases fuel economy data on cars manufactured in that year. Below are summary statistics on fuel efficiency (in miles/gallon) from random samples of cars with manual and automatic transmissions manufactured in 2021. Do these data provide strong evidence of a difference between the average fuel efficiency of cars with manual and automatic transmissions in terms of their average highway mileage? (US DOE EPA 2021)

| HIGHWAY | Mean | SD | n |
|---|---|---|---|
| Automatic | 23.7 | 3.90 | 25 |
| Manual | 30.9 | 5.13 | 25 |

Gaming, distracted eating, and recall. A group of researchers who are interested in the possible effects of distracting stimuli during eating, such as an increase or decrease in the amount of food consumption, monitored food intake for a group of 44 patients who were randomized into two equal groups. The 22 patients in the treatment group, who ate their lunch while playing solitaire, were asked to do a serial-order recall of the lunch food items they ate. The average number of items recalled by the patients in this group was 4.9, with a standard deviation of 1.8. The average number of items recalled by the patients in the control group (no distraction) was 6.1, with a standard deviation of 1.8. Do these data provide strong evidence that the average numbers of food items recalled by the patients in the treatment and control groups are different? Assume that conditions for conducting inference using mathematical models are satisfied. (Oldham-Cooper et al. 2011)