5 Questionnaire Design

Designing an effective questionnaire is crucial for gathering accurate and meaningful data. A well-structured questionnaire ensures clarity, minimizes bias, and improves response rates. This chapter covers the key aspects of questionnaire design: the main types of survey questions, techniques for structuring a questionnaire, and strategies for encouraging responses.

Whether you are conducting research, gathering customer feedback, or analyzing trends, a well-designed questionnaire can make all the difference.

5.1 Types of Survey Questions

When designing a questionnaire, choosing the right type of questions is essential. Different survey question types serve distinct purposes and influence the quality and reliability of responses. Below are some of the most common types of survey questions, along with examples:

| Question Type | Description | Example |
|---|---|---|
| Open-Ended Questions | Allow respondents to answer freely in their own words; useful for exploring opinions, experiences, and suggestions. | “What improvements would you like to see in our customer service?” |
| Closed-Ended Questions | Provide a fixed set of response options, which makes the data easier to analyze quantitatively. | “Which of the following best describes your level of satisfaction with our service?” Very Satisfied / Satisfied / Neutral / Dissatisfied / Very Dissatisfied |
| Likert Scale Questions | Measure attitudes and perceptions on an agreement scale, typically ranging from strong disagreement to strong agreement. | “On a scale of 1 to 5, how strongly do you agree with the following statement: ‘The online shopping experience was easy and convenient’?” 1: Strongly Disagree / 2: Disagree / 3: Neutral / 4: Agree / 5: Strongly Agree |
| Rating Questions | Allow respondents to evaluate a statement, service, or product on a numerical or graphical scale. | “How would you rate your overall experience with our mobile app on a scale of 1 to 10?” |
| Multiple-Choice Questions | Provide a predefined list of answers, allowing either a single selection or multiple selections. | “Which of the following support features do you use regularly? (Select all that apply)” Live Chat Support / FAQ Section / Email Support / Phone Support |
| Dichotomous Questions | Offer only two possible answers, such as “Yes” or “No.” | “Have you purchased from our store in the past six months?” (Yes/No) |

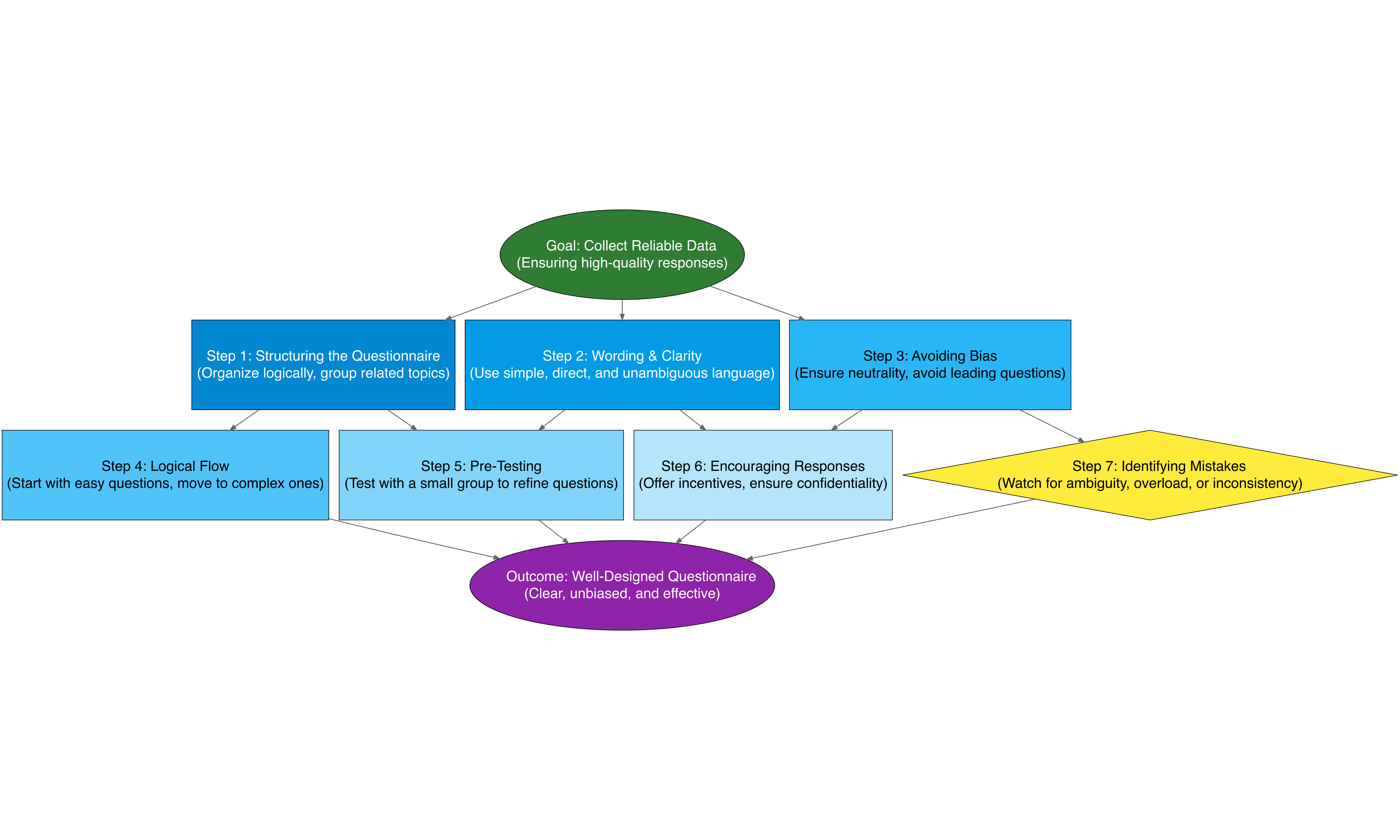
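Closed-ended formats such as the Likert item in the table above produce a fixed set of labels, which are usually converted to numbers before analysis. The following is a minimal Python sketch of that coding step, using the 1 to 5 labels from the Likert example; the function name `code_likert` and the response data are hypothetical, chosen only for illustration.

```python
from statistics import mean, median

# Numeric codes matching the 1 to 5 labels in the Likert example.
LIKERT_CODES = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def code_likert(labels):
    """Map each Likert label to its numeric code (raises KeyError for unknown labels)."""
    return [LIKERT_CODES[label] for label in labels]


# Hypothetical answers to "The online shopping experience was easy and convenient".
answers = ["Agree", "Strongly Agree", "Neutral", "Agree", "Disagree"]
codes = code_likert(answers)

print(codes)          # [4, 5, 3, 4, 2]
print(median(codes))  # 4
print(mean(codes))    # 3.6
```

The median is often preferred for a single Likert item because the codes are ordinal; reporting the mean assumes the gaps between categories are equal, which is a common but debatable simplification.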

5.2 Structuring a Questionnaire

A well-structured questionnaire follows a logical sequence that flows smoothly for respondents. Begin with easy and engaging questions before moving to more complex or sensitive ones. Group similar topics together for clarity.

5.2.1 Structuring the Questionnaire

The following practices help create that sequence and guide respondents smoothly through the survey:

- Organize questions logically to create a natural flow.

- Group related topics together for clarity and coherence.

- Begin with easy and engaging questions to encourage participation before moving to more complex ones.

- Use a mix of question types (multiple-choice, open-ended, Likert scale) to collect diverse data.

- Ensure consistency in question formatting and terminology.

5.2.2 Wording & Clarity

To ensure clarity and avoid confusion:

- Use simple, clear, and concise language that respondents can easily understand.

- Avoid technical jargon unless your audience is highly familiar with it.

- Frame questions in a way that eliminates ambiguity and misinterpretation.

- Use direct wording to improve response accuracy.

- Avoid double-barreled questions that ask about two or more things at once, such as “How satisfied are you with our prices and delivery speed?”

Example of a Poor Question:

“How do you perceive the intrinsic value and overall utility of our digital solutions?”

Improved Version:

“How useful do you find our digital solutions?”

5.2.3 Avoiding Bias

To prevent unintentional influence on responses:

- Avoid leading questions that push respondents toward a particular answer.

- Use neutral wording that does not assume a certain opinion.

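- Provide balanced response options, for example as many positive as negative choices, so the scale does not lean toward one answer.

- Randomize the order of answer choices for unordered lists (where the survey tool allows it) to reduce order bias; keep ordered scales, such as Likert items, in their natural order.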

- Avoid emotionally loaded language that could sway opinions.
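Answer-order randomization is normally done per respondent and only for unordered option lists. The Python sketch below shuffles a multiple-choice list while keeping catch-all options such as “Other” fixed at the bottom, where respondents expect them. The function name `randomized_options`, the anchored labels, and the respondent IDs are hypothetical; most survey platforms offer this as a built-in setting, so this only illustrates the idea.

```python
import random

# Hypothetical catch-all options that should stay at the bottom of the list.
ANCHORED = ("Other", "None of the above")


def randomized_options(options, rng, anchored=ANCHORED):
    """Return a shuffled copy of `options`, keeping anchored options last in their original order."""
    movable = [o for o in options if o not in anchored]
    fixed = [o for o in options if o in anchored]
    rng.shuffle(movable)  # shuffles the copy in place; `options` itself is untouched
    return movable + fixed


features = ["Live Chat Support", "FAQ Section", "Email Support", "Phone Support", "Other"]

# Seeding with a (hypothetical) respondent ID makes each respondent's order reproducible.
for respondent_id in (101, 102):
    print(respondent_id, randomized_options(features, random.Random(respondent_id)))
```

Seeding per respondent is a design choice: it lets analysts reconstruct exactly which order each person saw, which matters if order effects are checked later.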

Example of a Biased Question:

“Don’t you think our product is excellent?”

Unbiased Version:

“How would you rate our product?”

5.2.4 Ensuring Logical Flow

A well-structured sequence of questions improves response quality:

- Start with general and non-threatening questions to engage respondents.

- Move to more specific or sensitive questions gradually.

- Use conditional logic (skip logic) to direct respondents to relevant questions based on their previous answers.

- Place demographic or classification questions at the end to prevent early disengagement.

- Test the question order to ensure it makes sense and is intuitive.
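Skip logic can be thought of as a condition attached to each question that is checked against the answers given so far. The following is a minimal Python sketch under that assumption. The question IDs, the `show_if` field, the `run_survey` helper, and the “reason” and age-group questions are hypothetical; the purchase and product-quality questions are taken from this chapter's examples.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

Answers = Dict[str, str]


@dataclass
class Question:
    qid: str
    text: str
    # Condition on earlier answers; None means the question is always shown.
    show_if: Optional[Callable[[Answers], bool]] = None


SURVEY = [
    Question("purchased", "Have you purchased from our store in the past six months?"),
    Question("product_quality", "How satisfied are you with the quality of the products?",
             show_if=lambda a: a.get("purchased") == "Yes"),
    Question("no_purchase_reason", "What has kept you from purchasing?",
             show_if=lambda a: a.get("purchased") == "No"),
    Question("age_group", "Which age group do you belong to?"),  # demographics last
]


def run_survey(questions, respond):
    """Ask questions in order, skipping any whose condition is not met by earlier answers."""
    answers: Answers = {}
    for q in questions:
        if q.show_if is not None and not q.show_if(answers):
            continue  # skip logic: this respondent never sees the question
        answers[q.qid] = respond(q)
    return answers


# Simulated respondent who answers "Yes" to the purchase question.
canned = {"purchased": "Yes", "product_quality": "Satisfied", "age_group": "25-34"}
print(run_survey(SURVEY, lambda q: canned[q.qid]))
# {'purchased': 'Yes', 'product_quality': 'Satisfied', 'age_group': '25-34'}
```

Because conditions only look at earlier answers, the sketch also respects the ordering advice above: general questions first and demographics at the end.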

5.2.5 Pre-Testing & Pilot Surveys

Before finalizing the questionnaire:

- Conduct a pilot survey with a small, diverse group representing your target audience.

- Identify ambiguities, unclear wording, and potential biases based on pilot responses.

- Collect feedback on the overall questionnaire experience.

- Adjust question wording, order, or format based on pilot survey findings.

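- Use simple statistical checks, such as item non-response rates, straight-lining (identical answers across a whole grid of questions), or the internal consistency of multi-item scales, to detect inconsistencies or confusion in responses.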
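When several pilot questions are meant to measure the same underlying attitude, one widely used consistency check is Cronbach's alpha, α = k/(k − 1) · (1 − Σ item variances / variance of total scores), where k is the number of items. The Python sketch below implements that formula for complete responses only. The pilot data is hypothetical, and the common rule of thumb that α of about 0.7 or more is acceptable is a convention, not a strict cutoff.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a multi-item scale.

    responses: one list of k numeric item scores per respondent (no missing values).
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # regroup scores by item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(r) for r in responses])  # variance of each respondent's total
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Hypothetical pilot data: 5 respondents x 3 satisfaction items on a 1-5 scale.
pilot = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 3, 2],
    [4, 4, 4],
]
print(round(cronbach_alpha(pilot), 3))  # 0.939
```

A surprisingly low alpha, or one item that behaves very differently from the rest, is often a sign that respondents read that question differently than intended, which is exactly what a pilot is meant to uncover.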

5.2.6 Encouraging Responses

To maximize participation and reduce dropouts:

- Keep the questionnaire short, relevant, and engaging.

- Clearly communicate the purpose of the survey and how the data will be used.

- Offer incentives (e.g., discounts, prize draws, or exclusive content) to encourage participation.

- Send reminders via email or SMS to those who have not completed the survey.

- Ensure confidentiality and anonymity to make respondents feel comfortable sharing honest opinions.

- Optimize the questionnaire for different devices (desktop, mobile, tablet) to enhance accessibility.

5.2.7 Identifying & Fixing Mistakes

Common mistakes that reduce the effectiveness of a questionnaire:

- Too many questions – Keep it concise to maintain engagement.

- Ambiguous wording – Ensure clarity and precision in every question.

- Inconsistent scales – Use uniform response options to avoid confusion.

- Lack of logical flow – Arrange questions in a way that makes sense to respondents.

- Overuse of open-ended questions – Balance open-ended and closed-ended questions for efficiency.

- Ignoring cultural differences – Adapt questions to fit the background of diverse respondents.

By applying these best practices, you can design a questionnaire that is clear, unbiased, engaging, and effective in gathering high-quality and reliable data. Ensuring proper structure, clarity, and flow will improve response rates and data accuracy, leading to more insightful and actionable results.

5.3 Case Study Questionnaire

Background:

ABC Online Store, a fast-growing e-commerce platform, has been experiencing a decline in customer retention. Recent data shows that while many users visit the platform and make initial purchases, repeat purchases have decreased significantly. The management suspects that customer satisfaction issues might be a contributing factor, but they lack specific insights into customer experiences, preferences, and pain points.

To address this issue, the company decides to conduct a structured customer satisfaction survey using a well-designed questionnaire.

Research Objectives: The goals of this study are to:

- Identify key factors affecting customer satisfaction.

- Understand pain points in the shopping experience.

- Determine how product quality, pricing, and customer support impact retention.

- Gather data to enhance future services and improve customer retention.

5.3.1 Structuring the Questionnaire

Logical Flow: The survey starts with general questions about the shopping experience, then moves to specific issues like website usability, product satisfaction, and customer service.

Grouping Topics: The questionnaire is divided into sections:

- Shopping Experience

- Product Quality

- Pricing and Promotions

- Customer Service

- Future Expectations

Question Types: The survey uses multiple-choice, Likert scale, and open-ended questions.

5.3.2 Wording & Clarity

Example of a Poor Question:

“Do you find the pricing of our products to be strategically aligned with the industry standards while maintaining high product quality?”

Improved Version:

“Are our product prices reasonable compared to their quality?”

Example of a Poor Question:

“Did you face any inconveniences due to poor customer service response times, incorrect information, or lack of issue resolution?”

Improved Version:

“How satisfied are you with the response time of our customer service?”

Because the original question bundled three issues, the accuracy of the information provided and whether the issue was resolved are best covered by separate questions.

5.3.3 Avoiding Bias

Example of a Biased Question:

“Don’t you think our product is excellent?”

Unbiased Version:

“How would you rate our product?”

Example of a Leading Question:

“Would you say our customer service is excellent?”

Unbiased Version:

“How would you rate your experience with our customer service?”

5.3.4 Ensuring Logical Flow

Question Order Strategy:

- Start with an engaging question: “How often do you shop online?”

- Move to usability: “How easy is it to find what you need on our website?”

- Then product satisfaction: “How satisfied are you with the quality of the products?”

- End with demographics and classification questions.

5.3.5 Pre-Testing & Pilot Survey

- Before launching, ABC Online Store conducted a pilot test with 30 existing customers.

- Feedback included:

    - Some questions felt repetitive.

    - The survey was slightly too long.

    - A few technical terms needed simplification.

- The team adjusted the questionnaire accordingly before rolling it out to the broader audience.

5.3.6 Encouraging Responses

To increase participation, ABC Online Store:

- Offers a 10% discount for completing the survey.

- Sends email reminders after 3 days.

- Assures anonymity to encourage honest feedback.

- Makes the survey mobile-friendly for easy access.
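The three-day reminder rule can be expressed as a simple filter over invitation records. The Python sketch below is only an illustration. The customer IDs, dates, and the `due_for_reminder` function are hypothetical, and in practice a survey or email platform usually schedules reminders itself.

```python
from datetime import datetime, timedelta

REMINDER_DELAY = timedelta(days=3)


def due_for_reminder(invitations, completed_ids, now):
    """Return IDs invited at least REMINDER_DELAY ago who have not completed the survey."""
    return [
        cid for cid, invited_at in invitations.items()
        if cid not in completed_ids and now - invited_at >= REMINDER_DELAY
    ]


# Hypothetical invitation log: customer ID -> time the invitation was sent.
invitations = {
    "c001": datetime(2024, 5, 1, 9, 0),
    "c002": datetime(2024, 5, 1, 9, 0),
    "c003": datetime(2024, 5, 3, 9, 0),
}
completed = {"c002"}

print(due_for_reminder(invitations, completed, now=datetime(2024, 5, 4, 10, 0)))  # ['c001']
```

A fuller version would also record who has already been reminded, so that nobody receives the same reminder twice.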

5.3.7 Identifying & Fixing Mistakes

After collecting initial responses, the team noticed several issues and fixed them:

- Some respondents skipped open-ended questions, so the wording was adjusted to encourage short responses.

- The customer service satisfaction scale was inconsistent with the other rating scales, so it was standardized.

- A few questions lacked a “Not Applicable” option, which was then added.
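The case does not say which scales were involved. The Python sketch below assumes, purely for illustration, that customer service was rated on a 1 to 10 scale while the other items used 1 to 5. It shows how answers already collected on the old scale could be mapped linearly onto the common one, and how “Not Applicable” answers can be recorded as missing rather than scored. The function names and ratings are hypothetical, and the linear mapping assumes the scale points are evenly spaced.

```python
from statistics import mean

NOT_APPLICABLE = "Not Applicable"


def rescale(x, old_min, old_max, new_min, new_max):
    """Linearly map x from [old_min, old_max] onto [new_min, new_max]."""
    return new_min + (x - old_min) * (new_max - new_min) / (old_max - old_min)


def clean_ratings(raw, old_min=1, old_max=10, new_min=1, new_max=5):
    """Rescale numeric ratings; record 'Not Applicable' as None (missing), not as a score."""
    cleaned = []
    for value in raw:
        if value == NOT_APPLICABLE:
            cleaned.append(None)
        else:
            cleaned.append(rescale(value, old_min, old_max, new_min, new_max))
    return cleaned


# Hypothetical customer service ratings collected on the assumed 1-10 scale.
raw_service = [10, 1, NOT_APPLICABLE, 7, 4]
service = clean_ratings(raw_service)

answered = [v for v in service if v is not None]  # exclude N/A before averaging
print(len(answered), round(mean(answered), 2))     # 4 3.0
```

Treating “Not Applicable” as missing matters: coding it as 0 or as the lowest score would drag averages down for questions that simply did not apply to some respondents.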

By following a structured questionnaire design process, ABC Online Store successfully gathered valuable data that directly improved customer satisfaction and retention.