10 Counting and Classification

All over the world, every day, scientists throw away information. Sometimes this is through the removal of “outliers,” cases in the data that offend the model and are exiled. More routinely, counted things are converted to proportions before analysis. Why does analysis of proportions throw away information? Because 10/20 and ½ are the same proportion, one-half, but have very different sample sizes. Once converted to proportions, and treated as outcomes in a linear regression, the information about sample size has been destroyed.

It’s easy to retain the information about sample size. All that is needed is to model what has actually been observed, the counts instead of the proportions. (McElreath, 2015, p. 291)

In this chapter, we focus on the two most common types of count models: the binomial and the Poisson.

Side note: For a nice Bayesian way to accommodate outliers in your Gaussian models, check out my blog post, Robust linear regression with Student’s \(t\)-distribution.

10.1 Binomial regression

The basic binomial model follows the form

\[y \sim \operatorname{Binomial}(n, p),\]

where \(y\) is some count variable, \(n\) is the number of trials, and \(p\) it the probability a given trial was a 1, which is sometimes termed a success. When \(n = 1\), then \(y\) is a vector of 0s and 1s. Presuming the logit link, which we just covered in Chapter 9, models of this type are commonly termed logistic regression. When \(n > 1\), and still presuming the logit link, we might call our model an aggregated logistic regression model, or more generally an aggregated binomial regression model.

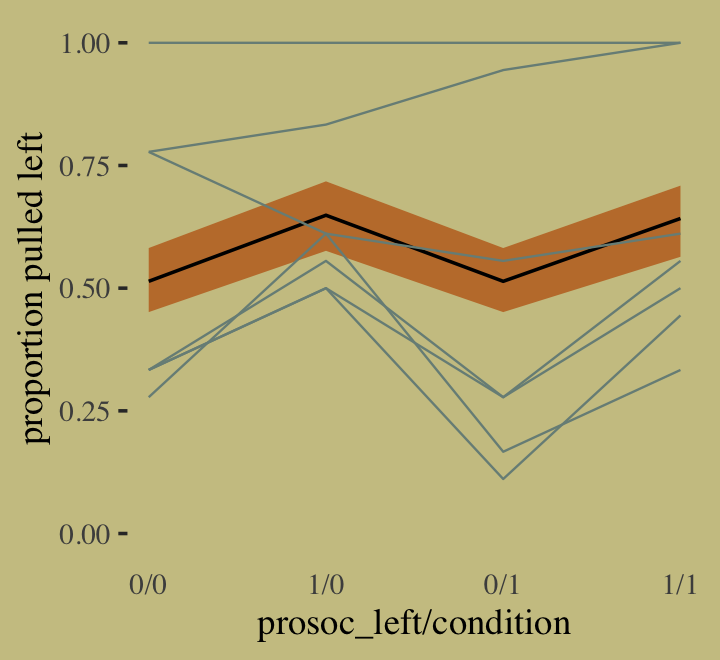

10.1.2 Aggregated binomial: Chimpanzees again, condensed.

With the tidyverse, we use group_by() and summarise() to achieve what McElreath did with aggregate().

d_aggregated <-

d %>%

select(-recipient, -block, -trial, -chose_prosoc) %>%

group_by(actor, condition, prosoc_left) %>%

summarise(x = sum(pulled_left))

d_aggregated %>%

filter(actor %in% c(1, 2))## # A tibble: 8 × 4

## # Groups: actor, condition [4]

## actor condition prosoc_left x

## <int> <int> <int> <int>

## 1 1 0 0 6

## 2 1 0 1 9

## 3 1 1 0 5

## 4 1 1 1 10

## 5 2 0 0 18

## 6 2 0 1 18

## 7 2 1 0 18

## 8 2 1 1 18To fit an aggregated binomial model in brms, we augment the <criterion> | trials() syntax where the value that goes in trials() is either a fixed number, as in this case, or variable in the data indexing \(n\). Either way, at least some of those trials will have an \(n > 1\). Here we’ll use the hard-code method, just like McElreath did in the text.

b10.5 <-

brm(data = d_aggregated,

family = binomial,

x | trials(18) ~ 1 + prosoc_left + condition:prosoc_left,

prior = c(prior(normal(0, 10), class = Intercept),

prior(normal(0, 10), class = b)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

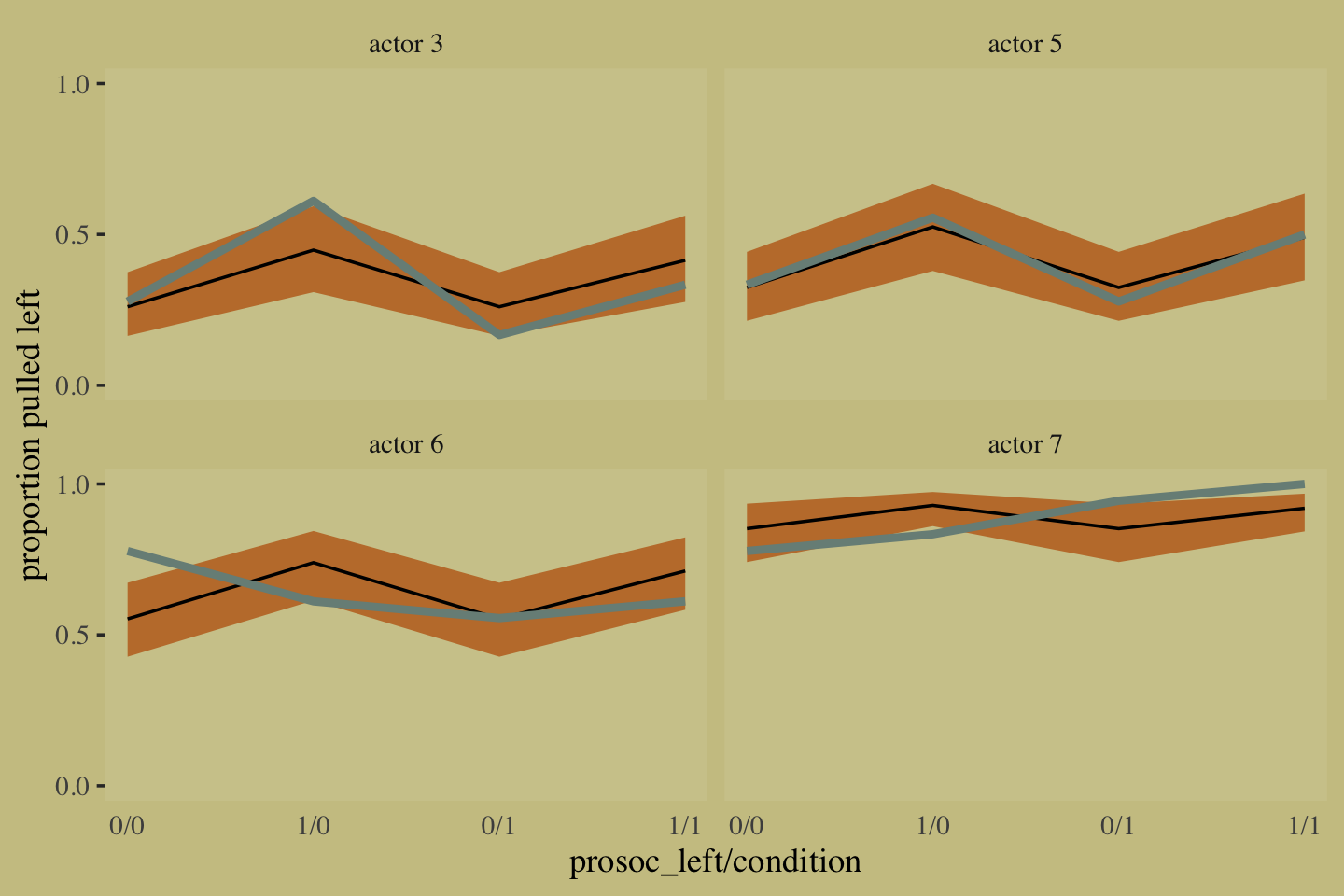

file = "fits/b10.05")We might compare b10.3 with b10.5 like this.

fixef(b10.3) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## Intercept 0.05 0.13 -0.20 0.29

## prosoc_left 0.61 0.23 0.17 1.08

## prosoc_left:condition -0.10 0.27 -0.64 0.43fixef(b10.5) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## Intercept 0.04 0.13 -0.21 0.30

## prosoc_left 0.62 0.23 0.17 1.08

## prosoc_left:condition -0.10 0.27 -0.63 0.43A coefficient plot can offer a complimentary perspective.

# wrangle

bind_rows(

fixef(b10.3) %>%

data.frame() %>%

rownames_to_column("term"),

fixef(b10.5) %>%

data.frame() %>%

rownames_to_column("term")

) %>%

mutate(fit = rep(c("b10.3", "b10.5"), each = n() / 2)) %>%

# plot

ggplot() +

geom_pointrange(aes(x = fit, y = Estimate,

ymin = Q2.5, ymax = Q97.5,

color = term),

shape = 16) +

scale_color_manual(values = wes_palette("Moonrise2")[c(1:2, 4)]) +

labs(x = NULL, y = NULL) +

coord_flip() +

theme(axis.ticks.y = element_blank(),

legend.position = "none") +

facet_wrap(~term, ncol = 1)

The two are close within simulation error.

10.1.3 Aggregated binomial: Graduate school admissions.

Load the infamous UCBadmit data (see Bickel et al., 1975).

# detach(package:brms)

library(rethinking)

data(UCBadmit)

d <- UCBadmitSwitch from rethinking to brms.

detach(package:rethinking, unload = T)

library(brms)

rm(UCBadmit)

d## dept applicant.gender admit reject applications

## 1 A male 512 313 825

## 2 A female 89 19 108

## 3 B male 353 207 560

## 4 B female 17 8 25

## 5 C male 120 205 325

## 6 C female 202 391 593

## 7 D male 138 279 417

## 8 D female 131 244 375

## 9 E male 53 138 191

## 10 E female 94 299 393

## 11 F male 22 351 373

## 12 F female 24 317 341Now compute our newly-constructed dummy variable, male.

d <-

d %>%

mutate(male = ifelse(applicant.gender == "male", 1, 0))The univariable logistic model with male as the sole predictor of admit follows the form

\[\begin{align*} n_{\text{admit}_i} & \sim \operatorname{Binomial}(n_i, p_i) \\ \operatorname{logit}(p_i) & = \alpha + \beta \text{male}_i \\ \alpha & \sim \operatorname{Normal}(0, 10) \\ \beta & \sim \operatorname{Normal}(0, 10). \end{align*}\]

The second model omits the male predictor.

b10.6 <-

brm(data = d,

family = binomial,

admit | trials(applications) ~ 1 + male ,

prior = c(prior(normal(0, 10), class = Intercept),

prior(normal(0, 10), class = b)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

# this line isn't usually necessary,

# but it will help with our `loo_moment_match()` code below

save_pars = save_pars(all = T),

file = "fits/b10.06")

b10.7 <-

brm(data = d,

family = binomial,

admit | trials(applications) ~ 1,

prior(normal(0, 10), class = Intercept),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

save_pars = save_pars(all = T),

file = "fits/b10.07")Compute the information criteria for each model and save the results within the brmfit objects.

b10.6 <- add_criterion(b10.6, "waic")

b10.7 <- add_criterion(b10.7, "waic")Here’s the WAIC comparison.

w <- loo_compare(b10.6, b10.7, criterion = "waic")

print(w, simplify = F)## elpd_diff se_diff elpd_waic se_elpd_waic p_waic se_p_waic waic se_waic

## b10.6 0.0 0.0 -495.4 163.6 113.1 40.4 990.8 327.1

## b10.7 -28.8 82.7 -524.2 164.2 85.1 37.1 1048.4 328.3If you prefer the difference in the WAIC metric, use our cbind() conversion method from above. Here are the WAIC weights.

model_weights(b10.6, b10.7, weights = "waic") %>%

round(digits = 2)## b10.6 b10.7

## 1 0Bonus: Information criteria digression.

Let’s see what happens if we switch to the LOO.

b10.6 <- add_criterion(b10.6, "loo")

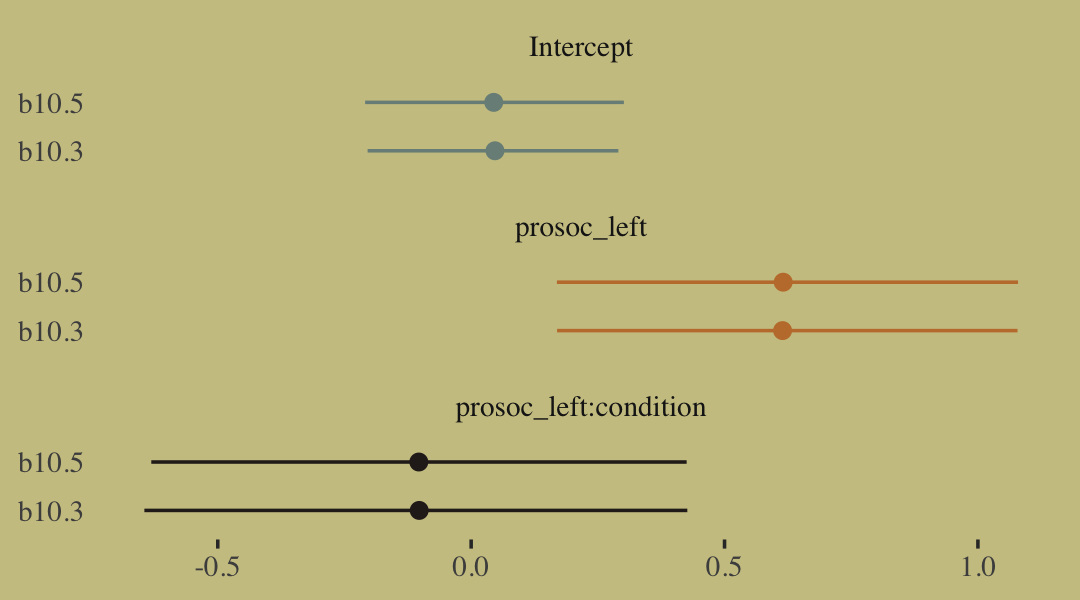

b10.7 <- add_criterion(b10.7, "loo")If you just ape the text and use the WAIC, everything appears fine. But holy smokes look at those nasty warning messages from the loo()! One of the frightening but ultimately handy things about working with the PSIS-LOO is that it requires we estimate a Pareto \(k\) parameter, which you can learn all about in the loo-package section of the loo reference manual (Gabry, 2022a). As it turns out, the Pareto \(k\) can be used as a diagnostic tool (Vehtari & Gabry, 2022a). Each case in the data gets its own \(k\) value and we like it when those \(k\)’s are low. The makers of the loo package get worried when those \(k\)’s exceed 0.7 and as a result, loo() spits out a warning message when they do.

First things first, if you explicitly open the loo package, you’ll have access to some handy diagnostic functions.

library(loo)We’ll be leveraging those \(k\) values with the pareto_k_table() and pareto_k_ids() functions. Both functions take objects created by the loo() or psis() functions. So, before we can get busy, we’ll first make two objects with the loo().

l_b10.6 <- loo(b10.6)

l_b10.7 <- loo(b10.7)There are those warning messages, again. Using the loo-object for model b10.6, which we’ve named l_b10.6, let’s take a look at the pareto_k_table() function.

pareto_k_table(l_b10.6)## Pareto k diagnostic values:

## Count Pct. Min. n_eff

## (-Inf, 0.5] (good) 1 8.3% 2161

## (0.5, 0.7] (ok) 4 33.3% 190

## (0.7, 1] (bad) 2 16.7% 44

## (1, Inf) (very bad) 5 41.7% 1You may have noticed that this same table pops out when you just do something like loo(b10.6). Recall that this data set has 12 observations (i.e., execute count(d)). With pareto_k_table(), we see how the Pareto \(k\) values have been categorized into bins ranging from “good” to “very bad”. Clearly, we like low \(k\)’s. In this example, our observations are all over the place, with 7 in the “bad” to “very bad” \(k\) ranges. We can take a closer look like this:

plot(l_b10.6)

So when you plot() a loo object, you get a nice diagnostic plot for those \(k\) values, ordered by observation number. Our plot indicates cases 1, 2, 3, 11, and 12 had “very bad” \(k\) values for this model. If we wanted to further verify to ourselves which observations those were, we’d use the pareto_k_ids() function.

pareto_k_ids(l_b10.6, threshold = 1)## [1] 1 2 3 11 12Note our use of the threshold argument. Play around with different values to see how it works. If you want an explicit look at those \(k\) values, you execute something like this.

l_b10.6$diagnostics## $pareto_k

## [1] 2.1770531 1.2663216 1.5215908 0.2205991 0.5275680 0.8549286 0.8477665 0.6206897 0.5285953 0.6322458

## [11] 2.4093592 1.5944555

##

## $n_eff

## [1] 1.363650 3.820216 2.444108 2161.377064 1042.390298 44.456561 94.424724 351.994475

## [9] 604.074132 189.906852 6.019581 4.665071The pareto_k values can be used to examine cases that are overly-influential on the model parameters, something like a Cook’s \(D_{i}\). See, for example this discussion on stackoverflow.com in which several members of the Stan team weighed in. The issue is also discussed in Vehtari et al. (2017) and in this presentation by Aki Vehtari.

Anyway, the implication of all this is these values suggest model b10.6 isn’t a great fit for these data.

Part of the warning message for model b10.6 read:

It is recommended to set ‘moment_match = TRUE’ in order to perform moment matching for problematic observations.

Let’s do that using the brms::loo_moment_match() function.

l_b10.6_mm <- loo_moment_match(b10.6, loo = l_b10.6)Check the results.

l_b10.6_mm##

## Computed from 4000 by 12 log-likelihood matrix

##

## Estimate SE

## elpd_loo -498.6 163.4

## p_loo 111.1 40.9

## looic 997.2 326.9

## ------

## Monte Carlo SE of elpd_loo is 0.2.

##

## Pareto k diagnostic values:

## Count Pct. Min. n_eff

## (-Inf, 0.5] (good) 5 41.7% 1044

## (0.5, 0.7] (ok) 7 58.3% 156

## (0.7, 1] (bad) 0 0.0% <NA>

## (1, Inf) (very bad) 0 0.0% <NA>

##

## All Pareto k estimates are ok (k < 0.7).

## See help('pareto-k-diagnostic') for details.Now that looks better. We’ll do the same thing for model b10.7.

l_b10.7_mm <- loo_moment_match(b10.7, loo = l_b10.7)Okay, let’s compare models with formal \(\text{elpd}_\text{loo}\) differences before and after adjusting with the moment match approach.

loo_compare(l_b10.6, l_b10.7)## elpd_diff se_diff

## b10.6 0.0 0.0

## b10.7 -29.6 78.7loo_compare(l_b10.6_mm, l_b10.7_mm)## elpd_diff se_diff

## b10.6 0.0 0.0

## b10.7 -23.5 80.1In this case, the results are similar. The standard errors for the differences are huge compared to the point estimates, suggesting large uncertainty. Watch out for this in your real-world data analyses, though.

But this has all been a tangent from the central thrust of this section.

Back from our information criteria digression.

Let’s get back on track with the text. Here’s a look at b10.6, the unavailable model.

print(b10.6)## Family: binomial

## Links: mu = logit

## Formula: admit | trials(applications) ~ 1 + male

## Data: d (Number of observations: 12)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept -0.83 0.05 -0.93 -0.73 1.00 2895 2364

## male 0.61 0.06 0.49 0.73 1.00 2976 2390

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).Here’s the relative difference in admission odds.

fixef(b10.6)[2] %>%

exp() %>%

round(digits = 2)## [1] 1.84And now we’ll compute difference in admission probabilities.

post <- as_draws_df(b10.6)

post %>%

mutate(p_admit_male = inv_logit_scaled(b_Intercept + b_male),

p_admit_female = inv_logit_scaled(b_Intercept)) %>%

mutate(diff_admit = p_admit_male - p_admit_female) %>%

summarise(`2.5%` = quantile(diff_admit, probs = .025),

`50%` = median(diff_admit),

`97.5%` = quantile(diff_admit, probs = .975))## # A tibble: 1 × 3

## `2.5%` `50%` `97.5%`

## <dbl> <dbl> <dbl>

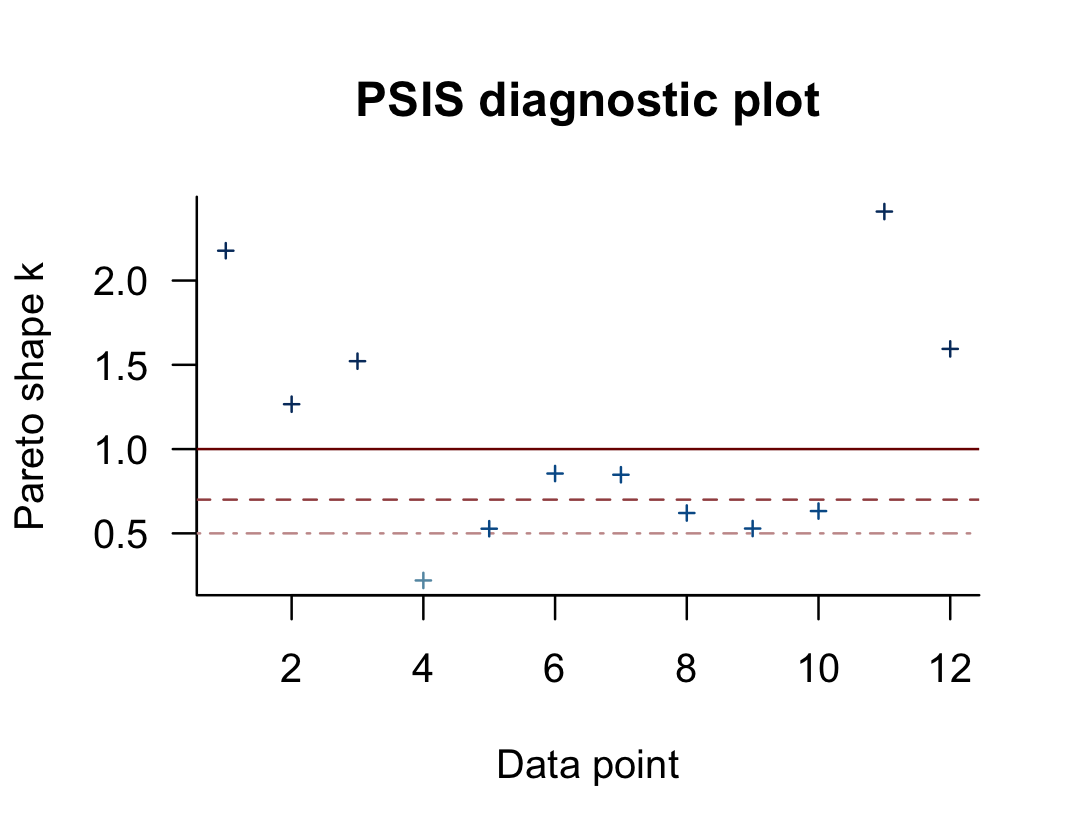

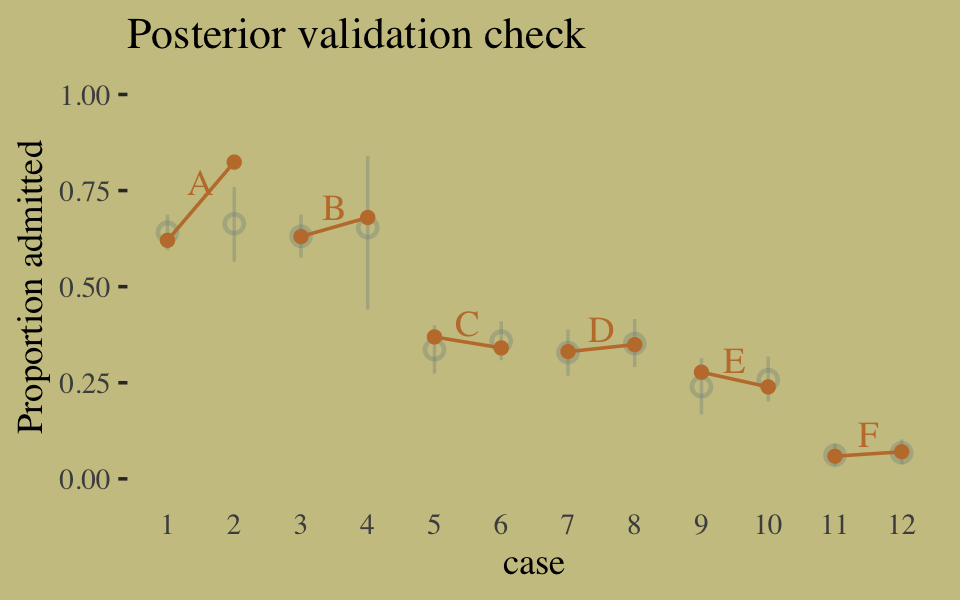

## 1 0.114 0.142 0.169Instead of the summarise() code, we could have also used tidybayes::median_qi(diff_admit). It’s good to have options. Here’s our version of Figure 10.5.

d <-

d %>%

mutate(case = factor(1:12))

p <-

predict(b10.6) %>%

as_tibble() %>%

bind_cols(d)

text <-

d %>%

group_by(dept) %>%

summarise(case = mean(as.numeric(case)),

admit = mean(admit / applications) + .05)

ggplot(data = d, aes(x = case, y = admit / applications)) +

geom_pointrange(data = p,

aes(y = Estimate / applications,

ymin = Q2.5 / applications ,

ymax = Q97.5 / applications),

color = wes_palette("Moonrise2")[1],

shape = 1, alpha = 1/3) +

geom_point(color = wes_palette("Moonrise2")[2]) +

geom_line(aes(group = dept),

color = wes_palette("Moonrise2")[2]) +

geom_text(data = text,

aes(y = admit, label = dept),

color = wes_palette("Moonrise2")[2],

family = "serif") +

labs(title = "Posterior validation check",

y = "Proportion admitted") +

coord_cartesian(ylim = c(0, 1)) +

theme(axis.ticks.x = element_blank())

As alluded to in all that LOO/pareto_k talk, above, this is not a great fit. So we’ll ditch the last model paradigm for one that answers the new question “What is the average difference in probability of admission between females and males within departments?” (p. 307). The statistical formula for the full model follows the form

\[\begin{align*} n_{\text{admit}_i} & \sim \operatorname{Binomial}(n_i, p_i) \\ \operatorname{logit}(p_i) & = \alpha_{\text{dept}_i} + \beta \text{male}_i \\ \alpha_{\text{dept}} & \sim \operatorname{Normal}(0, 10) \\ \beta & \sim \operatorname{Normal}(0, 10). \end{align*}\]

We don’t need to coerce an index like McElreath did in the text. But here are the models.

b10.8 <-

brm(data = d,

family = binomial,

admit | trials(applications) ~ 0 + dept,

prior = prior(normal(0, 10), class = b),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.08")

b10.9 <-

update(b10.8,

newdata = d,

formula = admit | trials(applications) ~ 0 + dept + male,

seed = 10,

file = "fits/b10.09")Let’s make two more loo() objects, this time using the reloo = TRUE approach.

l_b10.8_reloo <- loo(b10.8, reloo = T)

l_b10.9_reloo <- loo(b10.9, reloo = T)Now compare them.

loo_compare(l_b10.6_mm, l_b10.7_mm, l_b10.8_reloo, l_b10.9_reloo)## elpd_diff se_diff

## b10.9 0.0 0.0

## b10.8 -3.2 7.5

## b10.6 -432.6 161.4

## b10.7 -456.2 159.4Here are the LOO weights.

model_weights(b10.6, b10.7, b10.8, b10.9,

weights = "loo") %>%

round(digits = 3)## b10.6 b10.7 b10.8 b10.9

## 0.000 0.000 0.953 0.047The parameters summaries for our multivariable model, b10.9, look like this.

fixef(b10.9) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## deptA 0.68 0.10 0.49 0.88

## deptB 0.64 0.12 0.41 0.87

## deptC -0.58 0.08 -0.73 -0.44

## deptD -0.61 0.08 -0.78 -0.45

## deptE -1.06 0.10 -1.26 -0.87

## deptF -2.63 0.16 -2.95 -2.33

## male -0.10 0.08 -0.27 0.06And on the proportional odds scale, the posterior mean for b_male is:

fixef(b10.9)[7, 1] %>% exp()## [1] 0.9047538Since we’ve been using brms, there’s no need to fit our version of McElreath’s m10.9stan. We already have that in our b10.9. But just for kicks and giggles, here’s another way to get the model summary.

b10.9$fit## Inference for Stan model: 1eaca228b69c838edbfea8a7cfc9f693.

## 2 chains, each with iter=2500; warmup=500; thin=1;

## post-warmup draws per chain=2000, total post-warmup draws=4000.

##

## mean se_mean sd 2.5% 25% 50% 75% 97.5% n_eff Rhat

## b_deptA 0.68 0.00 0.10 0.49 0.62 0.68 0.75 0.88 1727 1

## b_deptB 0.64 0.00 0.12 0.41 0.56 0.64 0.72 0.87 1797 1

## b_deptC -0.58 0.00 0.08 -0.73 -0.63 -0.58 -0.53 -0.44 2978 1

## b_deptD -0.61 0.00 0.08 -0.78 -0.67 -0.61 -0.56 -0.45 2438 1

## b_deptE -1.06 0.00 0.10 -1.26 -1.13 -1.06 -0.99 -0.87 4194 1

## b_deptF -2.63 0.00 0.16 -2.95 -2.74 -2.63 -2.52 -2.33 3408 1

## b_male -0.10 0.00 0.08 -0.27 -0.16 -0.10 -0.04 0.06 1361 1

## lprior -22.60 0.00 0.00 -22.61 -22.60 -22.60 -22.60 -22.59 4043 1

## lp__ -70.72 0.04 1.90 -75.42 -71.72 -70.38 -69.33 -68.03 1808 1

##

## Samples were drawn using NUTS(diag_e) at Wed Jan 18 11:52:42 2023.

## For each parameter, n_eff is a crude measure of effective sample size,

## and Rhat is the potential scale reduction factor on split chains (at

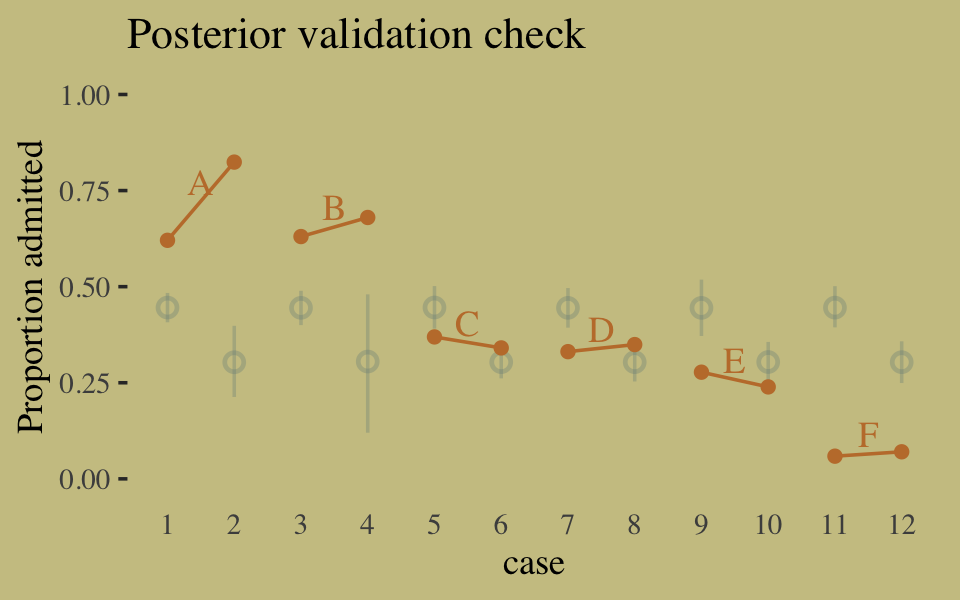

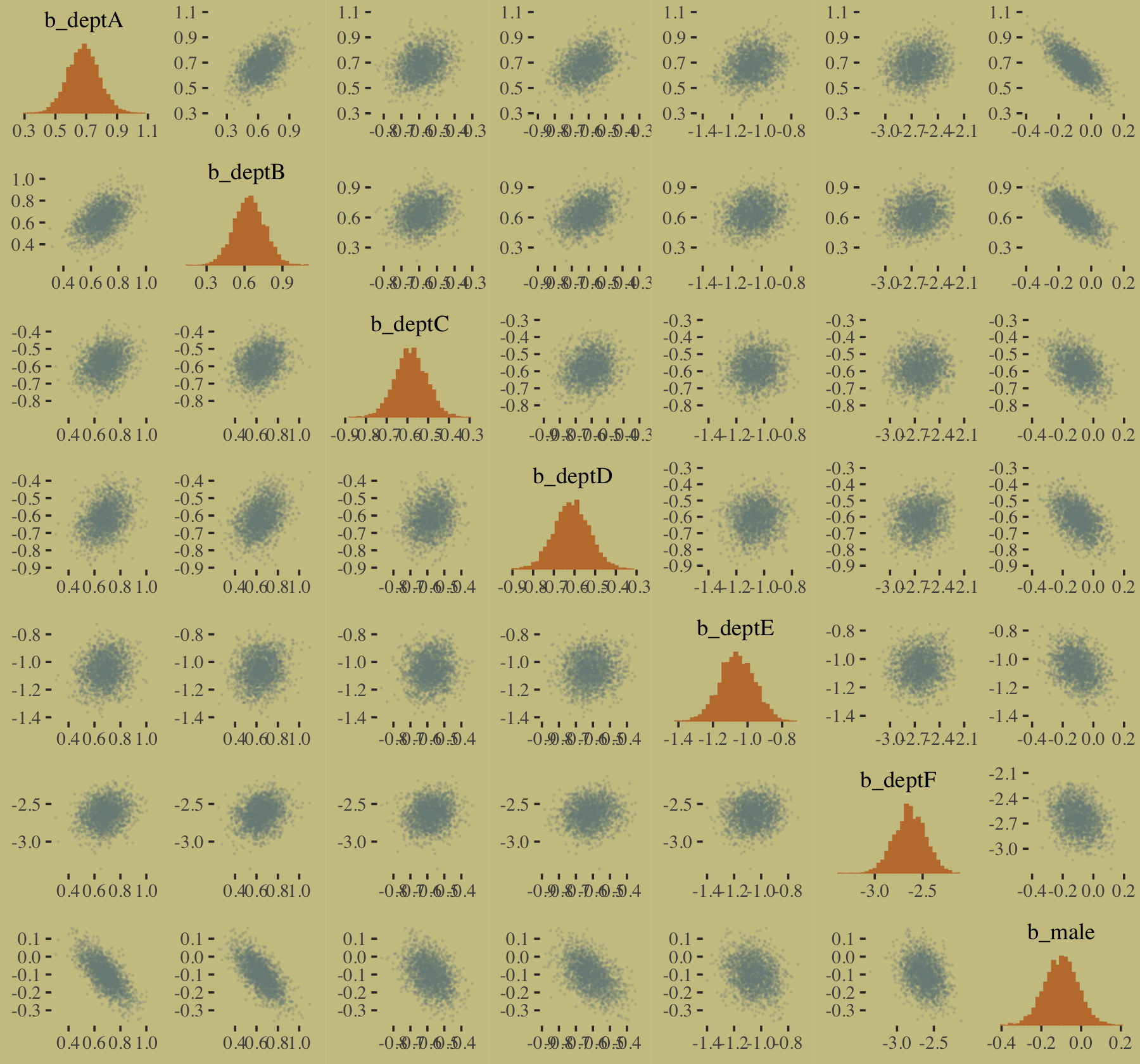

## convergence, Rhat=1).Here’s our version of Figure 10.6, the posterior validation check.

predict(b10.9) %>%

as_tibble() %>%

bind_cols(d) %>%

ggplot(aes(x = case, y = admit / applications)) +

geom_pointrange(aes(y = Estimate / applications,

ymin = Q2.5 / applications ,

ymax = Q97.5 / applications),

color = wes_palette("Moonrise2")[1],

shape = 1, alpha = 1/3) +

geom_point(color = wes_palette("Moonrise2")[2]) +

geom_line(aes(group = dept),

color = wes_palette("Moonrise2")[2]) +

geom_text(data = text,

aes(y = admit, label = dept),

color = wes_palette("Moonrise2")[2],

family = "serif") +

labs(title = "Posterior validation check",

y = "Proportion admitted") +

coord_cartesian(ylim = c(0, 1)) +

theme(axis.ticks.x = element_blank())

The model predictions are imperfect, but way more valid than before. The posterior looks reasonably multivariate Gaussian.

pairs(b10.9,

off_diag_args = list(size = 1/10, alpha = 1/6))

10.1.3.1 Rethinking: Simpson’s paradox is not a paradox.

This empirical example is a famous one in statistical teaching. It is often used to illustrate a phenomenon known as Simpson’s paradox. Like most paradoxes, there is no violation of logic, just of intuition. And since different people have different intuition, Simpson’s paradox means different things to different people. The poor intuition being violated in this case is that a positive association in the entire population should also hold within each department. (p. 309, emphasis in the original)

In my field of clinical psychology, Simpson’s paradox is an important, if under-appreciated, phenomenon. If you’re in the social sciences as well, I highly recommend spending more time thinking about it. To get you started, I blogged about it here and Kievit et al. (2013) wrote a great tutorial paper called Simpson’s paradox in psychological science: a practical guide.

10.1.3.2 Overthinking: WAIC and aggregated binomial models.

McElreath wrote:

The

WAICfunction inrethinkingdetects aggregated binomial models and automatically splits them apart into 0/1 Bernoulli trials, for the purpose of calculating WAIC. It does this, because WAIC is computed point by point (see Chapter 6). So what you define as a “point” affects WAIC’s value. In an aggregated binomial each “point” is a bunch of independent trials that happen to share the same predictor values. In order for the disaggregated and aggregated models to agree, it makes sense to use the disaggregated representation. (p. 309)

To my knowledge, brms::waic() and brms::loo() do not do this, which might well be why some of our values didn’t match up with those in the text. If you have additional insight on this, please share with the rest of the class.

10.1.4 Fitting binomial regressions with glm().

We’re not here to learn frequentist code, so we’re going to skip most of this section. But model m.good is worth fitting. Here are the data.

# outcome and predictor almost perfectly associated

y <- c(rep(0, 10), rep(1, 10))

x <- c(rep(-1, 9), rep(1, 11))We are going to depart from McElreath’s naming convention in the text and call this fit b10.good. It’ll make it easier to find in this project’s fits folder.

b10.good <-

brm(data = list(y = y, x = x),

family = binomial,

y | trials(1) ~ 1 + x,

prior = c(prior(normal(0, 10), class = Intercept),

prior(normal(0, 10), class = b)),

seed = 10,

file = "fits/b10.good") Our model summary will differ a bit from the one in the text. It seems this is because of the MAP/HMC contrast and our choice of priors.

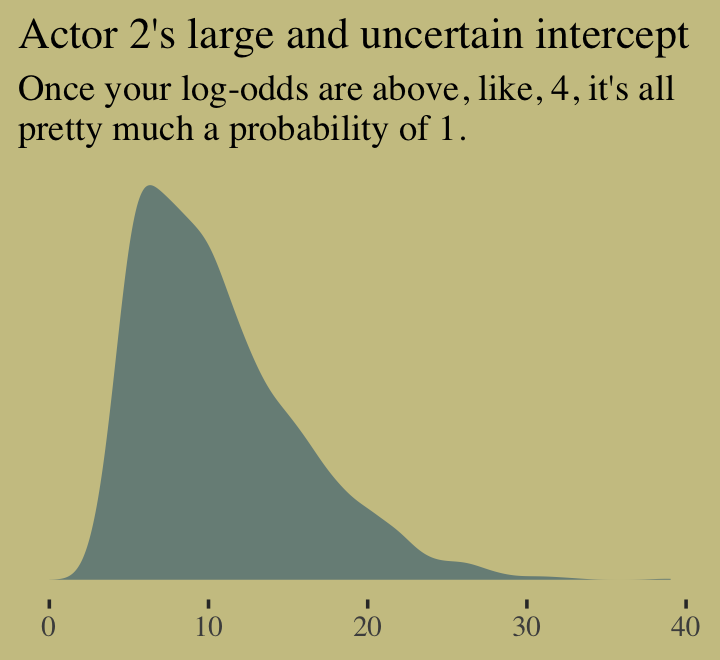

print(b10.good)## Family: binomial

## Links: mu = logit

## Formula: y | trials(1) ~ 1 + x

## Data: list(y = y, x = x) (Number of observations: 20)

## Draws: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept -5.44 4.36 -15.65 0.36 1.01 431 605

## x 8.20 4.35 2.39 18.48 1.01 442 606

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

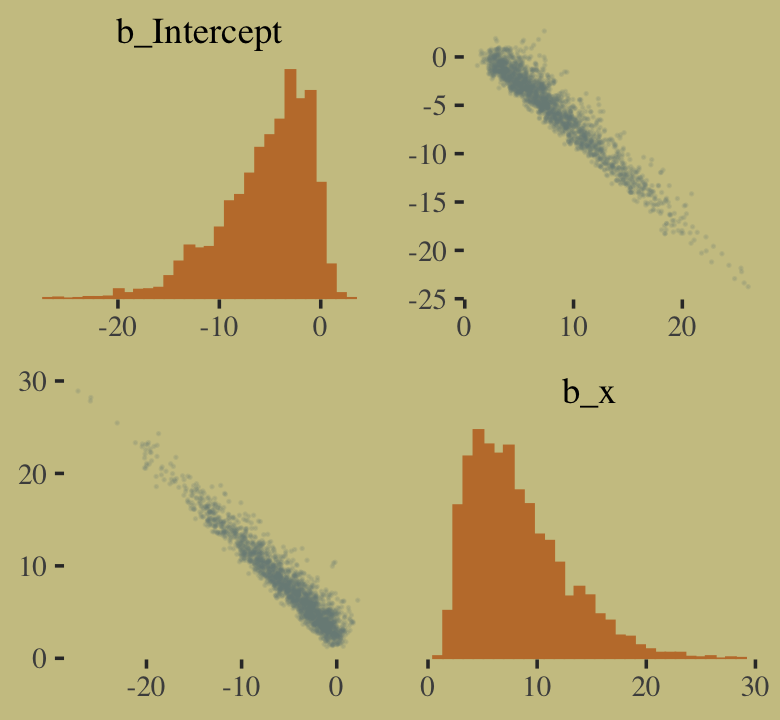

## scale reduction factor on split chains (at convergence, Rhat = 1).You might experiment with different prior \(\textit{SD}\)’s to see how they influence the posterior \(\textit{SD}\)’s. Anyways, here’s the pairs() plot McElreath excluded from the text.

pairs(b10.good,

off_diag_args = list(size = 1/10, alpha = 1/6))

That posterior, my friends, is not multivariate Gaussian. The plot deserves an extensive quote from McElreath.

Inspecting the pairs plot (

notshown) demonstrates just how subtle even simple models can be, once we start working with GLMs. I don’t say this to scare the reader. But it’s true that even simple models can behave in complicated ways. How you fit the model is part of the model, and in principle no GLM is safe for MAP estimation. (p. 311, emphasis added)

10.2 Poisson regression

When a binomial distribution has a very small probability of an event \(p\) and a very large number of trials \(n\), then it takes on a special shape. The expected value of a binomial distribution is just \(np\), and its variance is \(np(1 - p)\). But when \(n\) is very large and \(p\) is very small, then these are approximately the same. (p. 311)

Data of this kind are often called count data. Here we simulate some.

set.seed(10) # make the results reproducible

tibble(y = rbinom(1e5, 1000, 1/1000)) %>%

summarise(y_mean = mean(y),

y_variance = var(y))## # A tibble: 1 × 2

## y_mean y_variance

## <dbl> <dbl>

## 1 0.994 0.995Yes, those statistics are virtually the same. When dealing with Poisson data, \(\mu = \sigma^2\). When you have a number of trials for which \(n\) is unknown or much larger than seen in the data, the Poisson likelihood is a useful tool. We define it as

\[y \sim \operatorname{Poisson}(\lambda).\]

As \(\lambda\) expresses both mean and variance because, within this model, the variance scales right along with the mean. Since \(\lambda\) is constrained to be positive, we typically use the log link. Thus the basic Poisson regression model is

\[\begin{align*} y_i & \sim \operatorname{Poisson}(\lambda_i) \\ \log (\lambda_i) & = \alpha + \beta x_i, \end{align*}\]

where all model parameters receive priors following the forms we’ve been practicing. We read further:

The parameter \(\lambda\) is the expected value, but it’s also commonly thought of as a rate. Both interpretations are correct, and realizing this allows to make Poisson models for which the exposure varies across cases \(i\)…

Implicitly, \(\lambda\) is equal to an expected number of events, \(\mu\), per unit of time or distance, \(\tau\). This implies that \(\lambda = \mu/\tau\), which lets us redefine the link:

\[\begin{align*} y_i & \sim \operatorname{Poisson}(\lambda_i) \\ \log \lambda_i & = \log \frac{\mu_i}{\tau_i} = \alpha + \beta x_i \end{align*}\]

Since the logarithm of a ratio is the same as a difference of logarithms, we can also write:

\[\log \lambda_i = \log \mu_i - \log \tau_i = \alpha + \beta x_i\]

These \(\tau\) values are the “exposures.” So if different observations \(i\) have different exposures, this implies that the expected value on row \(i\) is given by:

\[\log \mu_i = \log \tau_i + \alpha + \beta x_i\]

When \(\tau_i = 1\), then \(\log \tau_i = 0\) and we’re back where we started. But when the exposure varies across cases, then \(\tau_i\) does the important work of correctly scaling the expected number of events for each case \(i\). (pp. 312–313)

10.2.1 Example: Oceanic tool complexity.

Load the Kline data (see Kline & Boyd, 2010).

library(rethinking)

data(Kline)

d <- KlineSwitch from rethinking to brms.

detach(package:rethinking, unload = T)

library(brms)

rm(Kline)

d## culture population contact total_tools mean_TU

## 1 Malekula 1100 low 13 3.2

## 2 Tikopia 1500 low 22 4.7

## 3 Santa Cruz 3600 low 24 4.0

## 4 Yap 4791 high 43 5.0

## 5 Lau Fiji 7400 high 33 5.0

## 6 Trobriand 8000 high 19 4.0

## 7 Chuuk 9200 high 40 3.8

## 8 Manus 13000 low 28 6.6

## 9 Tonga 17500 high 55 5.4

## 10 Hawaii 275000 low 71 6.6Here are our new columns.

d <-

d %>%

mutate(log_pop = log(population),

contact_high = ifelse(contact == "high", 1, 0))Our statistical model will follow the form

\[\begin{align*} \text{total_tools}_i & \sim \operatorname{Poisson}(\lambda_i) \\ \log (\lambda_i) & = \alpha + \beta_1 \text{log_pop}_i + \beta_2 \text{contact_high}_i + \beta_3 \text{contact_high}_i \times \text{log_pop}_i \\ \alpha & \sim \operatorname{Normal}(0, 100) \\ \beta_1 & \sim \operatorname{Normal}(0, 1) \\ \beta_2 & \sim \operatorname{Normal}(0, 1) \\ \beta_3 & \sim \operatorname{Normal}(0, 1). \end{align*}\]

The only new thing in our model code is family = poisson. brms defaults to the log() link.

b10.10 <-

brm(data = d,

family = poisson,

total_tools ~ 1 + log_pop + contact_high + contact_high:log_pop,

prior = c(prior(normal(0, 100), class = Intercept),

prior(normal(0, 1), class = b)),

iter = 3000, warmup = 1000, chains = 4, cores = 4,

seed = 10,

file = "fits/b10.10") print(b10.10)## Family: poisson

## Links: mu = log

## Formula: total_tools ~ 1 + log_pop + contact_high + contact_high:log_pop

## Data: d (Number of observations: 10)

## Draws: 4 chains, each with iter = 3000; warmup = 1000; thin = 1;

## total post-warmup draws = 8000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 0.93 0.37 0.21 1.64 1.00 3816 4552

## log_pop 0.26 0.04 0.20 0.34 1.00 4107 4468

## contact_high -0.11 0.82 -1.72 1.51 1.00 2801 3560

## log_pop:contact_high 0.04 0.09 -0.13 0.22 1.00 2823 3585

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).We can use vcov() to extract the correlation matrix for the parameters.

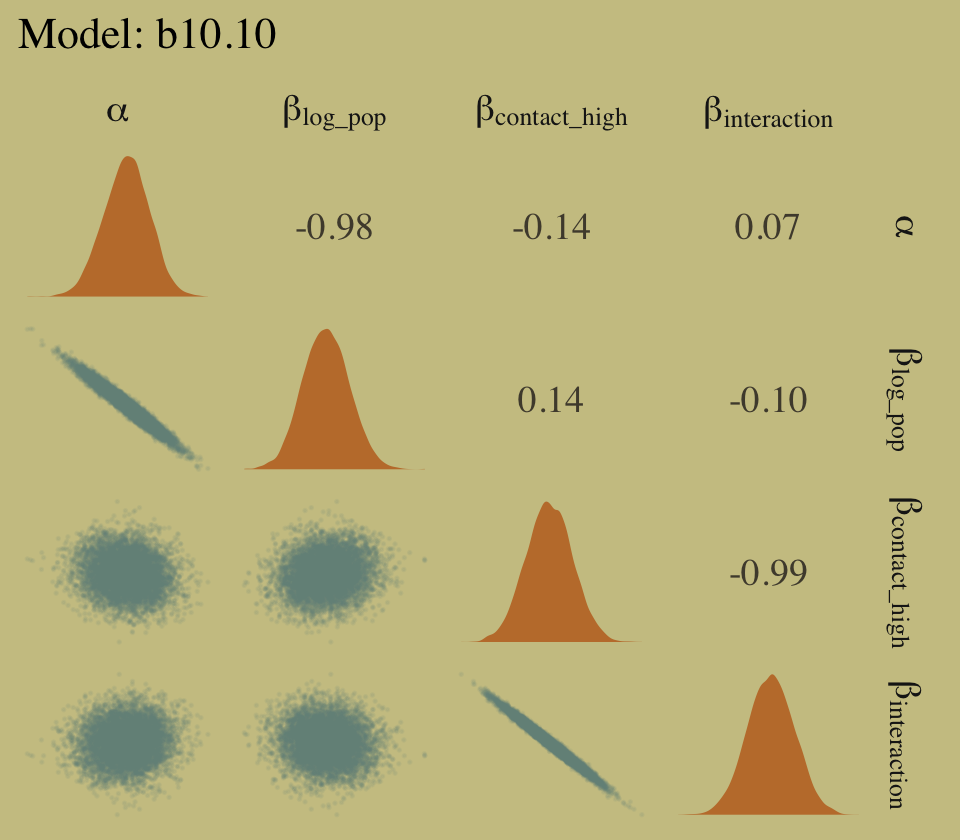

vcov(b10.10, cor = T) %>%

round(digits = 2)## Intercept log_pop contact_high log_pop:contact_high

## Intercept 1.00 -0.98 -0.14 0.07

## log_pop -0.98 1.00 0.14 -0.10

## contact_high -0.14 0.14 1.00 -0.99

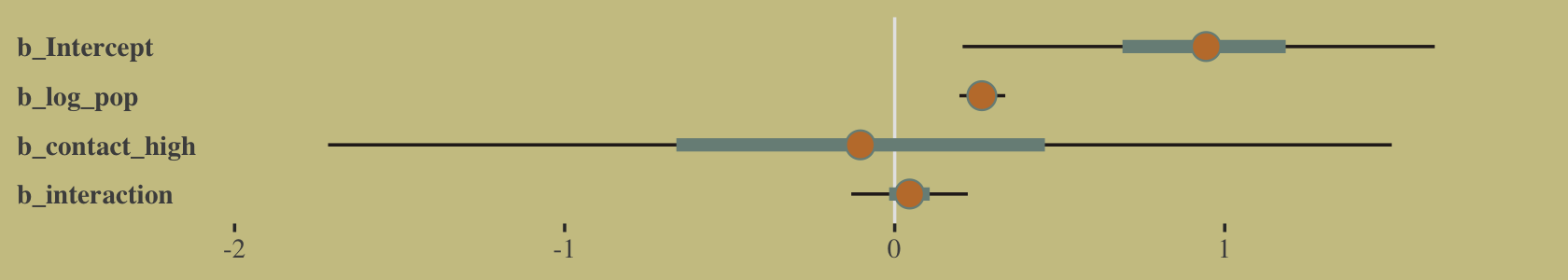

## log_pop:contact_high 0.07 -0.10 -0.99 1.00And here’s the coefficient plot via bayesplot::mcmc_intervals().

post <- as_draws_df(b10.10)

# we'll set a renewed color theme

color_scheme_set(wes_palette("Moonrise2")[c(2, 1, 4, 2, 1, 1)])

post %>%

rename(b_interaction = `b_log_pop:contact_high`) %>%

mcmc_intervals(pars = vars(starts_with("b_")),

prob = .5,

prob_outer = .95) +

theme(axis.text.y = element_text(hjust = 0),

axis.ticks.y = element_blank())

How plausible is it a high-contact island will have more tools than a low-contact island?

post <-

post %>%

mutate(lambda_high = exp(b_Intercept + b_contact_high + (b_log_pop + `b_log_pop:contact_high`) * 8),

lambda_low = exp(b_Intercept + b_log_pop * 8)) %>%

mutate(diff = lambda_high - lambda_low)

post %>%

summarise(sum = sum(diff > 0) / length(diff))## # A tibble: 1 × 1

## sum

## <dbl>

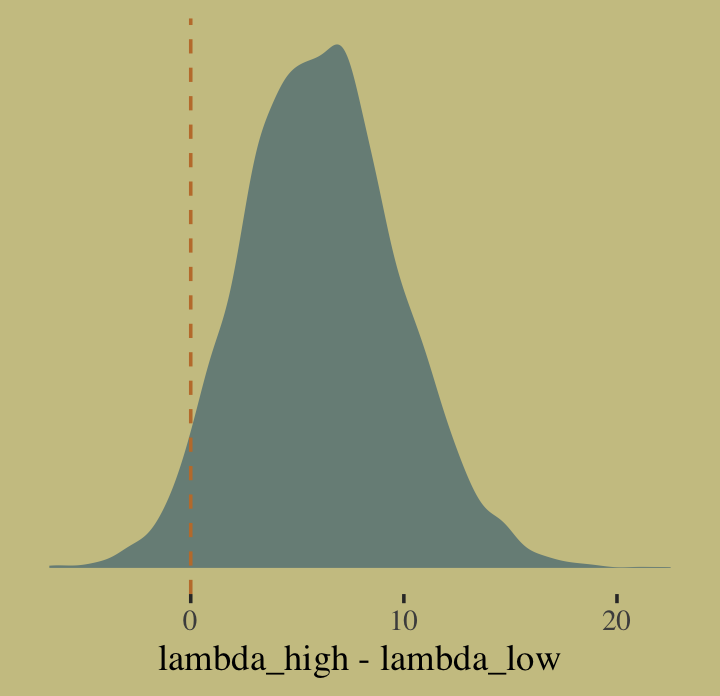

## 1 0.960Quite, it turns out. Behold the corresponding Figure 10.8.a.

post %>%

ggplot(aes(x = diff)) +

geom_density(color = "transparent",

fill = wes_palette("Moonrise2")[1]) +

geom_vline(xintercept = 0, linetype = 2,

color = wes_palette("Moonrise2")[2]) +

scale_y_continuous(NULL, breaks = NULL) +

xlab("lambda_high - lambda_low")

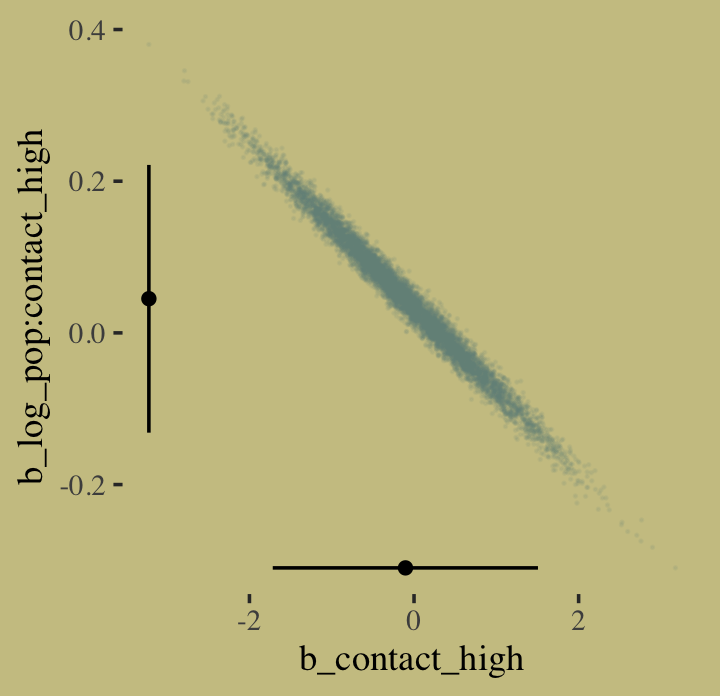

I’m not happy with how clunky this solution is, but one way to get those marginal dot and line plots for the axes is to make intermediary tibbles. Anyway, here’s a version of Figure 10.8.b.

# intermediary tibbles for our the dot and line portoin of the plot

point_tibble <-

tibble(x = c(median(post$b_contact_high), min(post$b_contact_high)),

y = c(min(post$`b_log_pop:contact_high`), median(post$`b_log_pop:contact_high`)))

line_tibble <-

tibble(parameter = rep(c("b_contact_high", "b_log_pop:contact_high"), each = 2),

x = c(quantile(post$b_contact_high, probs = c(.025, .975)),

rep(min(post$b_contact_high), times = 2)),

y = c(rep(min(post$`b_log_pop:contact_high`), times = 2),

quantile(post$`b_log_pop:contact_high`, probs = c(.025, .975))))

# the plot

post %>%

ggplot(aes(x = b_contact_high, y = `b_log_pop:contact_high`)) +

geom_point(color = wes_palette("Moonrise2")[1],

size = 1/10, alpha = 1/10) +

geom_point(data = point_tibble,

aes(x = x, y = y)) +

geom_line(data = line_tibble,

aes(x = x, y = y, group = parameter))

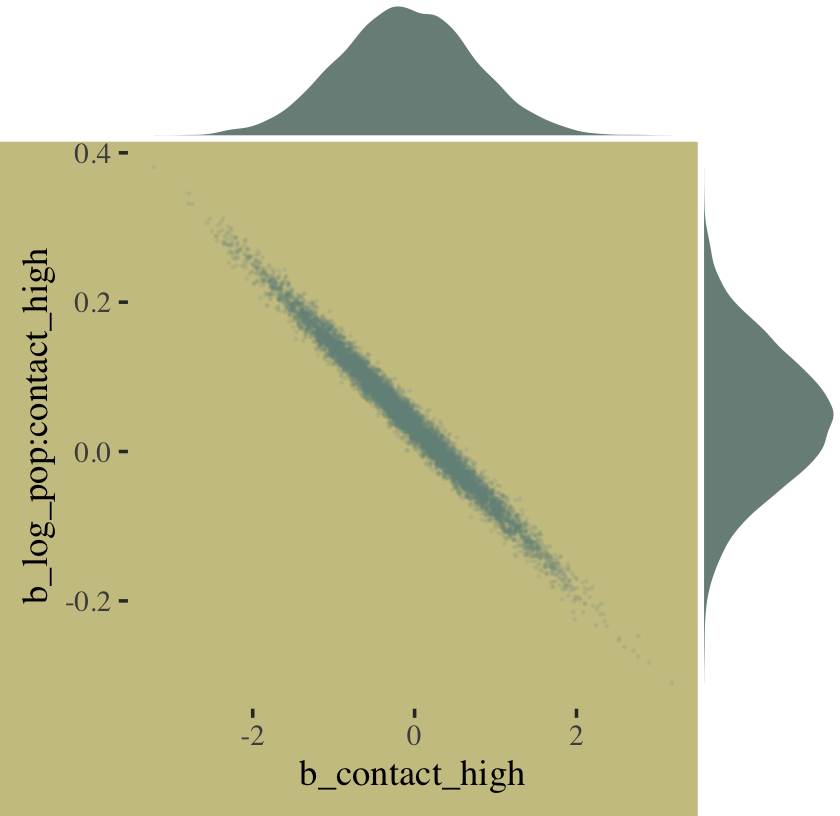

The ggMarginal() function from the ggExtra package (Attali & Baker, 2022) offers an interesting alternative.

library(ggExtra)

# the base plot

p <-

post %>%

ggplot(aes(x = b_contact_high, y = `b_log_pop:contact_high`)) +

geom_point(color = wes_palette("Moonrise2")[1],

size = 1/10, alpha = 1/10)

# add the marginal plots

p %>%

ggMarginal(data = post,

type = 'density',

color = "transparent",

fill = wes_palette("Moonrise2")[1], size = 4)

To get a feel for what’s possible with ggMarginal(), check out Dean Attali’s great shiny app.

Here we deconstruct model b10.10, bit by bit.

# no interaction

b10.11 <-

update(b10.10, formula = total_tools ~ 1 + log_pop + contact_high,

seed = 10,

file = "fits/b10.11")

# no contact rate

b10.12 <-

update(b10.10, formula = total_tools ~ 1 + log_pop,

seed = 10,

file = "fits/b10.12")

# no log-population

b10.13 <-

update(b10.10, formula = total_tools ~ 1 + contact_high,

seed = 10,

file = "fits/b10.13")

# intercept only

b10.14 <-

update(b10.10, formula = total_tools ~ 1,

seed = 10,

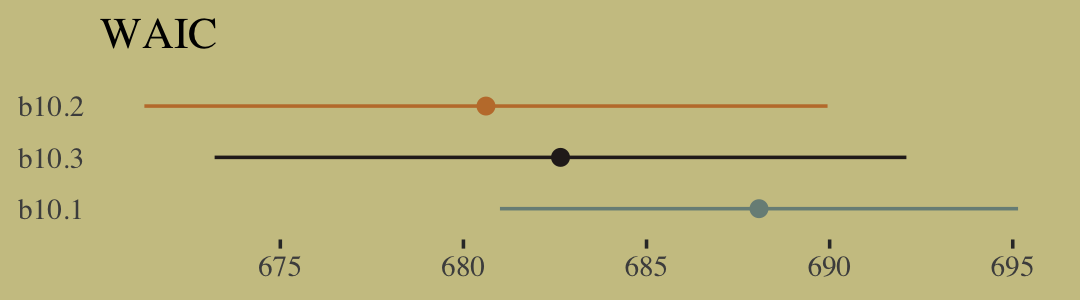

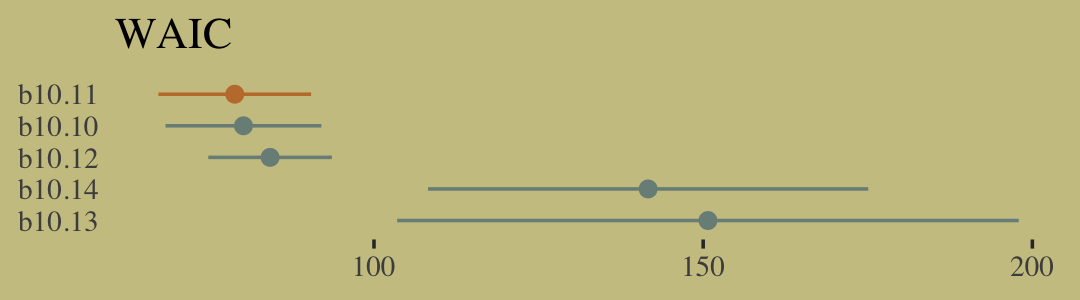

file = "fits/b10.14")I know we got all excited with the LOO, above. Let’s just be lazy and go WAIC. [Though beware, the LOO opens up a similar can of worms, here.]

b10.10 <- add_criterion(b10.10, criterion = "waic")

b10.11 <- add_criterion(b10.11, criterion = "waic")

b10.12 <- add_criterion(b10.12, criterion = "waic")

b10.13 <- add_criterion(b10.13, criterion = "waic")

b10.14 <- add_criterion(b10.14, criterion = "waic")Now compare them.

w <- loo_compare(b10.10, b10.11, b10.12, b10.13, b10.14, criterion = "waic")

cbind(waic_diff = w[, 1] * -2,

se = w[, 2] * 2) %>%

round(digits = 2)## waic_diff se

## b10.11 0.00 0.00

## b10.10 1.32 1.32

## b10.12 5.36 8.21

## b10.14 62.79 34.57

## b10.13 71.87 46.93Let’s get those WAIC weights, too.

model_weights(b10.10, b10.11, b10.12, b10.13, b10.14, weights = "waic") %>%

round(digits = 2)## b10.10 b10.11 b10.12 b10.13 b10.14

## 0.33 0.63 0.04 0.00 0.00Now wrangle w a little and make the WAIC plot.

w %>%

data.frame() %>%

rownames_to_column(var = "model") %>%

ggplot(aes(x = waic,

xmin = waic - se_waic,

xmax = waic + se_waic,

y = reorder(model, -waic),

color = model)) +

geom_pointrange(shape = 16, show.legend = F) +

scale_color_manual(values = wes_palette("Moonrise2")[c(1, 2, 1, 1, 1)]) +

labs(title = "WAIC",

x = NULL,

y = NULL) +

theme(axis.ticks.y = element_blank())

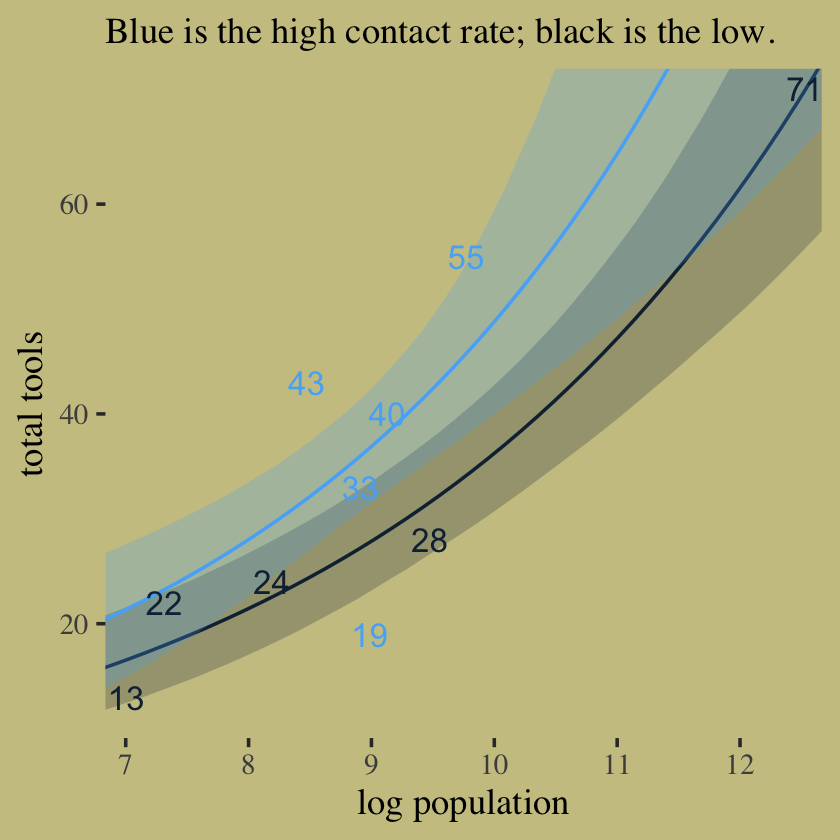

Here’s our version of Figure 10.9. Recall, to do an “ensemble” posterior prediction in brms, one uses the pp_average() function. I know we were just lazy and focused on the WAIC. But let’s play around, a bit. Here we’ll weight the models based on the LOO by adding a weights = "loo" argument to the pp_average() function. If you check the corresponding section of the brms reference manual (Bürkner, 2022h), you’ll find several weighting schemes.

nd <-

crossing(contact_high = 0:1,

log_pop = seq(from = 6.5, to = 13, length.out = 50))

ppa <-

pp_average(b10.10, b10.11, b10.12,

weights = "loo",

method = "fitted",

newdata = nd) %>%

as_tibble() %>%

bind_cols(nd)

ppa %>%

ggplot(aes(x = log_pop, group = contact_high, color = contact_high)) +

geom_smooth(aes(y = Estimate, ymin = Q2.5, ymax = Q97.5, fill = contact_high),

stat = "identity",

alpha = 1/4, linewidth = 1/2) +

geom_text(data = d,

aes(y = total_tools, label = total_tools),

size = 3.5) +

labs(subtitle = "Blue is the high contact rate; black is the low.",

x = "log population",

y = "total tools") +

coord_cartesian(xlim = c(7.1, 12.4),

ylim = c(12, 70)) +

theme(legend.position = "none",

panel.border = element_blank())

In case you were curious, here are those LOO weights.

model_weights(b10.10, b10.11, b10.12,

weights = "loo")## b10.10 b10.11 b10.12

## 0.31099478 0.63757617 0.0514290510.2.2 MCMC islands.

We fit our analogue to m10.10stan, b10.10, some time ago.

print(b10.10)## Family: poisson

## Links: mu = log

## Formula: total_tools ~ 1 + log_pop + contact_high + contact_high:log_pop

## Data: d (Number of observations: 10)

## Draws: 4 chains, each with iter = 3000; warmup = 1000; thin = 1;

## total post-warmup draws = 8000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 0.93 0.37 0.21 1.64 1.00 3816 4552

## log_pop 0.26 0.04 0.20 0.34 1.00 4107 4468

## contact_high -0.11 0.82 -1.72 1.51 1.00 2801 3560

## log_pop:contact_high 0.04 0.09 -0.13 0.22 1.00 2823 3585

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

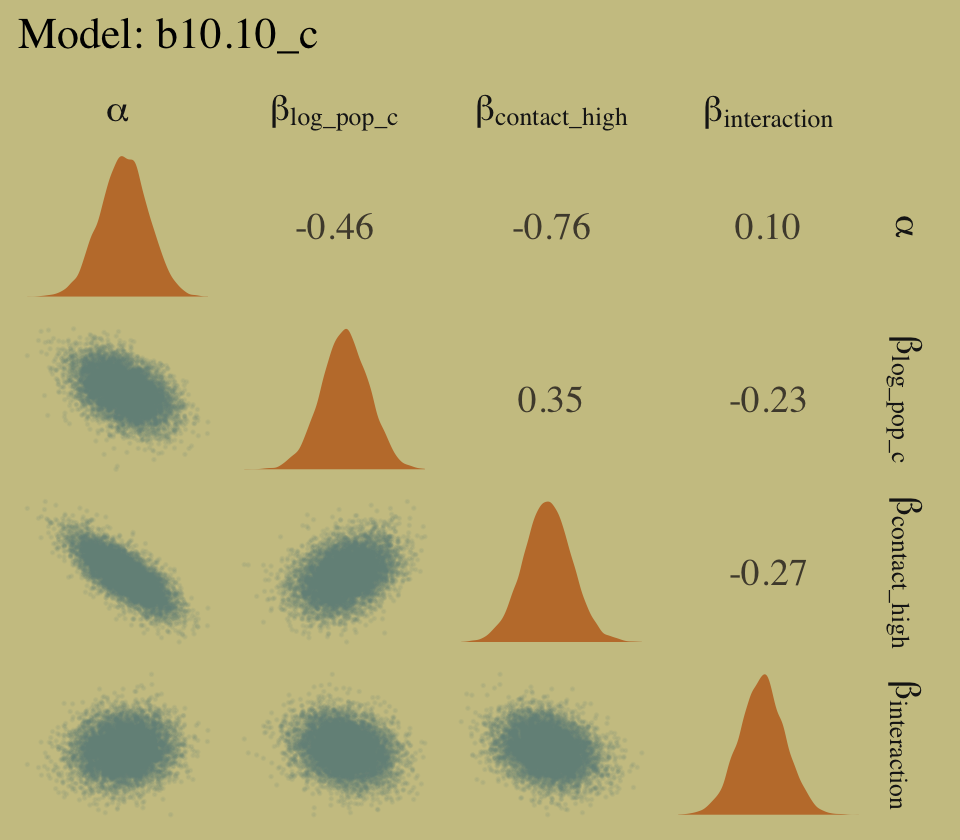

## scale reduction factor on split chains (at convergence, Rhat = 1).Center log_pop.

d <-

d %>%

mutate(log_pop_c = log_pop - mean(log_pop))Now fit the log_pop-centered model.

b10.10_c <-

brm(data = d,

family = poisson,

total_tools ~ 1 + log_pop_c + contact_high + contact_high:log_pop_c,

prior = c(prior(normal(0, 10), class = Intercept),

prior(normal(0, 1), class = b)),

iter = 3000, warmup = 1000, chains = 4, cores = 4,

seed = 10,

file = "fits/b10.10_c")print(b10.10_c)## Family: poisson

## Links: mu = log

## Formula: total_tools ~ 1 + log_pop_c + contact_high + contact_high:log_pop_c

## Data: d (Number of observations: 10)

## Draws: 4 chains, each with iter = 3000; warmup = 1000; thin = 1;

## total post-warmup draws = 8000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 3.31 0.09 3.14 3.48 1.00 5352 6011

## log_pop_c 0.26 0.04 0.19 0.33 1.00 6267 6190

## contact_high 0.28 0.12 0.05 0.51 1.00 5639 5820

## log_pop_c:contact_high 0.07 0.17 -0.27 0.40 1.00 6428 5818

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

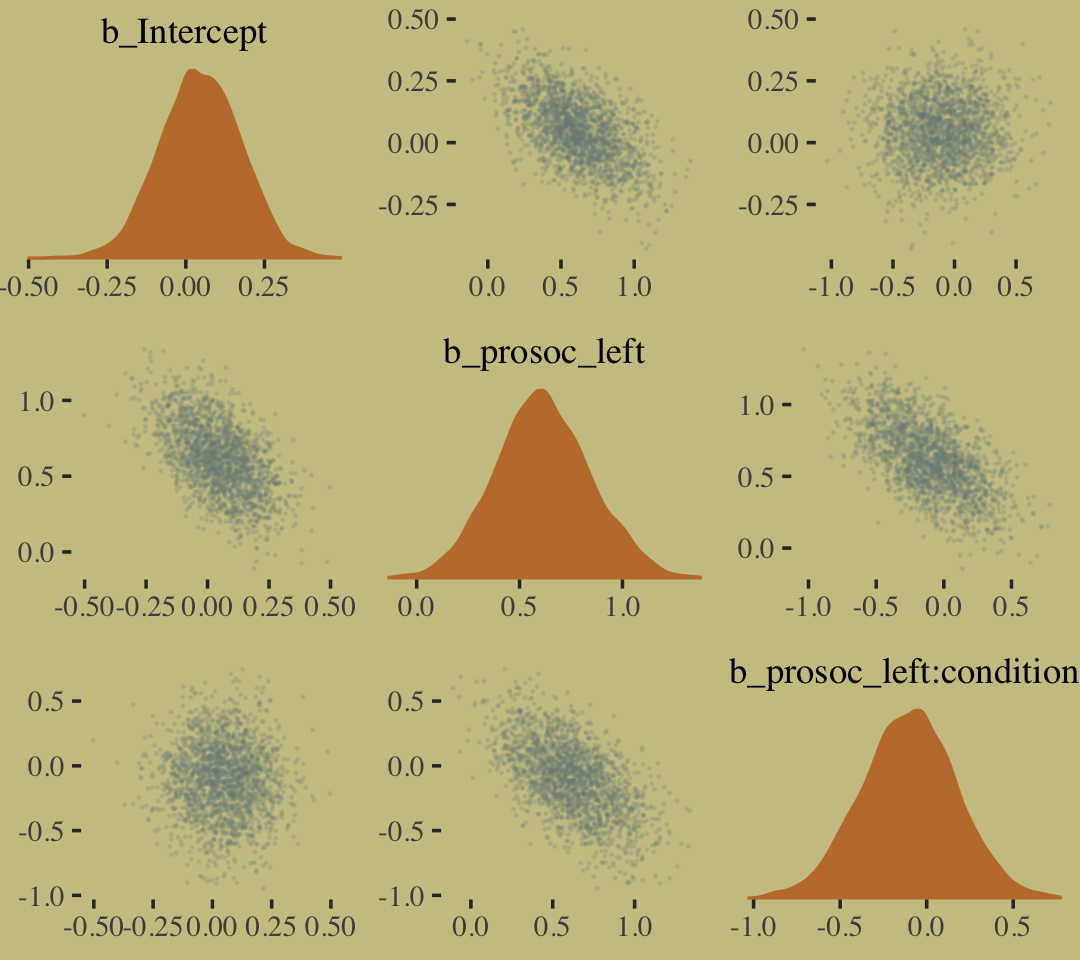

## scale reduction factor on split chains (at convergence, Rhat = 1).We’ve been using bayesplot::mcmc_pairs() a lot for our posterior pairs plots. Let’s get in a little practice with GGally::ggpairs() for Figure 10.10. In Chapters 5 and 8, we used custom functions to augment the default ggpairs() output. We’ll continue that trend, here. Here are the custom functions for the upper triangle, the diagonal, and the lower triangle.

my_upper <- function(data, mapping, ...) {

# get the x and y data to use the other code

x <- eval_data_col(data, mapping$x)

y <- eval_data_col(data, mapping$y)

r <- unname(cor.test(x, y)$estimate)

rl <- formatC(r, format = 'f', digits = 2)

# plot the cor value

ggally_text(

label = rl,

mapping = aes(),

size = 4,

color = wes_palette("Moonrise2")[4],

alpha = 4/5,

family = "Times") +

theme_void()

}

my_diag <- function(data, mapping, ...) {

ggplot(data = data, mapping = mapping) +

geom_density(fill = wes_palette("Moonrise2")[2], linewidth = 0) +

theme_void()

}

my_lower <- function(data, mapping, ...) {

ggplot(data = data, mapping = mapping) +

geom_point(color = wes_palette("Moonrise2")[1],

size = 1/10, alpha = 1/10) +

theme_void()

}To learn more about the nature of the code for the my_upper() function, check out issue #139 in the GGally GitHub repository. Here are our plots for the left and right panels of Figure 10.10.

library(GGally)

# left panel for `b10.10`

as_draws_df(b10.10) %>%

select(starts_with("b_")) %>%

set_names(c("alpha", "beta[log_pop]", "beta[contact_high]", "beta[interaction]")) %>%

ggpairs(upper = list(continuous = my_upper),

diag = list(continuous = my_diag),

lower = list(continuous = my_lower),

labeller = "label_parsed") +

ggtitle("Model: b10.10") +

theme(strip.text = element_text(size = 11))

# right panel for `b10.10_c`

as_draws_df(b10.10_c) %>%

select(starts_with("b_")) %>%

set_names(c("alpha", "beta[log_pop_c]", "beta[contact_high]", "beta[interaction]")) %>%

ggpairs(upper = list(continuous = my_upper),

diag = list(continuous = my_diag),

lower = list(continuous = my_lower),

labeller = "label_parsed") +

ggtitle("Model: b10.10_c") +

theme(strip.text = element_text(size = 11))

In case you were wondering, it turns out you cannot combine GGally::ggpairs() plots with syntax from the patchwork package. Check out issue #52 from the patchwork GitHub repo to learn why.

10.2.3 Example: Exposure and the offset.

For the last Poisson example, we’ll look at a case where the exposure varies across observations. When the length of observation, area of sampling, or intensity of sampling varies, the counts we observe also naturally vary. Since a Poisson distribution assumes that the rate of events is constant in time (or space), it’s easy to handle this. All we need to do, as explained on page 312 [of the text], is to add the logarithm of the exposure to the linear model. The term we add is typically called an offset. (p. 321, emphasis in the original)

Here we simulate our data.

set.seed(10)

num_days <- 30

num_weeks <- 4

y <- rpois(num_days, 1.5)

y_new <- rpois(num_weeks, 0.5 * 7)Let’s make them tidy and add log_days.

(

d <-

tibble(y = c(y, y_new),

days = c(rep(1, num_days), rep(7, num_weeks)),

monastery = c(rep(0, num_days), rep(1, num_weeks))) %>%

mutate(log_days = log(days))

)## # A tibble: 34 × 4

## y days monastery log_days

## <int> <dbl> <dbl> <dbl>

## 1 1 1 0 0

## 2 1 1 0 0

## 3 1 1 0 0

## 4 2 1 0 0

## 5 0 1 0 0

## 6 1 1 0 0

## 7 1 1 0 0

## 8 1 1 0 0

## 9 2 1 0 0

## 10 1 1 0 0

## # … with 24 more rowsWith the brms package, you use the offset() syntax, in which you put a pre-processed variable like log_days or the log of a variable, such as log(days).

b10.15 <-

brm(data = d,

family = poisson,

y ~ 1 + offset(log_days) + monastery,

prior = c(prior(normal(0, 100), class = Intercept),

prior(normal(0, 1), class = b)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.15")As we look at the model summary, keep in mind that the parameters are on the per-one-unit-of-time scale. Since we simulated the data based on summary information from two units of time–one day and seven days–, this means the parameters are in the scale of \(\log (\lambda)\) per one day.

print(b10.15)## Family: poisson

## Links: mu = log

## Formula: y ~ 1 + offset(log_days) + monastery

## Data: d (Number of observations: 34)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 0.30 0.16 -0.04 0.61 1.00 2479 2240

## monastery -1.09 0.31 -1.69 -0.50 1.00 2720 2477

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).The model summary helps clarify that when you use offset(), brm() fixes the value. Thus there is no parameter estimate for the offset(). It’s a fixed part of the model not unlike how the \(\nu\) parameter of Student’s \(t\) gets fixed to infinity when you use the Gaussian likelihood.

To get the posterior distributions for average daily outputs for the old and new monasteries, respectively, we’ll use use the formulas

\[\begin{align*} \lambda_\text{old} & = \exp (\alpha) \;\;\; \text{and} \\ \lambda_\text{new} & = \exp (\alpha + \beta_\text{monastery}). \end{align*}\]

Following those transformations, we’ll summarize the \(\lambda\) distributions with medians and 89% HDIs with help from the tidybayes::mean_hdi() function.

library(tidybayes)

as_draws_df(b10.15) %>%

transmute(lambda_old = exp(b_Intercept),

lambda_new = exp(b_Intercept + b_monastery)) %>%

gather() %>%

mutate(key = factor(key, levels = c("lambda_old", "lambda_new"))) %>%

group_by(key) %>%

mean_hdi(value, .width = .89) %>%

mutate_if(is.double, round, digits = 2)## # A tibble: 2 × 7

## key value .lower .upper .width .point .interval

## <fct> <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 lambda_old 1.36 1.03 1.73 0.89 mean hdi

## 2 lambda_new 0.47 0.27 0.65 0.89 mean hdiAs McElreath pointed out in the text, “Your estimates will be slightly different, because you got different randomly simulated data” (p. 322). However, if you look back up to our simulation code, those median values are pretty close to the 1.5 and 0.5 values we plugged into the rpois() functions.

10.3 Other count regressions

The next two of the remaining four models are maximum entropy distributions for certain problem types. The last two are mixtures, of which we’ll see more in the next chapter.

10.3.1 Multinomial.

When more than two types of unordered events are possible, and the probability of each type of event is constant across trials, then the maximum entropy distribution is the multinomial distribution. [We] already met the multinomial, implicitly, in Chapter 9 when we tossed pebbles into buckets as an introduction to maximum entropy. The binomial is really a special case of this distribution. And so its distribution formula resembles the binomial, just extrapolated out to three or more types of events. If there are \(K\) types of events with probabilities \(p_1, \dots, p_K\), then the probability of observing \(y_1, \dots, y_K\) events of each type out of \(n\) trials is (p. 323):

\[\Pr (y_1, ..., y_K | n, p_1, ..., p_K) = \frac{n!}{\prod_i y_i!} \prod_{i = 1}^K p_i^{y_i}\]

Compare that equation with the simpler version in section 2.3.1 (page 33 in the text).

10.3.1.1 Explicit multinomial models.

“The conventional and natural link is this context is the multinomial logit. This link function takes a vector of scores, one for each \(K\) event types, and computed the probability of a particular type of event \(K\) as” (p. 323, emphasis in the original)

\[\Pr(k | s_1, s_2, \dots, s_K) = \frac{\exp (s_k)}{\sum_{i = 1}^K \exp (s_i)}\]

⚠️ McElreath then went on to explain how multinomial logistic regression models are among the more difficult of the GLMs to master. He wasn’t kidding. To get a grasp on these, we’ll cover them in a little more detail, and our section headers will be more granular than those in the text. Whereas McElreath kept all of the material in Section 10.3.1.1 together, we will break things up into subsections 10.3.1.1.1 through 10.3.1.1.3. ⚠️

10.3.1.1.1 “Intercepts”-only.

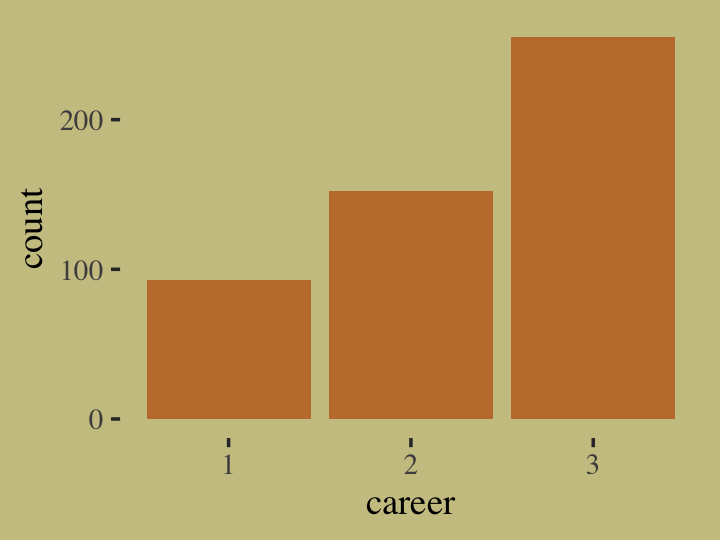

To begin, let’s simulate the data just like McElreath did in the R code 10.56 block.

library(rethinking)

# simulate career choices among 500 individuals

n <- 500 # number of individuals

income <- 1:3 # expected income of each career

score <- 0.5 * income # scores for each career, based on income

# next line converts scores to probabilities

p <- softmax(score[1], score[2], score[3])

# now simulate choice

# outcome career holds event type values, not counts

career <- rep(NA, n) # empty vector of choices for each individual

set.seed(10)

# sample chosen career for each individual

for(i in 1:n) career[i] <- sample(1:3, size = 1, prob = p)Here’s what the data look like.

tibble(career = career) %>%

ggplot(aes(x = career)) +

geom_bar(linewidth = 0, fill = wes_palette("Moonrise2")[2])

Our career variable is composed of three categories, 1:3, with each category more likely than the one before. Here’s a breakdown of the counts, percentages, and probabilities of each category.

tibble(career) %>%

count(career) %>%

mutate(percent = (100 * n / sum(n)),

probability = n / sum(n))## # A tibble: 3 × 4

## career n percent probability

## <int> <int> <dbl> <dbl>

## 1 1 93 18.6 0.186

## 2 2 152 30.4 0.304

## 3 3 255 51 0.51To help build an appreciation for how we simulated data with these proportions and how the process links in with the formulas, above, we’ll retrace the first few simulation steps within a tidyverse-centric work flow. Recall how in those first few steps we defined values for income, score, and p. Here they are again in a tibble.

tibble(income = 1:3) %>%

mutate(score = 0.5 * income) %>%

mutate(p = exp(score) / sum(exp(score)))## # A tibble: 3 × 3

## income score p

## <int> <dbl> <dbl>

## 1 1 0.5 0.186

## 2 2 1 0.307

## 3 3 1.5 0.506Notice how the values in the p column match up well with the probability values from the output from the block just above. Our simulation successfully produces data corresponding to the data-generating values. Woot! Also note how the code we just used to compute those p values, p = exp(score) / sum(exp(score)), corresponds nicely with the formula from above,

\[\Pr(k |s_1, s_2, \dots, s_K) = \frac{\exp (s_k)}{\sum_{i = 1}^K \exp (s_i)}.\]

What still might seem mysterious is what those \(s\) values in the equation are. In the simulation and in the prose, McElreath called them scores. Another way to think about them is as weights. The thing to get is that their exact values aren’t important so much as their difference one from another. You’ll note that score for income == 2 was 0.5 larger than that of income == 1. The same was true for income == 3 and income == 2. So if we add an arbitrary constant to each of those score values, like 104, we’ll get the same p values.

tibble(score = 104 + c(0.5, 1, 1.5)) %>%

mutate(p = exp(score) / sum(exp(score)))## # A tibble: 3 × 2

## score p

## <dbl> <dbl>

## 1 104. 0.186

## 2 105 0.307

## 3 106. 0.506Now keeping that in mind, recall how McElreath said that though we have \(K\) categories, \(K = 3\) in this case, we only estimate \(K - 1\) linear models. “In a multinomial (or categorical) GLM, you need \(K - 1\) linear models for \(K\) types of events” (pp. 323–324). Right before he showed the code for m10.16, he further wrote:

We also have to pick one of the event types to be the reference type. We’ll use the first one. Instead of getting a linear model, that type is assigned a constant value. Then the other types get linear models that contain parameters relative to the reference type. (p. 324)

In his model code (R code 10.57), you’ll see he used zero as that constant value. As it turns out, it is common practice to set the score value for the reference category to zero. It’s also a common practice to use the first event type as the reference category. Importantly, in his (2022g) vignette, Parameterization of response distributions in brms, Bürkner clarified the brms default is to use the first response category as the reference and set it to a zero as well. Returning to our tibble, here are what the p values for each income level are if we set the score for income == 1 to 0 and have each following score value increase by 0.5.

tibble(income = 1:3,

score = c(0, 0.5, 1)) %>%

mutate(p = exp(score) / sum(exp(score)))## # A tibble: 3 × 3

## income score p

## <int> <dbl> <dbl>

## 1 1 0 0.186

## 2 2 0.5 0.307

## 3 3 1 0.506Those p values are still the same as in the prior examples. If our model fitting is successful, our statistical model will return just those probability estimates. To get ready to fit our model, let’s switch out rethinking for brms.

detach(package:rethinking, unload = T)

library(brms)Before we fit the model, we might take a quick look at the prior structure with brms::get_prior().

get_prior(data = list(career = career),

family = categorical(link = logit),

career ~ 1)## prior class coef group resp dpar nlpar lb ub source

## (flat) Intercept default

## student_t(3, 0, 2.5) Intercept mu2 default

## student_t(3, 0, 2.5) Intercept mu3 defaultIn brms-parlance, this an Intercepts-only model. We have two “intercepts”, which are differentiated in the dpar column. We’ll talk more about what these are in just a bit; don’t worry. I show this here because as of brms 2.12.0, “specifying global priors for regression coefficients in categorical models is deprecated.” The upshot is even if we want to use the same prior for both, we need to use the dpar argument for each. With that in mind, here’s our multinomial model in brms. Do note the specification family = categorical(link = logit).

b10.16 <-

brm(data = list(career = career),

family = categorical(link = logit),

career ~ 1,

prior = c(prior(normal(0, 5), class = Intercept, dpar = mu2),

prior(normal(0, 5), class = Intercept, dpar = mu3)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.16")Check the results.

print(b10.16)## Family: categorical

## Links: mu2 = logit; mu3 = logit

## Formula: career ~ 1

## Data: list(career = career) (Number of observations: 500)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## mu2_Intercept 0.50 0.14 0.23 0.77 1.00 1405 1647

## mu3_Intercept 1.02 0.12 0.78 1.26 1.00 1490 1817

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).brms::brm() referred to the \(K\) categories as mu1, mu2, and mu3. Since career == 1 is the reference category, the score for which was set to zero, there is no parameter for mu1_Intercept. That’s a zero. Now notice how mu2_Intercept is about 0.5 and mu3_Intercept is about 1. That’s just like those score values from our last tibble block! But these parameters are of scores or weights; they are not probabilities. This means just applying the inv_logit_scaled() function to them won’t work.

fixef(b10.16)[, -2] %>% inv_logit_scaled()## Estimate Q2.5 Q97.5

## mu2_Intercept 0.6218770 0.5583327 0.6827385

## mu3_Intercept 0.7340148 0.6846142 0.7796135Those Estimate values are not the probability values we’re looking for. Why? Because the weights are all relative to one another. The easiest way to get what we want, the probabilities for the three categories, is with fitted(). Since this model has no predictors, only intercepts, we won’t specify any newdata. In such a case, fitted() will return fitted values for each case in the data. Going slow, let’s take a look at the structure of the output.

fitted(b10.16) %>% str()## num [1:500, 1:4, 1:3] 0.186 0.186 0.186 0.186 0.186 ...

## - attr(*, "dimnames")=List of 3

## ..$ : NULL

## ..$ : chr [1:4] "Estimate" "Est.Error" "Q2.5" "Q97.5"

## ..$ : chr [1:3] "P(Y = 1)" "P(Y = 2)" "P(Y = 3)"Just as expected, we have 500 rows–one for each case in the original data. We have four summary columns, the typical Estimate, Est.Error, Q2.5, and Q97.5. We also have a third dimension composed of three levels, P(Y = 1), P(Y = 2), and P(Y = 3). Those index which of the three career categories each probability summary is for. Since the results are identical for each row, we’ll simplify the output by only keeping the first row.

fitted(b10.16)[1, , ] %>%

round(digits = 2)## P(Y = 1) P(Y = 2) P(Y = 3)

## Estimate 0.19 0.30 0.51

## Est.Error 0.02 0.02 0.02

## Q2.5 0.15 0.26 0.47

## Q97.5 0.22 0.35 0.55If we take the transpose of that, it will put the results in the order we’re more accustomed to.

fitted(b10.16)[1, , ] %>%

t() %>%

round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## P(Y = 1) 0.19 0.02 0.15 0.22

## P(Y = 2) 0.30 0.02 0.26 0.35

## P(Y = 3) 0.51 0.02 0.47 0.55Now compare those summaries with the empirically-derived percent and probability values we computed earlier.

tibble(career) %>%

count(career) %>%

mutate(percent = (100 * n / sum(n)),

probability = n / sum(n))## # A tibble: 3 × 4

## career n percent probability

## <int> <int> <dbl> <dbl>

## 1 1 93 18.6 0.186

## 2 2 152 30.4 0.304

## 3 3 255 51 0.51We did it!

“Be aware that the estimates you get from these models are extraordinarily difficult to interpret. You absolutely must convert them to a vector of probabilities, to make much sense of them” (p. 325). Indeed. We spent a lot of time fussing about score values. But at no time did we really ever care about those. We wanted probabilities! And somewhat maddeningly, the parameters of our brm() model were in the metric of \(K - 1\) scores, not probabilities. Sigh.

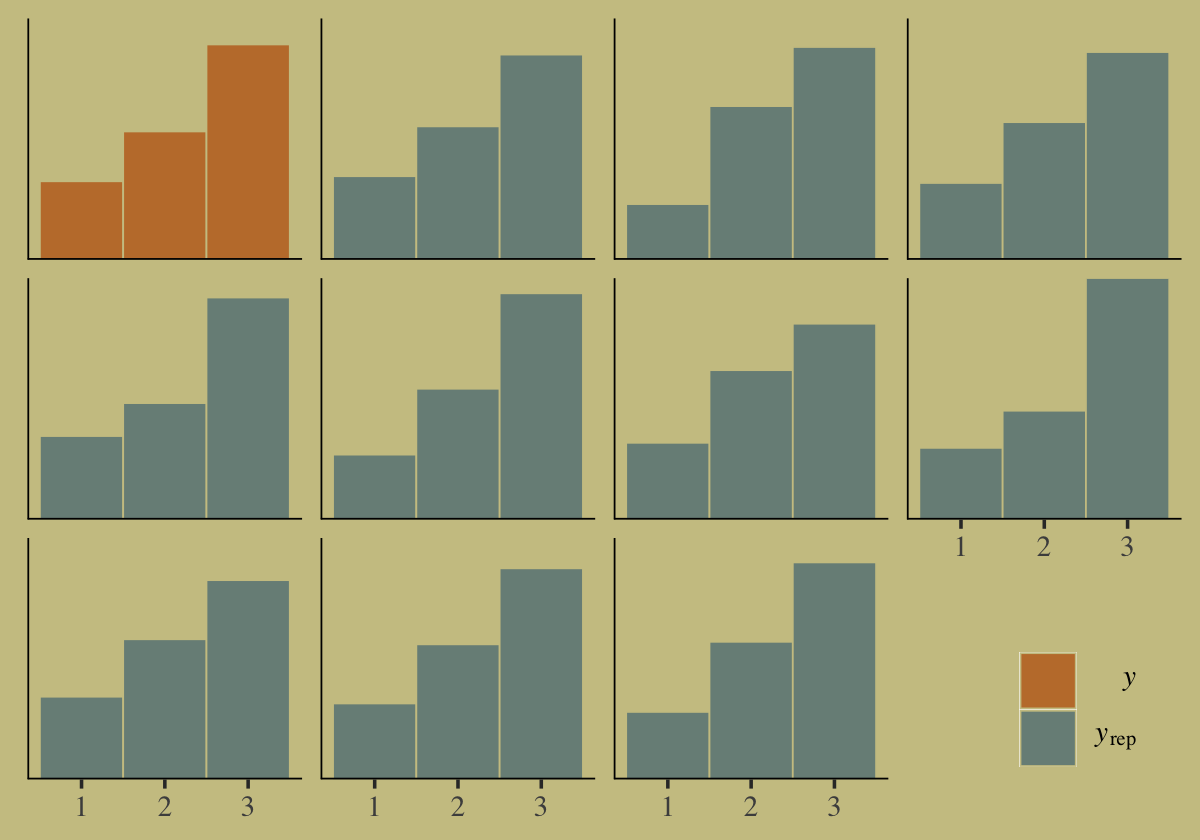

At least we can get an intuitive diagnostic summary with pp_check().

# this helps us set our custom color scheme

color_scheme_set(wes_palette("Moonrise2")[c(1, 3, 2, 2, 2, 3)])

pp_check(b10.16, type = "hist", binwidth = 1) +

theme(legend.position = c(.91, .125),

legend.key = element_rect(color = "transparent"))

Our posterior predictive check indicates the model produces synthetic data that resemble the original data.

Before we move on, I have a confession to make. If you look closely at the code McElreath used fo fit his m10.16, you’ll see it yields a single b parameter. But our b10.16 model has two parameters. What gives? Whereas we estimated the score values for career == 2 and career == 3 separately, McElreath used an interesting nonlinear syntax to get them with one. We’ll discuss this in detail in Section 10.3.1.1.3. In the meantime, we’ll move along to the next example in the text.

10.3.1.1.2 Add a predictor into the mix.

Here is the second data simulation, this time based on McElreath’s R code 10.58.

library(rethinking)

n <- 100

set.seed(10)

# simulate family incomes for each individual

family_income <- runif(n)

# assign a unique coefficient for each type of event

b <- (1:-1)

career <- rep(NA, n) # empty vector of choices for each individual

for (i in 1:n) {

score <- 0.5 * (1:3) + b * family_income[i]

p <- softmax(score[1], score[2], score[3])

career[i] <- sample(1:3, size = 1, prob = p)

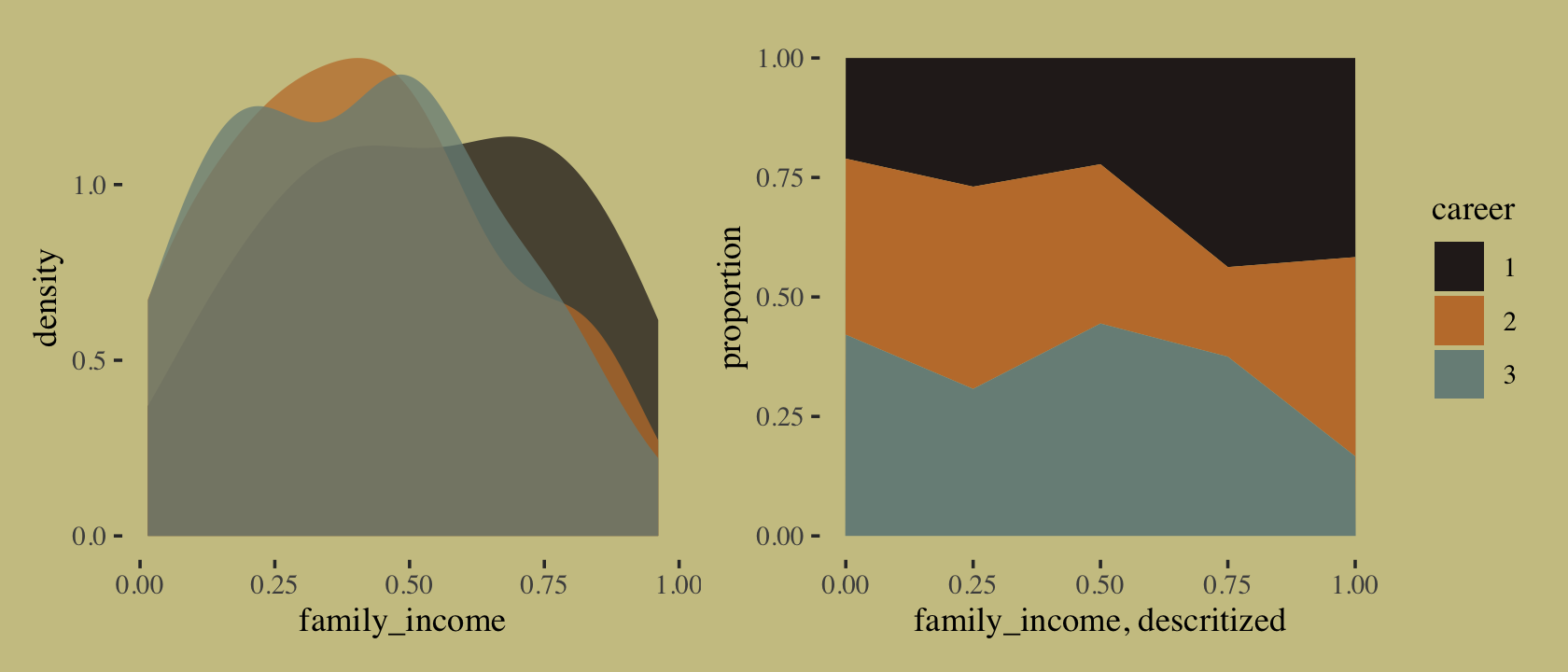

}We might examine what the family_income distributions look like across the three levels of career. We’ll do it in two plots and combine them with the patchwork syntax. The first will be overlapping densities. For the second, we’ll display the proportions of career across a discretized version of family_income in a stacked area plot.

p1 <-

tibble(career = as.factor(career),

family_income) %>%

ggplot(aes(x = family_income, fill = career)) +

geom_density(linewidth = 0, alpha = 3/4) +

scale_fill_manual(values = wes_palette("Moonrise2")[c(4, 2, 1)]) +

theme(legend.position = "none")

p2 <-

tibble(career = as.factor(career),

family_income) %>%

mutate(fi = santoku::chop_width(family_income, width = .2, start = 0, labels = 1:5)) %>%

count(fi, career) %>%

group_by(fi) %>%

mutate(proportion = n / sum(n)) %>%

mutate(f = as.double(fi)) %>%

ggplot(aes(x = (f - 1) / 4, y = proportion, fill = career)) +

geom_area() +

scale_fill_manual(values = wes_palette("Moonrise2")[c(4, 2, 1)]) +

xlab("family_income, descritized")

library(patchwork)

p1 + p2

If we were working with a larger \(N\), we could have gotten away with discretizing family_income into narrower bins. This is about as good as it gets with only 100 cases. It’s time to switch out rethinking for brms.

detach(package:rethinking, unload = T)

library(brms)Here’s the brms version of McElreath’s m10.17.

b10.17 <-

brm(data = list(career = career, # note how we used a list instead of a tibble

family_income = family_income),

family = categorical(link = logit),

career ~ 1 + family_income,

prior = c(prior(normal(0, 5), class = Intercept, dpar = mu2),

prior(normal(0, 5), class = Intercept, dpar = mu3),

prior(normal(0, 5), class = b, dpar = mu2),

prior(normal(0, 5), class = b, dpar = mu3)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.17")Check the summary.

print(b10.17)## Family: categorical

## Links: mu2 = logit; mu3 = logit

## Formula: career ~ 1 + family_income

## Data: list(career = career, family_income = family_incom (Number of observations: 100)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## mu2_Intercept 1.03 0.54 0.01 2.09 1.00 2626 2664

## mu3_Intercept 1.06 0.54 0.00 2.13 1.00 2663 2507

## mu2_family_income -1.77 1.00 -3.79 0.16 1.00 2701 2350

## mu3_family_income -1.78 1.01 -3.72 0.26 1.00 2736 2378

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

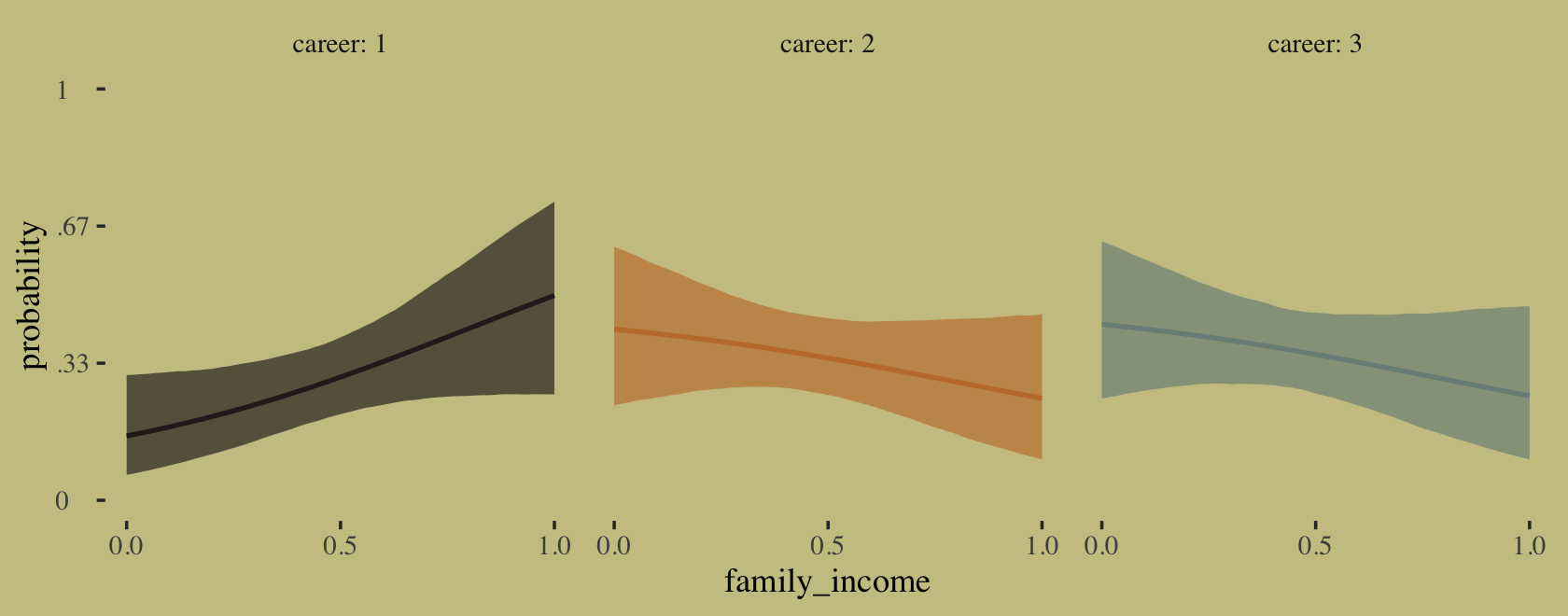

## scale reduction factor on split chains (at convergence, Rhat = 1).Happily, these results cohere with the rethinking model. Fit the model with McElreath’s rethinking::map() code and you’ll see. “Again, computing implied predictions is the safest way to interpret these models. They do a great job of classifying discrete, unordered events. But the parameters are on a scale that is very hard to interpret” (p. 325). Like before, we’ll do that with fitted(). Now we have a predictor, this time we will use the newdata argument.

nd <- tibble(family_income = seq(from = 0, to = 1, length.out = 60))

f <-

fitted(b10.17,

newdata = nd)First we’ll plot the fitted probabilities for each career level across the full range of family_income values.

# wrangle

rbind(f[, , 1],

f[, , 2],

f[, , 3]) %>%

data.frame() %>%

bind_cols(nd %>%

expand_grid(career = 1:3) %>%

arrange(career, family_income)) %>%

mutate(career = str_c("career: ", career)) %>%

# plot

ggplot(aes(x = family_income, y = Estimate,

ymin = Q2.5, ymax = Q97.5)) +

geom_ribbon(aes(fill = career),

alpha = 2/3) +

geom_line(aes(color = career),

linewidth = 3/4) +

scale_fill_manual(values = wes_palette("Moonrise2")[c(4, 2, 1)]) +

scale_color_manual(values = wes_palette("Moonrise2")[c(4, 2, 1)]) +

scale_x_continuous(breaks = 0:2 / 2) +

scale_y_continuous("probability", limits = c(0, 1),

breaks = 0:3 / 3,

labels = c("0", ".33", ".67", "1")) +

theme(axis.text.y = element_text(hjust = 0),

legend.position = "none") +

facet_wrap(~career)

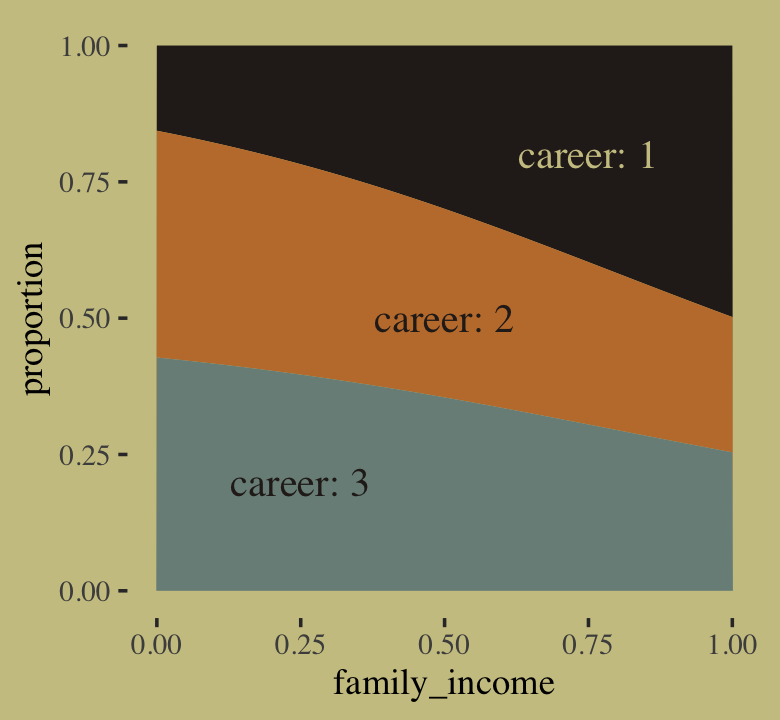

If we’re willing to summarize those fitted lines by their posterior means, we could also make a model-implied version of the stacked area plot from above.

# annotation

text <-

tibble(family_income = c(.25, .5, .75),

proportion = c(.2, .5, .8),

label = str_c("career: ", 3:1),

color = c("a", "a", "b"))

# wrangle

rbind(f[, , 1],

f[, , 2],

f[, , 3]) %>%

data.frame() %>%

bind_cols(nd %>%

expand_grid(career = 1:3) %>%

arrange(career, family_income)) %>%

group_by(family_income) %>%

mutate(proportion = Estimate / sum(Estimate),

career = factor(career)) %>%

# plot!

ggplot(aes(x = family_income, y = proportion)) +

geom_area(aes(fill = career)) +

geom_text(data = text,

aes(label = label, color = color),

family = "Times", size = 4.25) +

scale_color_manual(values = wes_palette("Moonrise2")[4:3]) +

scale_fill_manual(values = wes_palette("Moonrise2")[c(4, 2, 1)]) +

theme(legend.position = "none")

10.3.1.1.3 The non-linear syntax is the solution.

One of the things that differentiates this ebook with my (2023b) translation of McElreath’s (2020a) 2nd edition is the non-linear syntax. Virtually all of the models in this book can be handled with what you might call the conventional brms syntax. However, brms offers another very flexible way of specifying models with what Bürkner calls the non-linear syntax. For a detailed introduction, see Bürkner’s (2022d) vignette, Estimating non-linear models with brms. McElreath has peppered his 2nd edition with models that require this non-linear syntax, which means readers of my translation of the 2nd edition get a lot of practice using the non-linear syntax. But for this first edition, McElreath’s m10.16 is the only model requiring the non-linear syntax. In this section, I’ll provide a brief introduction.

First, let’s simulate the data from McElreath’s R code 10.56 block again.

library(rethinking)

# simulate career choices among 500 individuals

n <- 500 # number of individuals

income <- 1:3 # expected income of each career

score <- 0.5 * income # scores for each career, based on income

# next line converts scores to probabilities

p <- softmax(score[1], score[2], score[3])

# now simulate choice

# outcome career holds event type values, not counts

career <- rep(NA, n) # empty vector of choices for each individual

set.seed(10)

# sample chosen career for each individual

for(i in 1:n) career[i] <- sample(1:3, size = 1, prob = p)There are at least two alternative ways to fit our b10.16, which is based on the conventional brms syntax. The first uses what we might call a verbose version of the conventional syntax. We’ll call it b10.16_verbose. The second uses the non-linear syntax. We’ll call that one b10.16_nonlinear. Fit the models.

# verbose syntax

b10.16_verbose <-

brm(data = list(career = career),

family = categorical(link = logit),

bf(career ~ 1,

mu2 ~ 1,

mu3 ~ 1),

prior = c(prior(normal(0, 5), class = Intercept, dpar = mu2),

prior(normal(0, 5), class = Intercept, dpar = mu3)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.16_verbose")

# nonlinear syntax

b10.16_nonlinear <-

brm(data = list(career = career),

family = categorical(link = logit),

bf(career ~ 1,

nlf(mu2 ~ a2),

nlf(mu3 ~ a3),

a2 + a3 ~ 1),

prior = c(prior(normal(0, 5), class = b, nlpar = a2),

prior(normal(0, 5), class = b, nlpar = a3)),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.16_nonlinear")We might use fixef() to show that the parameter summaries are the same for all three variants–differing only by simulation variance.

fixef(b10.16) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## mu2_Intercept 0.50 0.14 0.23 0.77

## mu3_Intercept 1.02 0.12 0.78 1.26fixef(b10.16_verbose) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## mu2_Intercept 0.50 0.14 0.23 0.77

## mu3_Intercept 1.02 0.12 0.78 1.26fixef(b10.16_nonlinear) %>% round(digits = 2)## Estimate Est.Error Q2.5 Q97.5

## a2_Intercept 0.49 0.13 0.23 0.76

## a3_Intercept 1.01 0.12 0.78 1.25I point this out because it’s the non-linear approach that will allow us to fit a model like McElreath’s m10.16. My hope is the syntax we used in the b10.16_verbose model will help clarify what’s going on with the non-linear syntax. When we fit multinomial models with brms, the terse conventional formula syntax might not make clear how there are actually \(K - 1\) formulas. The more verbose syntax of our b10.16_verbose model shows how we can specify those models directly. In our case, that was with those mu2 ~ 1, mu3 ~ 1 lines. When we switch to the non-linear syntax, we explicitly model mu2 and mu3 and, as is typical of the non-linear syntax, we name our parameters. You can see another comparison of these three ways of fitting a multinomial model at the Nonlinear syntax with a multinomial model? thread on the Stan Forums.

Now it’s time to focus on the correct brms version of McElreath’s m10.16. I’m going to call this b10.16_true.

b10.16_true <-

brm(data = list(career = career),

family = categorical(link = logit),

bf(career ~ 1,

nlf(mu2 ~ b * 2),

nlf(mu3 ~ b * 3),

b ~ 1),

prior(normal(0, 5), class = b, nlpar = b),

iter = 2500, warmup = 500, cores = 2, chains = 2,

seed = 10,

file = "fits/b10.16_true")What did we just estimate?

print(b10.16_true)## Family: categorical

## Links: mu2 = logit; mu3 = logit

## Formula: career ~ 1

## mu2 ~ b * 2

## mu3 ~ b * 3

## b ~ 1

## Data: list(career = career) (Number of observations: 500)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## b_Intercept 0.34 0.04 0.26 0.43 1.00 1433 1781

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).This summary is probably even more difficult to interpret than the summary for b10.16. Getting these posterior draws out of the log-odds metric will help.

library(tidybayes)

as_draws_df(b10.16_true) %>%

transmute(b = inv_logit_scaled(b_b_Intercept)) %>%

mean_qi(b) %>%

mutate_if(is.double, round, digits = 2)## # A tibble: 1 × 6

## b .lower .upper .width .point .interval

## <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 0.59 0.57 0.61 0.95 mean qiThe posterior for b is about 0.59. What might that be? Well, let’s spell this out by substituting it into the model formula for b10.16b. We get mu2 ~ 0.59 * 2 and mu3 ~ 0.59 * 3. Now look back at the first few lines of code from McElreath’s R code 10.56.

# simulate career choices among 500 individuals

n <- 500 # number of individuals

income <- 1:3 # expected income of each career

score <- 0.5 * income # scores for each career, based on incomeOur mu2 is the same as the second score value. Our 0.59 is our estimate for the 0.5 value in the bottom line. The * 2 part in our formula syntax is the same as * income in the bottom line. What our non-linear model did was allow us to estimate the 0.5 constant we multiplied the income values by to simulate the original score data.

You may be wondering what we might do with our vector of b_b_Intercept draws. Well, after converting the draws out of the log-odds scale, we can carefully multiply them by our three income values, which will return the posteriors for the three score values. We can then use the formula form above,

\[\Pr(k |s_1, s_2, ..., s_K) = \frac{\exp (s_k)}{\sum_{i = 1}^K \exp (s_i)},\]

to return the posterior probabilities for each of the career choices based on their income values.

as_draws_df(b10.16_true) %>%

transmute(draw = .draw,

s1 = inv_logit_scaled(b_b_Intercept) * income[1],

s2 = inv_logit_scaled(b_b_Intercept) * income[2],

s3 = inv_logit_scaled(b_b_Intercept) * income[3]) %>%

gather(key, score, -draw) %>%

mutate(income = str_remove(key, "s")) %>%

group_by(draw) %>%

# use the formula

mutate(p = exp(score) / sum(exp(score))) %>%

group_by(income) %>%

mean_qi(p) %>%

mutate_if(is.double, round, digits = 2)## # A tibble: 3 × 7

## income p .lower .upper .width .point .interval

## <chr> <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 1 0.17 0.16 0.17 0.95 mean qi

## 2 2 0.3 0.3 0.3 0.95 mean qi

## 3 3 0.54 0.53 0.54 0.95 mean qiThose are fairly close to the empirical probabilities based on the data-generating values.

tibble(income = income,

score = score) %>%

mutate(p = exp(score) / sum(exp(score)))## # A tibble: 3 × 3

## income score p

## <int> <dbl> <dbl>

## 1 1 0.5 0.186

## 2 2 1 0.307

## 3 3 1.5 0.506I’m not going to dive any further into the brms non-linear syntax in this ebook. If you want more, check out Bürkner’s vignette, Estimating non-linear models with brms, or my translation of McElreath’s 2nd edition. For more practice fitting multinomial models with brms, you might also check out my translation of Chapter 22 of Kruschke’s (2015) text.

10.3.1.2 Multinomial in disguise as Poisson.

Here we fit a multinomial likelihood by refactoring it to a series of Poisson’s. Let’s retrieve the Berkeley data.

library(rethinking)

data(UCBadmit)

d <- UCBadmit

rm(UCBadmit)

detach(package:rethinking, unload = T)

library(brms)Fit the models.

set_rescor(FALSE)

# binomial model of overall admission probability

b10.binom <-

brm(data = d,

family = binomial,

admit | trials(applications) ~ 1,

prior = prior(normal(0, 100), class = Intercept),

iter = 2000, warmup = 1000, cores = 3, chains = 3,

seed = 10,

file = "fits/b10.binom")

# Poisson model of overall admission rate and rejection rate

b10.pois <-

brm(data = d %>%

mutate(rej = reject), # 'reject' is a reserved word

family = poisson,

bf(mvbind(admit, rej) ~ 1) + set_rescor(FALSE),

prior = c(prior(normal(0, 100), class = Intercept, resp = admit),

prior(normal(0, 100), class = Intercept, resp = rej)),

iter = 2000, warmup = 1000, cores = 3, chains = 3,

seed = 10,

file = "fits/b10.pois")Note, the mvbind() syntax made b10.pois a multivariate Poisson model. Starting with version 2.0.0, brms supports a variety of multivariate models, which you might learn al about in Bürkner’s (2022c) vignette, Estimating multivariate models with brms. Anyway, here are the implications of b10.pois.

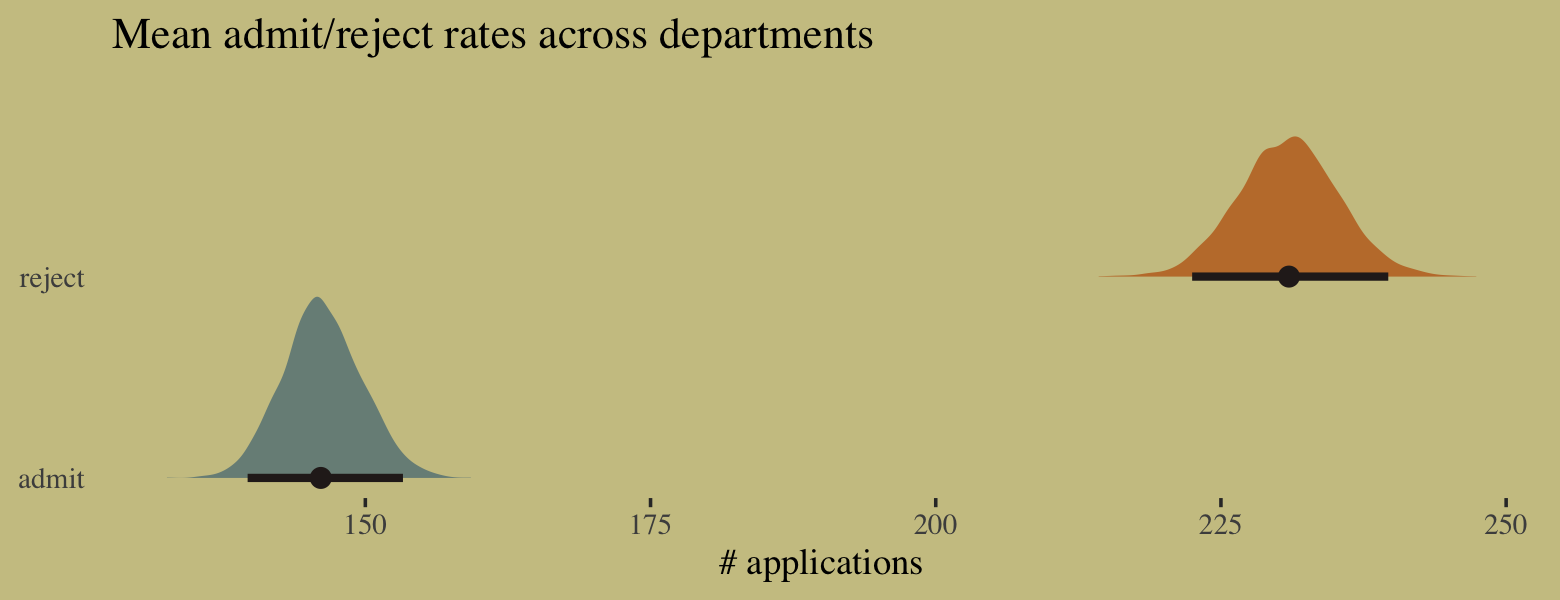

# extract the samples

post <- as_draws_df(b10.pois)

# wrangle

post %>%

transmute(admit = exp(b_admit_Intercept),

reject = exp(b_rej_Intercept)) %>%

gather() %>%

# plot

ggplot(aes(x = value, y = key, fill = key)) +

stat_halfeye(point_interval = median_qi, .width = .95,

color = wes_palette("Moonrise2")[4]) +

scale_fill_manual(values = c(wes_palette("Moonrise2")[1],

wes_palette("Moonrise2")[2])) +

scale_y_discrete(expand = expansion(add = 0.1)) +

labs(title = " Mean admit/reject rates across departments",

x = "# applications",

y = NULL) +

theme(axis.ticks.y = element_blank(),

legend.position = "none")

We might compare the model summaries.

summary(b10.binom)$fixed## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept -0.4578619 0.02977366 -0.5154444 -0.3996616 1.001555 1008.303 1441.787summary(b10.pois)$fixed## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## admit_Intercept 4.984947 0.02388462 4.939364 5.032378 1.000629 2675.898 2387.018

## rej_Intercept 5.441904 0.01909303 5.404822 5.479258 1.001133 2233.843 1255.651Here’s the posterior mean for the probability of admission, based on b10.binom.

fixef(b10.binom)[, "Estimate"] %>%

inv_logit_scaled()## [1] 0.3874932Happily, we get the same value within simulation error from model b10.pois.

k <-

fixef(b10.pois) %>%

as.numeric()

exp(k[1]) / (exp(k[1]) + exp(k[2]))## [1] 0.387708The formula for what we just did in code is

\[p_\text{admit} = \frac{\lambda_1}{\lambda_1 + \lambda_2} = \frac{\exp (\alpha_1)}{\exp (\alpha_1) + \exp (\alpha_2)}.\]

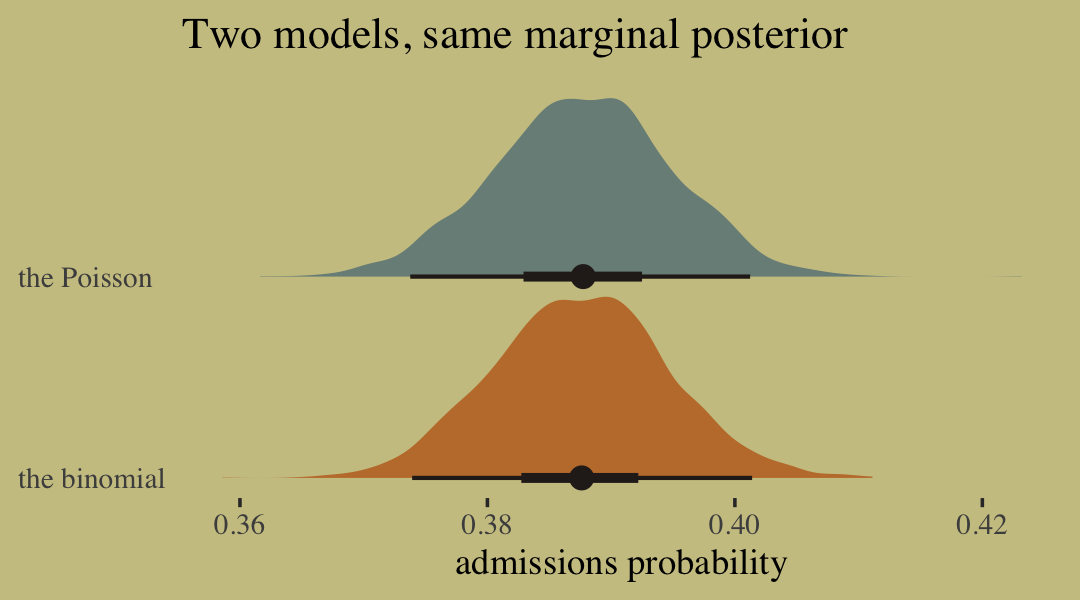

To get a better appreciation on how well the two model types converge on the same solution, we might plot the full poster for admissions probability from each.

# wrangle

bind_cols(

as_draws_df(b10.pois) %>%

transmute(`the Poisson` = exp(b_admit_Intercept) / (exp(b_admit_Intercept) + exp(b_rej_Intercept))),

as_draws_df(b10.binom) %>%

transmute(`the binomial` = inv_logit_scaled(b_Intercept))

) %>%

pivot_longer(everything()) %>%

# plot

ggplot(aes(x = value, y = name, fill = name)) +

stat_halfeye(point_interval = median_qi, .width = c(.95, .5),

color = wes_palette("Moonrise2")[4]) +

scale_fill_manual(values = c(wes_palette("Moonrise2")[2:1])) +

labs(title = "Two models, same marginal posterior",

x = "admissions probability",

y = NULL) +

scale_y_discrete(expand = expansion(add = 0.1)) +

theme(axis.text.y = element_text(hjust = 0),

axis.ticks.y = element_blank(),

legend.position = "none")

10.3.2 Geometric.

Sometimes a count variable is a number of events up until something happened. Call this “something” the terminating event. Often we want to model the probability of that event, a kind of analysis known as event history analysis or survival analysis. When the probability of the terminating event is constant through time (or distance), and the units of time (or distance) are discrete, a common likelihood function is the geometric distribution. This distribution has the form:

\[\Pr(y | p) = p (1 - p) ^{y - 1}\]

where \(y\) is the number of time steps (events) until the terminating event occurred and \(p\) is the probability of that event in each time step. This distribution has maximum entropy for unbounded counts with constant expected value. (pp. 327–328)

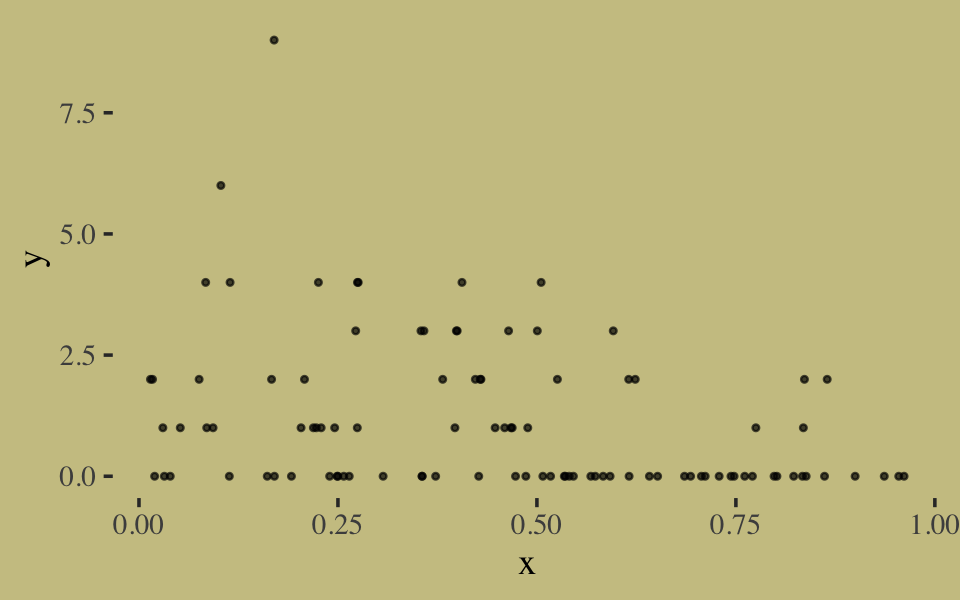

Here we simulate exemplar data.

# simulate

n <- 100

set.seed(10)

x <- runif(n)

set.seed(10)

y <- rgeom(n, prob = inv_logit_scaled(-1 + 2 * x))In case you’re curious, here are the data.

list(x = x, y = y) %>%

as_tibble() %>%

ggplot(aes(x = x, y = y)) +

geom_point(size = 3/5, alpha = 2/3)

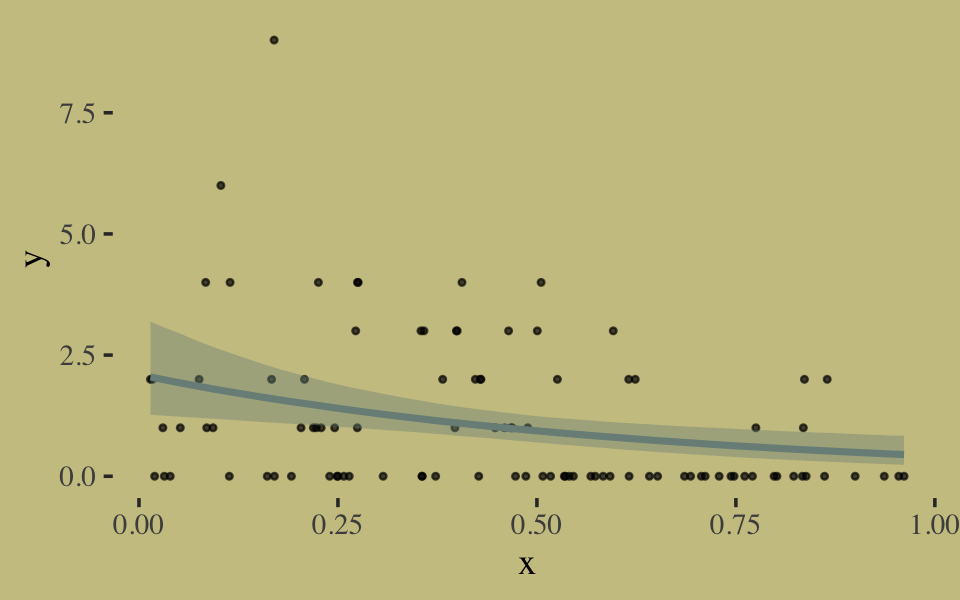

We fit the geometric model by setting family = geometric(link = log).

b10.18 <-

brm(data = list(y = y, x = x),

family = geometric(link = log),

y ~ 0 + Intercept + x,

prior = c(prior(normal(0, 10), class = b, coef = Intercept),

prior(normal(0, 1), class = b, coef = x)),

iter = 2500, warmup = 500, chains = 2, cores = 2,

seed = 10,

file = "fits/b10.18")Inspect the results.

print(b10.18, digits = 2)## Family: geometric

## Links: mu = log

## Formula: y ~ 0 + Intercept + x

## Data: list(y = y, x = x) (Number of observations: 100)

## Draws: 2 chains, each with iter = 2500; warmup = 500; thin = 1;