1.1 Bayes’ rule

As expected, the starting point for Bayesian inference is Bayes’ rule, which provides the solution to the inverse problem of determining causes from observed effects. This rule combines prior beliefs with objective probabilities based on repeatable experiments, allowing us to move from observations to probable causes.[2]

Formally, the conditional probability of \(A_i\) given \(B\) is equal to the conditional probability of \(B\) given \(A_i\), multiplied by the marginal probability of \(A_i\), divided by the marginal probability of \(B\):

\[\begin{align} P(A_i \mid B)&=\frac{P(A_i,B)}{P(B)}\\ &=\frac{P(B \mid A_i) \times P(A_i)}{P(B)}, \tag{1.1} \end{align}\]

where equation (1.1) is Bayes’ rule, \(\{ A_i, i = 1, 2, \dots \}\) is a finite or countably infinite partition of the sample space, and, by the law of total probability, \(P(B) = \sum_i P(B \mid A_i) P(A_i) \neq 0\).

In the Bayesian framework, \(B\) represents sample information that updates a probabilistic statement about an unknown object \(A_i\) according to probability rules. This is done using Bayes’ rule, which incorporates prior “beliefs” about \(A_i\), i.e., \(P(A_i)\), sample information relating \(B\) to the particular state of nature \(A_i\) through a probabilistic statement, \(P(B \mid A_i)\), and the probability of observing that specific sample information, \(P(B)\).
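As a minimal sketch of equation (1.1) for a finite partition, the function below takes the priors \(P(A_i)\) and the likelihoods \(P(B \mid A_i)\) and returns the posteriors \(P(A_i \mid B)\). The function name `bayes_posterior` and the three-state numbers are illustrative assumptions, not part of the text.

```r
# Hypothetical helper: posterior over a finite partition {A_1, ..., A_k}
bayes_posterior <- function(prior, likelihood) {
  stopifnot(length(prior) == length(likelihood),
            isTRUE(all.equal(sum(prior), 1)))
  joint <- likelihood * prior  # P(B | A_i) * P(A_i)
  marginal <- sum(joint)       # P(B), by the law of total probability
  stopifnot(marginal > 0)
  joint / marginal             # P(A_i | B)
}

# Made-up numbers for three states of nature (assumption for illustration)
prior <- c(0.5, 0.3, 0.2)
likelihood <- c(0.1, 0.4, 0.7)
posterior <- bayes_posterior(prior, likelihood)
print(round(posterior, 3))
```

The posteriors sum to one by construction, because the denominator \(P(B)\) is exactly the sum of the numerators over the partition.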

Let’s consider a simple example, the base rate fallacy:

Assume that the sample information comes from a positive result from a test whose true positive rate (sensitivity) is 98%, i.e., \(P(+ \mid \text{disease}) = 0.98\). In addition, the false positive rate is 2%, i.e., \(P(+ \mid \lnot\text{disease}) = 0.02\). On the other hand, the prior probability of being infected with the disease is given by the base incidence rate, \(P(\text{disease}) = 0.002\). The question is: What is the probability of actually being infected, given a positive test result?

This is an example of the base rate fallacy, where a positive test result for a disease with a very low base incidence rate still gives a low probability of actually having the disease.

The key to answering this question lies in understanding the difference between the probability of having the disease given a positive test result, \(P(\text{disease} \mid +)\), and the probability of a positive result given the disease, \(P(+ \mid \text{disease})\). The former is the crucial result, and Bayes’ rule helps us to compute it. Using Bayes’ rule (equation (1.1)):

\[ P(\text{disease} \mid +) = \frac{P(+ \mid \text{disease}) \times P(\text{disease})}{P(+)} = \frac{0.98 \times 0.002}{0.98 \times 0.002 + 0.02 \times (1-0.002)} \approx 0.09 \]

where \(P(+) = P(+ \mid \text{disease}) \times P(\text{disease}) + P(+ \mid \lnot \text{disease}) \times P(\lnot \text{disease})\).[3]

The following code shows how to perform this exercise in R.

```r
# Define known probabilities
prob_disease <- 0.002        # P(Disease)
sensitivity <- 0.98          # P(Positive test | Disease) - True Positive Rate
false_positive_rate <- 0.02  # P(Positive test | No disease) - False Positive Rate

# Compute posterior using Bayes' theorem
posterior_prob <- (prob_disease * sensitivity) /
  (prob_disease * sensitivity + (1 - prob_disease) * false_positive_rate)

# Print the result
message(sprintf("Probability of disease given a positive test is %.2f", posterior_prob))
```

```
## Probability of disease given a positive test is 0.09
```

We observe that despite having a positive result, the probability of actually having the disease remains low. This is due to the base rate being so small.
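To see how strongly this conclusion depends on the base rate, the following sketch recomputes the posterior over a small grid of priors while holding the test characteristics fixed. The function name `posterior_given_positive` and every grid value other than 0.002 are illustrative assumptions.

```r
# Posterior P(disease | +) as a function of the base rate (prior)
posterior_given_positive <- function(prior, sensitivity = 0.98,
                                     false_positive_rate = 0.02) {
  (prior * sensitivity) /
    (prior * sensitivity + (1 - prior) * false_positive_rate)
}

# Illustrative grid of base rates (assumption; only 0.002 comes from the text)
base_rates <- c(0.002, 0.02, 0.2, 0.5)
posteriors <- posterior_given_positive(base_rates)
print(data.frame(base_rate = base_rates, posterior = round(posteriors, 3)))
```

With these test characteristics, the posterior reaches 0.5 only when the base rate equals the false positive rate (0.02), because here the sensitivity is one minus the false positive rate.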

Another interesting example, one that lies at the heart of the origin of Bayes’ theorem (Thomas Bayes 1763), concerns the existence of God (Stigler 2018). In Section X of David Hume’s An Enquiry Concerning Human Understanding (1748), titled Of Miracles, Hume argues that when someone claims to have witnessed a miracle, the claim provides weak evidence that the event actually occurred, since it contradicts our everyday experience. In response, Richard Price, who edited and published An Essay Towards Solving a Problem in the Doctrine of Chances in 1763 (following Bayes’ death in 1761), criticizes Hume’s argument by distinguishing between logical and physical impossibility. He illustrates this with the example of a die with a million sides: while rolling a specific number might seem impossible, it is merely improbable, whereas rolling a number not present on the die is physically impossible. The former may occur with enough trials; the latter never will.

Note on the following example:

The next example is adapted from a modern blog post that illustrates the base rate fallacy using a resurrection scenario. While it is not taken from the original writings of Hume or Price, it reflects themes central to their philosophical debate on probability and miracles. It is included here to demonstrate how Bayes’ rule behaves in cases involving extremely low prior probabilities. References to figures such as Jesus and Elvis are used purely for illustrative and pedagogical purposes. The goal is to explore the statistical reasoning—not to make any theological claims. Readers are encouraged to focus on the mathematical and conceptual content of the example.

To illustrate the statistical implications of this discussion, let us consider the following scenario.[4]

The scenario involves two reported cases of resurrection: Jesus Christ and Elvis Presley. The base rate is therefore two out of the total number of people who have ever lived, estimated at approximately 117 billion,[5] that is,

\(P(\text{Res}) = \frac{2}{117 \times 10^9}\).

Suppose now that the sample information comes from a highly reliable witness, with a true positive rate of 0.9999999. Unlike the original blog post, we assume a more conservative false positive rate of 50%.

We ask: What is the probability that a resurrection actually occurred, given that a witness claims it did?

Using Bayes’ rule, and letting Res denote the event of resurrection, and Witness the event of a witness declaring a resurrection:

\[\begin{align*} P(\text{Res} \mid \text{Witness}) &= \frac{P(\text{Witness} \mid \text{Res}) \times P(\text{Res})}{P(\text{Witness})} \\ &= \frac{0.9999999 \times \frac{2}{117 \times 10^9}}{0.9999999 \times \frac{2}{117 \times 10^9} + 0.5 \times \left(1 - \frac{2}{117 \times 10^9} \right)} \\ &\approx 3.42 \times 10^{-11} \end{align*}\]

Here, the denominator is the marginal probability of a witness reporting a resurrection:

\[ P(\text{Witness}) = P(\text{Witness} \mid \text{Res}) \times P(\text{Res}) + P(\text{Witness} \mid \lnot\text{Res}) \times (1 - P(\text{Res})) \]

Thus, the probability that a resurrection actually occurred—even when reported by an extremely reliable witness—is approximately \(3.42 \times 10^{-11}\). This value is exceedingly small, but importantly, it is not zero. A value of zero would imply impossibility, whereas this result reflects extremely low probability.

The following code shows how to perform this exercise in R.

```r
# Define known probabilities
prior_resurrection <- 2 / (117 * 1e9)  # P(Resurrection)
true_positive_rate <- 0.9999999        # P(Witness | Resurrection)

# Assume a 50% false positive rate as implied in earlier context
false_positive_rate <- 0.5             # P(Witness | No Resurrection)

# Compute posterior probability using Bayes' rule
posterior_resurrection <- (prior_resurrection * true_positive_rate) /
  (prior_resurrection * true_positive_rate +
     (1 - prior_resurrection) * false_positive_rate)

# Print result
message(sprintf("Probability of resurrection given a witness is %.2e", posterior_resurrection))
```

```
## Probability of resurrection given a witness is 3.42e-11
```

Observe that we can condition on multiple events in Bayes’ rule. Let’s consider two conditioning events, \(B\) and \(C\). Then, equation (1.1) becomes

\[\begin{align} P(A_i\mid B,C)&=\frac{P(A_i,B,C)}{P(B,C)}\nonumber\\ &=\frac{P(B\mid A_i,C) \times P(A_i\mid C) \times P(C)}{P(B\mid C)P(C)}. \tag{1.2} \end{align}\]

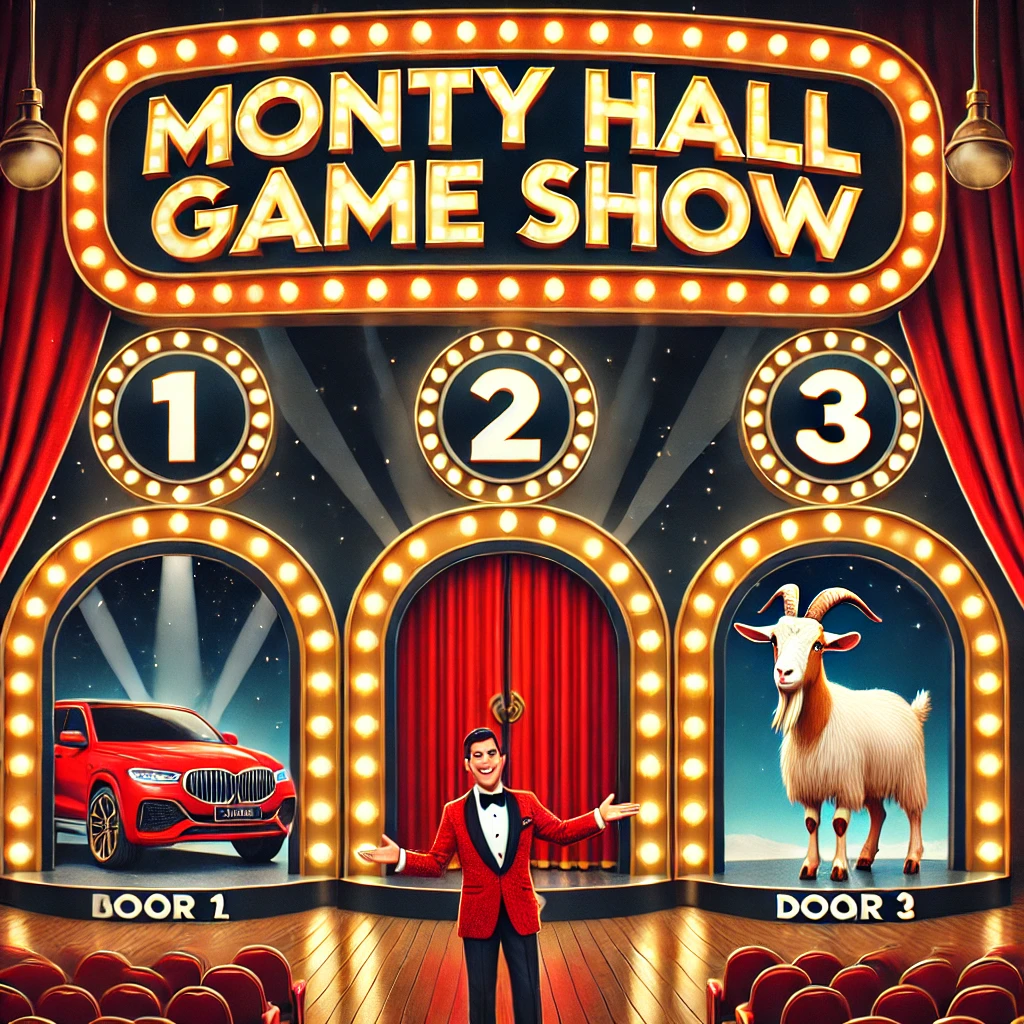

Let’s apply equation (1.2) to one of the most intriguing statistical puzzles, the Monty Hall problem (Selvin 1975; Morgan et al. 1991). This was the situation faced by a contestant in the American television game show Let’s Make a Deal. In this game, the contestant is asked to choose one of three doors; behind one of them there is a car, and behind the other two, goats.

Let’s say the contestant picks door No. 1, and the host (Monty Hall), who knows what is behind each door, opens door No. 3, revealing a goat. Then, the host asks the tricky question: Do you want to switch to door No. 2?

Let’s define the following events:

- \(P_i\): the event contestant picks door No. \(i\), which stays closed,

- \(H_i\): the event host picks door No. \(i\), which is open and contains a goat,

- \(C_i\): the event car is behind door No. \(i\).

In this particular setting, the contestant is interested in the probability \(P(C_2 \mid H_3, P_1)\). A naive answer would be that switching is irrelevant: initially, \(P(C_i) = \frac{1}{3}, \ i = 1, 2, 3\), and now, since the host opened door No. 3, it seems that \(P(C_i \mid H_3) = \frac{1}{2}, \ i = 1, 2\). So, why bother changing the initial guess if the odds are the same (1:1)?

The important point here is that the host knows what is behind each door and always picks a door where there is a goat, given the contestant’s choice. In this particular setting:

\[ P(H_3 \mid C_3, P_1) = 0, \quad P(H_3 \mid C_2, P_1) = 1, \quad P(H_3 \mid C_1, P_1) = \frac{1}{2}. \]

Then, using equation (1.2), we can calculate the posterior probability.

\[\begin{align*} P(C_2\mid H_3,P_1)&= \frac{P(C_2,H_3,P_1)}{P(H_3,P_1)}\\ &= \frac{P(H_3\mid C_2,P_1)P(C_2\mid P_1)P(P_1)}{P(H_3\mid P_1)\times P(P_1)}\\ &= \frac{P(H_3\mid C_2,P_1)P(C_2)}{P(H_3\mid P_1)}\\ &=\frac{1\times 1/3}{1/2}\\ &=\frac{2}{3}, \end{align*}\]

where the third equality uses the fact that \(C_i\) and \(P_i\) are independent events, and \(P(H_3 \mid P_1) = \frac{1}{2}\) follows from the law of total probability: \(P(H_3 \mid P_1) = \sum_{i=1}^{3} P(H_3 \mid C_i, P_1) P(C_i) = \frac{1}{2} \times \frac{1}{3} + 1 \times \frac{1}{3} + 0 \times \frac{1}{3}\).

Therefore, changing the initial decision increases the probability of getting the car from \(\frac{1}{3}\) to \(\frac{2}{3}\)! Thus, it is always a good idea to change the door.
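The same posterior can be obtained by direct enumeration over the three possible car locations. The sketch below applies equation (1.2) with the conditional probabilities stated above; the variable names are illustrative assumptions.

```r
# Exact posterior over car locations, given the contestant picked door 1 (P_1)
# and the host opened door 3 (H_3), following equation (1.2)
prior_car <- c(C1 = 1/3, C2 = 1/3, C3 = 1/3)     # P(C_i | P_1) = P(C_i)
lik_host_opens_3 <- c(C1 = 1/2, C2 = 1, C3 = 0)  # P(H_3 | C_i, P_1)

# P(H_3 | P_1), by the law of total probability
prob_host_opens_3 <- sum(lik_host_opens_3 * prior_car)

# P(C_i | H_3, P_1) for i = 1, 2, 3
posterior_car <- lik_host_opens_3 * prior_car / prob_host_opens_3
print(posterior_car)
```

Staying with door No. 1 keeps the original probability of \(\frac{1}{3}\), while door No. 2 absorbs the probability mass that door No. 3 lost when the host opened it.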

Let’s see a simulation exercise in R to check this answer:

```r
# Set simulation seed for reproducibility
set.seed(10101)

# Number of simulations
num_simulations <- 100000

# Monty Hall game function
simulate_game <- function(switch_door = FALSE) {
  doors <- 1:3
  car_location <- sample(doors, 1)
  first_guess <- sample(doors, 1)

  # Host reveals a goat
  if (car_location != first_guess) {
    host_opens <- doors[!doors %in% c(car_location, first_guess)]
  } else {
    host_opens <- sample(doors[doors != first_guess], 1)
  }

  # Determine second guess if player switches
  second_guess <- doors[!doors %in% c(first_guess, host_opens)]
  win_if_no_switch <- (first_guess == car_location)
  win_if_switch <- (second_guess == car_location)

  if (switch_door) {
    return(win_if_switch)
  } else {
    return(win_if_no_switch)
  }
}

# Simulate without switching
prob_no_switch <- mean(replicate(num_simulations, simulate_game(switch_door = FALSE)))
message(sprintf("Winning probability without switching: %.3f", prob_no_switch))
```

```
## Winning probability without switching: 0.331
```

```r
# Simulate with switching
prob_with_switch <- mean(replicate(num_simulations, simulate_game(switch_door = TRUE)))
message(sprintf("Winning probability with switching: %.3f", prob_with_switch))
```

```
## Winning probability with switching: 0.667
```

[2] Note that I use the term “Bayes’ rule” rather than “Bayes’ theorem.” It was Laplace (P. S. Laplace 1774) who generalized Bayes’ theorem (Thomas Bayes 1763), and his generalization is referred to as Bayes’ rule.

[3] \(\lnot\) is the negation symbol.