8 Markov Chain Monte Carlo

“This chapter introduces one of the more marvelous examples of how Fortuna and Minerva cooperate: the estimation of posterior probability distributions using a stochastic process known as Markov chain Monte Carlo (MCMC) estimation” (McElreath, 2015, p. 241). Though we’ve been using MCMC via the brms package for chapters, now, this chapter should clarify some of the questions you might have about the details.

8.1 Good King Markov and His island kingdom

Here we simulate King Markov’s journey. In this version of the code, we’ve added set.seed(), which helps make the exact results reproducible.

library(tidyverse)

set.seed(8)

num_weeks <- 1e5

positions <- rep(0, num_weeks)

current <- 10

for (i in 1:num_weeks) {

# record current position

positions[i] <- current

# flip coin to generate proposal

proposal <- current + sample(c(-1, 1), size = 1)

# now make sure he loops around the archipelago

if (proposal < 1) proposal <- 10

if (proposal > 10) proposal <- 1

# move?

prob_move <- proposal / current

current <- ifelse(runif(1) < prob_move, proposal, current)

}In this chapter, we’ll borrow a theme, theme_ipsum(), from the hrbrthemes package (Rudis, 2020).

# install.packages("hrbrthemes", dependencies = T)

# macOS users may need to install XQuartz, too (https://www.xquartz.org/)

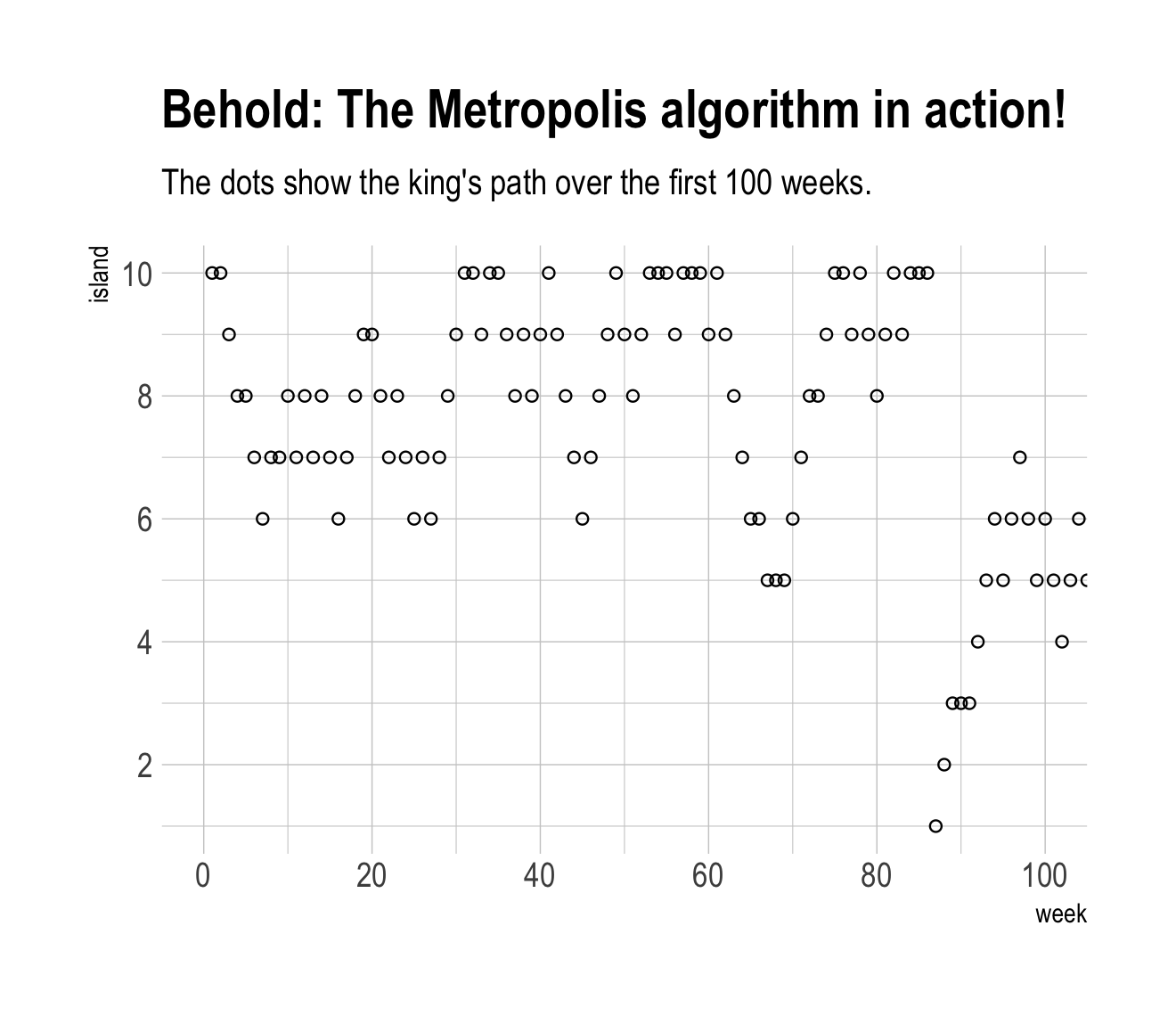

library(hrbrthemes)Figure 8.2.a.

library(tidyverse)

tibble(week = 1:1e5,

island = positions) %>%

ggplot(aes(x = week, y = island)) +

geom_point(shape = 1) +

scale_x_continuous(breaks = seq(from = 0, to = 100, by = 20)) +

scale_y_continuous(breaks = seq(from = 0, to = 10, by = 2)) +

coord_cartesian(xlim = c(0, 100)) +

labs(title = "Behold: The Metropolis algorithm in action!",

subtitle = "The dots show the king's path over the first 100 weeks.") +

theme_ipsum()

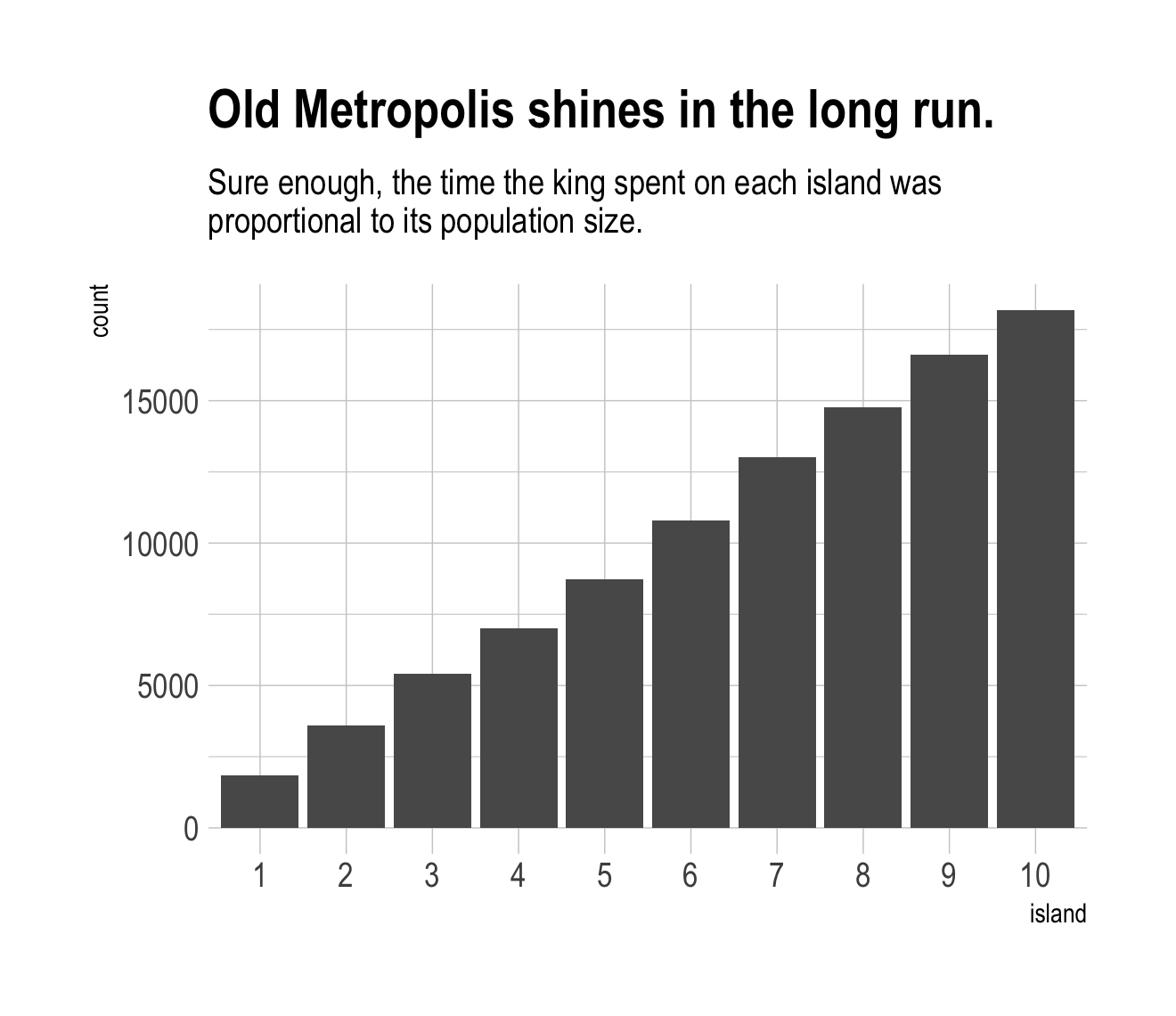

Figure 8.2.b.

tibble(week = 1:1e5,

island = positions) %>%

mutate(island = factor(island)) %>%

ggplot(aes(x = island)) +

geom_bar() +

labs(title = "Old Metropolis shines in the long run.",

subtitle = "Sure enough, the time the king spent on each island was\nproportional to its population size.") +

theme_ipsum()

8.2 Markov chain Monte Carlo

“The metropolis algorithm is the grandparent of several different strategies for getting samples from unknown posterior distributions” (p. 245). If you’re interested, Robert & Casella (2011) wrote a good historical overview of MCMC.

8.2.1 Gibbs sampling.

The Gibbs sampler (Casella & George, 1992; Geman & Geman, 1984) uses conjugate pairs (i.e., pairs of priors and likelihoods that have analytic solutions for the posterior of an individual parameter) to efficiently sample from the posterior. Gibbs was the workhorse algorithm during the rise of Bayesian computation in the 1990s. However, it’s limited in that (a) you might not want to use conjugate priors and (b) it can be quite inefficient with complex hierarchical models, which we’ll be fitting soon.

We will not be using the Gibbs sampler in this project. It’s available for use in R. For an extensive applied introduction, check out Kruschke’s (2015) text.

8.2.2 Hamiltonian Monte Carlo.

Hamiltonian Monte Carlo (HMC) is more computationally costly and more efficient than Gibbs at sampling from the posterior. It needs fewer samples, especially when fitting models with many parameters. To learn more about how HMC works, check out McElreath’s lecture on the topic from January 2019 or one of these lectures (here, here, or here) by Michael Betancourt.

8.3 Easy HMC: map2stan brm()

Much like McElreath’s rethinking package, brms provides a convenient interface to HMC via Stan. Other packages providing Stan interfaces include rstanarm (Brilleman et al., 2018; Gabry & Goodrich, 2022) and blavaan (Merkle et al., 2021, 2022; Merkle & Rosseel, 2018). I’m not aware of any up-to-date comparisons across the packages. If you’re ever inclined to make one, let the rest of us know!

Here we load the rugged data.

library(rethinking)

data(rugged)

d <- ruggedSwitch from rethinking to brms.

detach(package:rethinking)

library(brms)

rm(rugged)It takes just a sec to do a little data manipulation.

d <-

d %>%

mutate(log_gdp = log(rgdppc_2000))

dd <-

d %>%

drop_na(rgdppc_2000)In the context of this chapter, it doesn’t make sense to translate McElreath’s m8.1 map() code to brm() code. Below, we’ll just go directly to the brm() variant of his m8.1stan.

8.3.1 Preparation.

When working with brms, you don’t need to do the data processing McElreath did on pages 248 and 249. If you wanted to, however, here’s how you might do it within the tidyverse.

dd.trim <-

dd %>%

select(log_gdp, rugged, cont_africa)

str(dd.trim)8.3.2 Estimation.

Finally, we get to work that sweet HMC via brms::brm().

b8.1 <-

brm(data = dd,

family = gaussian,

log_gdp ~ 1 + rugged + cont_africa + rugged:cont_africa,

prior = c(prior(normal(0, 100), class = Intercept),

prior(normal(0, 10), class = b),

prior(cauchy(0, 2), class = sigma)),

seed = 8,

file = "fits/b08.01")Now we have officially ditched the uniform distribution for \(\sigma\). Never again! Here’s the posterior.

print(b8.1)## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: log_gdp ~ 1 + rugged + cont_africa + rugged:cont_africa

## Data: dd (Number of observations: 170)

## Draws: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 9.23 0.14 8.94 9.51 1.00 3351 3290

## rugged -0.20 0.08 -0.36 -0.05 1.00 3375 3389

## cont_africa -1.95 0.23 -2.40 -1.51 1.00 3007 3101

## rugged:cont_africa 0.39 0.13 0.13 0.65 1.00 2854 3320

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 0.95 0.05 0.85 1.06 1.00 4356 2577

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).Do note a couple things: If you look closely at the summary information at the top, you’ll see that the brm() function defaults to chains = 4. If you check the manual, you’ll see it also defaults to cores = 1, as well as iter = 2000 and warmup = 1000. Also of note, McElreath’s rethinking::precis() returns highest posterior density intervals (HPDIs) when summarizing map2stan() models. Not so with brms. If you want HPDIs, you’ll have to use the convenience functions from the tidybayes package. Here’s an example.

library(tidybayes)

post <- as_draws_df(b8.1)

post %>%

select(b_Intercept:sigma) %>%

gather() %>%

group_by(key) %>%

mean_hdi(value, .width = .89) # note our rare use of 89% intervals## # A tibble: 5 × 7

## key value .lower .upper .width .point .interval

## <chr> <dbl> <dbl> <dbl> <dbl> <chr> <chr>

## 1 b_cont_africa -1.95 -2.31 -1.59 0.89 mean hdi

## 2 b_Intercept 9.23 9.00 9.45 0.89 mean hdi

## 3 b_rugged -0.203 -0.327 -0.0743 0.89 mean hdi

## 4 b_rugged:cont_africa 0.391 0.167 0.586 0.89 mean hdi

## 5 sigma 0.950 0.870 1.04 0.89 mean hdiThere’s one more important difference in our brms summary output compared to McElreath’s rethinking::precis() output. In the text we learn precis() returns n_eff values for each parameter. Earlier versions of brms used to have a direct analogue named Eff.Sample. Both were estimates of the effective number of samples (a.k.a. the effective sample size) for each parameter. As with typical sample size, the more the merrier. Starting with version 2.10.0, brms now returns two columns: Bulk_ESS and Tail_ESS. These originate from a (2021) paper by Stan-team all-stars Vehtari, Gelman, Simpson, Carpenter, and Bürkner. From their paper, we read:

If you plan to report quantile estimates or posterior intervals, we strongly suggest assessing the convergence of the chains for these quantiles. In Section 4.3 we show that convergence of Markov chains is not uniform across the parameter space and propose diagnostics and effective sample sizes specifically for extreme quantiles. This is different from the standard ESS estimate (which we refer to as the “bulk-ESS”), which mainly assesses how well the centre of the distribution is resolved. Instead, these “tail-ESS” measures allow the user to estimate the MCSE for interval estimates. (p. 5, emphasis in the original)

For more technical details, see the paper. In short, Bulk_ESS in the output from brms 2.10.0+ is what was previously referred to as Eff.Sample in earlier versions. It’s also what corresponds to what McElreath calls n_eff. This indexed the number of effective samples in ‘the center of the’ posterior distribution (i.e., the posterior mean or median). But since we also care about uncertainty in our parameters, we care about stability in the 95% intervals and such. The new Tail_ESS in brms output allows us to gauge the effective sample size for those intervals.

8.3.3 Sampling again, in parallel.

Here we sample in parallel by adding cores = 4.

b8.1b <-

update(b8.1,

cores = 4,

seed = 8,

file = "fits/b08.01b")This model sampled so fast that it really didn’t matter if we sampled in parallel or not. It will for others.

print(b8.1b)## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: log_gdp ~ 1 + rugged + cont_africa + rugged:cont_africa

## Data: dd (Number of observations: 170)

## Draws: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 9.23 0.14 8.94 9.51 1.00 3351 3290

## rugged -0.20 0.08 -0.36 -0.05 1.00 3375 3389

## cont_africa -1.95 0.23 -2.40 -1.51 1.00 3007 3101

## rugged:cont_africa 0.39 0.13 0.13 0.65 1.00 2854 3320

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 0.95 0.05 0.85 1.06 1.00 4356 2577

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).8.3.4 Visualization.

Unlike the way rethinking’s extract.samples() yields a list, brms’s as_draws_df() returns a data frame.

post <- as_draws_df(b8.1)

str(post)## draws_df [4,000 × 10] (S3: draws_df/draws/tbl_df/tbl/data.frame)

## $ b_Intercept : num [1:4000] 9.31 9.14 9.07 9.1 9.21 ...

## $ b_rugged : num [1:4000] -0.27 -0.205 -0.256 -0.194 -0.223 ...

## $ b_cont_africa : num [1:4000] -2.14 -2.19 -1.87 -1.85 -2 ...

## $ b_rugged:cont_africa: num [1:4000] 0.554 0.48 0.404 0.412 0.395 ...

## $ sigma : num [1:4000] 0.931 0.942 0.946 0.899 0.996 ...

## $ lprior : num [1:4000] -16.6 -16.6 -16.6 -16.5 -16.6 ...

## $ lp__ : num [1:4000] -247 -248 -250 -247 -247 ...

## $ .chain : int [1:4000] 1 1 1 1 1 1 1 1 1 1 ...

## $ .iteration : int [1:4000] 1 2 3 4 5 6 7 8 9 10 ...

## $ .draw : int [1:4000] 1 2 3 4 5 6 7 8 9 10 ...Though we won’t be doing so in this ebook, you could instead use the as_draws() or as_draws_list() functions if you really wanted your posterior draws in a list format.

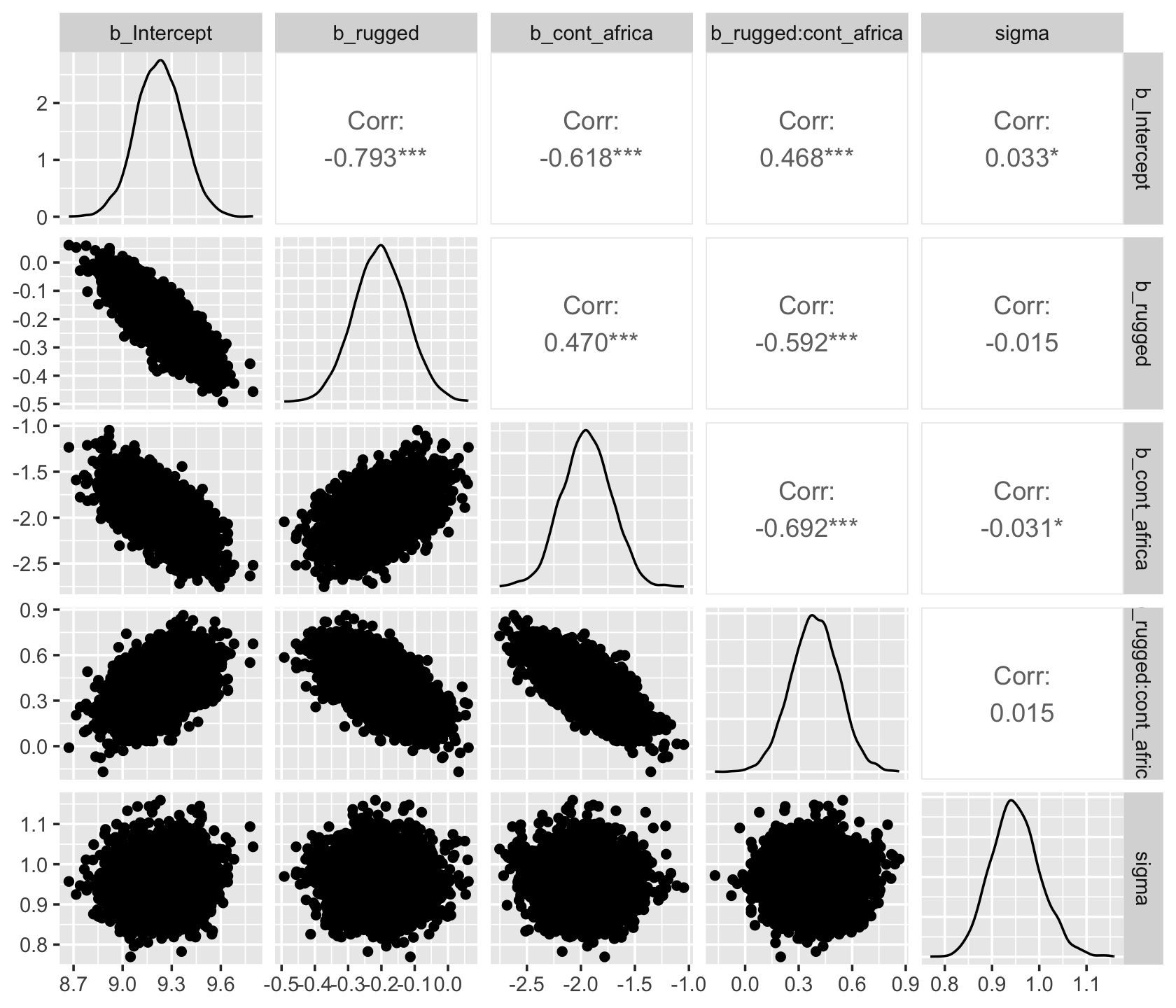

As with McElreath’s rethinking, brms allows users to put the post data frame or the brmsfit object directly in pairs().

pairs(b8.1,

off_diag_args = list(size = 1/5, alpha = 1/5))

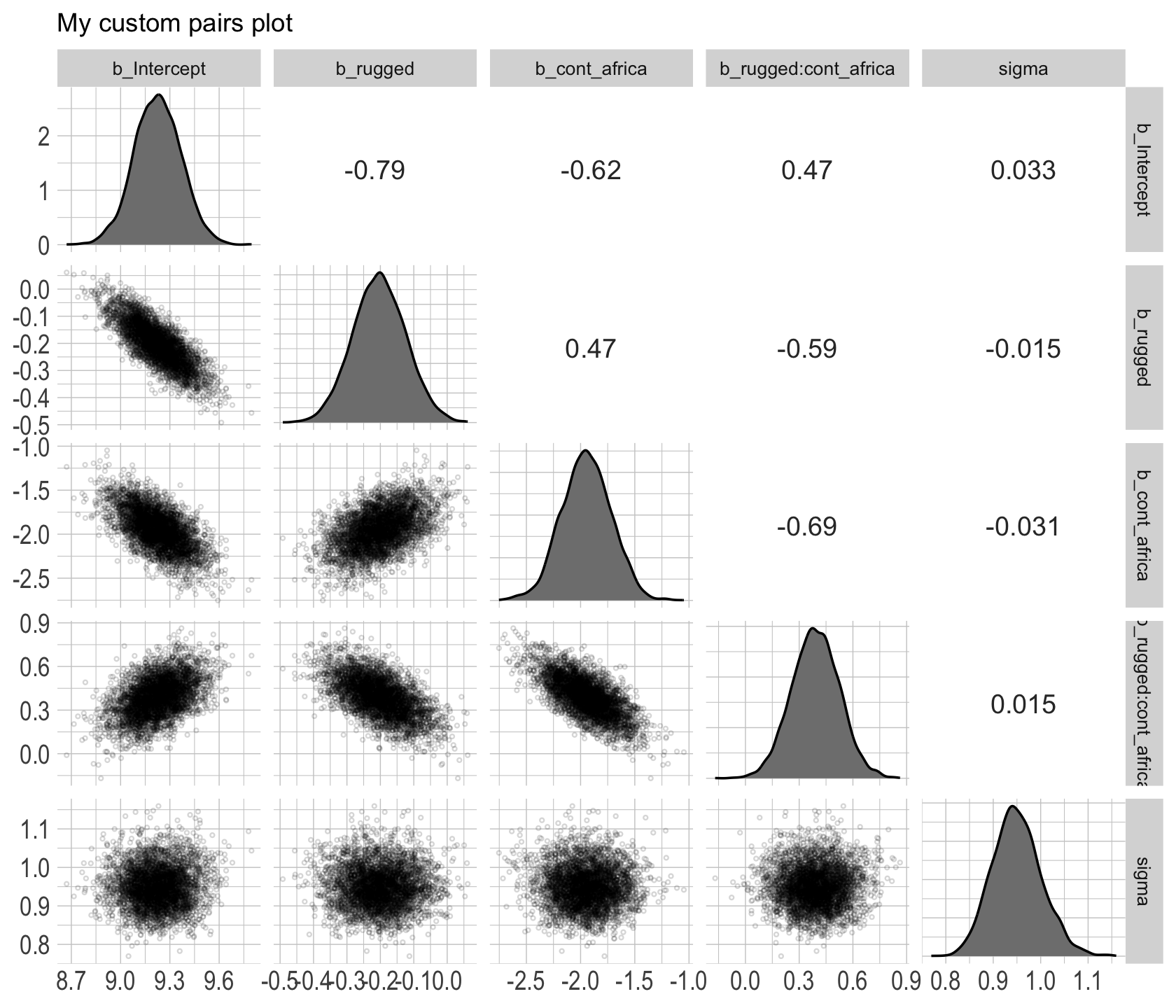

Another nice way to customize your pairs plot is with the GGally package.

library(GGally)post %>%

select(b_Intercept:sigma) %>%

ggpairs()

Since GGally::ggpairs() returns a ggplot2 object, you can customize it as you please.

my_upper <- function(data, mapping, ...) {

# get the x and y data to use the other code

x <- eval_data_col(data, mapping$x)

y <- eval_data_col(data, mapping$y)

r <- unname(cor.test(x, y)$estimate)

rt <- format(r, digits = 2)[1]

tt <- as.character(rt)

# plot the cor value

ggally_text(

label = tt,

mapping = aes(),

size = 4,

color = "grey20") +

theme_void()

}

my_diag <- function(data, mapping, ...) {

ggplot(data = data, mapping = mapping) +

geom_density(fill = "grey50") +

theme_ipsum()

}

my_lower <- function(data, mapping, ...) {

ggplot(data = data, mapping = mapping) +

geom_point(shape = 1, size = 1/2, alpha = 1/6) +

theme_ipsum()

}

post %>%

select(b_Intercept:sigma) %>%

ggpairs(upper = list(continuous = my_upper),

diag = list(continuous = my_diag),

lower = list(continuous = my_lower)) +

labs(subtitle = "My custom pairs plot") +

theme(strip.text = element_text(size = 8))

For more ideas on customizing a ggpairs() plot, go here.

8.3.5 Using the samples.

Older versions of brms allowed users to include information criteria as a part of the model summary by adding loo = T and/or waic = T in the summary() function (e.g., summary(b8.1, loo = T, waic = T). However, this is no longer the case. E.g.,

summary(b8.1, loo = T, waic = T)## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: log_gdp ~ 1 + rugged + cont_africa + rugged:cont_africa

## Data: dd (Number of observations: 170)

## Draws: 4 chains, each with iter = 2000; warmup = 1000; thin = 1;

## total post-warmup draws = 4000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 9.23 0.14 8.94 9.51 1.00 3351 3290

## rugged -0.20 0.08 -0.36 -0.05 1.00 3375 3389

## cont_africa -1.95 0.23 -2.40 -1.51 1.00 3007 3101

## rugged:cont_africa 0.39 0.13 0.13 0.65 1.00 2854 3320

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 0.95 0.05 0.85 1.06 1.00 4356 2577

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).Although R didn’t bark at us for adding loo = T, waic = T, they didn’t do anything. Nowadays, if you want that information, you’ll have to use the waic() and/or loo() functions.

waic(b8.1)##

## Computed from 4000 by 170 log-likelihood matrix

##

## Estimate SE

## elpd_waic -234.7 7.4

## p_waic 5.1 0.9

## waic 469.3 14.8

##

## 2 (1.2%) p_waic estimates greater than 0.4. We recommend trying loo instead.(l_b8.1 <- loo(b8.1))##

## Computed from 4000 by 170 log-likelihood matrix

##

## Estimate SE

## elpd_loo -234.7 7.4

## p_loo 5.2 0.9

## looic 469.5 14.9

## ------

## Monte Carlo SE of elpd_loo is 0.0.

##

## All Pareto k estimates are good (k < 0.5).

## See help('pareto-k-diagnostic') for details.The recommended workflow since brms version 2.8.0 is to save the information criteria information with your brm() fit objects with the add_criterion() function.

b8.1 <- add_criterion(b8.1, c("waic", "loo"))You retrieve that information by subsetting the fit object.

b8.1$criteria$waic##

## Computed from 4000 by 170 log-likelihood matrix

##

## Estimate SE

## elpd_waic -234.7 7.4

## p_waic 5.1 0.9

## waic 469.3 14.8

##

## 2 (1.2%) p_waic estimates greater than 0.4. We recommend trying loo instead.b8.1$criteria$loo##

## Computed from 4000 by 170 log-likelihood matrix

##

## Estimate SE

## elpd_loo -234.7 7.4

## p_loo 5.2 0.9

## looic 469.5 14.9

## ------

## Monte Carlo SE of elpd_loo is 0.0.

##

## All Pareto k estimates are good (k < 0.5).

## See help('pareto-k-diagnostic') for details.In response to the brms version 2.8.0 update, which itself accommodated updates to the loo package and both of which occurred years after McElreath published the first edition of his text, we’ve been bantering on about the \(\text{elpd}\) and its relation to the WAIC and the LOO since Chapter 6. This is a fine place to go into some detail.

The elpd values returned by loo() and waic() are the expected log pointwise predictive density for new data. It follows the formula

\[\text{elpd} = \sum_{i = 1}^n \int p_t (\tilde y_i) \log p (\tilde y_i | y) d \tilde y_i,\]

where \(p_t (\tilde y_i)\) is the distribution representing the true data-generating process for \(\tilde y_i\). The \(p_t (\tilde y_i)\)’s are unknown, and we will use cross-validation or WAIC to approximate. In a regression, these distributions are also implicitly conditioned on any predictors in the model. (Vehtari et al., 2017, p. 2).

Later in the paper, we learn the elpd_loo (i.e., the Bayesian LOO estimate of out-of-sample predictive fit) is defined as

\[\text{elpd}_\text{loo} = \sum_{i = 1}^n \log p (y_i | y - _i),\]

where

\[p (y_i | y - _i) = \int p (y_i | \theta) p (\theta | y - _i) d \theta\]

“is the leave-one-out predictive density given the data without the \(i\)th data point” (p. 3). And recall, you can convert the \(\text{elpd}\) to the conventional information criteria metric by multiplying it by -2.

To learn more about the \(\text{elpd}\), read the rest of the paper and the other works referenced by the loo package team. And if you prefer watching video lectures to reading technical papers, check out Vehtari’s Model assessment, selection and averaging.

8.3.6 Checking the chain.

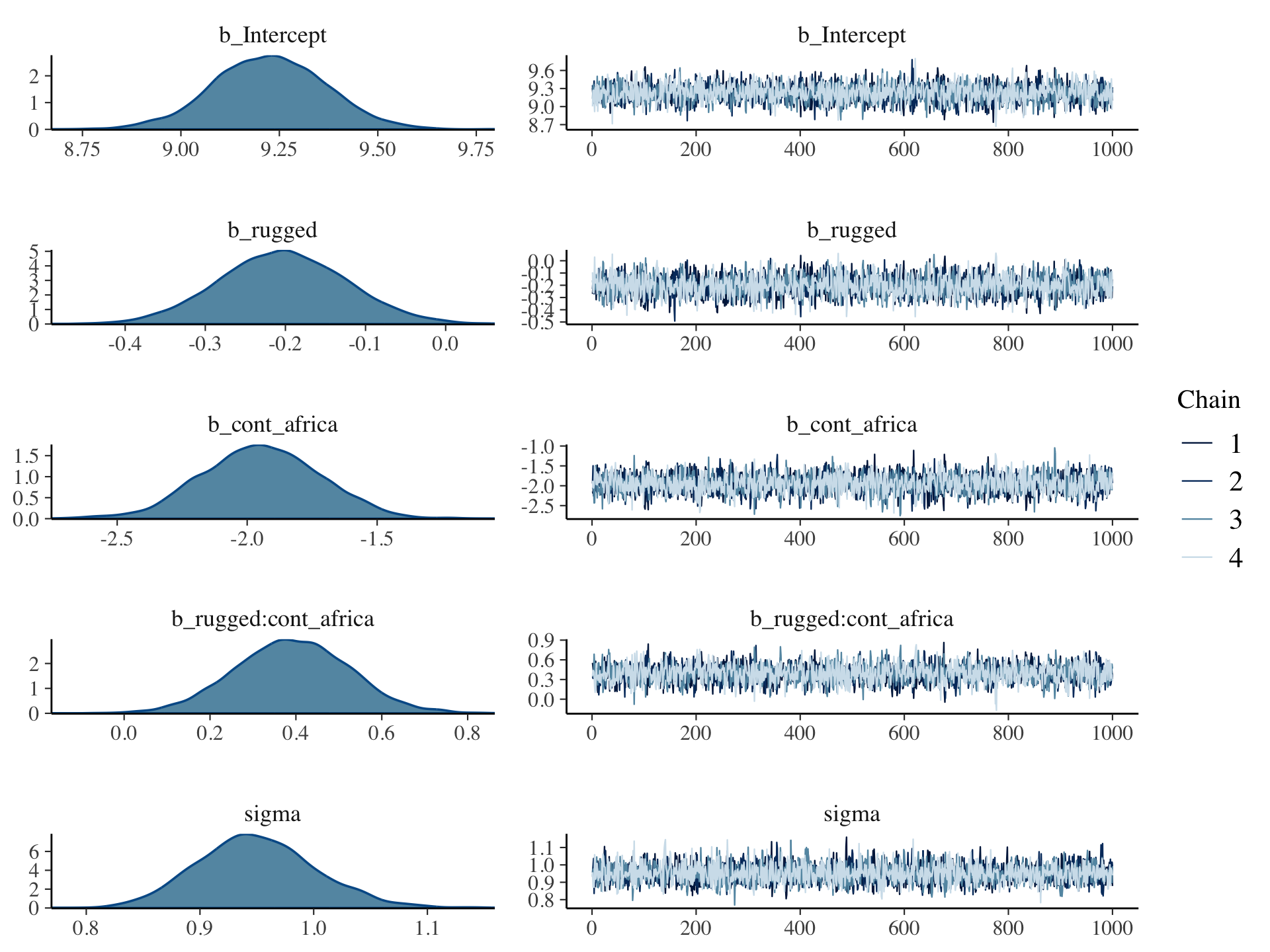

Using plot() for a brm() fit returns both density and trace lots for the parameters.

plot(b8.1, widths = c(2, 3))

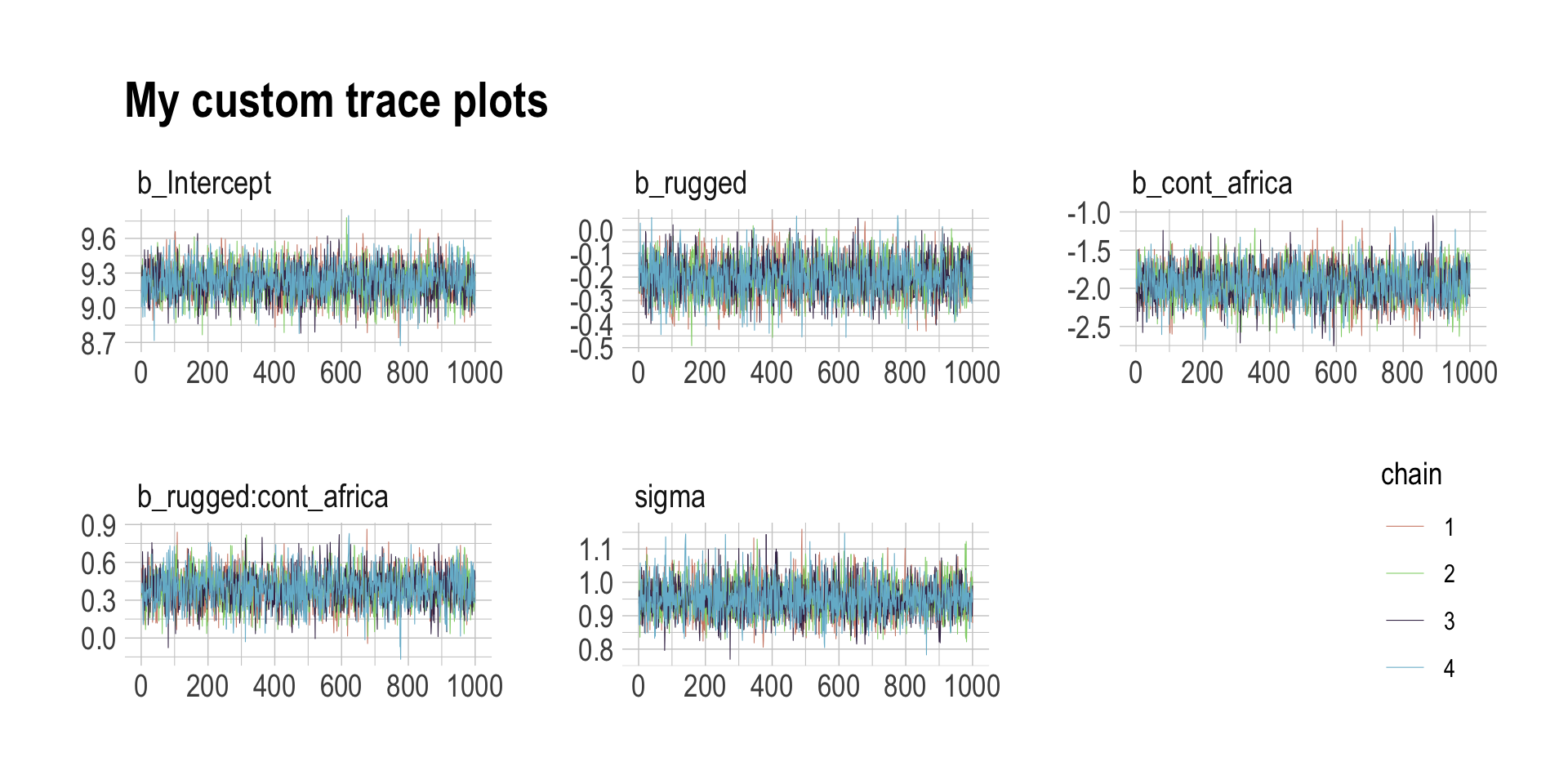

The bayesplot package allows a little more control. Here, we use bayesplot’s mcmc_trace() to show only trace plots with our custom theme. Note that mcmc_trace() works with data frames, not brmfit objects.

library(bayesplot)

post <- as_draws_df(b8.1)

mcmc_trace(post,

pars = vars(b_Intercept:sigma),

facet_args = list(ncol = 3),

size = 0.15) +

scale_color_ipsum() +

labs(title = "My custom trace plots") +

theme_ipsum() +

theme(legend.position = c(.95, .2))

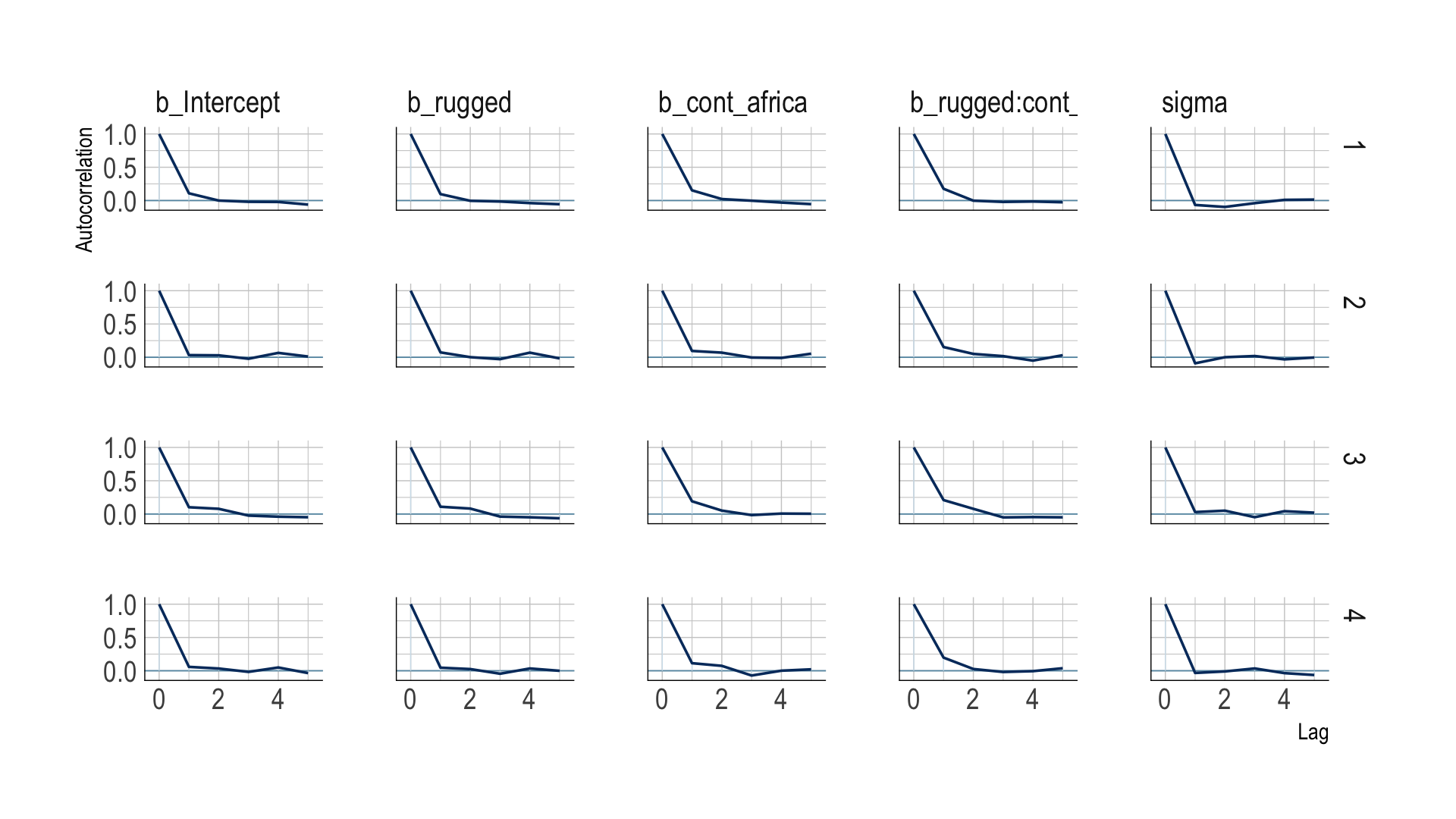

The bayesplot package offers a variety of diagnostic plots. Here we make autocorrelation plots for all model parameters, one for each HMC chain.

mcmc_acf(post,

pars = vars(b_Intercept:sigma),

lags = 5) +

scale_color_ipsum() +

theme_ipsum()

That’s just what we like to see–nice L-shaped autocorrelation plots. Those are the kinds of shapes you’d expect when you have reasonably large effective samples.

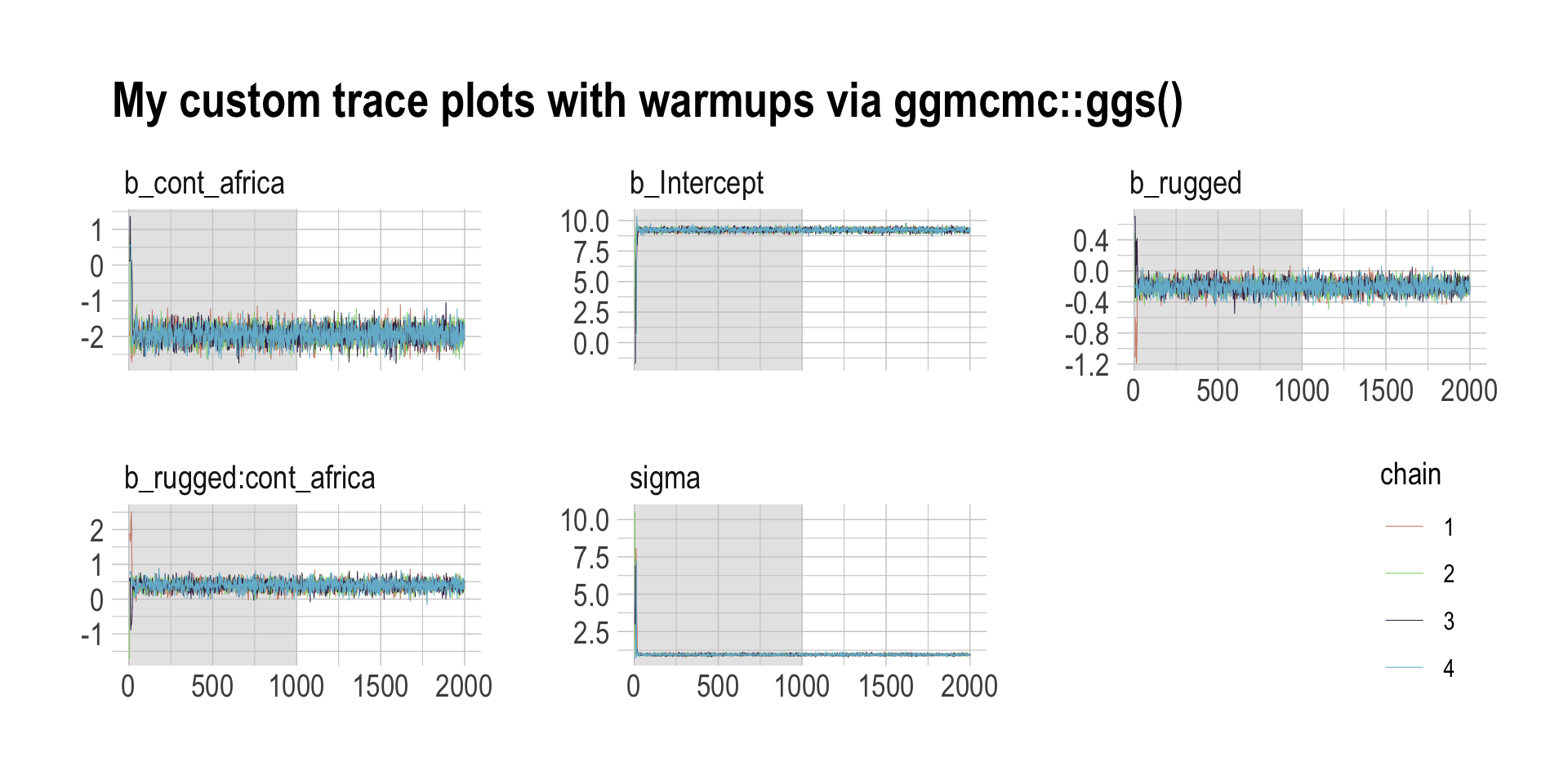

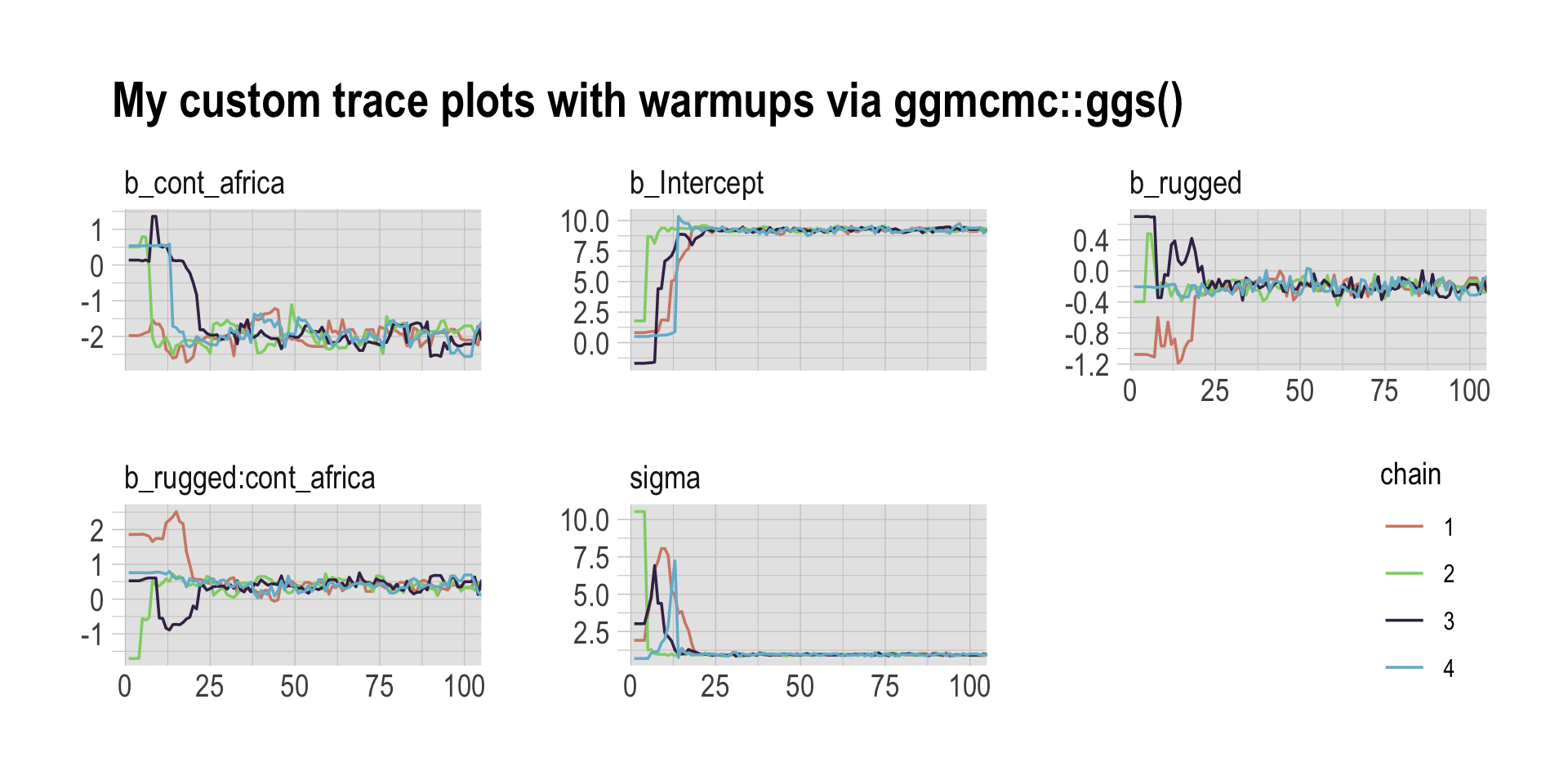

Before we move on, there’s an important difference between the trace plots McElreath showed in the text and the ones we just made. McElreath’s trace plots include the warmup iterations. Ours did not. That’s why his \(x\)-axis values ranged from 1 to 2,000 and ours only ranged from 1 to 1,000. To my knowledge, neither the brms::plot() nor the bayesplot::mcmc_trace() functions support including warmups in their trace plots. One quick way to get them is with the ggmcmc package (Fernández i Marín, 2016, 2021).

library(ggmcmc)The ggmcmc package has a variety of convenience functions for working with MCMC chains. The ggs() function extracts the posterior draws, including warmup, and arranges them in a tidy tibble.

ggs(b8.1) %>%

str()## tibble [48,000 × 4] (S3: tbl_df/tbl/data.frame)

## $ Iteration: int [1:48000] 1 2 3 4 5 6 7 8 9 10 ...

## $ Chain : int [1:48000] 1 1 1 1 1 1 1 1 1 1 ...

## $ Parameter: Factor w/ 6 levels "b_cont_africa",..: 2 2 2 2 2 2 2 2 2 2 ...

## $ value : num [1:48000] 0.815 0.815 0.815 0.815 0.871 ...

## - attr(*, "nChains")= int 4

## - attr(*, "nParameters")= int 6

## - attr(*, "nIterations")= int 2000

## - attr(*, "nBurnin")= num 1000

## - attr(*, "nThin")= num 1

## - attr(*, "description")= chr "644a2f8c915761c6340d991526fba09f"With this in hand, we can now include those warmup draws in our trace plots. Here’s how to do so without convenience functions like bayesplot::mcmc_trace().

ggs(b8.1) %>%

filter(Parameter != "lprior") %>%

mutate(chain = factor(Chain)) %>%

ggplot(aes(x = Iteration, y = value)) +

# this marks off the warmups

annotate(geom = "rect",

xmin = 0, xmax = 1000, ymin = -Inf, ymax = Inf,

fill = "grey80", alpha = 1/2, linewidth = 0) +

geom_line(aes(color = chain),

linewidth = 0.15) +

scale_color_ipsum() +

labs(title = "My custom trace plots with warmups via ggmcmc::ggs()",

x = NULL, y = NULL) +

theme_ipsum() +

theme(legend.position = c(.95, .2)) +

facet_wrap(~Parameter, scales = "free_y")

Following brms defaults, we won’t include warmup iterations in the trace plots for other models in this book. A nice thing about plots that do contain them, though, is they reveal how quickly our HMC chains transition away from their start values into the posterior. To get a better sense of this, let’s make those trace plots once more, but this time zooming in on the first 100 iterations.

ggs(b8.1) %>%

filter(Parameter != "lprior") %>%

mutate(chain = factor(Chain)) %>%

ggplot(aes(x = Iteration, y = value)) +

# this marks off the warmups

annotate(geom = "rect",

xmin = 0, xmax = 1000, ymin = -Inf, ymax = Inf,

fill = "grey80", alpha = 1/2, linewidth = 0) +

geom_line(aes(color = chain),

linewidth = 0.5) +

scale_color_ipsum() +

labs(title = "My custom trace plots with warmups via ggmcmc::ggs()",

x = NULL, y = NULL) +

coord_cartesian(xlim = c(1, 100)) +

theme_ipsum() +

theme(legend.position = c(.95, .2)) +

facet_wrap(~Parameter, scales = "free_y")

For each parameter, the all four chains had moved away from their starting values to converge on the marginal posteriors by the 50th iteration or so.

8.3.6.1 Overthinking: Raw Stan model code.

The stancode() function works in brms much like it does in rethinking.

brms::stancode(b8.1)## // generated with brms 2.18.0

## functions {

## }

## data {

## int<lower=1> N; // total number of observations

## vector[N] Y; // response variable

## int<lower=1> K; // number of population-level effects

## matrix[N, K] X; // population-level design matrix

## int prior_only; // should the likelihood be ignored?

## }

## transformed data {

## int Kc = K - 1;

## matrix[N, Kc] Xc; // centered version of X without an intercept

## vector[Kc] means_X; // column means of X before centering

## for (i in 2:K) {

## means_X[i - 1] = mean(X[, i]);

## Xc[, i - 1] = X[, i] - means_X[i - 1];

## }

## }

## parameters {

## vector[Kc] b; // population-level effects

## real Intercept; // temporary intercept for centered predictors

## real<lower=0> sigma; // dispersion parameter

## }

## transformed parameters {

## real lprior = 0; // prior contributions to the log posterior

## lprior += normal_lpdf(b | 0, 10);

## lprior += normal_lpdf(Intercept | 0, 100);

## lprior += cauchy_lpdf(sigma | 0, 2)

## - 1 * cauchy_lccdf(0 | 0, 2);

## }

## model {

## // likelihood including constants

## if (!prior_only) {

## target += normal_id_glm_lpdf(Y | Xc, Intercept, b, sigma);

## }

## // priors including constants

## target += lprior;

## }

## generated quantities {

## // actual population-level intercept

## real b_Intercept = Intercept - dot_product(means_X, b);

## }You can also get that information with b8.1$model or b8.1$fit@stanmodel.

8.4 Care and feeding of your Markov chain.

Markov chain Monte Carlo is a highly technical and usually automated procedure. Most people who use it don’t really understand what it is doing. That’s okay, up to a point. Science requires division of labor, and if every one of us had to write our own Markov chains from scratch, a lot less research would get done in the aggregate. (p. 255)

But if you do want to learn more about HMC, McElreath has some nice introductory lectures on the topic (see here and here). To dive even deeper, Michael Betancourt from the Stan team has given many lectures on the topic (e.g., here and here).

8.4.1 How many samples do you need?

The brms defaults for iter and warmup match those of McElreath’s rethinking.

If all you want are posterior means, it doesn’t take many samples at all to get very good estimates. Even a couple hundred samples will do. But if you care about the exact shape in the extreme tails of the posterior, the 99th percentile or so, then you’ll need many many more. So there is no universally useful number of samples to aim for. In most typical regression applications, you can get a very good estimate of the posterior mean with as few as 200 effective samples. And if the posterior is approximately Gaussian, then all you need in addition is a good estimate of the variance, which can be had with one order of magnitude more, in most cases. For highly skewed posteriors, you’ll have to think more about which region of the distribution interests you. (p. 255)

And remember, with changes from brms version 2.10.0, we now have both Bulk_ESS and Tail_ESS to consult when thinking about the effective sample size.

8.4.2 How many chains do you need?

“Using 3 or 4 chains is conventional, and quite often more than enough to reassure us that the sampling is working properly” (p. 257).

8.4.2.1 Convergence diagnostics.

Times have changed. In the text, we read:

The default diagnostic output from Stan includes two metrics,

n_effandRhat. The first is a measure of the effective number of samples. The second is the Gelman-Rubin convergence diagnostic, \(\widehat R\). Whenn_effis much lower than the actual number of iterations (minus warmup) of your chains, it means the chains are inefficient, but possibly still okay. WhenRhatis above 1.00, it usually indicates that the chain has not yet converged, and probably you shouldn’t trust the samples. If you draw more iterations, it could be fine, or it could never converge. See the Stan user manual for more details. It’s important however not to rely too much on these diagnostics. Like all heuristics, there are cases in which they provide poor advice. (p. 257)

We’ve already covered how brms has expanded the traditional notion of effective samples (i.e., n_eff) to Bulk_ESS and Tail_ESS. Times are changing for the \(\widehat R\), too. As it turns out, the Stan team has found some deficiencies with the \(\widehat R\), for which they’ve made recommendations that will be implemented in the Stan ecosystem sometime soon (see here for a related thread on the Stan Forums). In the meantime, you can read all about it in Vehtari et al. (2021) and in one of Dan Simpson’s blog posts.

For more on these topics, you might also check out Gabry and Modrák’s (2022) vignette, Visual MCMC diagnostics using the bayesplot package.

8.4.3 Taming a wild chain.

As with rethinking, brms can take data in the form of a list. Recall however, that in order to specify starting values, you need to specify a list of lists with an init argument rather than with start.

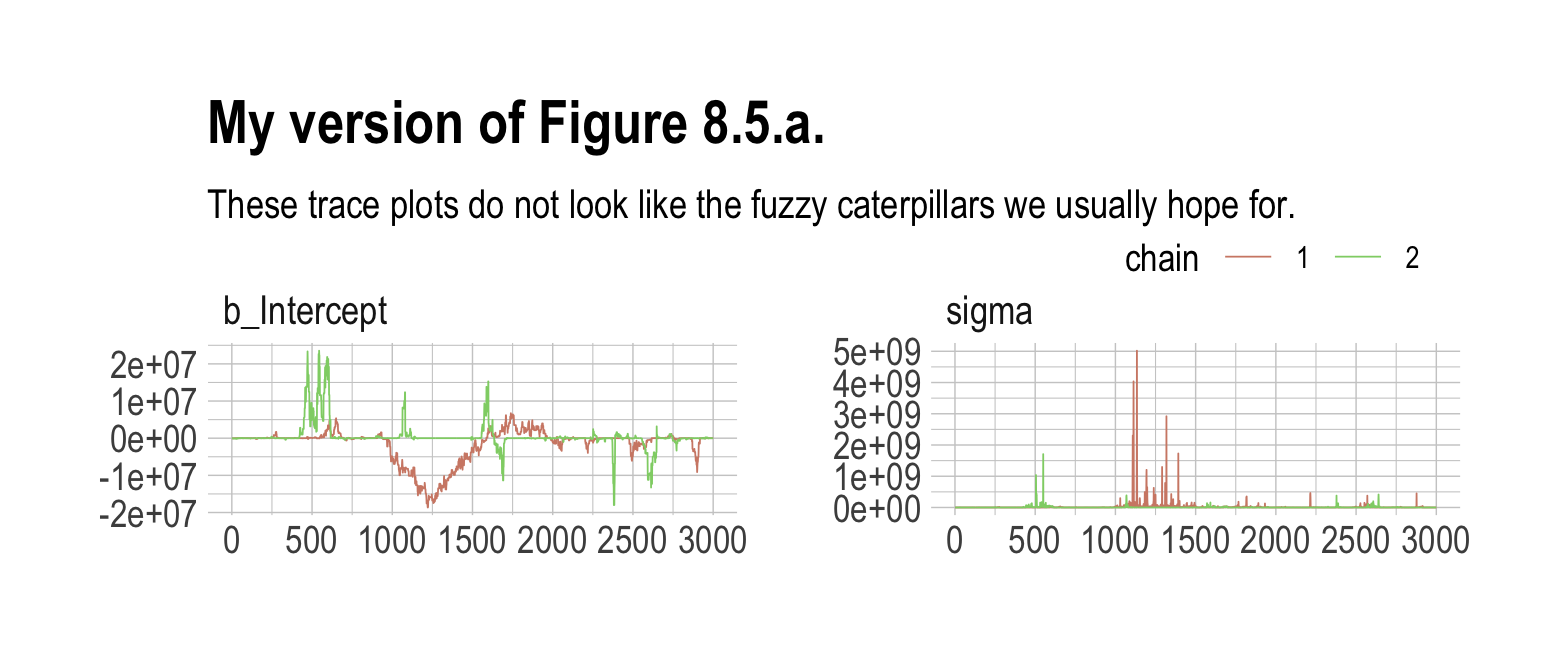

b8.2 <-

brm(data = list(y = c(-1, 1)),

family = gaussian,

y ~ 1,

prior = c(prior(uniform(-1e10, 1e10), class = Intercept),

prior(uniform(0, 1e10), class = sigma, ub = 1e10)),

init = list(list(Intercept = 0, sigma = 1),

list(Intercept = 0, sigma = 1)),

iter = 4000, warmup = 1000, chains = 2,

seed = 8,

file = "fits/b08.02")Those were some silly flat priors. Check the damage.

post <- as_draws_df(b8.2)

mcmc_trace(post,

pars = vars(b_Intercept:sigma),

size = 0.25) +

labs(title = "My version of Figure 8.5.a.",

subtitle = "These trace plots do not look like the fuzzy caterpillars we usually hope for.") +

scale_color_ipsum() +

theme_ipsum() +

theme(legend.direction = "horizontal",

legend.position = c(.85, 1.5))

Let’s peek at the summary.

print(b8.2)## Warning: Parts of the model have not converged (some Rhats are > 1.05). Be careful when analysing

## the results! We recommend running more iterations and/or setting stronger priors.## Warning: There were 276 divergent transitions after warmup. Increasing adapt_delta above 0.8 may

## help. See http://mc-stan.org/misc/warnings.html#divergent-transitions-after-warmup## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: y ~ 1

## Data: list(y = c(-1, 1)) (Number of observations: 2)

## Draws: 2 chains, each with iter = 4000; warmup = 1000; thin = 1;

## total post-warmup draws = 6000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept -546879.04 4143195.56 -13079947.72 6389605.41 1.06 24 16

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 14987222.47 126012619.27 1220.06 85397775.81 1.04 26 86

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).Holy smokes, those parameters are a mess! Plus we got nasty warning messages, too. Watch our reasonable priors save the day.

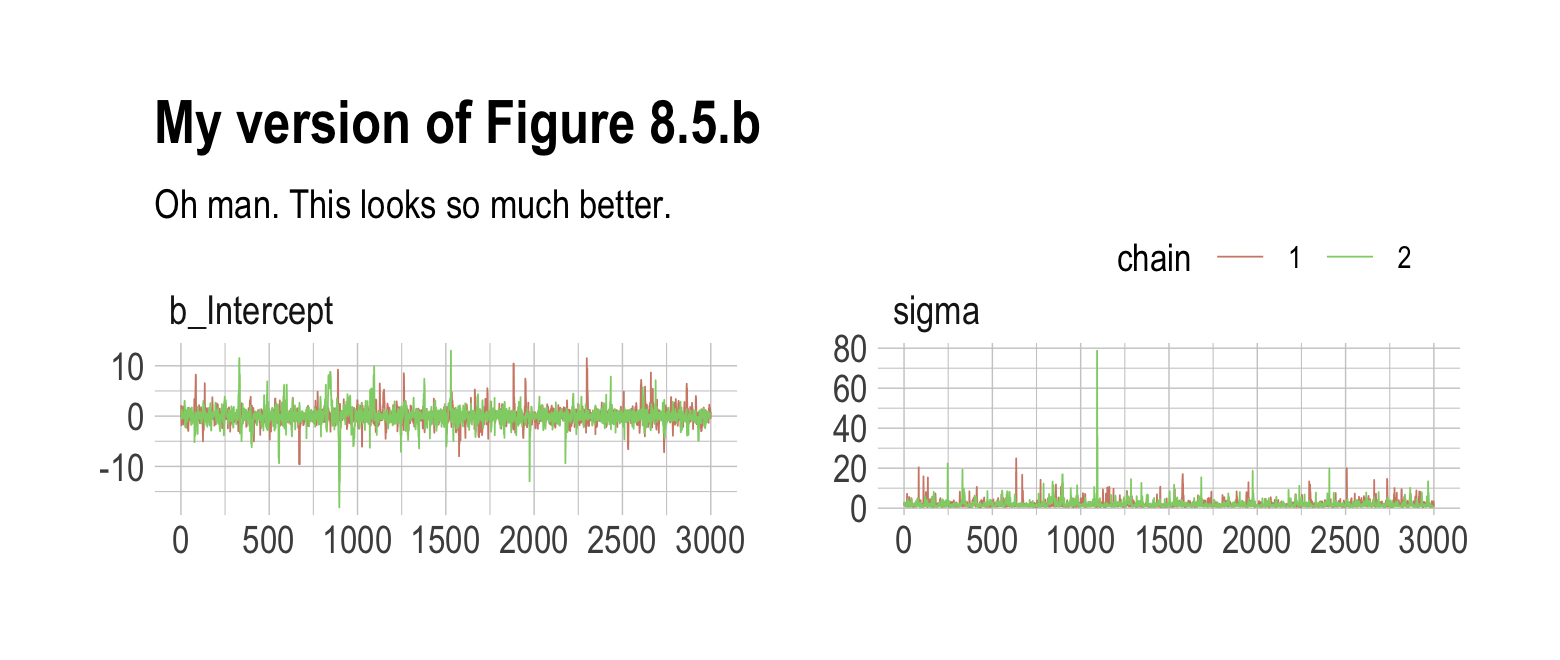

b8.3 <-

brm(data = list(y = c(-1, 1)),

family = gaussian,

y ~ 1,

prior = c(prior(normal(0, 10), class = Intercept),

prior(cauchy(0, 1), class = sigma)),

init = list(list(Intercept = 0, sigma = 1),

list(Intercept = 0, sigma = 1)),

iter = 4000, warmup = 1000, chains = 2,

seed = 8,

file = "fits/b08.03")print(b8.3)## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: y ~ 1

## Data: list(y = c(-1, 1)) (Number of observations: 2)

## Draws: 2 chains, each with iter = 4000; warmup = 1000; thin = 1;

## total post-warmup draws = 6000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## Intercept 0.04 1.74 -3.28 3.63 1.00 1638 1069

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 2.05 2.13 0.62 6.84 1.00 1307 1940

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).As in the text, no more warning signs and no more silly estimates. The trace plots look great, too.

post <- as_draws_df(b8.3)

mcmc_trace(post,

pars = vars(b_Intercept:sigma),

size = 0.25) +

labs(title = "My version of Figure 8.5.b",

subtitle = "Oh man. This looks so much better.") +

scale_color_ipsum() +

theme_ipsum() +

theme(legend.direction = "horizontal",

legend.position = c(.85, 1.5))

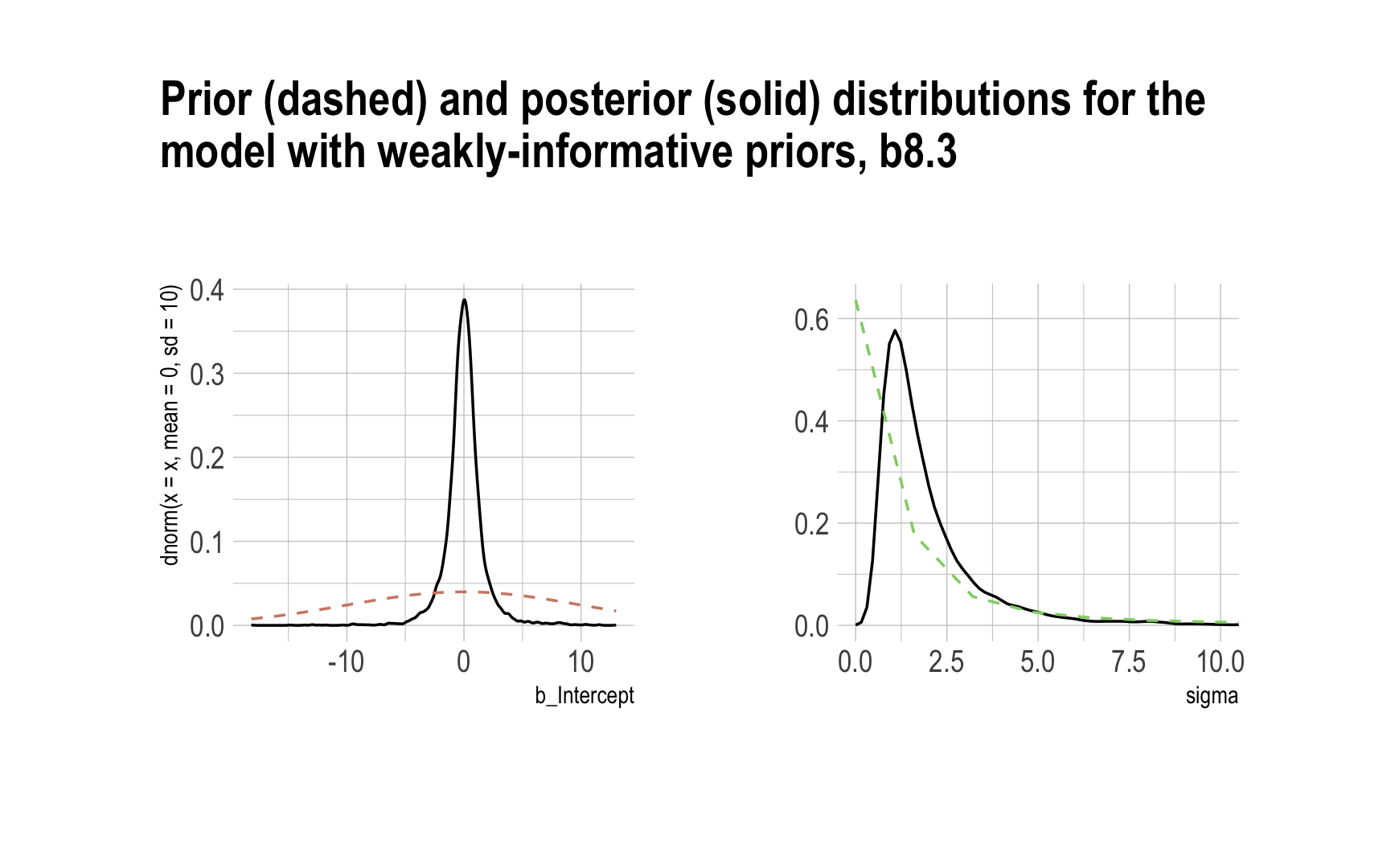

Now behold our version of Figure 8.6.

# left

p1 <-

post %>%

select(b_Intercept) %>%

ggplot(aes(x = b_Intercept)) +

stat_density(geom = "line") +

geom_line(data = data.frame(x = seq(from = min(post$b_Intercept),

to = max(post$b_Intercept),

length.out = 50)),

aes(x = x, y = dnorm(x = x, mean = 0, sd = 10)),

color = ipsum_pal()(1), linetype = 2) +

theme_ipsum()

# right

p2 <-

post %>%

select(sigma) %>%

ggplot(aes(x = sigma)) +

stat_density(geom = "line") +

geom_line(data = data.frame(x = seq(from = 0,

to = max(post$sigma),

length.out = 50)),

aes(x = x, y = dcauchy(x = x, location = 0, scale = 1)*2),

color = ipsum_pal()(2)[2], linetype = 2) +

coord_cartesian(xlim = c(0, 10)) +

ylab(NULL) +

theme_ipsum()

# combine the two

library(patchwork)

p1 + p2 + plot_annotation(title = "Prior (dashed) and posterior (solid) distributions for the\nmodel with weakly-informative priors, b8.3",

theme = theme_ipsum())

8.4.3.1 Overthinking: Cauchy distribution.

Behold the beautiful Cauchy probability density:

\[p(x|x_0, \gamma) = \left ( \pi \gamma \left [ 1 + \left ( \frac{x - x_0}{\gamma} \right ) ^2 \right ] \right ) ^{-1}.\]

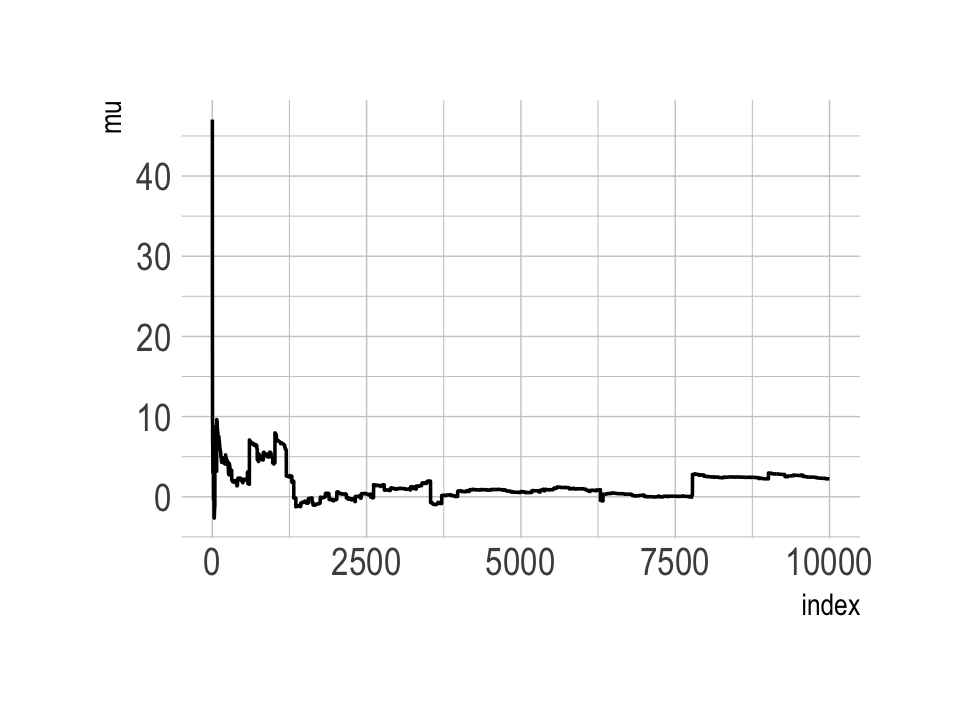

The Cauchy has no mean and variance, but \(x_0\) is the location and \(\gamma\) is the scale. Here’s our version of the simulation. Note our use of the cummean() function.

n <- 1e4

set.seed(8)

tibble(y = rcauchy(n, location = 0, scale = 5),

mu = cummean(y),

index = 1:n) %>%

ggplot(aes(x = index, y = mu)) +

geom_line() +

theme_ipsum()

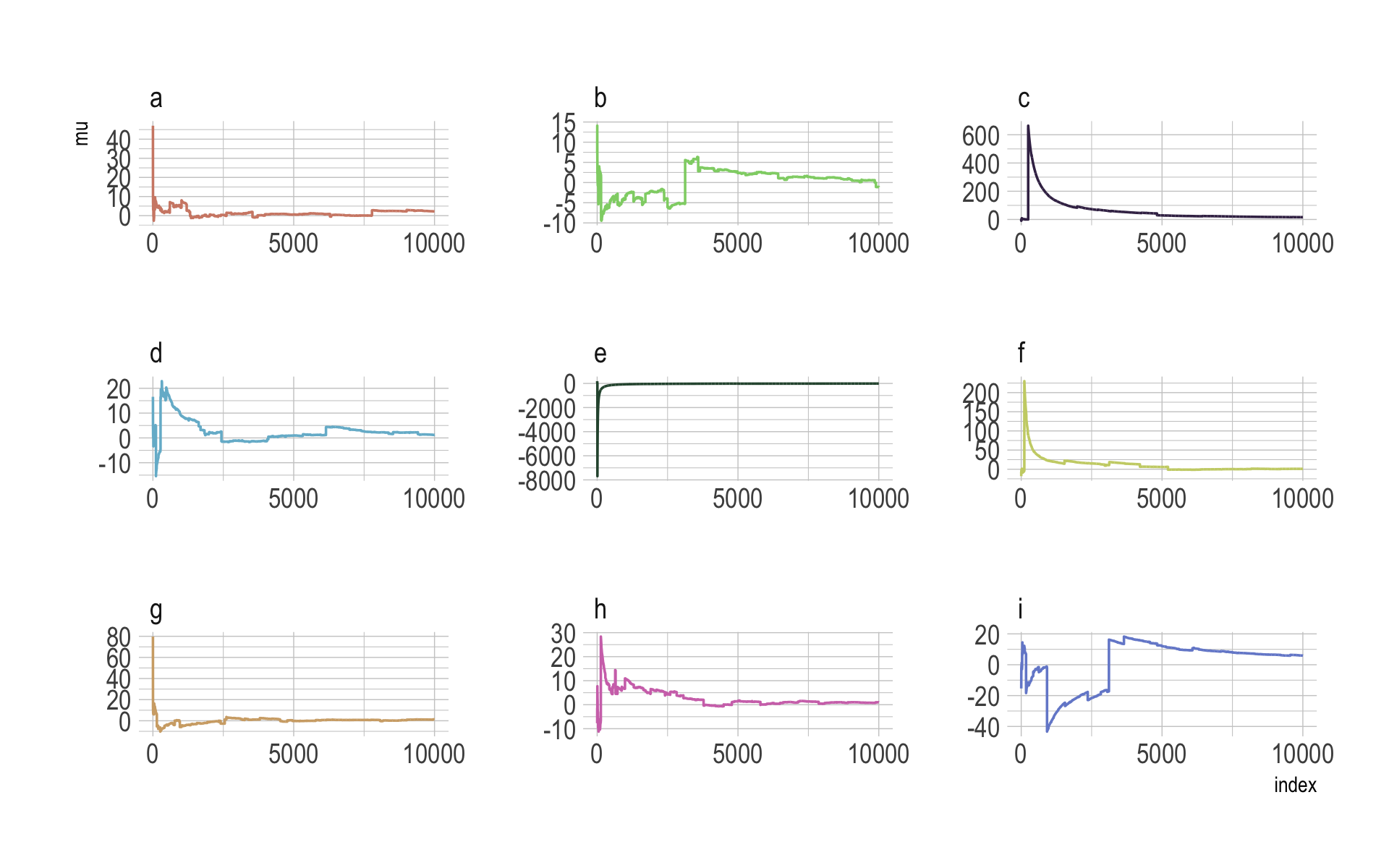

The whole thing is quite remarkable. Just for kicks, here we do it again with nine simulations.

n <- 1e4

set.seed(8)

tibble(a = rcauchy(n, location = 0, scale = 5),

b = rcauchy(n, location = 0, scale = 5),

c = rcauchy(n, location = 0, scale = 5),

d = rcauchy(n, location = 0, scale = 5),

e = rcauchy(n, location = 0, scale = 5),

f = rcauchy(n, location = 0, scale = 5),

g = rcauchy(n, location = 0, scale = 5),

h = rcauchy(n, location = 0, scale = 5),

i = rcauchy(n, location = 0, scale = 5)) %>%

gather() %>%

group_by(key) %>%

mutate(mu = cummean(value)) %>%

ungroup() %>%

mutate(index = rep(1:n, times = 9)) %>%

ggplot(aes(x = index, y = mu)) +

geom_line(aes(color = key)) +

scale_color_manual(values = ipsum_pal()(9)) +

scale_x_continuous(breaks = c(0, 5000, 10000)) +

theme_ipsum() +

theme(legend.position = "none") +

facet_wrap(~key, ncol = 3, scales = "free")

8.4.4 Non-identifiable parameters.

It appears that the only way to get a brms version of McElreath’s m8.4 and m8.5 is to augment the data. In addition to the Gaussian y vector, we’ll add two constants to the data, intercept_1 = 1 and intercept_2 = 1.

set.seed(8)

y <- rnorm(100, mean = 0, sd = 1)b8.4 <-

brm(data = list(y = y,

intercept_1 = 1,

intercept_2 = 1),

family = gaussian,

y ~ 0 + intercept_1 + intercept_2,

prior = c(prior(uniform(-1e10, 1e10), class = b),

prior(cauchy(0, 1), class = sigma)),

init = list(list(intercept_1 = 0, intercept_2 = 0, sigma = 1),

list(intercept_1 = 0, intercept_2 = 0, sigma = 1)),

iter = 4000, warmup = 1000, chains = 2,

seed = 8,

file = "fits/b08.04")Our model results don’t perfectly mirror McElreath’s, but they’re identical in spirit.

print(b8.4)## Warning: Parts of the model have not converged (some Rhats are > 1.05). Be careful when analysing

## the results! We recommend running more iterations and/or setting stronger priors.## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: y ~ 0 + intercept_1 + intercept_2

## Data: list(y = y, intercept_1 = 1, intercept_2 = 1) (Number of observations: 100)

## Draws: 2 chains, each with iter = 4000; warmup = 1000; thin = 1;

## total post-warmup draws = 6000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## intercept_1 -118.40 1513.42 -2685.34 2723.23 2.54 2 21

## intercept_2 118.30 1513.43 -2723.26 2685.36 2.54 2 21

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 1.10 0.08 0.96 1.26 1.05 24 67

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

## scale reduction factor on split chains (at convergence, Rhat = 1).Note the frightening warning message. Those results are a mess! Let’s try again.

b8.5 <-

brm(data = list(y = y,

intercept_1 = 1,

intercept_2 = 1),

family = gaussian,

y ~ 0 + intercept_1 + intercept_2,

prior = c(prior(normal(0, 10), class = b),

prior(cauchy(0, 1), class = sigma)),

init = list(list(intercept_1 = 0, intercept_2 = 0, sigma = 1),

list(intercept_1 = 0, intercept_2 = 0, sigma = 1)),

iter = 4000, warmup = 1000, chains = 2,

seed = 8,

file = "fits/b08.05")print(b8.5)## Family: gaussian

## Links: mu = identity; sigma = identity

## Formula: y ~ 0 + intercept_1 + intercept_2

## Data: list(y = y, intercept_1 = 1, intercept_2 = 1) (Number of observations: 100)

## Draws: 2 chains, each with iter = 4000; warmup = 1000; thin = 1;

## total post-warmup draws = 6000

##

## Population-Level Effects:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## intercept_1 -0.27 6.88 -13.79 13.69 1.00 1513 1714

## intercept_2 0.18 6.88 -13.80 13.70 1.00 1514 1728

##

## Family Specific Parameters:

## Estimate Est.Error l-95% CI u-95% CI Rhat Bulk_ESS Tail_ESS

## sigma 1.09 0.08 0.95 1.25 1.00 2300 1996

##

## Draws were sampled using sampling(NUTS). For each parameter, Bulk_ESS

## and Tail_ESS are effective sample size measures, and Rhat is the potential

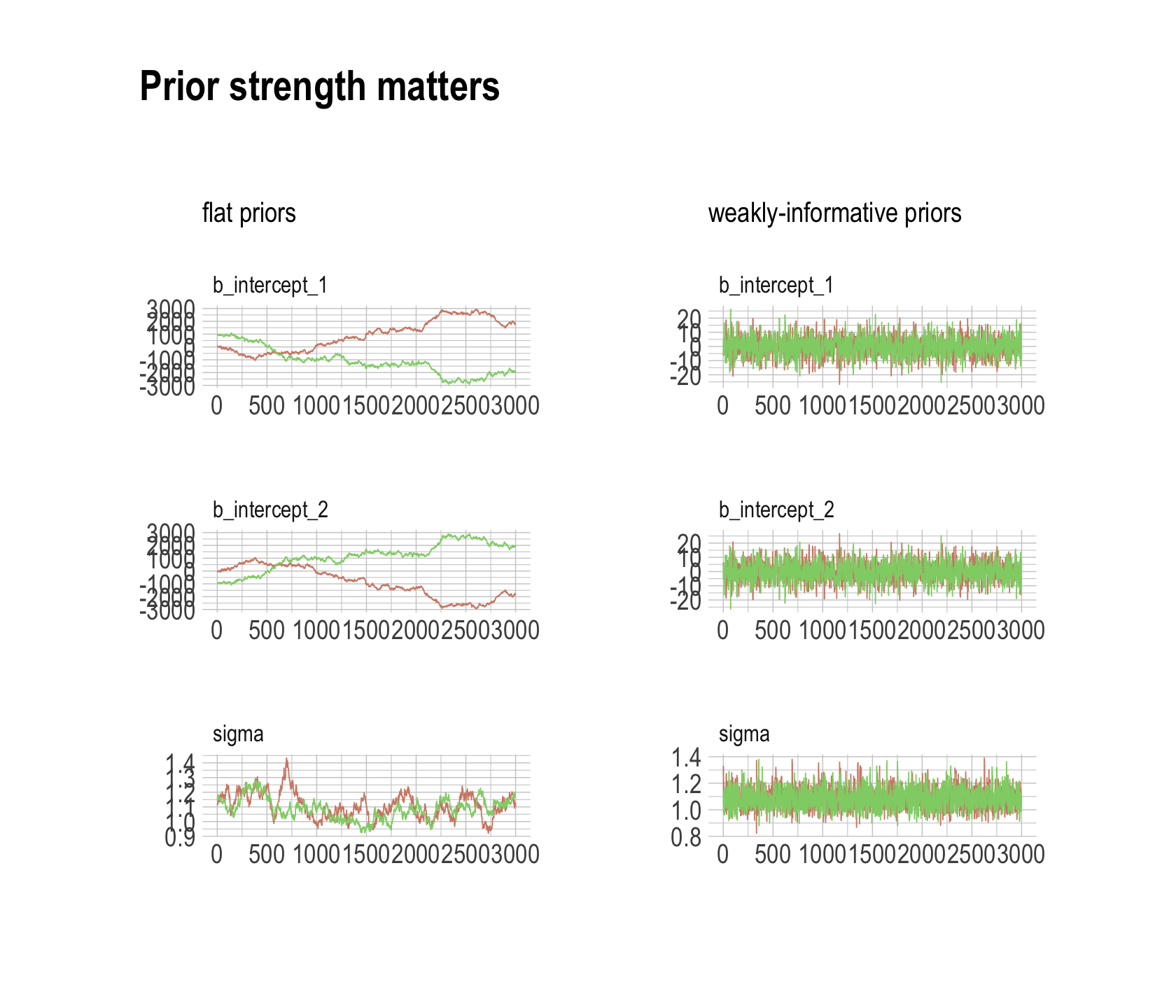

## scale reduction factor on split chains (at convergence, Rhat = 1).Much better. For our version of Figure 8.7, we’ll make the trace plots for the two models separately and combine them with patchwork.

post <- as_draws_df(b8.4)

p1 <-

mcmc_trace(post,

pars = vars(b_intercept_1:sigma),

size = 0.25,

facet_args = c(ncol = 1)) +

scale_color_ipsum() +

labs(subtitle = "flat priors") +

theme_ipsum() +

theme(legend.position = "none",

strip.text = element_text(size = 10))

post <- as_draws_df(b8.5)

p2 <-

mcmc_trace(post,

pars = vars(b_intercept_1:sigma),

size = 0.25,

facet_args = c(ncol = 1)) +

scale_color_ipsum() +

labs(subtitle = "weakly-informative priors") +

theme_ipsum() +

theme(legend.position = "none",

strip.text = element_text(size = 10))

p1 + p2 + plot_annotation(title = "Prior strength matters",

theme = theme_ipsum())

The central message in the text, default to weakly-regularizing priors, holds for brms just as it does in rethinking. For more on the topic, see the recommendations from the Stan team. If you want to dive deeper, check out Simpson’s post on Gelman’s blog and their corresponding (2017) paper with Betancourt, The prior can often only be understood in the context of the likelihood

Session info

sessionInfo()## R version 4.2.2 (2022-10-31)

## Platform: x86_64-apple-darwin17.0 (64-bit)

## Running under: macOS Big Sur ... 10.16

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRlapack.dylib

##

## locale:

## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

##

## attached base packages:

## [1] parallel stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] patchwork_1.1.2 ggmcmc_1.5.1.1 bayesplot_1.10.0 GGally_2.1.2

## [5] tidybayes_3.0.2 brms_2.18.0 Rcpp_1.0.9 cmdstanr_0.5.3

## [9] rstan_2.21.8 StanHeaders_2.21.0-7 extrafont_0.18 hrbrthemes_0.8.0

## [13] forcats_0.5.1 stringr_1.4.1 dplyr_1.0.10 purrr_1.0.1

## [17] readr_2.1.2 tidyr_1.2.1 tibble_3.1.8 ggplot2_3.4.0

## [21] tidyverse_1.3.2

##

## loaded via a namespace (and not attached):

## [1] readxl_1.4.1 backports_1.4.1 systemfonts_1.0.4 plyr_1.8.7

## [5] igraph_1.3.4 svUnit_1.0.6 sp_1.5-0 splines_4.2.2

## [9] crosstalk_1.2.0 TH.data_1.1-1 rstantools_2.2.0 inline_0.3.19

## [13] digest_0.6.31 htmltools_0.5.3 rethinking_2.21 fansi_1.0.3

## [17] magrittr_2.0.3 checkmate_2.1.0 googlesheets4_1.0.1 tzdb_0.3.0

## [21] modelr_0.1.8 RcppParallel_5.1.5 matrixStats_0.63.0 xts_0.12.1

## [25] sandwich_3.0-2 extrafontdb_1.0 prettyunits_1.1.1 colorspace_2.0-3

## [29] rvest_1.0.2 ggdist_3.2.1 rgdal_1.5-30 haven_2.5.1

## [33] xfun_0.35 callr_3.7.3 crayon_1.5.2 jsonlite_1.8.4

## [37] lme4_1.1-31 survival_3.4-0 zoo_1.8-10 glue_1.6.2

## [41] gtable_0.3.1 gargle_1.2.0 emmeans_1.8.0 distributional_0.3.1

## [45] pkgbuild_1.3.1 Rttf2pt1_1.3.10 shape_1.4.6 abind_1.4-5

## [49] scales_1.2.1 mvtnorm_1.1-3 DBI_1.1.3 miniUI_0.1.1.1

## [53] xtable_1.8-4 HDInterval_0.2.4 stats4_4.2.2 DT_0.24

## [57] htmlwidgets_1.5.4 httr_1.4.4 threejs_0.3.3 RColorBrewer_1.1-3

## [61] arrayhelpers_1.1-0 posterior_1.3.1 ellipsis_0.3.2 reshape_0.8.9

## [65] pkgconfig_2.0.3 loo_2.5.1 farver_2.1.1 sass_0.4.2

## [69] dbplyr_2.2.1 utf8_1.2.2 tidyselect_1.2.0 labeling_0.4.2

## [73] rlang_1.0.6 reshape2_1.4.4 later_1.3.0 munsell_0.5.0

## [77] cellranger_1.1.0 tools_4.2.2 cachem_1.0.6 cli_3.6.0

## [81] generics_0.1.3 broom_1.0.2 evaluate_0.18 fastmap_1.1.0

## [85] processx_3.8.0 knitr_1.40 fs_1.5.2 nlme_3.1-160

## [89] projpred_2.2.1 mime_0.12 xml2_1.3.3 compiler_4.2.2

## [93] shinythemes_1.2.0 rstudioapi_0.13 gamm4_0.2-6 reprex_2.0.2

## [97] bslib_0.4.0 stringi_1.7.8 highr_0.9 ps_1.7.2

## [101] Brobdingnag_1.2-8 gdtools_0.2.4 lattice_0.20-45 Matrix_1.5-1

## [105] nloptr_2.0.3 markdown_1.1 shinyjs_2.1.0 tensorA_0.36.2

## [109] vctrs_0.5.1 pillar_1.8.1 lifecycle_1.0.3 jquerylib_0.1.4

## [113] bridgesampling_1.1-2 estimability_1.4.1 httpuv_1.6.5 R6_2.5.1

## [117] bookdown_0.28 promises_1.2.0.1 gridExtra_2.3 codetools_0.2-18

## [121] boot_1.3-28 colourpicker_1.1.1 MASS_7.3-58.1 gtools_3.9.4

## [125] assertthat_0.2.1 withr_2.5.0 shinystan_2.6.0 multcomp_1.4-20

## [129] mgcv_1.8-41 hms_1.1.1 grid_4.2.2 minqa_1.2.5

## [133] coda_0.19-4 rmarkdown_2.16 googledrive_2.0.0 shiny_1.7.2

## [137] lubridate_1.8.0 base64enc_0.1-3 dygraphs_1.1.1.6