Fundamentals of Wrangling Healthcare Data with R

Data wrangling is the process of gathering, selecting, transforming, and mapping “raw data” into another format with the intent of making it more appropriate and valuable for a variety of downstream analytic purposes.

The primary goal of data wrangling is to ensure that data are of high quality and useful.

Data wrangling is also known as “data cleaning”, “data processing”, or “data munging”. Many professionals will tell you that data management tasks consume upwards of 80% of their time, leaving only about 20% for modeling.

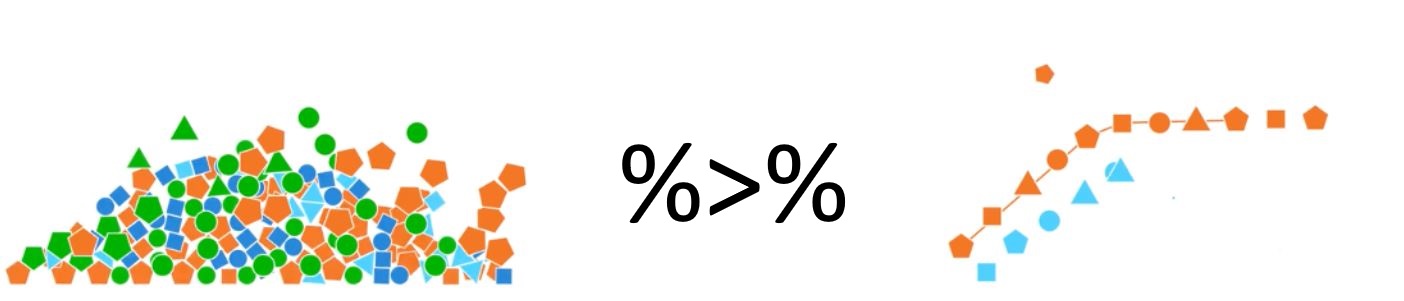

Figure 0.1: Data Wrangling

Wikipedia describes several key aspects of data wrangling:

Aspects of Data Wrangling

- Discovering
  - Gain a better understanding of the data: different data sets are collected and organized in different ways.

- Structuring
  - Raw data is typically unorganized, and much of it may not be useful for the end product. Structuring it makes computation and analysis easier in later steps.

- Cleaning
  - Cleaning takes many forms. Examples include catching dates formatted in different ways, removing outliers that would skew results, standardizing how null values are recorded, and imputing missing values.
  - This step is important for assuring the overall quality of the data.

- Enriching
  - Determine whether additional data that could be easily added would benefit the data set.

- Validating
  - Apply repeatable sets of validation rules to assure data consistency, quality, and security. One example of a validation rule is confirming the accuracy of fields by cross-checking them against other data.

- Publishing
  - Prepare the data set for downstream use by people or software. Be sure to document the steps and logic applied during wrangling.
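The following base R sketch roughly illustrates the Cleaning and Validating aspects on a small, hypothetical patient extract. Several details are assumptions chosen only for illustration: the column names (`patient_id`, `visit_date`, `sbp`), the `-999` missing-value code, the 50 to 300 mmHg plausibility range, and the choice of median imputation. None of them come from a real data source or a prescribed method.

```r
# Hypothetical toy extract: column names, values, and the -999 missing-value
# code are invented for illustration, not taken from a real data source.
raw <- data.frame(
  patient_id = c("P01", "P02", "P03", "P04", "P05"),
  visit_date = c("2022-01-15", "01/20/2022", "2022-02-03", "", "03/07/2022"),
  sbp        = c(128, -999, 141, 135, 1200),  # systolic blood pressure, mmHg
  stringsAsFactors = FALSE
)

# Dates recorded in two formats: try ISO (YYYY-MM-DD) first, then US (MM/DD/YYYY).
# Strings matching neither format (including "") become NA.
parse_visit_date <- function(x) {
  iso <- as.Date(x, format = "%Y-%m-%d")
  us  <- as.Date(x, format = "%m/%d/%Y")
  iso[is.na(iso)] <- us[is.na(iso)]
  iso
}

clean <- raw
clean$visit_date <- parse_visit_date(clean$visit_date)

# Null values: recode the assumed sentinel -999 to R's NA.
clean$sbp[clean$sbp == -999] <- NA

# Outliers: treat readings outside an assumed plausible range (50 to 300 mmHg)
# as entry errors and set them to NA so they cannot skew results.
implausible <- !is.na(clean$sbp) & (clean$sbp < 50 | clean$sbp > 300)
clean$sbp[implausible] <- NA

# Imputation: fill missing readings with the median of the observed values,
# keeping a flag column so the step stays documented for downstream users.
clean$sbp_imputed <- is.na(clean$sbp)
clean$sbp[clean$sbp_imputed] <- median(clean$sbp, na.rm = TRUE)

# Validation: repeatable rules that should hold after cleaning.
checks <- c(
  unique_ids      = anyDuplicated(clean$patient_id) == 0,
  no_future_dates = all(is.na(clean$visit_date) | clean$visit_date <= Sys.Date()),
  sbp_in_range    = all(clean$sbp >= 50 & clean$sbp <= 300),
  sbp_complete    = !anyNA(clean$sbp)
)
stopifnot(all(checks))

print(clean)
```

The `sbp_imputed` column flags imputed values rather than overwriting them silently, which keeps the cleaning auditable. This follows the Publishing advice to document wrangling logic. The blank visit date stays `NA`, since there is no defensible value to impute for it.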

Moreover, research is making it increasingly clear that algorithms may not perform as expected when data quality is inconsistent. As a result, feature engineering is becoming a growing area of research for improving the performance of existing models.

Version: 0.0.1.A.6.26.2022 | Philadelphia, PA, USA.